| ONAP on … deployed by … or RKE managed by … on | | | | |
|---|---|---|---|---|
| VMs | Microsoft Azure | Google Cloud Platform | OpenStack | |
| Managed | Amazon EKS | AKS | | |
| Sponsor | Amazon (384G/m - 201801 to 201808) - thank you; Michael O'Brien (201705-201905); Amdocs - 201903+; Michael O'Brien - 201905+ | Microsoft (201801+); Amdocs | Intel/Windriver (2017-) | |

Scripted undercloud (Helm/Kubernetes/Docker) and ONAP install - Single VM

This is a private page under daily continuous modification to keep it relevant as a live reference (don't edit it unless something is really wrong). https://twitter.com/_mikeobrien | https://www.linkedin.com/in/michaelobrien-developer/

For general support consult the official documentation at http://onap.readthedocs.io/en/latest/submodules/oom.git/docs/oom_quickstart_guide.html and https://onap.readthedocs.io/en/beijing/submodules/oom.git/docs/oom_cloud_setup_guide.html and raise DOC JIRAs for any modifications required to them.

This page details deployment of ONAP on any environment that supports Kubernetes based containers.

Chat: http://onap-integration.eastus.cloudapp.azure.com:3000/group/onap-integration

Separate namespaces - to avoid the 1MB configmap limit - or just helm install/delete everything (no helm upgrade)

https://kubernetes.slack.com/messages/C09NXKJKA/?

https://d1.awsstatic.com/whitepapers/architecture/AWS_Well-Architected_Framework.pdf

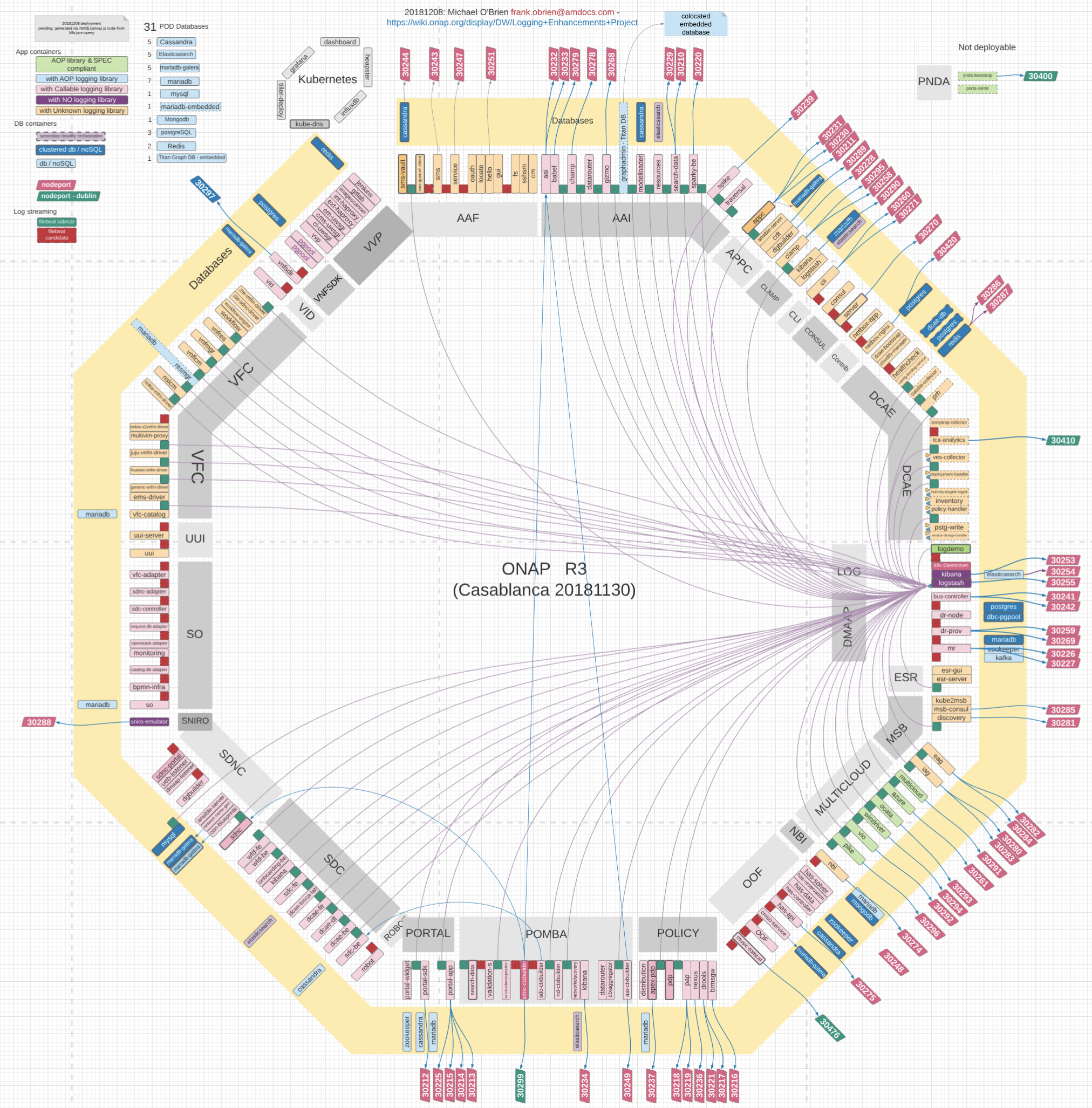

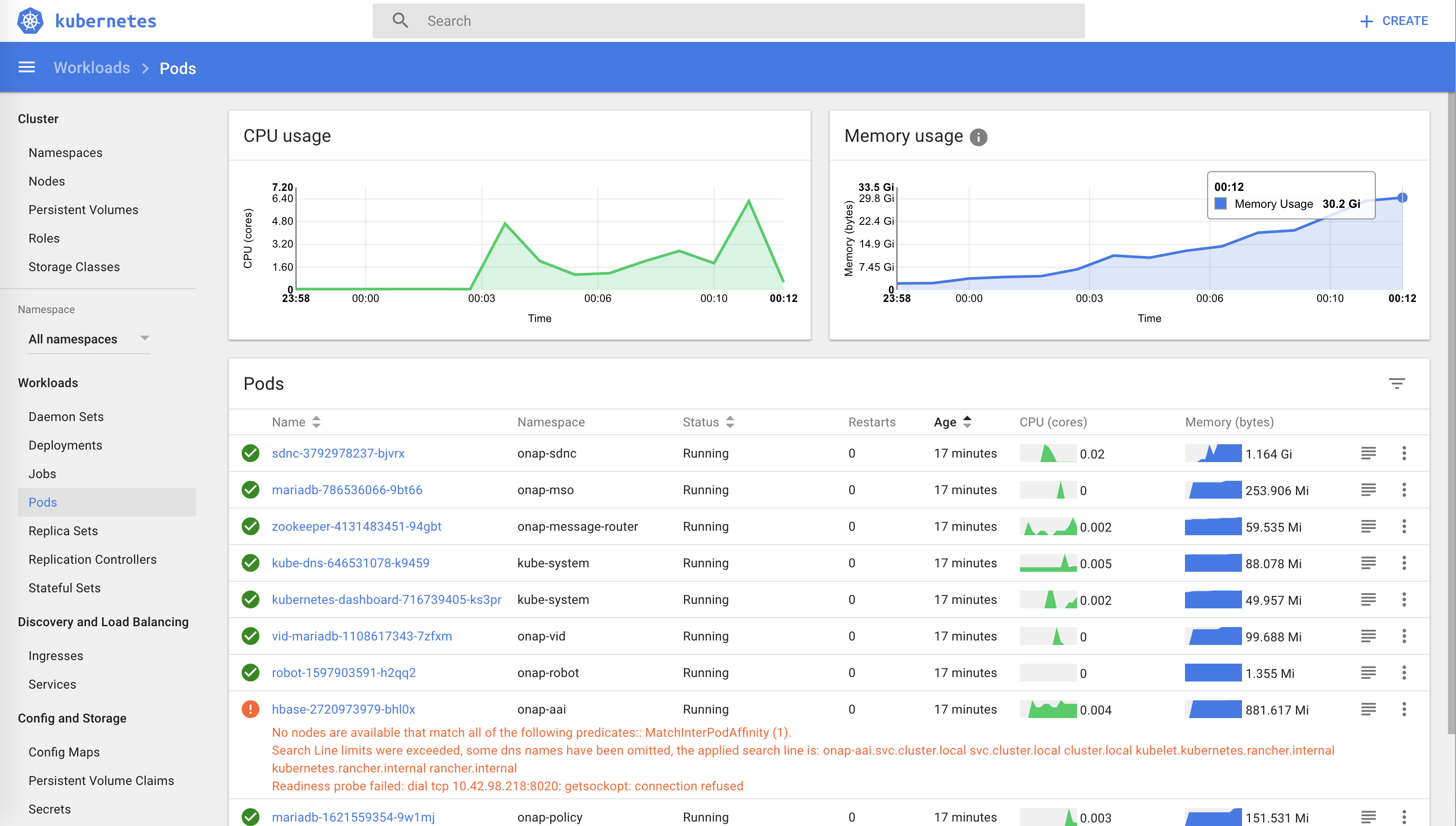

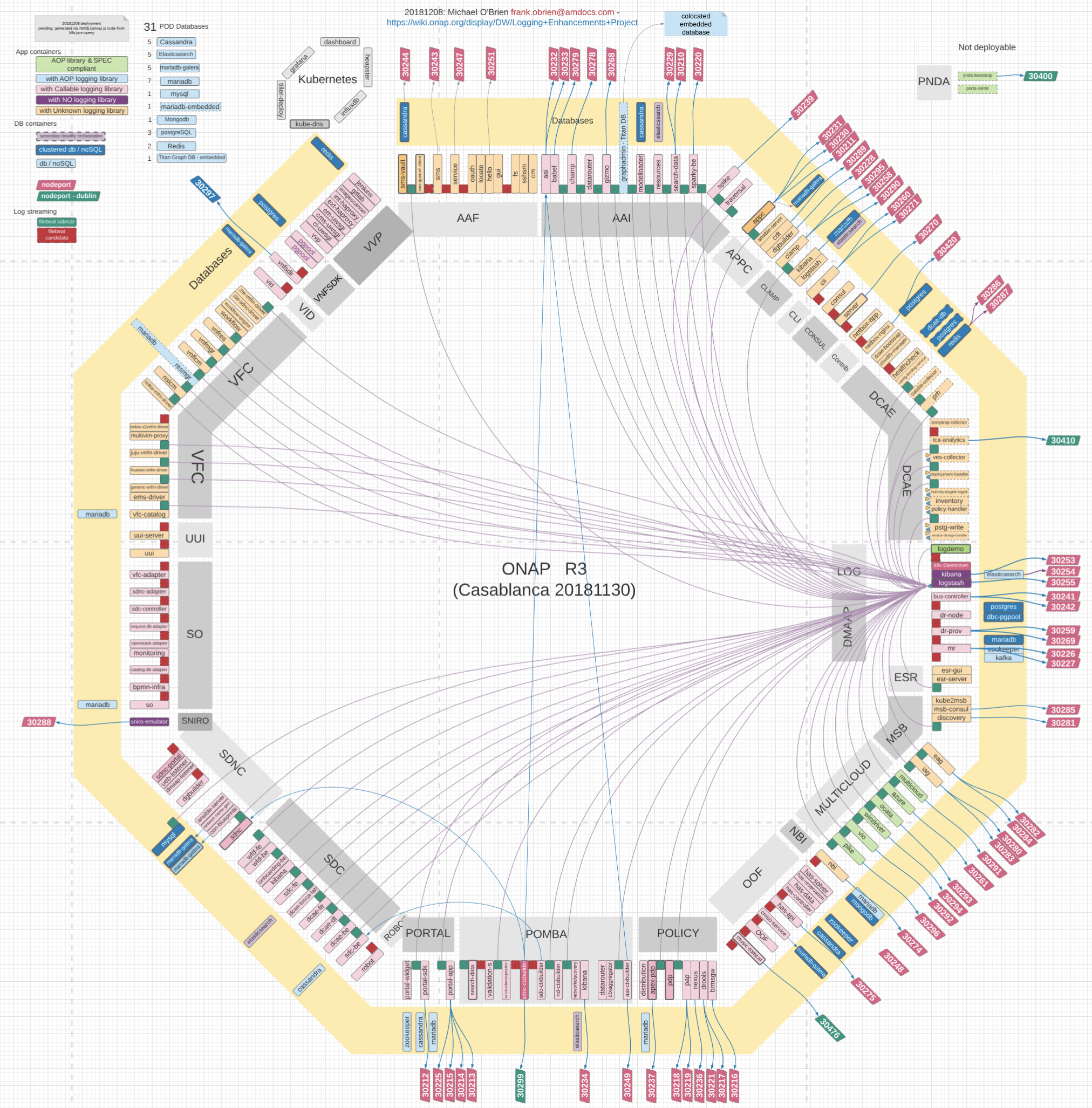

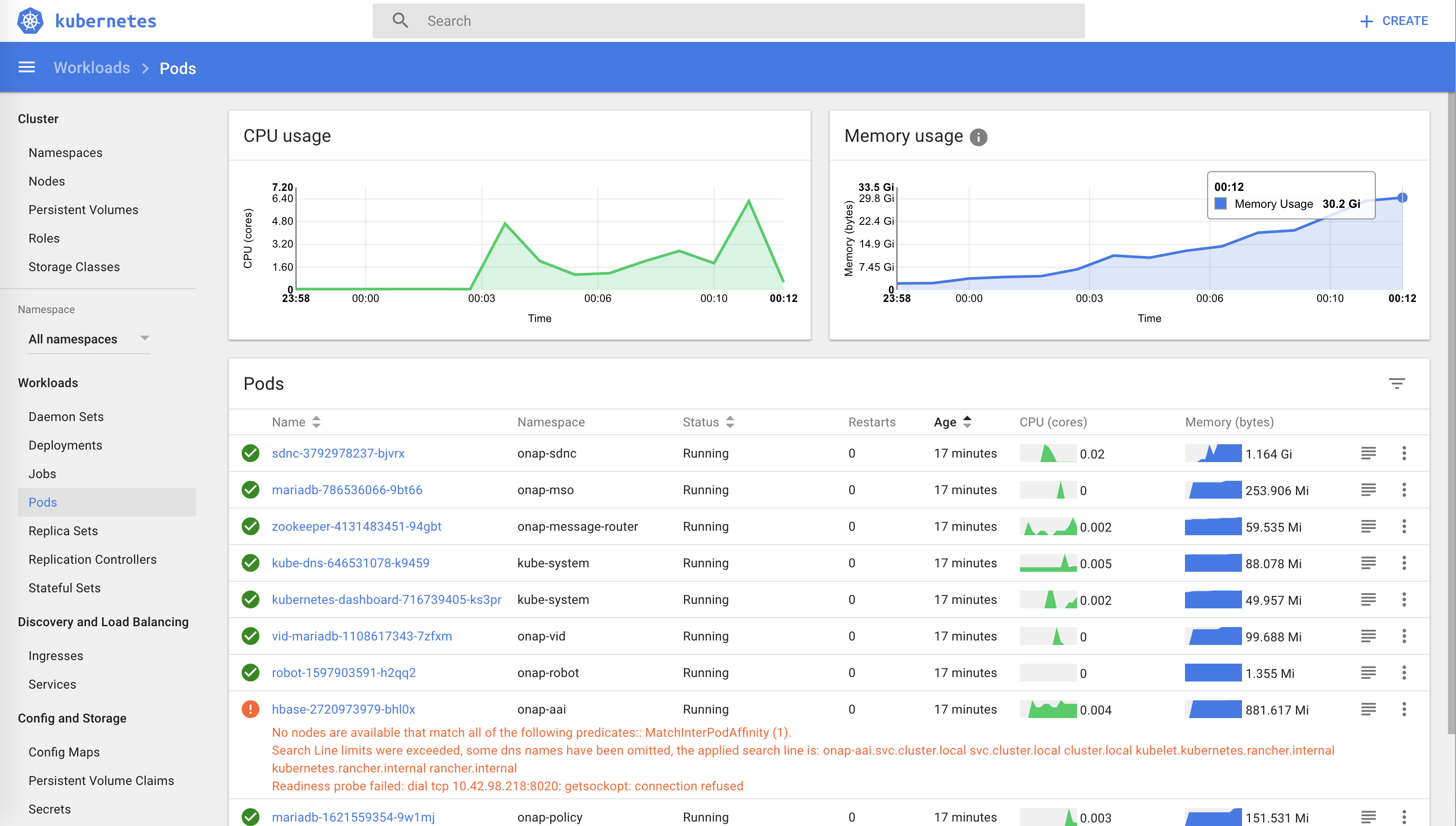

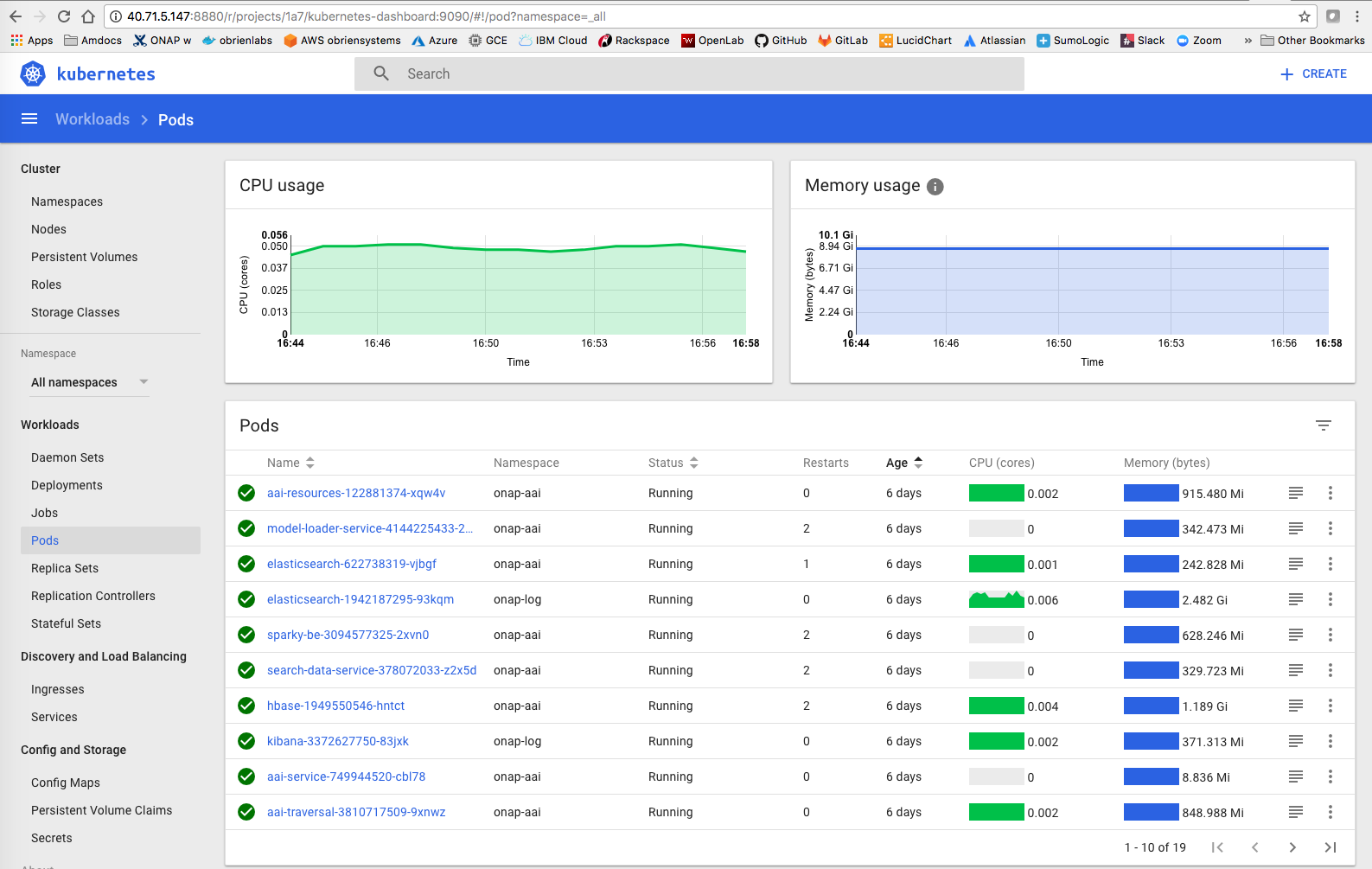

28 pods, 196 pods including vvp without the filebeat sidecars - 20181130 - this number is when all replicaSets and DaemonSets are set to 1 - which is 241 instances in the clustered case

Docker images currently size up to 75G as of 20181230

After a docker_prepull.sh:

/dev/sda1 389255816 77322824 311916608 20% /

| Type | VMs | Total RAM vCores HD | VM Flavor | K8S/Rancher Idle RAM | Deployed | Deployed ONAP RAM | Pods | Containers | Max vCores | Idle vCores | HD/VM | HD NFS only | IOPS | Date | Cost | branch | Notes deployment post 75min |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

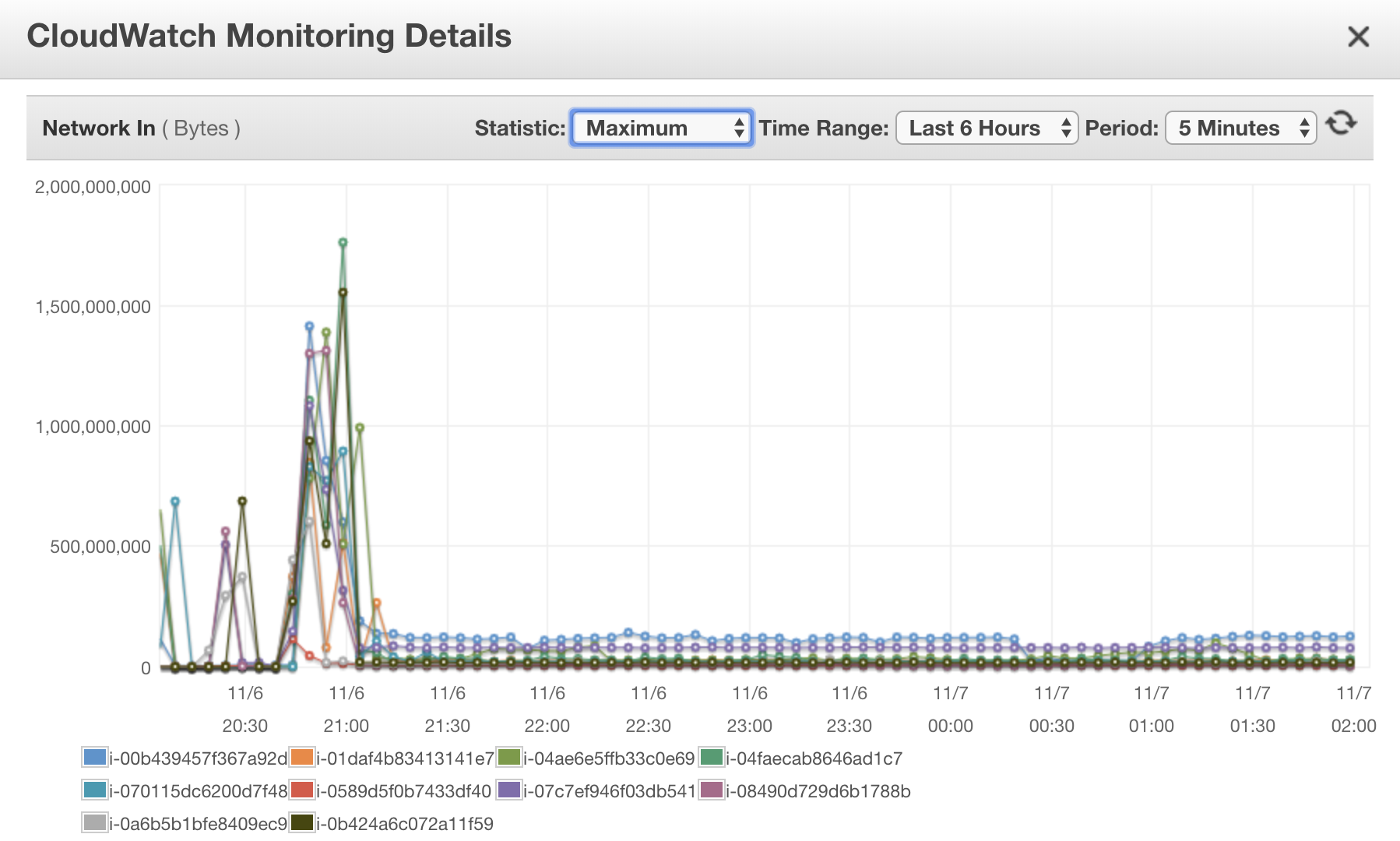

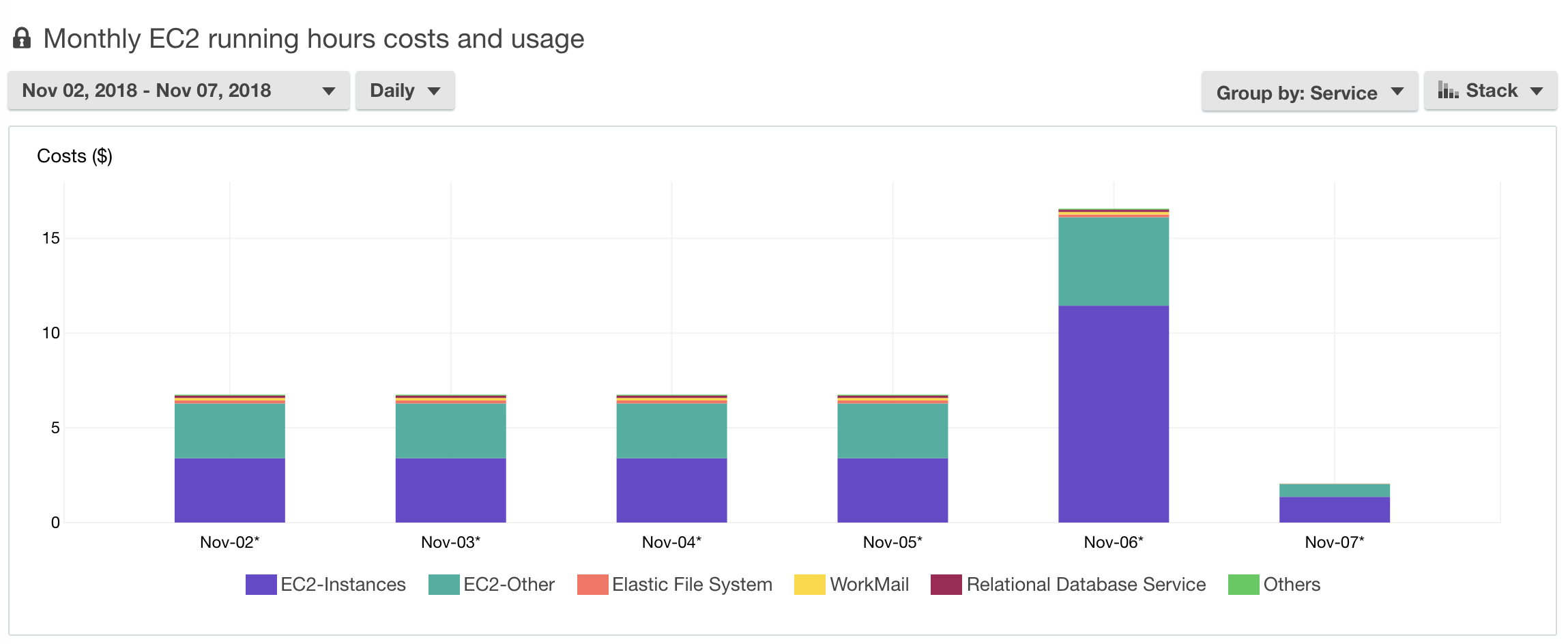

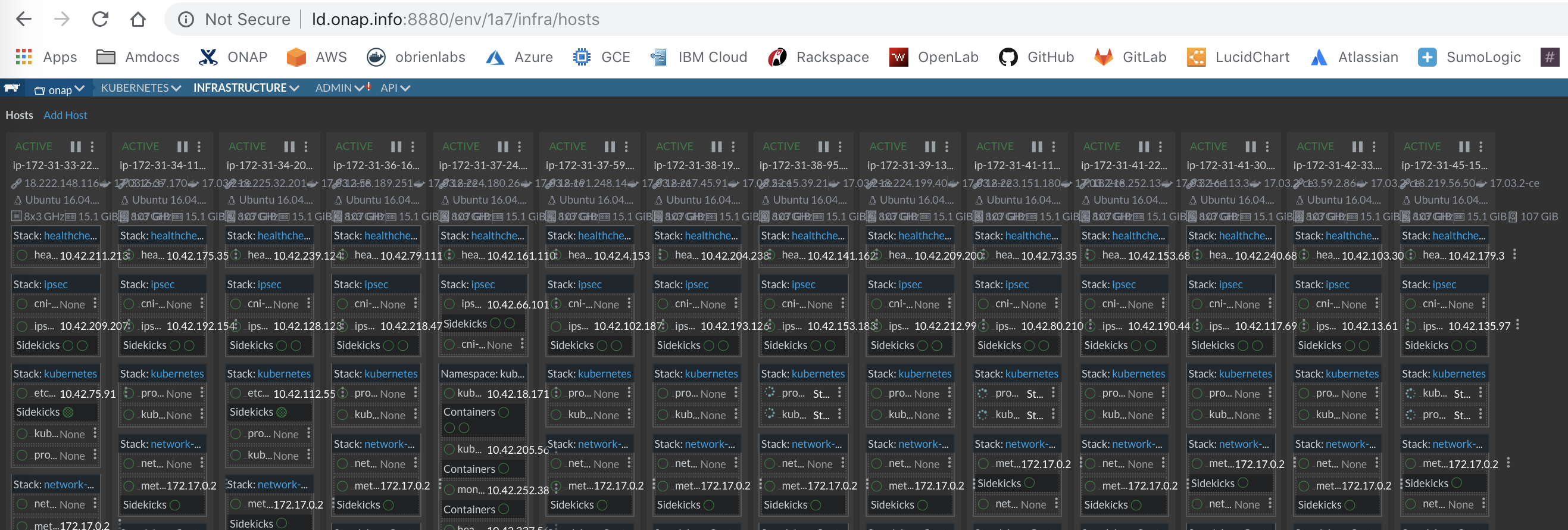

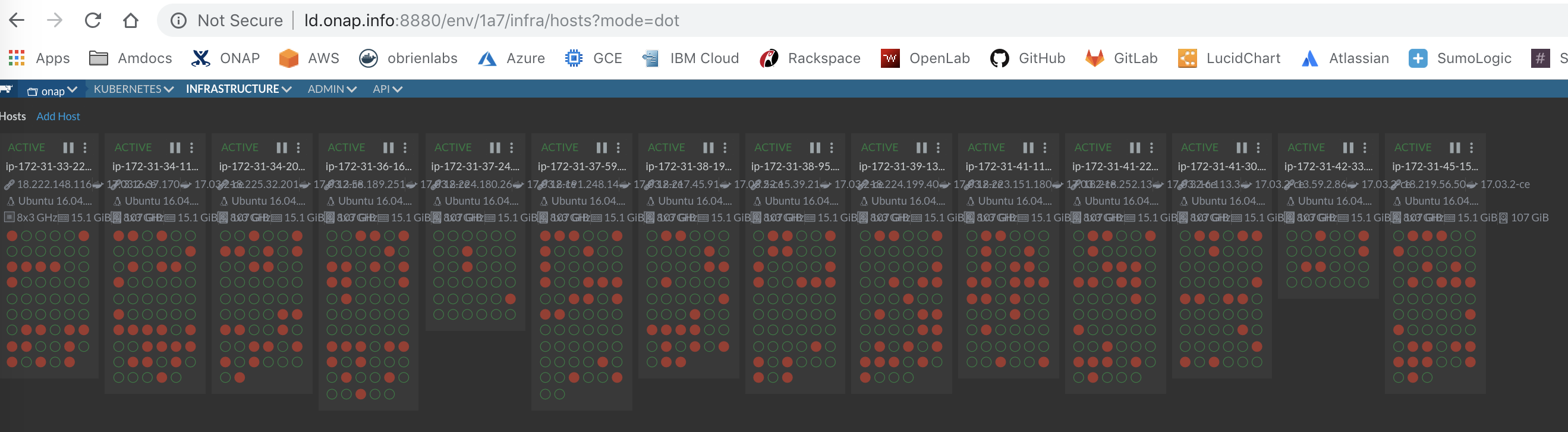

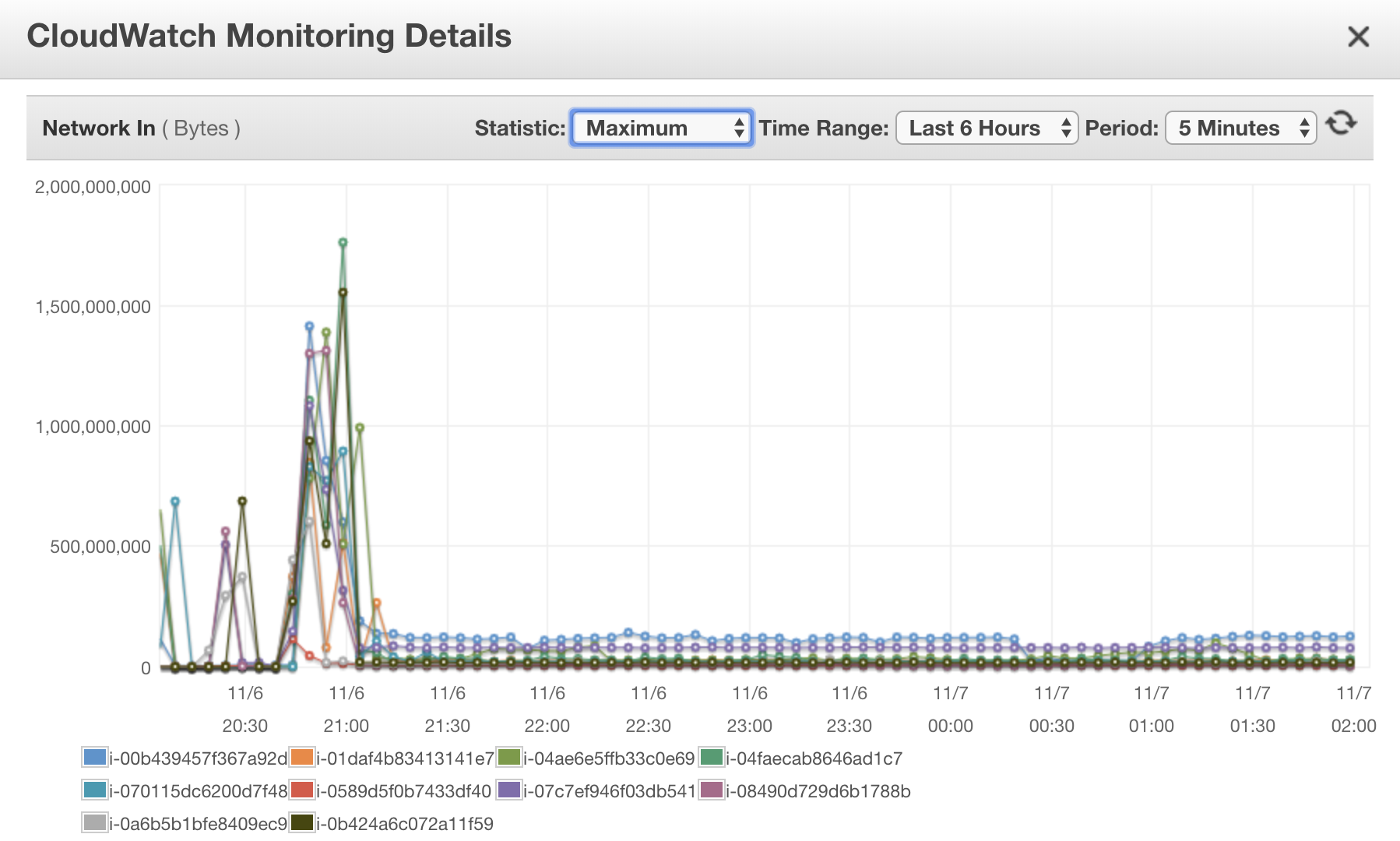

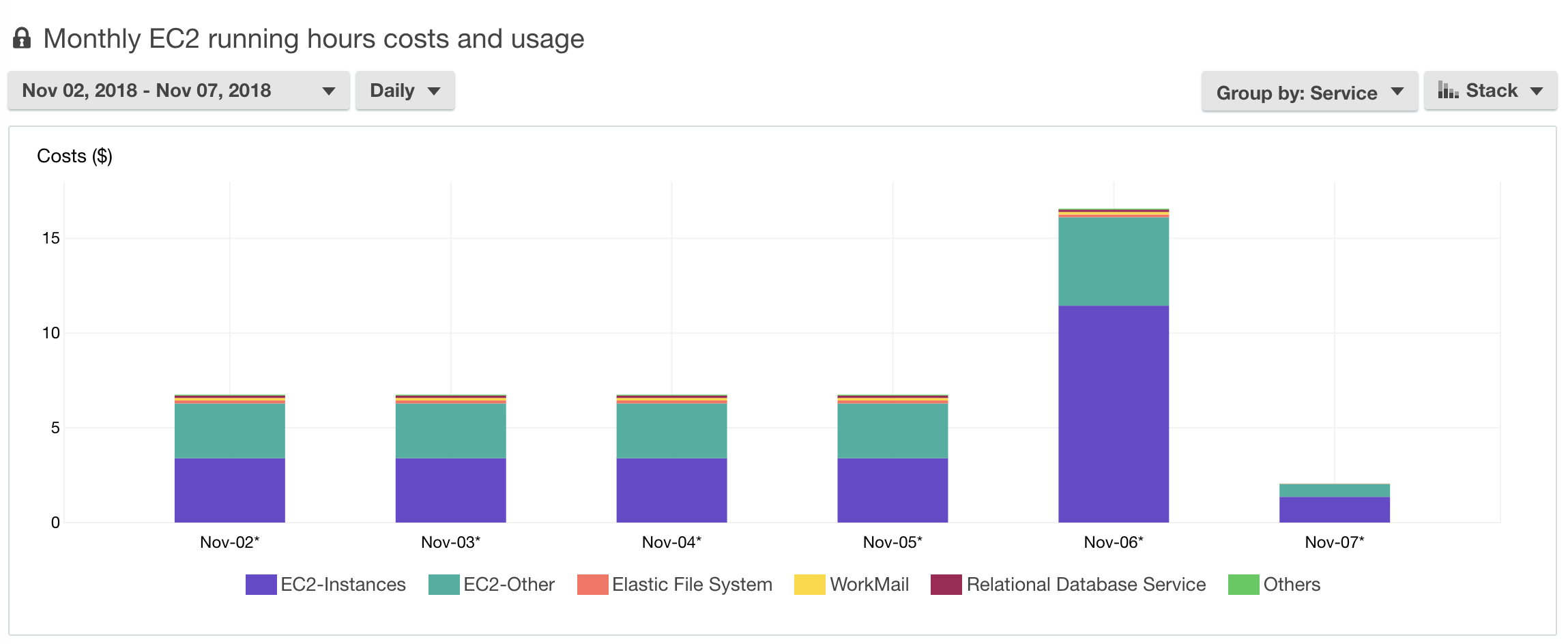

| Full Cluster (14 + 1) - recommended | 15 | 224G 112 vC 100G/VM | 16G, 8 vCores C5.2xLarge | 187Gb | 102Gb | 28 | 248 total 241 onap 217 up 0 error 24 config | 18 | 6+G master | 8.1G | 20181106 | $1.20 US/hour using the spot market | C | ||||

| Single VM (possible - not recommended) | 1 | 432G 64 vC 180G | 256G+ 32+ vCores | Rancher: 13G Kubernetes: 8G Top: 10G | 165Gb (after 24h) | 141Gb | 28 | 240 total 196 if RS and DS are set to 1 | 55 | 22 | 131G (including 75G dockers) | n/a | Max: 550/sec Idle: 220/sec | 20181105 20180101 | C | Tested on 432G/64vCore azure VM - R 1.6.22 K8S 1.11 updated 20190101 | |

| Developer 1-n pods | 1 | 16G | 16/32G 4-16 vCores | 14Gb | 10Gb | 3+ | 120+G | n/a | C | AAI+robot only |

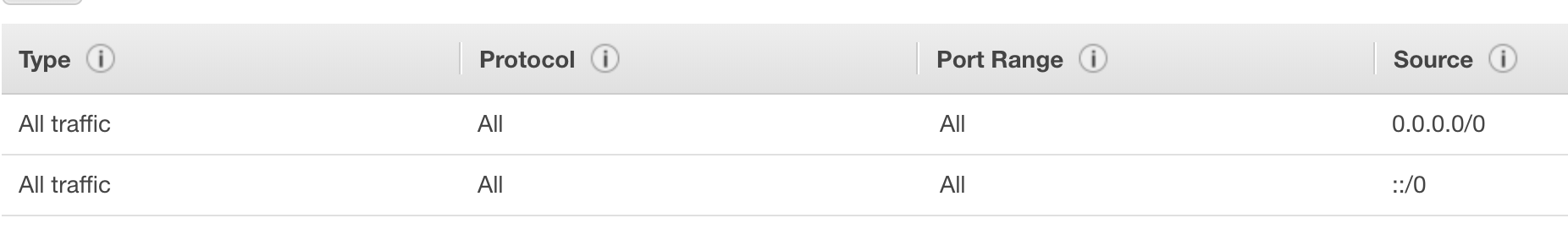

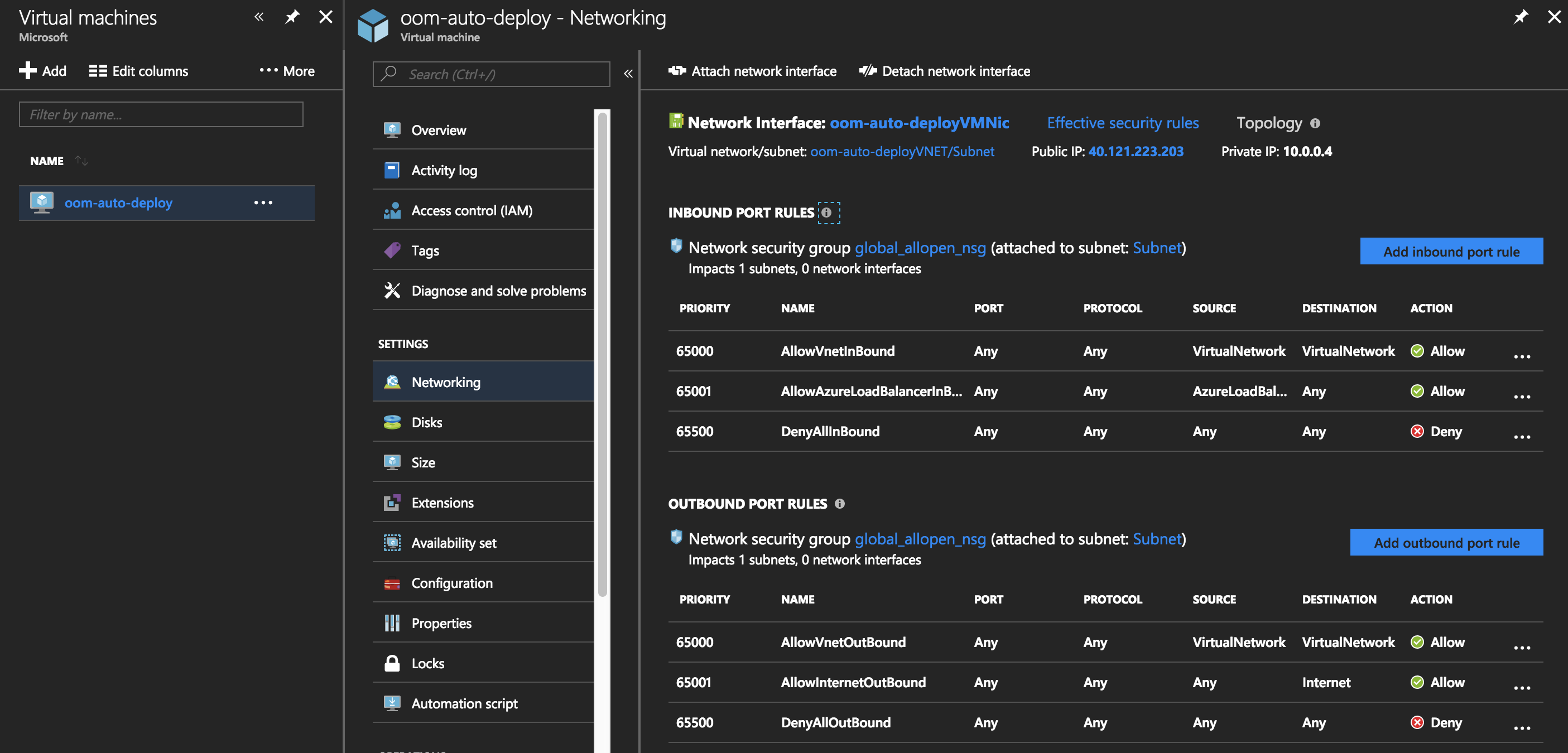

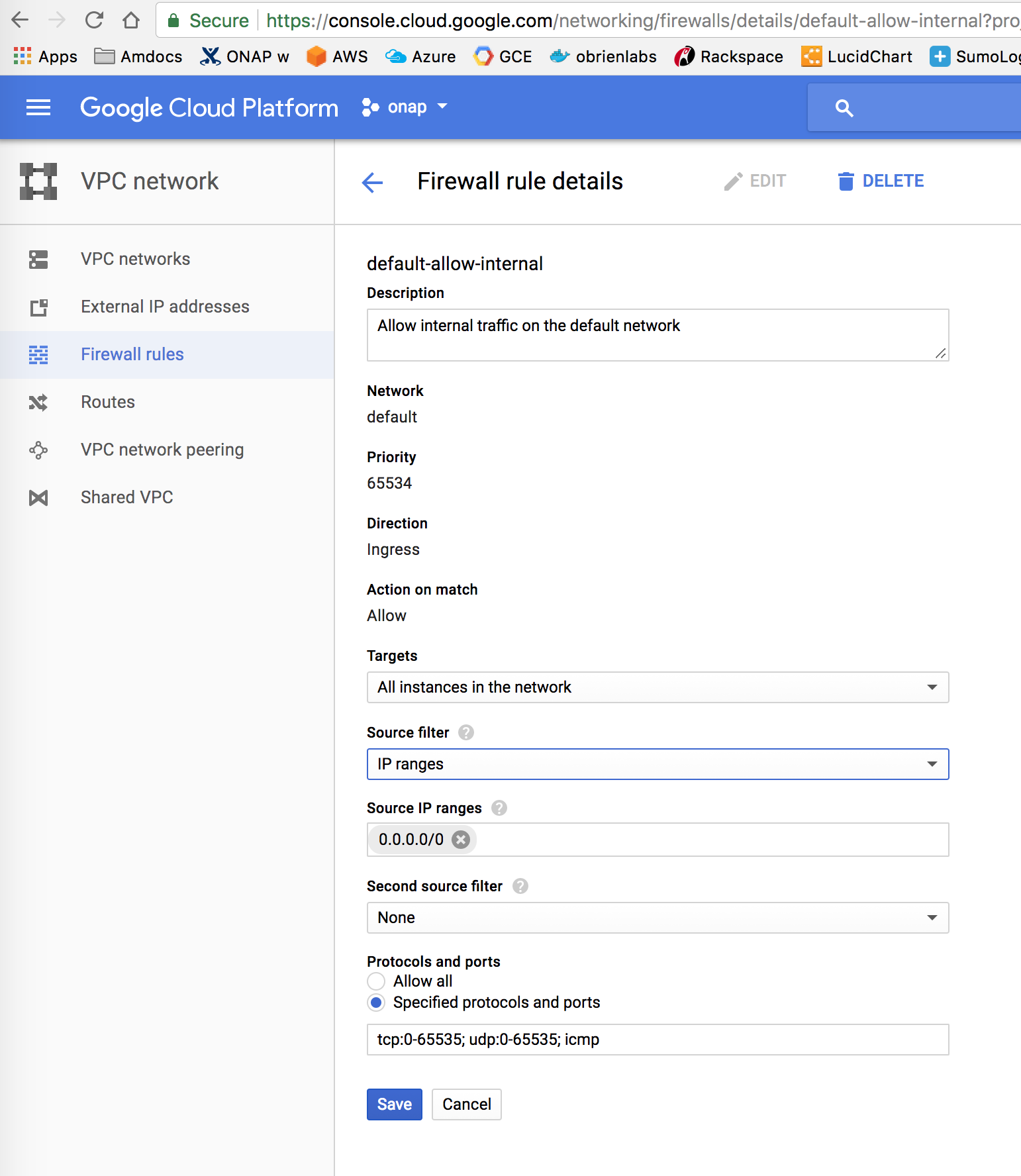

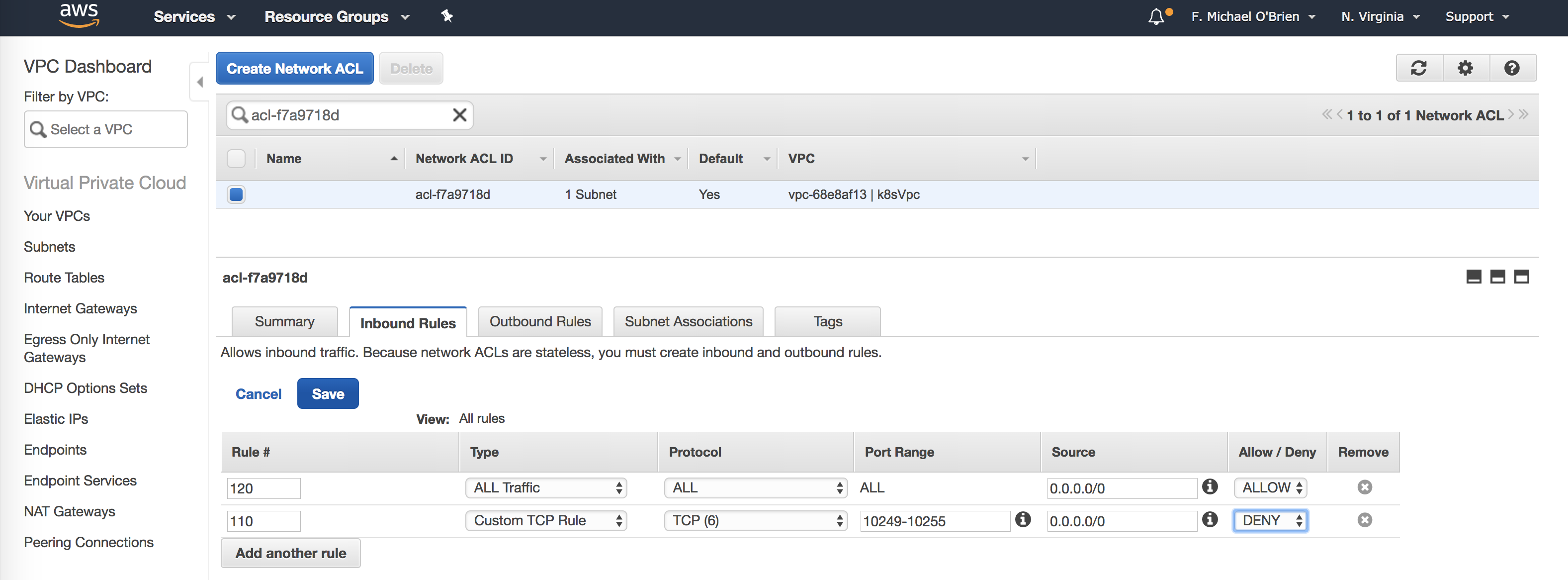

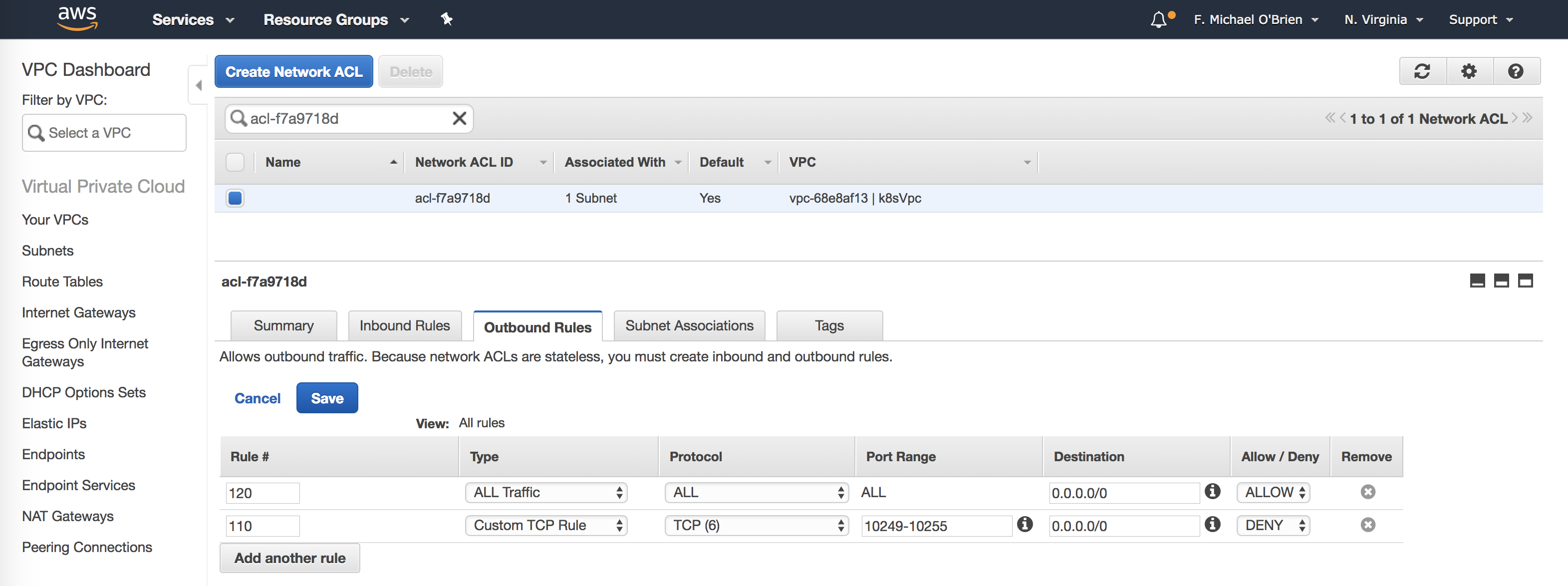

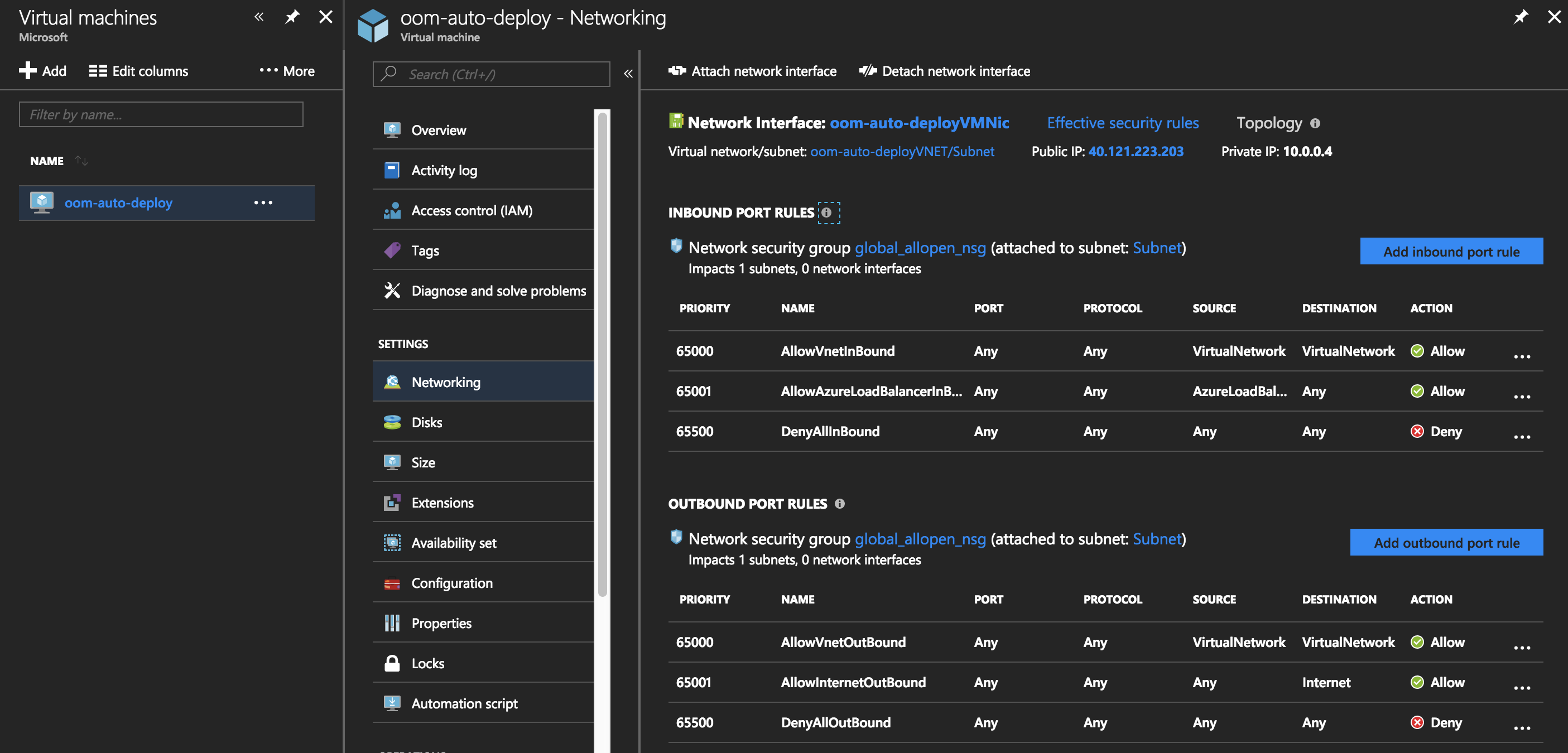

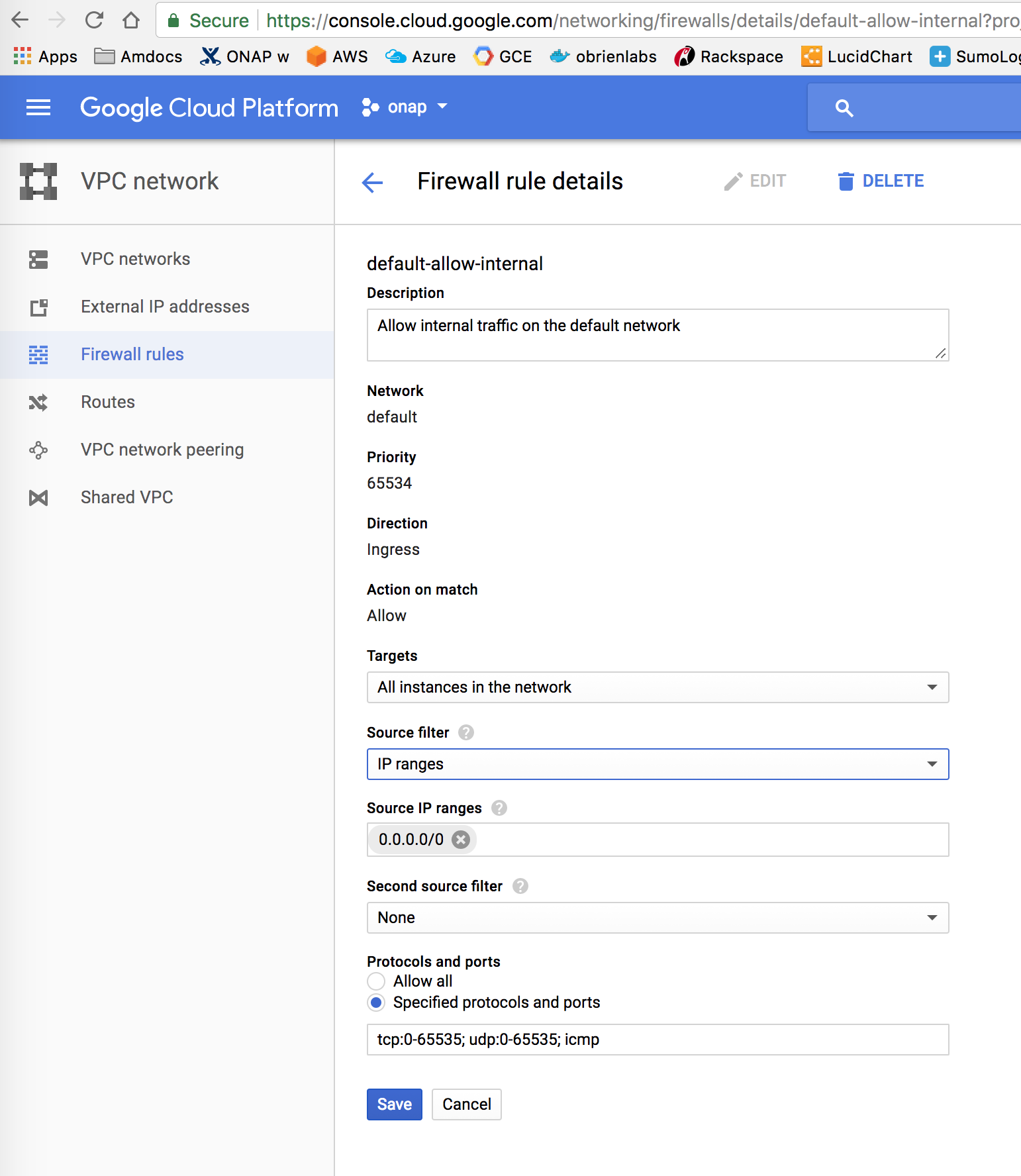

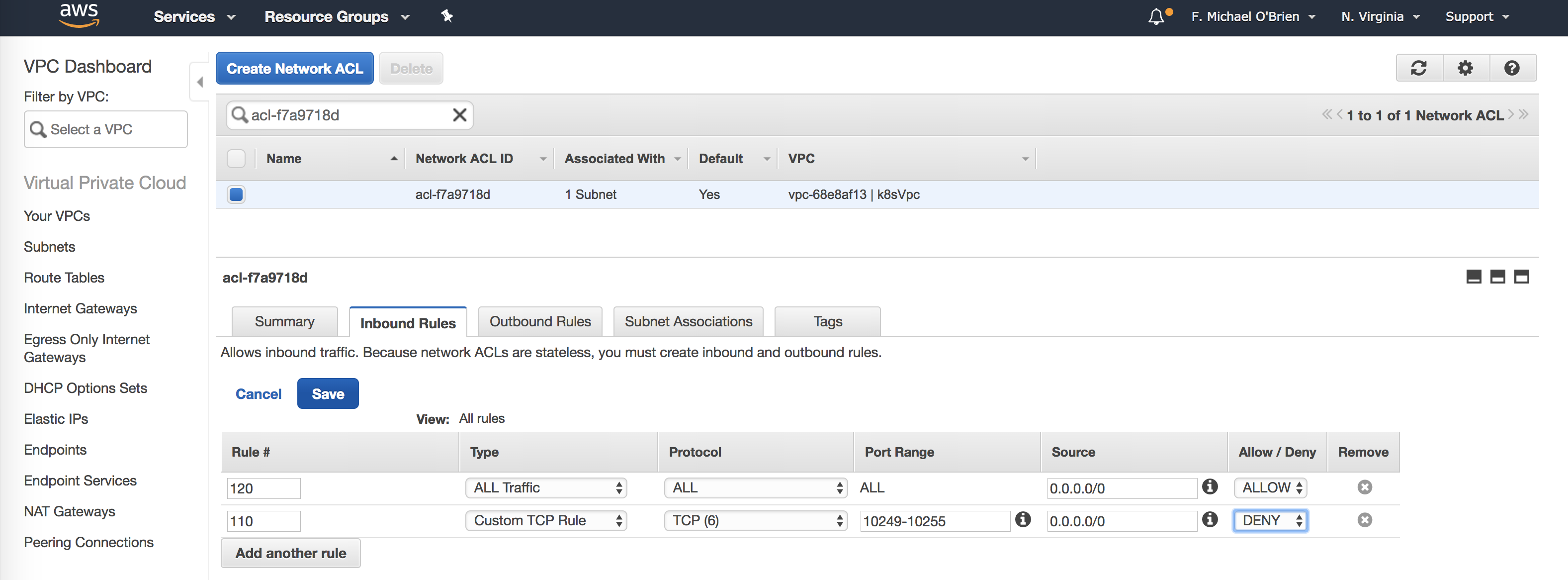

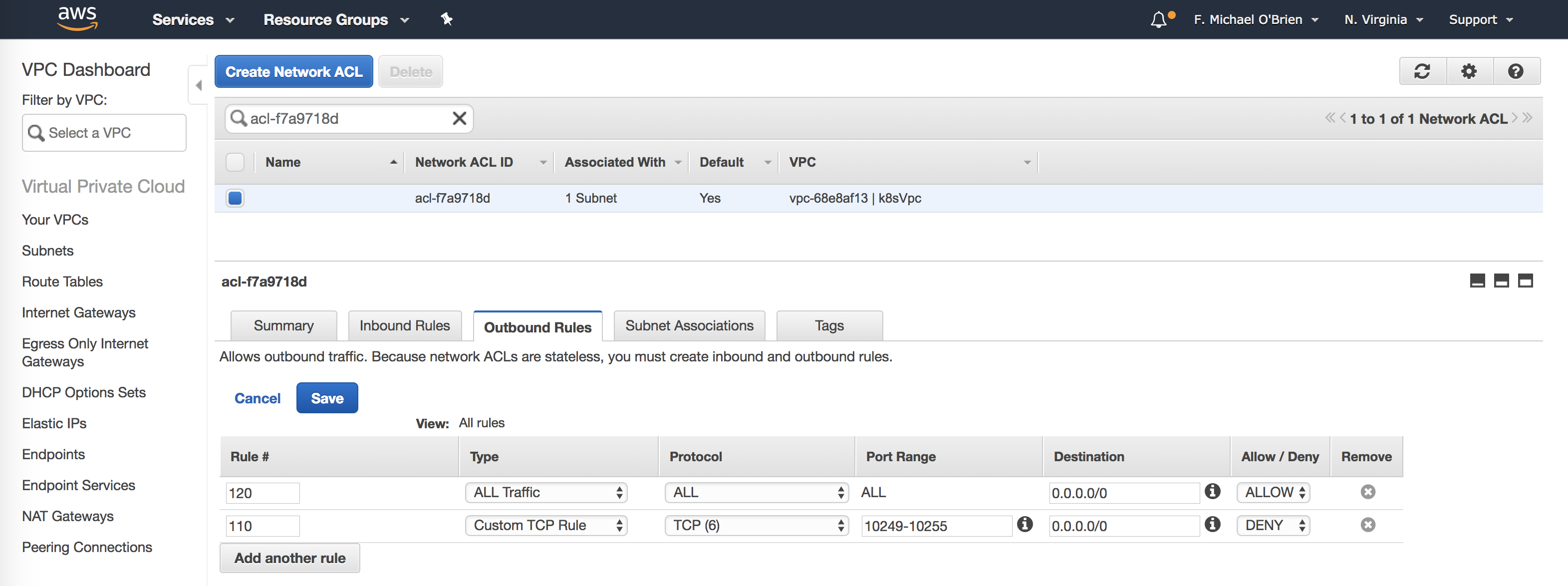

The VM should be open with no CIDR rules, but lock down ports 10249-10255 with RBAC.

If you cannot connect to your rancher server and see "dial tcp 127.0.0.1:8880: getsockopt: connection refused", the cause is usually security related. This line is the first to fail, for example:

https://git.onap.org/logging-analytics/tree/deploy/rancher/oom_rancher_setup.sh#n117

Check the server first (either of these). If the helm version command hangs on "server", the ports have an issue: run with all tcp/udp ports open to 0.0.0.0/0 and ::/0, and lock down the API on 10249-10255 via github oauth security from the rancher console to keep out crypto miners.

Rancher 1.6.25, Kubernetes 1.11.5, Docker 17.03, Helm 2.9.1

Specific to logging: we have a problem on any VM that contains AAI. The logstash container is being saturated there; see the 30+ percent VM.

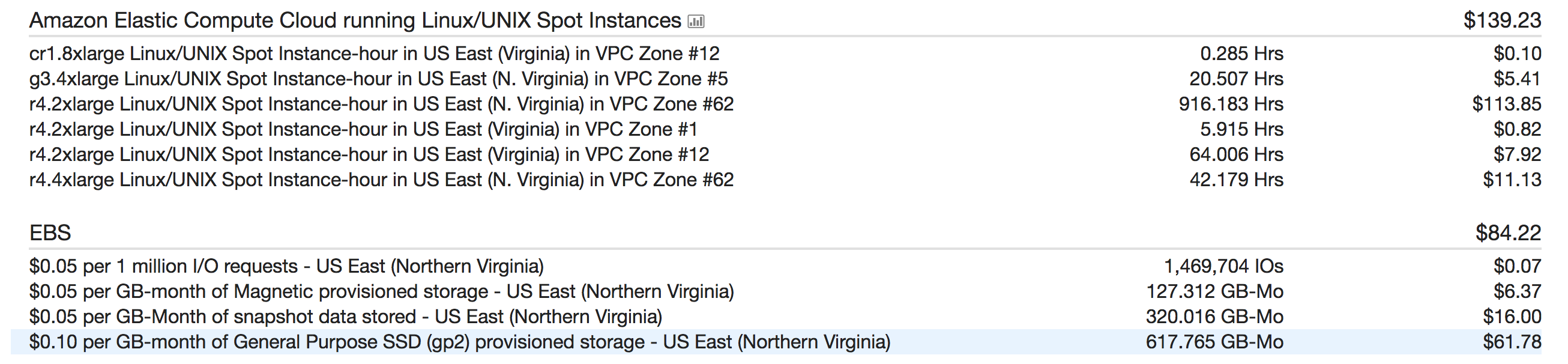

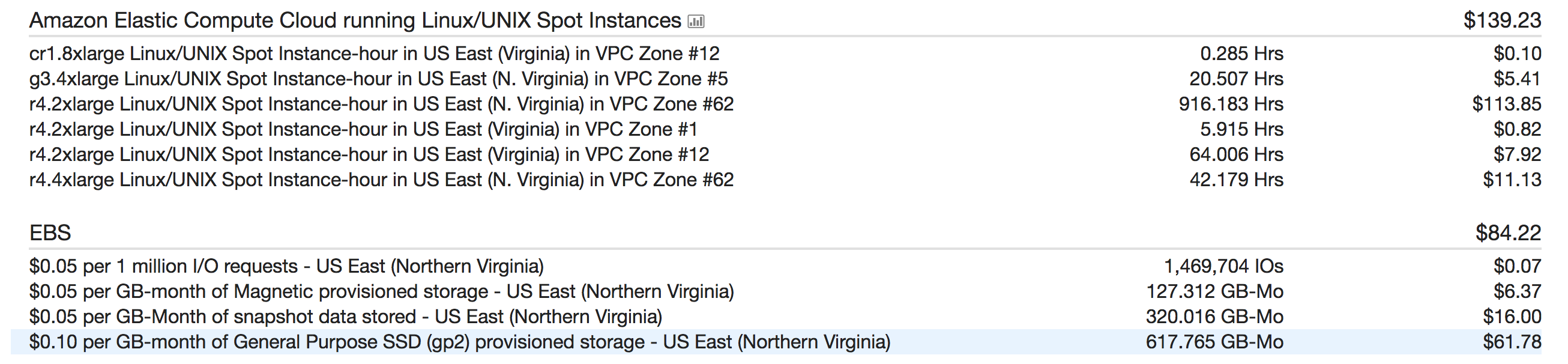

Using the spot market on AWS, we ran up a bill of $10 for 8 hours of 15 C5.2xLarge VMs (includes EBS but not DNS or EFS/NFS).
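As a rough cross-check of that bill, the hourly spot rate quoted in the sizing table ($1.20 US/hour, assumed here to cover the whole 15 VM cluster) can be multiplied out. `spot_cost` is a hypothetical helper name, and the rate is the one recorded on this page, not a current AWS price.

```shell
# Hypothetical helper: total cost = hourly cluster rate x hours.
spot_cost() {
  rate="$1"; hours="$2"
  awk -v r="$rate" -v h="$hours" 'BEGIN { printf "%.2f\n", r * h }'
}

# 8 hours at the $1.20/hour figure from the table
spot_cost 1.20 8
```

The result lands just under the $10 bill, which is consistent with the rate in the table.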

ubuntu@ip-172-31-40-250:~$ kubectl get pods --all-namespaces | wc -l

248

ubuntu@ip-172-31-40-250:~$ kubectl get pods --all-namespaces | grep onap | wc -l

241

ubuntu@ip-172-31-40-250:~$ kubectl get pods --all-namespaces | grep onap | grep -E '1/1|2/2' | wc -l

217

ubuntu@ip-172-31-40-250:~$ kubectl get pods --all-namespaces | grep onap | grep -E '0/|1/2' | wc -l

24

ubuntu@ip-172-31-40-250:~$ kubectl get pods --all-namespaces -o wide | grep onap | grep -E '0/|1/2'

onap onap-aaf-aaf-sms-preload-lvqx9 0/1 Completed 0 4h 10.42.75.71 ip-172-31-37-59.us-east-2.compute.internal <none>

onap onap-aaf-aaf-sshsm-distcenter-ql5f8 0/1 Completed 0 4h 10.42.75.223 ip-172-31-34-207.us-east-2.compute.internal <none>

onap onap-aaf-aaf-sshsm-testca-7rzcd 0/1 Completed 0 4h 10.42.18.37 ip-172-31-34-111.us-east-2.compute.internal <none>

onap onap-aai-aai-graphadmin-create-db-schema-26pfs 0/1 Completed 0 4h 10.42.14.14 ip-172-31-37-59.us-east-2.compute.internal <none>

onap onap-aai-aai-traversal-update-query-data-qlk7w 0/1 Completed 0 4h 10.42.88.122 ip-172-31-36-163.us-east-2.compute.internal <none>

onap onap-contrib-netbox-app-provisioning-gmmvj 0/1 Completed 0 4h 10.42.111.99 ip-172-31-41-229.us-east-2.compute.internal <none>

onap onap-contrib-netbox-app-provisioning-n6fw4 0/1 Error 0 4h 10.42.21.12 ip-172-31-36-163.us-east-2.compute.internal <none>

onap onap-contrib-netbox-app-provisioning-nc8ww 0/1 Error 0 4h 10.42.109.156 ip-172-31-41-110.us-east-2.compute.internal <none>

onap onap-contrib-netbox-app-provisioning-xcxds 0/1 Error 0 4h 10.42.152.223 ip-172-31-39-138.us-east-2.compute.internal <none>

onap onap-dmaap-dmaap-dr-node-6496d8f55b-jfvrm 0/1 Init:0/1 28 4h 10.42.95.32 ip-172-31-38-194.us-east-2.compute.internal <none>

onap onap-dmaap-dmaap-dr-prov-86f79c47f9-tldsp 0/1 CrashLoopBackOff 59 4h 10.42.76.248 ip-172-31-34-207.us-east-2.compute.internal <none>

onap onap-oof-music-cassandra-job-config-7mb5f 0/1 Completed 0 4h 10.42.38.249 ip-172-31-41-110.us-east-2.compute.internal <none>

onap onap-oof-oof-has-healthcheck-rpst7 0/1 Completed 0 4h 10.42.241.223 ip-172-31-39-138.us-east-2.compute.internal <none>

onap onap-oof-oof-has-onboard-5bd2l 0/1 Completed 0 4h 10.42.205.75 ip-172-31-38-194.us-east-2.compute.internal <none>

onap onap-portal-portal-db-config-qshzn 0/2 Completed 0 4h 10.42.112.46 ip-172-31-45-152.us-east-2.compute.internal <none>

onap onap-portal-portal-db-config-rk4m2 0/2 Init:Error 0 4h 10.42.57.79 ip-172-31-38-194.us-east-2.compute.internal <none>

onap onap-sdc-sdc-be-config-backend-2vw2q 0/1 Completed 0 4h 10.42.87.181 ip-172-31-39-138.us-east-2.compute.internal <none>

onap onap-sdc-sdc-be-config-backend-k57lh 0/1 Init:Error 0 4h 10.42.148.79 ip-172-31-45-152.us-east-2.compute.internal <none>

onap onap-sdc-sdc-cs-config-cassandra-vgnz2 0/1 Completed 0 4h 10.42.111.187 ip-172-31-34-111.us-east-2.compute.internal <none>

onap onap-sdc-sdc-es-config-elasticsearch-lkb9m 0/1 Completed 0 4h 10.42.20.202 ip-172-31-39-138.us-east-2.compute.internal <none>

onap onap-sdc-sdc-onboarding-be-cassandra-init-7zv5j 0/1 Completed 0 4h 10.42.218.1 ip-172-31-41-229.us-east-2.compute.internal <none>

onap onap-sdc-sdc-wfd-be-workflow-init-q8t7z 0/1 Completed 0 4h 10.42.255.91 ip-172-31-41-30.us-east-2.compute.internal <none>

onap onap-vid-vid-galera-config-4f274 0/1 Completed 0 4h 10.42.80.200 ip-172-31-33-223.us-east-2.compute.internal <none>

onap onap-vnfsdk-vnfsdk-init-postgres-lf659 0/1 Completed 0 4h 10.42.238.204 ip-172-31-38-194.us-east-2.compute.internal <none>

ubuntu@ip-172-31-40-250:~$ kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

ip-172-31-33-223.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 18.222.148.116 18.222.148.116 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-34-111.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 3.16.37.170 3.16.37.170 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-34-207.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 18.225.32.201 18.225.32.201 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-36-163.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 13.58.189.251 13.58.189.251 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-37-24.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 18.224.180.26 18.224.180.26 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-37-59.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 18.191.248.14 18.191.248.14 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-38-194.us-east-2.compute.internal Ready <none> 4h v1.11.2-rancher1 18.217.45.91 18.217.45.91 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-38-95.us-east-2.compute.internal Ready <none> 4h v1.11.2-rancher1 52.15.39.21 52.15.39.21 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-39-138.us-east-2.compute.internal Ready <none> 4h v1.11.2-rancher1 18.224.199.40 18.224.199.40 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-41-110.us-east-2.compute.internal Ready <none> 4h v1.11.2-rancher1 18.223.151.180 18.223.151.180 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-41-229.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 18.218.252.13 18.218.252.13 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-41-30.us-east-2.compute.internal Ready <none> 4h v1.11.2-rancher1 3.16.113.3 3.16.113.3 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-42-33.us-east-2.compute.internal Ready <none> 5h v1.11.2-rancher1 13.59.2.86 13.59.2.86 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ip-172-31-45-152.us-east-2.compute.internal Ready <none> 4h v1.11.2-rancher1 18.219.56.50 18.219.56.50 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

ubuntu@ip-172-31-40-250:~$ kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-172-31-33-223.us-east-2.compute.internal 852m 10% 13923Mi 90%

ip-172-31-34-111.us-east-2.compute.internal 1160m 14% 11643Mi 75%

ip-172-31-34-207.us-east-2.compute.internal 1101m 13% 7981Mi 51%

ip-172-31-36-163.us-east-2.compute.internal 656m 8% 13377Mi 87%

ip-172-31-37-24.us-east-2.compute.internal 401m 5% 8543Mi 55%

ip-172-31-37-59.us-east-2.compute.internal 711m 8% 10873Mi 70%

ip-172-31-38-194.us-east-2.compute.internal 1136m 14% 8195Mi 53%

ip-172-31-38-95.us-east-2.compute.internal 1195m 14% 9127Mi 59%

ip-172-31-39-138.us-east-2.compute.internal 296m 3% 10870Mi 70%

ip-172-31-41-110.us-east-2.compute.internal 2586m 32% 10950Mi 71%

ip-172-31-41-229.us-east-2.compute.internal 159m 1% 9138Mi 59%

ip-172-31-41-30.us-east-2.compute.internal 180m 2% 9862Mi 64%

ip-172-31-42-33.us-east-2.compute.internal 1573m 19% 6352Mi 41%

ip-172-31-45-152.us-east-2.compute.internal 1579m 19% 10633Mi 69%
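The four `grep | wc -l` counts above can be folded into one pass. This is a minimal sketch, not part of the deployment scripts: `onap_pod_summary` is a hypothetical name, and it assumes the column layout shown in this transcript (NAMESPACE first, READY third). Completed job pods count as not ready here, just as they do in the `'0/|1/2'` grep.

```shell
# Summarise READY state for the onap namespace from
# `kubectl get pods --all-namespaces` output read on stdin.
# Column 1 is NAMESPACE and column 3 is READY (ready/total).
onap_pod_summary() {
  awk '$1 == "onap" {
         total++
         split($3, r, "/")
         if (r[1] == r[2]) up++; else down++
       }
       END { printf "total=%d up=%d notready=%d\n", total, up, down }'
}

# usage: kubectl get pods --all-namespaces | onap_pod_summary
```

Comparing both halves of the READY column also catches 3/3 style pods that the `'1/1|2/2'` pattern would miss.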


see https://gerrit.onap.org/r/#/c/77850/

| key | value |
|---|---|
| firewalld off | systemctl disable firewalld |
| git, make, python | yum install git; yum groupinstall 'Development Tools' |
| IPv4 forwarding | add to /etc/sysctl.conf: net.ipv4.ip_forward = 1 |
| Networking enabled | sudo vi /etc/sysconfig/network-scripts/ifcfg-ens33 with ONBOOT=yes |
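The IPv4 forwarding row can be checked before the setup script runs. A minimal sketch, assuming the setting lives in a plain sysctl file; `ip_forward_enabled` is a hypothetical helper name and is not part of the ONAP scripts.

```shell
# Return 0 if the given sysctl file enables IPv4 forwarding.
ip_forward_enabled() {
  grep -Eq '^[[:space:]]*net\.ipv4\.ip_forward[[:space:]]*=[[:space:]]*1[[:space:]]*$' "$1"
}

# usage (path taken from the table above):
# ip_forward_enabled /etc/sysctl.conf ||
#   echo "net.ipv4.ip_forward = 1" | sudo tee -a /etc/sysctl.conf
```

The check only reads the file; a change to /etc/sysctl.conf still needs `sudo sysctl -p` or a reboot to take effect.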

Follow https://git.onap.org/logging-analytics/tree/deploy/rancher/oom_rancher_setup.sh

Run the following script on a clean Ubuntu 16.04 or Redhat RHEL 7.x (7.6) VM anywhere - it will provision and register your kubernetes system as a collocated master/host.

Ideally you install a clustered set of hosts away from the master VM. You can do this by deleting the host from the cluster after it is installed below, and then installing docker, NFS and the rancher agent docker on each host.

The cd.sh script will fix your VM for the vm.max_map_count limitation (see OOM-431 in the command comment below). If you don't run the cd.sh script, run the following command manually on each VM so that any elasticsearch container comes up properly. This is a base OS issue.

https://git.onap.org/logging-analytics/tree/deploy/cd.sh#n49

# fix virtual memory for onap-log:elasticsearch under Rancher 1.6.11 - OOM-431

sudo sysctl -w vm.max_map_count=262144
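To apply the fix only where it is needed, the current value can be compared first. This is a sketch under the assumption that 262144 (the value in the command above) is the minimum required; `map_count_too_low` and `REQUIRED_MAP_COUNT` are hypothetical names.

```shell
# Required value taken from the sysctl command above.
REQUIRED_MAP_COUNT=262144

# Return 0 if the current value (first argument) is below the requirement.
map_count_too_low() {
  [ "$1" -lt "$REQUIRED_MAP_COUNT" ]
}

# usage on a host:
# if map_count_too_low "$(sysctl -n vm.max_map_count)"; then
#   sudo sysctl -w vm.max_map_count="$REQUIRED_MAP_COUNT"
# fi
```

Note that `sysctl -w` does not survive a reboot; persisting the value would need an entry in /etc/sysctl.conf.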

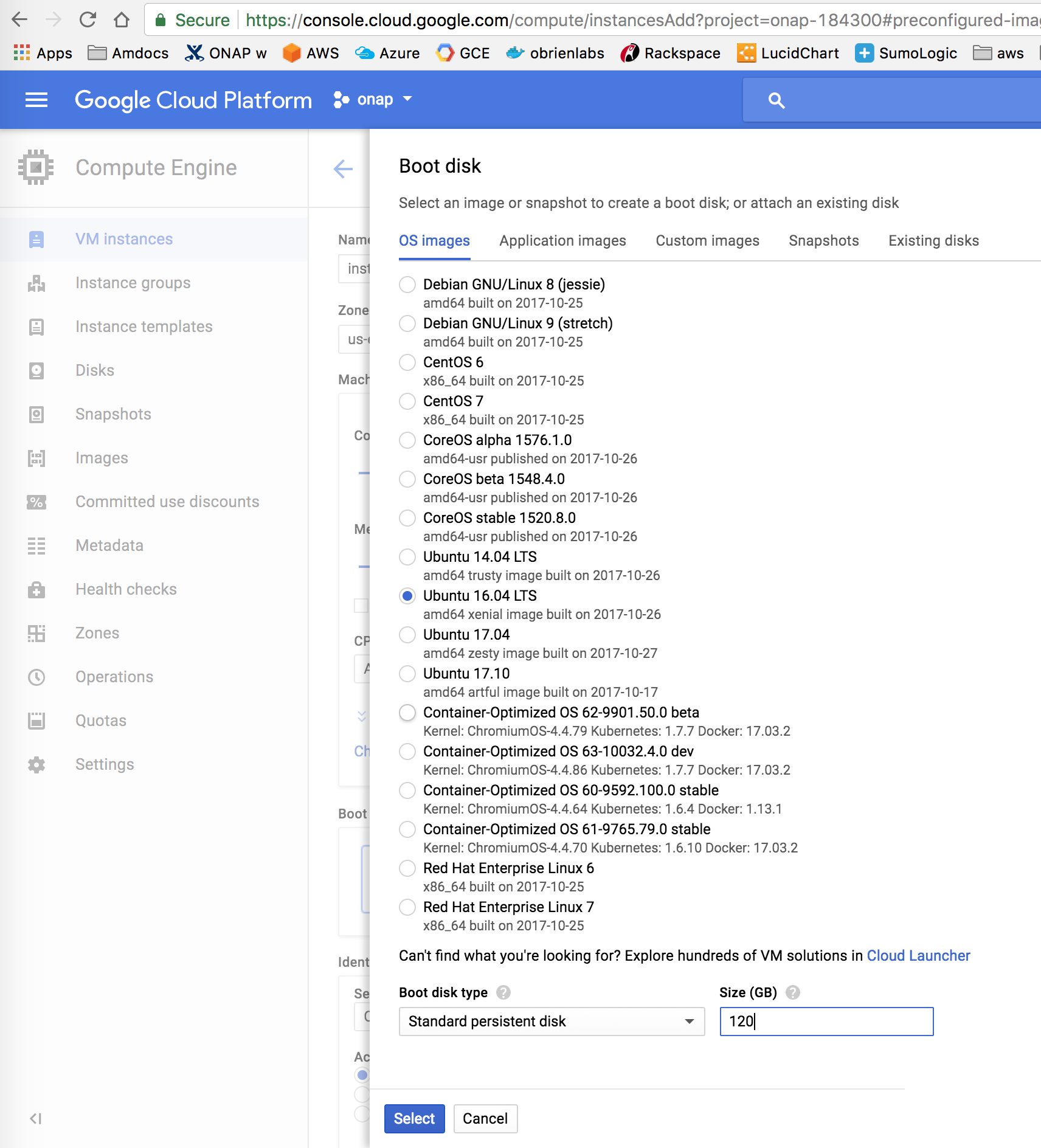

Create a single VM - 256G+

See recommended cluster configurations on ONAP Deployment Specification for Finance and Operations#AmazonAWS

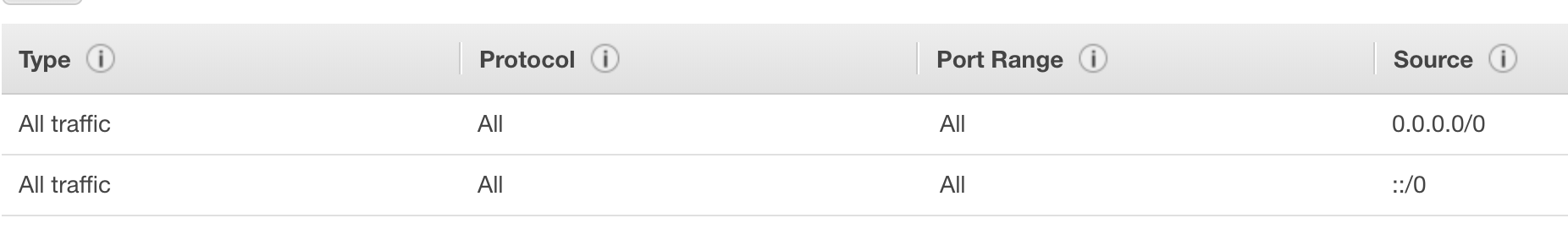

Create a 0.0.0.0/0 ::/0 open security group

Use github to OAUTH authenticate your cluster just after installing it.

Last test 20190305 using 3.0.1-ONAP

ONAP Development#Change max-pods from default 110 pod limit

# 0 - verify the security group has all protocols (TCP/UDP) for 0.0.0.0/0 and ::/0

# to be safe edit/make sure dns resolution is setup to the host

ubuntu@ld:~$ sudo cat /etc/hosts

127.0.0.1 cd.onap.info

# 1 - configure combined master/host VM - 26 min

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo cp logging-analytics/deploy/rancher/oom_rancher_setup.sh .

sudo ./oom_rancher_setup.sh -b master -s <your domain/ip> -e onap

# to deploy more than 110 pods per vm

before the environment (1a7) is created from the kubernetes template (1pt2) - at the waiting 3 min mark - edit it via https://wiki.onap.org/display/DW/ONAP+Development#ONAPDevelopment-Changemax-podsfromdefault110podlimit

--max-pods=900

https://lists.onap.org/g/onap-discuss/topic/oom_110_kubernetes_pod/25213556?p=,,,20,0,0,0::recentpostdate%2Fsticky,,,20,2,0,25213556

in "additional kubelet flags"

--max-pods=500

# on a 244G R4.8xlarge vm - 26 min later k8s cluster is up

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system heapster-6cfb49f776-5pq45 1/1 Running 0 10m

kube-system kube-dns-75c8cb4ccb-7dlsh 3/3 Running 0 10m

kube-system kubernetes-dashboard-6f4c8b9cd5-v625c 1/1 Running 0 10m

kube-system monitoring-grafana-76f5b489d5-zhrjc 1/1 Running 0 10m

kube-system monitoring-influxdb-6fc88bd58d-9494h 1/1 Running 0 10m

kube-system tiller-deploy-8b6c5d4fb-52zmt 1/1 Running 0 2m

# 3 - secure via github oauth the master - immediately to lock out crypto miners

http://cd.onap.info:8880

# check the master cluster

ubuntu@ip-172-31-14-89:~$ kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-172-31-8-245.us-east-2.compute.internal 179m 2% 2494Mi 4%

ubuntu@ip-172-31-14-89:~$ kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

ip-172-31-8-245.us-east-2.compute.internal Ready <none> 13d v1.10.3-rancher1 172.17.0.1 Ubuntu 16.04.1 LTS 4.4.0-1049-aws docker://17.3.2

# 7 - after cluster is up - run cd.sh script to get onap up - customize your values.yaml - the 2nd time you run the script - a clean install - will clone new oom repo

# get the dev.yaml and set any pods you want up to true as well as fill out the openstack parameters

sudo wget https://git.onap.org/oom/plain/kubernetes/onap/resources/environments/dev.yaml

sudo cp dev.yaml dev0.yaml

sudo vi dev0.yaml

sudo cp dev0.yaml dev1.yaml

sudo cp logging-analytics/deploy/cd.sh .

# this does a prepull (-p), clones 3.0.0-ONAP, managed install -f true

sudo ./cd.sh -b 3.0.0-ONAP -e onap -p true -n nexus3.onap.org:10001 -f true -s 300 -c true -d true -w false -r false

# check around 55 min (on a 256G single node - with 32 vCores)

pods/failed/up @ min and ram

161/13/153 @ 50m 107g

@55 min

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep onap | grep -E '1/1|2/2' | wc -l

152

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep -E '0/|1/2'

onap dep-deployment-handler-5789b89d4b-s6fzw 1/2 Running 0 8m

onap dep-service-change-handler-76dcd99f84-fchxd 0/1 ContainerCreating 0 3m

onap onap-aai-champ-68ff644d85-rv7tr 0/1 Running 0 53m

onap onap-aai-gizmo-856f86d664-q5pvg 1/2 CrashLoopBackOff 9 53m

onap onap-oof-85864d6586-zcsz5 0/1 ImagePullBackOff 0 53m

onap onap-pomba-kibana-d76b6dd4c-sfbl6 0/1 Init:CrashLoopBackOff 7 53m

onap onap-pomba-networkdiscovery-85d76975b7-mfk92 1/2 CrashLoopBackOff 9 53m

onap onap-pomba-networkdiscoveryctxbuilder-c89786dfc-qnlx9 1/2 CrashLoopBackOff 9 53m

onap onap-vid-84c88db589-8cpgr 1/2 CrashLoopBackOff 7 52m

Note: DCAE has 2 sets of orchestration after the initial k8s orchestration - another at 57 min

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep -E '0/|1/2'

onap dep-dcae-prh-6b5c6ff445-pr547 0/2 ContainerCreating 0 2m

onap dep-dcae-tca-analytics-7dbd46d5b5-bgrn9 0/2 ContainerCreating 0 1m

onap dep-dcae-ves-collector-59d4ff58f7-94rpq 0/2 ContainerCreating 0 1m

onap onap-aai-champ-68ff644d85-rv7tr 0/1 Running 0 57m

onap onap-aai-gizmo-856f86d664-q5pvg 1/2 CrashLoopBackOff 10 57m

onap onap-oof-85864d6586-zcsz5 0/1 ImagePullBackOff 0 57m

onap onap-pomba-kibana-d76b6dd4c-sfbl6 0/1 Init:CrashLoopBackOff 8 57m

onap onap-pomba-networkdiscovery-85d76975b7-mfk92 1/2 CrashLoopBackOff 11 57m

onap onap-pomba-networkdiscoveryctxbuilder-c89786dfc-qnlx9 1/2 Error 10 57m

onap onap-vid-84c88db589-8cpgr 1/2 CrashLoopBackOff 9 57m

at 1 hour

ubuntu@ip-172-31-20-218:~$ free

total used free shared buff/cache available

Mem: 251754696 111586672 45000724 193628 95167300 137158588

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep onap | wc -l

164

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep onap | grep -E '1/1|2/2' | wc -l

155

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep -E '0/|1/2' | wc -l

8

ubuntu@ip-172-31-20-218:~$ kubectl get pods --all-namespaces | grep -E '0/|1/2'

onap dep-dcae-ves-collector-59d4ff58f7-94rpq 1/2 Running 0 4m

onap onap-aai-champ-68ff644d85-rv7tr 0/1 Running 0 59m

onap onap-aai-gizmo-856f86d664-q5pvg 1/2 CrashLoopBackOff 10 59m

onap onap-oof-85864d6586-zcsz5 0/1 ImagePullBackOff 0 59m

onap onap-pomba-kibana-d76b6dd4c-sfbl6 0/1 Init:CrashLoopBackOff 8 59m

onap onap-pomba-networkdiscovery-85d76975b7-mfk92 1/2 CrashLoopBackOff 11 59m

onap onap-pomba-networkdiscoveryctxbuilder-c89786dfc-qnlx9 1/2 CrashLoopBackOff 10 59m

onap onap-vid-84c88db589-8cpgr 1/2 CrashLoopBackOff 9 59m

ubuntu@ip-172-31-20-218:~$ df

Filesystem 1K-blocks Used Available Use% Mounted on

udev 125869392 0 125869392 0% /dev

tmpfs 25175472 54680 25120792 1% /run

/dev/xvda1 121914320 91698036 30199900 76% /

tmpfs 125877348 30312 125847036 1% /dev/shm

tmpfs 5120 0 5120 0% /run/lock

tmpfs 125877348 0 125877348 0% /sys/fs/cgroup

tmpfs 25175472 0 25175472 0% /run/user/1000

todo: verify the release is there after a helm install - as the configMap size issue is breaking the release for now
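Since a single VM install runs into the default 110 pods per node limit mentioned in the procedure above, it can help to see which nodes are over a given limit. A minimal sketch: `nodes_over_pod_limit` is a hypothetical helper, and it assumes NODE is the eighth column of `kubectl get pods --all-namespaces -o wide`, as in the Kubernetes 1.11 output on this page (newer kubectl versions may lay out the columns differently).

```shell
# Print "node count" for every node running more pods than the limit.
# $1 = limit (defaults to 110); reads `kubectl get pods --all-namespaces -o wide`
# output on stdin and assumes NODE is column 8.
nodes_over_pod_limit() {
  limit="${1:-110}"
  awk -v limit="$limit" '
    $1 != "NAMESPACE" && NF >= 8 { count[$8]++ }
    END { for (n in count) if (count[n] > limit) print n, count[n] }'
}

# usage: kubectl get pods --all-namespaces -o wide | nodes_over_pod_limit 110
```

An empty result means no node exceeds the limit, so the `--max-pods` kubelet flag is not yet the bottleneck.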

Create a single VM - 256G+

ubuntu@a-onap-dmz-nodelete:~$ ./oom_deployment.sh -b master -s att.onap.cloud -e onap -r a_ONAP_CD_master -t _arm_deploy_onap_cd.json -p _arm_deploy_onap_cd_z_parameters.json

# register the IP to DNS with route53 for att.onap.info - using this for the ONAP academic summit on the 22nd

13.68.113.104 = att.onap.cloud

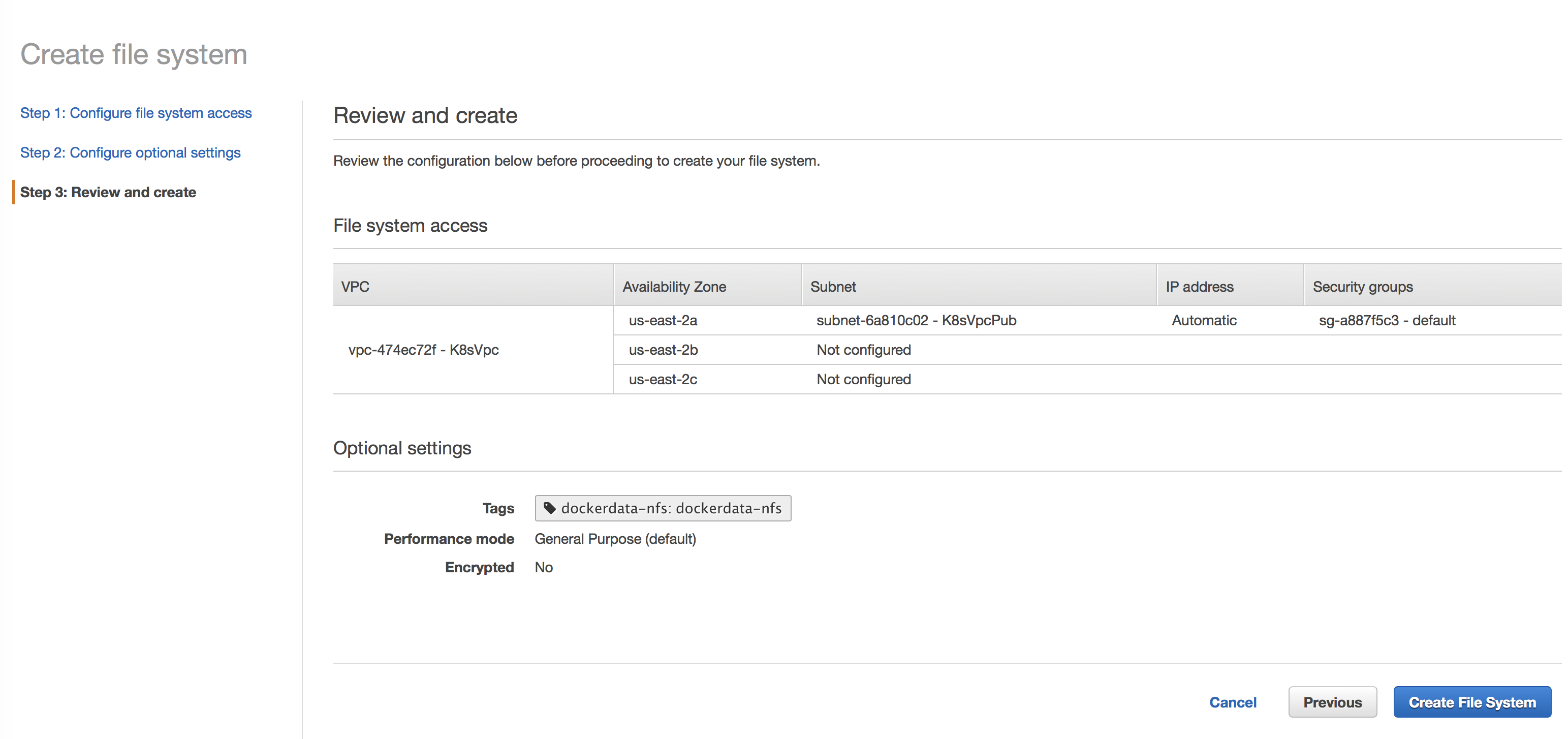

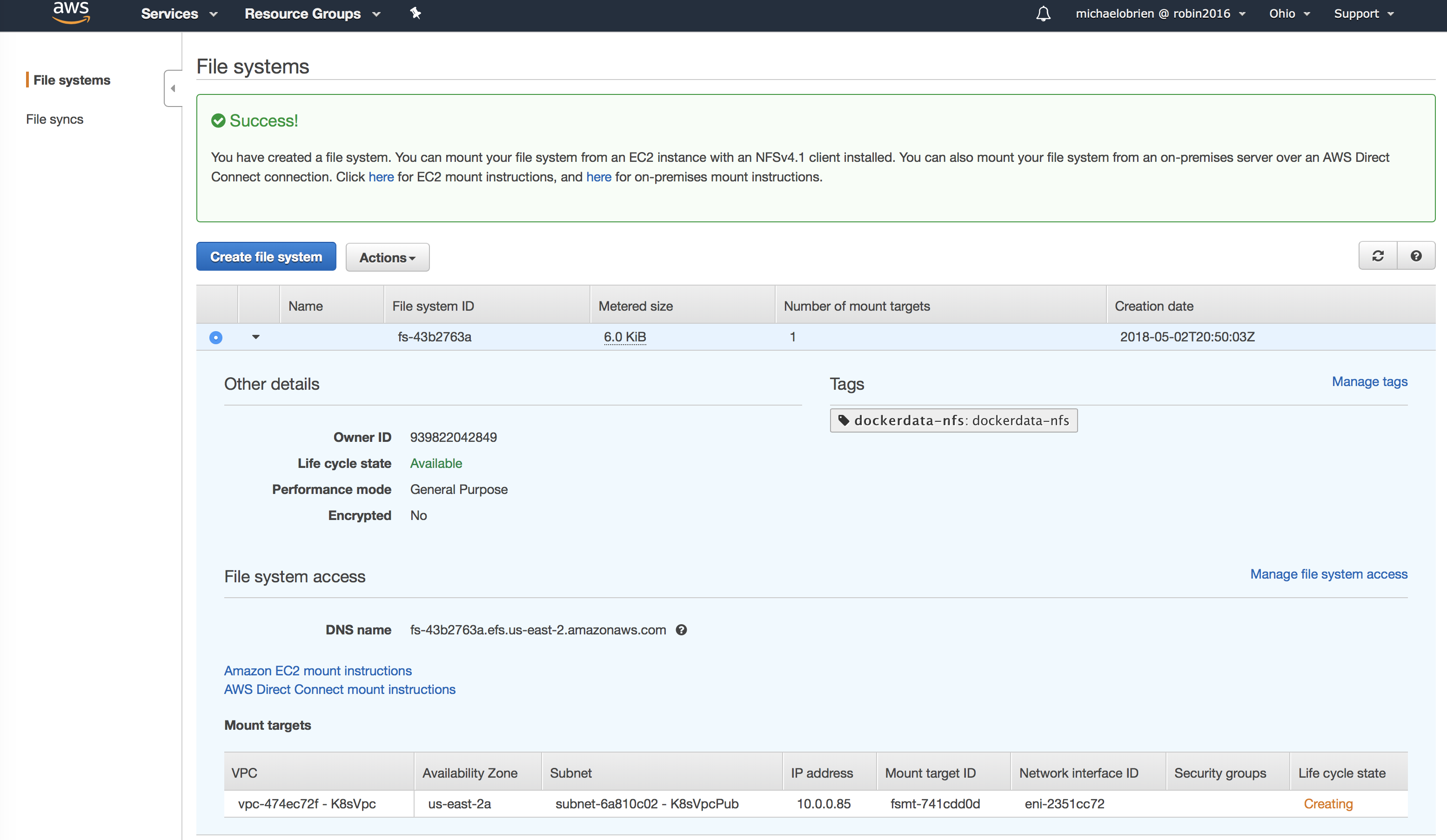

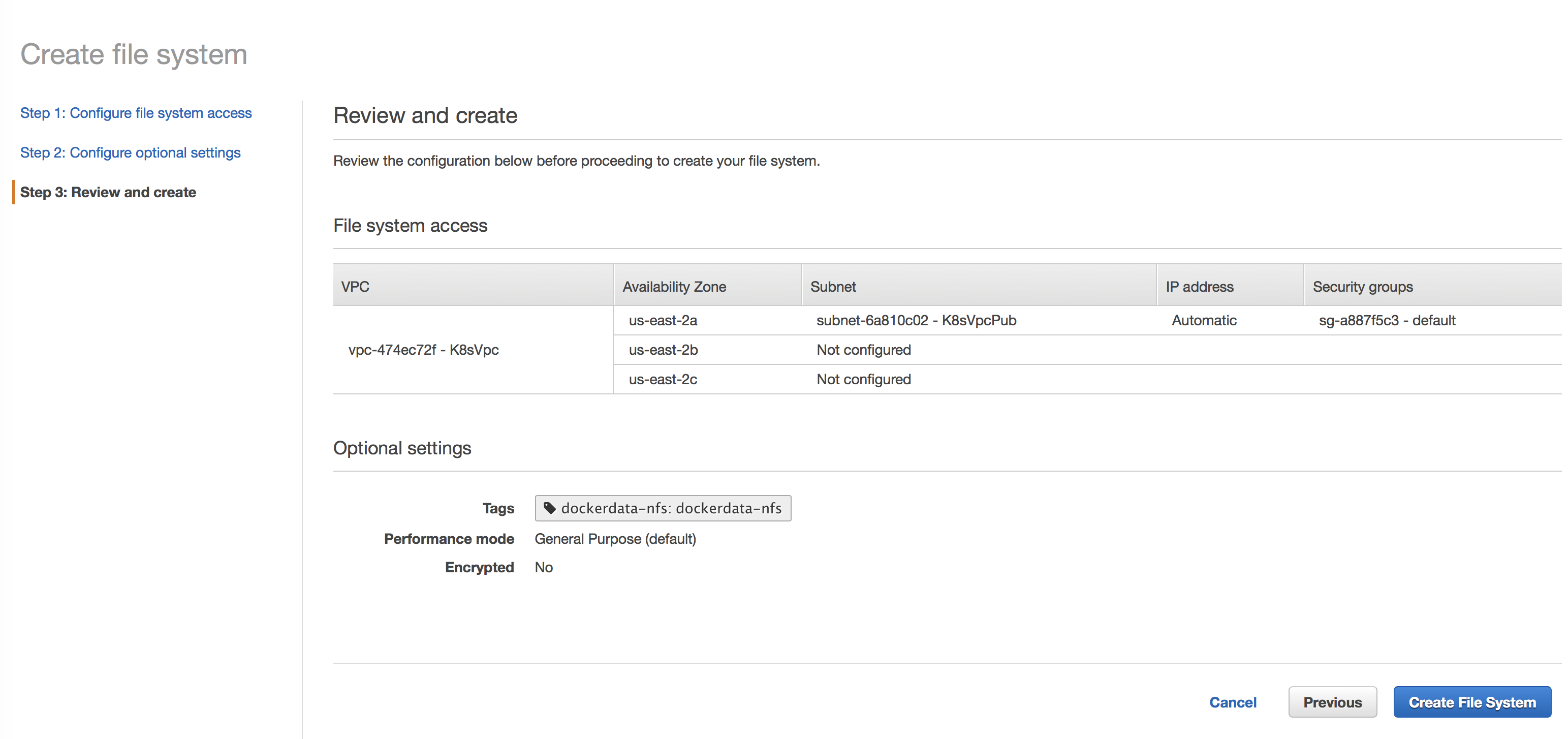

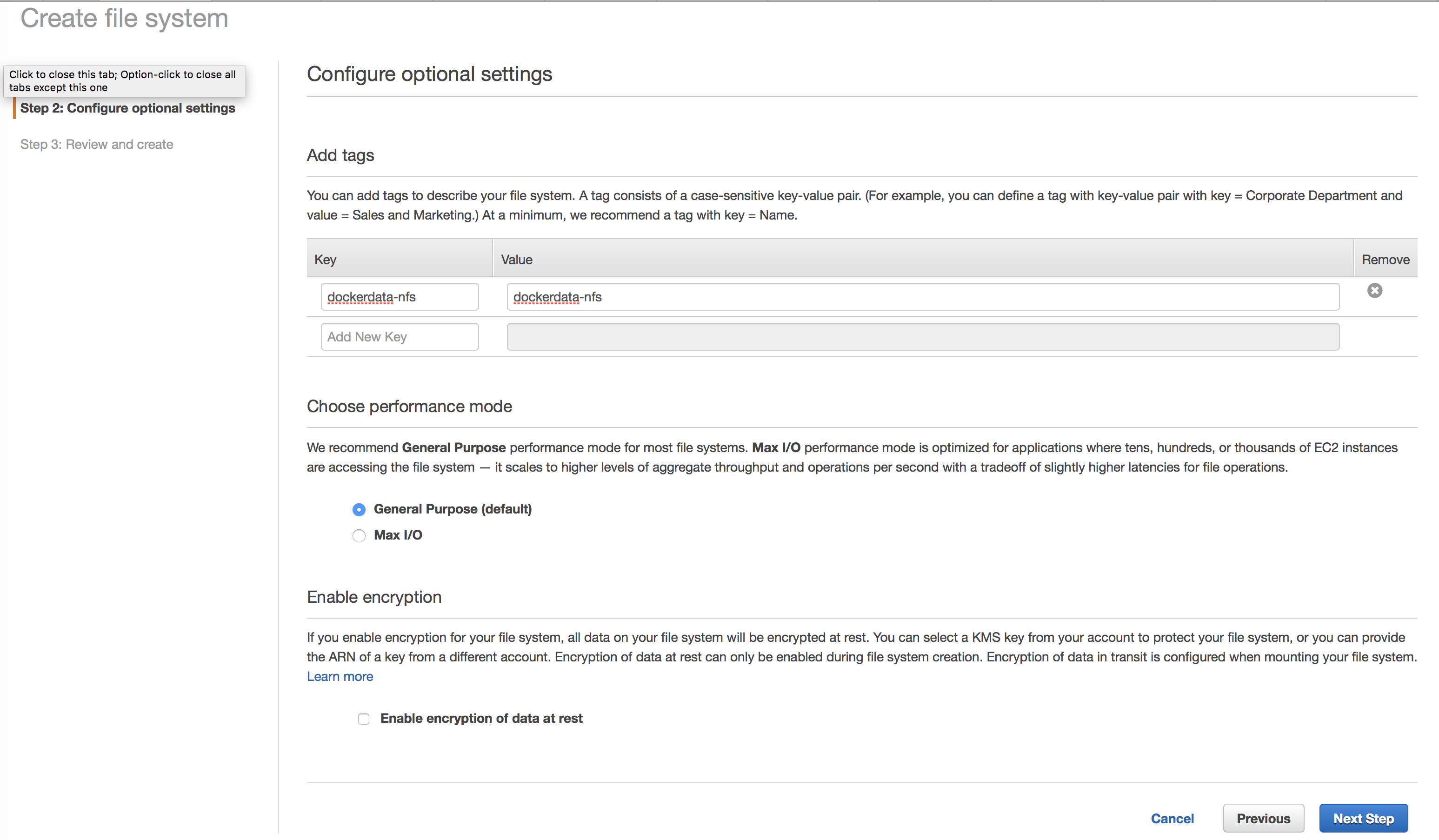

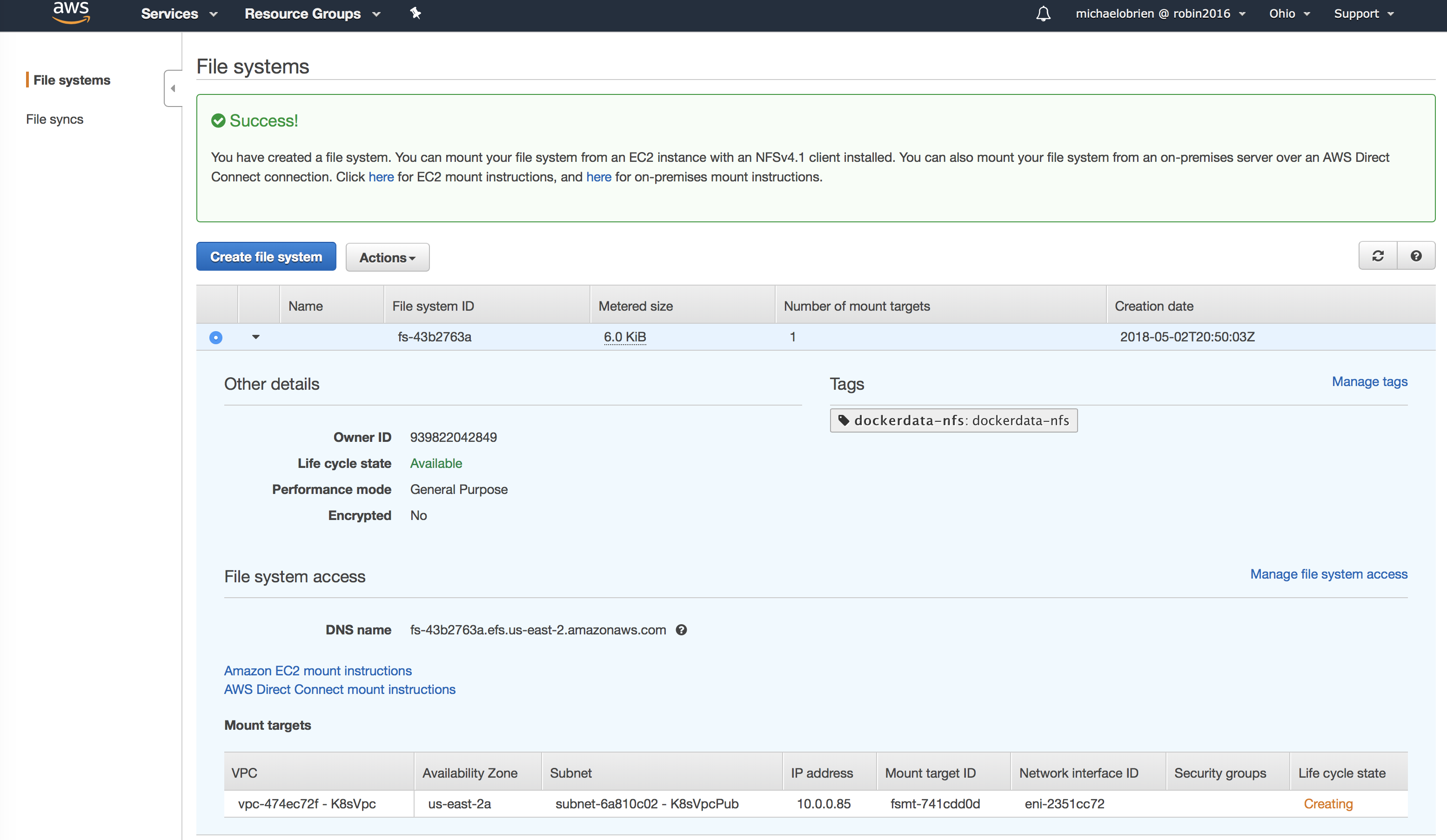

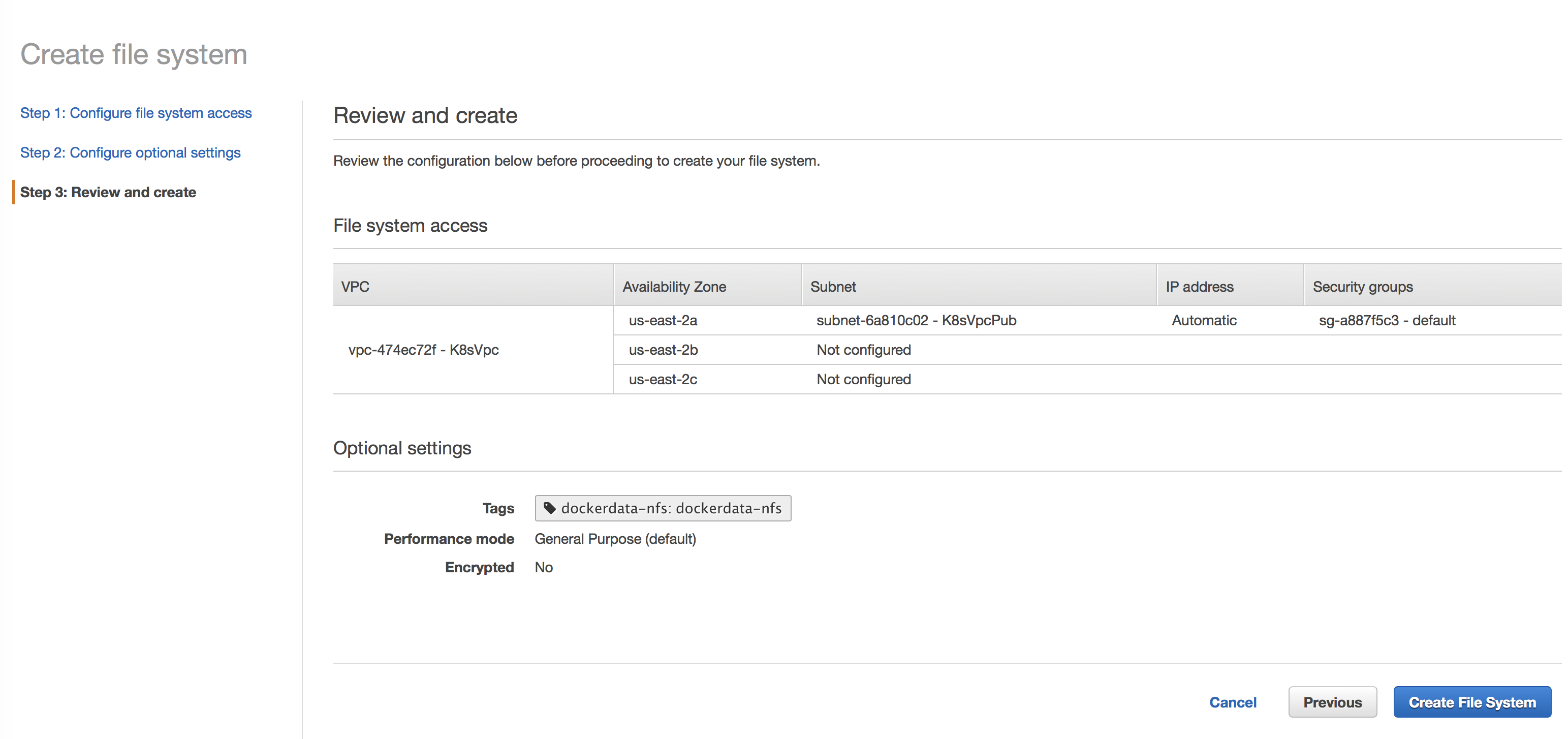

Add an NFS (EFS on AWS) share

Create a 1 + N cluster

See recommended cluster configurations on ONAP Deployment Specification for Finance and Operations#AmazonAWS

Create a 0.0.0.0/0 ::/0 open security group

Use github to OAUTH authenticate your cluster just after installing it.

Last tested on ld.onap.info 20181029

# 0 - verify the security group has all protocols (TCP/UDP) for 0.0.0.0/0 and ::/0

# 1 - configure master - 15 min

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo logging-analytics/deploy/rancher/oom_rancher_setup.sh -b master -s <your domain/ip> -e onap

# on a 64G R4.2xlarge vm - 23 min later k8s cluster is up

kubectl get pods --all-namespaces

kube-system heapster-76b8cd7b5-g7p6n 1/1 Running 0 8m

kube-system kube-dns-5d7b4487c9-jjgvg 3/3 Running 0 8m

kube-system kubernetes-dashboard-f9577fffd-qldrw 1/1 Running 0 8m

kube-system monitoring-grafana-997796fcf-g6tr7 1/1 Running 0 8m

kube-system monitoring-influxdb-56fdcd96b-x2kvd 1/1 Running 0 8m

kube-system tiller-deploy-54bcc55dd5-756gn 1/1 Running 0 2m

# 2 - secure via github oauth the master - immediately to lock out crypto miners

http://ld.onap.info:8880

# 3 - delete the master from the hosts in rancher

http://ld.onap.info:8880

# 4 - create NFS share on master

https://us-east-2.console.aws.amazon.com/efs/home?region=us-east-2#/filesystems/fs-92xxxxx

# add -h 1.2.10 (if upgrading from 1.6.14 to 1.6.18 of rancher)

sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n false -s <your domain/ip> -e fs-nnnnnn1b -r us-west-1 -t 371AEDC88zYAZdBXPM -c true -v true

# 5 - create NFS share and register each node - do this for all nodes

sudo git clone https://gerrit.onap.org/r/logging-analytics

# add -h 1.2.10 (if upgrading from 1.6.14 to 1.6.18 of rancher)

sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n true -s <your domain/ip> -e fs-nnnnnn1b -r us-west-1 -t 371AEDC88zYAZdBXPM -c true -v true

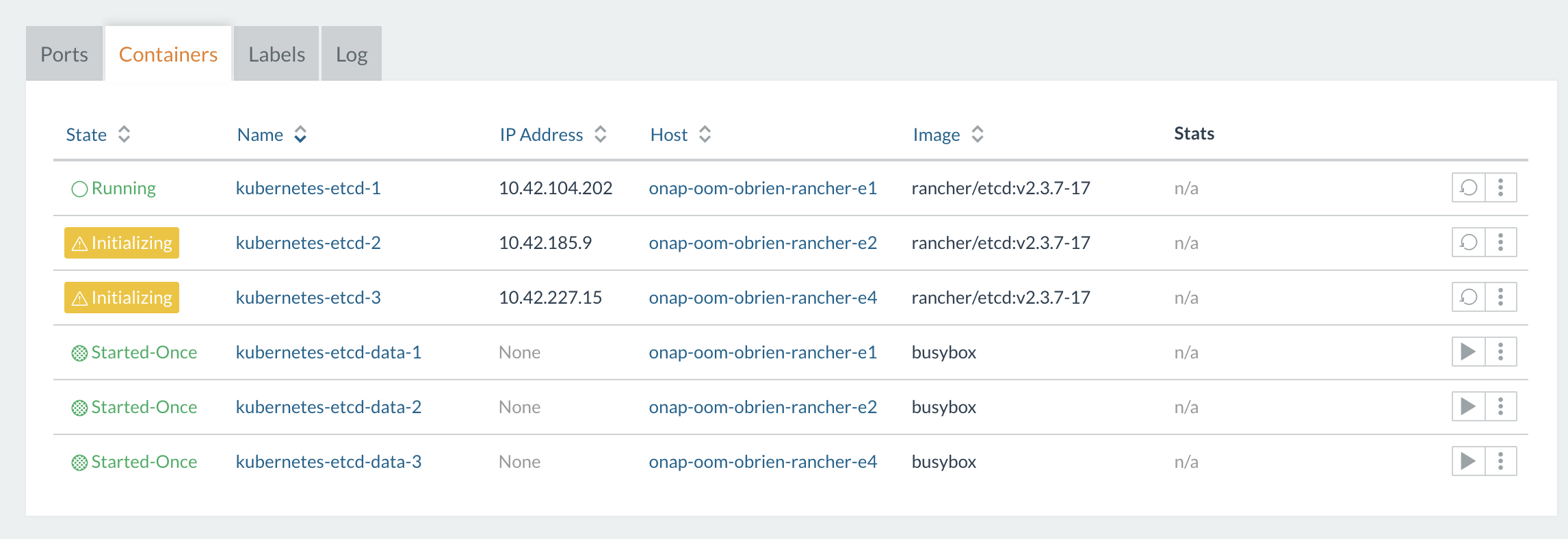

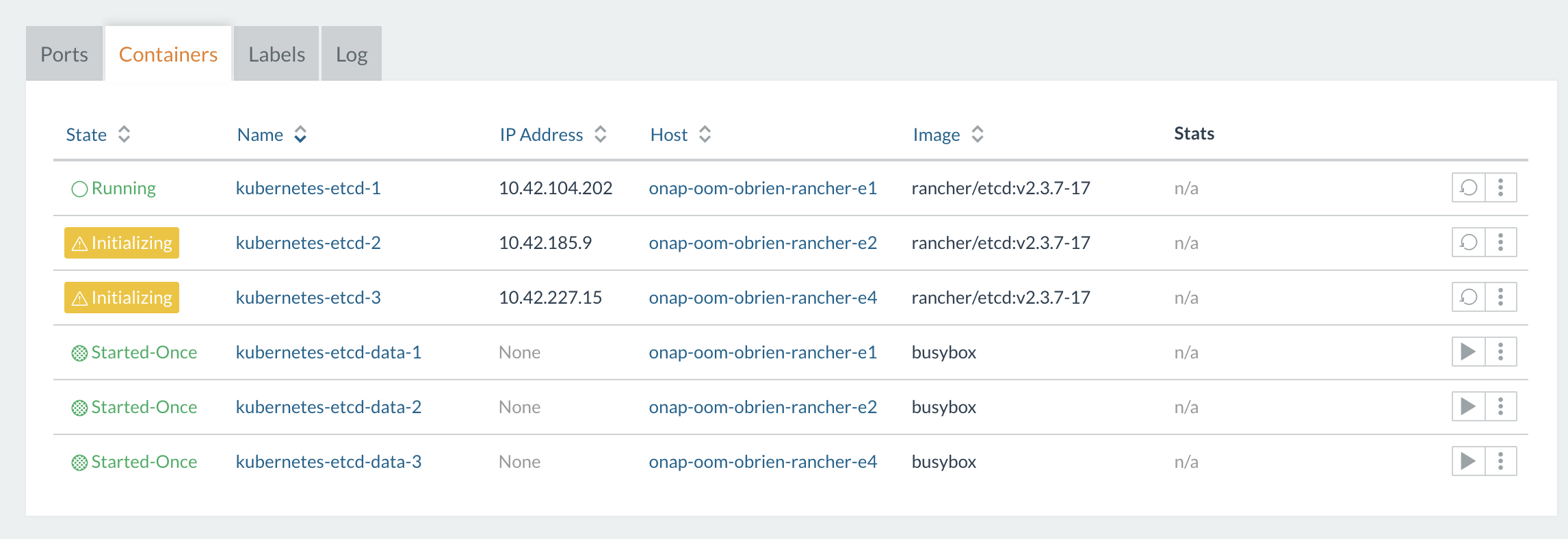

# it takes about 1 min to run the script and 1 minute for the etcd and healthcheck containers to go green on each host

# check the master cluster

kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-172-31-19-9.us-east-2.compute.internal 9036m 56% 53266Mi 43%

ip-172-31-21-129.us-east-2.compute.internal 6840m 42% 47654Mi 38%

ip-172-31-18-85.us-east-2.compute.internal 6334m 39% 49545Mi 40%

ip-172-31-26-114.us-east-2.compute.internal 3605m 22% 25816Mi 21%

# fix helm on the master after adding nodes to the master - only if the server helm version is less than the client helm version (rancher 1.6.18 does not have this issue)

ubuntu@ip-172-31-14-89:~$ sudo helm version

Client: &version.Version{SemVer:"v2.9.1", GitCommit:"20adb27c7c5868466912eebdf6664e7390ebe710", GitTreeState:"clean"}

Server: &version.Version{SemVer:"v2.8.2", GitCommit:"a80231648a1473929271764b920a8e346f6de844", GitTreeState:"clean"}

ubuntu@ip-172-31-14-89:~$ sudo helm init --upgrade

$HELM_HOME has been configured at /home/ubuntu/.helm.

Tiller (the Helm server-side component) has been upgraded to the current version.

ubuntu@ip-172-31-14-89:~$ sudo helm version

Client: &version.Version{SemVer:"v2.9.1", GitCommit:"20adb27c7c5868466912eebdf6664e7390ebe710", GitTreeState:"clean"}

Server: &version.Version{SemVer:"v2.9.1", GitCommit:"20adb27c7c5868466912eebdf6664e7390ebe710", GitTreeState:"clean"}

# 7a - manual: follow the helm plugin page

# https://wiki.onap.org/display/DW/OOM+Helm+%28un%29Deploy+plugins

sudo git clone https://gerrit.onap.org/r/oom

sudo cp -R ~/oom/kubernetes/helm/plugins/ ~/.helm

cd oom/kubernetes

sudo helm serve &

sudo make all

sudo make onap

sudo helm deploy onap local/onap --namespace onap

fetching local/onap

release "onap" deployed

release "onap-aaf" deployed

release "onap-aai" deployed

release "onap-appc" deployed

release "onap-clamp" deployed

release "onap-cli" deployed

release "onap-consul" deployed

release "onap-contrib" deployed

release "onap-dcaegen2" deployed

release "onap-dmaap" deployed

release "onap-esr" deployed

release "onap-log" deployed

release "onap-msb" deployed

release "onap-multicloud" deployed

release "onap-nbi" deployed

release "onap-oof" deployed

release "onap-policy" deployed

release "onap-pomba" deployed

release "onap-portal" deployed

release "onap-robot" deployed

release "onap-sdc" deployed

release "onap-sdnc" deployed

release "onap-sniro-emulator" deployed

release "onap-so" deployed

release "onap-uui" deployed

release "onap-vfc" deployed

release "onap-vid" deployed

release "onap-vnfsdk" deployed

# 7b - automated: after cluster is up - run cd.sh script to get onap up - customize your values.yaml - the 2nd time you run the script

# clean install - will clone new oom repo

# get the dev.yaml and set any pods you want up to true as well as fill out the openstack parameters

sudo wget https://git.onap.org/oom/plain/kubernetes/onap/resources/environments/dev.yaml

sudo cp logging-analytics/deploy/cd.sh .

sudo ./cd.sh -b master -e onap -c true -d true -w true

# rerun install - no delete of oom repo

sudo ./cd.sh -b master -e onap -c false -d true -w true
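The Helm fix step in the procedure above only applies when the server (Tiller) version is lower than the client version. That condition can be checked from the `helm version` output instead of by eye. A minimal sketch, assuming the Helm 2 output format shown in the transcript and GNU `sort -V` for version ordering; `helm_semver` and `tiller_older_than_client` are hypothetical helper names.

```shell
# Extract the SemVer of "Client" or "Server" from Helm 2 `helm version`
# output read on stdin (format as in the transcript above).
helm_semver() {
  sed -n "s/^$1: .*SemVer:\"\\([^\"]*\\)\".*/\\1/p"
}

# Return 0 if the server (Tiller) version sorts before the client version.
# Relies on `sort -V` (GNU coreutils) for version ordering.
tiller_older_than_client() {
  out="$(cat)"
  client="$(printf '%s\n' "$out" | helm_semver Client)"
  server="$(printf '%s\n' "$out" | helm_semver Server)"
  [ "$client" != "$server" ] &&
    [ "$(printf '%s\n%s\n' "$server" "$client" | sort -V | head -n 1)" = "$server" ]
}

# usage:
# sudo helm version | tiller_older_than_client && sudo helm init --upgrade
```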

20181213 running 3.0.0-ONAP

Patches

Windriver openstack heat template 1+13 vms

https://gerrit.onap.org/r/#/c/74781/

docker prepull script – run before cd.sh - https://git.onap.org/logging-analytics/plain/deploy/docker_prepull.sh

https://gerrit.onap.org/r/#/c/74780/

Not merged with the heat template until the following nexus3 slowdown is addressed

https://jira.onap.org/browse/TSC-79

Bring up dmaap and aaf first and the rest of the pods in the following order.

Every 2.0s: helm list Fri Dec 14 15:19:49 2018

NAME REVISION UPDATED STATUS CHART NAMESPACE

onap 2 Fri Dec 14 15:10:56 2018 DEPLOYED onap-3.0.0 onap

onap-aaf 1 Fri Dec 14 15:10:57 2018 DEPLOYED aaf-3.0.0 onap

onap-dmaap 2 Fri Dec 14 15:11:00 2018 DEPLOYED dmaap-3.0.0 onap

onap onap-aaf-aaf-cm-5c65c9dc55-snhlj 1/1 Running 0 10m

onap onap-aaf-aaf-cs-7dff4b9c44-85zg2 1/1 Running 0 10m

onap onap-aaf-aaf-fs-ff6779b94-gz682 1/1 Running 0 10m

onap onap-aaf-aaf-gui-76cfcc8b74-wn8b8 1/1 Running 0 10m

onap onap-aaf-aaf-hello-5d45dd698c-xhc2v 1/1 Running 0 10m

onap onap-aaf-aaf-locate-8587d8f4-l4k7v 1/1 Running 0 10m

onap onap-aaf-aaf-oauth-d759586f6-bmz2l 1/1 Running 0 10m

onap onap-aaf-aaf-service-546f66b756-cjppd 1/1 Running 0 10m

onap onap-aaf-aaf-sms-7497c9bfcc-j892g 1/1 Running 0 10m

onap onap-aaf-aaf-sms-preload-vhbbd 0/1 Completed 0 10m

onap onap-aaf-aaf-sms-quorumclient-0 1/1 Running 0 10m

onap onap-aaf-aaf-sms-quorumclient-1 1/1 Running 0 8m

onap onap-aaf-aaf-sms-quorumclient-2 1/1 Running 0 6m

onap onap-aaf-aaf-sms-vault-0 2/2 Running 1 10m

onap onap-aaf-aaf-sshsm-distcenter-27ql7 0/1 Completed 0 10m

onap onap-aaf-aaf-sshsm-testca-mw95p 0/1 Completed 0 10m

onap onap-dmaap-dbc-pg-0 1/1 Running 0 17m

onap onap-dmaap-dbc-pg-1 1/1 Running 0 15m

onap onap-dmaap-dbc-pgpool-c5f8498-fn9cn 1/1 Running 0 17m

onap onap-dmaap-dbc-pgpool-c5f8498-t9s27 1/1 Running 0 17m

onap onap-dmaap-dmaap-bus-controller-59c96d6b8f-9xsxg 1/1 Running 0 17m

onap onap-dmaap-dmaap-dr-db-557c66dc9d-gvb9f 1/1 Running 0 17m

onap onap-dmaap-dmaap-dr-node-6496d8f55b-ffgfr 1/1 Running 0 17m

onap onap-dmaap-dmaap-dr-prov-86f79c47f9-zb8p7 1/1 Running 0 17m

onap onap-dmaap-message-router-5fb78875f4-lvsg6 1/1 Running 0 17m

onap onap-dmaap-message-router-kafka-7964db7c49-n8prg 1/1 Running 0 17m

onap onap-dmaap-message-router-zookeeper-5cdfb67f4c-5w4vw 1/1 Running 0 17m

onap-msb 2 Fri Dec 14 15:31:12 2018 DEPLOYED msb-3.0.0 onap

onap onap-msb-kube2msb-5c79ddd89f-dqhm6 1/1 Running 0 4m

onap onap-msb-msb-consul-6949bd46f4-jk6jw 1/1 Running 0 4m

onap onap-msb-msb-discovery-86c7b945f9-bc4zq 2/2 Running 0 4m

onap onap-msb-msb-eag-5f86f89c4f-fgc76 2/2 Running 0 4m

onap onap-msb-msb-iag-56cdd4c87b-jsfr8 2/2 Running 0 4m

onap-aai 1 Fri Dec 14 15:30:59 2018 DEPLOYED aai-3.0.0 onap

onap onap-aai-aai-54b7bf7779-bfbmg 1/1 Running 0 2m

onap onap-aai-aai-babel-6bbbcf5d5c-sp676 2/2 Running 0 13m

onap onap-aai-aai-cassandra-0 1/1 Running 0 13m

onap onap-aai-aai-cassandra-1 1/1 Running 0 12m

onap onap-aai-aai-cassandra-2 1/1 Running 0 9m

onap onap-aai-aai-champ-54f7986b6b-wql2b 2/2 Running 0 13m

onap onap-aai-aai-data-router-f5f75c9bd-l6ww7 2/2 Running 0 13m

onap onap-aai-aai-elasticsearch-c9bf9dbf6-fnj8r 1/1 Running 0 13m

onap onap-aai-aai-gizmo-5f8bf54f6f-chg85 2/2 Running 0 13m

onap onap-aai-aai-graphadmin-9b956d4c-k9fhk 2/2 Running 0 13m

onap onap-aai-aai-graphadmin-create-db-schema-s2nnw 0/1 Completed 0 13m

onap onap-aai-aai-modelloader-644b46df55-vt4gk 2/2 Running 0 13m

onap onap-aai-aai-resources-745b6b4f5b-rj7lm 2/2 Running 0 13m

onap onap-aai-aai-search-data-559b8dbc7f-l6cqq 2/2 Running 0 13m

onap onap-aai-aai-sparky-be-75658695f5-z2xv4 2/2 Running 0 13m

onap onap-aai-aai-spike-6778948986-7h7br 2/2 Running 0 13m

onap onap-aai-aai-traversal-58b97f689f-jlblx 2/2 Running 0 13m

onap onap-aai-aai-traversal-update-query-data-7sqt5 0/1 Completed 0 13m

onap-msb 5 Fri Dec 14 15:51:42 2018 DEPLOYED msb-3.0.0 onap

onap onap-msb-kube2msb-5c79ddd89f-dqhm6 1/1 Running 0 18m

onap onap-msb-msb-consul-6949bd46f4-jk6jw 1/1 Running 0 18m

onap onap-msb-msb-discovery-86c7b945f9-bc4zq 2/2 Running 0 18m

onap onap-msb-msb-eag-5f86f89c4f-fgc76 2/2 Running 0 18m

onap onap-msb-msb-iag-56cdd4c87b-jsfr8 2/2 Running 0 18m

onap-esr 3 Fri Dec 14 15:51:40 2018 DEPLOYED esr-3.0.0 onap

onap onap-esr-esr-gui-6c5ccd59d6-6brcx 1/1 Running 0 2m

onap onap-esr-esr-server-5f967d4767-ctwp6 2/2 Running 0 2m

onap-robot 2 Fri Dec 14 15:51:48 2018 DEPLOYED robot-3.0.0 onap

onap onap-robot-robot-ddd948476-n9szh 1/1 Running 0 11m

onap-multicloud 1 Fri Dec 14 15:51:43 2018 DEPLOYED multicloud-3.0.0 onap

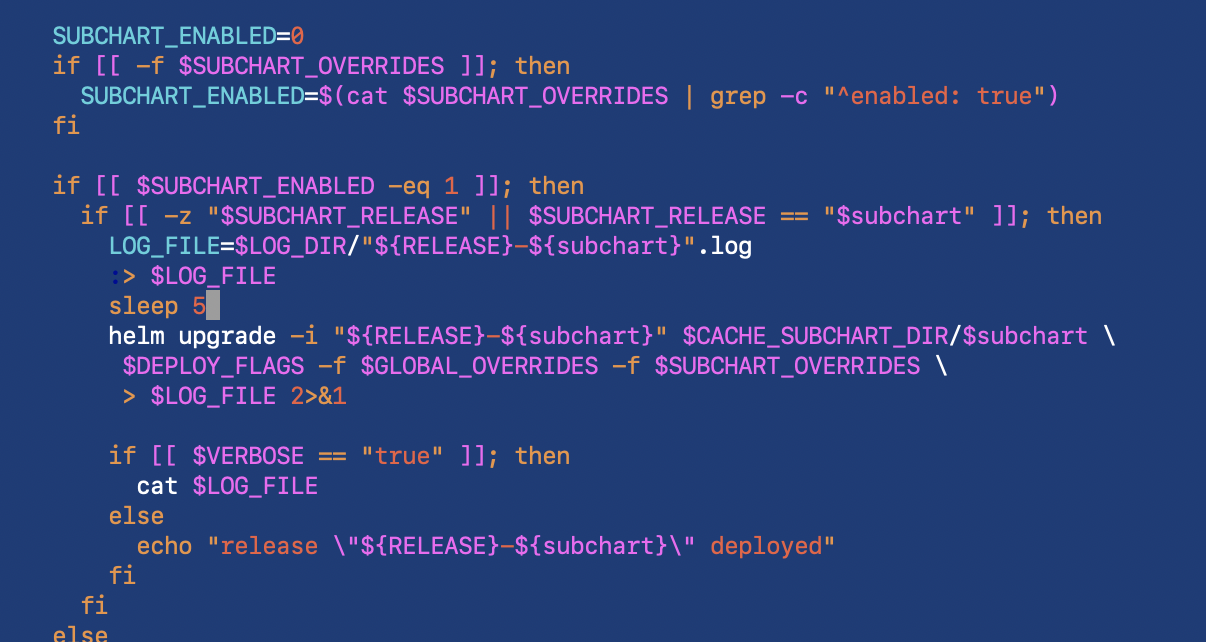

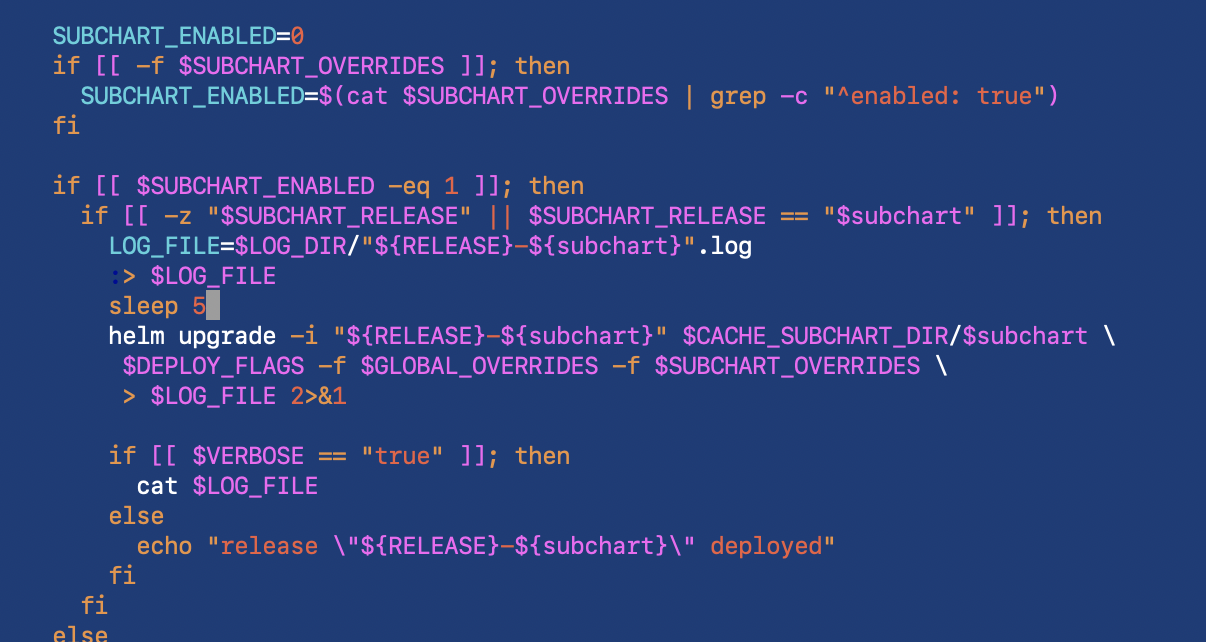

There is a patch going into 3.0.1 that delays each deployment by 3+ seconds so that tiller is not overloaded.

sudo cp -R ~/oom/kubernetes/helm/plugins/ ~/.helm

sudo vi ~/.helm/plugins/deploy/deploy.sh
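The idea of pausing between deployments can be sketched generically. This is an illustration only, not the actual change to deploy.sh: `staggered_run` is a hypothetical helper that runs a command once per item read from stdin and sleeps between items, and the usage line is a dry run with `echo` rather than a real helm invocation.

```shell
# Run a command once per item read from stdin, pausing between items.
# $1 = delay in seconds; remaining arguments = command prefix.
# The command's stdin is redirected so it cannot consume the item list.
staggered_run() {
  delay="$1"; shift
  while IFS= read -r item; do
    [ -n "$item" ] || continue
    "$@" "$item" </dev/null
    sleep "$delay"
  done
}

# usage (dry run; component order as in the helm list output on this page):
# printf '%s\n' dmaap aaf msb aai esr robot multicloud | staggered_run 3 echo deploy
```

Replacing `echo deploy` with a real per-component deploy command is left to the reader, since the exact invocation depends on the plugin version in use.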

Note: your HD/SSD, RAM and CPU configuration will drastically affect deployment. For example, if you are CPU starved, the idle state of ONAP will delay pods as more come in. Additionally, the network bandwidth needed to pull docker containers will be significant, and PV creation is sensitive to FS throughput/lag.

Some of the internal pod timings are optimized for certain Azure deployments:

https://git.onap.org/oom/tree/kubernetes/onap/resources/environments/public-cloud.yaml

Verify whether the integration docker csv manifest or the oom repo values.yaml is the source of truth (no override required?)

Alain Soleil pointed out the proxy page (was using commercial nexus3) - ONAP OOM Beijing - Hosting docker images locally - I had about 4 jiras on this and forgot about them.

20190121:

Answered John Lotoski for EKS and his other post on nexus3 proxy failures - looks like an issue with a double proxy between dockerhub - or an issue specific to the dockerhub/registry:2 container - https://lists.onap.org/g/onap-discuss/topic/registry_issue_few_images/29285134?p=,,,20,0,0,0::recentpostdate%2Fsticky,,,20,2,0,29285134

Running

nexus3.onap.info:5000 - my private AWS nexus3 proxy of nexus3.onap.org:10001

nexus3.onap.cloud:5000 - azure public proxy - filled with casablanca (will retire after Jan 2)

nexus4.onap.cloud:5000 - azure public proxy - filled with master - and later casablanca

nexus3windriver.onap.cloud:5000 - windriver/openstack lab inside the firewall to use only for the lab - access to public is throttled

# from a clean ubuntu 16.04 VM

# install docker

sudo curl https://releases.rancher.com/install-docker/17.03.sh | sh

sudo usermod -aG docker ubuntu

# install nexus

mkdir -p certs

openssl req -newkey rsa:4096 -nodes -sha256 -keyout certs/domain.key -x509 -days 365 -out certs/domain.crt

Common Name (e.g. server FQDN or YOUR name) []:nexus3.onap.info

sudo nano /etc/hosts

sudo docker run -d --restart=unless-stopped --name registry -v `pwd`/certs:/certs -e REGISTRY_HTTP_ADDR=0.0.0.0:5000 -e REGISTRY_HTTP_TLS_CERTIFICATE=/certs/domain.crt -e REGISTRY_HTTP_TLS_KEY=/certs/domain.key -e REGISTRY_PROXY_REMOTEURL=https://nexus3.onap.org:10001 -p 5000:5000 registry:2

sudo docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

7f9b0e97eb7f registry:2 "/entrypoint.sh /e..." 8 seconds ago Up 7 seconds 0.0.0.0:5000->5000/tcp registry

# test it

sudo docker login -u docker -p docker nexus3.onap.info:5000

Login Succeeded

# get images from https://git.onap.org/integration/plain/version-manifest/src/main/resources/docker-manifest.csv?h=casablanca

# use for example the first line onap/aaf/aaf_agent,2.1.8

# or the prepull script in https://git.onap.org/logging-analytics/plain/deploy/docker_prepull.sh

sudo docker pull nexus3.onap.info:5000/onap/aaf/aaf_agent:2.1.8

2.1.8: Pulling from onap/aaf/aaf_agent

18d680d61657: Pulling fs layer

819d6de9e493: Downloading [======================================> ] 770.7 kB/1.012 MB

# list

sudo docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry 2 2e2f252f3c88 3 months ago 33.3 MB

# prepull to cache images on the server - in this case casablanca branch

sudo wget https://git.onap.org/logging-analytics/plain/deploy/docker_prepull.sh

sudo chmod 777 docker_prepull.sh

# prep - same as client vms - the cert

sudo mkdir /etc/docker/certs.d

sudo mkdir /etc/docker/certs.d/nexus3.onap.cloud:5000

sudo cp certs/domain.crt /etc/docker/certs.d/nexus3.onap.cloud:5000/ca.crt

sudo systemctl restart docker

sudo docker login -u docker -p docker nexus3.onap.cloud:5000

# prepull

sudo nohup ./docker_prepull.sh -b casablanca -s nexus3.onap.cloud:5000 &

The cert is attached to TSC-79.

# on each host

# Cert is on TSC-79

sudo wget https://jira.onap.org/secure/attachment/13127/domain_nexus3_onap_cloud.crt

# or if you already have it

scp domain_nexus3_onap_cloud.crt ubuntu@ld3.onap.cloud:~/

# to avoid

sudo docker login -u docker -p docker nexus3.onap.cloud:5000

Error response from daemon: Get https://nexus3.onap.cloud:5000/v1/users/: x509: certificate signed by unknown authority

# cp cert

sudo mkdir /etc/docker/certs.d

sudo mkdir /etc/docker/certs.d/nexus3.onap.cloud:5000

sudo cp domain_nexus3_onap_cloud.crt /etc/docker/certs.d/nexus3.onap.cloud:5000/ca.crt

sudo systemctl restart docker

sudo docker login -u docker -p docker nexus3.onap.cloud:5000

Login Succeeded

# testing

# vm with the image existing - 2 sec

ubuntu@ip-172-31-33-46:~$ sudo docker pull nexus3.onap.cloud:5000/onap/aaf/aaf_agent:2.1.8

2.1.8: Pulling from onap/aaf/aaf_agent

Digest: sha256:71781f3cfa51066abb1a4a35267af37beec01b6bb75817fdfae056582839290c

Status: Downloaded newer image for nexus3.onap.cloud:5000/onap/aaf/aaf_agent:2.1.8

# vm with layers existing except for last 5 - 5 sec

ubuntu@a-cd-master:~$ sudo docker pull nexus3.onap.cloud:5000/onap/aaf/aaf_agent:2.1.8

2.1.8: Pulling from onap/aaf/aaf_agent

18d680d61657: Already exists

.. 20

49e90af50c7d: Already exists

....

acb05d09ff6e: Pull complete

Digest: sha256:71781f3cfa51066abb1a4a35267af37beec01b6bb75817fdfae056582839290c

Status: Downloaded newer image for nexus3.onap.cloud:5000/onap/aaf/aaf_agent:2.1.8

# clean AWS VM (clean install of docker) - no pulls yet - 45 sec for everything

ubuntu@ip-172-31-14-34:~$ sudo docker pull nexus3.onap.cloud:5000/onap/aaf/aaf_agent:2.1.8

2.1.8: Pulling from onap/aaf/aaf_agent

18d680d61657: Pulling fs layer

0addb6fece63: Pulling fs layer

78e58219b215: Pulling fs layer

eb6959a66df2: Pulling fs layer

321bd3fd2d0e: Pull complete

...

acb05d09ff6e: Pull complete

Digest: sha256:71781f3cfa51066abb1a4a35267af37beec01b6bb75817fdfae056582839290c

Status: Downloaded newer image for nexus3.onap.cloud:5000/onap/aaf/aaf_agent:2.1.8

ubuntu@ip-172-31-14-34:~$ sudo docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

nexus3.onap.cloud:5000/onap/aaf/aaf_agent 2.1.8 090b326a7f11 5 weeks ago 1.14 GB

# going to test a same size image directly from the LF - with minimal common layers

nexus3.onap.org:10001/onap/testsuite 1.3.2 c4b58baa95e8 3 weeks ago 1.13 GB

# 5 min in we are still at 3% - numbers below are a min old

ubuntu@ip-172-31-14-34:~$ sudo docker pull nexus3.onap.org:10001/onap/testsuite:1.3.2

1.3.2: Pulling from onap/testsuite

32802c0cfa4d: Downloading [=============> ] 8.416 MB/32.1 MB

da1315cffa03: Download complete

fa83472a3562: Download complete

f85999a86bef: Download complete

3eca7452fe93: Downloading [=======================> ] 8.517 MB/17.79 MB

9f002f13a564: Downloading [=========================================> ] 8.528 MB/10.24 MB

02682cf43e5c: Waiting

....

754645df4601: Waiting

# in 5 min we get 3% 35/1130Mb - which comes out to 162 min for 1.13G for .org as opposed to 45 sec for .info - which is a 200X slowdown - some of this is due to the fact my nexus3.onap.info is on the same VPC as my test VM - testing on openlab

# openlab - 2 min 40 sec, which is 3.6 times slower than in AWS, as expected (25 min pulls vs 90 min in openlab) - this makes nexus.onap.org 60 times slower in openlab than a proxy running from AWS (2 vCore/16G/ssd VM)

ubuntu@onap-oom-obrien-rancher-e4:~$ sudo docker pull nexus3.onap.info:5000/onap/aaf/aaf_agent:2.1.8

2.1.8: Pulling from onap/aaf/aaf_agent

18d680d61657: Pull complete

...

acb05d09ff6e: Pull complete

Digest: sha256:71781f3cfa51066abb1a4a35267af37beec01b6bb75817fdfae056582839290c

Status: Downloaded newer image for nexus3.onap.info:5000/onap/aaf/aaf_agent:2.1.8

#pulling smaller from nexus3.onap.info 2 min 20 - for 36Mb = 0.23Mb/sec - extrapolated to 1.13Gb for above is 5022 sec or 83 min - half the rough calculation above

ubuntu@onap-oom-obrien-rancher-e4:~$ sudo docker pull nexus3.onap.org:10001/onap/aaf/sms:3.0.1

3.0.1: Pulling from onap/aaf/sms

c67f3896b22c: Pull complete

...

76eeb922b789: Pull complete

Digest: sha256:d5b64947edb93848acacaa9820234aa29e58217db9f878886b7bafae00fdb436

Status: Downloaded newer image for nexus3.onap.org:10001/onap/aaf/sms:3.0.1

# conclusion - nexus3.onap.org is experiencing a routing issue from their DC outbound causing an 80-100x slowdown over a proxy nexus3 - since 20181217 - as local jenkins.onap.org builds complete faster

# workaround is to use a nexus3 proxy above |

and adding to values.yaml

global:

#repository: nexus3.onap.org:10001

repository: nexus3.onap.cloud:5000

repositoryCred:

user: docker

password: docker |
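With the indentation restored, the override above might be written as follows. This is a minimal sketch: the helper name `write_registry_override` and the output file name are hypothetical, and the nesting of `repositoryCred` under `global` is assumed from the ordering of the keys above.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of OOM): writes a values override that points
# image pulls at a nexus3 proxy. Key names and the docker/docker credentials
# are copied from the values.yaml fragment above; the nesting is an assumption.
write_registry_override() {
  local registry=$1 outfile=$2
  cat > "$outfile" <<EOF
global:
  #repository: nexus3.onap.org:10001
  repository: ${registry}
  repositoryCred:
    user: docker
    password: docker
EOF
}
```

For example, `write_registry_override nexus3.onap.cloud:5000 nexus-proxy.yaml` produces a file that could be passed to the deploy command with an extra `-f nexus-proxy.yaml`.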

The Windriver lab also has a network issue: for example, pulling a 1.1G image from nexus3.onap.cloud:5000 (Azure) into an AWS EC2 instance takes 45 sec, while the same pull in an openlab VM takes on the order of 10+ min. You therefore need a local nexus3 proxy if you are inside the OpenStack lab. I have registered nexus3windriver.onap.cloud:5000 to a nexus3 proxy in my logging tenant - cert above
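The pull-time extrapolations in the timing notes above are simple linear estimates. A minimal sketch of that arithmetic, using hypothetical helper names and integer (truncating) division:

```shell
#!/usr/bin/env bash
# estimate_pull_seconds <mb_done> <seconds_elapsed> <total_mb>
# Linear extrapolation: total time = total size / observed rate.
# Integer arithmetic, so the result is truncated to whole seconds.
estimate_pull_seconds() {
  local mb_done=$1 elapsed=$2 total_mb=$3
  echo $(( total_mb * elapsed / mb_done ))
}

# slowdown_factor <slow_seconds> <fast_seconds>
# How many times longer the slow pull takes (truncated).
slowdown_factor() {
  echo $(( $1 / $2 ))
}
```

With the figures quoted above (35 MB of 1130 MB after 5 min), `estimate_pull_seconds 35 300 1130` gives 9685 seconds, about 161 minutes, and `slowdown_factor 9685 45` gives 215, in line with the rough 162 min and 200x numbers in the notes.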

https://git.onap.org/logging-analytics/plain/deploy/docker_prepull.sh

using

via

https://gerrit.onap.org/r/#/c/74780/

git clone ssh://michaelobrien@gerrit.onap.org:29418/logging-analytics

cd logging-analytics

git pull ssh://michaelobrien@gerrit.onap.org:29418/logging-analytics refs/changes/80/74780/1

ubuntu@onap-oom-obrien-rancher-e0:~$ sudo nohup ./docker_prepull.sh &

[1] 14488

ubuntu@onap-oom-obrien-rancher-e0:~$ nohup: ignoring input and appending output to 'nohup.out'

If you need to redeploy a pod because of a job timeout or failure, or to pick up a config/code change, first delete its subdirectory under /dockerdata-nfs (for example /dockerdata-nfs/onap-aai) so that a restarted db, for example, does not run into leftover data.

sudo chmod -R 777 /dockerdata-nfs

sudo rm -rf /dockerdata-nfs/onap-aai

sudo helm deploy onap local/onap --namespace $ENVIRON -f ../../dev.yaml -f onap/resources/environments/public-cloud.yaml

where dev.yaml is the same as the one in resources, but with all components turned on and the image pull policy set to IfNotPresent instead of Always

we are not using the public-cloud.yaml override here - to verify just timing between deploys in this case - each pod waits for the previous to complete so resources are not in contention

see update to

https://git.onap.org/logging-analytics/tree/deploy/cd.sh

https://gerrit.onap.org/r/#/c/75422

DEPLOY_ORDER_POD_NAME_ARRAY=('robot consul aaf dmaap dcaegen2 msb aai esr multicloud oof so sdc sdnc vid policy portal log vfc uui vnfsdk appc clamp cli pomba vvp contrib sniro-emulator')

# don't count completed pods

DEPLOY_NUMBER_PODS_DESIRED_ARRAY=(1 4 13 11 13 5 15 2 6 17 10 12 11 2 8 6 3 18 2 5 5 5 1 11 11 3 1)

# account for pods that have varying deploy times or replicaset sizes

# don't count the 0/1 completed pods - and skip most of the ReplicaSet instances except 1

# dcae bootstrap is problematic

DEPLOY_NUMBER_PODS_PARTIAL_ARRAY=(1 2 11 9 13 5 11 2 6 16 10 12 11 2 8 6 3 18 2 5 5 5 1 9 11 3 1) |
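The three lists above are positional: the n-th count belongs to the n-th component name. The sketch below shows one way to pair them up and catch a length mismatch before a long deploy. The function `pair_components` is hypothetical and is not necessarily how cd.sh consumes these arrays.

```shell
#!/usr/bin/env bash
# pair_components "<space separated names>" "<space separated counts>"
# Prints one "name count" line per component, or returns 1 when the two
# lists differ in length. Hypothetical helper for illustration only.
pair_components() {
  local -a names counts
  local i
  read -r -a names <<< "$1"
  read -r -a counts <<< "$2"
  if [ "${#names[@]}" -ne "${#counts[@]}" ]; then
    echo "length mismatch: ${#names[@]} names vs ${#counts[@]} counts" >&2
    return 1
  fi
  for i in "${!names[@]}"; do
    echo "${names[$i]} ${counts[$i]}"
  done
}
```

For example, `pair_components "robot consul aaf" "1 4 13"` prints `robot 1`, `consul 4` and `aaf 13` on separate lines. Passing the names as one space-separated string mirrors the single-quoted form used in DEPLOY_ORDER_POD_NAME_ARRAY above.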

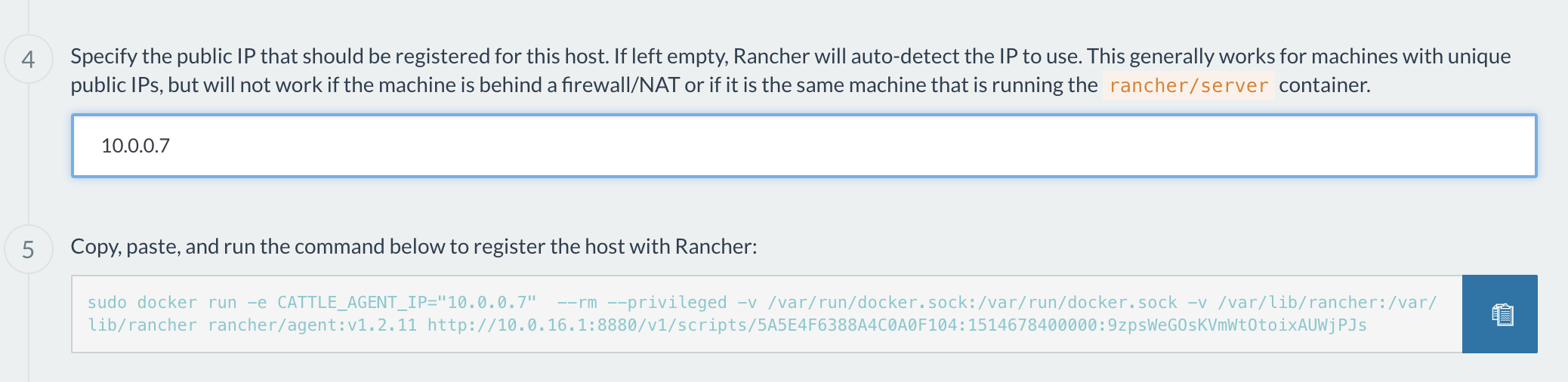

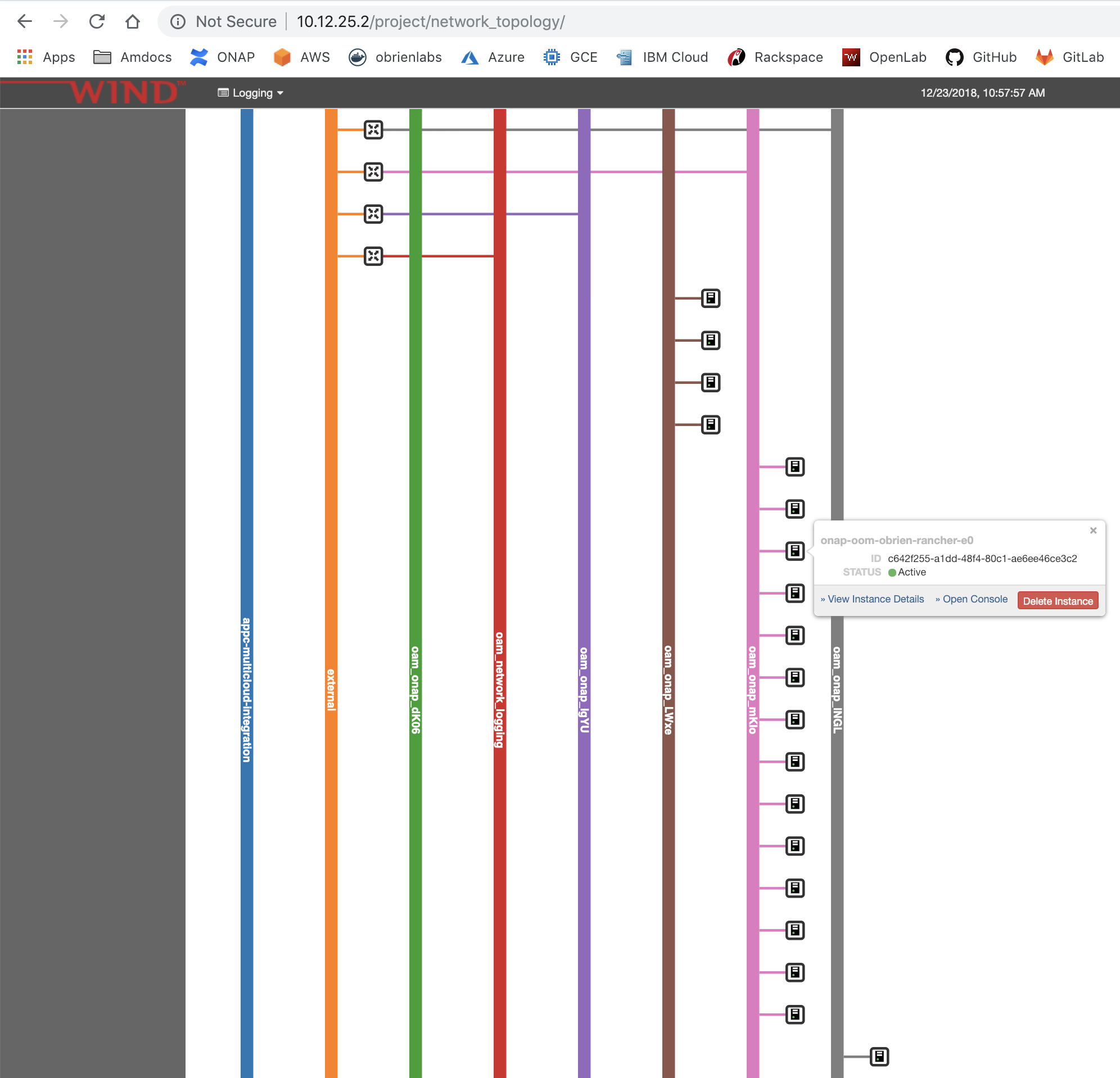

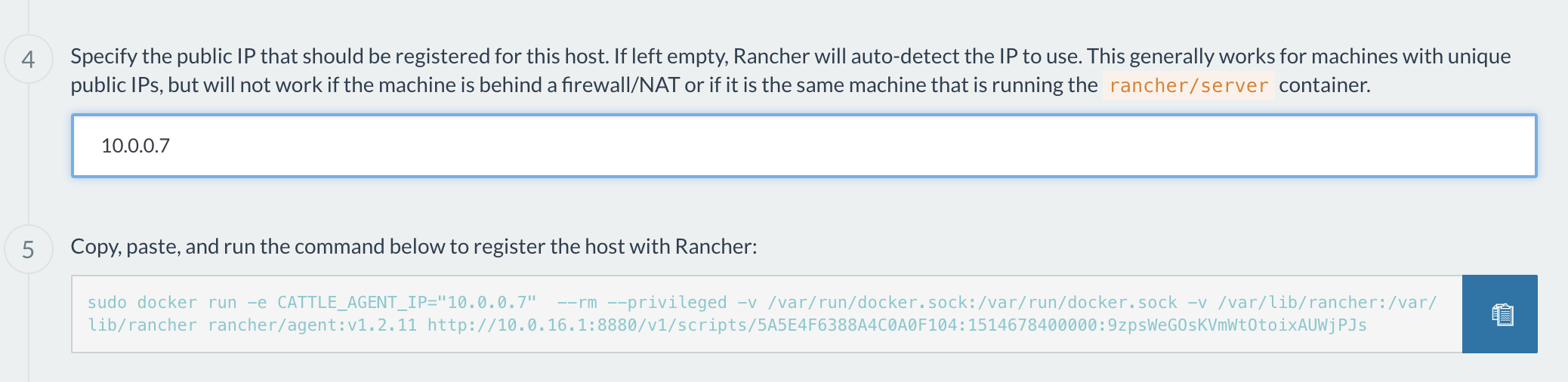

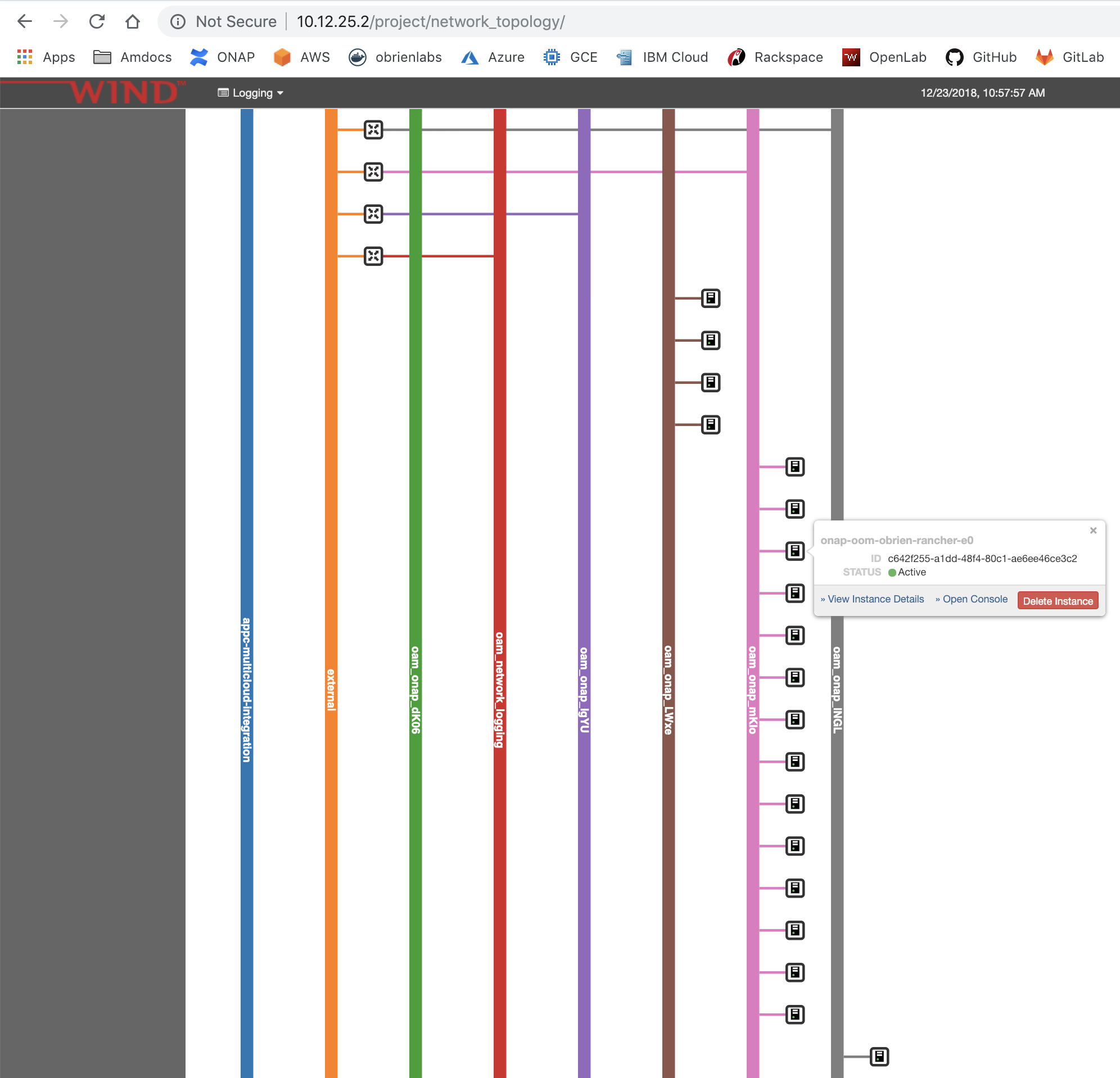

Note: the Windriver OpenStack lab requires that host registration occurs against the private network 10.0.0.0/16, not the 10.12.0.0/16 public network. Registering against the public IP works in Azure/AWS but not in OpenStack.

The docs will be adjusted

This is bad - public IP based cluster

This is good - private IP based cluster
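A small guard can catch the public-IP mistake before a host is registered. This is a sketch only: `is_private_lab_ip` is a hypothetical helper, and the 10.0.0.0/16 range is the lab-specific private network mentioned in the note above.

```shell
#!/usr/bin/env bash
# is_private_lab_ip <ipv4>
# Returns 0 when the address starts with 10.0. (the lab's private
# 10.0.0.0/16 network), 1 otherwise (for example the 10.12.0.0/16
# public network). Simple prefix match, not a general CIDR check.
is_private_lab_ip() {
  case "$1" in
    10.0.*) return 0 ;;
    *) return 1 ;;
  esac
}
```

A registration wrapper could then refuse to continue when the check fails, for example `is_private_lab_ip "$AGENT_IP" || echo "use the private address"`, where `AGENT_IP` is a placeholder variable.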

https://jira.onap.org/secure/attachment/13010/logging_openstack_13_16g.yaml

see

https://gerrit.onap.org/r/74781

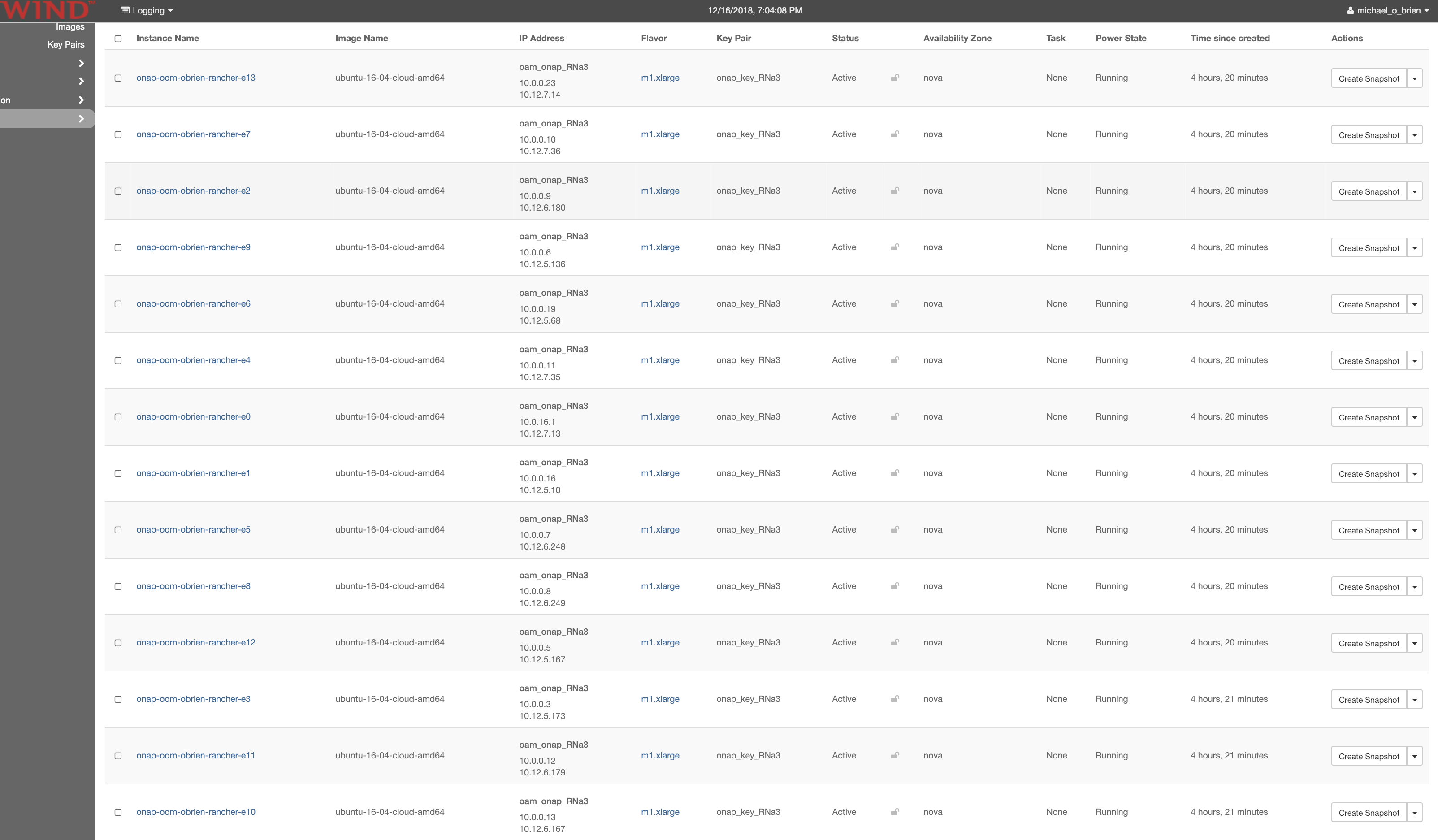

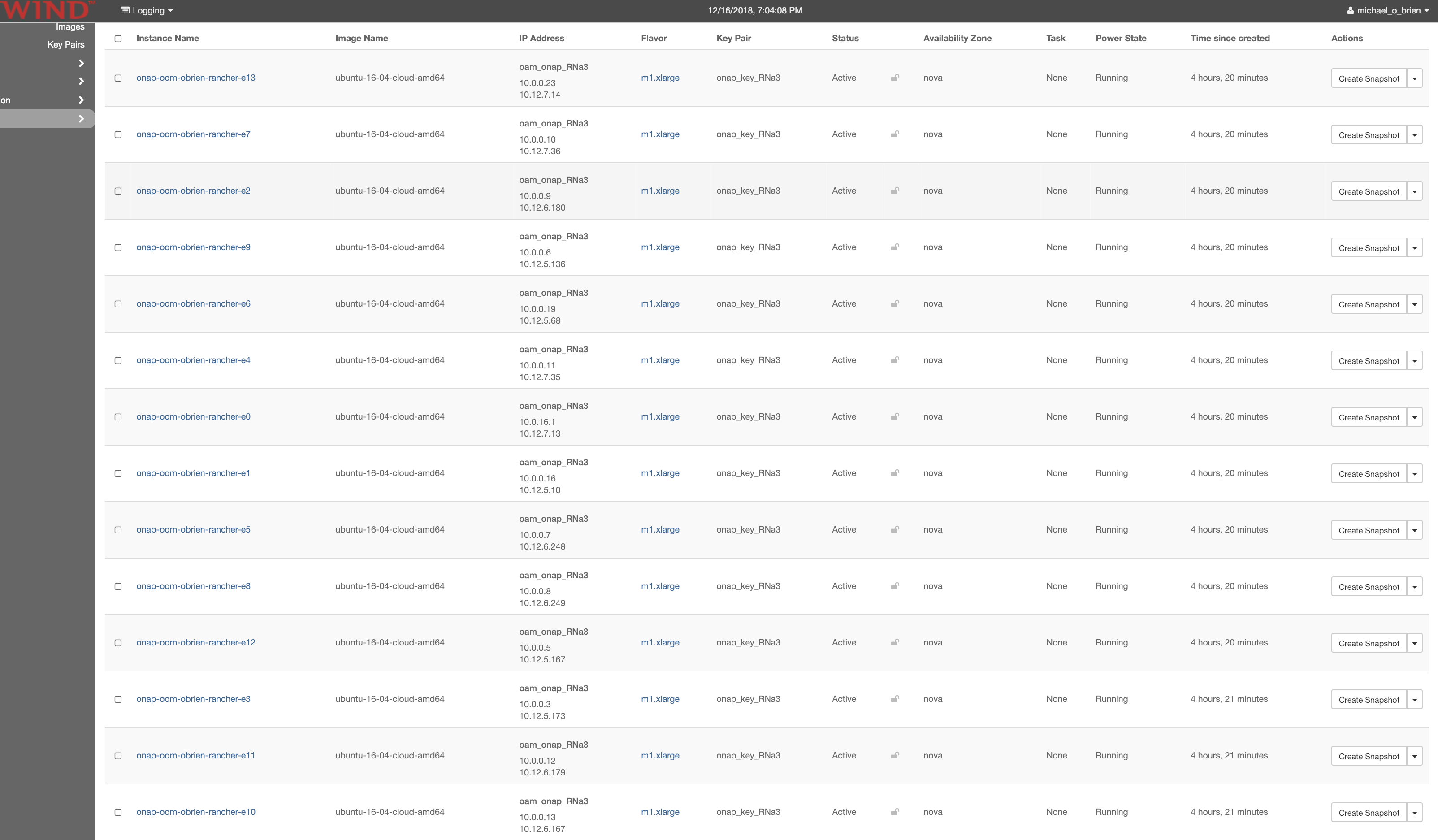

obrienbiometrics:onap_oom-714_heat michaelobrien$ openstack stack create -t logging_openstack_13_16g.yaml -e logging_openstack_oom.env OOM20181216-13

| Field | Value |
|---|---|
| id | ed6aa689-2e2a-4e75-8868-9db29607c3ba |
| stack_name | OOM20181216-13 |
| description | Heat template to install OOM components |
| creation_time | 2018-12-16T19:42:27Z |
| updated_time | 2018-12-16T19:42:27Z |
| stack_status | CREATE_IN_PROGRESS |
| stack_status_reason | Stack CREATE started |

obrienbiometrics:onap_oom-714_heat michaelobrien$ openstack server list

| ID | Name | Status | Networks | Image Name |
|---|---|---|---|---|
| 7695cf14-513e-4fea-8b00-6c2a25df85d3 | onap-oom-obrien-rancher-e13 | ACTIVE | oam_onap_RNa3=10.0.0.23, 10.12.7.14 | ubuntu-16-04-cloud-amd64 |
| 1b70f179-007c-4975-8e4a-314a57754684 | onap-oom-obrien-rancher-e7 | ACTIVE | oam_onap_RNa3=10.0.0.10, 10.12.7.36 | ubuntu-16-04-cloud-amd64 |
| 17c77bd5-0a0a-45ec-a9c7-98022d0f62fe | onap-oom-obrien-rancher-e2 | ACTIVE | oam_onap_RNa3=10.0.0.9, 10.12.6.180 | ubuntu-16-04-cloud-amd64 |
| f85e075f-e981-4bf8-af3f-e439b7b72ad2 | onap-oom-obrien-rancher-e9 | ACTIVE | oam_onap_RNa3=10.0.0.6, 10.12.5.136 | ubuntu-16-04-cloud-amd64 |
| 58c404d0-8bae-4889-ab0f-6c74461c6b90 | onap-oom-obrien-rancher-e6 | ACTIVE | oam_onap_RNa3=10.0.0.19, 10.12.5.68 | ubuntu-16-04-cloud-amd64 |
| b91ff9b4-01fe-4c34-ad66-6ffccc9572c1 | onap-oom-obrien-rancher-e4 | ACTIVE | oam_onap_RNa3=10.0.0.11, 10.12.7.35 | ubuntu-16-04-cloud-amd64 |
| d9be8b3d-2ef2-4a00-9752-b935d6dd2dba | onap-oom-obrien-rancher-e0 | ACTIVE | oam_onap_RNa3=10.0.16.1, 10.12.7.13 | ubuntu-16-04-cloud-amd64 |
| da0b1be6-ec2b-43e6-bb3f-1f0626dcc88b | onap-oom-obrien-rancher-e1 | ACTIVE | oam_onap_RNa3=10.0.0.16, 10.12.5.10 | ubuntu-16-04-cloud-amd64 |
| 0ffec4d0-bd6f-40f9-ab2e-f71aa5b9fbda | onap-oom-obrien-rancher-e5 | ACTIVE | oam_onap_RNa3=10.0.0.7, 10.12.6.248 | ubuntu-16-04-cloud-amd64 |
| 125620e0-2aa6-47cf-b422-d4cbb66a7876 | onap-oom-obrien-rancher-e8 | ACTIVE | oam_onap_RNa3=10.0.0.8, 10.12.6.249 | ubuntu-16-04-cloud-amd64 |
| 1efe102a-d310-48d2-9190-c442eaec3f80 | onap-oom-obrien-rancher-e12 | ACTIVE | oam_onap_RNa3=10.0.0.5, 10.12.5.167 | ubuntu-16-04-cloud-amd64 |
| 7c248d1d-193a-415f-868b-a94939a6e393 | onap-oom-obrien-rancher-e3 | ACTIVE | oam_onap_RNa3=10.0.0.3, 10.12.5.173 | ubuntu-16-04-cloud-amd64 |
| 98dc0aa1-e42d-459c-8dde-1a9378aa644d | onap-oom-obrien-rancher-e11 | ACTIVE | oam_onap_RNa3=10.0.0.12, 10.12.6.179 | ubuntu-16-04-cloud-amd64 |
| 6799037c-31b5-42bd-aebf-1ce7aa583673 | onap-oom-obrien-rancher-e10 | ACTIVE | oam_onap_RNa3=10.0.0.13, 10.12.6.167 | ubuntu-16-04-cloud-amd64 |

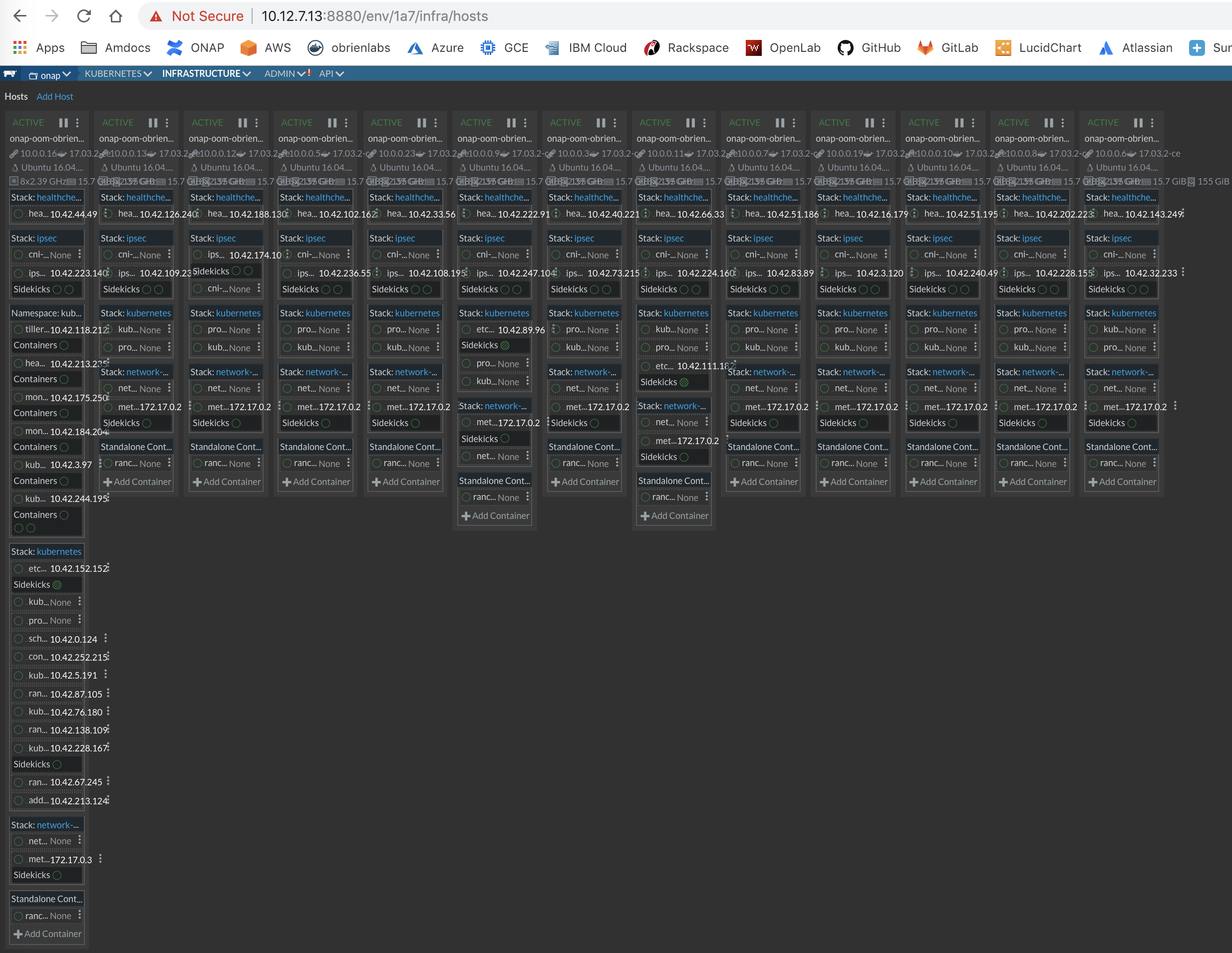

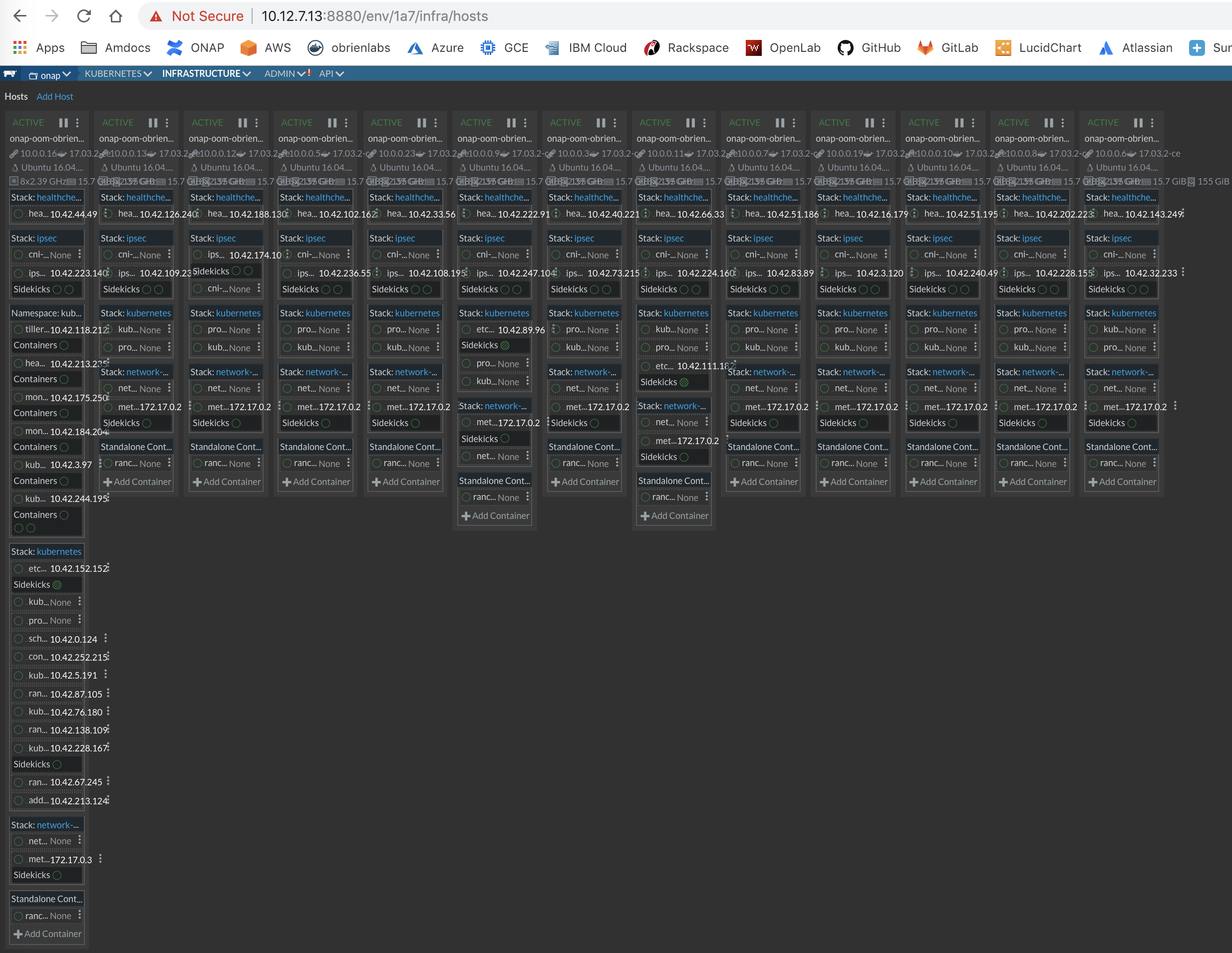

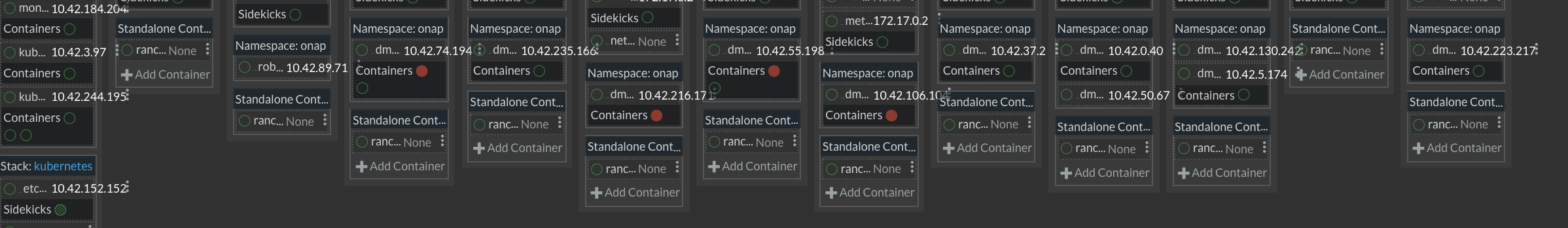

# 13+1 vms on openlab available as of 20181216 - running 2 separate clusters

# 13+1 all 16g VMs

# 4+1 all 32g VMs

# master undercloud

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo cp logging-analytics/deploy/rancher/oom_rancher_setup.sh .

sudo ./oom_rancher_setup.sh -b master -s 10.12.7.13 -e onap

# master nfs

sudo wget https://jira.onap.org/secure/attachment/12887/master_nfs_node.sh

sudo chmod 777 master_nfs_node.sh

sudo ./master_nfs_node.sh 10.12.5.10 10.12.6.180 10.12.5.173 10.12.7.35 10.12.6.248 10.12.5.68 10.12.7.36 10.12.6.249 10.12.5.136 10.12.6.167 10.12.6.179 10.12.5.167 10.12.7.14

#sudo ./master_nfs_node.sh 10.12.5.162 10.12.5.198 10.12.5.102 10.12.5.4

# slaves nfs

sudo wget https://jira.onap.org/secure/attachment/12888/slave_nfs_node.sh

sudo chmod 777 slave_nfs_node.sh

sudo ./slave_nfs_node.sh 10.12.7.13

#sudo ./slave_nfs_node.sh 10.12.6.125

# test it

ubuntu@onap-oom-obrien-rancher-e4:~$ sudo ls /dockerdata-nfs/

test.sh

# remove client from master node

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

onap-oom-obrien-rancher-e0 Ready <none> 5m v1.11.5-rancher1

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system heapster-7b48b696fc-2z47t 1/1 Running 0 5m

kube-system kube-dns-6655f78c68-gn2ds 3/3 Running 0 5m

kube-system kubernetes-dashboard-6f54f7c4b-sfvjc 1/1 Running 0 5m

kube-system monitoring-grafana-7877679464-872zv 1/1 Running 0 5m

kube-system monitoring-influxdb-64664c6cf5-rs5ms 1/1 Running 0 5m

kube-system tiller-deploy-6f4745cbcf-zmsrm 1/1 Running 0 5m

# after master removal from hosts - expected no nodes

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl get nodes

error: the server doesn't have a resource type "nodes"

# slaves rancher client - 1st node

# register on the private network not the public IP

# notice the CATTLE_AGENT

sudo docker run -e CATTLE_AGENT_IP="10.0.0.7" --rm --privileged -v /var/run/docker.sock:/var/run/docker.sock -v /var/lib/rancher:/var/lib/rancher rancher/agent:v1.2.11 http://10.0.16.1:8880/v1/scripts/5A5E4F6388A4C0A0F104:1514678400000:9zpsWeGOsKVmWtOtoixAUWjPJs

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

onap-oom-obrien-rancher-e1 Ready <none> 0s v1.11.5-rancher1

# add the other nodes

# the 4 node 32g = 128g cluster

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

onap-oom-obrien-rancher-e1 Ready <none> 1h v1.11.5-rancher1

onap-oom-obrien-rancher-e2 Ready <none> 4m v1.11.5-rancher1

onap-oom-obrien-rancher-e3 Ready <none> 5m v1.11.5-rancher1

onap-oom-obrien-rancher-e4 Ready <none> 3m v1.11.5-rancher1

# the 13 node 16g = 208g cluster

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

onap-oom-obrien-rancher-e1 208m 2% 2693Mi 16%

onap-oom-obrien-rancher-e10 38m 0% 1083Mi 6%

onap-oom-obrien-rancher-e11 36m 0% 1104Mi 6%

onap-oom-obrien-rancher-e12 57m 0% 1070Mi 6%

onap-oom-obrien-rancher-e13 116m 1% 1017Mi 6%

onap-oom-obrien-rancher-e2 73m 0% 1361Mi 8%

onap-oom-obrien-rancher-e3 62m 0% 1099Mi 6%

onap-oom-obrien-rancher-e4 74m 0% 1370Mi 8%

onap-oom-obrien-rancher-e5 37m 0% 1104Mi 6%

onap-oom-obrien-rancher-e6 55m 0% 1125Mi 7%

onap-oom-obrien-rancher-e7 42m 0% 1102Mi 6%

onap-oom-obrien-rancher-e8 53m 0% 1090Mi 6%

onap-oom-obrien-rancher-e9 52m 0% 1072Mi 6%

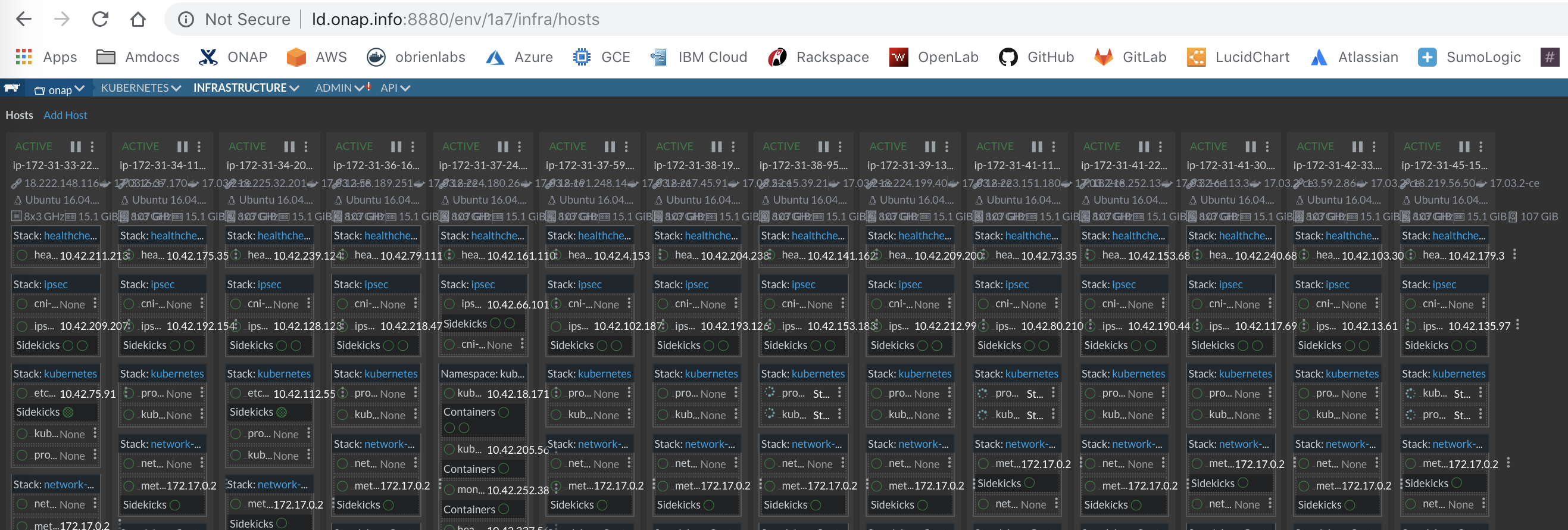

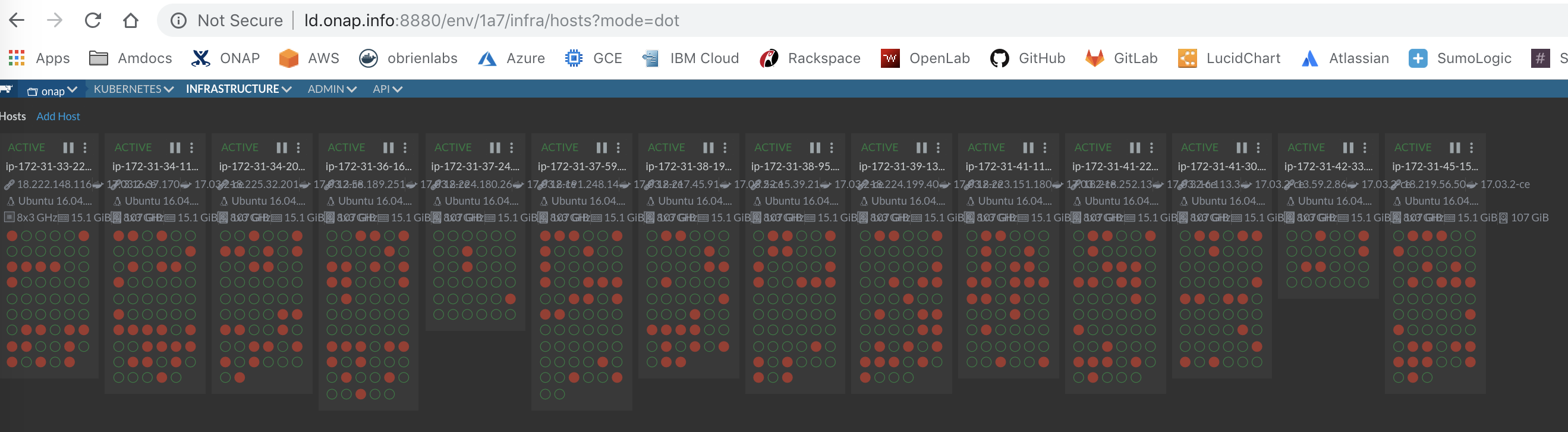

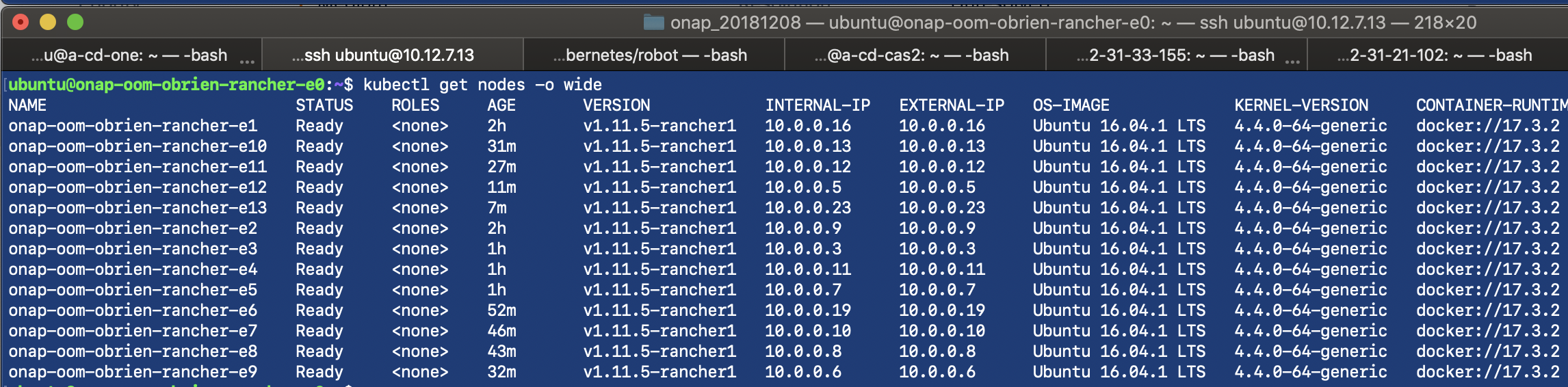

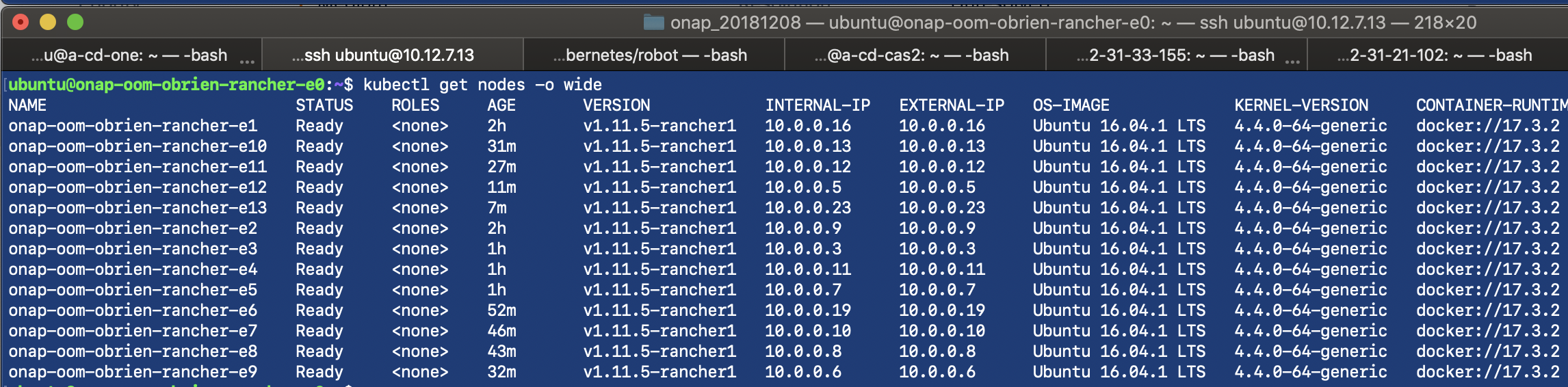

The cluster hosting kubernetes is up with 13+1 nodes and 2 network interfaces (the private 10.0.0.0/16 subnet and the 10.12.0.0/16 public subnet)

Verify kubernetes hosts are ready

ubuntu@onap-oom-obrien-rancher-e0:~$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

onap-oom-obrien-rancher-e1 Ready <none> 2h v1.11.5-rancher1

onap-oom-obrien-rancher-e10 Ready <none> 25m v1.11.5-rancher1

onap-oom-obrien-rancher-e11 Ready <none> 20m v1.11.5-rancher1

onap-oom-obrien-rancher-e12 Ready <none> 5m v1.11.5-rancher1

onap-oom-obrien-rancher-e13 Ready <none> 1m v1.11.5-rancher1

onap-oom-obrien-rancher-e2 Ready <none> 2h v1.11.5-rancher1

onap-oom-obrien-rancher-e3 Ready <none> 1h v1.11.5-rancher1

onap-oom-obrien-rancher-e4 Ready <none> 1h v1.11.5-rancher1

onap-oom-obrien-rancher-e5 Ready <none> 1h v1.11.5-rancher1

onap-oom-obrien-rancher-e6 Ready <none> 46m v1.11.5-rancher1

onap-oom-obrien-rancher-e7 Ready <none> 40m v1.11.5-rancher1

onap-oom-obrien-rancher-e8 Ready <none> 37m v1.11.5-rancher1

onap-oom-obrien-rancher-e9 Ready <none> 26m v1.11.5-rancher1

# manually check out 3.0.0-ONAP (script is written for branches like casablanca)

sudo git clone -b 3.0.0-ONAP http://gerrit.onap.org/r/oom

sudo cp -R ~/oom/kubernetes/helm/plugins/ ~/.helm

# fix tiller bug

sudo nano ~/.helm/plugins/deploy/deploy.sh

# modify dev.yaml with logging-rc file openstack parameters - appc, sdnc and

sudo cp logging-analytics/deploy/cd.sh .

sudo cp oom/kubernetes/onap/resources/environments/dev.yaml .

sudo nano dev.yaml

ubuntu@onap-oom-obrien-rancher-0:~/oom/kubernetes/so/resources/config/mso$ echo -n "Whq..jCLj" | openssl aes-128-ecb -e -K `cat encryption.key` -nosalt | xxd -c 256 -p

bdaee....c60d3e09

# so server configuration

config:

openStackUserName: "michael_o_brien"

openStackRegion: "RegionOne"

openStackKeyStoneUrl: "http://10.12.25.2:5000"

openStackServiceTenantName: "service"

openStackEncryptedPasswordHere: "bdaee....c60d3e09" |

# copy dev.yaml to dev0.yaml

# bring up all onap in sequence or adjust the list for a subset specific for the vFW - assumes you already cloned oom

sudo nohup ./cd.sh -b 3.0.0-ONAP -e onap -p false -n nexus3.onap.org:10001 -f true -s 900 -c false -d true -w false -r false &

#sudo helm deploy onap local/onap --namespace $ENVIRON -f ../../dev.yaml -f onap/resources/environments/public-cloud.yaml

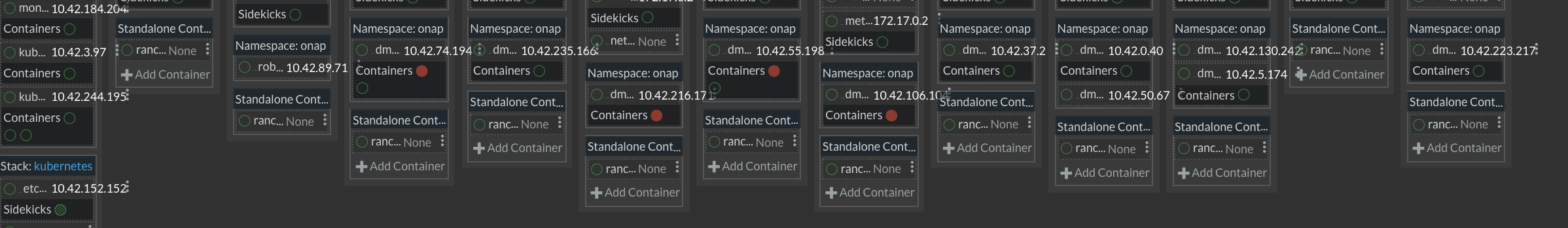

The load is distributed across the cluster even for individual pods like dmaap

vFW vFirewall Workarounds

From Alexis Chiarello currently verifying 20190125 - these are for the heat environment - not the kubernetes one - following Casablanca Stability Testing Instructions currently |

|---|

20181213 - thank you Alexis and Beejal Shah

Something else I forgot to mention, I did change the heat templates to adapt for our Ubuntu images in our env (to enable additional NICs, eth2 / eth3) and also disable gateway by default on the 2 additional subnets created. See attached for the modified files.

Cheers, Alexis.

sudo chmod 777 master_nfs_node.sh

I reran the vFWCL use case in my re-installed Casablanca lab and here is what I had to manually do post-install :

- fix Robot "robot-eteshare-configmap" config map and adjust values that did not match my env (onap_private_subnet_id, sec_group, dcae_collector_ip, Ubuntu image names, etc...).

- make sure to push the policies from pap (PRELOAD_POLICIES=true then run config/push-policies.sh from /tmp/policy-install folder)

(the following are for heat not kubernetes)

That's it, in my case, with the above the vFWCL closed loop works just fine and able to see APP-C processing the modifyConfig event and change the number of streams using netconf to the packet generator. Cheers, Alexis. |

Two choices, run the single oom_deployment.sh via your ARM, CloudFormation, Heat template wrapper as a oneclick or use the 2 step procedure above.

entrypoint aws/azure/openstack | Ubuntu 16 rancher install | oom deployment CD script | |

|---|---|---|---|

https://git.onap.org/logging-analytics/tree/deploy/cd.sh#n57


Required for a couple of pods that leave leftover resources behind, and for the secondary Cloudify out-of-band orchestration in DCAEGEN2

sudo helm undeploy $ENVIRON --purge

kubectl delete namespace onap

sudo helm delete --purge onap

kubectl delete pv --all

kubectl delete pvc --all

kubectl delete secrets --all

kubectl delete clusterrolebinding --all

sudo rm -rf /dockerdata-nfs/onap-<pod>

# or for a single pod

kubectl delete pod $ENVIRON-aaf-sms-vault-0 -n $ENVIRON --grace-period=0 --force

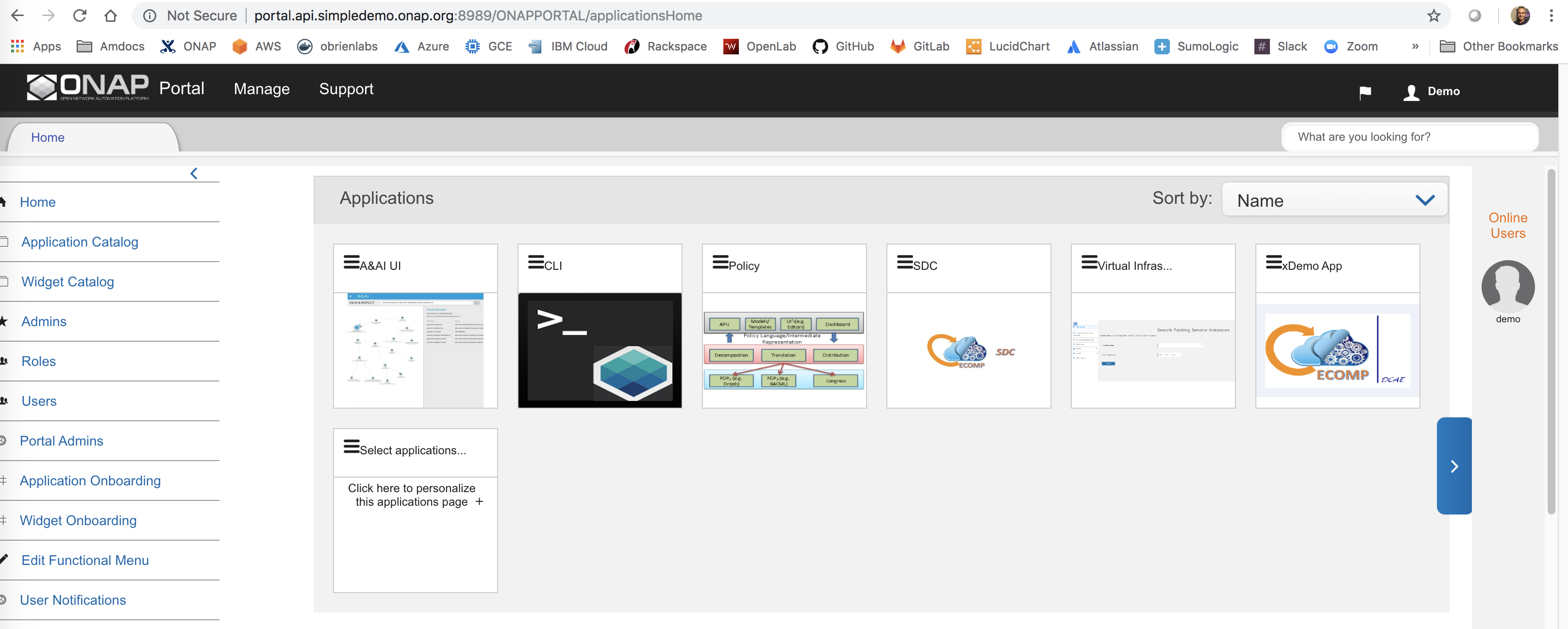

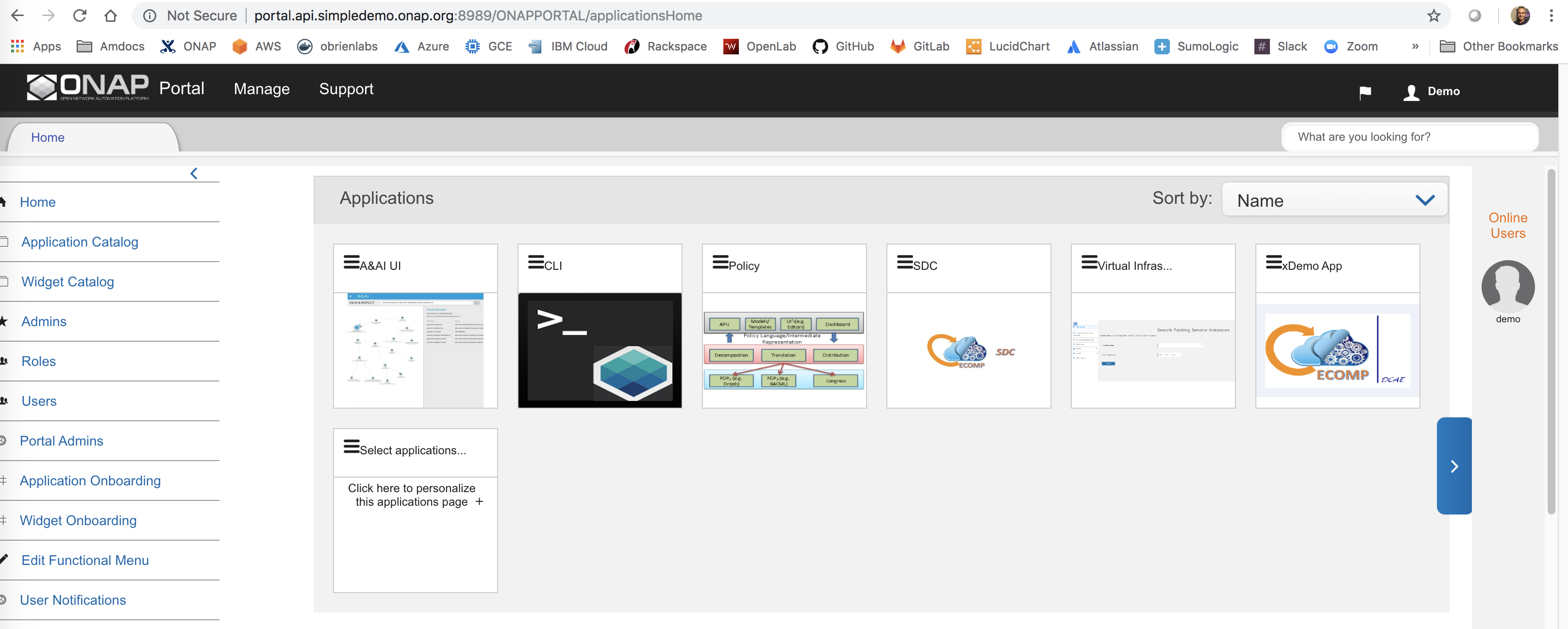

Access the ONAP portal via the 8989 LoadBalancer that Mandeep Khinda merged in, documented at http://onap.readthedocs.io/en/latest/submodules/oom.git/docs/oom_user_guide.html#accessing-the-onap-portal-using-oom-and-a-kubernetes-cluster

ubuntu@a-onap-devopscd:~$ kubectl -n onap get services|grep "portal-app"

portal-app LoadBalancer 10.43.145.94 13.68.113.105 8989:30215/TCP,8006:30213/TCP,8010:30214/TCP,8443:30225/TCP 20h

When connecting to openlab through the VPN from your Mac, you need the 2nd address, which will be something like 10.0.0.12. Use the public IP corresponding to that private network IP; in this case it is the e1 instance, with 10.12.7.7 as the externally routable IP

add the following and prefix with the IP above to your client's /etc/hosts

in this case I am using the public 13... ip (elastic or generated public ip) - AWS in this example

13.68.113.105 portal.api.simpledemo.onap.org

13.68.113.105 vid.api.simpledemo.onap.org

13.68.113.105 sdc.api.fe.simpledemo.onap.org

13.68.113.105 portal-sdk.simpledemo.onap.org

13.68.113.105 policy.api.simpledemo.onap.org

13.68.113.105 aai.api.sparky.simpledemo.onap.org

13.68.113.105 cli.api.simpledemo.onap.org

13.68.113.105 msb.api.discovery.simpledemo.onap.org
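The host entries can be generated for whichever IP your portal LoadBalancer reports. A minimal sketch, assuming the eight hostnames listed above are the full set you need; `onap_hosts_entries` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# onap_hosts_entries <ip>
# Prints one /etc/hosts line per ONAP simpledemo hostname, each prefixed
# with the given IP. The hostname list is copied from the entries above.
onap_hosts_entries() {
  local ip=$1 host
  for host in \
    portal.api.simpledemo.onap.org \
    vid.api.simpledemo.onap.org \
    sdc.api.fe.simpledemo.onap.org \
    portal-sdk.simpledemo.onap.org \
    policy.api.simpledemo.onap.org \
    aai.api.sparky.simpledemo.onap.org \
    cli.api.simpledemo.onap.org \
    msb.api.discovery.simpledemo.onap.org
  do
    printf '%s %s\n' "$ip" "$host"
  done
}
```

Review the output first, then append it on the client, for example with `onap_hosts_entries 13.68.113.105 | sudo tee -a /etc/hosts`.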

launch

http://portal.api.simpledemo.onap.org:8989/ONAPPORTAL/login.htm

login with demo user

kubectl -n onap exec -it dev-portal-portal-db-b8db58679-q9pjq -- mysql -D mysql -h localhost -e 'select * from user'

see

and

Casablanca Stability Testing Instructions

# verifying on ld.onap.cloud 20190126

oom/kubernetes/robot/demo-k8s.sh onap init

Initialize Customer And Models | FAIL |

ConnectionError: HTTPConnectionPool(host='1.2.3.4', port=5000): Max retries exceeded with url: /v2.0/tokens (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x7efd0f8a4ad0>: Failed to establish a new connection: [Errno 110] Connection timed out',))

# push sample vFWCL policies

PAP_POD=$(kubectl --namespace onap get pods | grep policy-pap | sed 's/ .*//')

kubectl exec -it $PAP_POD -n onap -c pap -- bash -c 'export PRELOAD_POLICIES=true; /tmp/policy-install/config/push-policies.sh'

# ete instantiateDemoVFWCL

/root/oom/kubernetes/robot/ete-k8s.sh onap instantiateDemoVFWCL

# restart drools

kubectl delete pod dev-policy-drools-0 -n onap

# wait for policy to kick in

sleep 20m

# demo vfwclosedloop

/root/oom/kubernetes/robot/demo-k8s.sh onap vfwclosedloop $PNG_IP

# check the sink on 667

|

For a view of the system see Log Streaming Compliance and API

A single 122g R4.4xlarge VM in progress

see also

helm install will bring up everything without the configmap failure - but the release is busted - pods come up though

ubuntu@ip-172-31-27-63:~$ sudo helm install local/onap -n onap --namespace onap -f onap/resources/environments/disable-allcharts.yaml --set aai.enabled=true --set dmaap.enabled=true --set log.enabled=true --set policy.enabled=true --set portal.enabled=true --set robot.enabled=true --set sdc.enabled=true --set sdnc.enabled=true --set so.enabled=true --set vid.enabled=true

| deployment | containers | |

|---|---|---|

| minimum (no vfwCL) | ||

| medium (vfwCL) | ||

| full |

amdocs@ubuntu:~/_dev/oom/kubernetes$ kubectl get pods --all-namespaces | grep 0/1

onap onap-aai-champ-68ff644d85-mpkb9 0/1 Running 0 1d

onap onap-pomba-kibana-d76b6dd4c-j4q9m 0/1 Init:CrashLoopBackOff 472 1d

amdocs@ubuntu:~/_dev/oom/kubernetes$ kubectl get pods --all-namespaces | grep 1/2

onap onap-aai-gizmo-856f86d664-mf587 1/2 CrashLoopBackOff 568 1d

onap onap-pomba-networkdiscovery-85d76975b7-w9sjl 1/2 CrashLoopBackOff 573 1d

onap onap-pomba-networkdiscoveryctxbuilder-c89786dfc-rtdqc 1/2 CrashLoopBackOff 569 1d

onap onap-vid-84c88db589-vbfht 1/2 CrashLoopBackOff 616 1d

with clamp and pomba enabled (ran clamp first)

amdocs@ubuntu:~/_dev/oom/kubernetes$ sudo helm upgrade -i onap local/onap --namespace onap -f dev.yaml

Error: UPGRADE FAILED: failed to create resource: Service "pomba-kibana" is invalid: spec.ports[0].nodePort: Invalid value: 30234: provided port is already allocated

see the AWS cluster install below

| VMs | RAM | HD | vCores | Ports | Network |
|---|---|---|---|---|---|
| 1 | 55-70G at startup | 40G per host min (30G for dockers); 100G after a week; 5G min per NFS; 4GBPS peak | (need to reduce 152 pods to 110) 8 min; 60 peak at startup; recommended 16-64 vCores | see list on PortProfile; Recommend 0.0.0.0/0 (all open) inside VPC; Block 10249-10255 outside; secure 8888 with oauth | 170 MB/sec peak 1200 |
| 3+ | 85G; Recommend min 3 x 64G class VMs; Try for 4 | master: 40G; hosts: 80G (30G of dockers); NFS: 5G | 24 to 64 | | |

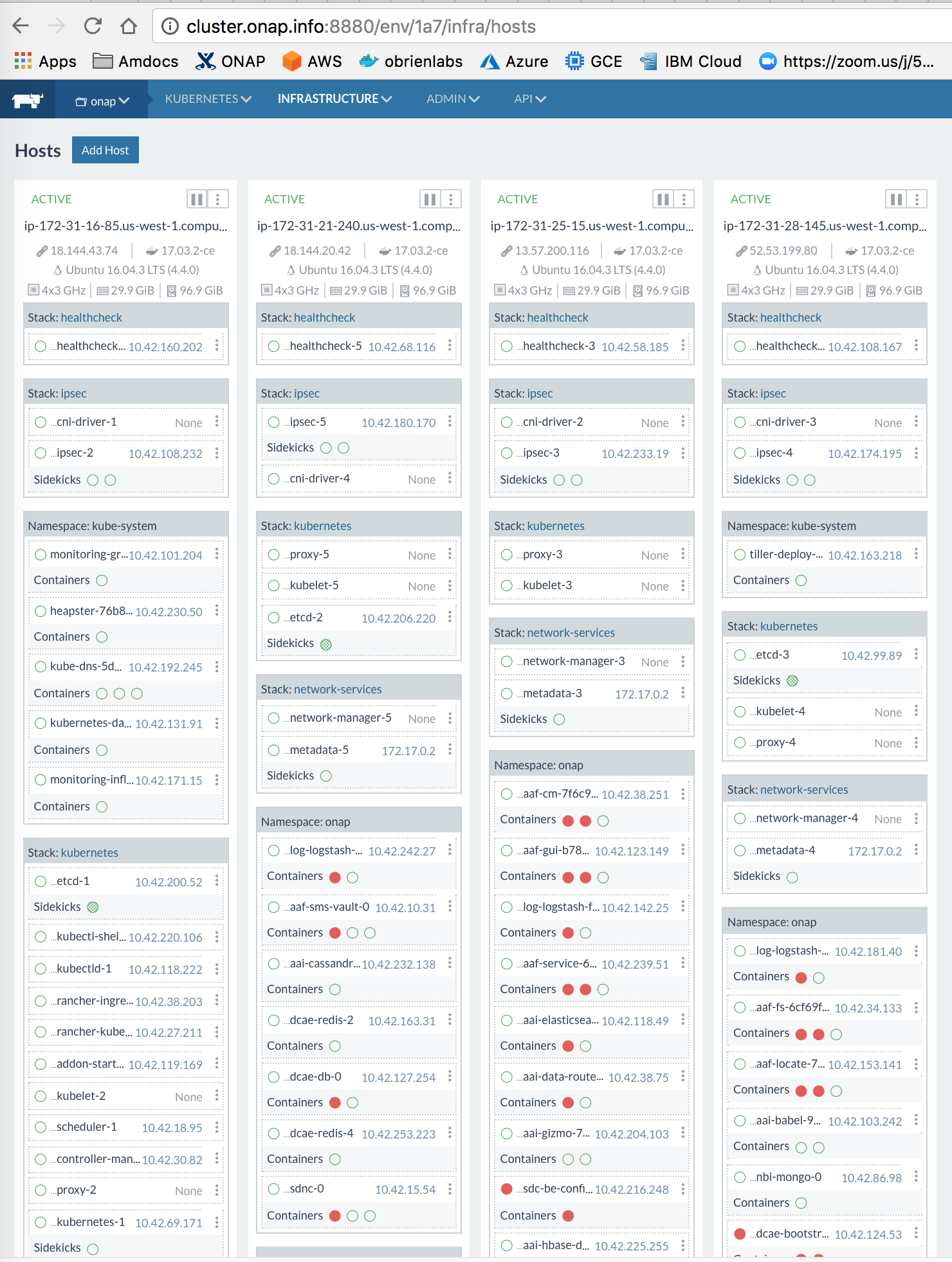

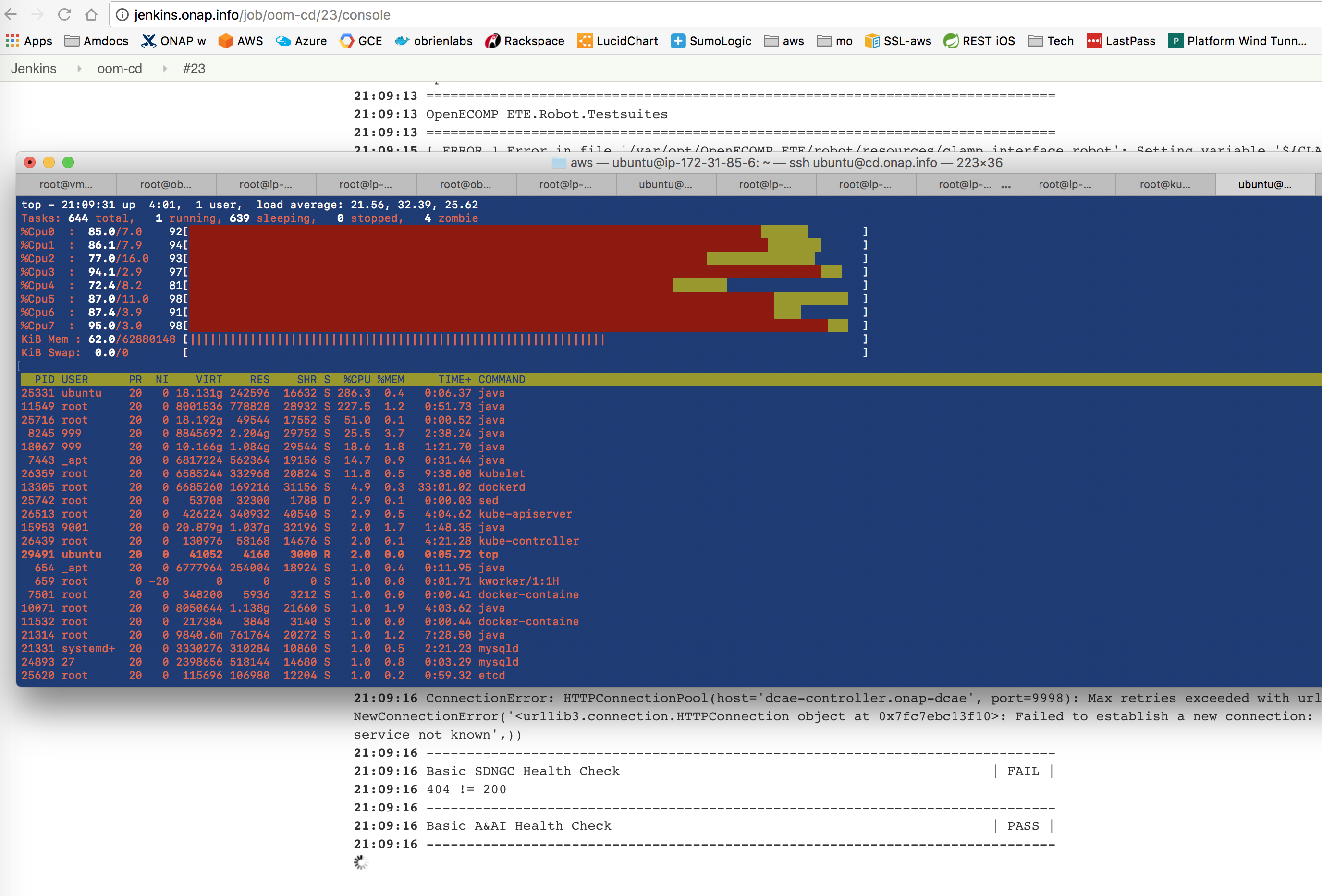

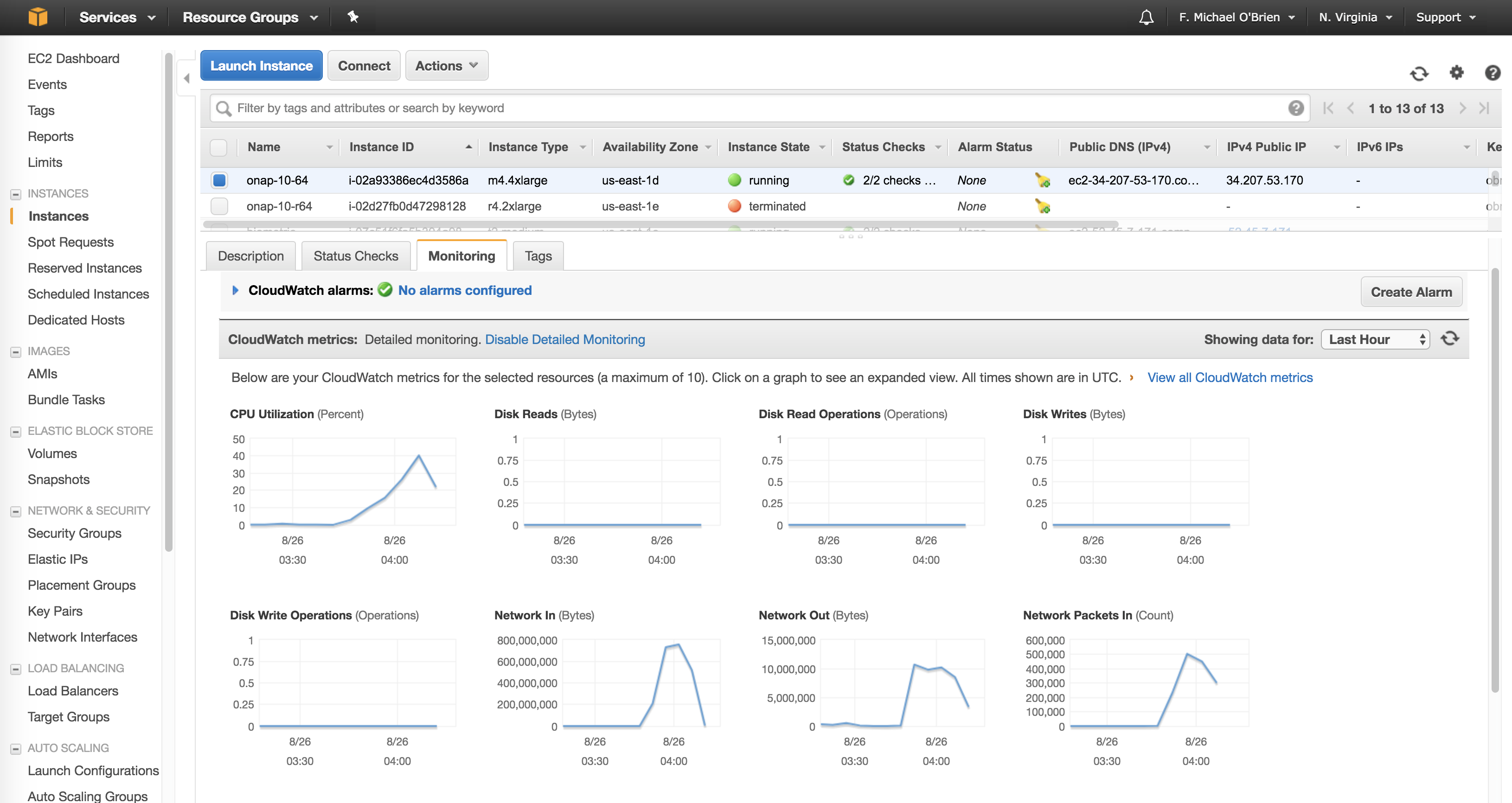

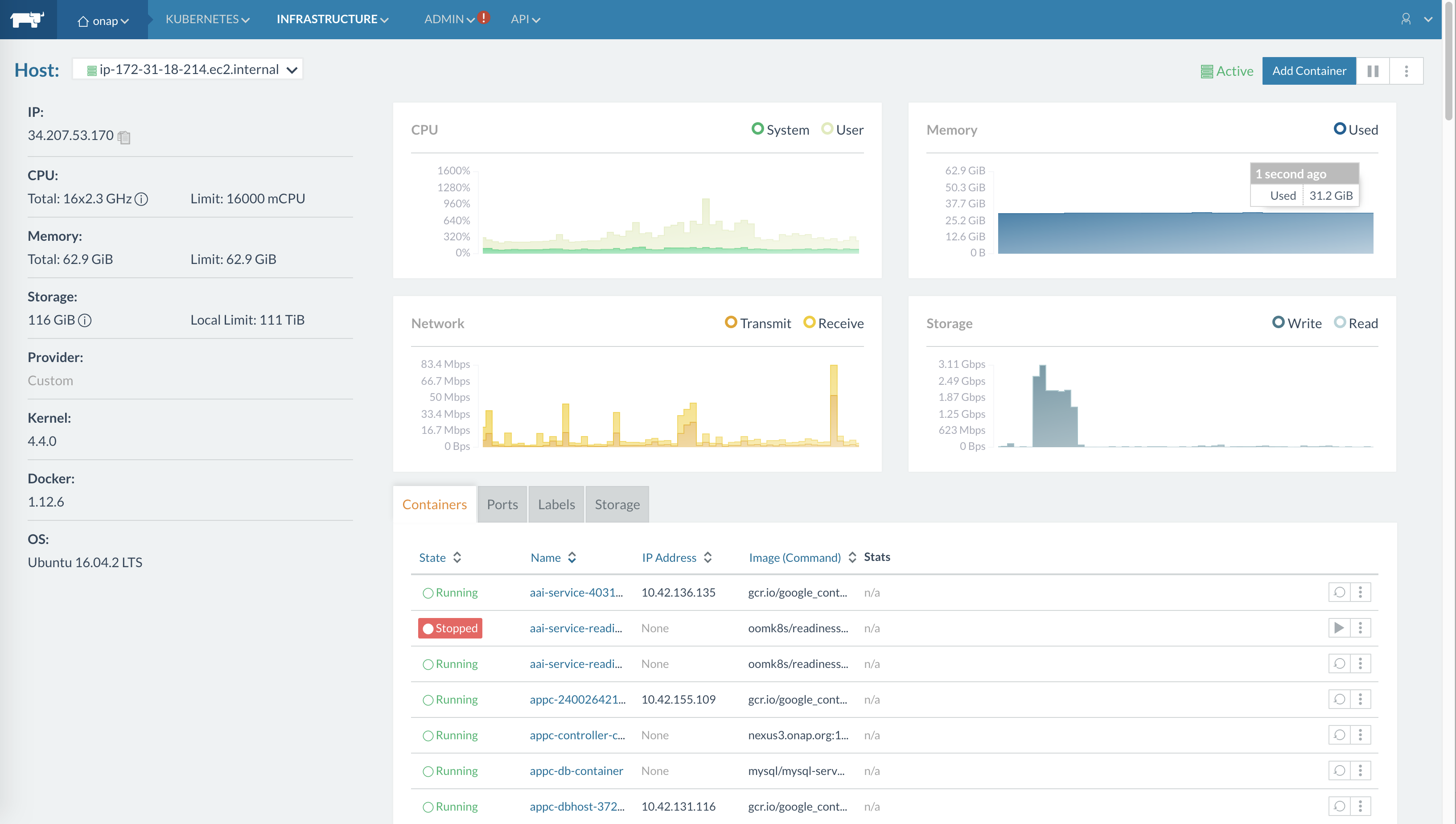

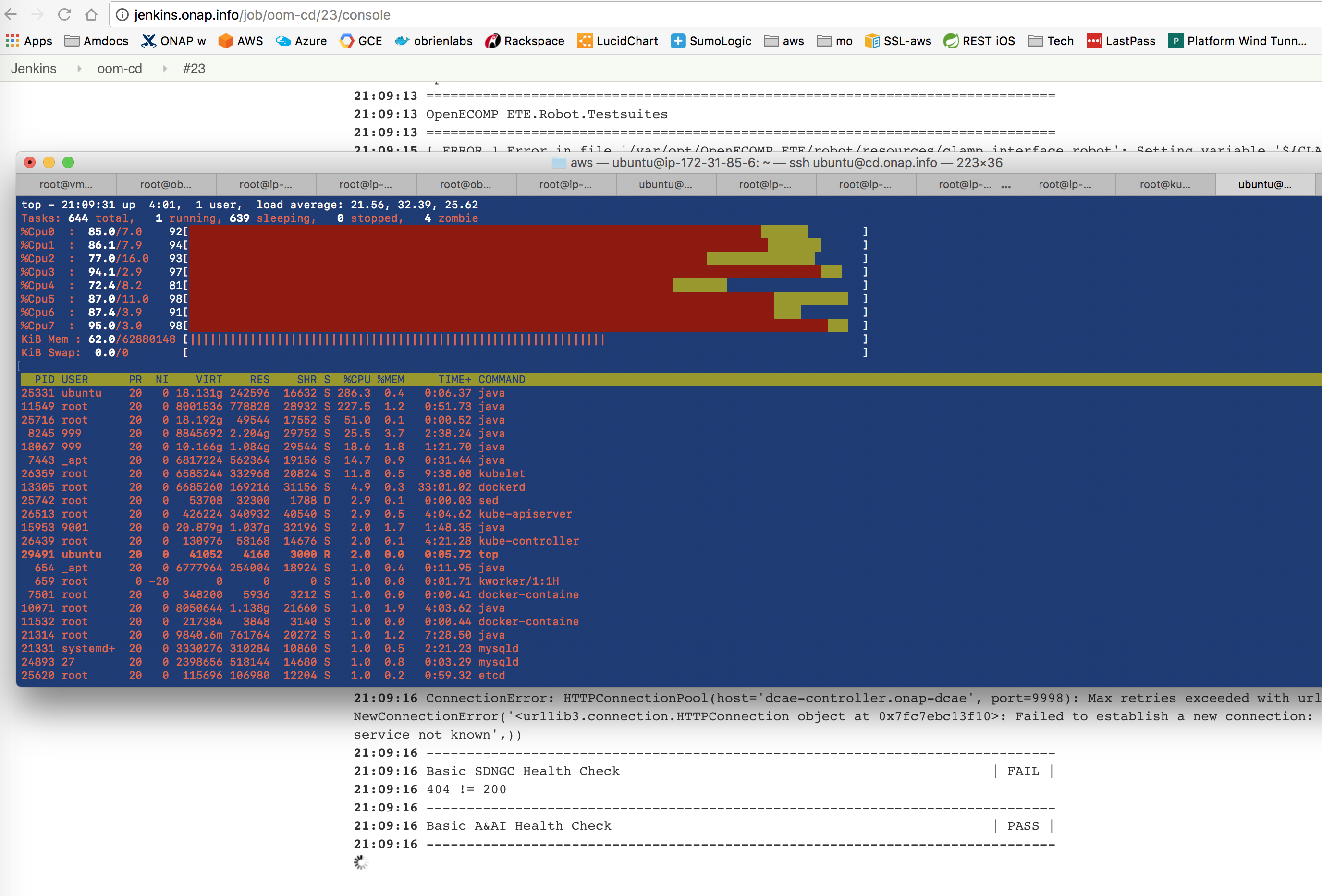

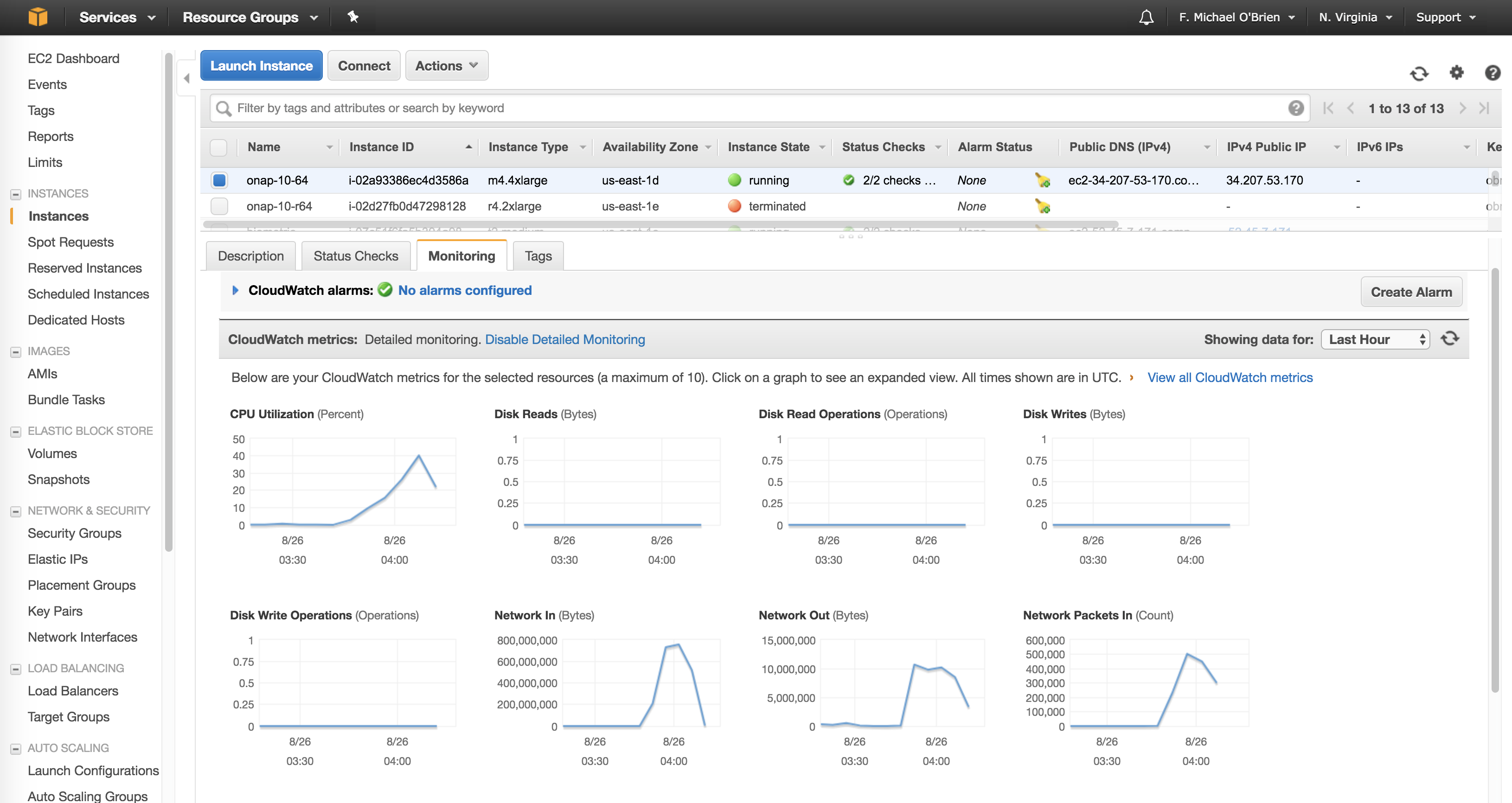

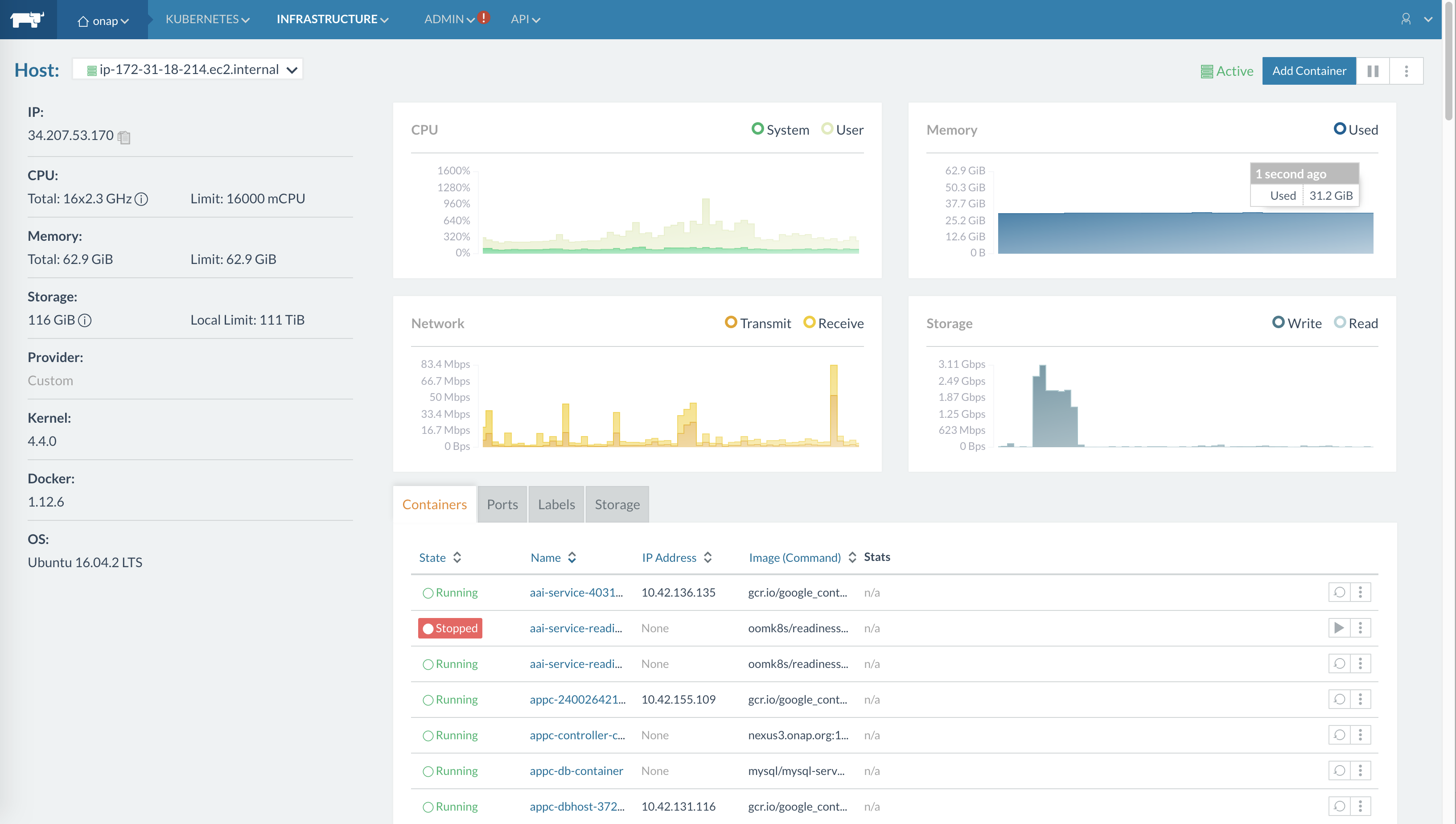

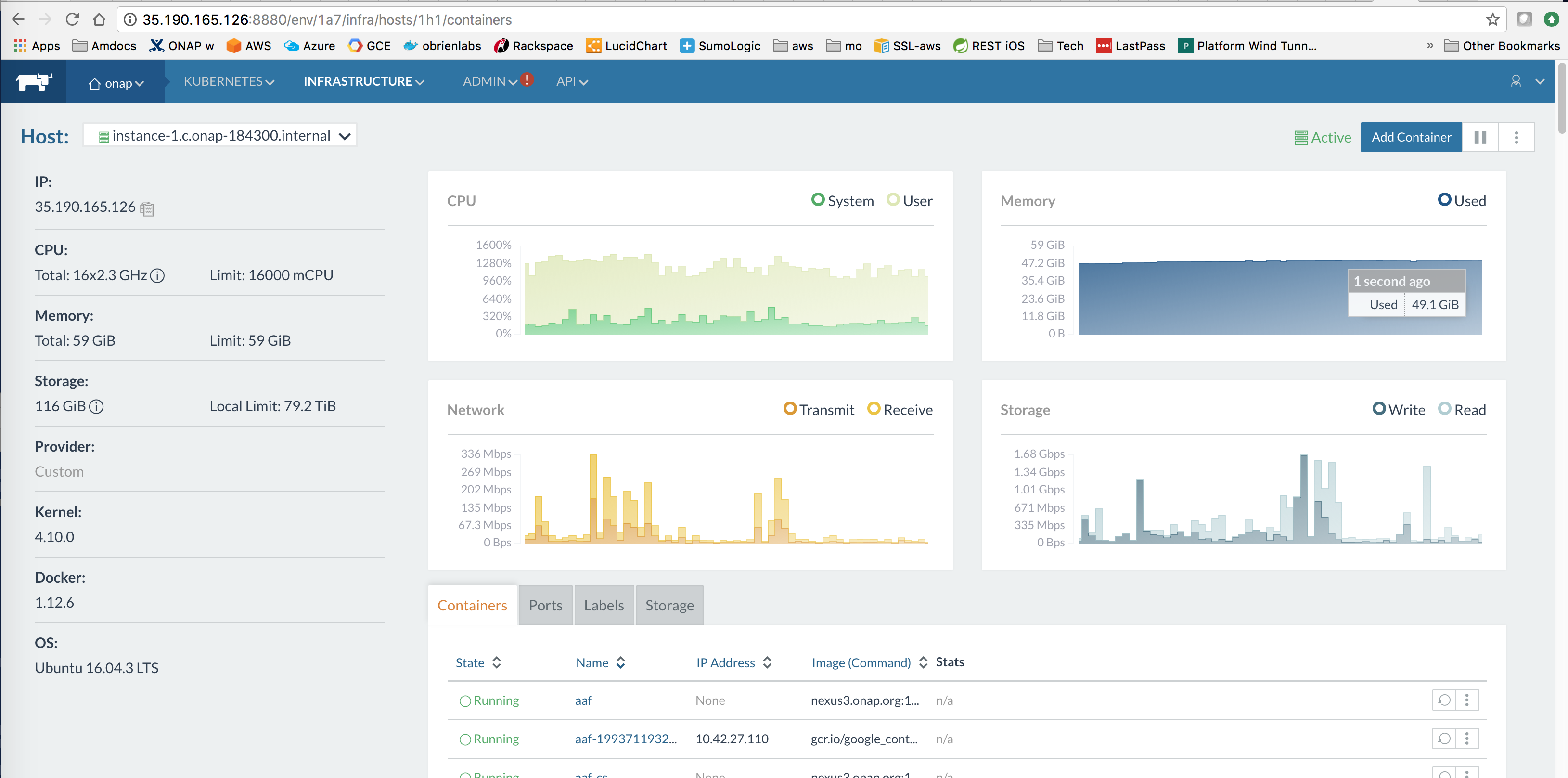

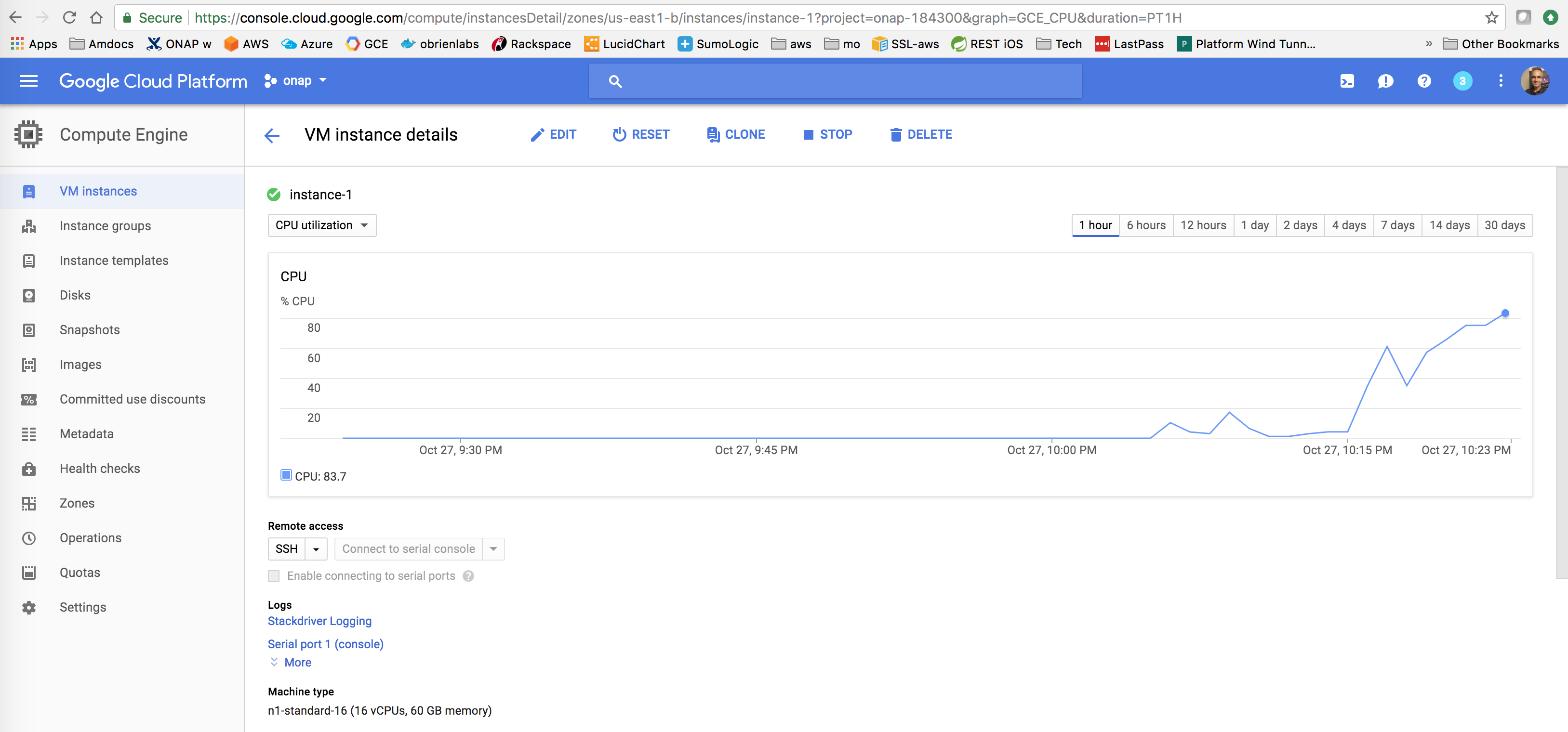

This is a snapshot of the CD system running on Amazon AWS at http://jenkins.onap.info/job/oom-cd-master/. It is a 1 + 4 node cluster composed of four 64G/8vCore R4.2xLarge VMs

Account Provider: (2) Robin of Amazon and Michael O'Brien of Amdocs

Amazon has donated an allocation enough for 512G of VM space (a large 4 x 122G/16vCore cluster and a secondary 9 x 16G cluster) in order to run CD systems since Dec 2017 - at a cost savings of at least $500/month - thank you very much Amazon in supporting ONAP See example max/med allocations for IT/Finance in ONAP Deployment Specification for Finance and Operations#AmazonAWS |

Amazon AWS is currently hosting our RI for ONAP Continuous Deployment - this is a joint Proof Of Concept between Amazon and ONAP.

Auto Continuous Deployment via Jenkins and Kibana

https://docs.aws.amazon.com/cli/latest/userguide/cli-install-macos.html

obrien:obrienlabs amdocs$ pip --version

pip 9.0.1 from /Library/Python/2.7/site-packages/pip-9.0.1-py2.7.egg (python 2.7)

obrien:obrienlabs amdocs$ curl -O https://bootstrap.pypa.io/get-pip.py

obrien:obrienlabs amdocs$ python3 get-pip.py --user

Requirement already up-to-date: pip in /Library/Frameworks/Python.framework/Versions/3.6/lib/python3.6/site-packages

obrien:obrienlabs amdocs$ pip3 install awscli --upgrade --user

Successfully installed awscli-1.14.41 botocore-1.8.45 pyasn1-0.4.2 s3transfer-0.1.13

obrien:obrienlabs amdocs$ ssh ubuntu@<your domain/ip>

$ sudo apt install python-pip

$ pip install awscli --upgrade --user

$ aws --version

aws-cli/1.14.41 Python/2.7.12 Linux/4.4.0-1041-aws botocore/1.8.45

$aws configure

AWS Access Key ID [None]: AK....Q

AWS Secret Access Key [None]: Dl....l

Default region name [None]: us-east-1

Default output format [None]: json

$aws ec2 describe-regions --output table

|| ec2.ca-central-1.amazonaws.com | ca-central-1 ||

....

https://docs.aws.amazon.com/cli/latest/reference/ec2/allocate-address.html

$aws ec2 allocate-address

{ "PublicIp": "35.172..", "Domain": "vpc", "AllocationId": "eipalloc-2f743..."} |

$ cat route53-a-record-change-set.json

{"Comment": "comment","Changes": [

{ "Action": "CREATE",

"ResourceRecordSet": {

"Name": "amazon.onap.cloud",

"Type": "A", "TTL": 300,

"ResourceRecords": [

{ "Value": "35.172.36.." }]}}]}

$ aws route53 change-resource-record-sets --hosted-zone-id Z...7 --change-batch file://route53-a-record-change-set.json

{ "ChangeInfo": { "Status": "PENDING", "Comment": "comment",

"SubmittedAt": "2018-02-17T15:02:46.512Z", "Id": "/change/C2QUNYTDVF453x" }}

$ dig amazon.onap.cloud

; <<>> DiG 9.9.7-P3 <<>> amazon.onap.cloud

amazon.onap.cloud. 300 IN A 35.172.36..

onap.cloud. 172800 IN NS ns-1392.awsdns-46.org. |
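The change-batch file used above can be generated rather than hand-edited. A minimal sketch that emits the same JSON structure for a given record name and IP; `route53_a_record_batch` is a hypothetical helper, and the default TTL of 300 and the "comment" string are taken from the example above.

```shell
#!/usr/bin/env bash
# route53_a_record_batch <record_name> <ipv4> [ttl]
# Prints a Route 53 change batch that CREATEs a single A record.
# Redirect the output to a file and pass it with --change-batch file://...
route53_a_record_batch() {
  local name=$1 ip=$2 ttl=${3:-300}
  cat <<EOF
{
  "Comment": "comment",
  "Changes": [
    {
      "Action": "CREATE",
      "ResourceRecordSet": {
        "Name": "${name}",
        "Type": "A",
        "TTL": ${ttl},
        "ResourceRecords": [ { "Value": "${ip}" } ]
      }
    }
  ]
}
EOF
}
```

For example, `route53_a_record_batch amazon.onap.cloud 192.0.2.10 > route53-a-record-change-set.json` writes the file consumed by the `aws route53 change-resource-record-sets` call above; 192.0.2.10 is a documentation placeholder to be replaced with your allocated elastic IP.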

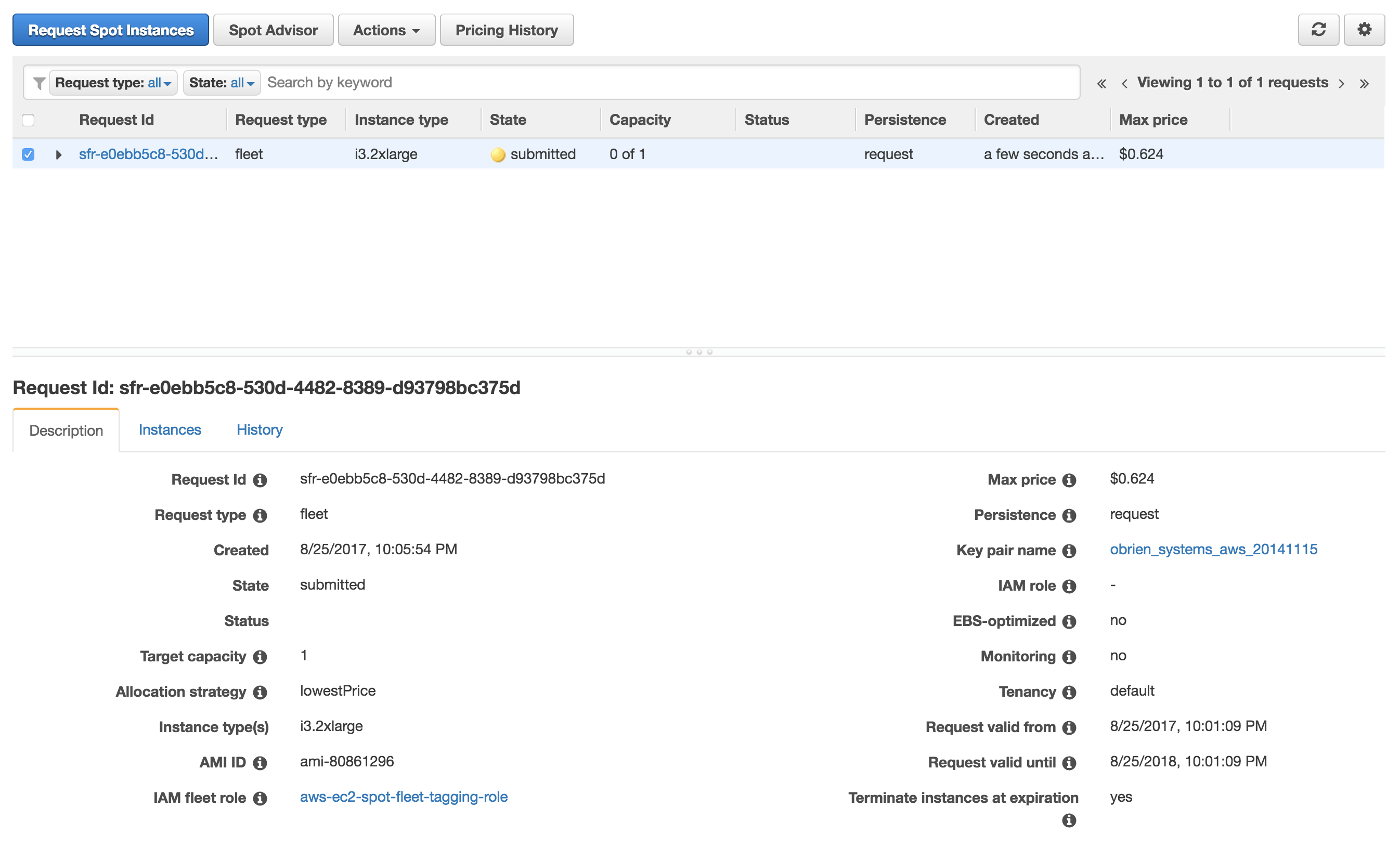

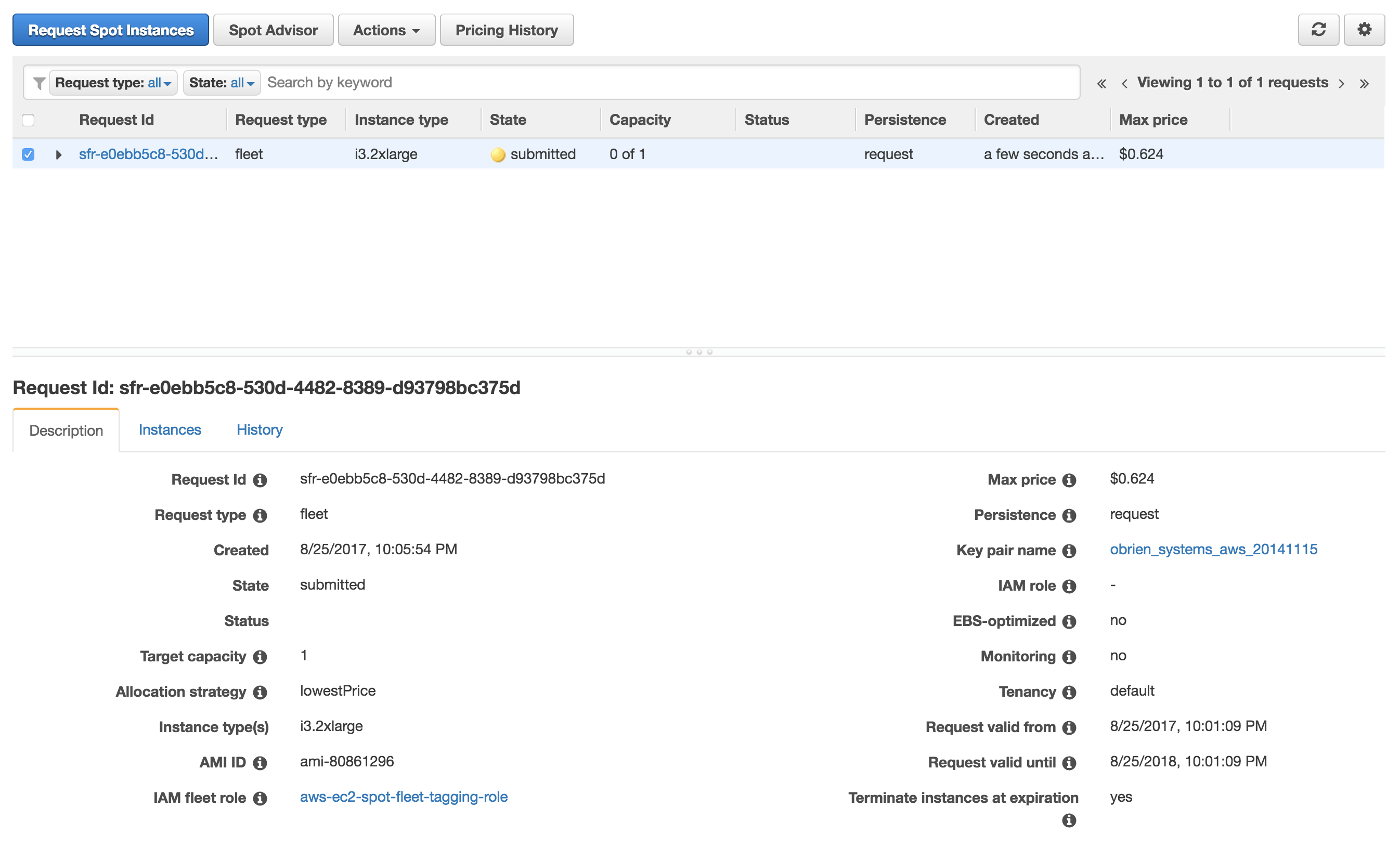

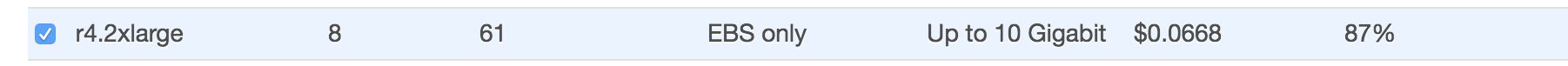

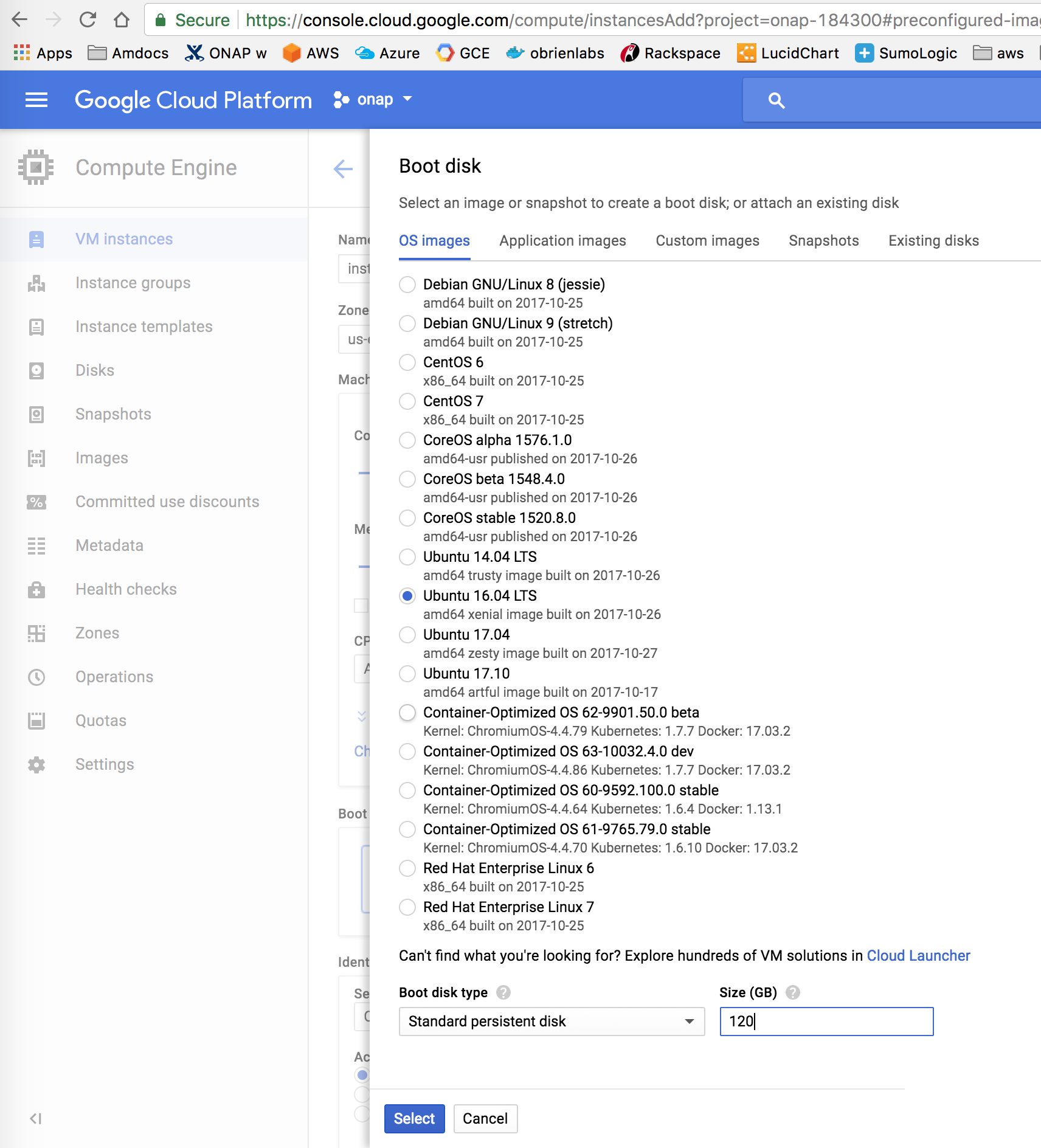

# request the usually cheapest $0.13 spot 64G EBS instance at AWS

aws ec2 request-spot-instances --spot-price "0.25" --instance-count 1 --type "one-time" --launch-specification file://aws_ec2_spot_cli.json

# don't pass in the following - it will be generated for the EBS volume

"SnapshotId": "snap-0cfc17b071e696816"

launch specification json

{ "ImageId": "ami-c0ddd64ba",

"InstanceType": "r4.2xlarge",

"KeyName": "obrien_systems_aws_2015",

"BlockDeviceMappings": [

{"DeviceName": "/dev/sda1",

"Ebs": {

"DeleteOnTermination": true,

"VolumeType": "gp2",

"VolumeSize": 120

}}],

"SecurityGroupIds": [ "sg-322c4nnn42" ]}

# results

{ "SpotInstanceRequests": [{ "Status": {

"Message": "Your Spot request has been submitted for review, and is pending evaluation.",

"Code": "pending-evaluation", |

aws ec2 describe-spot-instance-requests --spot-instance-request-id sir-1tyr5etg

"InstanceId": "i-02a653592cb748e2x", |

Can be done separately as long as it is in the first 30 sec during initialization and before rancher starts on the instance.

$aws ec2 associate-address --instance-id i-02a653592cb748e2x --allocation-id eipalloc-375c1d0x

{ "AssociationId": "eipassoc-a4b5a29x"} |

$aws ec2 reboot-instances --instance-ids i-02a653592cb748e2x |

look at https://github.com/kubernetes-incubator/external-storage

"From the NFS wizard"

Setting up your EC2 instance

Mounting your file system

If you are unable to connect, see our troubleshooting documentation.

https://docs.aws.amazon.com/efs/latest/ug/mounting-fs.html

https://git.onap.org/logging-analytics/tree/deploy/aws/oom_cluster_host_install.sh

ubuntu@ip-172-31-19-239:~$ sudo git clone https://gerrit.onap.org/r/logging-analytics

Cloning into 'logging-analytics'...

ubuntu@ip-172-31-19-239:~$ sudo cp logging-analytics/deploy/aws/oom_cluster_host_install.sh .

ubuntu@ip-172-31-19-239:~$ sudo ./oom_cluster_host_install.sh -n true -s <your domain/ip> -e fs-0000001b -r us-west-1 -t 5EA8A:15000:MWcEyoKw -c true -v

# fix helm after adding nodes to the master

ubuntu@ip-172-31-31-219:~$ sudo helm init --upgrade

$HELM_HOME has been configured at /home/ubuntu/.helm.

Tiller (the Helm server-side component) has been upgraded to the current version.

ubuntu@ip-172-31-31-219:~$ sudo helm repo add local http://127.0.0.1:8879

"local" has been added to your repositories

ubuntu@ip-172-31-31-219:~$ sudo helm repo list

NAME URL

stable https://kubernetes-charts.storage.googleapis.com

local http://127.0.0.1:8879

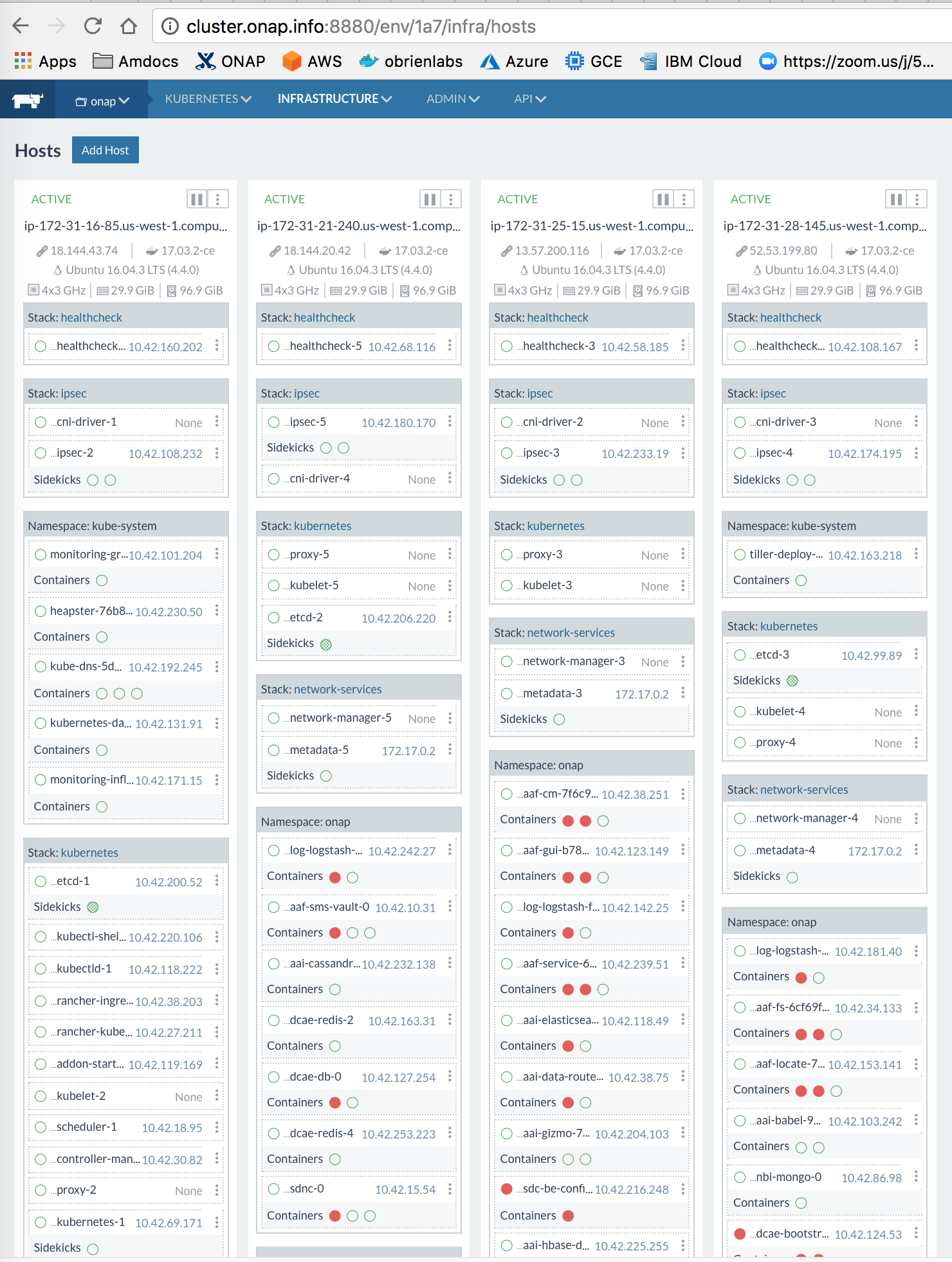

Notice that we are vCore bound. Ideally we need 64 vCores for a minimal production system

# setup the master

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo logging-analytics/deploy/rancher/oom_rancher_setup.sh -b master -s <your domain/ip> -e onap

# manually delete the host that was installed on the master - in the rancher gui for now

# run without a client on the master

sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n false -s <your domain/ip> -e fs-nnnnnn1b -r us-west-1 -t 371AEDC88zYAZdBXPM -c true -v true

ls /dockerdata-nfs/

onap test.sh

# run the script from git on each cluster node

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n true -s <your domain/ip> -e fs-nnnnnn1b -r us-west-1 -t 371AEDC88zYAZdBXPM -c true -v true

# check a node

ls /dockerdata-nfs/

onap test.sh

sudo docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6e4a57e19c39 rancher/healthcheck:v0.3.3 "/.r/r /rancher-en..." 1 second ago Up Less than a second r-healthcheck-healthcheck-5-f0a8f5e8

f9bffc6d9b3e rancher/network-manager:v0.7.19 "/rancher-entrypoi..." 1 second ago Up 1 second r-network-services-network-manager-5-103f6104

460f31281e98 rancher/net:holder "/.r/r /rancher-en..." 4 seconds ago Up 4 seconds r-ipsec-ipsec-5-2e22f370

3e30b0cf91bb rancher/agent:v1.2.9 "/run.sh run" 17 seconds ago Up 16 seconds rancher-agent

# On the master - fix helm after adding nodes to the master

sudo helm init --upgrade

$HELM_HOME has been configured at /home/ubuntu/.helm.

Tiller (the Helm server-side component) has been upgraded to the current version.

sudo helm repo add local http://127.0.0.1:8879

# check the cluster on the master

kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-172-31-16-85.us-west-1.compute.internal 129m 3% 1805Mi 5%

ip-172-31-25-15.us-west-1.compute.internal 43m 1% 1065Mi 3%

ip-172-31-28-145.us-west-1.compute.internal 40m 1% 1049Mi 3%

ip-172-31-21-240.us-west-1.compute.internal 30m 0% 965Mi 3%

# important: secure your rancher cluster by adding an oauth github account - to keep out crypto miners

http://cluster.onap.info:8880/admin/access/github

# now back to master to install onap

# get the dev.yaml and set any pods you want up to true as well as fill out the openstack parameters

sudo wget https://git.onap.org/oom/plain/kubernetes/onap/resources/environments/dev.yaml

sudo cp logging-analytics/deploy/cd.sh .

sudo ./cd.sh -b master -e onap -c true -d true -w false -r false

136 pending > 0 at the 1th 15 sec interval

ubuntu@ip-172-31-28-152:~$ kubectl get pods -n onap | grep -E '1/1|2/2' | wc -l

20

120 pending > 0 at the 39th 15 sec interval

ubuntu@ip-172-31-28-152:~$ kubectl get pods -n onap | grep -E '1/1|2/2' | wc -l

47

99 pending > 0 at the 93th 15 sec interval

after an hour most of the 136 containers should be up

kubectl get pods --all-namespaces | grep -E '0/|1/2'

onap onap-aaf-cs-59954bd86f-vdvhx 0/1 CrashLoopBackOff 7 37m

onap onap-aaf-oauth-57474c586c-f9tzc 0/1 Init:1/2 2 37m

onap onap-aai-champ-7d55cbb956-j5zvn 0/1 Running 0 37m

onap onap-drools-0 0/1 Init:0/1 0 1h

onap onap-nexus-54ddfc9497-h74m2 0/1 CrashLoopBackOff 17 1h

onap onap-sdc-be-777759bcb9-ng7zw 1/2 Running 0 1h

onap onap-sdc-es-66ffbcd8fd-v8j7g 0/1 Running 0 1h

onap onap-sdc-fe-75fb4965bd-bfb4l 0/2 Init:1/2 6 1h

# cpu bound - a small cluster has 4x4 cores - try to run with 4x16 cores

ubuntu@ip-172-31-28-152:~$ kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-172-31-28-145.us-west-1.compute.internal 3699m 92% 26034Mi 85%

ip-172-31-21-240.us-west-1.compute.internal 3741m 93% 3872Mi 12%

ip-172-31-16-85.us-west-1.compute.internal 3997m 99% 23160Mi 75%

ip-172-31-25-15.us-west-1.compute.internal 3998m 99% 27076Mi 88%

|
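To spot the CPU-bound condition in the last `kubectl top nodes` listing without reading the table by eye, a small filter can help. This is a minimal sketch and not part of the logging-analytics scripts: the function name `cpu_bound_nodes` and the 90% default threshold are assumptions chosen for illustration.

```shell
# Print the names of nodes whose CPU% is at or above a threshold.
# Reads `kubectl top nodes` output on stdin.
# The default threshold of 90 is an assumption, not a value from the scripts.
cpu_bound_nodes() {
  threshold="${1:-90}"
  awk -v t="$threshold" '
    NR > 1 {                 # skip the header row
      pct = $3               # third column is CPU%
      sub(/%/, "", pct)      # strip the percent sign
      if (pct + 0 >= t) print $1
    }'
}

# Usage on the master:
#   kubectl top nodes | cpu_bound_nodes 90
```

If every node is listed, the cluster is likely vCore bound and larger instance types are worth trying.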

Node: R4.large (2 cores, 16g)

Notice that we are vCore bound. Ideally we need 64 vCores for a minimal production system - this cluster runs with 12 x 4 vCores = 48.
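The vCore arithmetic can be checked for other cluster shapes with a small helper. This is a sketch only: `vcore_shortfall` is a hypothetical name, and the 64 vCore target is the figure quoted on this page rather than a measured requirement.

```shell
# Report how many vCores a cluster is short of a target.
# Arguments: node count, vCores per node, target vCores.
vcore_shortfall() {
  nodes="$1"
  cores_per_node="$2"
  target="$3"
  have=$((nodes * cores_per_node))
  short=$((target - have))
  if [ "$short" -lt 0 ]; then
    short=0                  # cluster already meets the target
  fi
  echo "$short"
}

# Usage:
#   vcore_shortfall 12 4 64    # 12 nodes of 4 vCores against a 64 vCore target
```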

30 min after helm install start - DCAE containers come up at 55

ssh ubuntu@ld.onap.info

# setup the master

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo logging-analytics/deploy/rancher/oom_rancher_setup.sh -b master -s <your domain/ip> -e onap

# manually delete the host that was installed on the master - in the rancher gui for now

# get the token for use with the EFS/NFS share

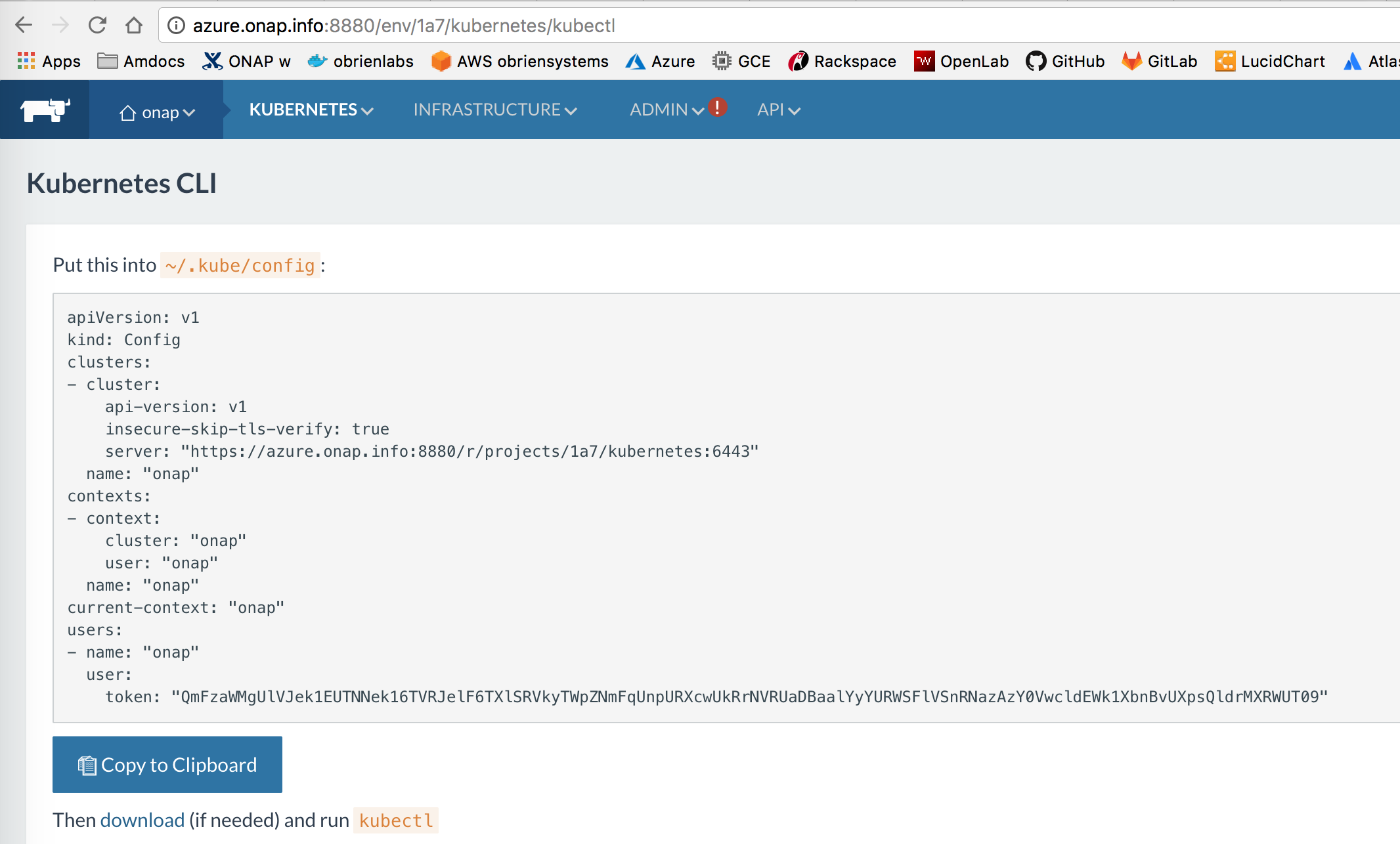

ubuntu@ip-172-31-8-245:~$ cat ~/.kube/config | grep token

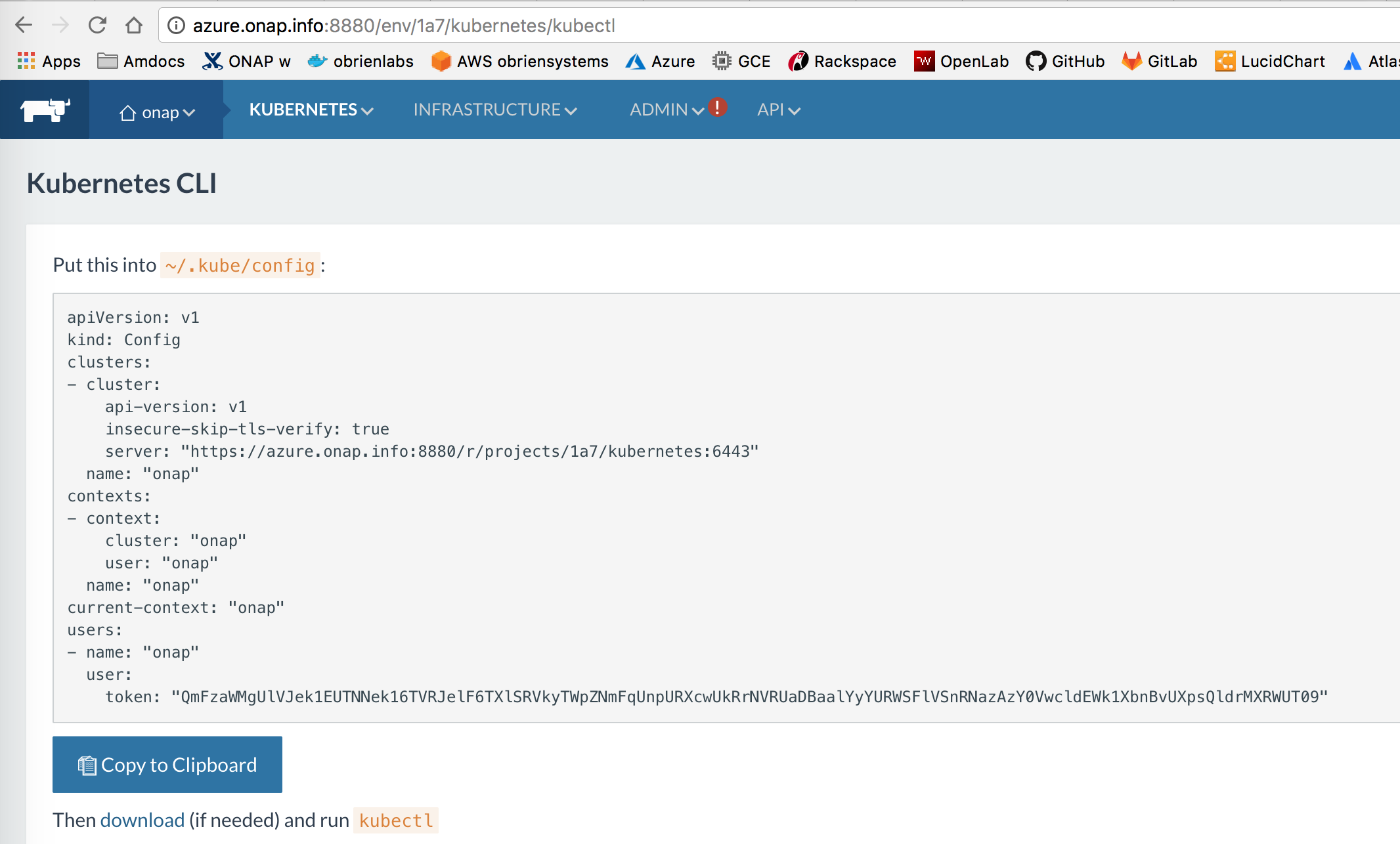

token: "QmFzaWMgTVVORk4wRkdNalF3UXpNNE9E.........RtNWxlbXBCU0hGTE1reEJVamxWTjJ0Tk5sWlVjZz09"
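To pass the token to the `-t` flag without copying it by hand, it can be pulled out of the kube config. A minimal sketch, assuming the config holds a single `token:` line as in the grep output above; `kube_token` is a hypothetical helper name.

```shell
# Print the first token value found in a kube config read from stdin.
# Surrounding double quotes are stripped.
kube_token() {
  awk '$1 == "token:" { gsub(/"/, "", $2); print $2; exit }'
}

# Usage:
#   TOKEN=$(kube_token < ~/.kube/config)
```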

# run without a client on the master

ubuntu@ip-172-31-8-245:~$ sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n false -s ld.onap.info -e fs-....eb -r us-east-2 -t QmFzaWMgTVVORk4wRkdNalF3UX..........aU1dGSllUVkozU0RSTmRtNWxlbXBCU0hGTE1reEJVamxWTjJ0Tk5sWlVjZz09 -c true -v true

ls /dockerdata-nfs/

onap test.sh

# run the script from git on each cluster node

sudo git clone https://gerrit.onap.org/r/logging-analytics

sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n true -s <your domain/ip> -e fs-nnnnnn1b -r us-west-1 -t 371AEDC88zYAZdBXPM -c true -v true

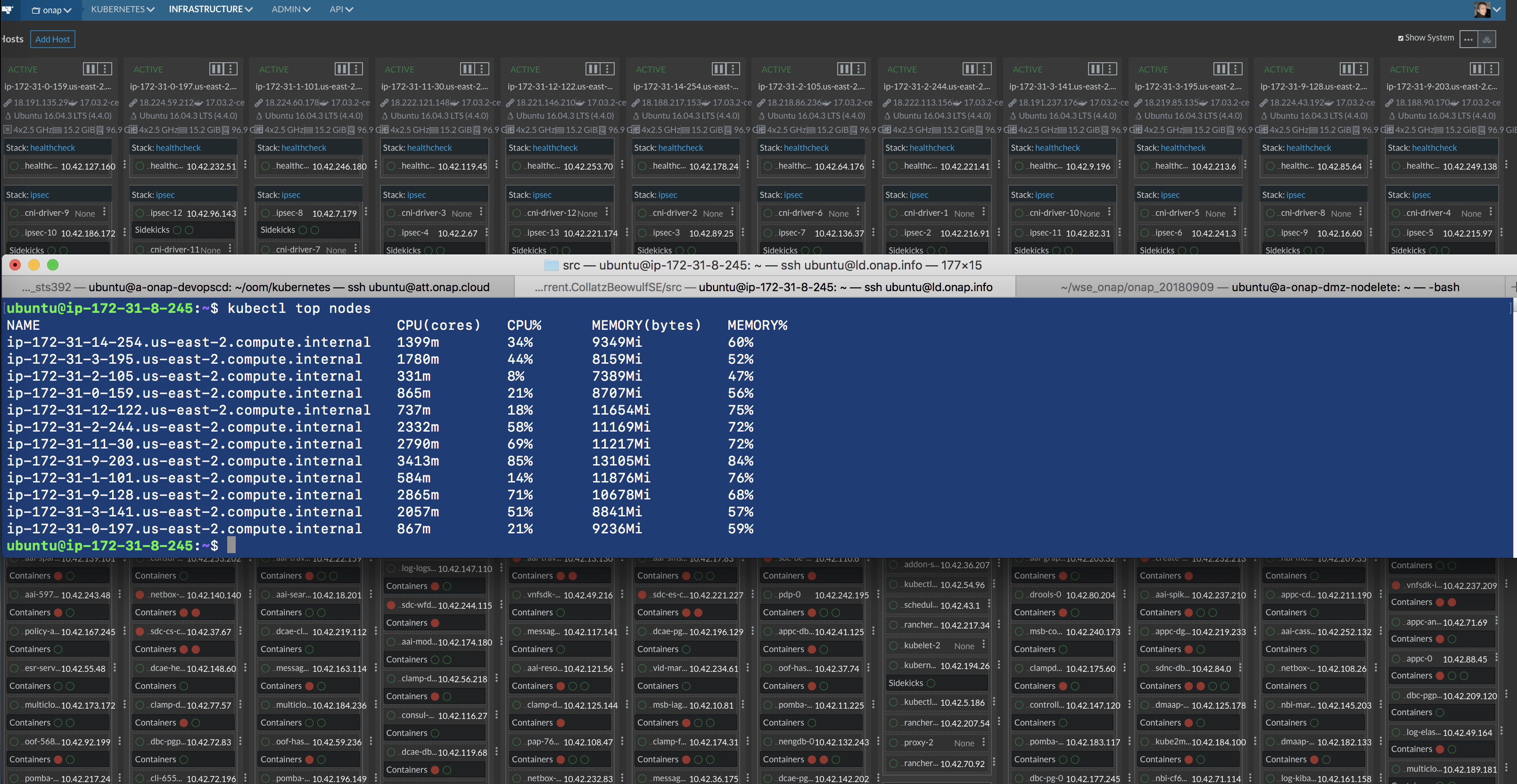

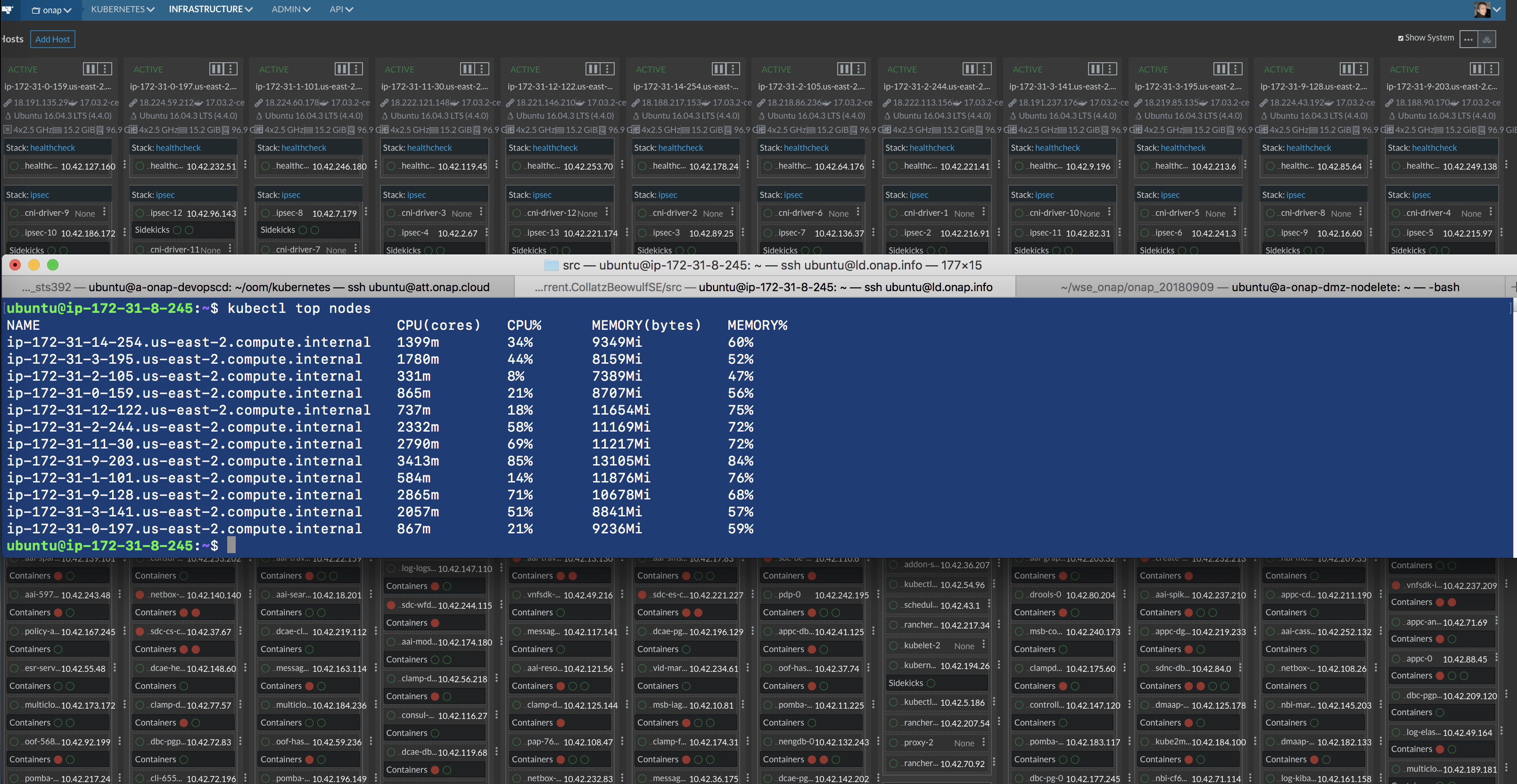
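Running the host install by hand on each of 12 nodes gets tedious. The sketch below only prints one ssh command per node so the list can be reviewed before anything runs; it assumes key-based ssh as the `ubuntu` user and passwordless sudo on every node, and `print_node_install_cmds` is a hypothetical helper, not something shipped in logging-analytics.

```shell
# Print (do not execute) the per-node install command for each host.
# Arguments: server name, EFS id, region, token, then one or more node hosts.
print_node_install_cmds() {
  server="$1"
  efs_id="$2"
  region="$3"
  token="$4"
  shift 4
  for host in "$@"; do
    printf 'ssh ubuntu@%s "sudo git clone https://gerrit.onap.org/r/logging-analytics && sudo logging-analytics/deploy/aws/oom_cluster_host_install.sh -n true -s %s -e %s -r %s -t %s -c true -v true"\n' \
      "$host" "$server" "$efs_id" "$region" "$token"
  done
}

# Usage (host names are placeholders):
#   print_node_install_cmds ld.onap.info fs-nnnnnn1b us-west-1 "$TOKEN" node1 node2
```

Pipe the output to `sh` only after checking that the server, EFS id, region and token are the ones you expect.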

ubuntu@ip-172-31-8-245:~$ kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

ip-172-31-14-254.us-east-2.compute.internal 45m 1% 1160Mi 7%

ip-172-31-3-195.us-east-2.compute.internal 29m 0% 1023Mi 6%

ip-172-31-2-105.us-east-2.compute.internal 31m 0% 1004Mi 6%

ip-172-31-0-159.us-east-2.compute.internal 30m 0% 1018Mi 6%

ip-172-31-12-122.us-east-2.compute.internal 34m 0% 1002Mi 6%

ip-172-31-0-197.us-east-2.compute.internal 30m 0% 1015Mi 6%

ip-172-31-2-244.us-east-2.compute.internal 123m 3% 2032Mi 13%

ip-172-31-11-30.us-east-2.compute.internal 38m 0% 1142Mi 7%

ip-172-31-9-203.us-east-2.compute.internal 33m 0% 998Mi 6%

ip-172-31-1-101.us-east-2.compute.internal 32m 0% 996Mi 6%

ip-172-31-9-128.us-east-2.compute.internal 31m 0% 1037Mi 6%

ip-172-31-3-141.us-east-2.compute.internal 30m 0% 1011Mi 6%

# now back to master to install onap

# get the dev.yaml and set any pods you want up to true as well as fill out the openstack parameters

sudo wget https://git.onap.org/oom/plain/kubernetes/onap/resources/environments/dev.yaml

sudo cp logging-analytics/deploy/cd.sh .

sudo ./cd.sh -b master -e onap -c true -d true -w false -r false

after an hour most of the 136 containers should be up

kubectl get pods --all-namespaces | grep -E '0/|1/2'

|
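The `grep -E '0/|1/2'` filter catches the common cases but misses pods such as 2/3 and also lists completed jobs. A slightly stricter sketch compares the two halves of the READY column instead; `not_ready_pods` is a hypothetical helper and assumes the column layout of `kubectl get pods --all-namespaces`.

```shell
# List pods whose ready count is below the container count.
# Reads `kubectl get pods --all-namespaces` output on stdin.
# Columns assumed: NAMESPACE NAME READY STATUS RESTARTS AGE
not_ready_pods() {
  awk '
    $3 ~ /^[0-9]+\/[0-9]+$/ && $4 != "Completed" {   # skips header and finished jobs
      split($3, ready, "/")
      if (ready[1] + 0 < ready[2] + 0) print $1 "/" $2, $3, $4
    }'
}

# Usage:
#   kubectl get pods --all-namespaces | not_ready_pods
```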

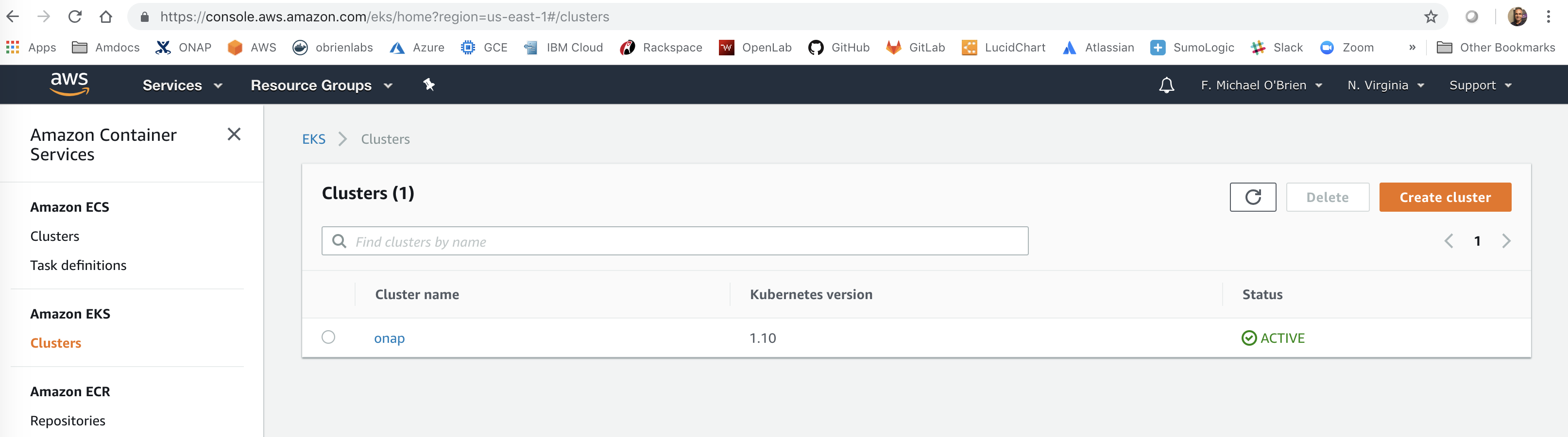

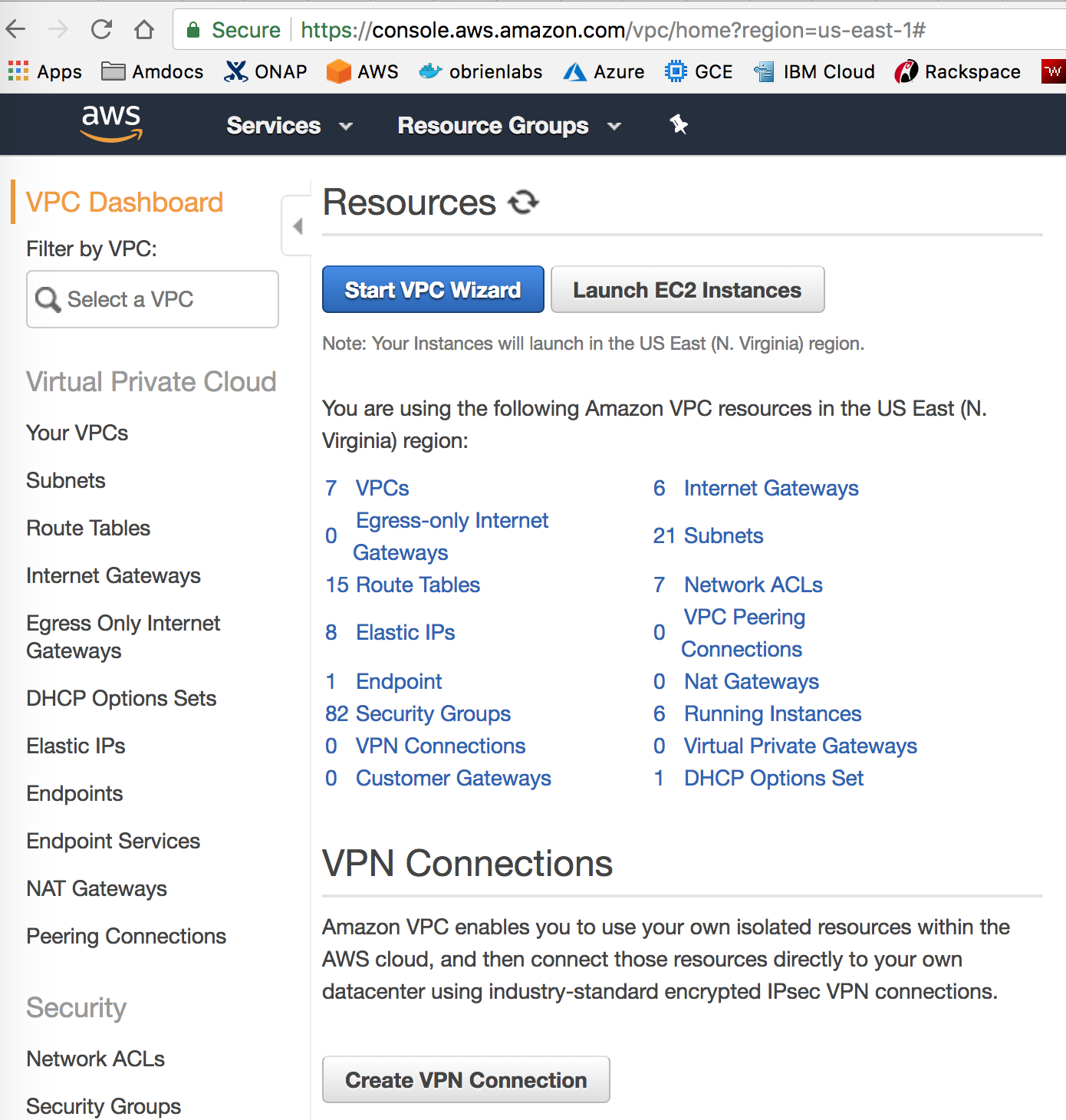

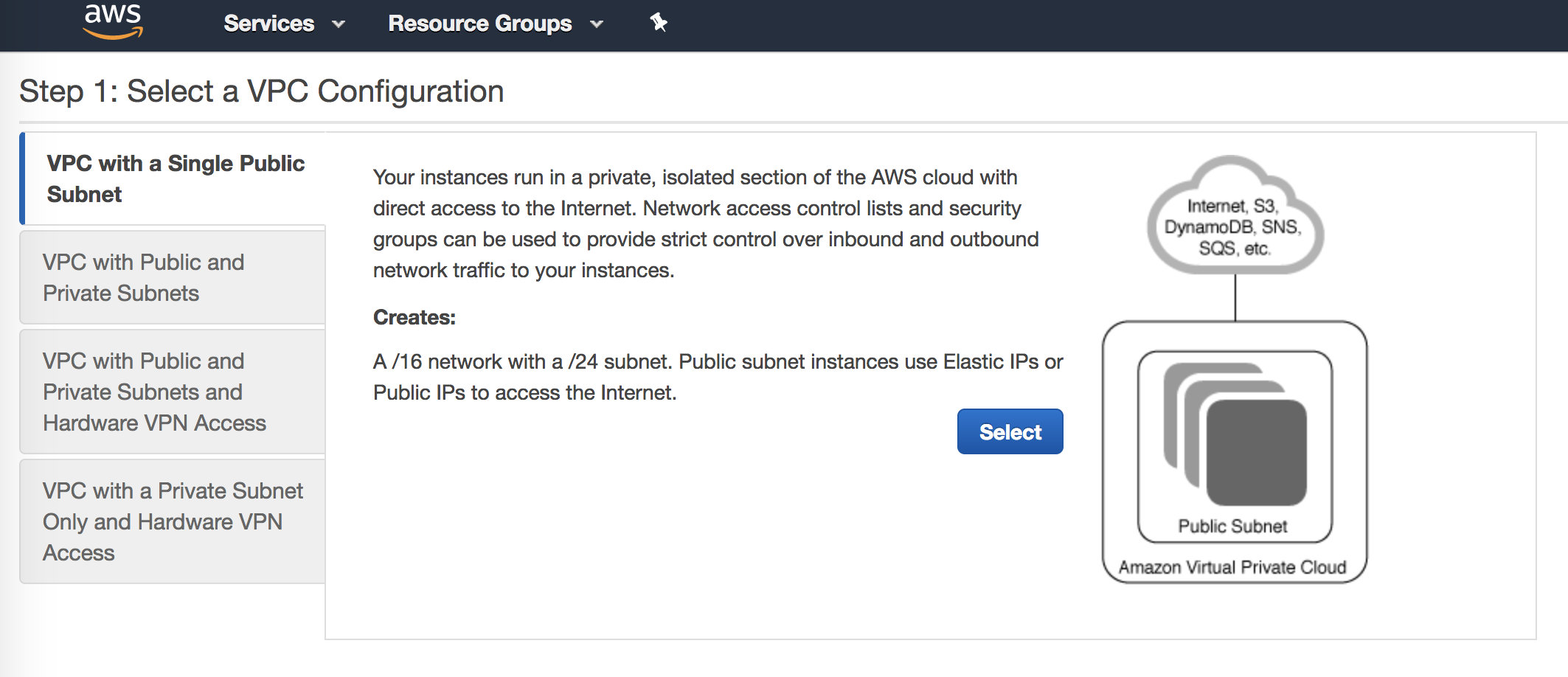

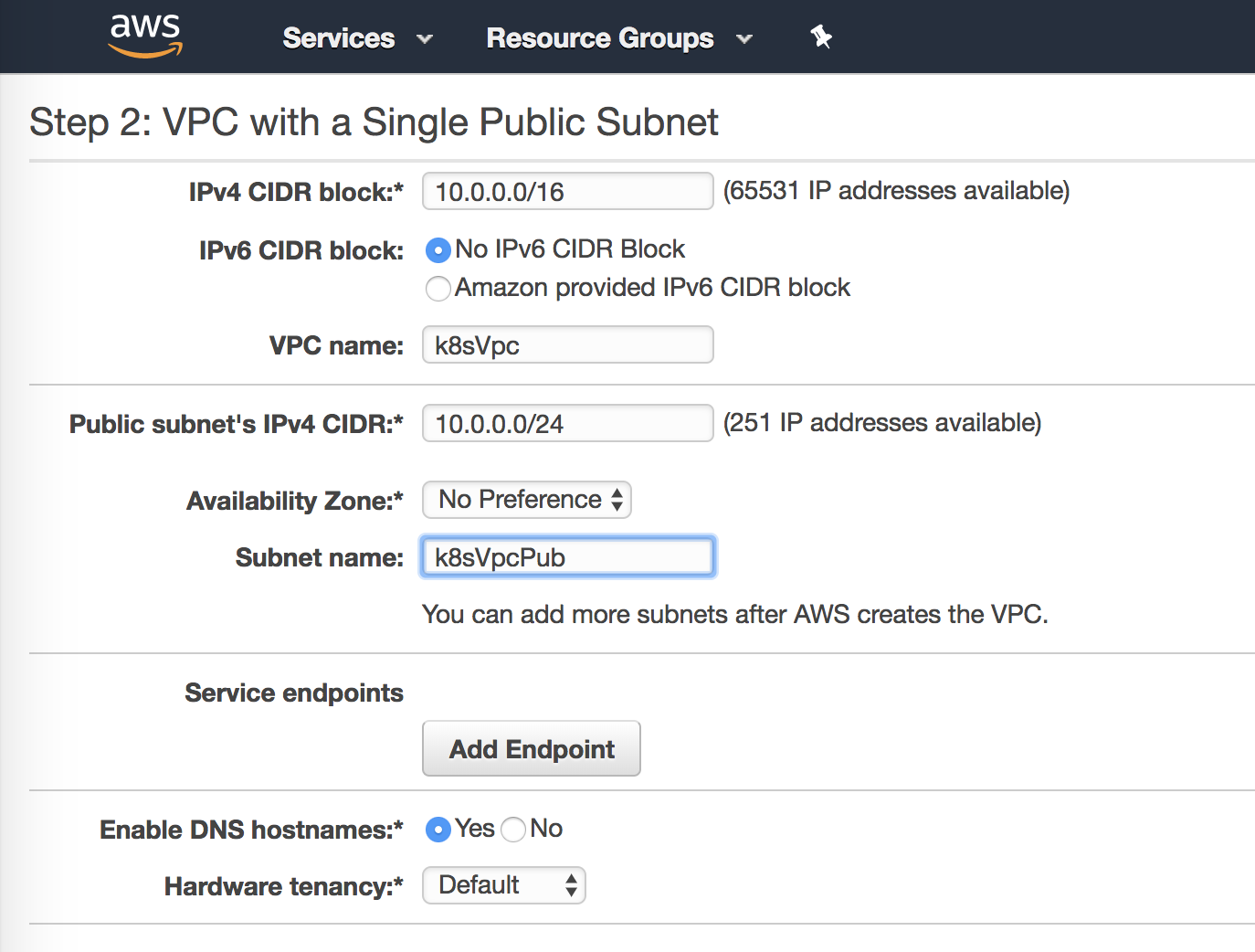

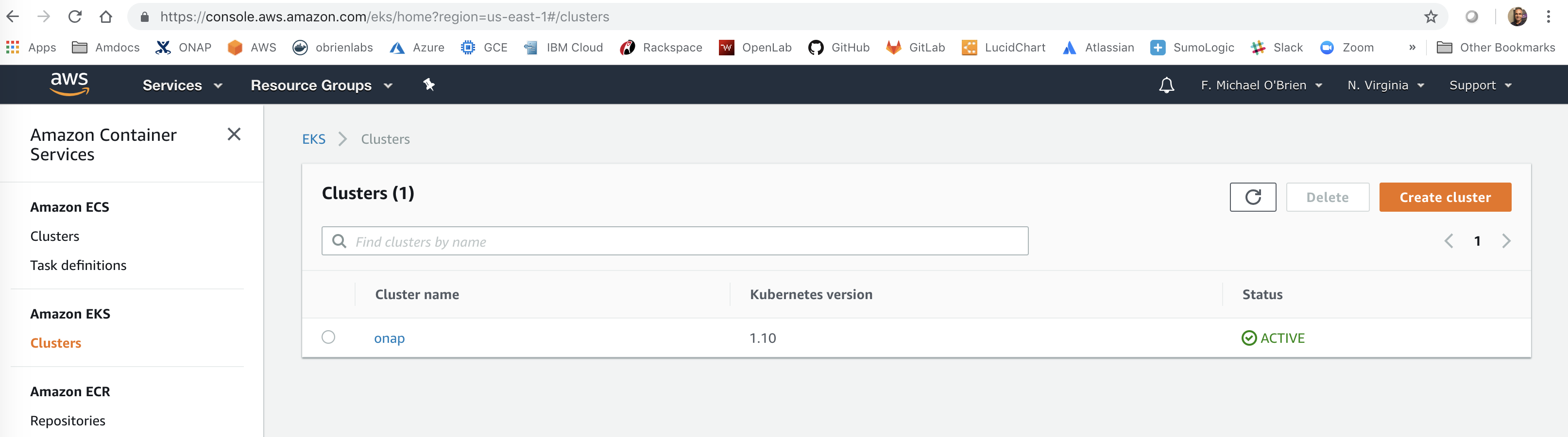

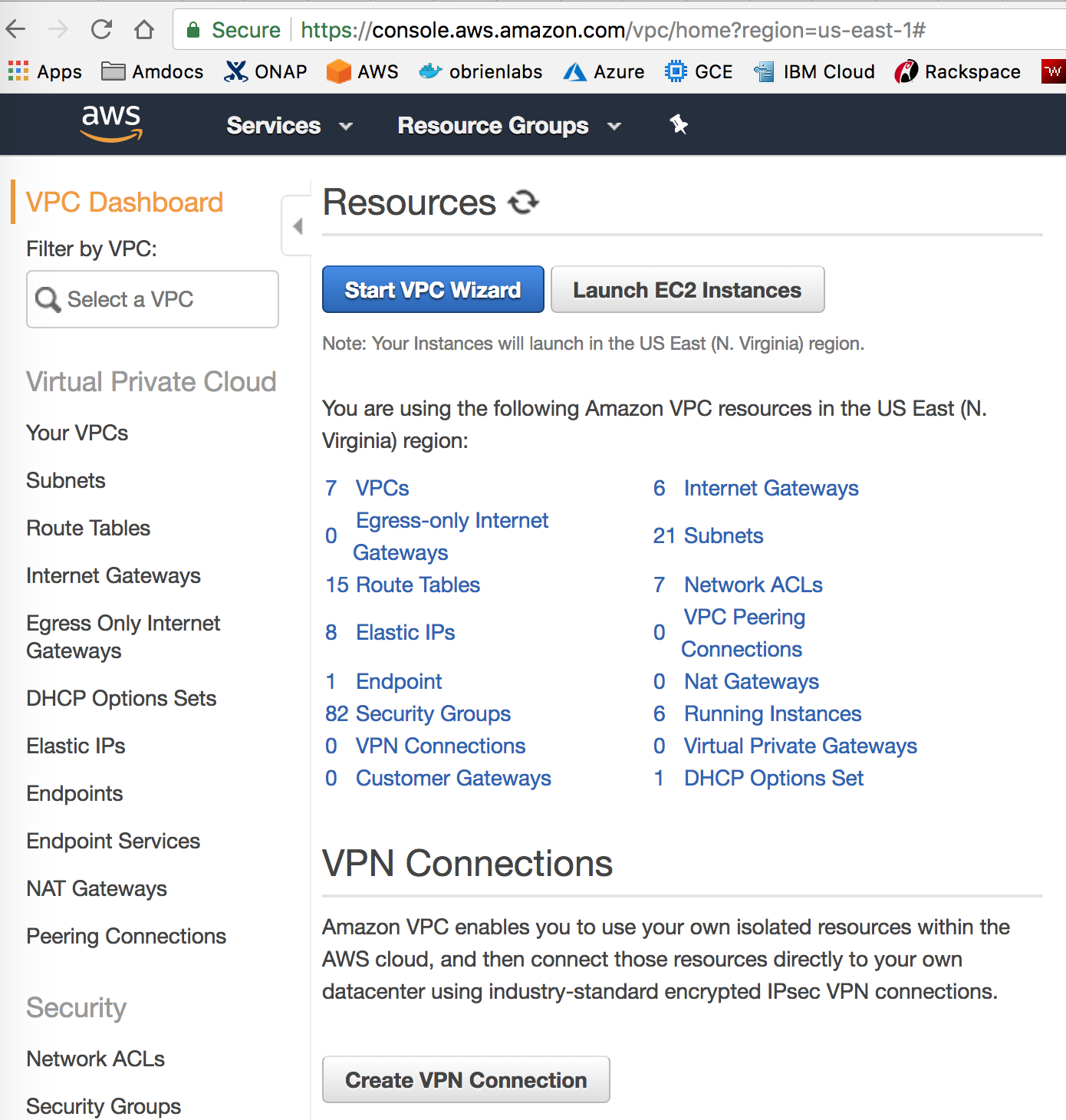

follow

https://docs.aws.amazon.com/eks/latest/userguide/getting-started.html

https://aws.amazon.com/getting-started/projects/deploy-kubernetes-app-amazon-eks/

follow the VPC CNI plugin - https://aws.amazon.com/blogs/opensource/vpc-cni-plugin-v1-1-available/

and 20190121 work with John Lotoski on https://lists.onap.org/g/onap-discuss/topic/aws_efs_nfs_and_rancher_2_2/29382184?p=,,,20,0,0,0::recentpostdate%2Fsticky,,,20,2,0,29382184

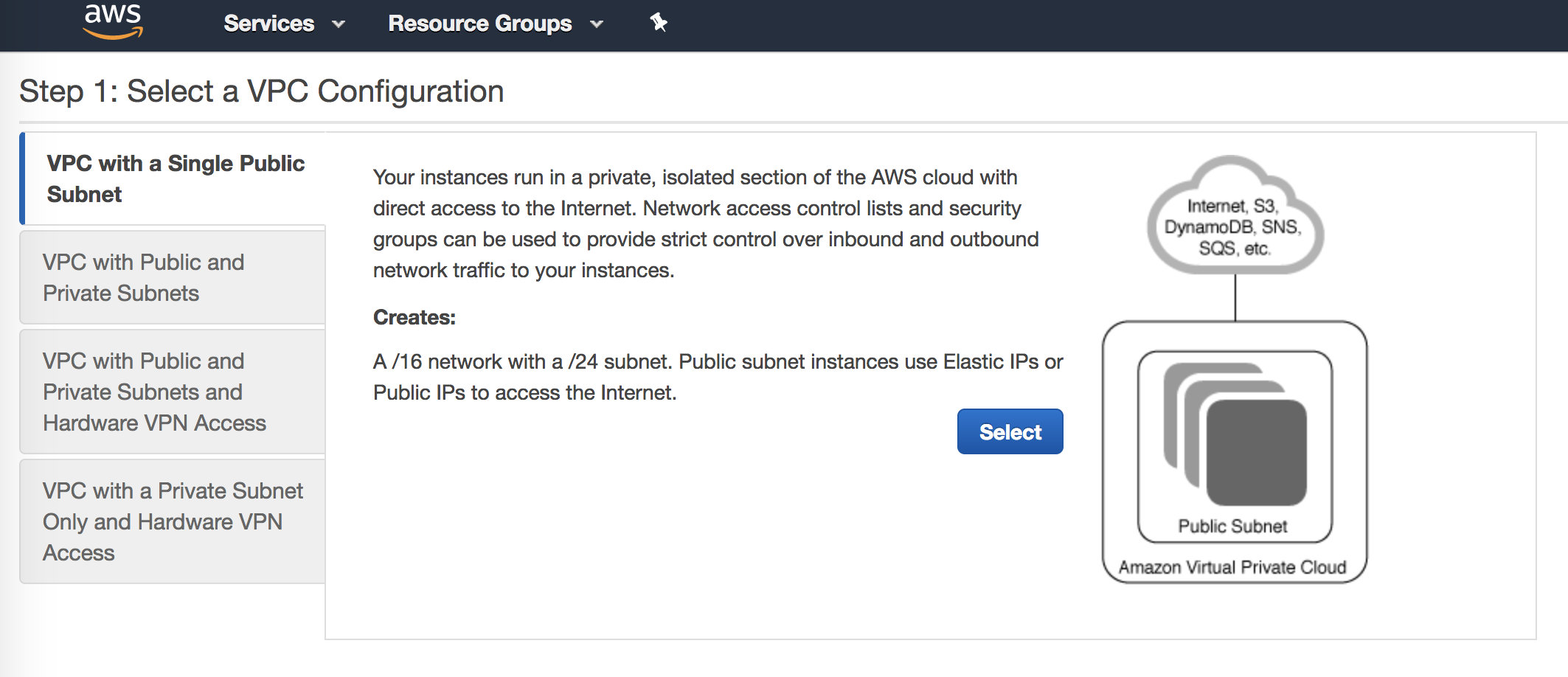

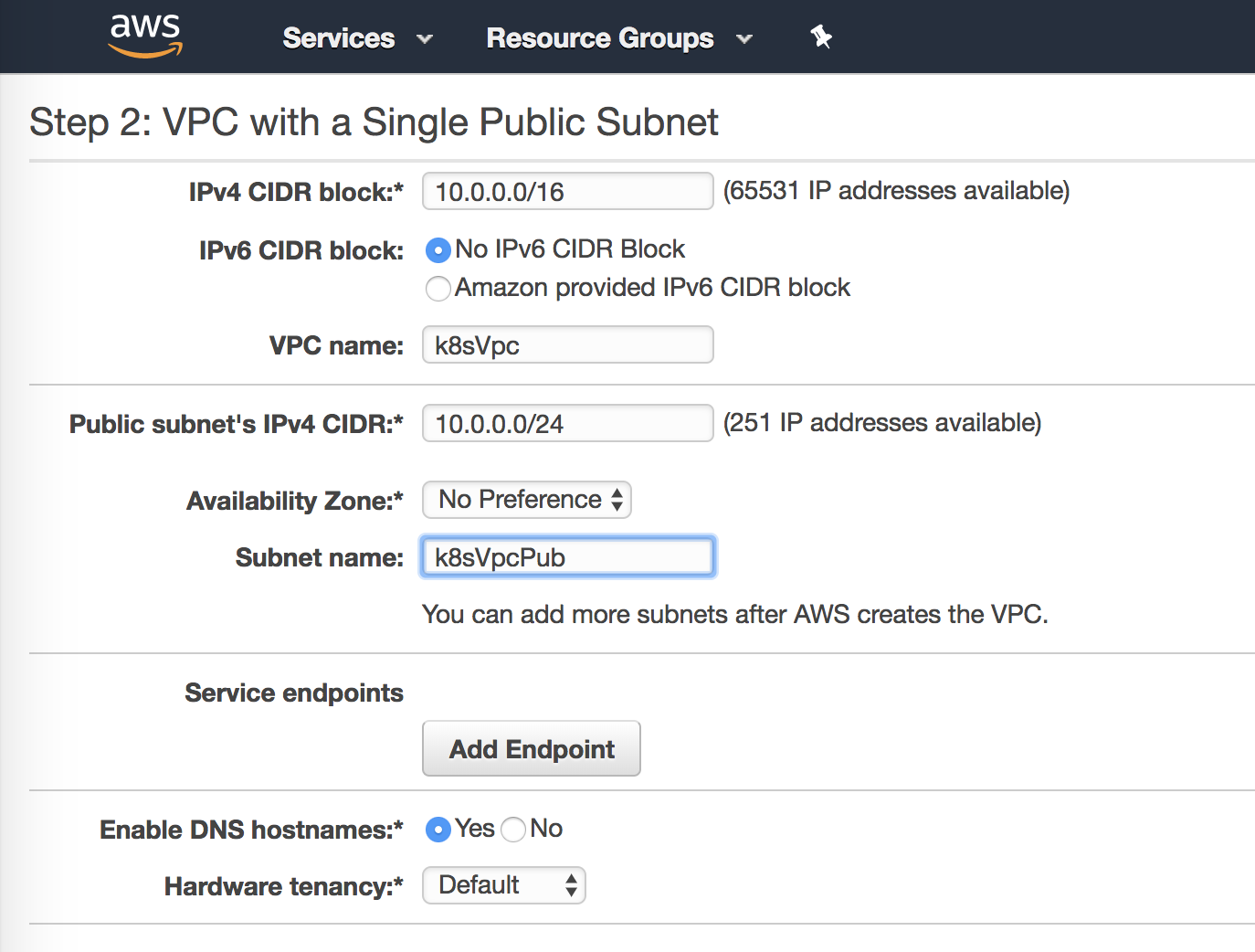

Standard ELB and public/private VPC

oom_rancher_install.sh is in under https://gerrit.onap.org/r/#/c/32019/

see

cd.sh in under https://gerrit.onap.org/r/#/c/32653/

Scenario: installing Rancher on a clean Ubuntu 16.04 64G VM (single collocated server/host) and the master branch of ONAP via OOM deployment (2 scripts)

1 hour video of automated installation on an AWS EC2 spot instance

$ aws ec2 terminate-instances --instance-ids i-0040425ac8c0d8f6x

{ "TerminatingInstances": [ {

"InstanceId": "i-0040425ac8c0d8f63",

"CurrentState": {

"Code": 32,

"Name": "shutting-down" },

"PreviousState": {

"Code": 16,

"Name": "running"

} } ]} |

Video: see Installing and Running the ONAP Demos - ONAP Deployment Videos

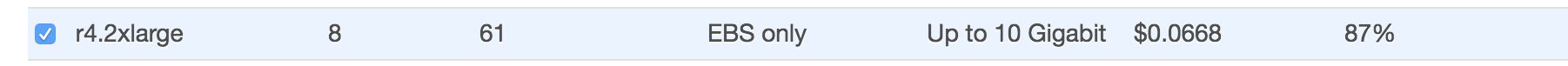

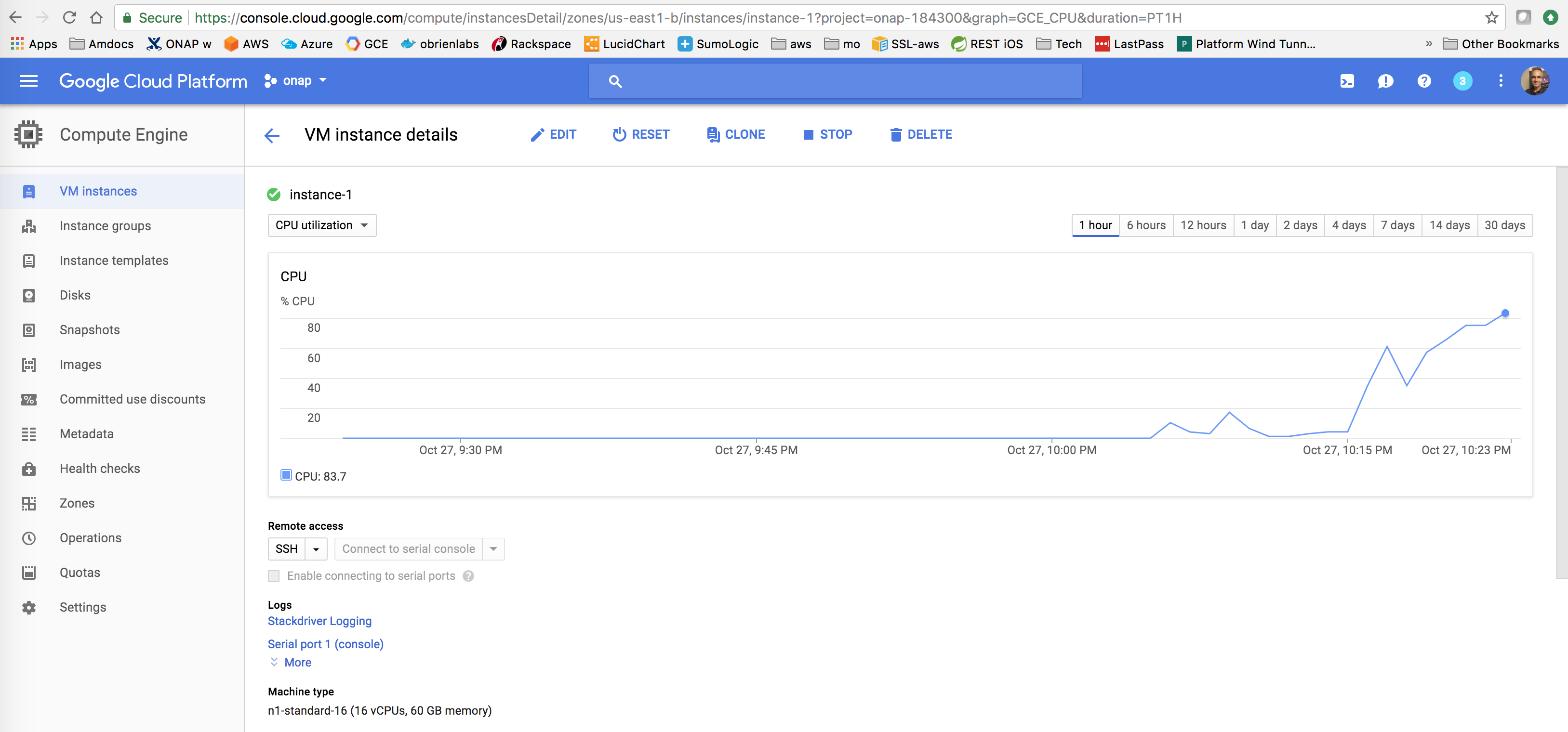

We can run ONAP on an AWS EC2 instance for $0.17/hour as opposed to Rackspace at $1.12/hour for a 64G Ubuntu host VM.
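To compare what those hourly rates mean over a test session, a one-line calculator is enough. This is an illustrative sketch: `cost_for_hours` is a hypothetical helper, and spot prices move, so the rates are the ones quoted here and not current pricing.

```shell
# Print the cost of running one instance for a number of hours.
# Arguments: hourly rate in dollars, hours. awk handles the decimal math.
cost_for_hours() {
  awk -v rate="$1" -v hours="$2" 'BEGIN { printf "%.2f\n", rate * hours }'
}

# Usage:
#   cost_for_hours 0.17 24    # EC2 rate quoted above, one day
#   cost_for_hours 1.12 24    # Rackspace rate quoted above, one day
```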

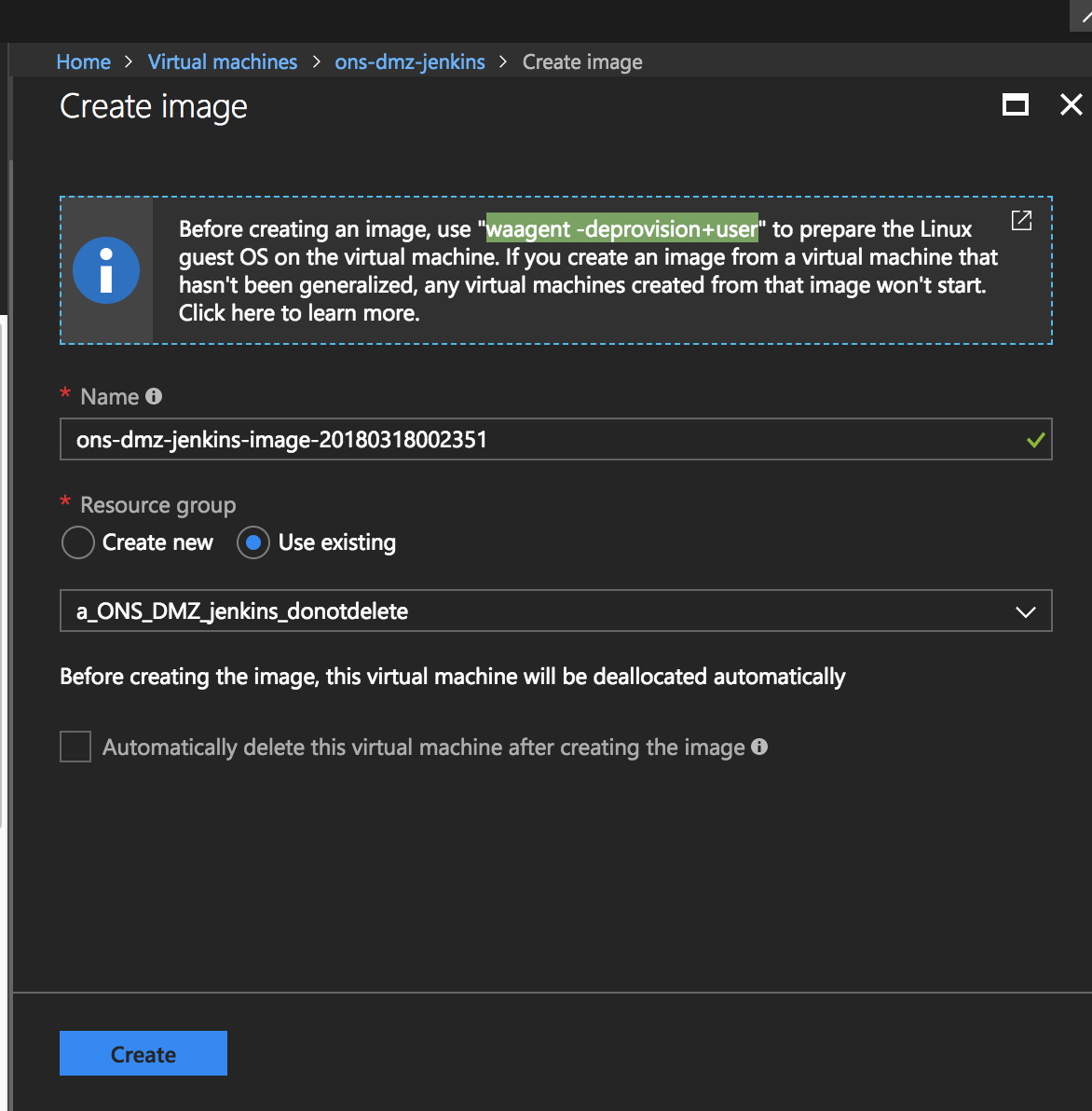

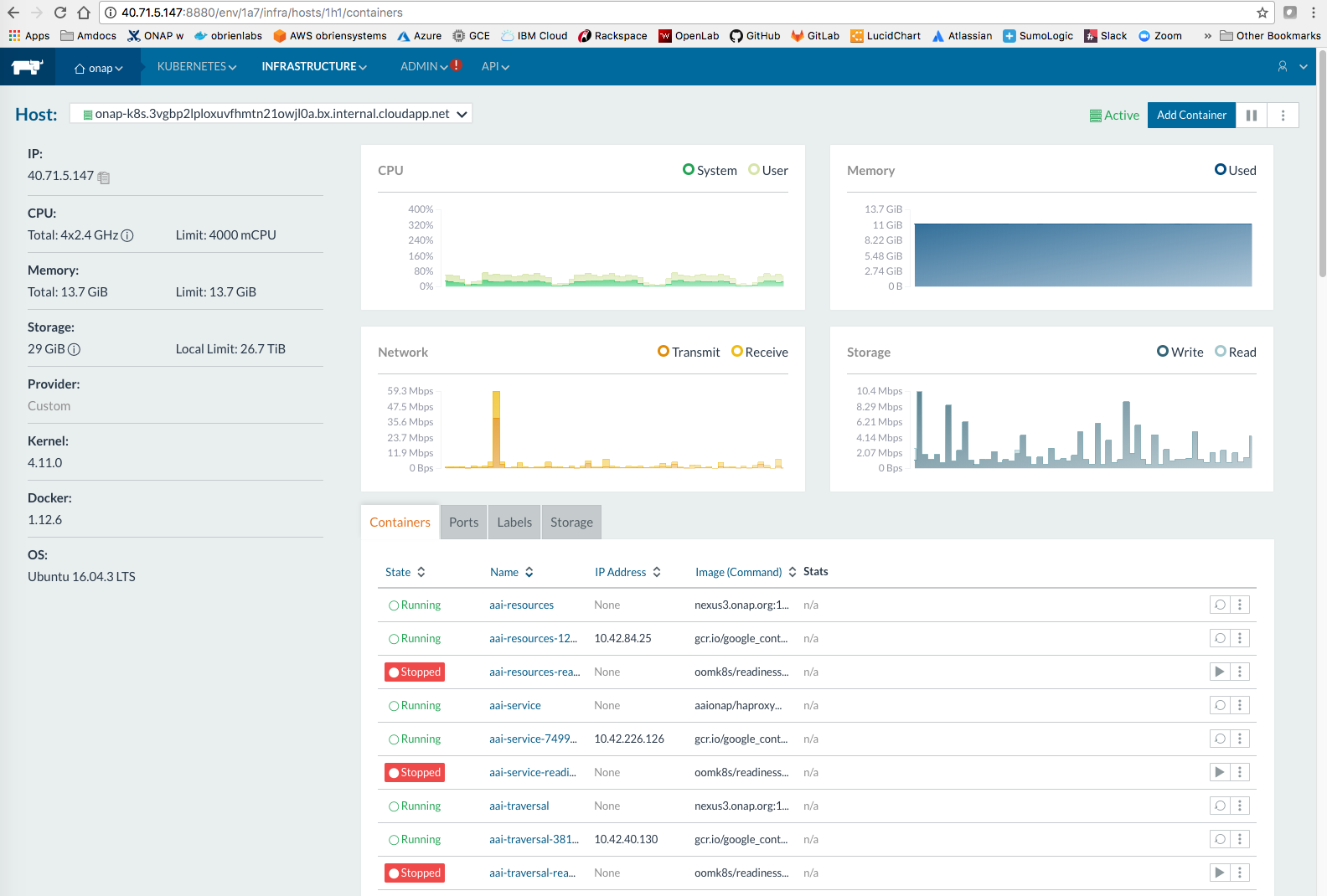

I have created an AMI on Amazon AWS under the following ID that has a reference 20170825 tag of ONAP 1.0 running on top of Rancher

ami-b8f3f3c3 : onap-oom-k8s-10

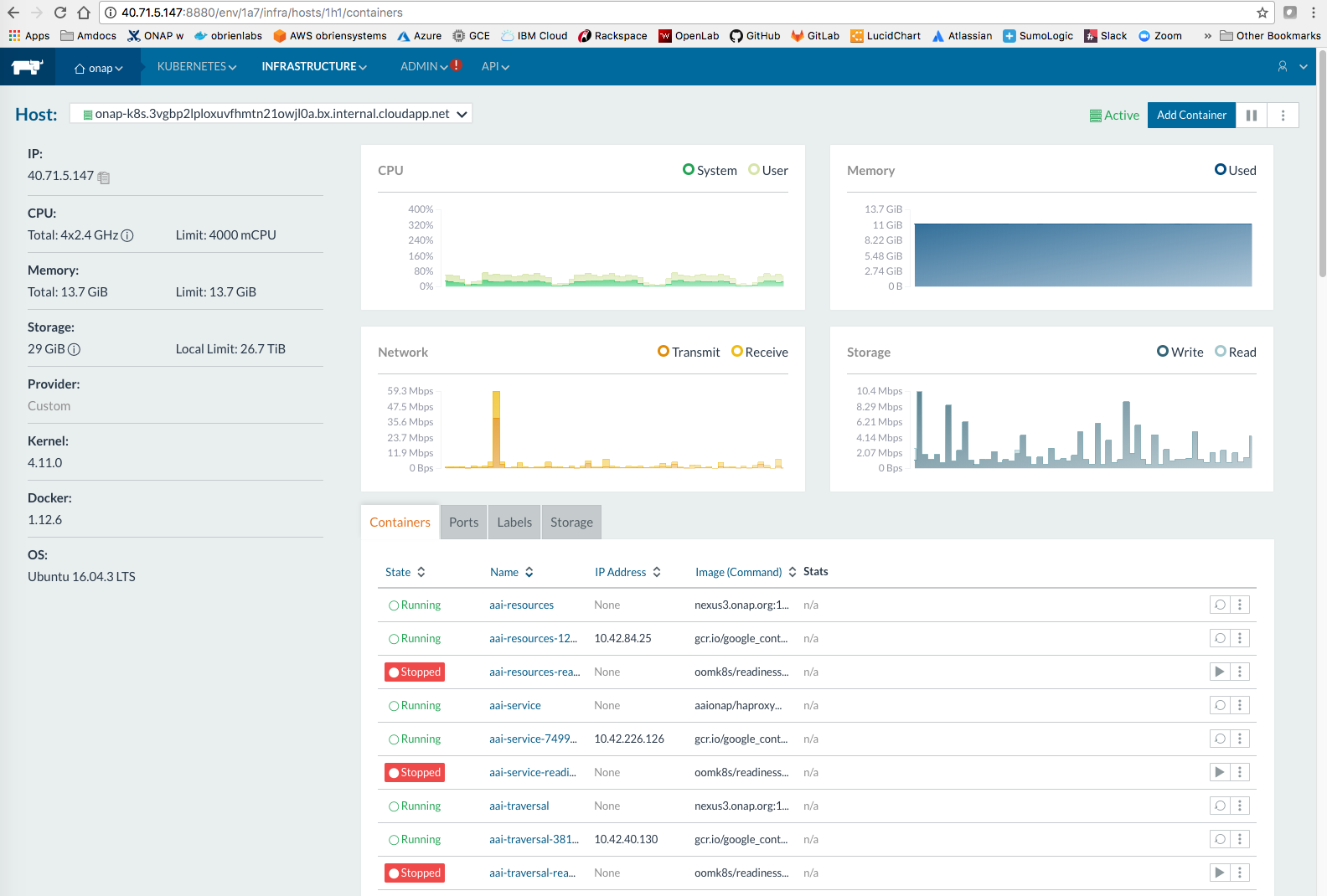

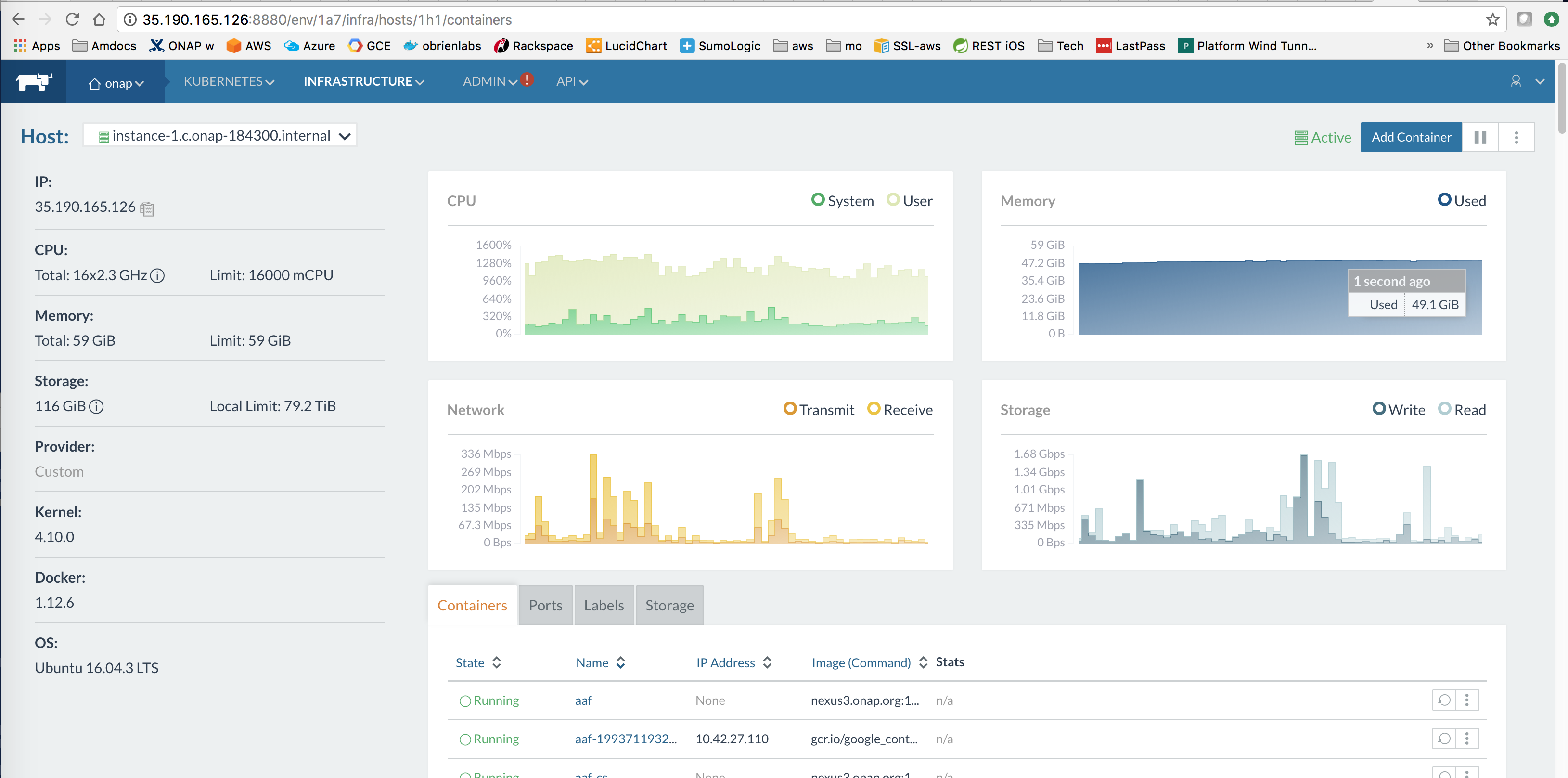

EIP 34.233.240.214 maps to http://dev.onap.info:8880/env/1a7/infra/hosts

A D2.2xlarge with 61G ram on the spot market https://console.aws.amazon.com/ec2sp/v1/spot/launch-wizard?region=us-east-1 at $0.16/hour for all of ONAP

Kubernetes pods may take 3-8 min to initialize, provided you preload the docker images

Workaround for the disk space error - even though we are running with a 1.9 TB NVMe SSD

https://github.com/kubernetes/kubernetes/issues/48703

Use a flavor that uses EBS, such as m4.4xlarge, which is OK - except for AAI right now

r4.2xlarge is the smallest and most cost effective 64g min instance to use for full ONAP deployment - it requires EBS stores. This is assuming 1 instance up at all times and a couple ad-hoc instances up a couple hours for testing/experimentation.

Resource Correspondence

| ID | Type | Parent | AWS | Openstack |

|---|---|---|---|---|

https://console.aws.amazon.com/cloudformation/designer/home?region=us-east-1#

Part of getting another infrastructure provider like AWS to work with ONAP will be in identifying and decoupling southbound logic from any particular cloud provider using an extensible plugin architecture on the SBI interface.

see Multi VIM/Cloud (5/11/17), VID project (5/17/17), Service Orchestrator (5/14/17), ONAP Operations Manager (5/10/17), ONAP Operations Manager / ONAP on Containers

Replace the DCAE Controller

Cloudify is Tosca based - https://github.com/cloudify-cosmo/cloudify-aws-plugin

https://istio.io/docs/setup/kubernetes/quick-start/

Waiting for the EC2 C5 instance types under the C620 chipset to arrive at AWS so we can experiment under EC2 Spot - http://technewshunter.com/cpus/intel-launches-xeon-w-cpus-for-workstations-skylake-sp-ecc-for-lga2066-41771/ https://aws.amazon.com/about-aws/whats-new/2016/11/coming-soon-amazon-ec2-c5-instances-the-next-generation-of-compute-optimized-instances/

http://docs.aws.amazon.com/cli/latest/userguide/cli-install-macos.html

use

curl "https://s3.amazonaws.com/aws-cli/awscli-bundle.zip" -o "awscli-bundle.zip"

unzip awscli-bundle.zip

sudo ./awscli-bundle/install -i /usr/local/aws -b /usr/local/bin/aws

aws --version

aws-cli/1.11.170 Python/2.7.13 Darwin/16.7.0 botocore/1.7.28

|

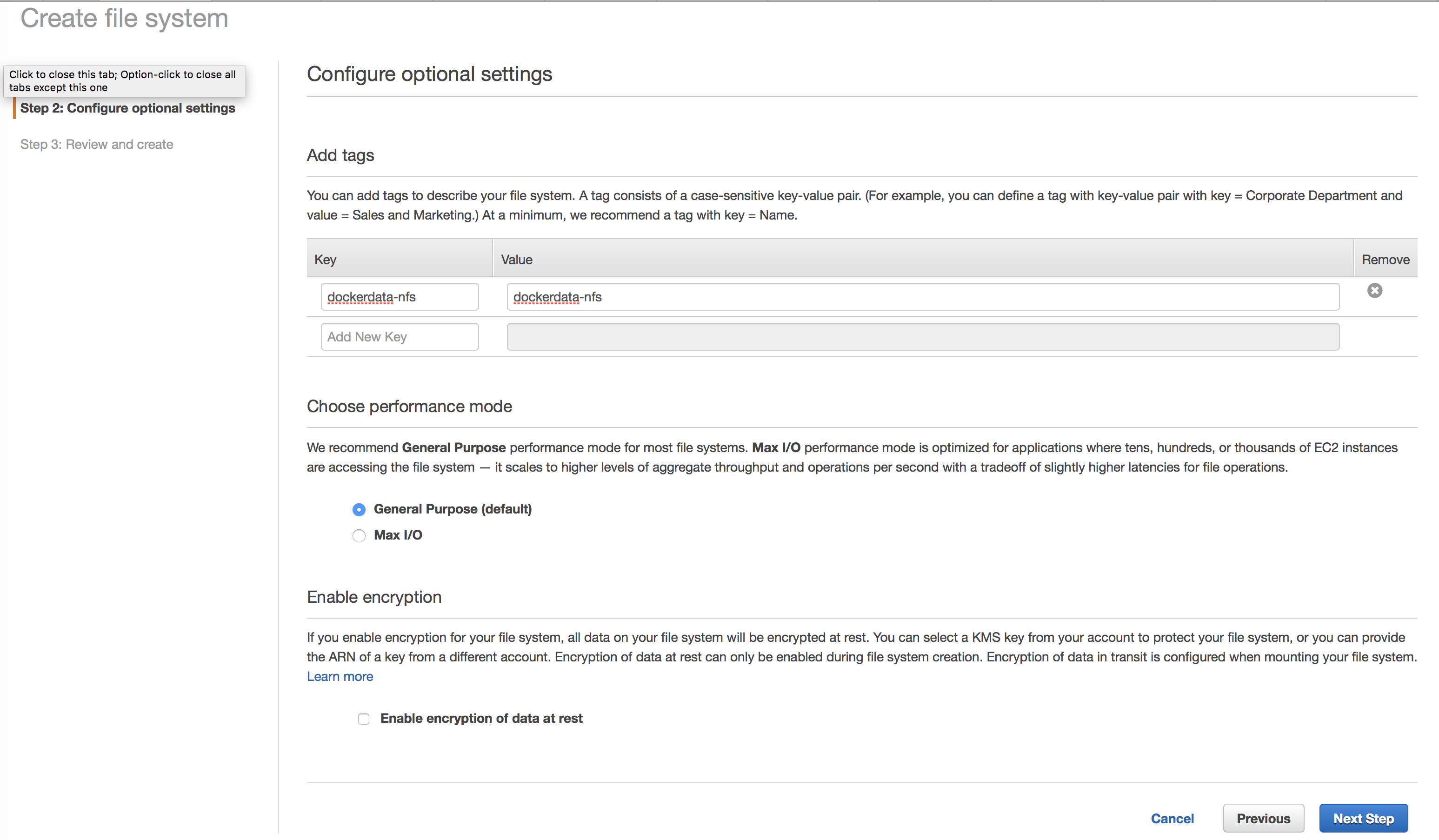

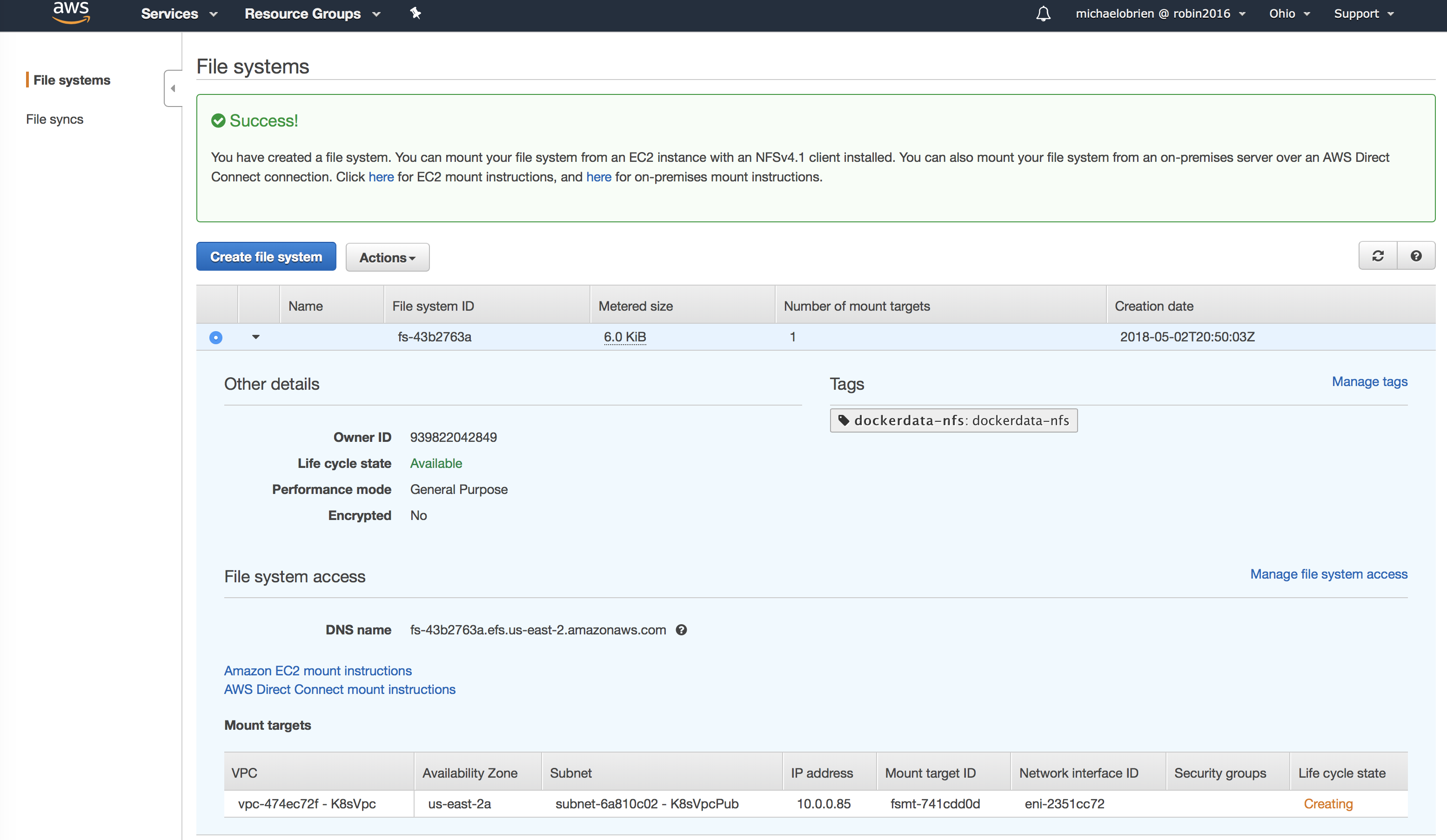

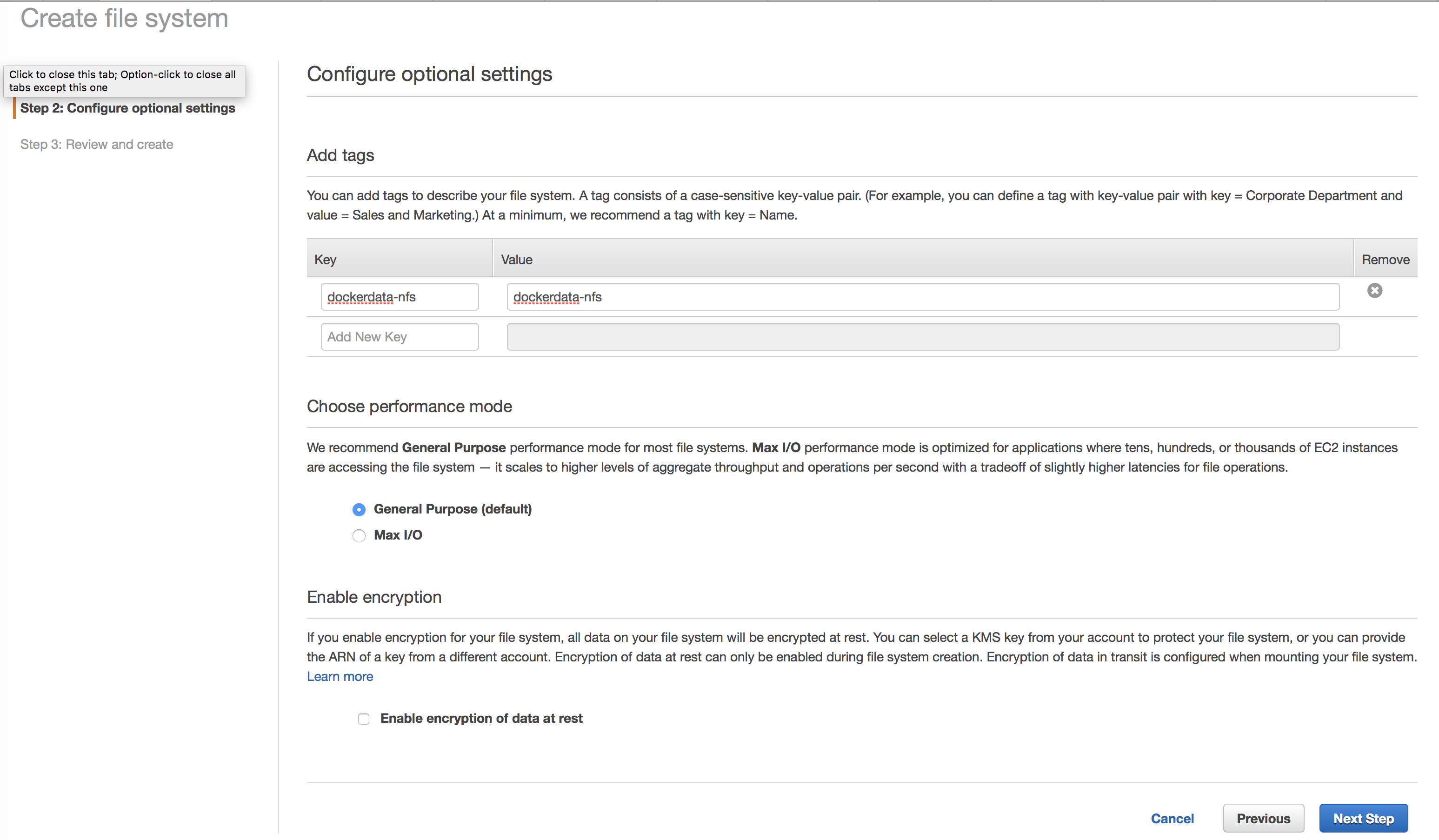

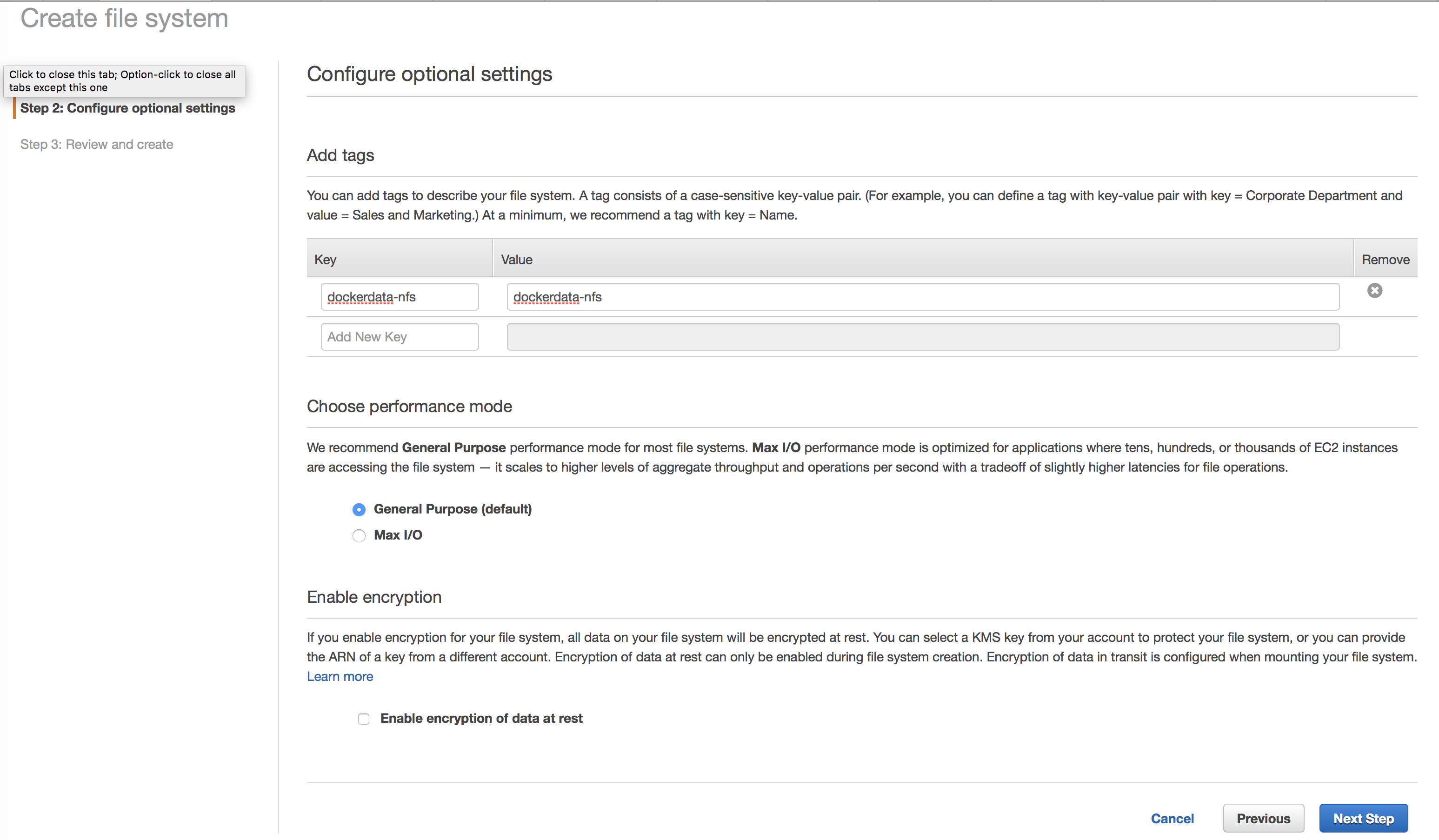

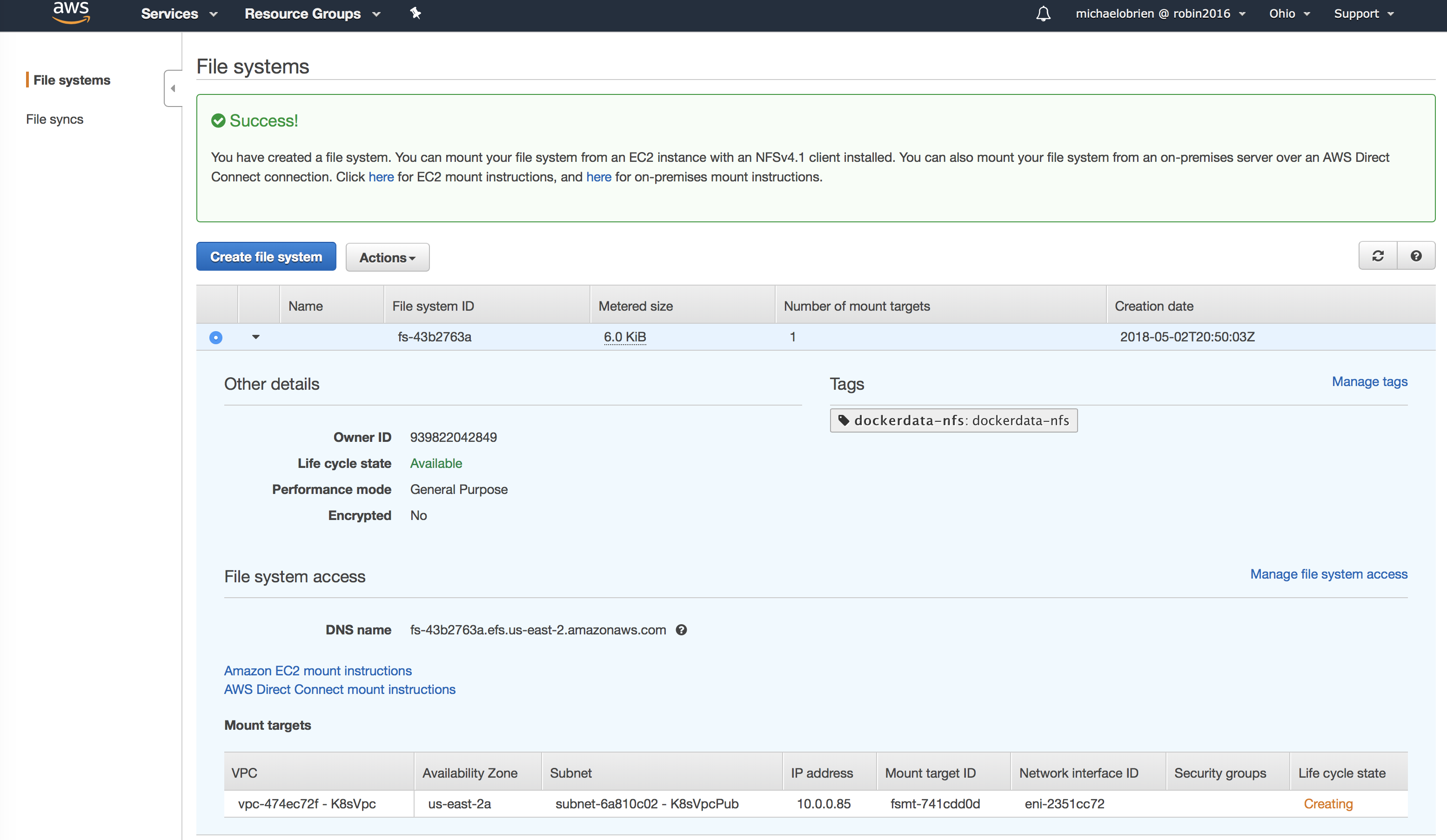

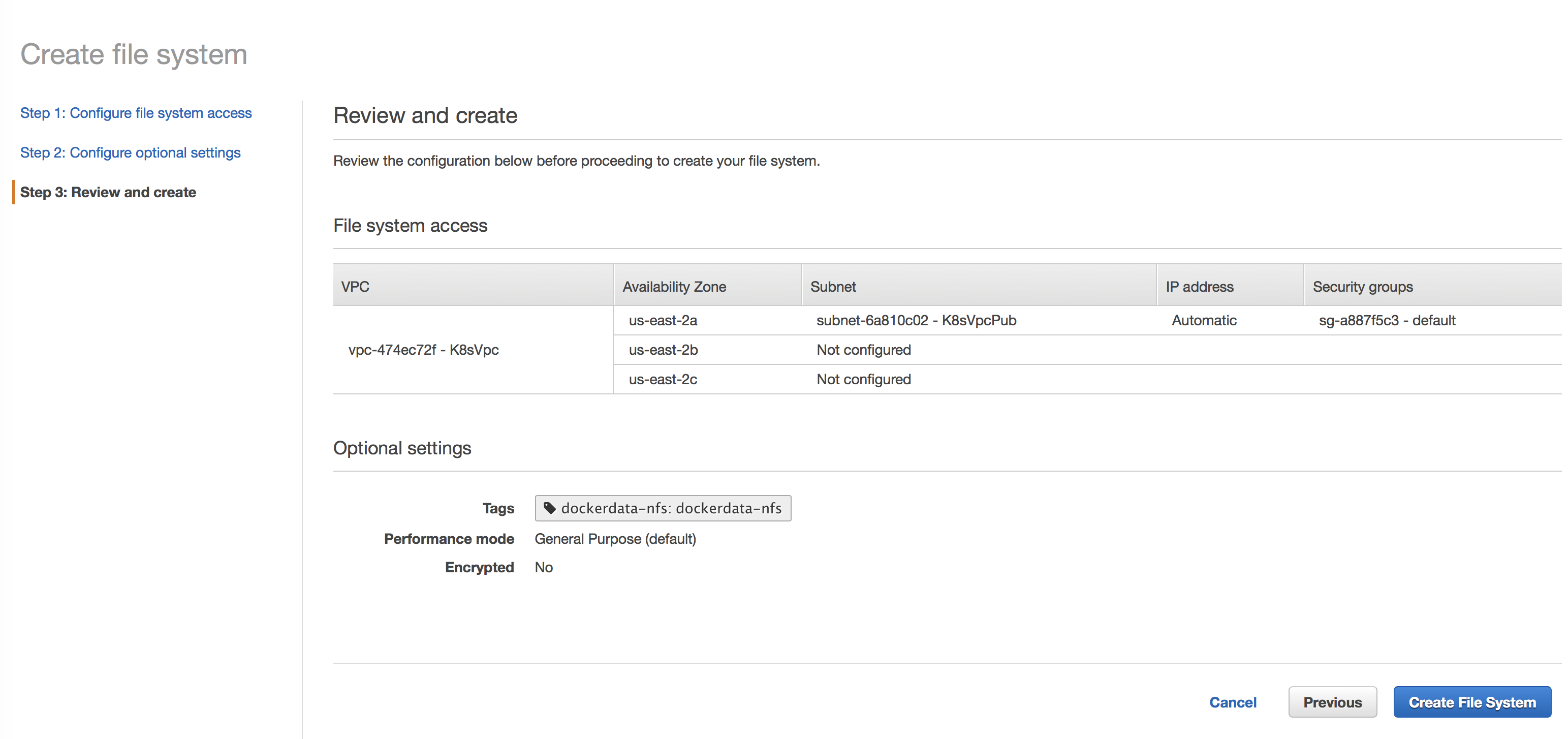

You need an NFS share between the VMs in your Kubernetes cluster - an Elastic File System share will wrap NFS

"From the NFS wizard"

Setting up your EC2 instance

Mounting your file system

If you are unable to connect, see our troubleshooting documentation.

https://docs.aws.amazon.com/efs/latest/ug/mounting-fs.html

Automated

Manual

ubuntu@ip-10-0-0-66:~$ sudo apt-get install nfs-common

ubuntu@ip-10-0-0-66:~$ cd /

ubuntu@ip-10-0-0-66:~$ sudo mkdir /dockerdata-nfs

root@ip-10-0-0-19:/# sudo mount -t nfs4 -o nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2 fs-43b2763a.efs.us-east-2.amazonaws.com:/ /dockerdata-nfs

# write something on one vm - and verify it shows on another

ubuntu@ip-10-0-0-8:~$ ls /dockerdata-nfs/

test.sh

|
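The mount target follows the pattern `<file-system-id>.efs.<region>.amazonaws.com`, so the manual mount line can be generated for any share. A minimal sketch that only prints the command; `efs_mount_cmd` is a hypothetical helper, and the `/dockerdata-nfs` default mirrors the mount point used on this page.

```shell
# Print the NFS mount command for an EFS share.
# Arguments: EFS file system id, AWS region, optional mount point.
efs_mount_cmd() {
  fs_id="$1"
  region="$2"
  mount_point="${3:-/dockerdata-nfs}"
  printf 'sudo mount -t nfs4 -o nfsvers=4.1,rsize=1048576,wsize=1048576,hard,timeo=600,retrans=2 %s.efs.%s.amazonaws.com:/ %s\n' \
    "$fs_id" "$region" "$mount_point"
}

# Usage:
#   efs_mount_cmd fs-43b2763a us-east-2
```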

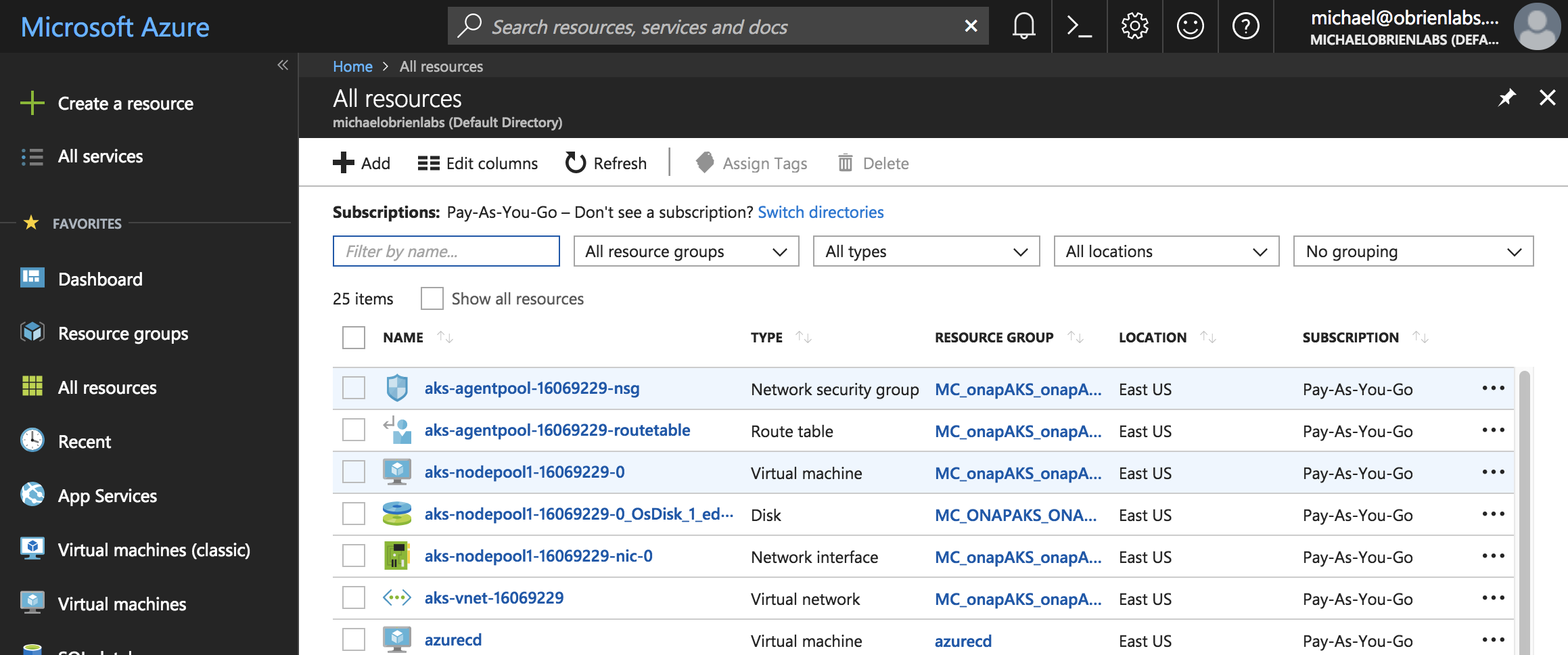

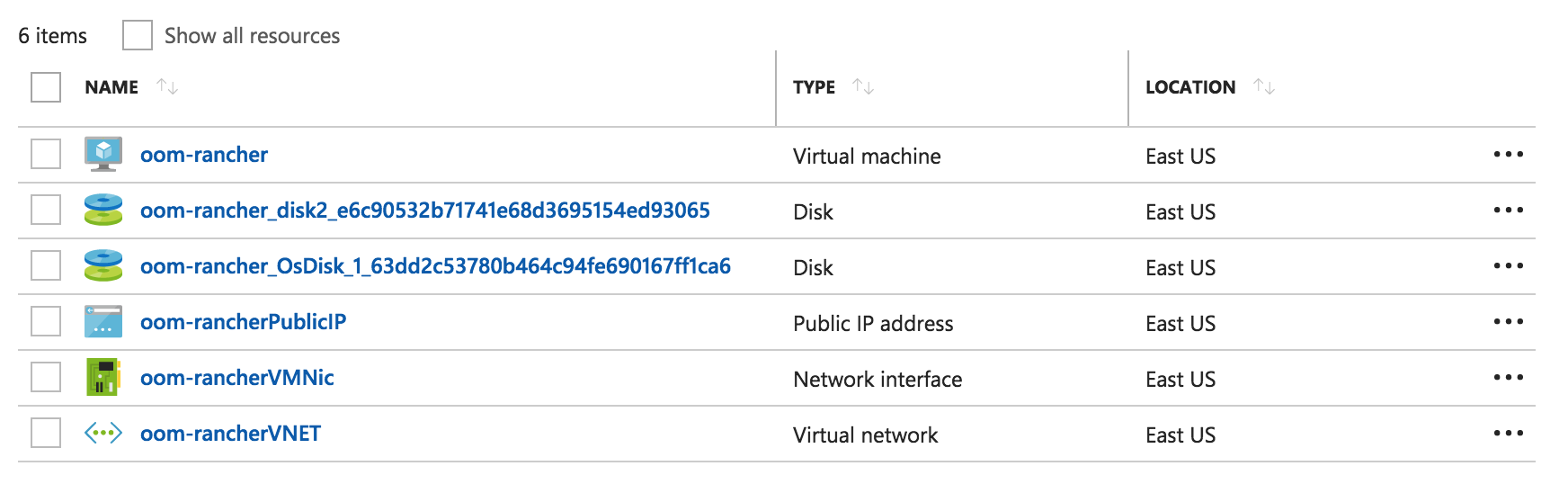

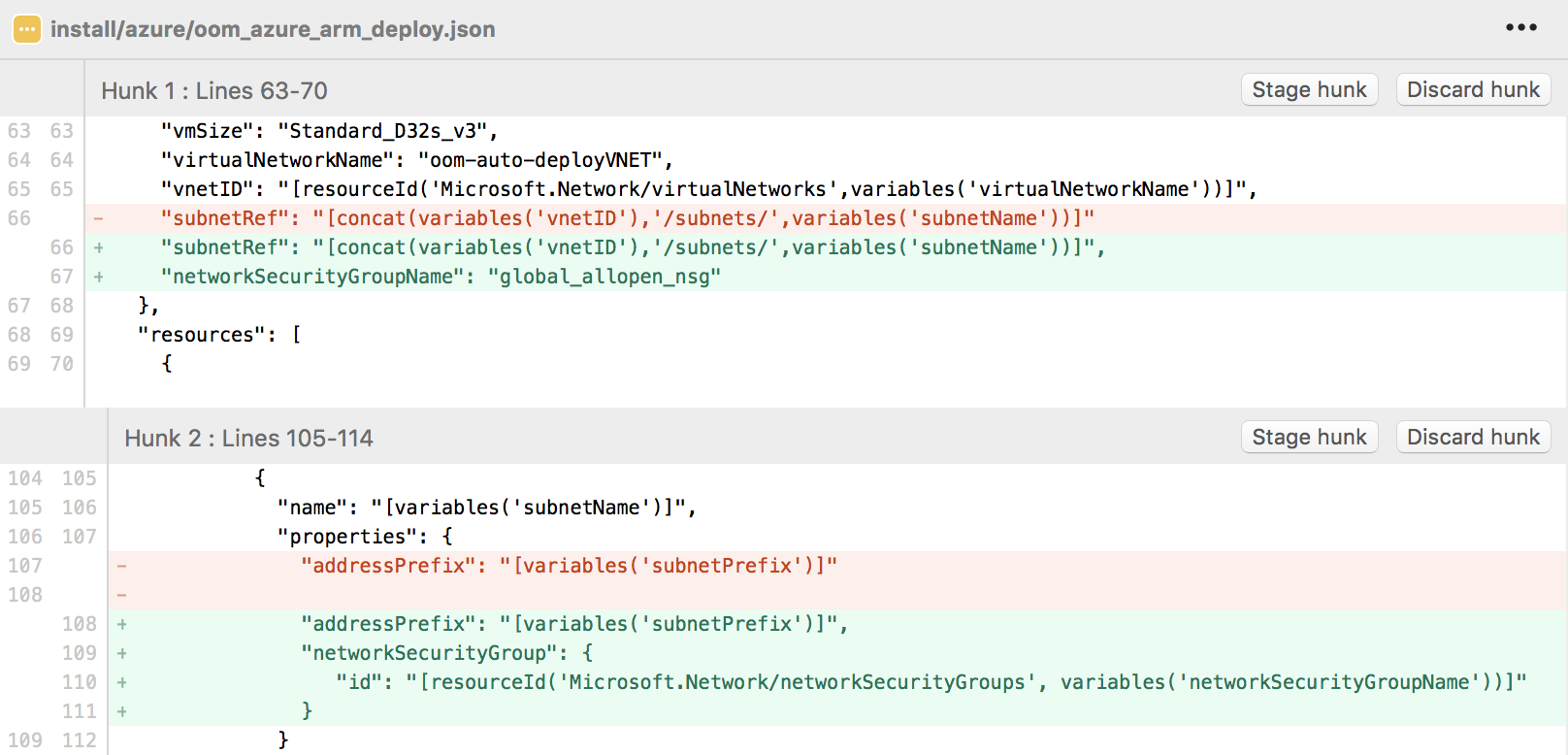

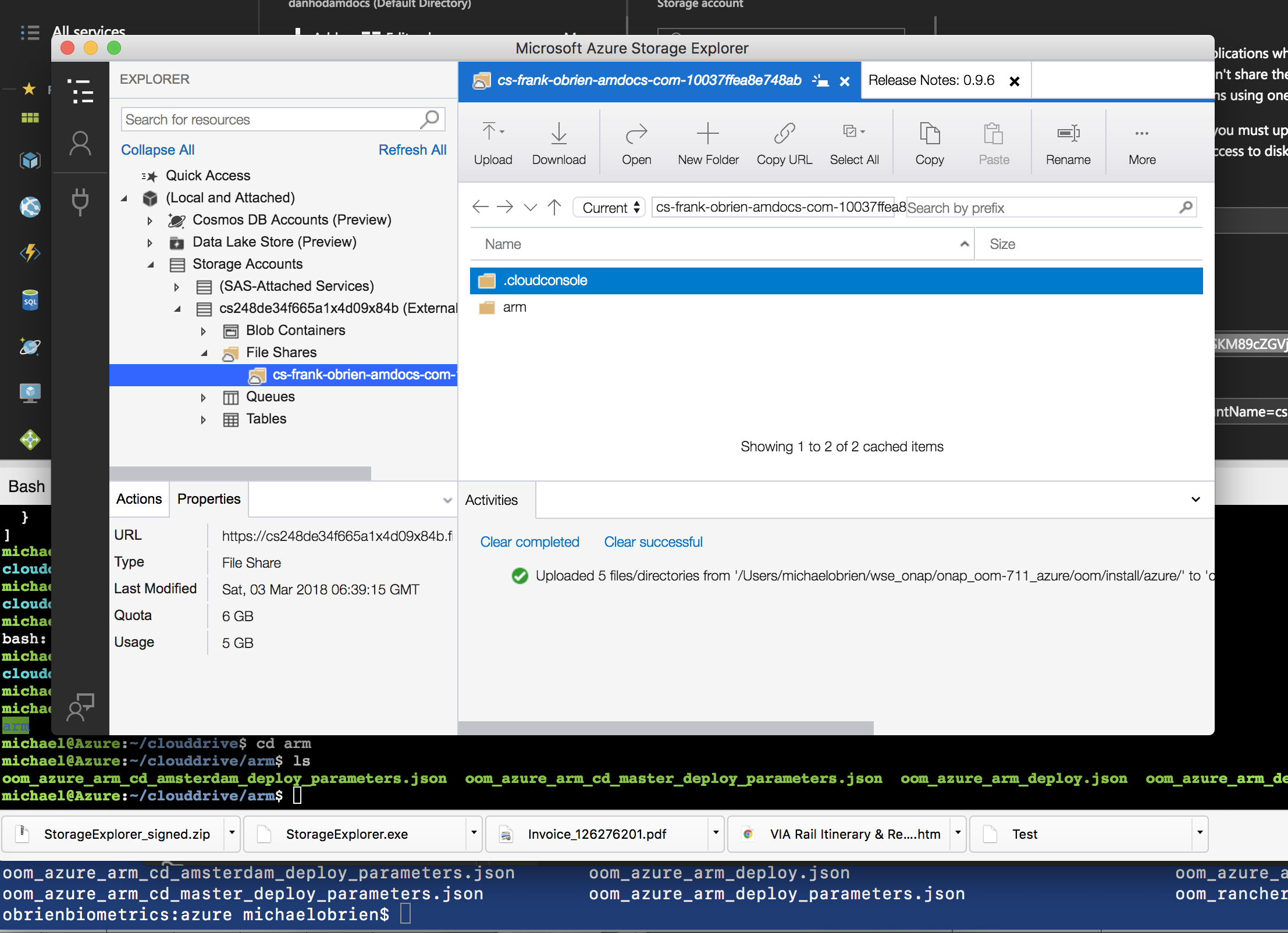

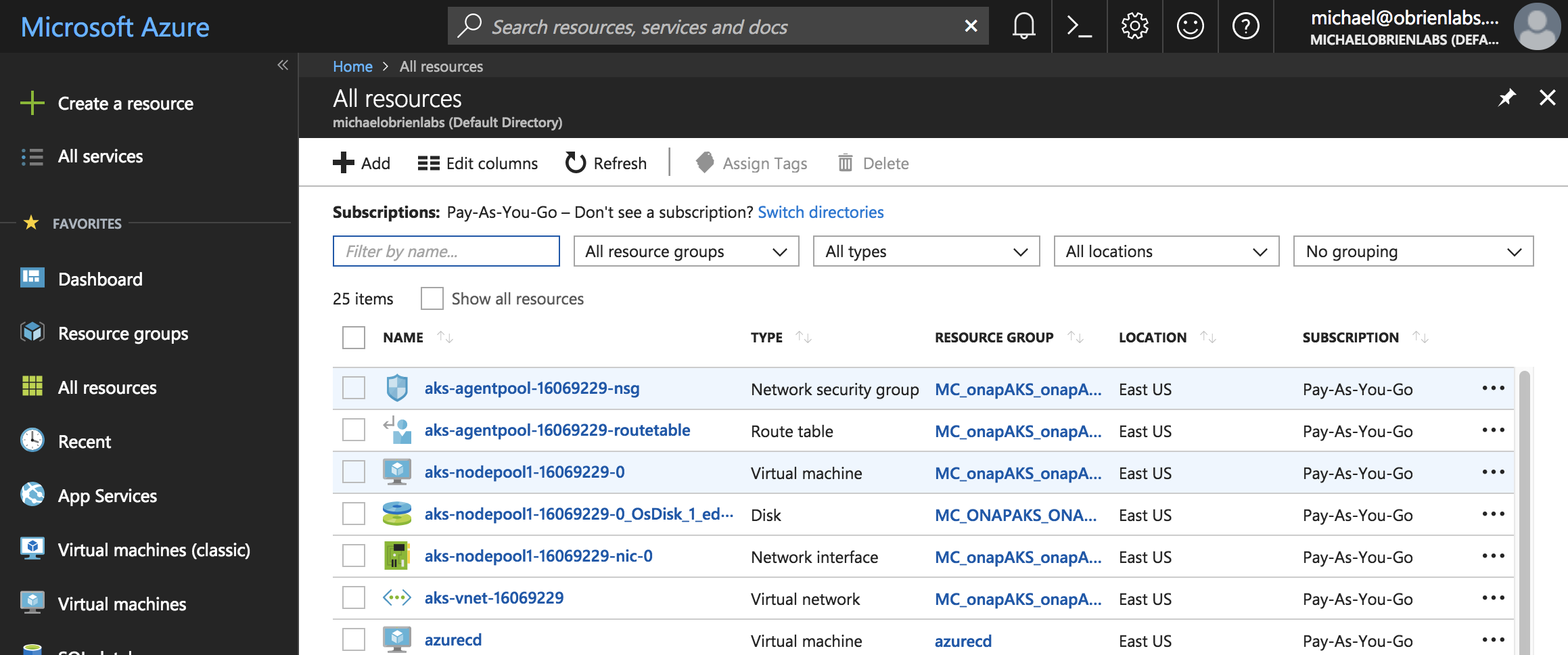

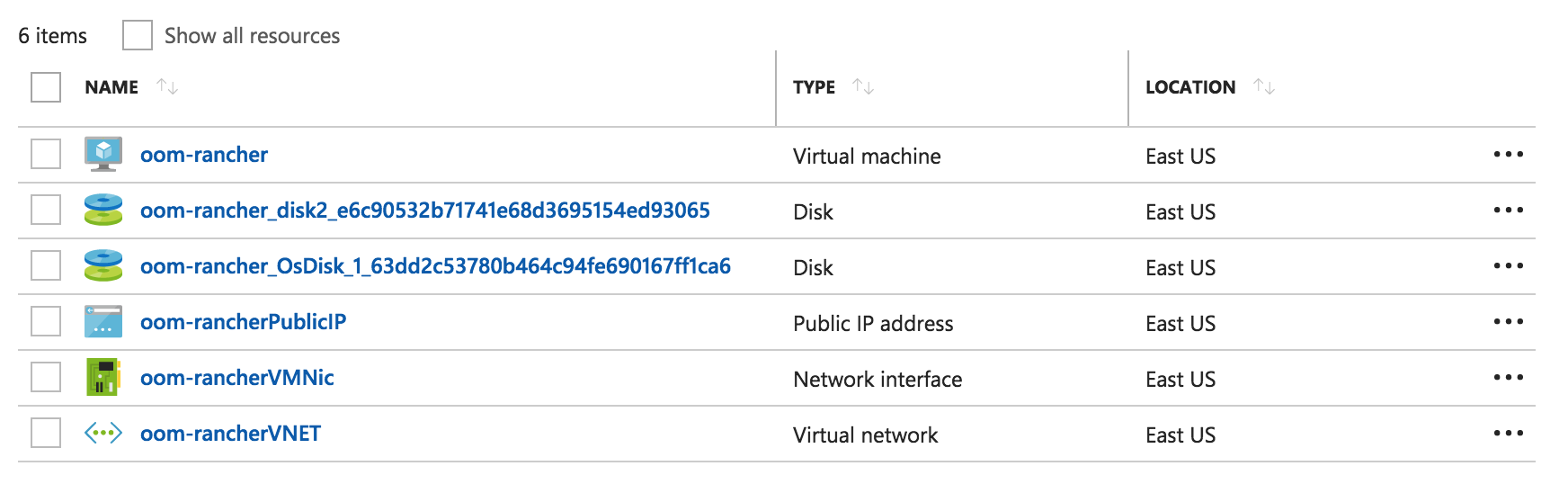

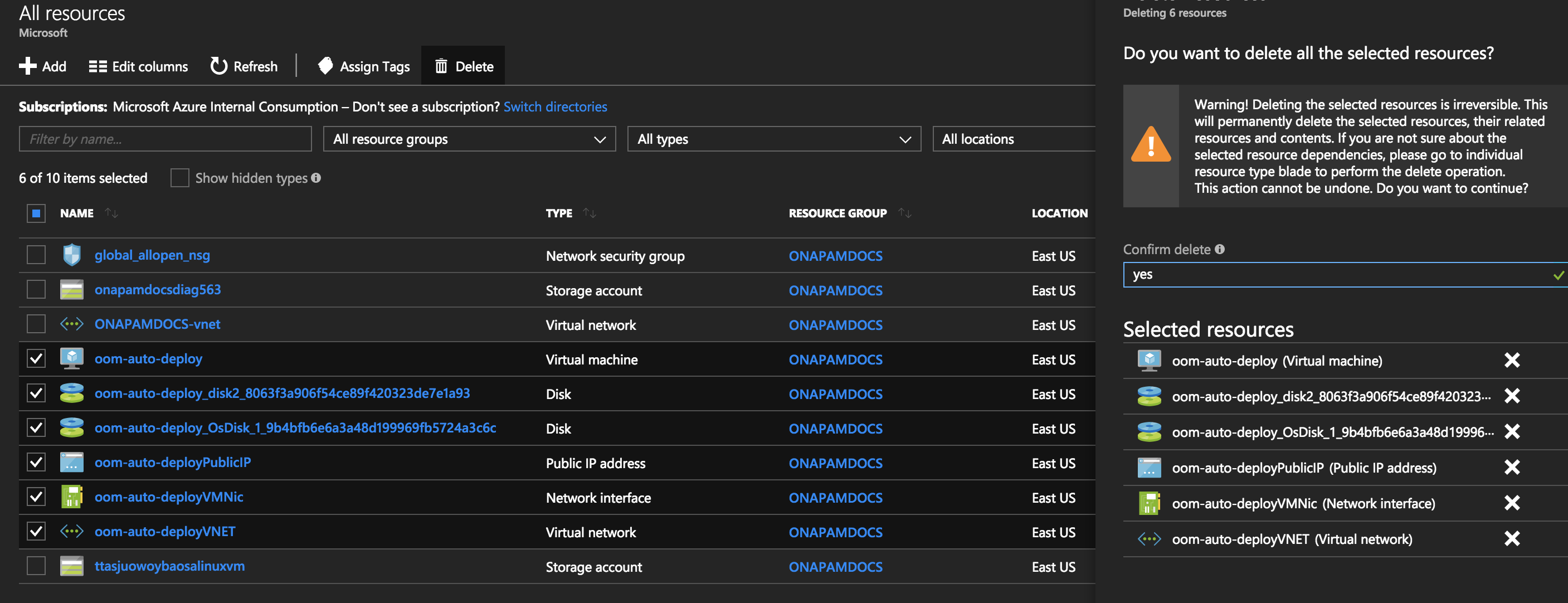

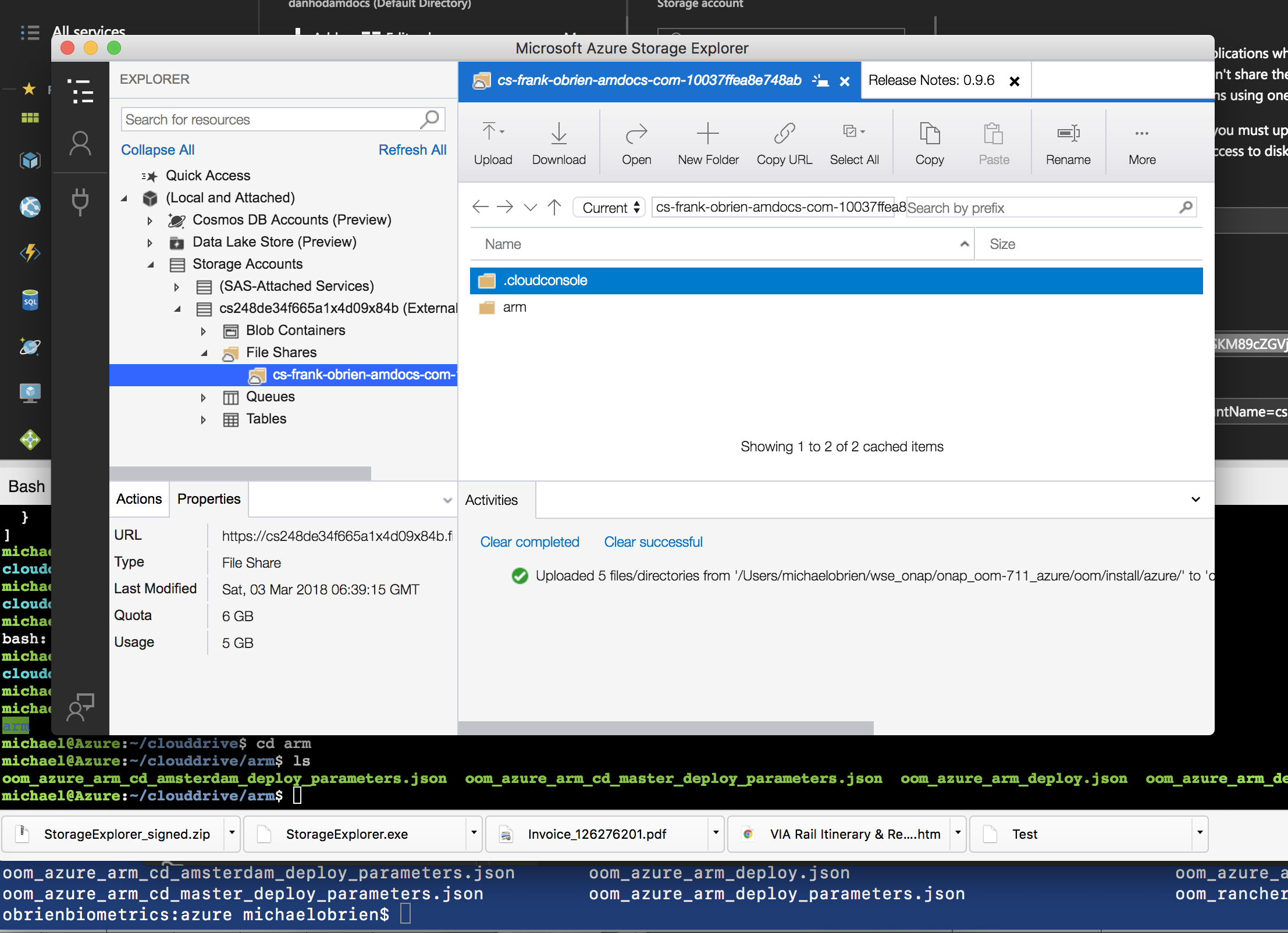

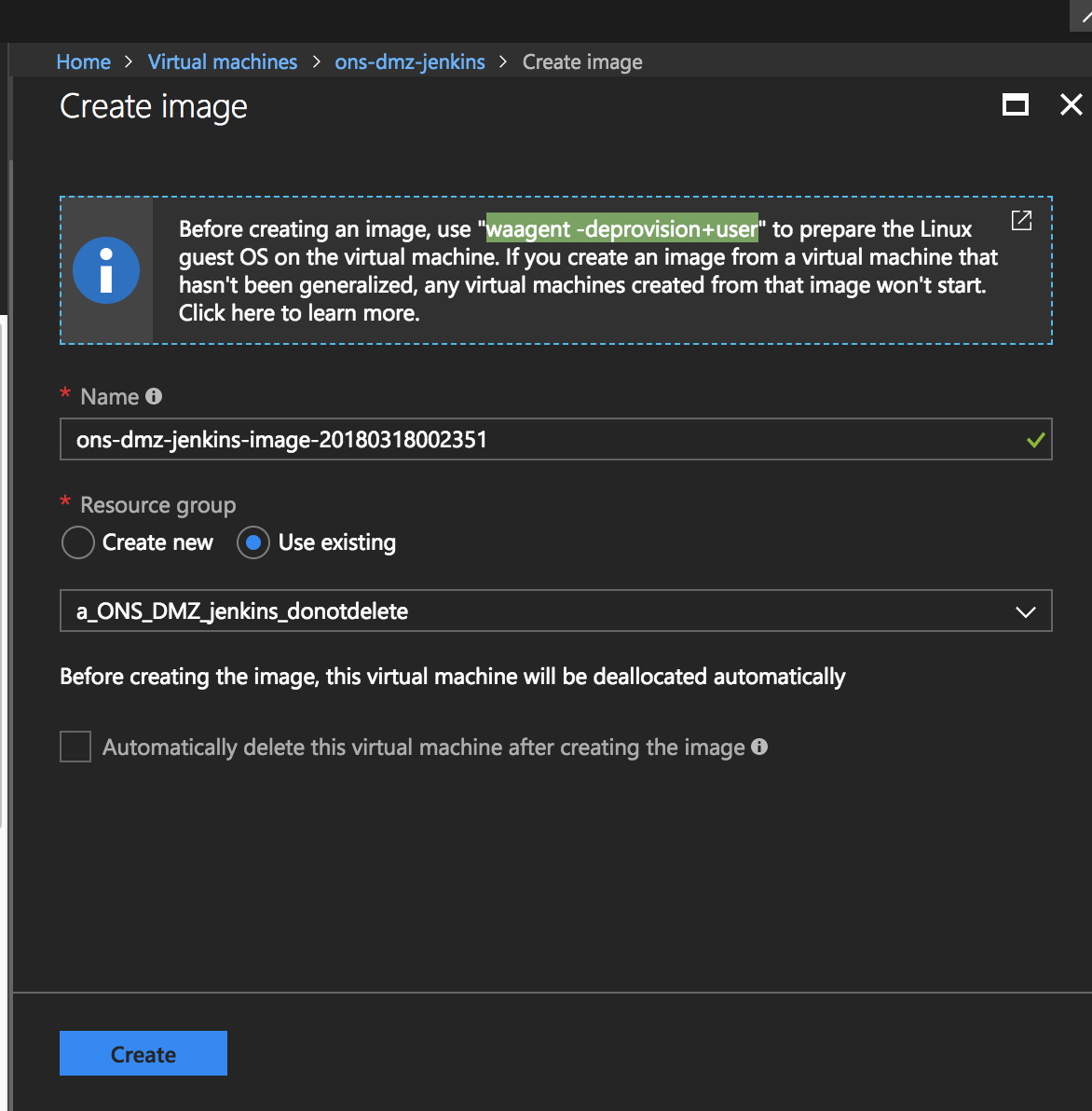

Subscription Sponsor: (1) Microsoft

Deliverables are deployment scripts, arm/cli templates for various deployment scenarios (single, multiple, federated servers)

In review

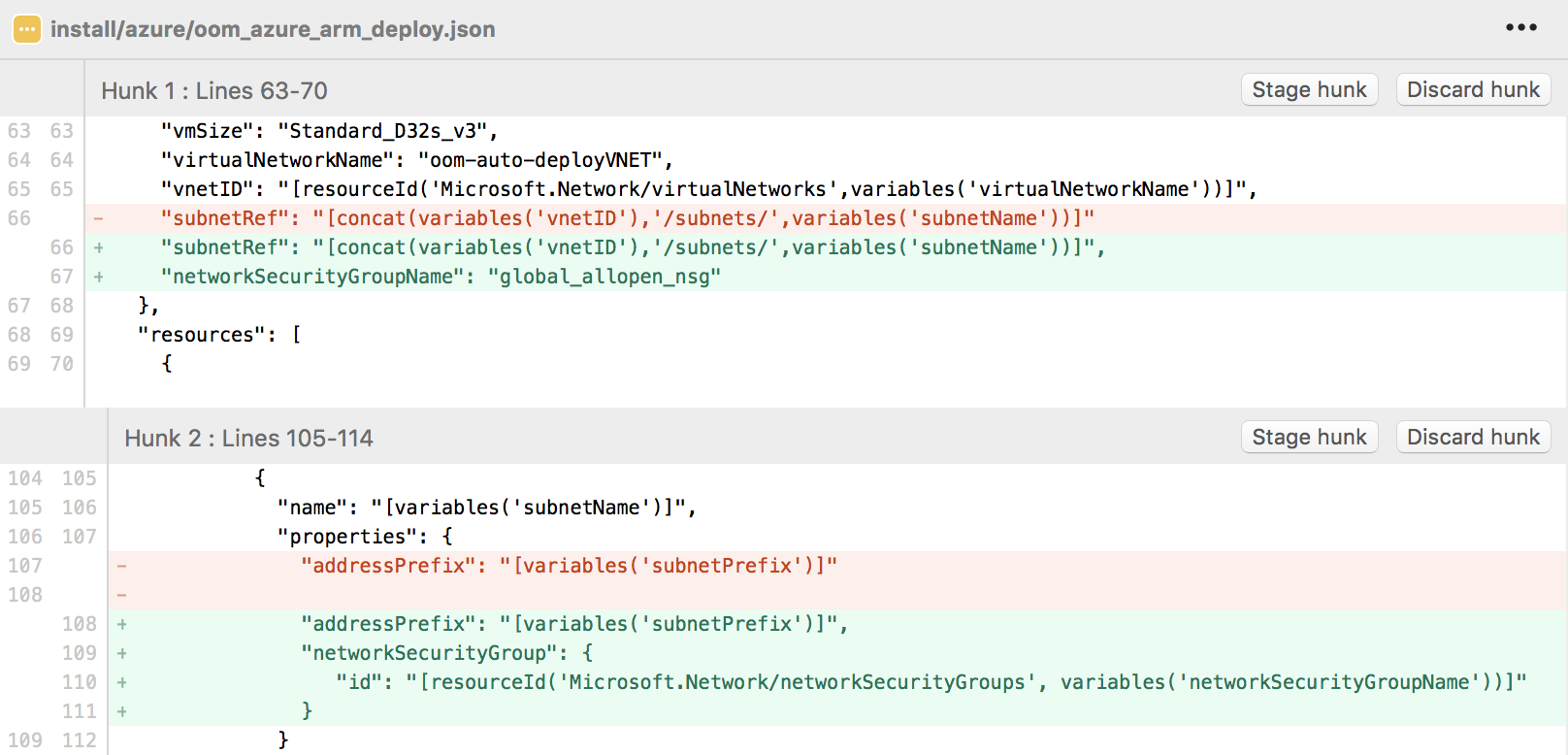

Automation is currently only written for a single VM that hosts both the rancher server and the deployed onap pods. Use the ARM template below to deploy your VM and provision it (adjust your config parameters)

There are two choices: run the single oom_deployment.sh ARM wrapper, or use it to bring up an empty VM and run oom_entrypoint.sh manually. Once the VM comes up, the oom_entrypoint.sh script runs; it downloads the oom_rancher_setup.sh script to set up docker, rancher, kubernetes and helm, and then runs the cd.sh script to bring up onap based on your values.yaml config by running helm install on it.

# login to az cli, wget the deployment script, arm template and parameters file - edit the parameters file (dns, ssh key ...) and run the arm template

wget https://git.onap.org/logging-analytics/plain/deploy/azure/oom_deployment.sh

wget https://git.onap.org/logging-analytics/plain/deploy/azure/_arm_deploy_onap_cd.json

wget https://git.onap.org/logging-analytics/plain/deploy/azure/_arm_deploy_onap_cd_z_parameters.json

# either run the entrypoint which creates a resource template and runs the stack - or do those two commands manually

./oom_deployment.sh -b master -s azure.onap.cloud -e onap -r a_auto-youruserid_20180421 -t arm_deploy_onap_cd.json -p arm_deploy_onap_cd_z_parameters.json

# wait for the VM to finish in about 75 min or watch progress by ssh'ing into the vm and doing

root@ons-auto-201803181110z: sudo tail -f /var/lib/waagent/custom-script/download/0/stdout

# if you wish to run the oom_entrypoint script yourself - edit/break the cloud init section at the end of the arm template and do it yourself below

# download and edit values.yaml with your onap preferences and openstack tenant config

wget https://jira.onap.org/secure/attachment/11414/values.yaml

# download and run the bootstrap and onap install script, the -s server name can be an IP, FQDN or hostname

wget https://git.onap.org/logging-analytics/plain/deploy/rancher/oom_entrypoint.sh

chmod 777 oom_entrypoint.sh

sudo ./oom_entrypoint.sh -b master -s devops.onap.info -e onap

# wait 15 min for rancher to finish, then 30-90 min for onap to come up

# 20181015 - delete the deployment, recreate the onap environment in rancher with the template adjusted for more than the default 110 container limit - by adding --max-pods=500

# then redo the helm install

|
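The 110 figure is the kubelet default for pods per node, which is why a single-VM install needs `--max-pods=500`. A rough check of whether a planned deployment crosses that limit can be sketched as below; `exceeds_pod_limit` is a hypothetical helper and it assumes pods spread evenly across nodes, which the scheduler does not guarantee.

```shell
# Succeed (exit 0) when the average pods per node exceeds the per-node limit.
# Arguments: total pods, node count, optional limit (default 110).
exceeds_pod_limit() {
  pods="$1"
  nodes="$2"
  limit="${3:-110}"
  per_node=$(( (pods + nodes - 1) / nodes ))   # ceiling division
  [ "$per_node" -gt "$limit" ]
}

# Usage:
#   if exceeds_pod_limit 136 1; then echo "raise --max-pods"; fi
```

With the 136 pods mentioned on this page, one VM is over the default while a 4 node cluster is well under it.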

see https://jira.onap.org/secure/attachment/11455/oom_openstack.yaml and https://jira.onap.org/secure/attachment/11454/oom_openstack_oom.env

see https://git.onap.org/logging-analytics/tree/deploy/rancher/oom_entrypoint.sh

customize your template (true/false for any components, docker overrides etc...)

https://jira.onap.org/secure/attachment/11414/values.yaml

Run oom_entrypoint.sh after you have verified values.yaml - it will run both scripts below for you - a single node kubernetes setup running what you configured in values.yaml will be up in 50-90 min. If you just want to configure your VM without bringing up ONAP - comment out the cd.sh line and run that separately.

see wget https://git.onap.org/logging-analytics/plain/deploy/rancher/oom_rancher_setup.sh

see wget https://git.onap.org/logging-analytics/plain/deploy/cd.sh

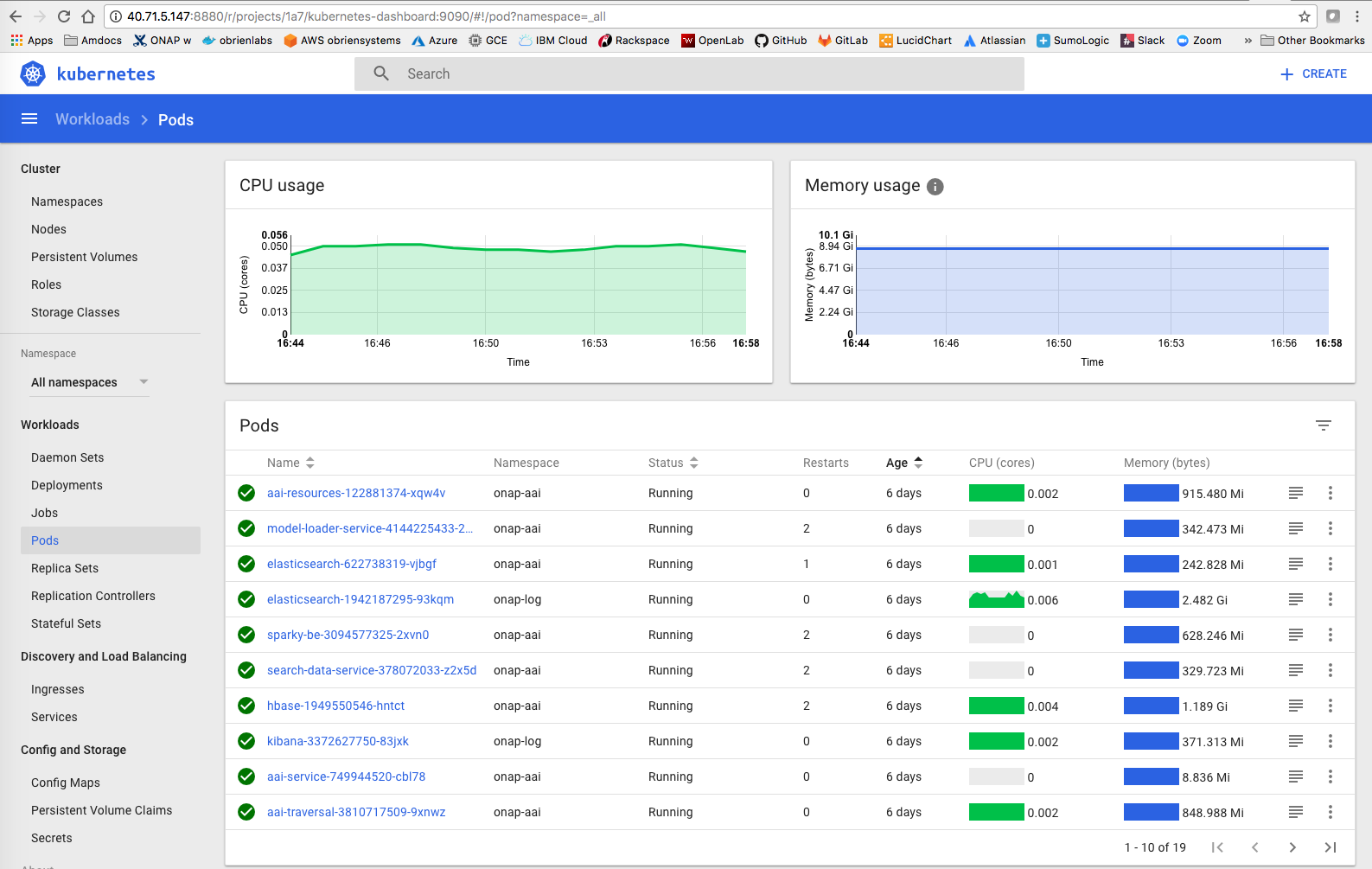

Verify your system is up by doing a kubectl get pods --all-namespaces and checking the 8880 port to bring up the rancher or kubernetes gui.
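Since the system can take 50-90 min to come up, the check is easier as a polling loop than as a repeated manual command. The sketch below is a generic retry helper under stated assumptions: `wait_until` is a hypothetical name, and the 15 second interval in the usage comment simply mirrors the interval cd.sh reports in its progress lines.

```shell
# Retry a command until it succeeds or the tries run out.
# Arguments: max tries, seconds between tries, then the command to run.
# Returns 0 on the first success, 1 if every try failed.
wait_until() {
  max_tries="$1"
  interval="$2"
  shift 2
  try=1
  while [ "$try" -le "$max_tries" ]; do
    if "$@"; then
      return 0
    fi
    sleep "$interval"
    try=$((try + 1))
  done
  return 1
}

# Usage (360 tries x 15 s = 90 min):
#   all_pods_ready() { ! kubectl get pods --all-namespaces | grep -Eq '0/|1/2'; }
#   wait_until 360 15 all_pods_ready && echo "onap is up"
```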

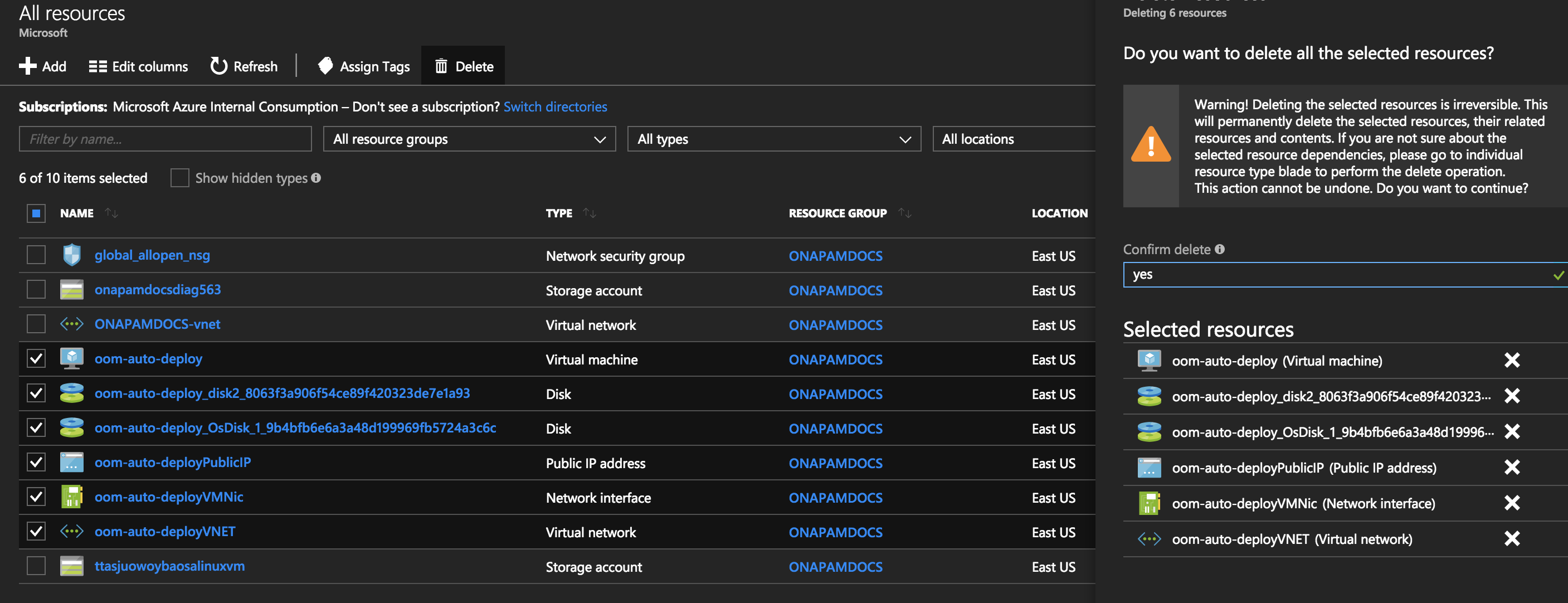

https://portal.azure.com/#blade/HubsExtension/Resources/resourceType/Microsoft.Resources%2Fresources

see

az group create --name onap_eastus --location eastus |

az group deployment create --resource-group onap_eastus --template-file oom_azure_arm_deploy.json --parameters @oom_azure_arm_deploy_parameters.json |

The oom_entrypoint.sh script will be run as a cloud-init script on the VM - see

which runs

see

kubectl get pods --all-namespaces

# raise/lower onap components from the installed directory if using the oneclick arm template

# amsterdam only

root@ons-auto-master-201803191429z:/var/lib/waagent/custom-script/download/0/oom/kubernetes/oneclick# ./createAll.bash -n onap

|

Azure subscription

https://docs.microsoft.com/en-us/cli/azure/install-azure-cli?view=azure-cli-latest

Install homebrew first (reinstall if you are on the latest OSX 10.13.2 https://github.com/Homebrew/install because of 3718)

Will install Python 3.6

$ brew update

$ brew install azure-cli

|

https://docs.microsoft.com/en-us/cli/azure/get-started-with-azure-cli?view=azure-cli-latest

$ az login

To sign in, use a web browser to open the page https://aka.ms/devicelogin and enter the code E..D to authenticate.

[ {

"cloudName": "AzureCloud",

"id": "f4...b",

"isDefault": true,

"name": "Pay-As-You-Go",

"state": "Enabled",

"tenantId": "bcb.....f",

"user": {

"name": "michael@....org",

"type": "user"

}}] |

https://docs.microsoft.com/en-us/cli/azure/install-azure-cli-apt?view=azure-cli-latest