Residential Broadband vCPE

Amdocs, AT&T, Huawei, Intel, Orange, Wind River

Technical Report 317 (TR-317) from the Broadband Forum specifies a "Network Enhanced Residential Gateway" (NERG) that service providers (SPs) can use to deploy residential broadband services, including High Speed Internet access, IPTV and VoIP. It defines a new device type, the BRG (Bridged Residential Gateway), and a functionality split between what the BRG runs and what a virtual function instantiated in the SP's cloud, called the vG, runs. This split allows for the introduction of new services that are not possible with the existing all-physical residential gateway. It also reduces operational cost, because fewer changes and truck rolls to the customer residence are needed.

Key for this use case is:

The full details of this new residential gateway can be found in the BBF report. For the ONAP Amsterdam Release (Nov 2017), a subset of the full functionality of TR-317 will be included in the VNFs implementing the vCPE use case, but the ONAP behavior for the use case will be mostly what would be expected from ONAP in a production network.

The diagram above depicts the R1 vCPE use case (with the exception of the home networks on the left, which will not be part of the tested use case). The IP addresses and VLAN numbers are for illustration purposes; the actual values may be different in the final setup. In particular, IPv6 addresses may be used in place of the IPv4 10.x.x.x addresses shown above.

| VNFs | Code / Vendor | Comments |
|---|---|---|
| vBNG | Open Source: | BNG: Broadband Network Gateway. Includes subscriber session management, traffic aggregation and routing, and policy / QoS. |
| vG_MUX | Open Source: | Virtual Gateway Multiplexer: the entry point of the vG hosting interface. |
| vG, including DHCP | Open Source: DHCP Server source: ISC | Virtual Gateway. Provides basic routing capabilities and service selection for residential services. |
| BRG Emulator | VPP + modification by Intel | Bridged Residential Gateway Emulator. VPP-based software used as the BRG emulator for the use case. Goal for future releases: monitoring of metrics and alarms of the BRG and automated action. Example policy based on monitoring: automatic reboot of the BRG because of high load (CPU). |
| vAAA | Open Source: | Access authentication and authorization. |
| vDHCP | Open Source: | Instance 1: BRG WAN IP address assignment. Instance 2: vG WAN IP address assignment. |
| vDNS | Open Source: | Domain name resolution. |

NFVI+VIM:

| NFVI+VIMs | Code / Vendor | NFVI+VIM requirement |
|---|---|---|
| OpenStack | Vendor: | NFV Infrastructure and Virtual Infrastructure Management system that is compatible with ONAP Release 1. |

The assumed order of operations for the run time use case experience is as follows:

The newly created tunnel interface will be used for all communication between the BRG Emulator and its paired vG. Below is the frame format of packets sent inside this tunnel:

VXLAN Packet Format

Fields description going from right to left in the figure above:
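For reference, here is a minimal sketch of the generic 8-byte VXLAN header as defined in RFC 7348. It is not a reconstruction of the figure above, and the tunnel used in this use case may carry additional fields. The function names are hypothetical and chosen only for illustration.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # the "I" flag defined in RFC 7348


def pack_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) followed by 24 reserved bits.
    # Word 2: VNI (24 bits) followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def unpack_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN header."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8
```

The header is followed by the inner Ethernet frame, and the whole payload is carried over UDP between the two tunnel endpoints (VTEPs).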

The role of vG_MUX is to terminate VxLAN tunnels (typically utilizing public IP addresses) and relay packets to/from local vGs over newly created VxLAN tunnels. To this end, vG_MUX maintains an XC (cross-connect) table with entries of the following format: <Remote VTEP IP, VNI>. vG_MUX uses this table as follows:

BRG Emulator → vG direction:

vG → BRG Emulator direction:
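As a rough illustration of the cross-connect idea, the sketch below pairs a BRG-facing tunnel with a vG-facing tunnel, each identified by <Remote VTEP IP, VNI>, and looks the pairing up in either direction. The class and method names, the pairing semantics, and the IP addresses are assumptions made for this example; the actual vG_MUX implementation may differ.

```python
from typing import Dict, NamedTuple, Optional


class TunnelEndpoint(NamedTuple):
    remote_vtep_ip: str
    vni: int


class CrossConnectTable:
    """Pairs a BRG-facing tunnel with a vG-facing tunnel (illustrative only)."""

    def __init__(self) -> None:
        self._brg_to_vg: Dict[TunnelEndpoint, TunnelEndpoint] = {}
        self._vg_to_brg: Dict[TunnelEndpoint, TunnelEndpoint] = {}

    def add(self, brg_side: TunnelEndpoint, vg_side: TunnelEndpoint) -> None:
        # Store both directions so each lookup is a single dictionary access.
        self._brg_to_vg[brg_side] = vg_side
        self._vg_to_brg[vg_side] = brg_side

    def toward_vg(self, brg_side: TunnelEndpoint) -> Optional[TunnelEndpoint]:
        """BRG Emulator -> vG direction: find the vG-facing tunnel."""
        return self._brg_to_vg.get(brg_side)

    def toward_brg(self, vg_side: TunnelEndpoint) -> Optional[TunnelEndpoint]:
        """vG -> BRG Emulator direction: find the BRG-facing tunnel."""
        return self._vg_to_brg.get(vg_side)


xc = CrossConnectTable()
xc.add(TunnelEndpoint("10.3.0.2", 100), TunnelEndpoint("10.5.0.21", 100))
```

A packet arriving from an endpoint with no entry returns `None`, which a real multiplexer would presumably treat as a drop.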

The first transaction that uses the VxLAN tunnel is the BRG Emulator's request to get its own IP address for its LAN interface, as follows:

Once BRG Emulator gets the ACK it is ready to start serving the home network.

The vCPE use case consists of several VNFs and networks that can be thought of as Resources which fall into two categories:

1. Infrastructure - These Resources are deployed prior to any customer request for an instance of vCPE Residential Broadband service. These VNFs and networks are assumed to be up and running and ready when a customer order is received. Some of this infrastructure (e.g., the vGMUX VNF) may be dedicated to supporting vCPE Residential Broadband service instances, and others (e.g., the vDHCP VNF) may provide shared functionality across many service types. We will refer to the latter as "Core Infrastructure".

2. Customer - These Resources are dedicated to a particular customer service instance, and hence are instantiated on a per vCPE Residential Broadband service customer request basis.

Because the Infrastructure Resources support many vCPE Residential Broadband service instances, and because the Core Infrastructure Resources provide support for perhaps many service types, it is appropriate to think of these as collectively providing various underlying "Services" to a higher order customer Service. We will model these Resources accordingly as being comprised of Infrastructure Services which support the vCPE Residential Broadband Customer Service.

In the "real world", the vDHCP, vAAA, and vDNS+DHCP functions would each be deployed separately from each other. However, because as "Core Infrastructure" they provide only a generic supporting function for this use case and are not integral to its purpose, it is less critical that we model these functions as we would find them in the "real world". Hence we will take this opportunity to demonstrate a more complex VNF by modeling this functionality as a single VNF comprised of three VMs, packaged into a single HEAT template.

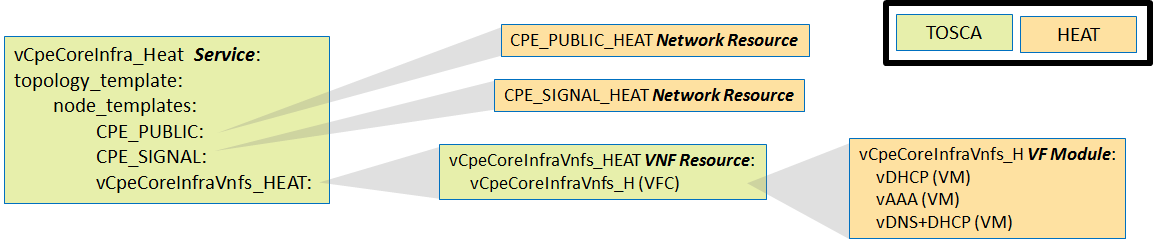

We will model a vCpeCoreInfra_Heat Service that is comprised of this complex VNF as well as two networks: the "CPE_PUBLIC" and the "CPE_SIGNAL" networks. Thus, a request into ONAP to create a new instance of the vCpeCoreInfra_Heat Service in a particular cloud region will result in instances of the CPE_PUBLIC and CPE_SIGNAL networks being instantiated in that cloud region using the CPE_PUBLIC_HEAT and CPE_SIGNAL_HEAT HEAT templates respectively, as well as an instance of the single VNF being instantiated in that cloud region using the single VNF HEAT template.

From a modeling perspective we will leverage the concept of "VF Module" (analogous to the concept of ETSI Virtual Deployment Unit, or VDU) to capture the "unit of deployment" within a multi-VM VNF. Thus, we will model this HEAT template as a single vCpeCoreInfraVnfs_H VF Module.
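The composition described above can be sketched as a small data model. This is only an illustration of how one Service expands into per-resource deployments. The class names, the `deployment_plan` helper, the "RegionOne" region name, and the networks-before-VNF ordering are assumptions, not part of the SDC model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Resource:
    name: str
    kind: str            # "network" or "vnf"
    deployment_unit: str  # HEAT template (networks) or VF Module (VNF)


@dataclass
class ServiceModel:
    name: str
    resources: List[Resource] = field(default_factory=list)

    def deployment_plan(self, cloud_region: str) -> List[str]:
        # Assumed ordering: networks first, so the VNF's VMs can attach to them.
        # sorted() is stable, so resources of the same kind keep their model order.
        ordered = sorted(self.resources, key=lambda r: r.kind != "network")
        return [
            f"{cloud_region}: create {r.kind} {r.name} from {r.deployment_unit}"
            for r in ordered
        ]


core_infra = ServiceModel(
    name="vCpeCoreInfra_Heat",
    resources=[
        Resource("vCpeCoreInfraVnfs", "vnf", "vCpeCoreInfraVnfs_H"),
        Resource("CPE_PUBLIC", "network", "CPE_PUBLIC_HEAT"),
        Resource("CPE_SIGNAL", "network", "CPE_SIGNAL_HEAT"),
    ],
)

for step in core_infra.deployment_plan("RegionOne"):
    print(step)
```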

In the "real world" the network between the BNG and the vGMUX would likely be a shared network that multiple BNGs could use to communicate to multiple vGMUXs, and hence it is reasonable to model that network as not being part of the same Service (and Service instance) as the BNG or the vGMUX. Hence we will model it as a separate Service which can be instantiated in a given cloud region independently of, but of course prior to, the VNF instantiation.

The network between the BRG and the BNG is specific to a particular BNG instance, and thus it is reasonable to model it as part of the same Service (and Service instance) as the BNG.

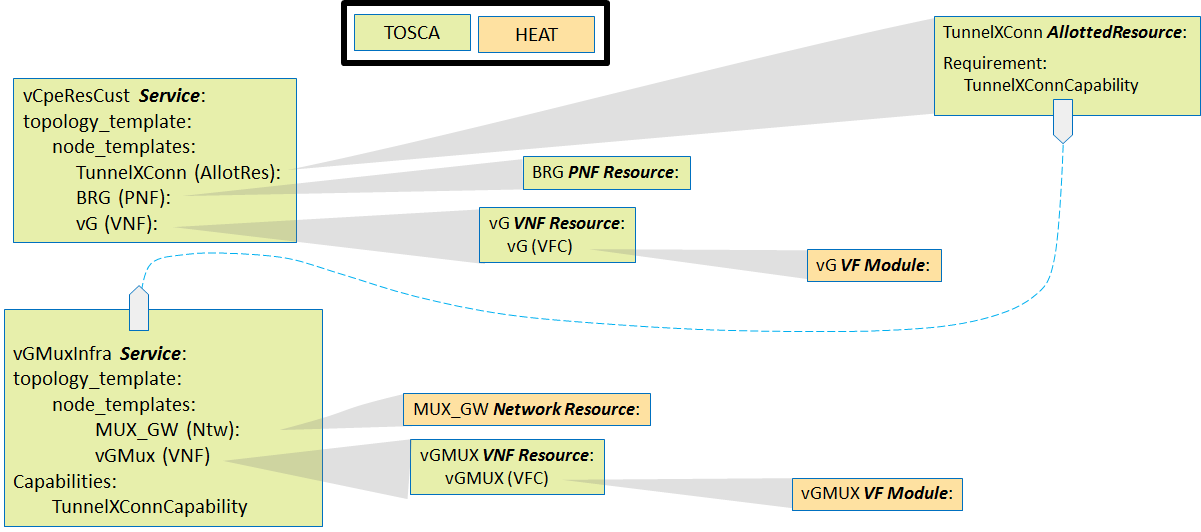

In the "real world", each instance of the Customer Service will be comprised of:

Because in this sense the vGMUX is providing a "service" an allotment of which is being consumed, we consider the TunnelXConn Allotted Resource to be taken from the vGMUX's Infrastructure Service, vGMuxInfra.

Though in the "real world" the BRG will be a physical device, for purposes of this use case we will emulate such a physical device via a VNF, the vBRG_EMU VNF. We will instantiate this VNF separately from the Customer's service instance, and model it as a separate service: BRG_EMU Service. Upon instantiation of a BRG_EMU service instance with its vBRG_EMU VNF, that VNF will begin acting like its "real world" PNF counterpart.

As a Release 1 ONAP "stretch goal", we will also attempt to demonstrate how a standalone general TOSCA Orchestrator can be incorporated into SO to provide the "cloud resource orchestration" functionality without relying on HEAT orchestration to provide this functionality. As such we will model the "vCpeCoreInfra" Service in a manner that does not leverage HEAT templates, but rather as pure TOSCA.

Note: All detailed flows (with diagrams) are for R1. Flows planned for subsequent releases are tagged as "aspiration" and are only mentioned; details will be added later.

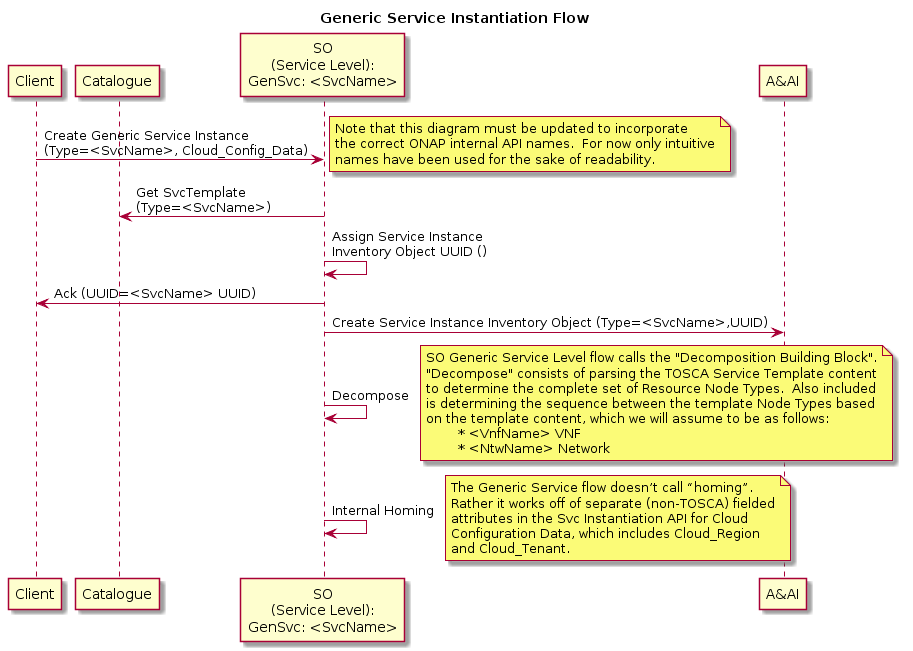

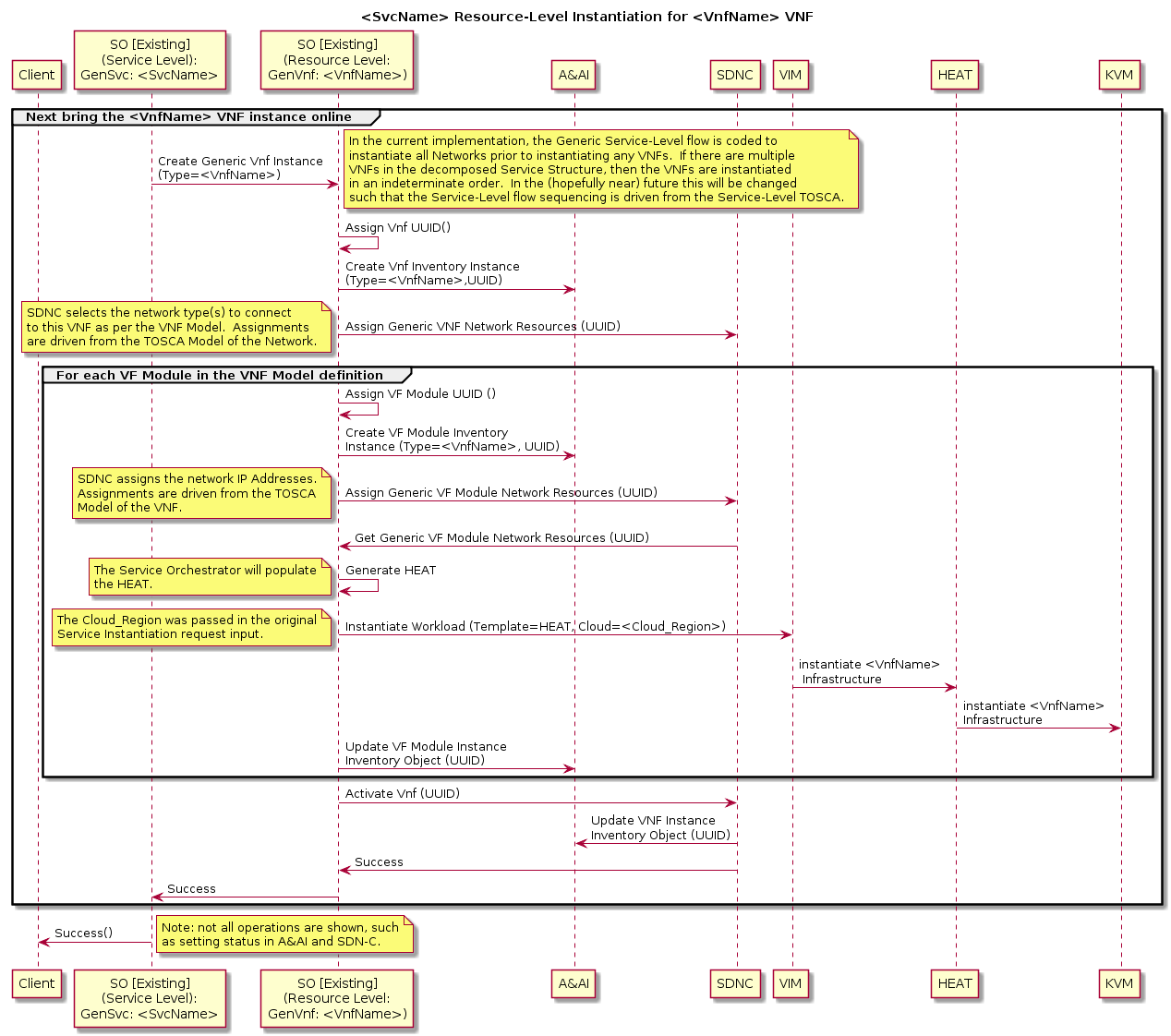

For "simple" Services which include only simple networks and VNFs (e.g., with no multi-data instances that map to different VF Modules), there exists an SO "Generic Service" flow ("top level flow") that spawns Resource Level VNF and/or Network sub-flows that are themselves "Generic". By "Generic" we mean that the SO flows were built with no particular Service, VNF, or Network in mind, but are rather implemented in such a way as to support many different Service, VNF, and Network types. The SDC model drives which particular sub-flows to spawn and of which type, as well as driving the specific behavior of the Service level and Resource level flows.
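The model-driven dispatch described above can be sketched as follows. This is a toy illustration, not SO code: the function names and the representation of the model as a list of (name, type) pairs are assumptions made for the example.

```python
from typing import Callable, Dict, List, Tuple


def generic_network_flow(name: str) -> str:
    # Stand-in for the generic Resource Level flow for Networks.
    return f"network sub-flow: {name}"


def generic_vnf_flow(name: str) -> str:
    # Stand-in for the generic Resource Level flow for VNFs.
    return f"VNF sub-flow: {name}"


# The sub-flow is chosen by resource type only, never by a specific service.
SUB_FLOWS: Dict[str, Callable[[str], str]] = {
    "network": generic_network_flow,
    "vnf": generic_vnf_flow,
}


def generic_service_flow(model: List[Tuple[str, str]]) -> List[str]:
    """Spawn one sub-flow per resource, selected by the type given in the model."""
    return [SUB_FLOWS[kind](name) for name, kind in model]


vgmux_infra_model = [("MUX_GW", "network"), ("vGMUX", "vnf")]
print(generic_service_flow(vgmux_infra_model))
```

Supporting a new "simple" Service then requires only a new model, with no change to the flow code.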

For such "simple" Services, the SDNC functionality is also "generic", such that only modeling and configuration is needed to drive SDNC behavior for a specific VNF type. For example, the SDNC generic VNF flow can automatically assign IP addresses from a pool that has been pre-loaded into SDNC. The pool could, for instance, be pre-loaded for 25 vG instances with their assignments pre-populated, with SDNC keeping track of which instances have and have not been assigned.
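A minimal sketch of such a pre-loaded pool is shown below, assuming a simple first-free allocation policy. The class name, the method names, and the address range are hypothetical and do not reflect the actual SDNC data model or API.

```python
from typing import Dict, List, Optional


class PreloadedPool:
    """Tracks which pre-loaded addresses have been assigned to which instance."""

    def __init__(self, addresses: List[str]) -> None:
        self._free: List[str] = list(addresses)
        self._assigned: Dict[str, str] = {}

    def assign(self, instance_id: str) -> Optional[str]:
        # Repeated requests for the same instance return the same address.
        if instance_id in self._assigned:
            return self._assigned[instance_id]
        if not self._free:
            return None  # pool exhausted
        address = self._free.pop(0)
        self._assigned[instance_id] = address
        return address

    def release(self, instance_id: str) -> None:
        address = self._assigned.pop(instance_id, None)
        if address is not None:
            self._free.append(address)


# Pre-load 25 illustrative addresses, one per expected vG instance.
pool = PreloadedPool([f"10.5.0.{n}" for n in range(21, 46)])
print(pool.assign("vG-1"))
```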

It is expected that these SO and SDNC assets will be leveraged for most of the Services and Resources needed for the vCPE use case, though some custom flows will also be needed as per the following table:

| Service | Service Level Flow | Resource | Resource Level Flow | SDNC Northbound API | SDNC DG |
|---|---|---|---|---|---|
| vCpeCoreInfra_X | Generic Service | vCpeCoreInfraVnfs_X (VNF) | Generic VNF | GENERIC-RESOURCE | Generic DG |
| “ | “ | CPE_PUBLIC (Network) | Generic Ntw | GENERIC-RESOURCE | Generic DG |
| “ | “ | CPE_SIGNAL (Network) | Generic Ntw | GENERIC-RESOURCE | Generic DG |
| vGMuxInfra | Generic Service | vGMUX (VNF) | Generic VNF | GENERIC-RESOURCE | Generic DG |
| “ | “ | MUX_GW (Network) | Generic Ntw | GENERIC-RESOURCE | Generic DG |
| vBngInfra | Generic Service | vBNG (VNF) | Generic VNF | GENERIC-RESOURCE | Generic DG |
| “ | “ | BRG_BNG (Network) | Generic Ntw | GENERIC-RESOURCE | Generic DG |
| BNG_MUX | Generic Service | BNG_MUX (Network) | Generic Ntw | GENERIC-RESOURCE | Generic DG |
| BRG_EMU | Generic Service | BRG_EMU (VNF) | Generic VNF | GENERIC-RESOURCE | Custom Process (Event Handling) |
| vCpeResCust | Custom [New] | TunnelXConn (AR) | Custom [New] | GENERIC-RESOURCE | Custom DG [New] |
| “ | “ | vG (VNF) | Generic VNF | GENERIC-RESOURCE | Generic DG |
| “ | “ | BRG (PNF) | Custom [New] | GENERIC-RESOURCE | Custom DG [New] |

The run time functionality of these generic flows is as follows:

Generic Service Instantiation Flow

Generic Resource Level flow for Networks

Generic Resource Level Flow for VNFs

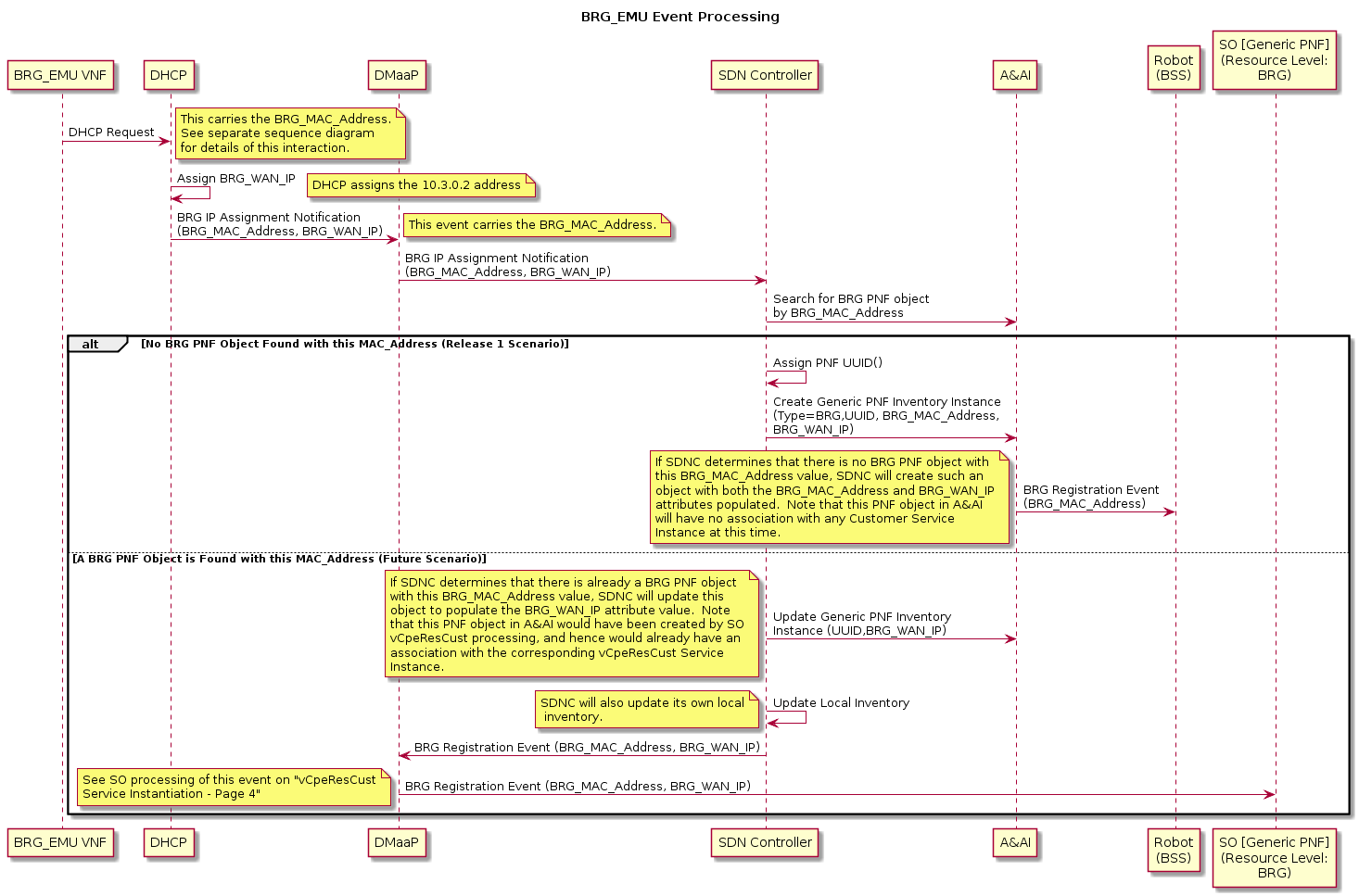

The diagram below shows more detail of step 5 of the Use Case Order of Operation. Note that only the "No BRG PNF Object" alternative flow is in scope for Release 1. (I.e., in Release 1 the assumption is that the BRG PNF (emulated) will always be "plugged in" prior to the "customer service request" being released to ONAP.)

For more details on the instantiation flows described at a high level above, see the attached PDF.

| Work Item | ONAP Member |
|---|---|
| Modeling | Amdocs, AT&T |
| SDC | Amdocs, AT&T |
| SO | AT&T |
| SDNC | Amdocs, AT&T |
| DCAE | AT&T |
| APPC | Amdocs, AT&T |
| A&AI | Amdocs |
| Policy | AT&T |
| Multi-VIM | Wind River |
| VPP-based VNFs: BRG_Emulator, vBNG, vG_MUX, vG | Intel |
| Integration | Amdocs, Integration Team |
| Use Case Subcommittee | Amdocs, AT&T, Intel, Orange, Wind River, more? Deliverables: Service definition, service detailed requirements, ONAP flow diagrams, service(s) model(s) |

Note: Work commitment items indicate which ONAP Members have expressed interest and commitment to working on individual items. More than one ONAP Member is welcome to provide support and work on each of the items.

Jun 30, 2017 Meeting: Closed Loop Control

- Yoav presented the flow on automatic reboot/restart.

- One question was whether we want to restart VNF or VM. The answer is to restart VM.

- Data collection vG_MUX --> DCAE: What needs to be worked out is the VES version and payload to be supported by vG_MUX. DCAE would like to have sample data from the VNF. Yoav will bring these requirements to Danny Lin.

- Events DCAE --> Policy: The content of the events should be well defined.

- Control Policy --> APPC: The detailed interface definition needs to be worked out.

- Mapping from VNF ID to VM ID: The current flow shows that APPC will query AAI to perform the mapping. Further discussion is needed to decide where to do it: APPC or Policy.
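The closed loop discussed in this meeting can be sketched end to end as below. This is a toy simulation only: the CPU metric, the threshold, the event and request fields, and the in-memory stand-in for the AAI lookup are all assumptions, since the notes above state that the VES payload, event content and APPC interface were still to be defined.

```python
from typing import Dict, List, Optional

CPU_THRESHOLD = 90  # hypothetical threshold, in percent

# Hypothetical stand-in for the AAI inventory query (VNF ID -> VM ID).
AAI_VNF_TO_VM: Dict[str, str] = {"vgmux-vnf-1": "vm-1234"}


def dcae_analyze(measurement: Dict) -> Optional[Dict]:
    """DCAE: turn a VNF measurement into an event when load is too high."""
    if measurement["cpu_percent"] > CPU_THRESHOLD:
        return {"vnf_id": measurement["vnf_id"], "condition": "HIGH_LOAD"}
    return None


def policy_decide(event: Dict) -> Optional[Dict]:
    """Policy: map an event to a control request for APPC."""
    if event["condition"] == "HIGH_LOAD":
        return {"action": "restart", "vnf_id": event["vnf_id"]}
    return None


def appc_execute(request: Dict, restarted: List[str]) -> None:
    """APPC: resolve the VM ID via the inventory, then restart the VM."""
    vm_id = AAI_VNF_TO_VM[request["vnf_id"]]
    restarted.append(vm_id)  # a real controller would call the VIM here


def closed_loop(measurement: Dict) -> List[str]:
    restarted: List[str] = []
    event = dcae_analyze(measurement)
    if event is not None:
        request = policy_decide(event)
        if request is not None:
            appc_execute(request, restarted)
    return restarted


print(closed_loop({"vnf_id": "vgmux-vnf-1", "cpu_percent": 95}))
```

Here the VNF-to-VM mapping is done in the APPC step, matching the flow presented in the meeting; moving it into `policy_decide` would model the alternative that was left open.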

Jun 30, 2017 Meeting: Design Time

- Yoav presented the VNF onboarding process

- VxLAN is preferred for the tunnels between BRG and vG MUX. Tunnels from different home networks will share the same VNI but are differentiated using the BRG IP addresses.

- Ron Shacham explained that CLAMP will create a blueprint template that will be used to instantiate a DCAE instance for the use case. CLAMP is not responsible for the development of the DCAE analytics program.

- It is determined that the infrastructure service and per customer service should be created individually.

- Yang Xu from Integration pointed out that VNF address assignment/management (including OAM IP and port IP) needs further discussions. Yoav will update the use case architecture diagram to include OAM IP addresses.

- It is realized that service design is complicated and needs more discussion. Yoav will follow up on these topics and schedule meetings.

- Michael Lando mentioned that a new version of SDC is being set up in AT&T. Daniel will check whether the community can get access to it.

Jul 6, 2017 Meeting: Design Time

- Recorded meeting is here.

- Brian Freeman explained that APPC should query AAI to get the VM ID and then perform VM restart.

- Currently, APPC polls to get the state after sending out the restart command. There were discussions on using a callback to get the state change. Brian suggested that it be put in R2.

- Discussed the mapping between VxLAN tunnel and the VLAN between vG_MUX and vG1.

Jul 9: closed loop diagram updated reflecting the discussion