This document explains how to run the ONAP demos on Azure using the Beijing release of ONAP.

The Beijing release has certain limitations, so fixes and workarounds are provided to run the demos. This document describes those fixes and workarounds and the steps to deploy them.

| S.No | Component | Issue detail | Current status | Further actions |
|---|---|---|---|---|
| 1 | SO | Custom workflow to call the MultiVIM adapter | A downstream image of SO, containing the custom workflow BPMN along with the MultiVIM adapter, is available on GitHub. | A base version of the code that supports SO-MultiCloud interaction has been pushed to Gerrit, but it does not support multipart data (CSAR artifacts passed to the plugin). This needs to be upstreamed. |
| 2 | MultiCloud plugin | The current Azure plugin on ONAP Gerrit does not support the vFW and vDNS use cases. | The downstream image from GitHub is used, and a custom chart was developed in OOM (downstream) to deploy it as part of the MultiCloud component set. | The azure-plugin code needs to be upstreamed to support the vFW and vDNS use cases. |

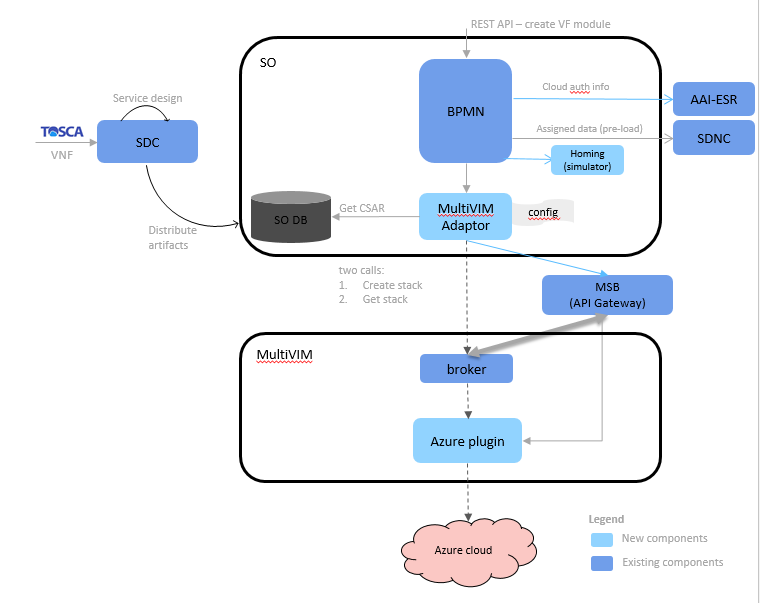

The high-level solution architecture can be found here.

| Not all ONAP components are shown in the high-level solution. Only the new components/modules introduced by the solution are shown; everything else remains the same. |

ONAP needs to be deployed with the Docker images that contain the workarounds for the limitations in the Beijing release.

The OOM deployment values files have also been modified to deploy the Docker images with the fixes.

The detailed list of changes is given below:

| S.No | Project name | Docker image (pulled from Docker Hub) | Remarks |
|---|---|---|---|
| 1 | OOM | NA | Contains the latest values.yaml files, which point to the downstream images. |
| 2 | SO | elhaydox/mso:1.2.2_azure-1.1.0 | Contains the VFModule fix along with the newly developed BPMN and MultiVIM adapter |
| 3 | multicloud-azure | elhaydox/multicloud-azure | Aria plugin to interface with Azure and instantiate VNFs |

Log in to Azure

    az login --service-principal -u <client_id> -p <client_secret> --tenant <tenant_id/document_id>

Create a resource group

    az group create --name <resource_group_name> --location <location_name>

Get the deployment templates from ONAP Gerrit

    git clone -o gerrit https://gerrit.onap.org/r/integration
    cd integration/deployment/Azure_ARM_Template

Run the deployment template against the resource group created above (named deploy_onap in this example)

    az group deployment create --resource-group deploy_onap --template-file arm_cluster_deploy_beijing.json --parameters @arm_cluster_deploy_parameters.json
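The resource group, clone, and deployment steps above can be collected into one helper. The following is a minimal sketch rather than part of the original procedure: the function names `run` and `deploy_onap_cluster` and the `DRY_RUN` switch are assumptions made for illustration, while the `az` and `git` commands are the ones listed above. It assumes `az login` has already been done, and it passes the same resource group name to both `az` commands. With `DRY_RUN=1` (the default in this sketch) the commands are only printed, so the sequence can be reviewed before anything is created on Azure.

```shell
#!/bin/sh
# Sketch only: helper names and DRY_RUN are illustrative, not part of ONAP or the az CLI.

# Print the command when DRY_RUN=1 (the default); otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

# Usage: deploy_onap_cluster <resource_group_name> <location_name>
# Assumes "az login" has already been done in this shell.
deploy_onap_cluster() {
  rg_name="$1"
  rg_location="$2"
  # Create the resource group that will hold the cluster.
  run az group create --name "$rg_name" --location "$rg_location" || return 1
  # Fetch the ARM templates from ONAP Gerrit and enter their directory.
  run git clone -o gerrit https://gerrit.onap.org/r/integration || return 1
  run cd integration/deployment/Azure_ARM_Template || return 1
  # Deploy the cluster into the same resource group.
  run az group deployment create \
    --resource-group "$rg_name" \
    --template-file arm_cluster_deploy_beijing.json \
    --parameters @arm_cluster_deploy_parameters.json || return 1
}
```

To review the commands, call `deploy_onap_cluster deploy_onap <location_name>`; to execute them, set `DRY_RUN=0` first.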

The deployment takes around 30 minutes to complete. With the given parameters, it creates a cluster of 12 VMs on Azure. The VM whose name has the post-index "0" runs the Rancher server, and the remaining VMs form the Kubernetes cluster.

SSH as the root user to the VM where the Rancher server is installed (the VM with post-index "0", as mentioned above).

When you log in to the Rancher server VM for the first time, run "helm ls" to make sure the Helm client and server are compatible. If it fails with "Error: incompatible versions client[v2.9.1] server[v2.8.2]", run:

    helm init --upgrade
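The compatibility check can also be scripted. This is a minimal sketch, assuming Helm 2 (where `helm init --upgrade` upgrades the server-side Tiller component); the function names `is_helm_version_mismatch` and `check_helm` are hypothetical, and the only text matched is the error message quoted above.

```shell
#!/bin/sh
# Sketch only: function names are illustrative.

# Succeed if the given text looks like the Helm client/server mismatch error.
is_helm_version_mismatch() {
  case "$1" in
    *"incompatible versions"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Run "helm ls"; upgrade the server side only if the mismatch error is reported.
check_helm() {
  if output=$(helm ls 2>&1); then
    echo "helm client and server are compatible"
  elif is_helm_version_mismatch "$output"; then
    helm init --upgrade
  else
    # Any other failure is reported unchanged and left to the operator.
    echo "$output" >&2
    return 1
  fi
}
```

Calling `check_helm` once after the first login leaves a compatible installation untouched and does not hide unrelated errors.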

Download the OOM repo from GitHub (needed because of the downstream images)

    git clone -b beijing --single-branch https://github.com/onapdemo/oom.git

Execute the commands below in sequence to install ONAP

    cd oom/kubernetes
    make all   # This creates the Helm charts and stores them in the local repo.
    helm install local/onap --name dev --namespace onap
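After the install command returns, the pods still need time to start. The sketch below is one way to watch progress; it is not part of the original procedure and assumes that `kubectl` is configured on the Rancher server VM and that the pods run in the `onap` namespace used above. The function name `count_pending_pods` is hypothetical. It reads `kubectl get pods --no-headers` output and counts pods whose STATUS column is neither `Running` nor `Completed`.

```shell
#!/bin/sh
# Sketch only: the function name is illustrative.

# Read "kubectl get pods --no-headers" output on stdin and print the number of
# pods whose STATUS (third column) is neither Running nor Completed.
count_pending_pods() {
  awk '$3 != "Running" && $3 != "Completed" { n++ } END { print n + 0 }'
}

# Usage on the Rancher server VM:
#   kubectl get pods --namespace onap --no-headers | count_pending_pods
```

A result of 0 means every pod has reached `Running` or `Completed`; it does not by itself prove that the components are healthy.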

Due to network glitches on the public cloud, the installation sometimes fails with the error: "Error: release dev failed: client: etcd member http://etcd.kubernetes.rancher.internal:2379 has no leader". If this happens during deployment, ONAP must be re-installed as follows:

    helm del --purge dev
    rm -rf /dockerdata-nfs/*
    # wait for a few minutes
    helm install local/onap --name dev --namespace onap
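The re-install procedure can be wrapped in a retry loop. This is a minimal sketch under stated assumptions: the function names `is_etcd_leader_error` and `install_with_retry` and their two parameters are hypothetical, the release name is `dev` (matching `--name dev` in the install command), and only the "has no leader" error quoted above triggers a retry. Any other failure stops the loop so that it is not masked.

```shell
#!/bin/sh
# Sketch only: function names and parameters are illustrative.

# Succeed if the given text contains the etcd "has no leader" error.
is_etcd_leader_error() {
  case "$1" in
    *"has no leader"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage: install_with_retry <max_attempts> <wait_seconds>
install_with_retry() {
  max_attempts="$1"
  wait_seconds="$2"
  attempt=1
  while [ "$attempt" -le "$max_attempts" ]; do
    if output=$(helm install local/onap --name dev --namespace onap 2>&1); then
      echo "$output"
      return 0
    fi
    echo "$output" >&2
    # Unrelated failure: stop instead of purging and retrying.
    is_etcd_leader_error "$output" || return 1
    # Clean up the failed release and its data, then wait before retrying.
    helm del --purge dev
    rm -rf /dockerdata-nfs/*
    sleep "$wait_seconds"
    attempt=$((attempt + 1))
  done
  return 1
}
```

For example, `install_with_retry 3 300` would try up to three times with five minutes between attempts; the wait time is a guess and should be adjusted to the cluster.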

Refer to the pages below to run the ONAP use cases.

If you want to look at the fixes and build the Docker images for individual components, the source code for the fixes is available at: Source Code access