This is the initial draft of the design page.

Much of the information here has been pulled from this source document: Promethus-aggregation.pdf

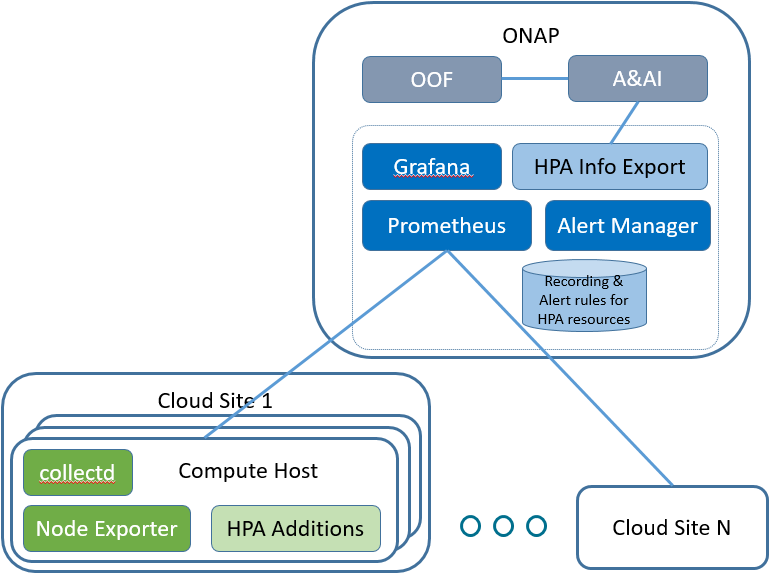

The diagram above illustrates the key components.

The Prometheus server is configured to scrape (pull) time series data from targets. In this case, the targets are the compute hosts in the cloud regions.
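As a rough illustration of how compute hosts become scrape targets, the sketch below builds a `scrape_configs` section with one job per cloud region. It assumes each host runs node_exporter on its default port 9100. The region label, the `node-<region>` job naming and the host names are hypothetical choices for illustration, not part of this design.

```python
import json

# Default port on which node_exporter serves /metrics.
NODE_EXPORTER_PORT = 9100


def build_scrape_config(region_hosts, port=NODE_EXPORTER_PORT, interval="30s"):
    """Build a Prometheus scrape_configs section with one job per cloud region.

    region_hosts maps a region name to a list of compute host names.
    The region name is attached as a target label so queries can filter on it.
    (Job naming and the 'region' label are hypothetical conventions.)
    """
    scrape_configs = []
    for region, hosts in sorted(region_hosts.items()):
        scrape_configs.append({
            "job_name": f"node-{region}",
            "scrape_interval": interval,
            "static_configs": [{
                "targets": [f"{host}:{port}" for host in hosts],
                "labels": {"region": region},
            }],
        })
    return {"scrape_configs": scrape_configs}


if __name__ == "__main__":
    # Hypothetical region and host names, for illustration only.
    config = build_scrape_config({"region-one": ["compute-1", "compute-2"]})
    # JSON is a subset of YAML, so this output can be used as prometheus.yml.
    print(json.dumps(config, indent=2))
```

In a real deployment, the static host list would more likely come from a service discovery mechanism. The static form simply makes the target structure explicit.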

The Prometheus server evaluates its configured alerting rules and sends the resulting alerts to the Alert Manager, which can then forward them to clients.

For the HPA telemetry capture use case, the plan is to create a client that receives alerts from the Alert Manager and updates HPA information in A&AI. See HPA - Telemetry OOF and A&AI.
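The client could be a small webhook receiver. The Alert Manager's `webhook_configs` POST a JSON body containing an `alerts` list, where each alert has a `status` and `labels`. The sketch below assumes that the alerting rules attach a hypothetical `hpa_attribute` label and that the standard `instance` label identifies the compute host. The A&AI call is deliberately left as a placeholder (`update_aai`), because its REST interface is outside the scope of this sketch.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def extract_host_updates(payload):
    """Turn an Alert Manager webhook payload into per-host update records.

    Assumes (hypothetically) that alerting rules set an 'hpa_attribute' label
    naming the affected HPA attribute; 'instance' identifies the host.
    """
    updates = []
    for alert in payload.get("alerts", []):
        labels = alert.get("labels", {})
        instance = labels.get("instance")
        attribute = labels.get("hpa_attribute")
        if instance is None or attribute is None:
            continue  # not an HPA telemetry alert; ignore it
        updates.append({
            "host": instance.split(":")[0],  # drop the exporter port
            "attribute": attribute,
            "firing": alert.get("status") == "firing",
        })
    return updates


def update_aai(update):
    """Hypothetical placeholder for the A&AI update; no real call is made."""
    print("would update A&AI:", update)


class AlertHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length))
        for update in extract_host_updates(payload):
            update_aai(update)
        self.send_response(200)
        self.end_headers()


if __name__ == "__main__":
    # Hypothetical listen port; the Alert Manager webhook URL would point here.
    HTTPServer(("0.0.0.0", 5001), AlertHandler).serve_forever()
```

Keeping payload parsing separate from the HTTP handler lets the mapping from alerts to A&AI updates be tested without running a server.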

Grafana is configured to query data from Prometheus and display information on HPA telemetry.
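Grafana reads from Prometheus by sending PromQL queries to the Prometheus HTTP API. Other components could read the same data the same way. The sketch below issues an instant query against `/api/v1/query` and maps each returned series' `instance` label to its value. The Prometheus address is hypothetical, and only the `vector` result type is handled.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def instant_query_url(base_url, promql):
    """Build a URL for the Prometheus HTTP API instant-query endpoint."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


def parse_vector(response):
    """Map each series' 'instance' label to its float sample value."""
    if response.get("status") != "success":
        raise ValueError(f"query failed: {response.get('error')}")
    if response["data"]["resultType"] != "vector":
        raise ValueError("only instant vector results are handled here")
    result = {}
    for series in response["data"]["result"]:
        _timestamp, value = series["value"]  # [unix_time, "value as string"]
        result[series["metric"].get("instance", "")] = float(value)
    return result


def query(base_url, promql):
    """Run an instant query and return {instance: value}."""
    with urlopen(instant_query_url(base_url, promql)) as resp:
        return parse_vector(json.load(resp))


if __name__ == "__main__":
    # Hypothetical Prometheus address.
    print(query("http://prometheus:9090", "node_memory_MemTotal_bytes"))
```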

Many projects can be used to expose data to the Prometheus server. The components listed here are expected to support an initial set of HPA attributes.

The node exporter runs on each compute host and exposes a variety of statistics about the node for the Prometheus server to scrape. Some of these statistics will be used to identify the HPA attributes and capacity of the compute host.

collectd is another collector that can run on the compute host to gather information. It supports SR-IOV information, which will be used to support HPA telemetry.

Some HPA attributes may not be supported by any existing exporter. In that case, existing components may be extended, or new ones developed, to provide that support.
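If an attribute has no existing exporter, a new one can be quite small. The sketch below serves SR-IOV virtual function counts, read from the Linux sysfs files `sriov_totalvfs` and `sriov_numvfs` under `/sys/class/net/<iface>/device/`, in Prometheus text format on `/metrics`. The metric names (`hpa_sriov_total_vfs`, `hpa_sriov_configured_vfs`) and the port are hypothetical. This is only an illustration of the "new component" option, not a replacement for collectd's SR-IOV support.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer

# sysfs file -> hypothetical Prometheus metric name.
METRICS = {
    "sriov_totalvfs": "hpa_sriov_total_vfs",
    "sriov_numvfs": "hpa_sriov_configured_vfs",
}


def collect_sriov(sys_net="/sys/class/net"):
    """Render SR-IOV VF counts per interface in Prometheus text format."""
    lines = []
    for sysfs_file, metric in METRICS.items():
        lines.append(f"# TYPE {metric} gauge")
        for iface in sorted(os.listdir(sys_net)):
            path = os.path.join(sys_net, iface, "device", sysfs_file)
            if os.path.isfile(path):  # only SR-IOV capable NICs have this file
                with open(path) as f:
                    count = int(f.read().strip())
                lines.append(f'{metric}{{interface="{iface}"}} {count}')
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = collect_sriov().encode()
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


if __name__ == "__main__":
    # Hypothetical port, chosen not to clash with node exporter (9100).
    HTTPServer(("0.0.0.0", 9200), MetricsHandler).serve_forever()
```

The new endpoint would then be added as another scrape target on the Prometheus server.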

The initial phase of HPA Platform Telemetry will involve setting up and operating the basic components.

Design topics to resolve:

In phase 2, the goal is to export HPA information into ONAP A&AI so that OOF can use the data when making placement decisions.

Additional components needed:

Some items that could be included here: