

Test architecture

The client tests are conducted in the following architecture:

- HV-VES Client - produces a large number of events for processing.

- Processing Consumer - consumes events from Kafka topics and creates performance metrics.

- Offset Consumer - reads Kafka offsets.

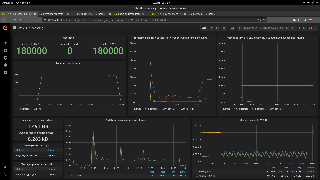

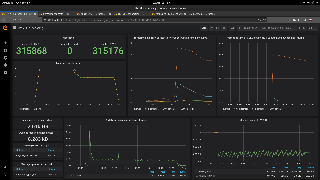

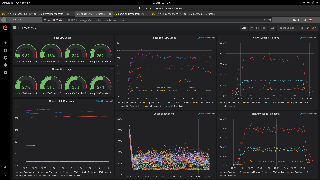

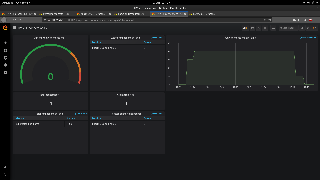

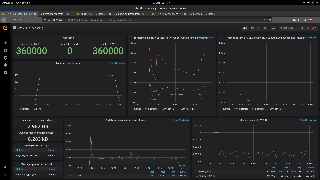

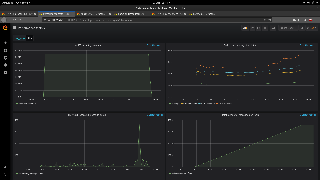

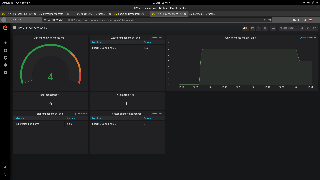

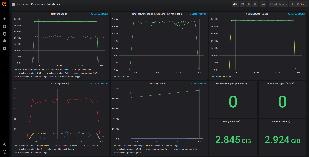

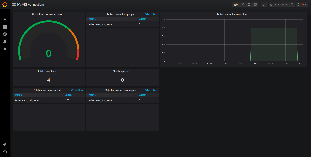

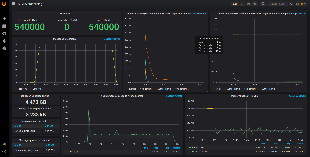

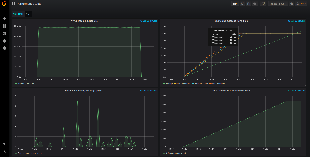

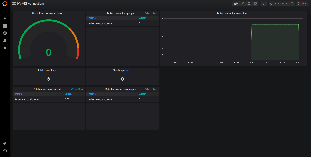

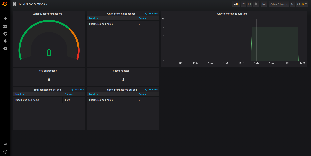

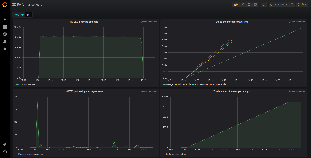

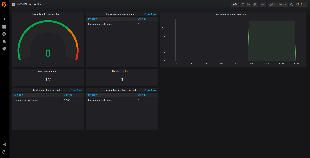

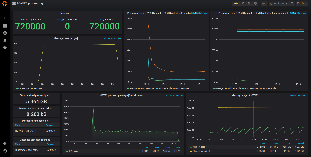

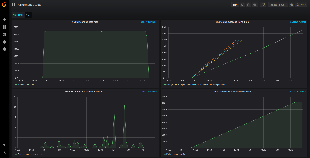

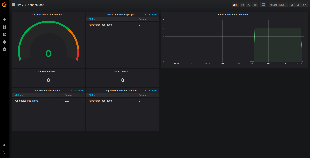

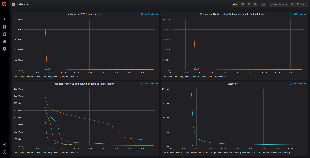

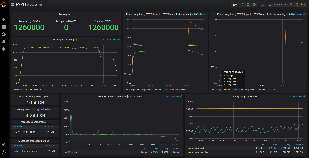

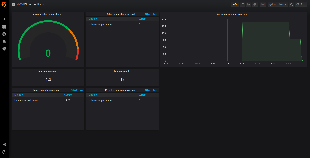

- Prometheus - sends requests for performance metrics to HV-VES, Processing Consumer and Offset Consumer, provides data to Grafana.

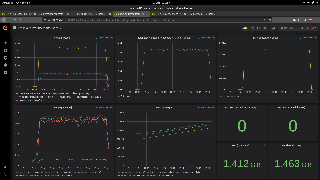

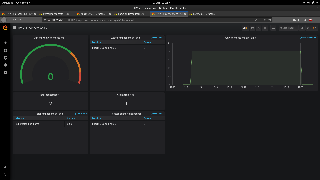

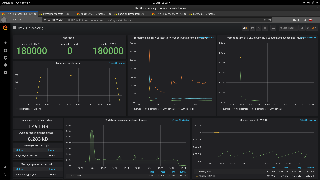

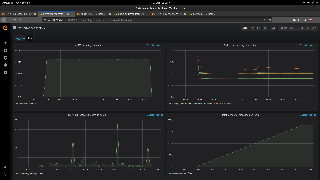

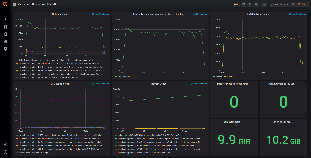

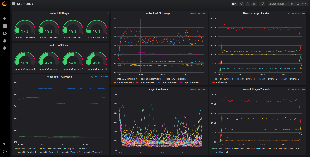

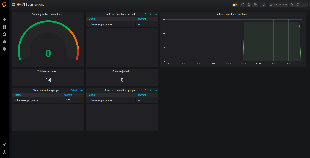

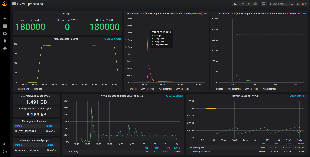

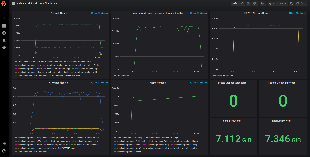

- Grafana - delivers analytics and visualization.

The link between HV-VES Client and HV-VES is TLS-secured (the provided scripts generate the certificates and place them in the proper containers).

Note: In the Without DMaaP Kafka tests, the DMaaP/Kafka service was substituted with wurstmeister kafka.

Environment and Resources

A Kubernetes cluster with 4 worker nodes, all sharing the hardware configuration shown in the table below, is deployed in the OpenStack cloud operating system. The test components run in Docker containers, which are in turn deployed on the Kubernetes cluster.

| Configuration | | |
|---|---|---|
| CPU | Model | Intel(R) Xeon(R) CPU E5-2680 v4 |
| | No. of cores | 24 |
| | CPU clock speed [GHz] | 2.40 |
| Total RAM [GB] | | 62.9 |


Network Performance

Pod measurement method

To check the cluster network performance, tests were run with iperf3. iperf is a tool for measuring the maximum bandwidth on IP networks; it runs in two modes: server and client. We used the Docker image networkstatic/iperf3.

The following deployment creates one pod with iperf in server mode on one worker, and one pod with an iperf client on each worker.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: iperf3-server
      namespace: onap
      labels:
        app: iperf3-server
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: iperf3-server
      template:
        metadata:
          labels:
            app: iperf3-server
        spec:
          containers:
          - name: iperf3-server
            image: networkstatic/iperf3
            args: ['-s']
            ports:
            - containerPort: 5201
              name: server
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: iperf3-server
      namespace: onap
    spec:
      selector:
        app: iperf3-server
      ports:
      - protocol: TCP
        port: 5201
        targetPort: server
    ---
    apiVersion: apps/v1
    kind: DaemonSet
    metadata:
      name: iperf3-clients
      namespace: onap
      labels:
        app: iperf3-client
    spec:
      selector:
        matchLabels:
          app: iperf3-client
      template:
        metadata:
          labels:
            app: iperf3-client
        spec:
          containers:
          - name: iperf3-client
            image: networkstatic/iperf3
            command: ['/bin/sh', '-c', 'sleep infinity']

...

worker1 (136 MBytes/sec)

    Connecting to host iperf3-server, port 5201
    [  4] local 10.42.5.127 port 39752 connected to 10.43.25.161 port 5201
    [ ID] Interval           Transfer     Bandwidth        Retr  Cwnd
    [  4]   0.00-1.00   sec   141 MBytes   141 MBytes/sec   32    673 KBytes
    [  4]   1.00-2.00   sec   139 MBytes   139 MBytes/sec    0    817 KBytes
    [  4]   2.00-3.00   sec   139 MBytes   139 MBytes/sec    0    936 KBytes
    [  4]   3.00-4.00   sec   138 MBytes   137 MBytes/sec    0   1.02 MBytes
    [  4]   4.00-5.00   sec   138 MBytes   137 MBytes/sec    0   1.12 MBytes
    [  4]   5.00-6.00   sec   129 MBytes   129 MBytes/sec    0   1.20 MBytes
    [  4]   6.00-7.00   sec   129 MBytes   129 MBytes/sec    0   1.27 MBytes
    [  4]   7.00-8.00   sec   134 MBytes   134 MBytes/sec    0   1.35 MBytes
    [  4]   8.00-9.00   sec   135 MBytes   135 MBytes/sec    0   1.42 MBytes
    [  4]   9.00-10.00  sec   135 MBytes   135 MBytes/sec   45   1.06 MBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    [ ID] Interval           Transfer     Bandwidth        Retr
    [  4]   0.00-10.00  sec  1.32 GBytes   136 MBytes/sec   77             sender
    [  4]   0.00-10.00  sec  1.32 GBytes   135 MBytes/sec                  receiver

worker2 (87 MBytes/sec)

    Connecting to host iperf3-server, port 5201
    [  4] local 10.42.3.188 port 35472 connected to 10.43.25.161 port 5201
    [ ID] Interval           Transfer     Bandwidth        Retr  Cwnd
    [  4]   0.00-1.00   sec  88.3 MBytes  88.3 MBytes/sec  121    697 KBytes
    [  4]   1.00-2.00   sec  96.2 MBytes  96.3 MBytes/sec    0    796 KBytes
    [  4]   2.00-3.00   sec  92.5 MBytes  92.5 MBytes/sec    0    881 KBytes
    [  4]   3.00-4.00   sec  90.0 MBytes  90.0 MBytes/sec    0    957 KBytes
    [  4]   4.00-5.00   sec  87.5 MBytes  87.5 MBytes/sec    0   1.00 MBytes
    [  4]   5.00-6.00   sec  88.8 MBytes  88.7 MBytes/sec    0   1.06 MBytes
    [  4]   6.00-7.00   sec  80.0 MBytes  80.0 MBytes/sec    0   1.12 MBytes
    [  4]   7.00-8.00   sec  81.2 MBytes  81.3 MBytes/sec   25    895 KBytes
    [  4]   8.00-9.00   sec  85.0 MBytes  85.0 MBytes/sec    0    983 KBytes
    [  4]   9.00-10.00  sec  83.8 MBytes  83.7 MBytes/sec    0   1.03 MBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    [ ID] Interval           Transfer     Bandwidth        Retr
    [  4]   0.00-10.00  sec   873 MBytes  87.3 MBytes/sec  146             sender
    [  4]   0.00-10.00  sec   870 MBytes  87.0 MBytes/sec                  receiver
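The summary rows of an iperf3 run can be cross-checked against its per-interval rows. The Python sketch below does this for the worker2 output above. The regular expression and the function name `summarize` are illustrative assumptions, not part of the test tooling, and the parser only handles rows whose transfer is reported in MBytes, as in this run.

```python
import re

# Per-interval rows copied from the worker2 iperf3 output above.
WORKER2_ROWS = """\
[  4]   0.00-1.00   sec  88.3 MBytes  88.3 MBytes/sec  121    697 KBytes
[  4]   1.00-2.00   sec  96.2 MBytes  96.3 MBytes/sec    0    796 KBytes
[  4]   2.00-3.00   sec  92.5 MBytes  92.5 MBytes/sec    0    881 KBytes
[  4]   3.00-4.00   sec  90.0 MBytes  90.0 MBytes/sec    0    957 KBytes
[  4]   4.00-5.00   sec  87.5 MBytes  87.5 MBytes/sec    0   1.00 MBytes
[  4]   5.00-6.00   sec  88.8 MBytes  88.7 MBytes/sec    0   1.06 MBytes
[  4]   6.00-7.00   sec  80.0 MBytes  80.0 MBytes/sec    0   1.12 MBytes
[  4]   7.00-8.00   sec  81.2 MBytes  81.3 MBytes/sec   25    895 KBytes
[  4]   8.00-9.00   sec  85.0 MBytes  85.0 MBytes/sec    0    983 KBytes
[  4]   9.00-10.00  sec  83.8 MBytes  83.7 MBytes/sec    0   1.03 MBytes
"""

# Hypothetical pattern: interval start/end, transfer in MBytes, bandwidth, retransmits.
ROW = re.compile(
    r"\]\s+([\d.]+)-([\d.]+)\s+sec\s+([\d.]+)\s+MBytes\s+([\d.]+)\s+MBytes/sec\s+(\d+)"
)


def summarize(rows):
    """Return (total MBytes, total retransmits, mean MBytes/sec) for interval rows."""
    total_mbytes = 0.0
    total_retr = 0
    duration = 0.0
    for line in rows.splitlines():
        match = ROW.search(line)
        if match is None:
            continue  # skip headers, separators and rows in other units
        start, end, transfer, _bandwidth, retr = match.groups()
        total_mbytes += float(transfer)
        total_retr += int(retr)
        duration += float(end) - float(start)
    return total_mbytes, total_retr, total_mbytes / duration


total, retransmits, rate = summarize(WORKER2_ROWS)
print(f"{total:.1f} MBytes, {retransmits} retransmits, {rate:.1f} MBytes/sec")
# prints "873.3 MBytes, 146 retransmits, 87.3 MBytes/sec"
```

These values agree with the sender summary row reported by iperf3 for worker2 (873 MBytes, 146 retransmits, 87.3 MBytes/sec).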

worker3 (135 MBytes/sec)

    Connecting to host iperf3-server, port 5201
    [  4] local 10.42.4.182 port 35288 connected to 10.43.25.161 port 5201
    [ ID] Interval           Transfer     Bandwidth        Retr  Cwnd
    [  4]   0.00-1.00   sec   129 MBytes   129 MBytes/sec   45   1.17 MBytes
    [  4]   1.00-2.00   sec   134 MBytes   134 MBytes/sec   32   1.25 MBytes
    [  4]   2.00-3.00   sec   135 MBytes   135 MBytes/sec    0   1.32 MBytes
    [  4]   3.00-4.00   sec   139 MBytes   139 MBytes/sec    0   1.40 MBytes
    [  4]   4.00-5.00   sec   144 MBytes   144 MBytes/sec    0   1.47 MBytes
    [  4]   5.00-6.00   sec   131 MBytes   131 MBytes/sec   45   1.14 MBytes
    [  4]   6.00-7.00   sec   129 MBytes   129 MBytes/sec    0   1.25 MBytes
    [  4]   7.00-8.00   sec   134 MBytes   134 MBytes/sec    0   1.33 MBytes
    [  4]   8.00-9.00   sec   138 MBytes   138 MBytes/sec    0   1.39 MBytes
    [  4]   9.00-10.00  sec   135 MBytes   135 MBytes/sec    0   1.44 MBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    [ ID] Interval           Transfer     Bandwidth        Retr
    [  4]   0.00-10.00  sec  1.31 GBytes   135 MBytes/sec  122             sender
    [  4]   0.00-10.00  sec  1.31 GBytes   134 MBytes/sec                  receiver

worker0 (2282 MBytes/sec) (iperf client and server run on the same worker)

    Connecting to host iperf3-server, port 5201
    [  4] local 10.42.6.132 port 51156 connected to 10.43.25.161 port 5201
    [ ID] Interval           Transfer     Bandwidth         Retr  Cwnd
    [  4]   0.00-1.00   sec  2.13 GBytes  2185 MBytes/sec     0    536 KBytes
    [  4]   1.00-2.00   sec  1.66 GBytes  1702 MBytes/sec     0    621 KBytes
    [  4]   2.00-3.00   sec  2.10 GBytes  2154 MBytes/sec     0    766 KBytes
    [  4]   3.00-4.00   sec  1.89 GBytes  1937 MBytes/sec     0   1.01 MBytes
    [  4]   4.00-5.00   sec  1.87 GBytes  1914 MBytes/sec     0   1.39 MBytes
    [  4]   5.00-6.00   sec  2.76 GBytes  2826 MBytes/sec     0   1.39 MBytes
    [  4]   6.00-7.00   sec  1.81 GBytes  1853 MBytes/sec   792   1.09 MBytes
    [  4]   7.00-8.00   sec  2.54 GBytes  2600 MBytes/sec     0   1.21 MBytes
    [  4]   8.00-9.00   sec  2.70 GBytes  2763 MBytes/sec     0   1.34 MBytes
    [  4]   9.00-10.00  sec  2.82 GBytes  2889 MBytes/sec     0   1.34 MBytes
    - - - - - - - - - - - - - - - - - - - - - - - - -
    [ ID] Interval           Transfer     Bandwidth         Retr
    [  4]   0.00-10.00  sec  22.3 GBytes  2282 MBytes/sec   792             sender
    [  4]   0.00-10.00  sec  22.3 GBytes  2282 MBytes/sec                   receiver

Average speed (without worker0): 119 MBytes/sec
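The average above can be reproduced from the sender-side summary rows. The following is a minimal Python sketch. The dictionary layout and the helper name `cross_node_average` are assumptions made for illustration; the bandwidth values are the ones reported in the iperf3 summaries above. worker0 is excluded because its client runs on the same node as the server, so that measurement does not exercise the network between nodes.

```python
from statistics import mean

# Sender-side bandwidth per worker in MBytes/sec, from the iperf3 summary rows.
SENDER_BANDWIDTH = {
    "worker0": 2282.0,
    "worker1": 136.0,
    "worker2": 87.3,
    "worker3": 135.0,
}

# Workers whose client shares a node with the iperf3 server.
LOCAL_WORKERS = {"worker0"}


def cross_node_average(bandwidth, local_workers):
    """Average bandwidth over the workers that reach the server across nodes."""
    remote = [value for name, value in bandwidth.items() if name not in local_workers]
    return mean(remote)


average = cross_node_average(SENDER_BANDWIDTH, LOCAL_WORKERS)
print(f"{average:.0f} MBytes/sec")  # prints "119 MBytes/sec"
```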

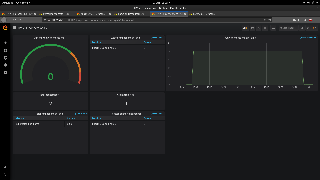

HV-VES Performance


Test Setup

Preconditions

- Installed ONAP (Frankfurt)

- Plain TCP connection between HV-VES and clients (default configuration)

- Metric port exposed on HV-VES service

To reach the metrics endpoint in HV-VES, add the following lines to the ports section of the HV-VES service configuration file:

    - name: port-t-6060
      port: 6060
      protocol: TCP
      targetPort: 6060

Before starting the tests, download the Docker image of the producer, which is available here:

| View file | ||||

|---|---|---|---|---|

|

To load the image locally, use the command:

    docker load < hv-collector-go-client.tar.gz

Modify the tools/performance/cloud/producer-pod.yaml file to use the above image and set imagePullPolicy to IfNotPresent:

    ...
    spec:
      containers:
        - name: hv-collector-producer
          image: onap/org.onap.dcaegen2.collectors.hv-ves.hv-collector-go-client:latest
          imagePullPolicy: IfNotPresent
          volumeMounts:
    ...

To execute the performance tests, run functions from the shell script cloud-based-performance-test.sh in the HV-VES project directory: ~/tools/performance/cloud/

First, generate certificates in the ~/tools/ssl folder using gen_certs. This step only needs to be performed during the first test setup (or if the generated files have been deleted).

Generating certificates:

    ./cloud-based-performance-test.sh gen_certs

Then call setup to send the certificates to HV-VES, deploy the Consumers, Prometheus and Grafana, and create their ConfigMaps.

Setting up the test environment:

    ./cloud-based-performance-test.sh setup

After completing the previous steps, call the start function, which creates the Producers and starts the test.

Performing the test:

    ./cloud-based-performance-test.sh start

The start function accepts optional arguments:

    --load                      whether the test should keep the defined number of producers running until the script is interrupted (false)
    --containers                number of producer containers to create (1)
    --properties-file           path to the file with benchmark properties (./test.properties)
    --retention-time-minutes    retention time of messages in Kafka, in minutes (60)

Example invocations of test start:

Starting a performance test with a single creation of producers:

    ./cloud-based-performance-test.sh start --containers 10

The command above starts a test that creates 10 producers, each of which sends the number of messages defined in test.properties once.

Starting a performance test with a constant message load:

    ./cloud-based-performance-test.sh start --load true --containers 10 --retention-time-minutes 30

This invocation starts a load test, meaning the script will try to keep the number of running containers at 10, with a Kafka message retention of 30 minutes.

The test.properties file contains the Producer and Consumer configurations and allows setting the following properties:

    Producer
      hvVesAddress              HV-VES address (dcae-hv-ves-collector.onap:6061)
      client.count              Number of clients per pod (1)
      message.size              Size of a single message in bytes (16384)
      message.count             Number of messages to be sent by each client (1000)
      message.interval          Interval between messages in milliseconds (1)

    Certificates paths
      client.cert.path          Path to cert file (/ssl/client.p12)
      client.cert.pass.path     Path to cert's pass file (/ssl/client.pass)

    Consumer
      kafka.bootstrapServers    Address of the Kafka service to consume from (message-router-kafka:9092)
      kafka.topics              Kafka topics to subscribe to (HV_VES_PERF3GPP)
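To get a feel for the load these properties describe, the Python sketch below estimates the payload one test run produces. It rests on several assumptions that are not confirmed by the test tooling: that test.properties uses plain `key=value` lines, that message.interval is the pause between consecutive messages of one client, and that sending itself takes no time (so the rate is an upper bound). The helper names are hypothetical, and the sample values are the ones shown in parentheses above.

```python
# Sample producer settings, using the values listed in parentheses above.
# Assumption: the file uses plain key=value lines.
SAMPLE_PROPERTIES = """\
hvVesAddress=dcae-hv-ves-collector.onap:6061
client.count=1
message.size=16384
message.count=1000
message.interval=1
"""


def parse_properties(text):
    """Parse key=value lines, skipping blank lines and '#' comments."""
    props = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def payload_bytes_per_container(props):
    """Total payload one producer container sends during a single run."""
    return (
        int(props["client.count"])
        * int(props["message.count"])
        * int(props["message.size"])
    )


def max_offered_bytes_per_second(props):
    """Upper bound on the send rate of one container (send time ignored)."""
    interval_ms = int(props["message.interval"])
    return int(props["client.count"]) * int(props["message.size"]) * 1000 / interval_ms


props = parse_properties(SAMPLE_PROPERTIES)
containers = 10  # as in the "--containers 10" example invocation

total = containers * payload_bytes_per_container(props)
print(f"{total / 2**20:.2f} MiB")  # prints "156.25 MiB"

rate = max_offered_bytes_per_second(props)
print(f"{rate / 2**20:.3f} MiB/s per container")  # prints "15.625 MiB/s per container"
```

Under these assumptions, a run with 10 containers sends about 156 MiB of payload in total, before protocol and TLS overhead.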

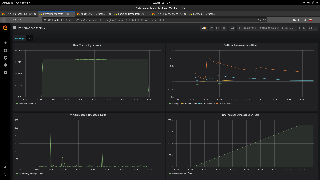

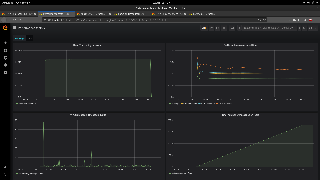

Results can be accessed under the following links:

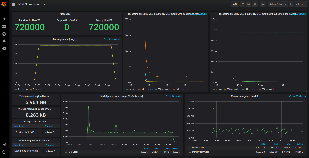

HV-VES Performance test results

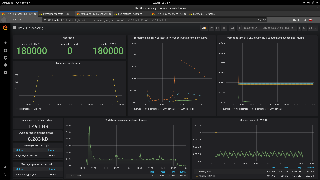

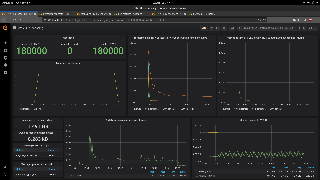

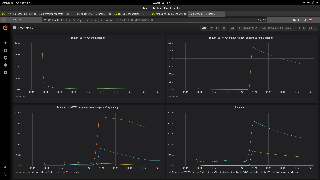

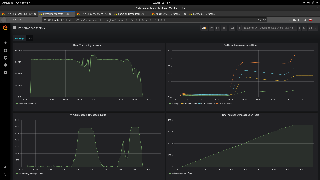

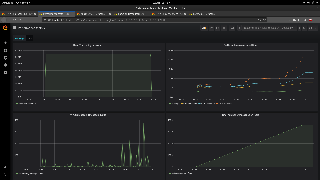

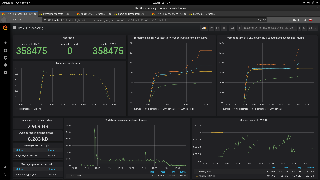

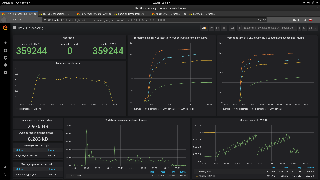

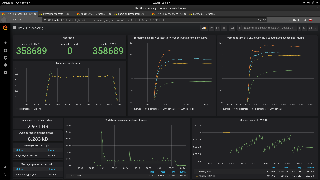

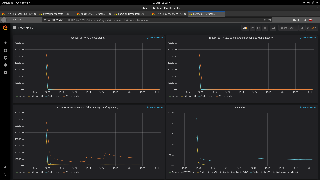

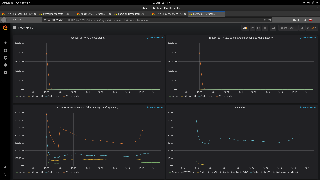

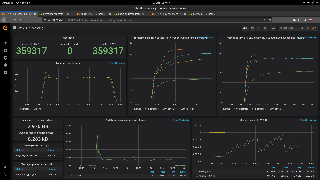

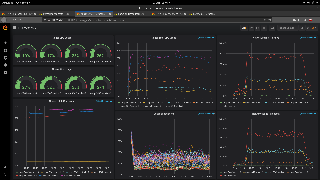

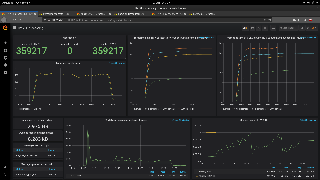

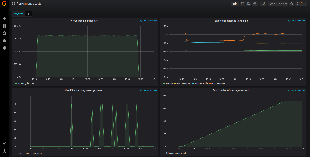

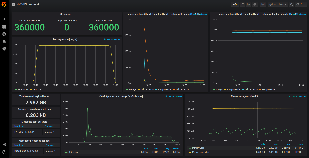

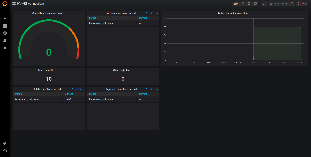

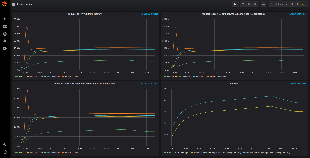

With DMaaP Kafka

Conditions

...

Raw results data with screenshots can be found in the following files:

- Series 1 - results_series_1_with_dmaap.zip

- Series 2 - results_series_2_with_dmaap.zip

Test Results - series 1

The tables below show the test results across a wide range of container counts.

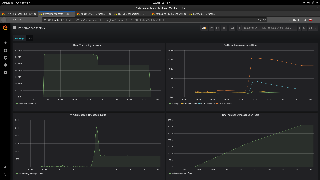

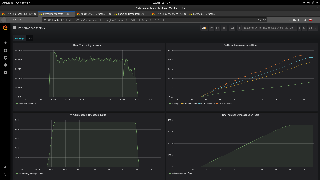

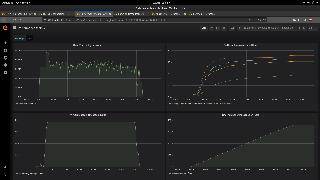

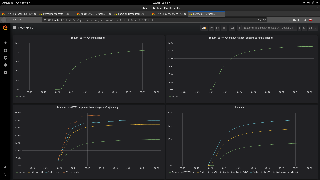

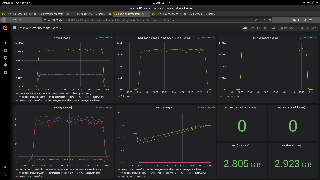

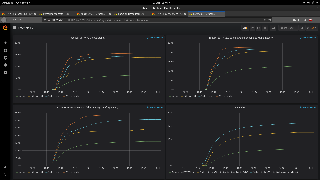

Test Results - series 2


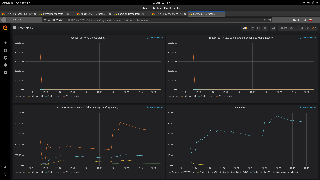

No DMaaP Kafka SetUp

...

Modify the scripts in tools/performance/cloud to match the names used in your deployments, as described in the previous step. Here is a diff file (you may need to adapt it to the current state of the code):

Go to tools/performance/cloud and reboot the environment:

    ./reboot-test-environment.sh -v

Now you are ready to run the test.

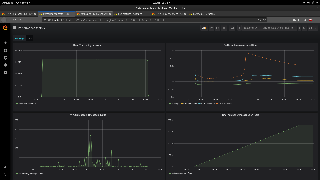

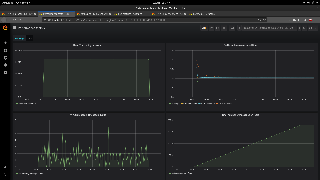

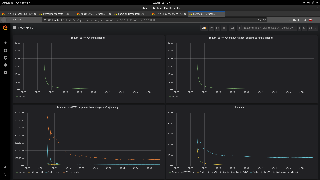

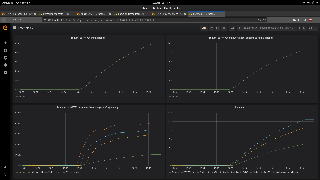

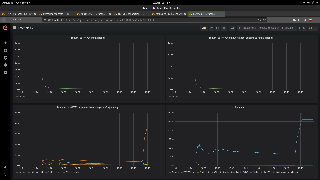

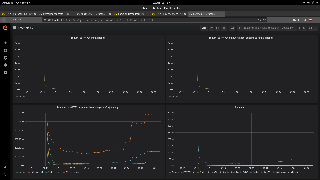

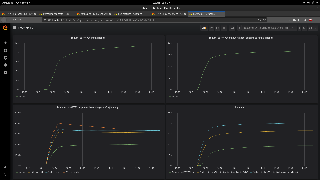

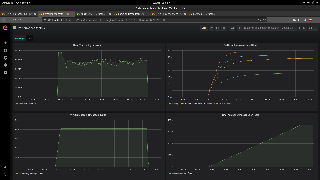

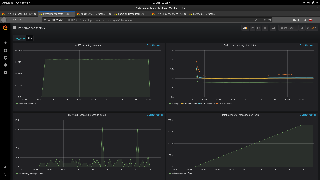

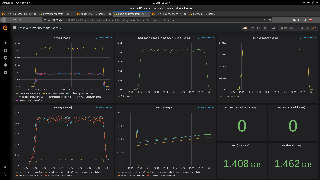

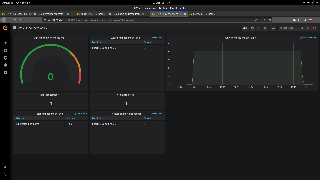

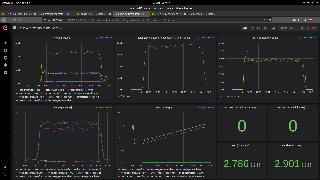

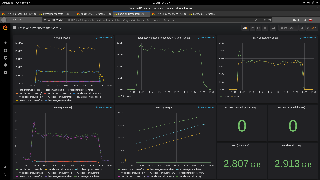

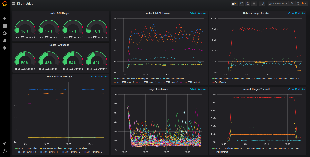

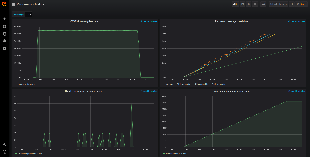

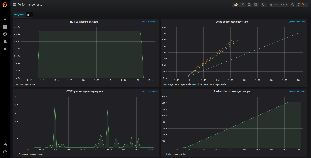

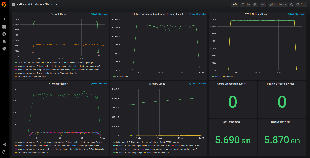

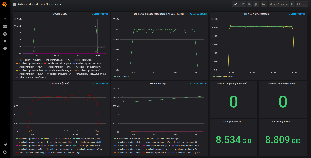

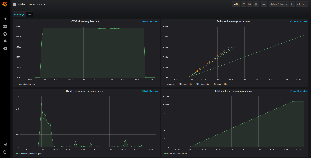

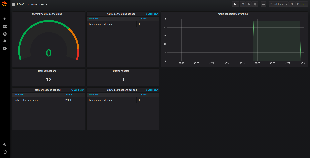

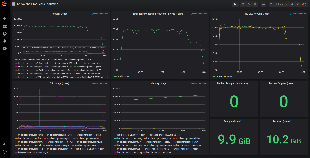

Without DMaaP Kafka

Conditions

To gather tcpdump data, another container was added to the hv-ves deployment in Kubernetes and to producer-pod.yaml (command to get the tcpdump data file: kubectl cp -n onap <pod-name>:/tcpdump.pcap -c tcpdump ./<pod-name>.pcap):

    ...
    containers:
      ...
      <<default containers>>
      ...
      - name: tcpdump
        image: onap-dev-local.esisoj70.emea.nsn-net.net/rjanecze/my-tcpdump:1.0.7
      ...


Tests were performed with the following configuration:

...

Raw results data with screenshots can be found in the following files:

- Series 1 - results_series_1.zip

- Series 2 - results_series_2.zip

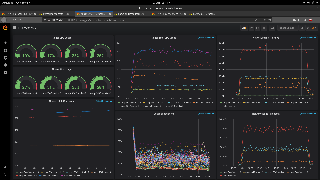

To see custom Kafka metrics you may want to change kafka-and-producers.json (located in HV-VES project directory: tools/performance/cloud/grafana/dashboards) to

...

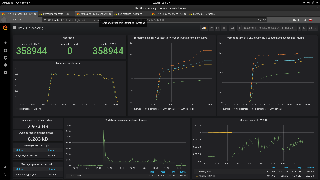

The tables below show the test results across a wide range of container counts.

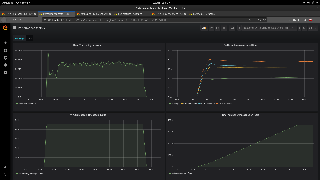

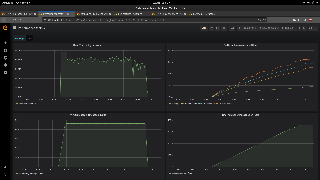

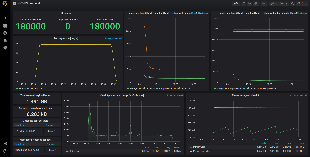

Test results - series 2

The tables below show the test results across a wide range of container counts.