Environment and Resources

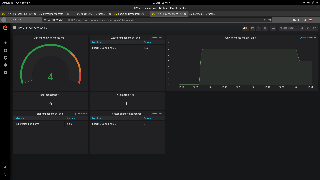

A Kubernetes cluster with 4 worker nodes, sharing the hardware configuration shown in the table below, is deployed in the OpenStack cloud operating system. The test components, packaged as Docker containers, are then deployed on the Kubernetes cluster.


Test architecture

Client tests are conducted in the following architecture:


- HV-VES Client - produces a high volume of events for processing.

- Processing Consumer - consumes events from Kafka topics and creates performance metrics.

- Offset Consumer - reads Kafka offsets.

- Prometheus - sends requests for performance metrics to HV-VES, Processing Consumer and Offset Consumer, provides data to Grafana.

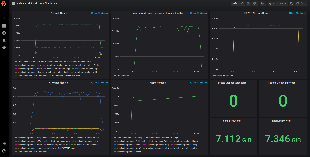

- Grafana - provides analytics and their visualization.

The link between HV-VES Client and HV-VES is secured with TLS (the provided scripts generate certificates and place them in the appropriate containers).

| Info |

|---|

| Note: In the Without DMaaP Kafka tests, the DMaaP/Kafka service was replaced with wurstmeister/kafka. |

Environment and Resources

A Kubernetes cluster with 4 worker nodes, sharing the hardware configuration shown in the table below, is deployed in the OpenStack cloud operating system. The test components, packaged as Docker containers, are then deployed on the Kubernetes cluster.

| Configuration | | |
|---|---|---|
| CPU | Model | Intel(R) Xeon(R) CPU E5-2680 v4 |
| | No. of cores | 24 |
| | CPU clock speed [GHz] | 2.40 |
| Total RAM [GB] | | 62.9 |

Network Performance

Pod measurement method

To check cluster network performance, tests using iperf3 were run. Iperf is a tool for measuring the maximum bandwidth on IP networks; it runs in two modes: server and client. We used the Docker image networkstatic/iperf3.

The following deployment creates one pod with iperf in server mode on one worker, and one pod with an iperf client on each worker.

| Code Block |

|---|

| title | Deployment |

|---|

| collapse | true |

|---|

|

apiVersion: apps/v1
kind: Deployment
metadata:
  name: iperf3-server
  namespace: onap
  labels:
    app: iperf3-server
spec:
  replicas: 1
  selector:
    matchLabels:
      app: iperf3-server
  template:
    metadata:
      labels:
        app: iperf3-server
    spec:
      containers:
      - name: iperf3-server
        image: networkstatic/iperf3
        args: ['-s']
        ports:
        - containerPort: 5201
          name: server
---
apiVersion: v1
kind: Service
metadata:
  name: iperf3-server
  namespace: onap
spec:
  selector:
    app: iperf3-server
  ports:
  - protocol: TCP
    port: 5201
    targetPort: server
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: iperf3-clients
  namespace: onap
  labels:
    app: iperf3-client
spec:
  selector:
    matchLabels:
      app: iperf3-client
  template:
    metadata:
      labels:
        app: iperf3-client
    spec:
      containers:
      - name: iperf3-client
        image: networkstatic/iperf3
        command: ['/bin/sh', '-c', 'sleep infinity']
|

...

Average speed (without worker 0): 119 MBytes/sec
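To put the measured average in context, it can be converted to a line rate. The sketch below assumes the iperf3 convention that 1 MByte equals 2^20 bytes; the helper name is illustrative and not part of the test tooling.

```python
# Convert an iperf3 throughput reading to gigabits per second.
# Assumption: iperf3 reports "MBytes" as 2**20 bytes.

BYTES_PER_MBYTE = 2**20


def mbytes_per_sec_to_gbit_per_sec(mbytes_per_sec: float) -> float:
    """Return the equivalent rate in Gbit/s (10**9 bits per second)."""
    bits_per_sec = mbytes_per_sec * BYTES_PER_MBYTE * 8
    return bits_per_sec / 1e9


if __name__ == "__main__":
    # 119 MBytes/sec is the average reported above (worker 0 excluded).
    print(f"{mbytes_per_sec_to_gbit_per_sec(119):.2f} Gbit/s")
```

Under that assumption the average works out to roughly 1 Gbit/s, which would be consistent with a 1 Gbit/s link between workers, although the measurement alone does not confirm the link type.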

Test Setup

Preconditions

- Installed ONAP (Frankfurt)

- Plain TCP connection between HV-VES and clients (default configuration)

- Metric port exposed on HV-VES service

To reach the metrics endpoint in HV-VES, add the following lines to the ports section of the HV-VES service configuration file:

| Code Block |

|---|

| title | Lines to add to ports section of HV-VES service configuration file |

|---|

|

- name: port-t-6060
  port: 6060
  protocol: TCP
  targetPort: 6060
|

Before starting the tests, download the Docker image of the producer, which is available here:

| View file |

|---|

| name | hv-collector-go-client.tar.gz |

|---|

| height | 250 |

|---|

|

To load the image locally, use the command:

| Code Block |

|---|

docker load < hv-collector-go-client.tar.gz

|

Modify tools/performance/cloud/producer-pod.yaml file to use the above image and set imagePullPolicy to IfNotPresent:

| Code Block |

|---|

|

...
spec:
  containers:
  - name: hv-collector-producer
    image: onap/org.onap.dcaegen2.collectors.hv-ves.hv-collector-go-client:latest
    imagePullPolicy: IfNotPresent
    volumeMounts:
...
|

To execute the performance tests, run functions from the shell script cloud-based-performance-test.sh, located in the HV-VES project directory: ~/tools/performance/cloud/

First we have to generate certificates in ~/tools/ssl folder by using gen_certs. This step only needs to be performed during the first test setup (or if the generated files have been deleted).

| Code Block |

|---|

| title | Generating certificates |

|---|

|

./cloud-based-performance-test.sh gen_certs |

Then we call setup in order to send certificates to HV-VES, and deploy Consumers, Prometheus, Grafana and create their ConfigMaps.

| Code Block |

|---|

| title | Setting up the test environment |

|---|

|

./cloud-based-performance-test.sh setup |

After completing previous steps we can call the start function, which provides Producers and starts the test.

| Code Block |

|---|

|

./cloud-based-performance-test.sh start |

For the start function we can use optional arguments:

| Argument | Description (default value) |
|---|---|
| --load | whether the test should keep the defined number of producers running until the script is interrupted (false) |
| --containers | number of producer containers to create (1) |
| --properties-file | path to the file with benchmark properties (./test.properties) |
| --retention-time-minutes | retention time of messages in Kafka, in minutes (60) |

Example invocations of test start:

| Code Block |

|---|

| title | Starting performance test with single producers creation |

|---|

|

./cloud-based-performance-test.sh start --containers 10 |

The command above starts a test that creates 10 producers, each of which sends the number of messages defined in test.properties once.

| Code Block |

|---|

| title | Starting performance test with constant messages load |

|---|

|

./cloud-based-performance-test.sh start --load true --containers 10 --retention-time-minutes 30 |

This invocation starts a load test, meaning the script will try to keep the number of running containers at 10, with a Kafka message retention of 30 minutes.
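When the test is launched from automation rather than by hand, the optional arguments can be assembled programmatically. The following is a minimal sketch; the function name `build_start_command` is hypothetical, and only the script name and flags documented above are used.

```python
from typing import List, Optional


def build_start_command(
    load: Optional[bool] = None,
    containers: Optional[int] = None,
    properties_file: Optional[str] = None,
    retention_time_minutes: Optional[int] = None,
) -> List[str]:
    """Build the argv for the start function; None leaves the script default in place."""
    argv = ["./cloud-based-performance-test.sh", "start"]
    if load is not None:
        argv += ["--load", "true" if load else "false"]
    if containers is not None:
        argv += ["--containers", str(containers)]
    if properties_file is not None:
        argv += ["--properties-file", properties_file]
    if retention_time_minutes is not None:
        argv += ["--retention-time-minutes", str(retention_time_minutes)]
    return argv


if __name__ == "__main__":
    # Reproduces the constant-load invocation shown above.
    print(" ".join(build_start_command(load=True, containers=10, retention_time_minutes=30)))
```

The returned list could be passed to `subprocess.run` with the working directory set to the script's folder; that step is left out here because it requires a configured cluster.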

The test.properties file contains the producer and consumer configuration and allows the following properties to be set:

| Producer | |
|---|---|
| hvVesAddress | HV-VES address (dcae-hv-ves-collector.onap:6061) |
| client.count | Number of clients per pod (1) |
| message.size | Size of a single message in bytes (16384) |
| message.count | Number of messages to be sent by each client (1000) |
| message.interval | Interval between messages in milliseconds (1) |

| Certificates paths | |
|---|---|
| client.cert.path | Path to cert file (/ssl/client.p12) |
| client.cert.pass.path | Path to cert's pass file (/ssl/client.pass) |

| Consumer | |
|---|---|
| kafka.bootstrapServers | Address of Kafka service to consume from (message-router-kafka:9092) |
| kafka.topics | Kafka topics to subscribe to (HV_VES_PERF3GPP) |
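As an illustration of how these properties combine, the sketch below parses a properties file and computes the payload volume one producer pod sends per run. The key=value layout and the sample content are assumptions built from the values listed above; the real file may differ.

```python
from typing import Dict

# Hypothetical file content built from the values listed above;
# the key=value layout is an assumption about the file format.
SAMPLE_PROPERTIES = """\
# Producer
hvVesAddress=dcae-hv-ves-collector.onap:6061
client.count=1
message.size=16384
message.count=1000
message.interval=1
# Consumer
kafka.bootstrapServers=message-router-kafka:9092
kafka.topics=HV_VES_PERF3GPP
"""


def parse_properties(text: str) -> Dict[str, str]:
    """Parse simple key=value lines, skipping blank lines and '#' comments."""
    props: Dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def bytes_per_pod(props: Dict[str, str]) -> int:
    """Total payload bytes one producer pod sends in a single run."""
    return (
        int(props["client.count"])
        * int(props["message.count"])
        * int(props["message.size"])
    )


if __name__ == "__main__":
    props = parse_properties(SAMPLE_PROPERTIES)
    print(bytes_per_pod(props))
```

With the values shown, one pod sends 1 x 1000 x 16384 bytes of payload per run.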

Results can be accessed under following links:

HV-VES Performance test results

With DMaaP Kafka

Conditions

Tests were performed with 5 repetitions for each configuration shown in the table below.

| Number of producers | Messages per producer | Payload size [B] | Interval [ms] |
|---|---|---|---|
| 2 | 90000 | 8192 | 10 |
| 4 | 90000 | 8192 | 10 |
| 6 | 60000 | 8192 | 10 |
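The expected message totals and the nominal incoming data rate follow directly from the configurations above. The sketch below works through the arithmetic; it assumes one client per producer and that each client sends exactly one message per interval, and the type and function names are illustrative.

```python
from typing import List, NamedTuple


class Scenario(NamedTuple):
    producers: int
    messages_per_producer: int
    payload_bytes: int
    interval_ms: int


# Rows of the conditions table above.
SCENARIOS: List[Scenario] = [
    Scenario(2, 90000, 8192, 10),
    Scenario(4, 90000, 8192, 10),
    Scenario(6, 60000, 8192, 10),
]


def expected_total_messages(s: Scenario) -> int:
    """Number of messages HV-VES should receive if nothing is lost."""
    return s.producers * s.messages_per_producer


def nominal_rate_mb_per_s(s: Scenario) -> float:
    """Nominal payload rate in MB/s (10**6 bytes), one message per interval per producer."""
    messages_per_second = s.producers * 1000 / s.interval_ms
    return messages_per_second * s.payload_bytes / 1e6


if __name__ == "__main__":
    for s in SCENARIOS:
        print(s.producers, expected_total_messages(s), round(nominal_rate_mb_per_s(s), 2))
```

Under these assumptions the expected totals are 180000, 360000 and 360000 messages, and the nominal rates are about 1.64, 3.28 and 4.92 MB/s, which is roughly in line with the peak incoming data rates reported in the results tables further down. The difference column in those tables is the expected total minus the number of messages processed (for example, 360000 - 359317 = 683).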

Raw results data

Raw results data with screenshots can be found in following files:

Test Results - series 1

| Expand |

|---|

| title | Click here to see results... |

|---|

|

The tables below show the test results across a wide range of container counts.

...

...

...

...

...

...

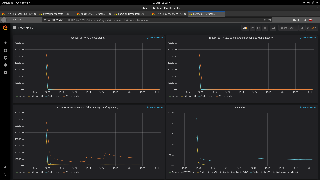

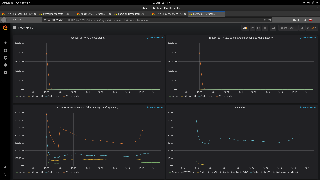


...

...

...

...

...

...

...

...

...

Test Results - series 2

Without DMaaP Kafka

Conditions

Environment was prepared using: Performance test without DMaaP - instruction.

To gather tcpdump data another container was added to hv-ves deployment in kubernetes and producer-pod.yaml (command to get tcpdump data file: kubectl cp -n onap <pod-name>:/tcpdump.pcap -c tcpdump ./<pod-name>.pcap):

...

| title | Tcpdump container |

|---|

| collapse | true |

|---|

...

| 5.8 | 0.97 | |
| 6 | 358494 | 1506 | 7080 | 20182 | 4.7 | 4500 | 4.5 | 0.97 | |
| 6 | 359244 | 756 | 4900 | 17231 | 4.9 | 1900 | 3.9 | 0.93 | |
| 6 | 358944 | 1056 | 6150 | 17200 | 4.9 | 4800 | 5 | 0.97 | |
| 6 | 358689 | 1311 | 5410 | 17202 | 4.9 | 4100 | 5 | 0.96 | |

|

Test Results - series 2

| Expand |

|---|

| title | Click here to see results... |

|---|

|

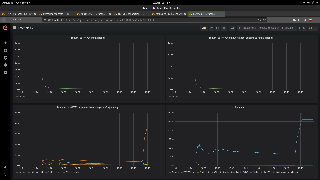

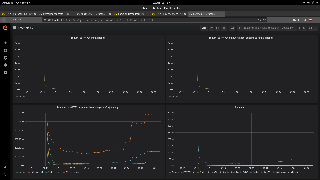

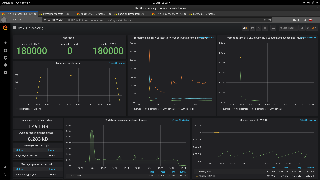

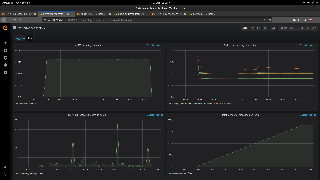

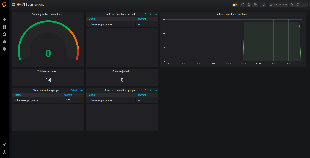

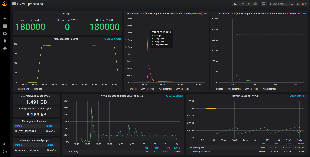

| NUMBER OF PRODUCERS | TOTAL MESSAGES PROCESSED | DIFFERENCE BETWEEN ALL MESSAGES AND SENT TO HV-VES | AVERAGE PROCESSING TIME IN HV-VES WITHOUT ROUTING [ms] | AVERAGE LATENCY TO HV-VES OUTPUT WITH ROUTING [ms] | PEAK INCOMING DATA RATE [MB/s] | PEAK PROCESSING MESSAGE QUEUE SIZE | PEAK CPU LOAD [%] | PEAK MEMORY USAGE [GB] | RESULTS PRESENTED IN GRAFANA |

|---|

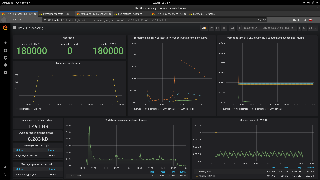

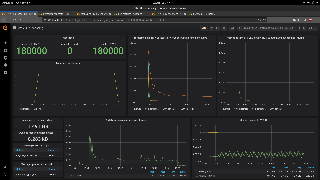

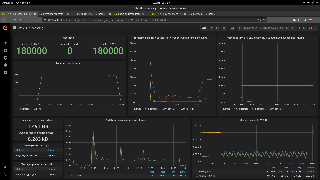

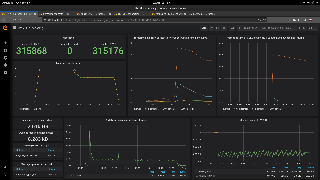

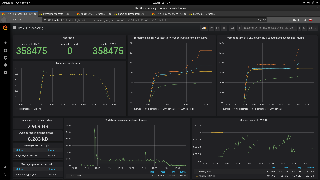

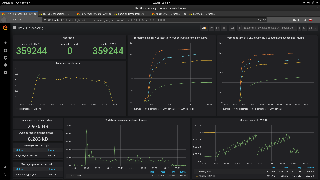

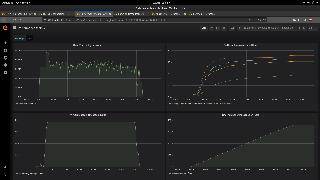

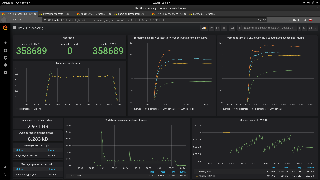

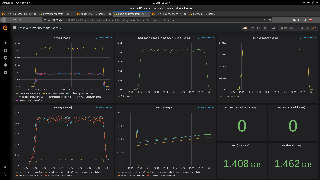

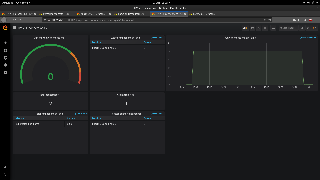

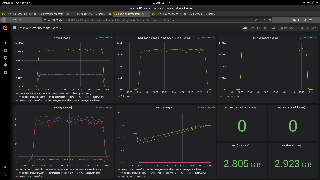

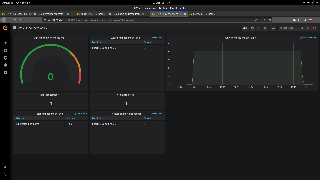

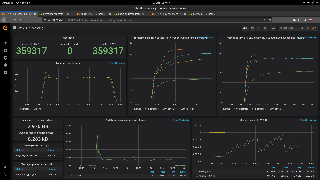

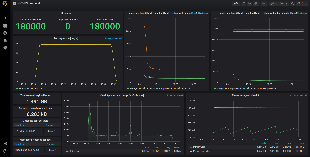

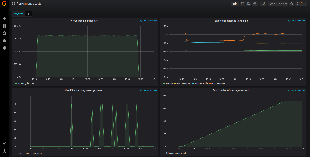

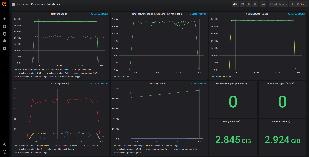

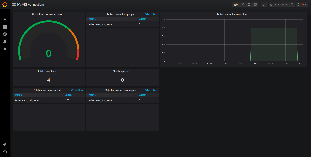

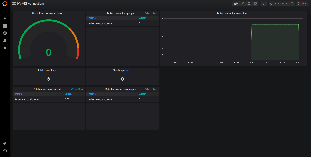

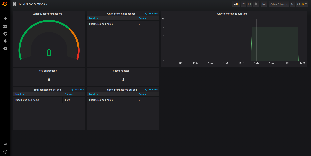

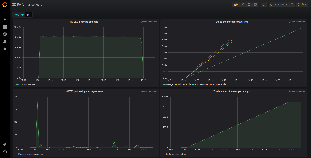

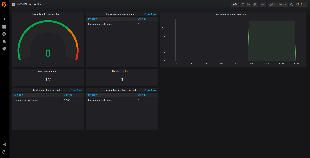

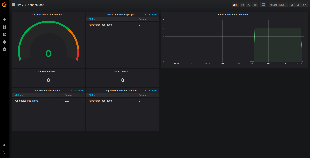

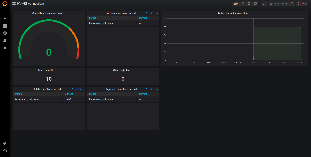

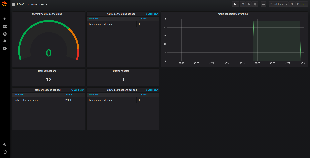

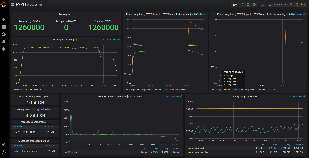

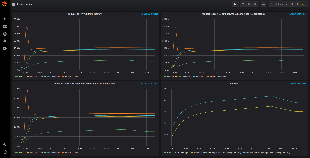

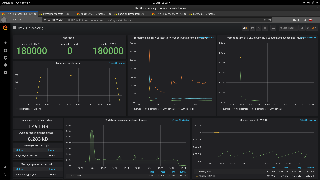

| 2 | 180000 | 0 | 0.026 | 8.9 | 1.6 | 11 | 3 | 0.35 | | | 2 | 180000 | 0 | 0.026 | 11.3 | 1.6 | 23 | 3.2 | 0.35 |  Image Added Image Added |

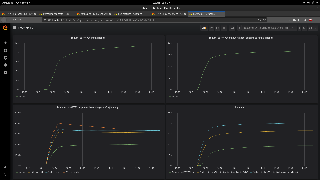

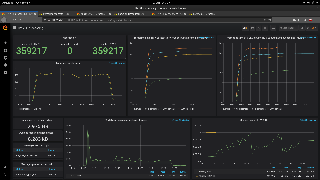

| NUMBER OF PRODUCERS | TOTAL MESSAGES PROCESSED | DIFFERENCE BETWEEN ALL MESSAGES AND SENT TO HV-VES | AVERAGE PROCESSING TIME IN HV-VES WITHOUT ROUTING [s] | AVERAGE LATENCY TO HV-VES OUTPUT WITH ROUTING [ms] | PEAK INCOMING DATA RATE [MB/s] | PEAK PROCESSING MESSAGE QUEUE SIZE | PEAK CPU LOAD [%] | PEAK MEMORY USAGE [GB] | RESULTS PRESENTED IN GRAFANA |

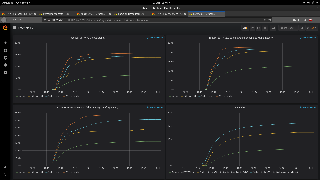

|---|

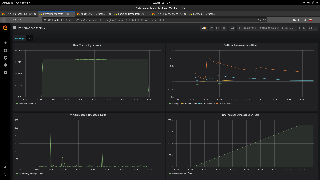

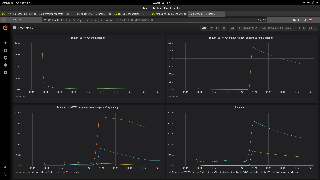

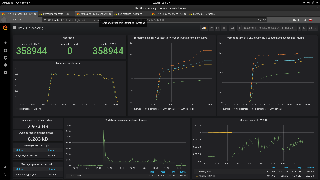

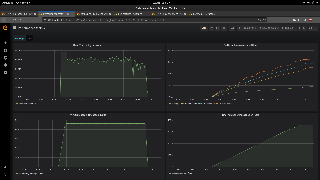

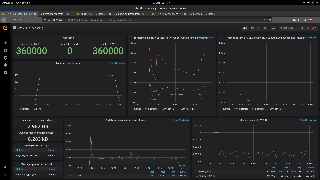

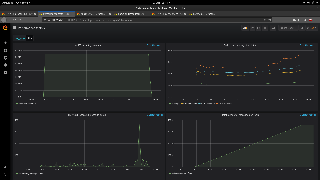

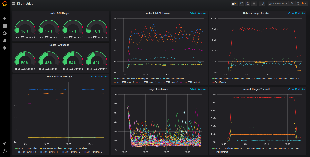

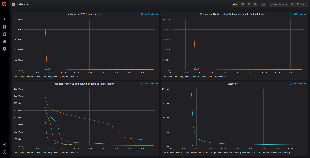

| 4 | 360000 | 0 | 0.022 | 41.3 | 3.2 | 130 | 4 | 0.35 |  Image Added Image Added Image Added Image Added Image Added Image Added | | 4 | 360000 | 0 | 0.023 | 40.7 | 3.2 | 370 | 4.2 | 0.35 | |

| NUMBER OF PRODUCERS | TOTAL MESSAGES PROCESSED | DIFFERENCE BETWEEN ALL MESSAGES AND SENT TO HV-VES | AVERAGE PROCESSING TIME IN HV-VES WITHOUT ROUTING [ms] | AVERAGE LATENCY TO HV-VES OUTPUT WITH ROUTING [ms] | PEAK INCOMING DATA RATE [MB/s] | PEAK PROCESSING MESSAGE QUEUE SIZE | PEAK CPU LOAD [%] | PEAK MEMORY USAGE [GB] | RESULTS PRESENTED IN GRAFANA |
|---|---|---|---|---|---|---|---|---|---|
| 6 | 359317 | 683 | 6240 | 15965 | 4.8 | 4500 | 4.3 | 1 | |
| 6 | 359217 | 783 | 6490 | 16834 | 4.8 | 4200 | 5.8 | 1 | |

|

No DMaaP Kafka SetUp

Install Kafka Docker on Kubernetes

(based on: ultimate-guide-to-installing-kafka-docker-on-kuber)

Create config maps

Config maps are required by zookeeper and kafka-broker deployments.

| Code Block |

|---|

| title | Create kafka-config-map |

|---|

|

kubectl -n onap create cm kafka-config-map --from-file=kafka_server_jaas.conf |

| Code Block |

|---|

| title | kafka_server_jaas.conf |

|---|

| collapse | true |

|---|

|

KafkaServer {
  org.apache.kafka.common.security.plain.PlainLoginModule required
  username="admin"
  password="admin_secret"
  user_admin="admin_secret";
};
Client {
  org.apache.zookeeper.server.auth.DigestLoginModule required
  username="kafka"
  password="kafka_secret";
};
|

...

| Code Block |

|---|

| title | Create zk-config-map |

|---|

|

kubectl -n onap create cm zk-config-map --from-file=zk_server_jaas.conf |

| Code Block |

|---|

| title | zk_server_jaas.conf |

|---|

| collapse | true |

|---|

|

Server {
  org.apache.zookeeper.server.auth.DigestLoginModule required
  user_kafka="kafka_secret";
};
|

Create deployments

| Code Block |

|---|

| title | Create zookeeper deployment |

|---|

|

kubectl -n onap create -f zookeeper.yml |

| Code Block |

|---|

| language | yml |

|---|

| title | zookeeper.yml |

|---|

| collapse | true |

|---|

|

---
kind: Deployment
apiVersion: extensions/v1beta1
metadata:
  name: zookeeper-deployment-1
spec:
  template:
    metadata:
      labels:
        app: zookeeper-1
    spec:
      containers:
      - name: zoo1
        image: digitalwonderland/zookeeper
        volumeMounts:
        - name: config-volume
          mountPath: "/home/ubuntu/kafkadepl/myconfig/zk_server_jaas.conf"
          subPath: zk_server_jaas.conf
        ports:
        - containerPort: 2181
        env:
        - name: ZOOKEEPER_ID
          value: "1"
        - name: ZOOKEEPER_SERVER_1
          value: zoo1
        - name: KAFKA_OPTS
          value: "-Djava.security.auth.login.config=/home/ubuntu/kafkadepl/myconfig/zk_server_jaas.conf -Dzookeeper.kerberos.removeHostFromPrincipal=true -Dzookeeper.kerberos.removeRealmFromPrincipal=true -Dzookeeper.authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider -Dzookeeper.requireClientAuthScheme=sasl"
        - name: SERVER_JVMFLAGS
          value: "-Djava.security.auth.login.config=/home/ubuntu/kafkadepl/myconfig/zk_server_jaas.conf -Dzookeeper.skipACL=yes"
        - name: ZOOKEEPER_AUTHPROVIDER_1
          value: "org.apache.zookeeper.server.auth.SASLAuthenticationProvider"
        - name: ZOOKEEPER_REQUIRECLIENTAUTHSCHEME
          value: "SASL"
      volumes:
      - name: config-volume
        configMap:
          name: zk-config-map
---
apiVersion: v1
kind: Service
metadata:
  name: zoo1
  labels:
    app: zookeeper-1
spec:
  ports:
  - name: client
    port: 2181
    protocol: TCP
  - name: follower
    port: 2888
    protocol: TCP
  - name: leader
    port: 3888
    protocol: TCP
  selector:
    app: zookeeper-1

|

...

| Code Block |

|---|

| title | Create kafka-service |

|---|

|

kubectl -n onap create -f kafka-service.yml |

| Code Block |

|---|

| language | yml |

|---|

| title | kafka-service.yml |

|---|

| collapse | true |

|---|

|

---
apiVersion: v1
kind: Service
metadata:
  name: kafka-service
  labels:
    name: kafka
spec:
  ports:
  - port: 9092
    name: kafka-port
    protocol: TCP
  selector:
    app: kafka
    id: "0"
  type: LoadBalancer
|

...

| Code Block |

|---|

|

kubectl -n onap create -f kafka-broker.yml |

| Code Block |

|---|

| language | yml |

|---|

| title | kafka-broker.yml |

|---|

| collapse | true |

|---|

|

---
kind: Deployment
apiVersion: extensions/v1beta1
metadata:
  name: kafka-broker0
spec:
  template:
    metadata:
      labels:
        app: kafka
        id: "0"
    spec:
      containers:
      - name: kafka
        image: wurstmeister/kafka
        volumeMounts:
        - name: config-volume
          mountPath: "/home/ubuntu/kafkadepl/myconfig/kafka_server_jaas.conf"
          subPath: kafka_server_jaas.conf
        ports:
        - containerPort: 9092
        env:
        - name: KAFKA_ADVERTISED_PORT
          value: "30718"
        - name: KAFKA_ADVERTISED_HOST_NAME
          value: 10.43.155.122
        - name: KAFKA_ZOOKEEPER_CONNECT
          value: "zoo1:2181"
        - name: KAFKA_BROKER_ID
          value: "0"
        - name: KAFKA_DEFULT_REPLICATION_FACTOR
          value: "3"
        - name: KAFKA_AUTO_CREATE_TOPICS_ENABLE
          value: "true"
        - name: KAFKA_DELETE_TOPIC_ENABLE
          value: "true"
        - name: KAFKA_LISTENERS
          value: "INTERNAL_SASL_PLAINTEXT://0.0.0.0:9092"
        - name: KAFKA_ADVERTISED_LISTENERS
          value: "INTERNAL_SASL_PLAINTEXT://kafka-service:9092"
        - name: KAFKA_LISTENER_SECURITY_PROTOCOL_MAP
          value: "INTERNAL_SASL_PLAINTEXT:SASL_PLAINTEXT,EXTERNAL_SASL_PLAINTEXT:SASL_PLAINTEXT"
        - name: KAFKA_INTER_BROKER_LISTENER_NAME
          value: "INTERNAL_SASL_PLAINTEXT"
        - name: KAFKA_SASL_ENABLED_MECHANISMS
          value: "PLAIN"
        - name: KAFKA_SASL_MECHANISM_INTER_BROKER_PROTOCOL
          value: "PLAIN"
        - name: KAFKA_AUTHORIZER_CLASS_NAME
          value: "kafka.security.authorizer.AclAuthorizer"
        - name: KAFKA_ZOOKEEPER_SET_ACL
          value: "true"
        - name: KAFKA_OPTS
          value: "-Djava.security.auth.login.config=/home/ubuntu/kafkadepl/myconfig/kafka_server_jaas.conf"
        - name: KAFKA_ALLOW_EVERYONE_IF_NO_ACL_FOUND
          value: "true"
      volumes:
      - name: config-volume
        configMap:
          name: kafka-config-map

|
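Because the broker above only exposes a SASL_PLAINTEXT listener with the PLAIN mechanism, any client connecting to it needs matching settings. The sketch below renders a properties file for a Java-based Kafka client using the standard Kafka client configuration keys. It is an illustration only: the helper names are hypothetical, the credentials are the ones from kafka_server_jaas.conf, and whether the test tools read their Kafka settings from such a file is an assumption.

```python
from typing import Dict


def sasl_plain_client_properties(bootstrap: str, username: str, password: str) -> Dict[str, str]:
    """Return Kafka client settings for a SASL_PLAINTEXT listener using the PLAIN mechanism."""
    jaas = (
        "org.apache.kafka.common.security.plain.PlainLoginModule required "
        f'username="{username}" password="{password}";'
    )
    return {
        "bootstrap.servers": bootstrap,
        "security.protocol": "SASL_PLAINTEXT",
        "sasl.mechanism": "PLAIN",
        "sasl.jaas.config": jaas,
    }


def render_properties(props: Dict[str, str]) -> str:
    """Render the settings as key=value lines."""
    return "\n".join(f"{key}={value}" for key, value in props.items()) + "\n"


if __name__ == "__main__":
    # Service name and credentials taken from the configuration shown above.
    props = sasl_plain_client_properties("kafka-service:9092", "admin", "admin_secret")
    print(render_properties(props), end="")
```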

Verify that pods are up and running

| Code Block |

|---|

| title | Verify zookeeper and broker |

|---|

|

kubectl -n onap get pods | grep 'zookeeper-deployment-1\|broker0' |

| Code Block |

|---|

| title | Verify kafka service |

|---|

|

kubectl -n onap get svc | grep kafka-service |
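The pod check can also be scripted by reading the JSON form of the same listing (`kubectl -n onap get pods -o json`). The sketch below only parses such output; the sample document and the pod name suffixes are made up for illustration, and the function names are hypothetical.

```python
import json
from typing import Dict, List

# Trimmed, hypothetical sample of `kubectl -n onap get pods -o json` output;
# the pod name suffixes are invented for illustration.
SAMPLE = """
{"items": [
  {"metadata": {"name": "zookeeper-deployment-1-abc"}, "status": {"phase": "Running"}},
  {"metadata": {"name": "kafka-broker0-def"}, "status": {"phase": "Pending"}},
  {"metadata": {"name": "unrelated-pod"}, "status": {"phase": "Running"}}
]}
"""


def pod_phases(pods_json: str, prefixes: List[str]) -> Dict[str, str]:
    """Map pod name to phase for pods whose name starts with one of the prefixes."""
    pods = json.loads(pods_json)["items"]
    return {
        pod["metadata"]["name"]: pod["status"]["phase"]
        for pod in pods
        if any(pod["metadata"]["name"].startswith(p) for p in prefixes)
    }


def not_running(phases: Dict[str, str]) -> List[str]:
    """Return the names of pods that are not in the Running phase."""
    return sorted(name for name, phase in phases.items() if phase != "Running")


if __name__ == "__main__":
    phases = pod_phases(SAMPLE, ["zookeeper-deployment-1", "kafka-broker0"])
    print(not_running(phases))
```

Note that the Running phase alone does not guarantee that every container in the pod is ready, so this is a coarse check.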

If you need to change some variable or anything in a yml file, delete the current deployment, for example:

| Code Block |

|---|

| title | Delete broker deployment |

|---|

|

kubectl -n onap delete deploy kafka-broker0 |

And after modifying the file create a new deployment as described above.

Run the test

Modify the tools/performance/cloud scripts to match the names used in your deployments, as described in the previous step. Here is a diff file (you may need to adapt it to the current state of the code):

tools.diff

Go to tools/performance/cloud and reboot the environment:

| Code Block |

|---|

|

./reboot-test-environment.sh -v |

Now you are ready to run the test.

Without DMaaP Kafka

Conditions

Tests were performed with the following configuration:

| Messages per producer | Payload size [B] | Interval [ms] |
|---|---|---|
| 90000 | 8192 | 10 |

Raw results data

Raw results data with screenshots can be found in following files:

To see custom Kafka metrics you may want to change kafka-and-producers.json (located in HV-VES project directory: tools/performance/cloud/grafana/dashboards) to

...

| Expand |

|---|

| title | Click here to see results... |

|---|

|

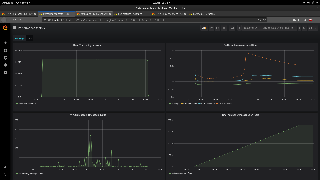

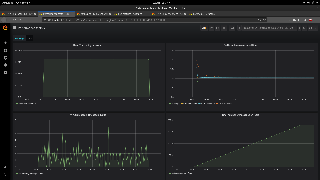

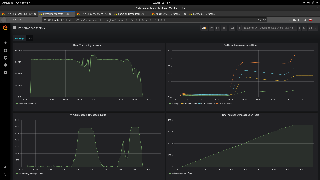

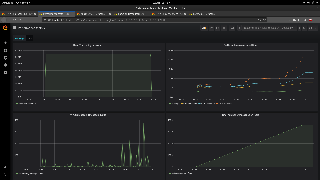

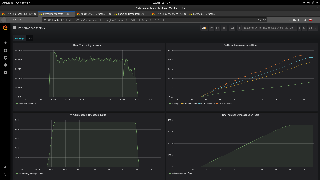

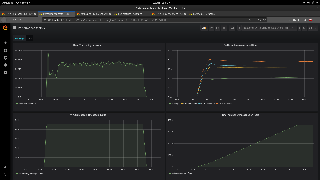

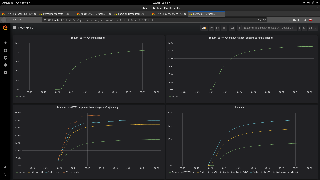

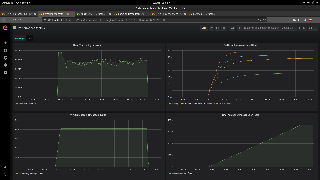

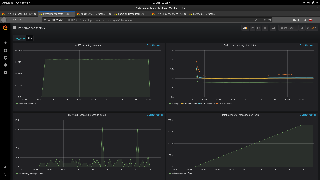

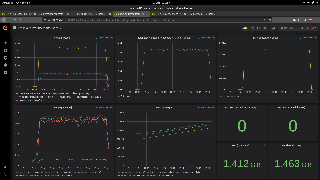

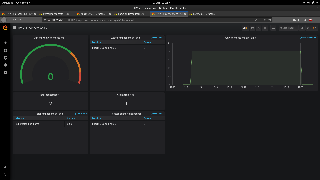

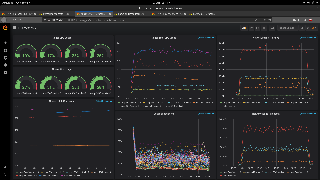

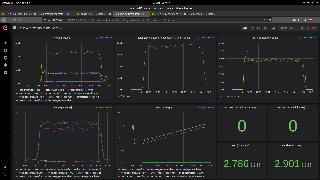

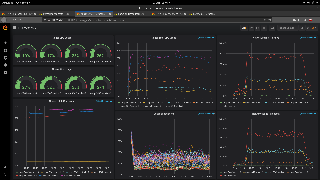

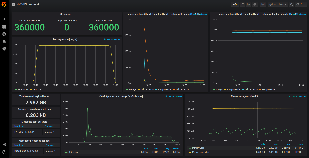

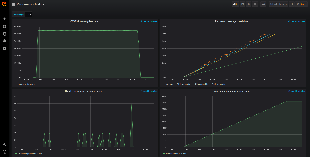

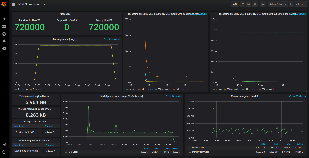

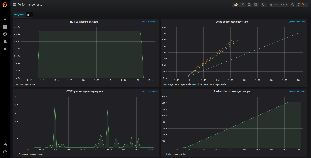

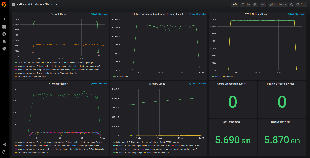

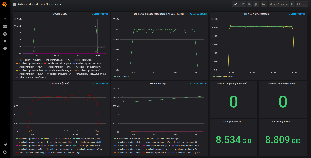

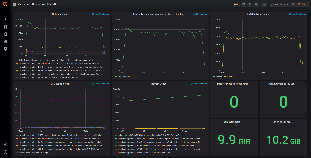

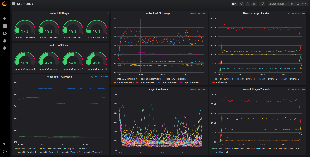

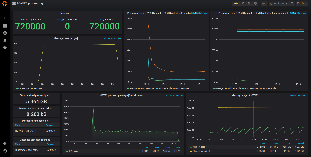

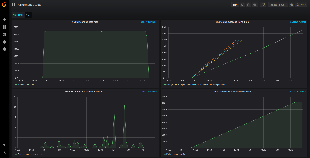

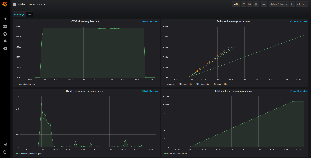

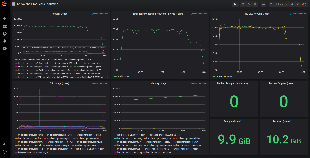

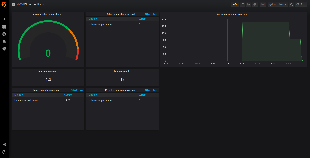

Below tables show the test results across a wide range of containers' number. | NUMBER OF PRODUCERS | TOTAL MESSAGES PROCESSED | DIFFERENCE BETWEEN ALL MESSAGES AND SENT TO HV-VES | AVERAGE PROCESSING TIME IN HV-VES WITHOUT ROUTING [ms] | AVERAGE LATENCY TO HV-VES OUTPUT WITH ROUTING [ms] | PEAK INCOMING DATA RATE [MB/s] | PEAK PROCESSING MESSAGE QUEUE SIZE | PEAK CPU LOAD [%] | PEAK MEMORY USAGE [GB] | RESULTS PRESENTED IN GRAFANA |

|---|

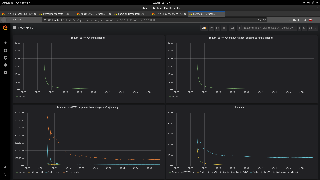

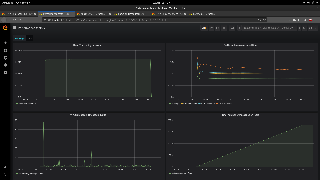

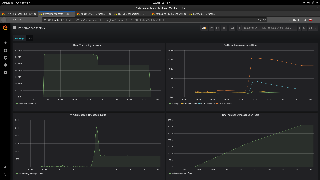

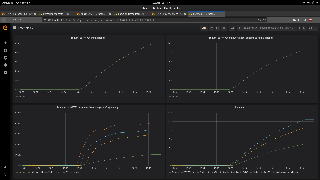

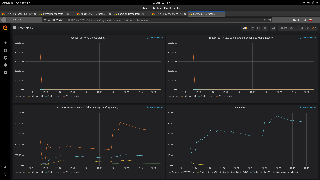

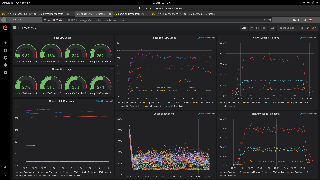

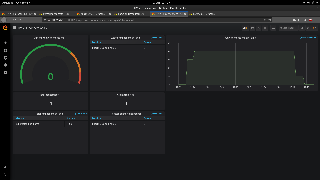

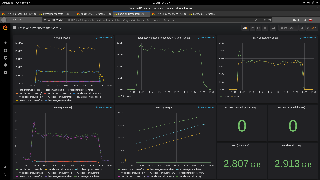

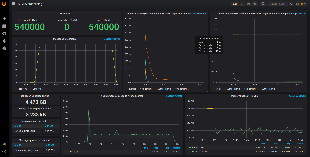

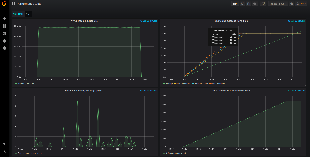

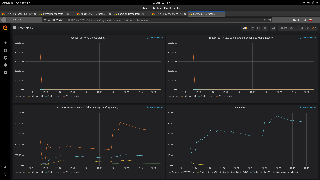

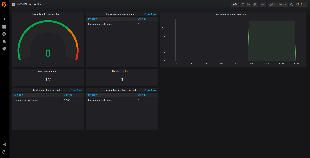

| 2 | 180000 | 0 | 0.024 | 1.5 | 1.6 | 3 | 3.5 | 0.37 | | | 4 | 360000 | 0 | 0.020 | 1.7 | 3.2 | 3 | 5.7 | 0.37 | | | 6 | 540000 | 0 | 0.02 | 2.6 | 4.8 | 24 | 6.0 | 0.37 | | | 8 | 720000 | 0 | 0.02 | 2.8 | 6.4 | 14 | 8.5 | 0.37 | | | 10 | 900000 | 0 | 0.02 | 7.3 | 8.1 | 552 | 8.5 | 0.38 | | | 12 | 10800000 | 0 | 0.02 | 78 | 9.7 | 1077 | 7.5 | 0.41 |  Image Added Image Added Image Removed Image Removed | | 14 | 12600000 | 0 | 6140 | 19000 | 13.0 | 10630 | 9.8 | 0.99 | |

|

Test results - series 2

| Expand |

|---|

| title | Click here to see results... |

|---|

|

The tables below show the test results across a wide range of container counts.

| NUMBER OF PRODUCERS | TOTAL MESSAGES PROCESSED | DIFFERENCE BETWEEN ALL MESSAGES AND SENT TO HV-VES | AVERAGE PROCESSING TIME IN HV-VES WITHOUT ROUTING [ms] | AVERAGE LATENCY TO HV-VES OUTPUT WITH ROUTING [ms] | PEAK INCOMING DATA RATE [MB/s] | PEAK PROCESSING MESSAGE QUEUE SIZE | PEAK CPU LOAD [%] | PEAK MEMORY USAGE [GB] | RESULTS PRESENTED IN GRAFANA |
|---|---|---|---|---|---|---|---|---|---|
| 2 | 180000 | 0 | 0.025 | 1.7 | 1.6 | 3 | 3.4 | 0.37 | |
| 4 | 360000 | 0 | 0.021 | 2.1 | 3.2 | 14 | 4.9 | 0.37 | |
| 6 | 540000 | 0 | 0.02 | 2.5 | 4.8 | 60 | 6.2 | 0.38 | |
| 8 | 720000 | 0 | 0.02 | 2.9 | 6.4 | 24 | 7.4 | 0.37 | |
| 10 | 900000 | 0 | 0.018 | 5.5 | 8.0 | 201 | 8.1 | 0.36 | |
| 12 | 1080000 | 0 | 0.019 | 141.7 | 9.7 | 1716 | 9.1 | 0.44 | |
| 14 | 1260000 | 0 | 31 | 7568 | 16.0 | 5778 | 8.6 | 0.50 | |

|
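One way to read the series 2 figures is to look for the producer count at which latency stops growing gradually and jumps. The sketch below applies a simple rule to the latency column of the table above: flag the first row whose latency exceeds ten times that of the previous row. The factor of ten is an arbitrary choice made for illustration, and the function name is hypothetical.

```python
from typing import List, Optional, Tuple

# (number of producers, average latency with routing [ms]) from the series 2 table above.
LATENCY_MS: List[Tuple[int, float]] = [
    (2, 1.7), (4, 2.1), (6, 2.5), (8, 2.9), (10, 5.5), (12, 141.7), (14, 7568.0),
]


def first_latency_jump(rows: List[Tuple[int, float]], factor: float = 10.0) -> Optional[int]:
    """Return the first producer count whose latency exceeds `factor` times the previous row's."""
    for (_, previous), (producers, current) in zip(rows, rows[1:]):
        if current > factor * previous:
            return producers
    return None


if __name__ == "__main__":
    print(first_latency_jump(LATENCY_MS))
```

With this rule the jump is flagged at 12 producers, where latency rises from 5.5 ms to 141.7 ms; a different threshold could place it elsewhere.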