Warning: Draft Content

This wiki is under construction, so content here may be incomplete or missing.

TODO: determine/fix containers not ready, get DCAE yamls working, fix health tracking issues for healing

The OOM (ONAP Operations Manager) project has pushed Kubernetes-based deployment code to the oom repository. This page details getting ONAP running (specifically the vFirewall demo) on Kubernetes in various virtual and native environments.

Undercloud Installation

Note: you need at least 37g of RAM (34g for the ONAP services alone; this does not yet include DCAE or running the vFirewall).
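A quick pre-flight check for the memory requirement above can be sketched as follows. The function names are hypothetical, the 37g figure is treated as GiB (an assumption), and `total_ram_kb` relies on the Linux-only `/proc/meminfo`.

```shell
# Minimum RAM from the note above, in GB (treated here as GiB; an assumption).
REQUIRED_GB=37

# ram_ok KB [REQUIRED_GB]
# Succeeds when KB (kibibytes) is at least the required amount.
ram_ok() {
  kb="$1"
  need_gb="${2:-$REQUIRED_GB}"
  [ "$kb" -ge $((need_gb * 1024 * 1024)) ]
}

# total_ram_kb
# Prints MemTotal in kB as reported by the kernel (Linux only).
total_ram_kb() {
  awk '/^MemTotal:/ { print $2 }' /proc/meminfo
}
```

On a host, `ram_ok "$(total_ram_kb)" && echo "enough RAM" || echo "too little RAM"` would report the result. MemTotal excludes memory reserved by the kernel, so a machine with exactly 37g installed may report slightly less than the threshold.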

We need a Kubernetes installation: either a base installation or one with a thin API wrapper such as Rancher or Redhat.

There are several options - currently Rancher is a focus as a thin wrapper on Kubernetes.

| OS | VIM | Description | Status | Nodes | Links |
|---|---|---|---|---|---|
| Ubuntu 16.04.2 | Bare Metal, VMWare | Rancher | Recommended approach. Issue with Kubernetes support only in 1.12 (obsolete docker-machine) on OSX | 1-4 | http://rancher.com/docs/rancher/v1.6/en/quick-start-guide/ |
| | AWS EC2 | EC2 VMs (not ECS or EBS PaaS) | Interesting - in the queue... | x | |
| Linux | Bare Metal or VM | Kubernetes directly on Ubuntu 16 (no Rancher) | In progress | 1 | https://kubernetes.io/docs/setup/independent/install-kubeadm/ https://lukemarsden.github.io/docs/getting-started-guides/kubeadm/ |
| OSX, Linux | CoreOS | On Vagrant (Thanks Yves) | Issue: the coreos VM 19G size is insufficient | 1 | https://coreos.com/kubernetes/docs/latest/kubernetes-on-vagrant-single.html Implement OSX fix for Vagrant 1.9.6: https://github.com/mitchellh/vagrant/issues/7747 Avoid the kubectl lock: https://github.com/coreos/coreos-kubernetes/issues/886 |
| OSX | Minikube on VMWare Fusion VM | | minikube VM not restartable | 1 | https://github.com/kubernetes/minikube |
| RHEL 7.3 | VMWare VM | Redhat Kubernetes | services deploy, fix kubectl exec | 1 | https://access.redhat.com/documentation/en-us/red_hat_enterprise_linux_atomic_host/7/html-single/getting_started_with_kubernetes/ |

ONAP Installation

Quickstart Installation

ONAP deployment in kubernetes is modelled in the oom project as a 1:1 set of service:pod sets (1 pod per docker container). The fastest way to get ONAP Kubernetes up is via Rancher.

The primary platform is virtual Ubuntu 16.04 VMs on VMWare Workstation 12.5, on up to two separate 64 GB, 6-core 5820K Windows 10 systems.

The secondary platform is 4 bare-metal NUCs (i7/i5/i3 with 16G each).

Install only the 1.12.x (currently 1.12.6) version of Docker (the only version that works with Kubernetes in Rancher 1.6) Install rancher (use 8880 instead of 8080) In Rancher UI (http://127.0.0.1:8880) , Set IP name of master node in config, create a new onap environment as Kubernetes (will setup kube containers), stop default environment register your host(s) - run following on each host (get from "add host" menu) - install docker 1.12 if not already on the host curl https://releases.rancher.com/install-docker/1.12.sh | sh install kubectl paste kubectl config from rancher mkdir ~/.kube vi ~/.kube/config clone oom (scp your onap_rsa private key first) git clone ssh://michaelobrien@gerrit.onap.org:29418/oom fix nexus3 security temporarily for OOM-3 - Getting issue details... STATUS vi oom/kubernetes/oneclick/createAll.bash create_namespace() {

kubectl create namespace $1-$2

+ kubectl --namespace $1-$2 create secret docker-registry regsecret --docker-server=nexus3.onap.org:10001 --docker-username=docker --docker-password=docker --docker-email=email@email.com

+ kubectl --namespace $1-$2 patch serviceaccount default -p '{"imagePullSecrets": [{"name": "regsecret"}]}'

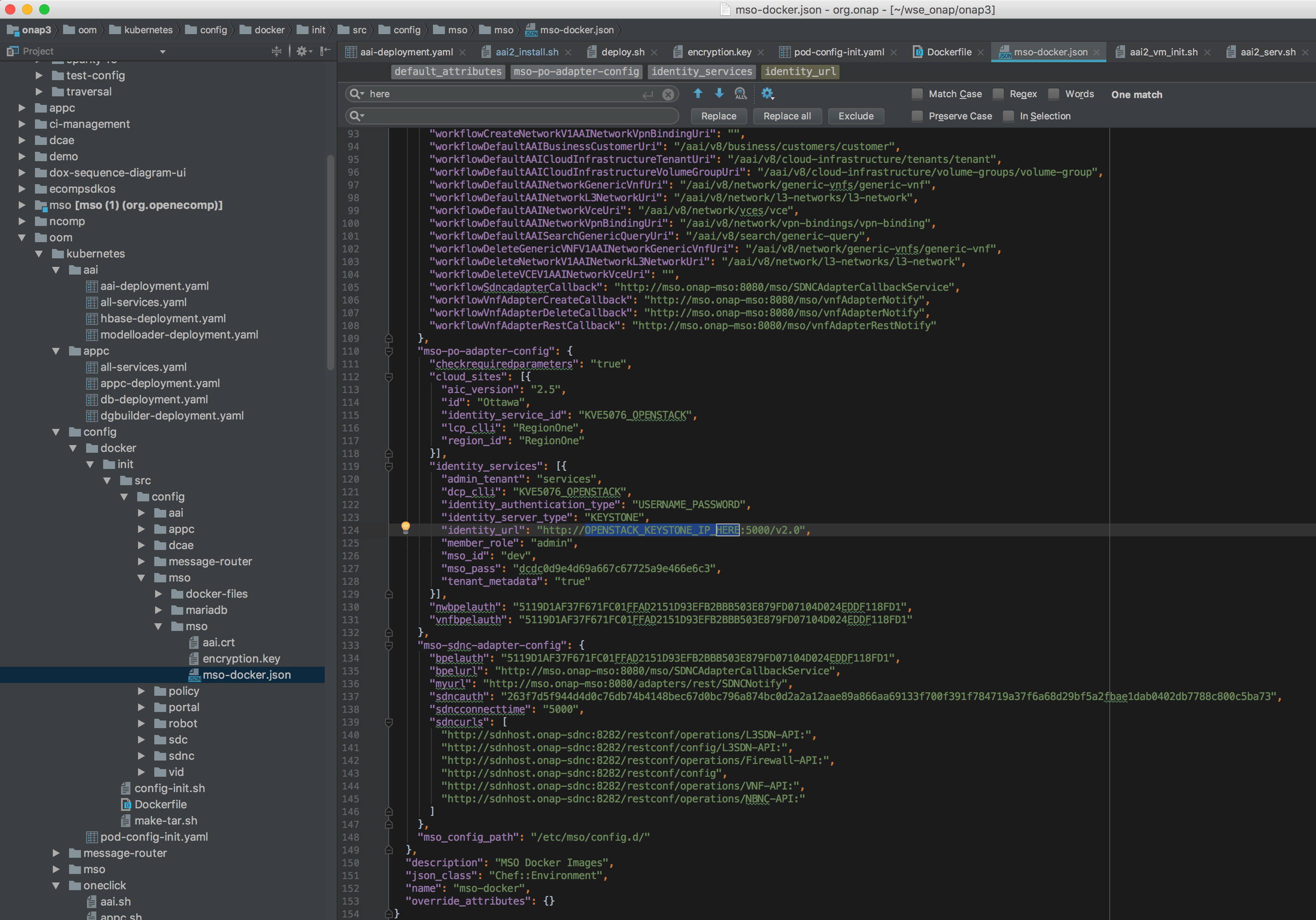

}Wait until all the hosts show green in rancher, then run the script that wraps all the kubectl commands run the one time config pod (with mounts )config-init-root) for all the other pods) - the pod will stop normally cd oom/kubernetes/config Before running pod-config-init.yaml - make sure your config for openstack is setup correctly - so you can deploy the vFirewall VMs for example vi oom/kubernetes/config/docker/init/src/config/mso/mso/mso-docker.json replace for example "identity_services": [{ ~/onap/oom/kubernetes/config# kubectl create -f pod-config-init.yaml pod "config-init" created Fix DNS resolution before running any more pods ( add service.ns.svc.cluster.local or svc.cluster.local temporarily) ~/oom/kubernetes/oneclick# cat /etc/resolv.conf nameserver 192.168.241.2 search localdomain service.ns.svc.cluster.local Note: use only the hardcoded "onap" namespace prefix - as URLs in the config pod are set as follows "workflowSdncadapterCallback": "http://mso.onap-mso:8080/mso/SDNCAdapterCallbackService", cd ../oneclick Wait until the containers are all up - you should see... |

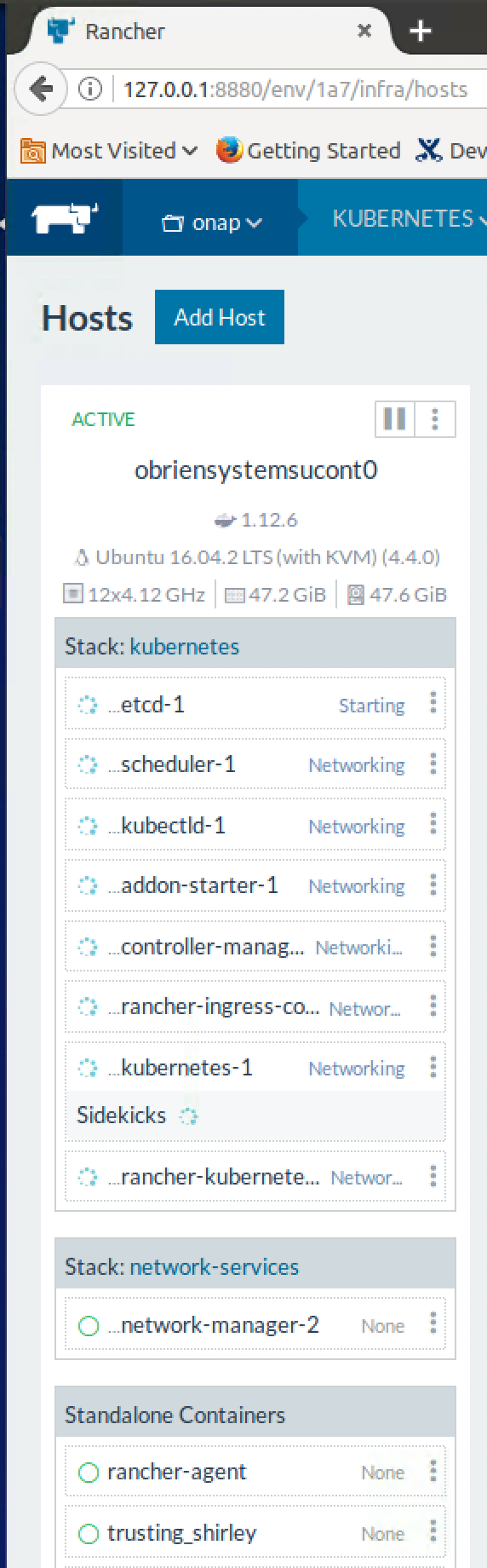

Four host Kubernetes cluster in Rancher

In this case 4 Intel NUCs running Ubuntu 16.04.2 natively

Target Deployment State

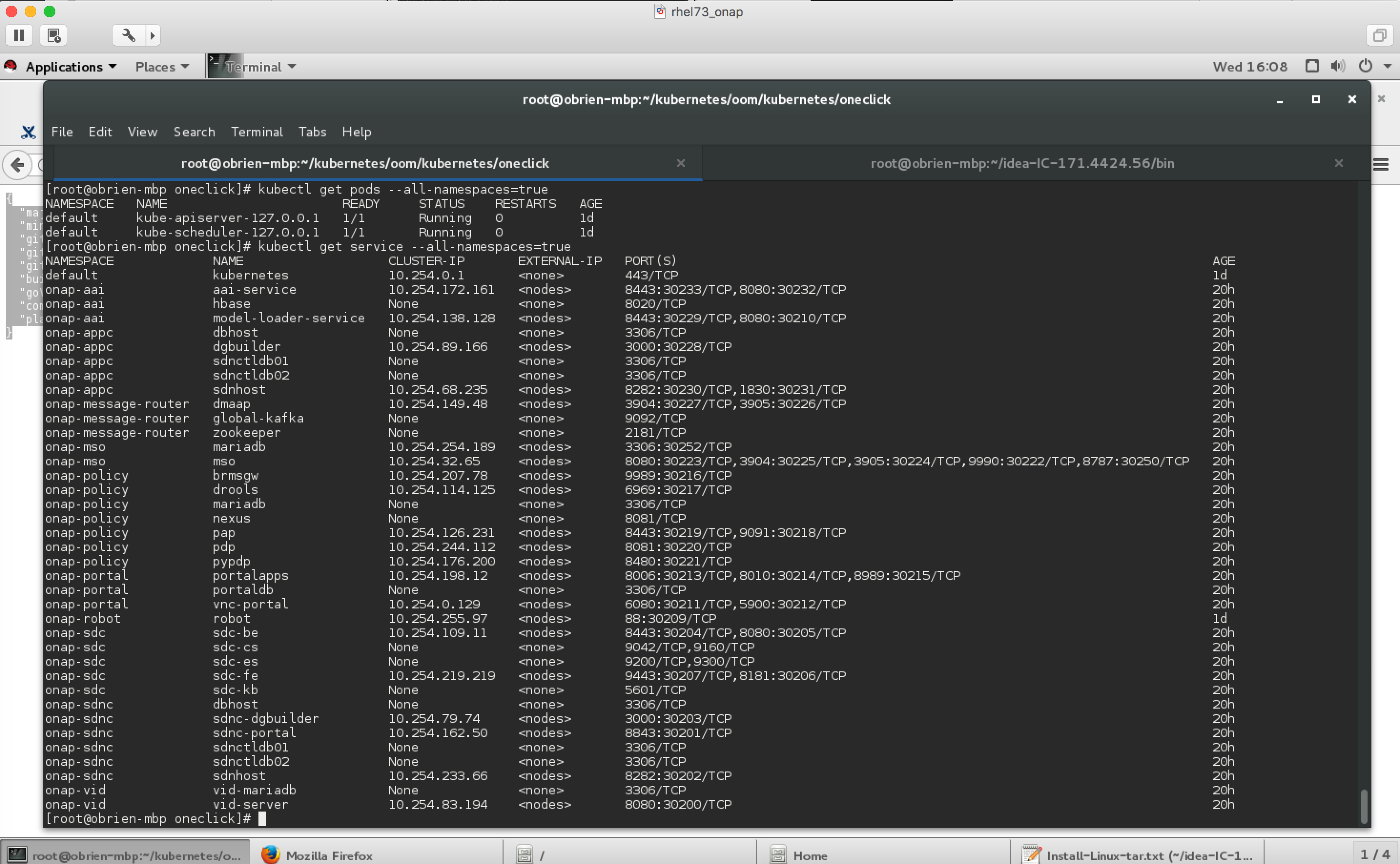

root@obriensystemsucont0:~/onap/oom/kubernetes/oneclick# kubectl get pods --all-namespaces -o wide

To be updated: output from a 5820K (4.1GHz, 12 vCores) 48g Ubuntu 16.04.2 VM on a 64g host.

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get services --all-namespaces -o wide
    NAMESPACE NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR
    default kubernetes 10.43.0.1 <none> 443/TCP 45m <none>
    kube-system heapster 10.43.39.217 <none> 80/TCP 45m k8s-app=heapster
    kube-system kube-dns 10.43.0.10 <none> 53/UDP,53/TCP 45m k8s-app=kube-dns
    kube-system kubernetes-dashboard 10.43.106.248 <none> 9090/TCP 45m k8s-app=kubernetes-dashboard
    kube-system monitoring-grafana 10.43.18.184 <none> 80/TCP 45m k8s-app=grafana
    kube-system monitoring-influxdb 10.43.58.26 <none> 8086/TCP 45m k8s-app=influxdb
    kube-system tiller-deploy 10.43.235.104 <none> 44134/TCP 45m app=helm,name=tiller
    onap-aai aai-service 10.43.181.245 <nodes> 8443:30233/TCP,8080:30232/TCP 27m app=aai-service
    onap-aai hbase None <none> 8020/TCP 27m app=hbase
    onap-aai model-loader-service 10.43.74.55 <nodes> 8443:30229/TCP,8080:30210/TCP 27m app=model-loader-service
    onap-appc dbhost None <none> 3306/TCP 27m app=appc-dbhost
    onap-appc dgbuilder 10.43.146.180 <nodes> 3000:30228/TCP 27m app=appc-dgbuilder
    onap-appc sdnctldb01 None <none> 3306/TCP 27m app=appc-dbhost
    onap-appc sdnctldb02 None <none> 3306/TCP 27m app=appc-dbhost
    onap-appc sdnhost 10.43.196.103 <nodes> 8282:30230/TCP,1830:30231/TCP 27m app=appc
    onap-message-router dmaap 10.43.95.58 <nodes> 3904:30227/TCP,3905:30226/TCP 27m app=dmaap
    onap-message-router global-kafka None <none> 9092/TCP 27m app=global-kafka
    onap-message-router zookeeper None <none> 2181/TCP 27m app=zookeeper
    onap-mso mariadb 10.43.5.166 <nodes> 3306:30252/TCP 27m app=mariadb
    onap-mso mso 10.43.156.155 <nodes> 8080:30223/TCP,3904:30225/TCP,3905:30224/TCP,9990:30222/TCP,8787:30250/TCP 27m app=mso
    onap-policy brmsgw 10.43.81.249 <nodes> 9989:30216/TCP 27m app=brmsgw
    onap-policy drools 10.43.154.250 <nodes> 6969:30217/TCP 27m app=drools
    onap-policy mariadb None <none> 3306/TCP 27m app=mariadb
    onap-policy nexus None <none> 8081/TCP 27m app=nexus
    onap-policy pap 10.43.37.182 <nodes> 8443:30219/TCP,9091:30218/TCP 27m app=pap
    onap-policy pdp 10.43.123.239 <nodes> 8081:30220/TCP 27m app=pdp
    onap-policy pypdp 10.43.226.208 <nodes> 8480:30221/TCP 27m app=pypdp
    onap-portal portalapps 10.43.225.107 <nodes> 8006:30213/TCP,8010:30214/TCP,8989:30215/TCP 27m app=portalapps
    onap-portal portaldb None <none> 3306/TCP 27m app=portaldb
    onap-portal vnc-portal 10.43.216.210 <nodes> 6080:30211/TCP,5900:30212/TCP 27m app=vnc-portal
    onap-robot robot 10.43.52.131 <nodes> 88:30209/TCP 34m app=robot
    onap-sdc sdc-be 10.43.123.5 <nodes> 8443:30204/TCP,8080:30205/TCP 27m app=sdc-be
    onap-sdc sdc-cs None <none> 9042/TCP,9160/TCP 27m app=sdc-cs
    onap-sdc sdc-es None <none> 9200/TCP,9300/TCP 27m app=sdc-es
    onap-sdc sdc-fe 10.43.233.17 <nodes> 9443:30207/TCP,8181:30206/TCP 27m app=sdc-fe
    onap-sdc sdc-kb None <none> 5601/TCP 27m app=sdc-kb
    onap-sdnc dbhost None <none> 3306/TCP 27m app=sdnc-dbhost
    onap-sdnc sdnc-dgbuilder 10.43.253.47 <nodes> 3000:30203/TCP 27m app=sdnc-dgbuilder
    onap-sdnc sdnc-portal 10.43.248.245 <nodes> 8843:30201/TCP 27m app=sdnc-portal
    onap-sdnc sdnctldb01 None <none> 3306/TCP 27m app=sdnc-dbhost
    onap-sdnc sdnctldb02 None <none> 3306/TCP 27m app=sdnc-dbhost
    onap-sdnc sdnhost 10.43.51.170 <nodes> 8282:30202/TCP 27m app=sdnc
    onap-vid vid-mariadb None <none> 3306/TCP 31m app=vid-mariadb
    onap-vid vid-server 10.43.54.34 <nodes> 8080:30200/TCP 31m app=vid-server

sdnc-portal is still downloading node packages:

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl --namespace onap-sdnc logs -f sdnc-portal-3375812606-01s1d
    npm http GET https://registry.npmjs.org/minimist/0.0.8

In the table below, any colored container had issues getting to the Running state (currently 32 of 33 come up after 45 min).

| NAMESPACE (master:20170715) | NAME | READY | STATUS | RESTARTS (in 14h) | Host | Start time | Notes |
|---|---|---|---|---|---|---|---|
| default | config-init | 0/0 | Terminated (Succeeded) | 0 | 1 | | The mount "config-init-root" is in the following location (user configurable VF parameter file below): /dockerdata-nfs/onapdemo/mso/mso/mso-docker.json |
| onap-aai | aai-service-346921785-624ss | 1/1 | Running | 0 | 1 | | |
| onap-aai | hbase-139474849-7fg0s | 1/1 | Running | 0 | 2 | | |
| onap-aai | model-loader-service-1795708961-wg19w | 0/1 | Init:1/2 | 82 | 2 | | |
| onap-appc | appc-2044062043-bx6tc | 1/1 | Running | 0 | 1 | | |
| onap-appc | appc-dbhost-2039492951-jslts | 1/1 | Running | 0 | 2 | | |
| onap-appc | appc-dgbuilder-2934720673-mcp7c | 1/1 | Running | 0 | 2 | | |
| onap-appc | appctldb01 (missing)? | | | | | | |
| onap-appc | appctldb02 (missing)? | | | | | | |
| onap-dcae | not yet pushed | | | | | | Note: currently there are no DCAE containers running yet (we are missing 6 yaml files: 1 for the controller and 5 for the collector, staging and 3 cdap pods). Therefore DMaaP, VES collectors and APPC actions as the result of policy actions (closed loop) will not function yet. |
| onap-dcae-cdap | not yet pushed | | | | | | |
| onap-dcae-stg | not yet pushed | | | | | | |
| onap-dcae-coll | not yet pushed | | | | | | |
| onap-message-router | dmaap-3842712241-gtdkp | 0/1 | CrashLoopBackOff | 164 | 1 | | |
| onap-message-router | global-kafka-89365896-5fnq9 | 1/1 | Running | 0 | 2 | | |
| onap-message-router | zookeeper-1406540368-jdscq | 1/1 | Running | 0 | 1 | | |
| onap-mso | mariadb-2638235337-758zr | 1/1 | Running | 0 | 1 | | |
| onap-mso | mso-3192832250-fq6pn | 0/1 | CrashLoopBackOff | 167 | 2 | | fixed by config-init and resolv.conf |
| onap-policy | brmsgw-568914601-d5z71 | 0/1 | Init:0/1 | 82 | 1 | | fixed by config-init and resolv.conf |
| onap-policy | drools-1450928085-099m2 | 0/1 | Init:0/1 | 82 | 1 | 45m | fixed by config-init and resolv.conf |
| onap-policy | mariadb-2932363958-0l05g | 1/1 | Running | 0 | 0 | | |
| onap-policy | nexus-871440171-tqq4z | 0/1 | Running | 0 | 2 | | |
| onap-policy | pap-2218784661-xlj0n | 1/1 | Running | 0 | 1 | | |
| onap-policy | pdp-1677094700-75wpj | 0/1 | Init:0/1 | 82 | 2 | | fixed by config-init and resolv.conf |
| onap-policy | pypdp-3209460526-bwm6b | 0/1 | Init:0/1 | 82 | 2 | | fixed by config-init and resolv.conf |
| onap-portal | portalapps-1708810953-trz47 | 0/1 | Init:CrashLoopBackOff | 163 | 2 | | Initial dockerhub mariadb download issue - fixed |
| onap-portal | portaldb-3652211058-vsg8r | 1/1 | Running | 0 | 0 | | |
| onap-portal | vnc-portal-948446550-76kj7 | 0/1 | Init:0/5 | 82 | 1 | | fixed by config-init and resolv.conf |
| onap-robot | robot-964706867-czr05 | 1/1 | Running | 0 | 2 | | |
| onap-sdc | sdc-be-2426613560-jv8sk | 0/1 | Init:0/2 | 82 | 2 | | fixed by config-init and resolv.conf |
| onap-sdc | sdc-cs-2080334320-95dq8 | 0/1 | CrashLoopBackOff | 163 | 2 | | fixed by config-init and resolv.conf |
| onap-sdc | sdc-es-3272676451-skf7z | 1/1 | Running | 0 | 1 | | |
| onap-sdc | sdc-fe-931927019-nt94t | 0/1 | Init:0/1 | 82 | 1 | | fixed by config-init and resolv.conf |
| onap-sdc | sdc-kb-3337231379-8m8wx | 0/1 | Init:0/1 | 82 | 1 | | fixed by config-init and resolv.conf |
| onap-sdnc | sdnc-1788655913-vvxlj | 1/1 | Running | 0 | 0 | | |
| onap-sdnc | sdnc-dbhost-240465348-kv8vf | 1/1 | Running | 0 | 0 | | |
| onap-sdnc | sdnc-dgbuilder-4164493163-cp6rx | 1/1 | Running | 0 | 0 | | |
| onap-sdnc | sdnctldb01 (missing)? | | | | | | |
| onap-sdnc | sdnctldb02 (missing)? | | | | | | |
| onap-sdnc | sdnc-portal-2324831407-50811 | 0/1 | Running | 3=vm 0=nuc | 1 | | root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl --namespace onap-sdnc logs -f sdnc-portal-3375812606-01s1d \| grep ERR npm ERR! fetch failed https://registry.npmjs.org/is-utf8/-/is-utf8-0.2.1.tgz |
| onap-vid | vid-mariadb-4268497828-81hm0 | 0/1 | CrashLoopBackOff | 169 | 2 | | fixed by config-init and resolv.conf |
| onap-vid | vid-server-2331936551-6gxsp | 0/1 | Init:0/1 | 82 | 1 | | fixed by config-init and resolv.conf |

Run VID if required to verify that config-init has mounted properly (and to verify resolv.conf)

Cloning details

Install the latest version of the OOM (ONAP Operations Manager) project repo - specifically the ONAP on Kubernetes work just uploaded June 2017

https://gerrit.onap.org/r/gitweb?p=oom.git

    git clone ssh://yourgerrituserid@gerrit.onap.org:29418/oom
    cd oom/kubernetes/oneclick

Versions

oom: master (1.1.0-SNAPSHOT)

onap deployments: 1.0.0

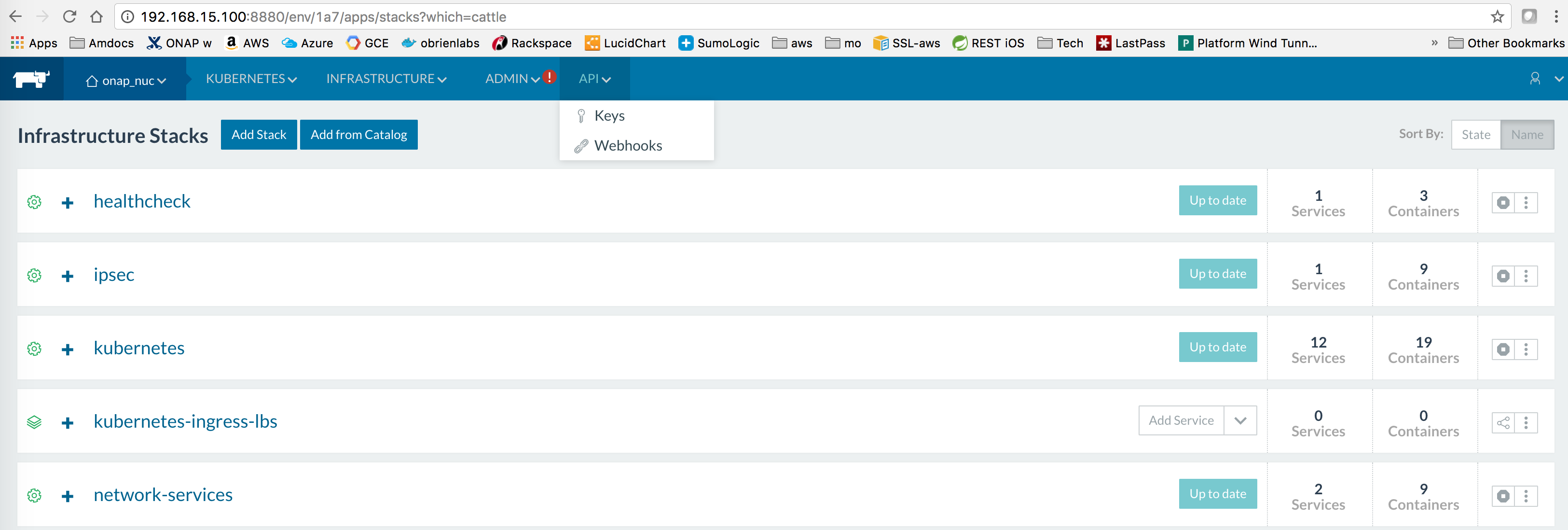

Rancher environment for Kubernetes

Set up a separate onap Kubernetes environment and disable the existing default environment.

Adding hosts to the Kubernetes environment will kick in k8s containers

Rancher kubectl config

To be able to run the kubectl scripts - install kubectl

Nexus3 security settings

Fix nexus3 security for each namespace

In createAll.bash, add the following two lines (marked with +) right after the namespace is created, to create a secret and attach it to the namespace. Thanks to Jason Hunt of IBM for helping us attach it last Friday, when we were all getting our pods to come up. A better fix for the future will be to pass these in as parameters from a prod/stage/dev ecosystem config.

    create_namespace() {
      kubectl create namespace $1-$2
    + kubectl --namespace $1-$2 create secret docker-registry regsecret --docker-server=nexus3.onap.org:10001 --docker-username=docker --docker-password=docker --docker-email=email@email.com
    + kubectl --namespace $1-$2 patch serviceaccount default -p '{"imagePullSecrets": [{"name": "regsecret"}]}'
    }

Fix MSO mso-docker.json

Before running pod-config-init.yaml - make sure your config for openstack is setup correctly - so you can deploy the vFirewall VMs for example

vi oom/kubernetes/config/docker/init/src/config/mso/mso/mso-docker.json

| Original | Replacement for Rackspace |
|---|---|
| "mso-po-adapter-config": { | "mso-po-adapter-config": { |

Delete and recreate the config pod:

root@obriensystemskub0:~/oom/kubernetes/config# kubectl --namespace default delete -f pod-config-init.yaml

pod "config-init" deleted

root@obriensystemskub0:~/oom/kubernetes/config# kubectl create -f pod-config-init.yaml

pod "config-init" created

or copy over your changes directly to the mount

root@obriensystemskub0:~/oom/kubernetes/config# cp docker/init/src/config/mso/mso/mso-docker.json /dockerdata-nfs/onapdemo/mso/mso/mso-docker.json

Use only "onap" namespace

Note: use only the hardcoded "onap" namespace prefix - as URLs in the config pod are set as follows "workflowSdncadapterCallback": "http://mso.onap-mso:8080/mso/SDNCAdapterCallbackService",
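To illustrate why the prefix matters, here is a minimal sketch of how the in-cluster host names appear to be composed. The pattern `<service>.<prefix>-<component>` is inferred from the callback URL above and from the namespace names on this page; the helper name `service_host` is hypothetical.

```shell
# service_host PREFIX COMPONENT SERVICE
# Prints the in-cluster host name "<service>.<prefix>-<component>",
# a pattern inferred from URLs such as http://mso.onap-mso:8080/...
service_host() {
  printf '%s.%s-%s\n' "$3" "$1" "$2"
}

# With the hardcoded prefix, the result matches the config pod URLs:
#   service_host onap mso mso   prints mso.onap-mso
# With any other prefix it does not:
#   service_host dev mso mso    prints mso.dev-mso
```

Because the config pod URLs contain `onap-mso` literally, a deployment created with another prefix would leave those URLs pointing at a namespace that does not exist.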

Monitor Container Deployment

first verify your kubernetes system is up

Then wait 25-45 min for all pods to attain 1/1 state
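Rather than re-reading the pod list by hand during the wait, the number of pods that have not yet reached n/n can be counted. The sketch below uses a hypothetical helper, `count_not_ready`, and assumes the column layout of `kubectl get pods --all-namespaces` shown on this page (READY is the third column).

```shell
# count_not_ready
# Reads `kubectl get pods --all-namespaces` output on stdin and prints how
# many pods have a READY column (3rd field) where ready != total.
count_not_ready() {
  awk 'NR > 1 { split($3, r, "/"); if (r[1] != r[2]) n++ } END { print n + 0 }'
}
```

A polling loop could then look like `while [ "$(kubectl get pods --all-namespaces | count_not_ready)" -gt 0 ]; do sleep 60; done`. Pods that are meant to terminate, such as config-init, may need to be filtered out first depending on how your kubectl version reports them.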

Kubernetes specific config

https://kubernetes.io/docs/user-guide/kubectl-cheatsheet/

Nexus Docker repo Credentials

Checking out use of a kubectl secret in the yaml files via - https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/

Container Endpoint access

Check the services view in the Kubernetes API under robot

robot.onap-robot:88 TCP

robot.onap-robot:30209 TCP

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get services --all-namespaces -o wide
    onap-vid vid-mariadb None <none> 3306/TCP 1h app=vid-mariadb
    onap-vid vid-server 10.43.14.244 <nodes> 8080:30200/TCP 1h app=vid-server

Container Logs

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl --namespace onap-vid logs -f vid-server-248645937-8tt6p
    16-Jul-2017 02:46:48.707 INFO [main] org.apache.catalina.startup.Catalina.start Server startup in 22520 ms

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get pods --all-namespaces -o wide
    NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE
    onap-robot robot-44708506-dgv8j 1/1 Running 0 36m 10.42.240.80 obriensystemskub0

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl --namespace onap-robot logs -f robot-44708506-dgv8j
    2017-07-16 01:55:54: (log.c.164) server started

SSH into ONAP containers

Normally I would do this via https://kubernetes.io/docs/tasks/debug-application-cluster/get-shell-running-container/

    kubectl exec -it robot -- /bin/bash

The pod id should be sufficient

    root@obriensystemsucont0:~/onap/oom/kubernetes/oneclick# kubectl describe node obriensystemsucont0 | grep robot
    Namespace Name CPU Requests CPU Limits Memory Requests Memory Limits
    --------- ---- ------------ ---------- --------------- -------------

https://jira.onap.org/browse/OOM-47

in queue....

Push Files to Pods

Trying to get an authorization file into the robot pod

    root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl cp authorization onap-robot/robot-44708506-nhm0n:/home/ubuntu

Does the above work?

Running ONAP Portal UI Operations

see Installing and Running the ONAP Demos

Get the mapped external port by checking the service in kubernetes - here 30200 for VID on a particular node in our cluster.
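The external port can be read from the PORT(S) column of `kubectl get services`, where each entry has the form `internal:external/protocol`. A minimal sketch of that lookup follows; the helper name `node_port_for` is hypothetical.

```shell
# node_port_for PORTS INTERNAL_PORT
# PORTS is a PORT(S) value such as "8006:30213/TCP,8010:30214/TCP".
# Prints the external (node) port mapped to INTERNAL_PORT, or nothing when
# the port is not exposed externally (entries like "3306/TCP").
node_port_for() {
  printf '%s\n' "$1" | tr ',' '\n' |
    awk -F'[:/]' -v p="$2" 'NF == 3 && $1 == p { print $2 }'
}
```

For example, `node_port_for '8080:30200/TCP' 8080` prints 30200, the VID port mentioned above.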

or run a kube

Fix /etc/hosts as usual:

    192.168.163.132 portal.api.simpledemo.openecomp.org
    192.168.163.132 sdc.api.simpledemo.openecomp.org
    192.168.163.132 policy.api.simpledemo.openecomp.org
    192.168.163.132 vid.api.simpledemo.openecomp.org
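The four host entries differ only in the service name, so they can be generated for whichever node IP applies in your cluster. This is a small sketch with a hypothetical helper name, `onap_hosts_entries`.

```shell
# onap_hosts_entries IP
# Prints one /etc/hosts line per ONAP demo host name, all mapped to IP.
onap_hosts_entries() {
  for name in portal sdc policy vid; do
    printf '%s %s.api.simpledemo.openecomp.org\n' "$1" "$name"
  done
}
```

The output can be reviewed first and then appended, for example with `onap_hosts_entries 192.168.163.132 | sudo tee -a /etc/hosts`.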

In order to map internal 8989 ports to external ones like 30215 - we will need to reconfigure the onap config links as below.

Kubernetes Installation Options

Rancher on Ubuntu 16.04

Install Rancher

http://rancher.com/docs/rancher/v1.6/en/quick-start-guide/

http://rancher.com/docs/rancher/v1.6/en/installing-rancher/installing-server/#single-container

Install a docker version that Rancher and Kubernetes support which is currently 1.12.6

http://rancher.com/docs/rancher/v1.5/en/hosts/#supported-docker-versions

    curl https://releases.rancher.com/install-docker/1.12.sh | sh

Verify your Rancher admin console is up on the external port you configured above

Wait for the docker container to finish DB startup

http://rancher.com/docs/rancher/v1.6/en/hosts/

Registering Hosts in Rancher

There are issues registering a combined single VM (controller + host): use your real IP, not localhost.

In Settings > Host Configuration, set your IP.

    [root@obrien-b2 etcd]# sudo docker run -e CATTLE_AGENT_IP="192.168.163.128" --rm --privileged -v /var/run/docker.sock:/var/run/docker.sock -v /var/lib/rancher:/var/lib/rancher rancher/agent:v1.2.2 http://192.168.163.128:8080/v1/scripts/A9487FC88388CC31FB76:1483142400000:IypSDQCtA4SwkRnthKqH53Vxoo

See your host registered

Troubleshooting

Rancher fails to restart on server reboot

Having issues after a reboot of a colocated server/agent

Docker Nexus Config

OOM-3

Out of the box we cannot pull images. We are currently working on a config step based on https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/

    kubectl create secret docker-registry regsecret --docker-server=nexus3.onap.org:10001 --docker-username=docker --docker-password=docker --docker-email=someone@amdocs.com

    imagePullSecrets:
    - name: regsecret

Failed to pull image "nexus3.onap.org:10001/openecomp/testsuite:1.0-STAGING-latest": image pull failed for nexus3.onap.org:10001/openecomp/testsuite:1.0-STAGING-latest, this may be because there are no credentials on this request. details: (unauthorized: authentication required)

kubelet 172.17.4.99

OOM Repo changes

20170629: fix on 20170626 on a hardcoded proxy - (for those who run outside the firewall) - https://gerrit.onap.org/r/gitweb?p=oom.git;a=commitdiff;h=131c2a42541fb807f395fe1f39a8482a53f92c60

DNS resolution

add "service.ns.svc.cluster.local" to fix

Search Line limits were exceeded, some dns names have been omitted, the applied search line is: default.svc.cluster.local svc.cluster.local cluster.local kubelet.kubernetes.rancher.internal kubernetes.rancher.internal rancher.internal

https://github.com/rancher/rancher/issues/9303

    root@obriensystemskub0:~/oom/kubernetes/oneclick# cat /etc/resolv.conf
    # Dynamic resolv.conf(5) file for glibc resolver(3) generated by resolvconf(8)
    # DO NOT EDIT THIS FILE BY HAND -- YOUR CHANGES WILL BE OVERWRITTEN
    nameserver 192.168.241.2
    search localdomain service.ns.svc.cluster.local
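Before starting more pods, it can help to confirm that the search line really contains the added domain. The following sketch uses a hypothetical helper, `has_search_domain`, that inspects a resolv.conf-style file.

```shell
# has_search_domain FILE DOMAIN
# Succeeds when a "search" line in FILE lists DOMAIN as an exact entry.
has_search_domain() {
  awk -v d="$2" '
    $1 == "search" { for (i = 2; i <= NF; i++) if ($i == d) found = 1 }
    END { exit !found }
  ' "$1"
}
```

For example, `has_search_domain /etc/resolv.conf service.ns.svc.cluster.local || echo "search domain missing"`. As the file header warns, resolvconf may overwrite manual edits, so the check is worth repeating after a reboot or network change.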

Deprecated Kubernetes Installation Options

Bare RHEL 7.3 VM - Multi Node Cluster

In progress as of 20170701

https://kubernetes.io/docs/getting-started-guides/scratch/

    https://github.com/kubernetes/kubernetes/releases/latest
    https://github.com/kubernetes/kubernetes/releases/tag/v1.7.0
    https://github.com/kubernetes/kubernetes/releases/download/v1.7.0/kubernetes.tar.gz
    tar -xvf kubernetes.tar
    # optional: build from source
    vi Vagrantfile
    # go directly to binaries
    /run/media/root/sec/onap_kub/kubernetes/cluster
    ./get-kube-binaries.sh
    export PATH=/run/media/root/sec/onap_kub/kubernetes/client/bin:$PATH
    [root@obrien-b2 server]# pwd
    /run/media/root/sec/onap_kub/kubernetes/server
    kubernetes-manifests.tar.gz kubernetes-salt.tar.gz kubernetes-server-linux-amd64.tar.gz README
    tar -xvf kubernetes-server-linux-amd64.tar.gz
    /run/media/root/sec/onap_kub/kubernetes/server/kubernetes/server/bin
    # build images
    [root@obrien-b2 etcd]# make
    # (go lang required - adjust google docs) https://golang.org/doc/install?download=go1.8.3.linux-amd64.tar.gz

CoreOS on Vagrant on RHEL/OSX

(Yves alerted me to this) - currently blocked by the 19g VM size (changing the HD of the VM is unsupported in the VirtualBox driver)

https://coreos.com/kubernetes/docs/latest/kubernetes-on-vagrant-single.html

Implement OSX fix for Vagrant 1.9.6 https://github.com/mitchellh/vagrant/issues/7747

Adjust the Vagrantfile for your system. Change

    NODE_VCPUS = 1
    NODE_MEMORY_SIZE = 2048

to (for a 5820K on 64G for example)

    NODE_VCPUS = 8
    NODE_MEMORY_SIZE = 32768

    curl -O https://storage.googleapis.com/kubernetes-release/release/v1.6.1/bin/darwin/amd64/kubectl
    chmod +x kubectl
    # skipped (mv kubectl /usr/local/bin/kubectl) - already there
    ls /usr/local/bin/kubectl
    git clone https://github.com/coreos/coreos-kubernetes.git
    cd coreos-kubernetes/single-node/
    vagrant box update
    sudo ln -sf /usr/local/bin/openssl /opt/vagrant/embedded/bin/openssl
    vagrant up

Wait at least 5 min (Yves is good). Rerun from here:

    export KUBECONFIG="${KUBECONFIG}:$(pwd)/kubeconfig"
    kubectl config use-context vagrant-single

    obrienbiometrics:single-node michaelobrien$ export KUBECONFIG="${KUBECONFIG}:$(pwd)/kubeconfig"
    obrienbiometrics:single-node michaelobrien$ kubectl config use-context vagrant-single
    Switched to context "vagrant-single".
    obrienbiometrics:single-node michaelobrien$ kubectl proxy &
    [1] 4079
    obrienbiometrics:single-node michaelobrien$ Starting to serve on 127.0.0.1:8001

goto

    $ kubectl get nodes
    $ kubectl get service --all-namespaces
    $ kubectl cluster-info

    git clone ssh://michaelobrien@gerrit.onap.org:29418/oom
    cd oom/kubernetes/oneclick/
    obrienbiometrics:oneclick michaelobrien$ ./createAll.bash -n onap
    **** Done ****
    obrienbiometrics:oneclick michaelobrien$ kubectl get service --all-namespaces
    ...
    onap-vid vid-server 10.3.0.31 <nodes> 8080:30200/TCP 32s
    obrienbiometrics:oneclick michaelobrien$ kubectl get pods --all-namespaces
    NAMESPACE NAME READY STATUS RESTARTS AGE
    kube-system heapster-v1.2.0-4088228293-3k7j1 2/2 Running 2 4h
    kube-system kube-apiserver-172.17.4.99 1/1 Running 1 4h
    kube-system kube-controller-manager-172.17.4.99 1/1 Running 1 4h
    kube-system kube-dns-782804071-jg3nl 4/4 Running 4 4h
    kube-system kube-dns-autoscaler-2715466192-k45qg 1/1 Running 1 4h
    kube-system kube-proxy-172.17.4.99 1/1 Running 1 4h
    kube-system kube-scheduler-172.17.4.99 1/1 Running 1 4h
    kube-system kubernetes-dashboard-3543765157-qtnnj 1/1 Running 1 4h
    onap-aai aai-service-346921785-w3r22 0/1 Init:0/1 0 1m
    ...

Reset:

    obrienbiometrics:single-node michaelobrien$ rm -rf ~/.vagrant.d/boxes/coreos-alpha/

OSX Minikube

    curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/darwin/amd64/kubectl
    chmod +x ./kubectl
    sudo mv ./kubectl /usr/local/bin/kubectl
    kubectl cluster-info
    kubectl completion -h
    brew install bash-completion
    curl -Lo minikube https://storage.googleapis.com/minikube/releases/v0.19.0/minikube-darwin-amd64 && chmod +x minikube && sudo mv minikube /usr/local/bin/
    minikube start --vm-driver=vmwarefusion
    kubectl run hello-minikube --image=gcr.io/google_containers/echoserver:1.4 --port=8080
    kubectl expose deployment hello-minikube --type=NodePort
    kubectl get pod
    curl $(minikube service hello-minikube --url)
    minikube stop

When upgrading from 0.19 to 0.20 - do a minikube delete

RHEL Kubernetes - Redhat 7.3 Enterprise Linux Host

Running onap kubernetes services in a single VM using Redhat Kubernetes for 7.3

Redhat provides 2 docker containers for the scheduler and nbi components and spins up 2 (# is scalable) pod containers for use by onap.

    [root@obrien-mbp oneclick]# docker ps

Kubernetes setup

Uninstall docker-se (we installed it earlier).

    subscription-manager repos --enable=rhel-7-server-optional-rpms
    [root@obrien-mbp opt]# ./kubestart.sh
    [root@obrien-mbp opt]# ss -tulnp | grep -E "(kube)|(etcd)"

References

OOM-1