
Note: This wiki is under construction; content here may be incompletely specified or missing.

...

Architectural details of the OOM project are described in the OOM User Guide.

...

Reviews

https://gerrit.onap.org/r/#/c/6179/

Undercloud Installation

Requirements

...

37G w/o DCAE

50G with DCAE

...

100G w/o DCAE

We need a Kubernetes installation: either a base installation or one with a thin API wrapper such as Rancher.

There are several options. The current focus is Rancher with Helm on Ubuntu 16.04 as a thin wrapper on Kubernetes; alternative platforms are covered in the subpage ONAP on Kubernetes (Alternatives).

...

Ubuntu 16.04.2

!Redhat

...

Bare Metal

VMWare

...

Recommended approach

Issue with kubernetes support only in 1.12 (obsolete docker-machine) on OSX

...

ONAP Installation

Quickstart Installation

1) install rancher, clone oom, run config-init pod, run one or all onap components

...

*****************

Note: uninstall Docker if it is already installed, because Kubernetes supports only Docker 1.12.x (as of 20170809).

| Code Block |

|---|

% sudo apt-get remove docker-engine |

*****************

ONAP deployment in Kubernetes is modelled in the oom project as a 1:1 set of service:pod sets (1 pod per docker container). The fastest way to get ONAP on Kubernetes up is via Rancher on any bare metal or VM that supports a clean Ubuntu 16.04 install and has more than 50G of RAM.

(on each host) add to your /etc/hosts to point your ip to your hostname (add your hostname to the end). Add entries for all other hosts in your cluster.

| Code Block | ||

|---|---|---|

| ||

sudo vi /etc/hosts

<your-ip> <your-hostname> |
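If you are scripting the host setup rather than editing the file by hand, the step above can be sketched as a small idempotent helper. This is only an illustration: the function name `add_host_entry` is hypothetical, and the IP and hostname in the usage note are placeholders.

```shell
# add_host_entry FILE IP HOSTNAME
# Appends "IP HOSTNAME" to FILE unless some non-comment line already
# lists HOSTNAME in one of its name columns.
add_host_entry() {
    ahe_file=$1
    ahe_ip=$2
    ahe_name=$3
    if [ -f "$ahe_file" ] && awk -v n="$ahe_name" '
        /^[[:space:]]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit found ? 0 : 1 }' "$ahe_file"; then
        return 0   # already present, nothing to do
    fi
    printf '%s %s\n' "$ahe_ip" "$ahe_name" >> "$ahe_file"
}
```

As root, something like `add_host_entry /etc/hosts 192.168.163.131 my-kube-host` could then be run once per cluster member on every host; running it twice leaves a single entry.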

Try to use root - if you use ubuntu then you will need to enable docker separately for the ubuntu user

| Code Block |

|---|

sudo su -

apt-get update |

(to fix possible modprobe: FATAL: Module aufs not found in directory /lib/modules/4.4.0-59-generic)

(on each host (server and client(s) which may be the same machine)) Install only the 1.12.x (currently 1.12.6) version of Docker (the only version that works with Kubernetes in Rancher 1.6)

| Code Block |

|---|

curl https://releases.rancher.com/install-docker/1.12.sh | sh |
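Because only the 1.12.x series is expected to work here, it can help to check the installed version before continuing. The following is a minimal sketch; the function name `is_supported_docker_version` is an assumption made for this example, not part of any tool.

```shell
# is_supported_docker_version VERSION
# Succeeds only for 1.12.x, the series this guide says works with
# Kubernetes in Rancher 1.6.
is_supported_docker_version() {
    case "$1" in
        1.12.*) return 0 ;;
        *)      return 1 ;;
    esac
}

# Example (requires a running Docker daemon):
#   v=$(docker version --format '{{.Server.Version}}')
#   is_supported_docker_version "$v" || echo "unsupported Docker version: $v" >&2
```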

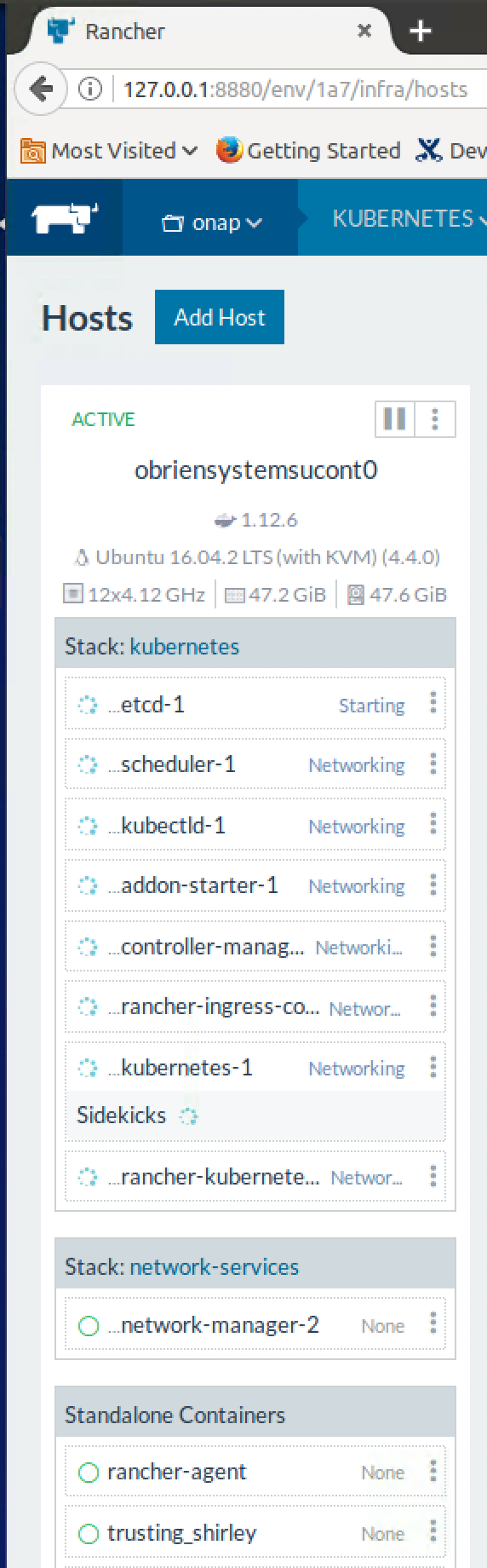

(on the master only) Install Rancher (use port 8880 instead of 8080). Note that there may be issues with the DNS pod in Rancher after a reboot or when running clustered hosts; a clean system will be OK. See ONAP JIRA OOM-236.

| Code Block |

|---|

docker run -d --restart=unless-stopped -p 8880:8080 rancher/server |

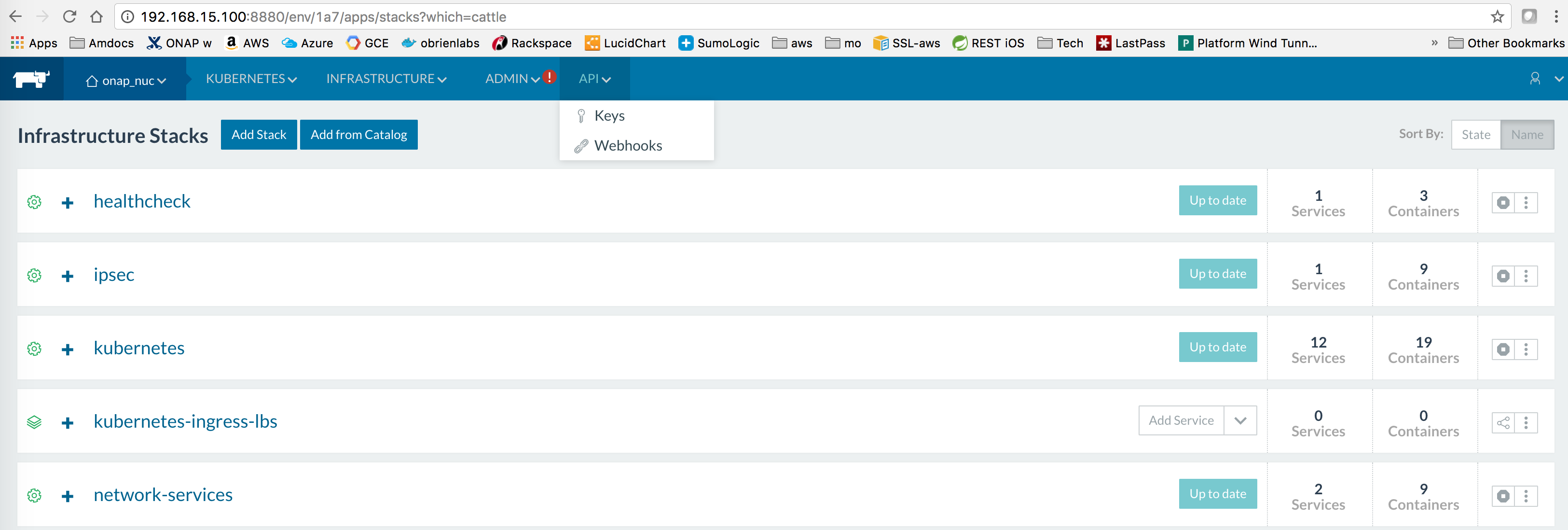

In the Rancher UI, do not use http://127.0.0.1:8880; use the real IP address so that the client configs are populated correctly with callbacks.

You must deactivate the default CATTLE environment: add a KUBERNETES environment, then deactivate the older default CATTLE one. Hosts you add will then attach to the default environment.

- Default → Manage Environments

- Select "Add Environment" button

- Give the Environment a name and description, then select Kubernetes as the Environment Template

- Hit the "Create" button. This will create the environment and bring you back to the Manage Environments view

- At the far right column of the Default Environment row, left-click the menu ( looks like 3 stacked dots ), and select Deactivate. This will make your new Kubernetes environment the new default.

Register your host(s): run the following on each host (including the master if you are collocating the master and host on a single machine/VM).

For each host, in Rancher > Infrastructure > Hosts, select "Add Host".

Enter the IP of the host.

Copy the command that registers the host with Rancher.

Execute the command on the host, for example:

| Code Block |

|---|

% docker run --rm --privileged -v /var/run/docker.sock:/var/run/docker.sock -v /var/lib/rancher:/var/lib/rancher rancher/agent:v1.2.2 http://192.168.163.131:8880/v1/scripts/BBD465D9B24E94F5FBFD:1483142400000:IDaNFrug38QsjZcu6rXh8TwqA4 |

Wait for the Kubernetes menu to populate with the CLI entry.

Install kubectl on the server and, optionally, on the other hosts.

| Code Block |

|---|

% curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl

% chmod +x ./kubectl

% mv ./kubectl /usr/local/bin/kubectl

% mkdir ~/.kube

% vi ~/.kube/config |

Paste the kubectl config from Rancher (you will see the CLI menu in Rancher | Kubernetes after the k8s pods are up on your host).

Click on "Generate Config" to get your content to add into .kube/config

Verify that Kubernetes config is good

| Code Block |

|---|

root@obrien-kube11-1:~# kubectl cluster-info

Kubernetes master is running at ....

Heapster is running at....

KubeDNS is running at ....

kubernetes-dashboard is running at ...

monitoring-grafana is running at ....

monitoring-influxdb is running at ...

tiller-deploy is running at.... |

Install Helm (use 2.3.0 not current 2.6.0)

| Code Block |

|---|

wget http://storage.googleapis.com/kubernetes-helm/helm-v2.3.0-linux-amd64.tar.gz

tar -zxvf helm-v2.3.0-linux-amd64.tar.gz

mv linux-amd64/helm /usr/local/bin/helm

# test helm

helm help |

Undercloud done - move to ONAP

Clone oom (scp your onap_rsa private key first, or clone anonymously; ideally you get a full gerrit account and join the community).

See the ssh/http/https access links below.

https://gerrit.onap.org/r/#/admin/projects/oom

| Code Block |

|---|

anonymous http

1.0 branch

git clone -b release-1.0.0 http://gerrit.onap.org/r/oom

or 1.1/R1 master branch

git clone http://gerrit.onap.org/r/oom

or using your key

git clone -b release-1.0.0 ssh://michaelobrien@gerrit.onap.org:29418/oom |

or use https (substitute your user/pass)

| Code Block |

|---|

git clone -b release-1.0.0 https://<your-user>:<your-http-password>@gerrit.onap.org/r/oom |

Wait until all the hosts show green in Rancher, then run the createConfig/createAll scripts that wrap all the kubectl commands.

Run the setenv.bash script in /oom/kubernetes/oneclick/ (new since 20170817)

| Code Block |

|---|

source setenv.bash |

(only if you are planning on closed-loop) - Before running createConfig.sh (see below) - make sure your config for openstack is setup correctly - so you can deploy the vFirewall VMs for example

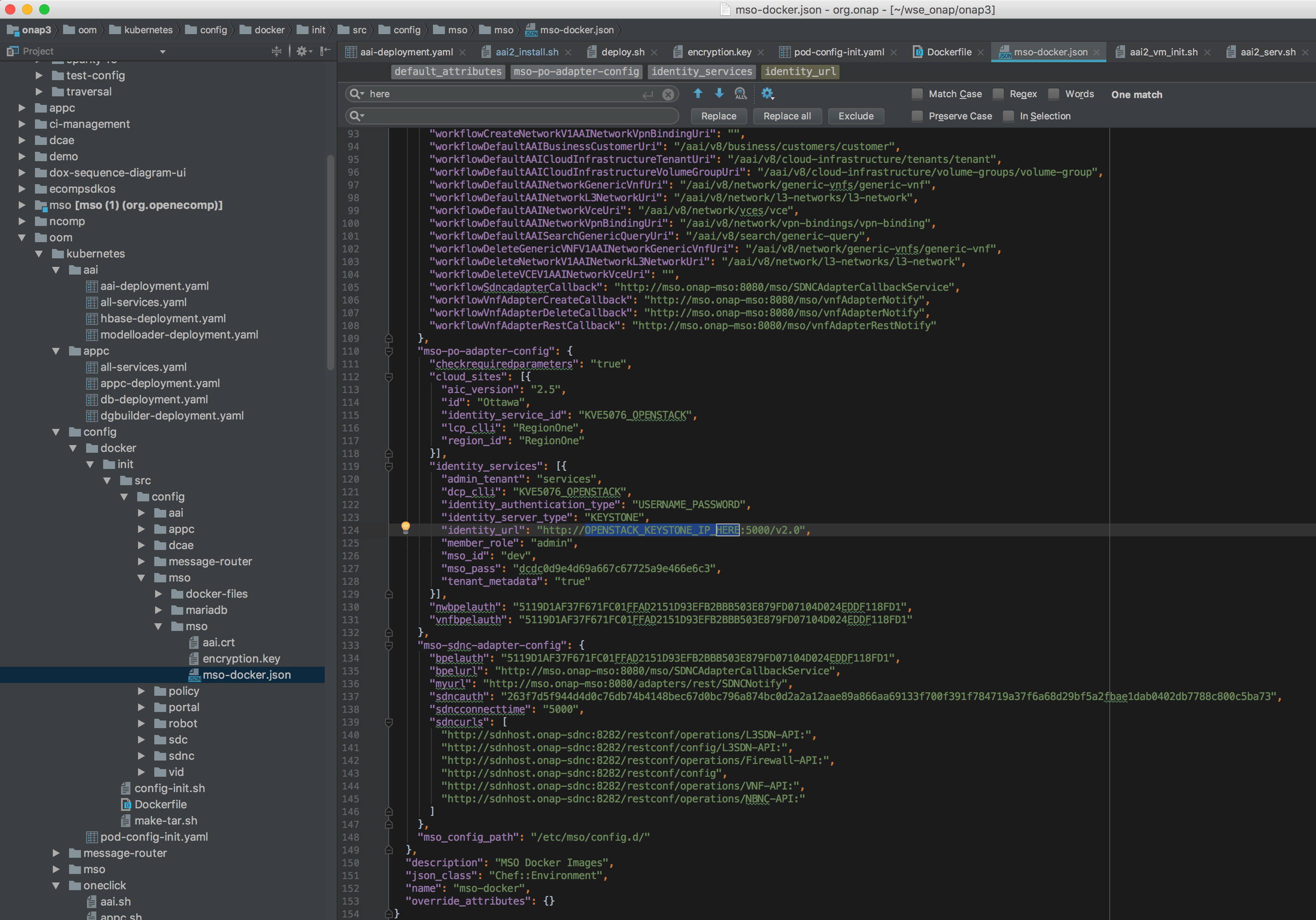

vi oom/kubernetes/config/docker/init/src/config/mso/mso/mso-docker.json

replace for example

"identity_services": [{

"identity_url": "http://OPENSTACK_KEYSTONE_IP_HERE:5000/v2.0",

run the one time config pod - which mounts the volume /dockerdata/ contained in the pod config-init. This mount is required for all other ONAP pods to function.

Note: the pod will stop after NFS creation - this is normal.

| Code Block |

|---|

% cd oom/kubernetes/config

% chmod 777 createConfig.sh (1.0 branch only)

% ./createConfig.sh -n onap

|

**** Creating configuration for ONAP instance: onap

namespace "onap" created

pod "config-init" created

**** Done ****

Wait until the config-init pod is gone before trying to bring up a component or all of ONAP.

Note: use only the hardcoded "onap" namespace prefix - as URLs in the config pod are set as follows "workflowSdncadapterCallback": "http://mso.onap-mso:8080/mso/SDNCAdapterCallbackService"

Don't run all the pods unless you have at least 40G (without DCAE) or 50G allocated - if you have a laptop/VM with 16G - then you can only run enough pods to fit in around 11G
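The sizing rule above can be checked on a host before running createAll.bash. This is a rough sketch under stated assumptions: the helper names are hypothetical, the thresholds are simply the 40G and 50G figures from the note, and MemTotal on a real machine reads slightly below the nominal RAM size.

```shell
# mem_total_gb [MEMINFO_FILE]
# Prints total RAM in whole GB (rounded down), read from a
# /proc/meminfo-style file where MemTotal is given in kB.
mem_total_gb() {
    awk '/^MemTotal:/ { printf "%d\n", $2 / 1024 / 1024 }' "${1:-/proc/meminfo}"
}

# onap_profile_for GB
# Maps available RAM to the deployment size suggested in this guide.
onap_profile_for() {
    if [ "$1" -ge 50 ]; then
        echo "all pods, with DCAE"
    elif [ "$1" -ge 40 ]; then
        echo "all pods, without DCAE"
    else
        echo "selected components only"
    fi
}
```

Running `onap_profile_for "$(mem_total_gb)"` on the Kubernetes host gives a quick hint whether to pass `-a <component>` to createAll.bash or to deploy everything.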

Ignore errors introduced around 20170816; these are non-blocking and will allow the create to proceed.

| Code Block |

|---|

% cd ../oneclick

% vi createAll.bash

% ./createAll.bash -n onap -a robot    # or appc, aai, ... |

(to bring up a single service at a time)

Only if you have >50G run the following (all namespaces)

| Code Block |

|---|

% ./createAll.bash -n onap |

ONAP is OK if every pod shows 1/1 in the output of the following

| Code Block |

|---|

% kubectl get pods --all-namespaces |
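With several dozen pods, scanning the READY column by eye is error-prone. A minimal sketch of a filter follows; the function name `not_ready_pods` is hypothetical, and it assumes the default column layout (NAMESPACE, NAME, READY, STATUS, ...) shown in the transcripts on this page.

```shell
# not_ready_pods
# Reads `kubectl get pods --all-namespaces` output on stdin and prints
# "namespace/name ready status" for each pod whose READY column is not n/n.
not_ready_pods() {
    awk '$1 == "NAMESPACE" { next }   # skip the header row
         {
             split($3, r, "/")
             if (r[1] != r[2]) print $1 "/" $2, $3, $4
         }'
}
```

Usage: `kubectl get pods --all-namespaces | not_ready_pods`. Empty output means every listed pod is fully ready.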

Run the ONAP portal via instructions at RunningONAPusingthevnc-portal

1.1 is currently having Helm issues as of 20170825; see ONAP JIRA OOM-219.

Wait until the containers are all up

List of Containers

Total pods is 47

Docker container list - source of truth: https://git.onap.org/integration/tree/packaging/docker/docker-images.csv

Below, any coloured container had issues getting to the running state (currently 32 of 33 come up after 45 min). There are an additional 11 DCAE pods, bringing the total to 44, plus an additional 4 for appc/sdnc and the config-init pod.

...

NAMESPACE

master:20170715

...

The mount "config-init-root" is in the following location

(user configurable VF parameter file below)

/dockerdata-nfs/onapdemo/mso/mso/mso-docker.json

...

wurstmeister/zookeeper:latest...

dockerfiles_kafka:latest...

Note: currently there are no DCAE containers running yet (we are missing 6 yaml files: 1 for the controller and 5 for the collector, staging and 3 cdap pods). Therefore DMaaP, the VES collectors and APPC actions triggered by policy actions (closed loop) will not function yet.

In review: https://gerrit.onap.org/r/#/c/7287/


...

attos/dmaap:latest...

not required

dcae-controller

...

fixed by config-init and resolv.conf

1.0.0 only

...

List of Docker Images

root@obriensystemskub0:~/oom/kubernetes/dcae# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

nexus3.onap.org:10001/openecomp/dcae-collector-common-event latest 325d5b115fc2 7 days ago 538.8 MB

nexus3.onap.org:10001/openecomp/dcae-dmaapbc latest 46891e265574 7 days ago 328.1 MB

wurstmeister/kafka latest f26f76f2e50d 13 days ago 267.7 MB

nexus3.onap.org:10001/openecomp/model-loader 1.0-STAGING-latest 0c7a3eb0682b 2 weeks ago 758.9 MB

nexus3.onap.org:10001/openecomp/ajsc-aai 1.0-STAGING-latest f5c97da83393 2 weeks ago 1.352 GB

nexus3.onap.org:10001/openecomp/vid 1.0-STAGING-latest b80d37a7ac5e 2 weeks ago 725.9 MB

nexus3.onap.org:10001/openecomp/dgbuilder-sdnc-image 1.0-STAGING-latest cb3912913a43 2 weeks ago 850.9 MB

nexus3.onap.org:10001/openecomp/admportal-sdnc-image 1.0-STAGING-latest 825d60fd3abd 2 weeks ago 780.9 MB

nexus3.onap.org:10001/openecomp/sdnc-image 1.0-STAGING-latest c6eff373cc29 2 weeks ago 1.433 GB

nexus3.onap.org:10001/openecomp/testsuite 1.0-STAGING-latest 1b9a4aaa9649 2 weeks ago 1.097 GB

nexus3.onap.org:10001/openecomp/appc-image 1.0-STAGING-latest c5172ae773c5 2 weeks ago 2.235 GB

mariadb 10 b101c8399ee3 2 weeks ago 386.7 MB

nexus3.onap.org:10001/openecomp/mso 1.0-STAGING-latest b45b72cbc99d 2 weeks ago 1.429 GB

oomk8s/config-init 1.0.0 f19045125a44 5 weeks ago 322 MB

ubuntu 16.04 d355ed3537e9 5 weeks ago 119.2 MB

attos/dmaap latest b0ae220fcf1f 5 weeks ago 747.4 MB

mysql/mysql-server 5.6 05712b2a4b84 7 weeks ago 214.3 MB

oomk8s/ubuntu-init 1.0.0 14bb4db11858 8 weeks ago 207 MB

oomk8s/readiness-check 1.0.0 d3923ba1f99c 8 weeks ago 578.6 MB

oomk8s/mariadb-client-init 1.0.0 a5fa953bd4e0 9 weeks ago 251.1 MB

nexus3.onap.org:10001/openecomp/policy/policy-pe 1.0-STAGING-latest 2ffa01f2a8a2 4 months ago 1.421 GB

nexus3.onap.org:10001/openecomp/policy/policy-drools 1.0-STAGING-latest 389f86326698 4 months ago 1.347 GB

nexus3.onap.org:10001/openecomp/policy/policy-db 1.0-STAGING-latest 4f0ed6e92fed 4 months ago 1.142 GB

nexus3.onap.org:10001/openecomp/policy/policy-nexus 1.0-STAGING-latest 82c38af9208a 4 months ago 981.9 MB

nexus3.onap.org:10001/openecomp/sdc-cassandra 1.0-STAGING-latest 8f7c4a92530a 4 months ago 902 MB

nexus3.onap.org:10001/openecomp/sdc-kibana 1.0-STAGING-latest 287d79893e52 4 months ago 463.7 MB

nexus3.onap.org:10001/openecomp/sdc-elasticsearch 1.0-STAGING-latest 6ddf30823600 4 months ago 517.9 MB

nexus3.onap.org:10001/openecomp/sdc-frontend 1.0-STAGING-latest 1e63e5d9dff7 4 months ago 592.8 MB

nexus3.onap.org:10001/openecomp/sdc-backend 1.0-STAGING-latest 163918b87ae7 4 months ago 941.1 MB

nexus3.onap.org:10001/openecomp/portalapps 1.0-STAGING-latest ee0e08b1704b 4 months ago 1.04 GB

nexus3.onap.org:10001/openecomp/portaldb 1.0-STAGING-latest f2a8dc705ba2 4 months ago 394.5 MB

aaidocker/aai-hbase-1.2.3 latest aba535a6f8b5 7 months ago 1.562 GB

wurstmeister/zookeeper latest 351aa00d2fe9 8 months ago 478.3 MB

nexus3.onap.org:10001/mariadb 10.1.11 d1553bc7007f 17 months ago 346.5 MB

missing:

# can be replaced by public dockerHub link

dockerfiles_kafka:latest

# need DockerFile

dcae/pgaas:latest

dcae-dmaapbc:latest

dcae-collector-common-event:latest

dcae-controller:latest

dcae-ves-collector:release-1.0.0

dcae/cdap:1.0.7

Verifying Container Startup

Run VID if required to verify that config-init has mounted properly (and to verify resolv.conf)

Cloning details

Install the latest version of the OOM (ONAP Operations Manager) project repo - specifically the ONAP on Kubernetes work just uploaded June 2017

https://gerrit.onap.org/r/gitweb?p=oom.git

...

git clone ssh://yourgerrituserid@gerrit.onap.org:29418/oom

cd oom/kubernetes/oneclick

Versions

oom : master (1.1.0-SNAPSHOT)

onap deployments: 1.0.0

Rancher environment for Kubernetes

Set up a separate ONAP Kubernetes environment and disable the existing default environment.

Adding hosts to the Kubernetes environment will kick in k8s containers

Rancher kubectl config

To be able to run the kubectl scripts - install kubectl

Nexus3 security settings

Fix nexus3 security for each namespace

In createAll.bash, add the following two lines (marked with +) to create_namespace(), right after the namespace is created, to create a secret and attach it to the namespace (thanks to Jason Hunt of IBM for helping us attach it last Friday, when we were all getting our pods to come up). A better fix for the future will be to pass these in as parameters from a prod/stage/dev ecosystem config.

...

create_namespace() {

kubectl create namespace $1-$2

+ kubectl --namespace $1-$2 create secret docker-registry regsecret --docker-server=nexus3.onap.org:10001 --docker-username=docker --docker-password=docker --docker-email=email@email.com

+ kubectl --namespace $1-$2 patch serviceaccount default -p '{"imagePullSecrets": [{"name": "regsecret"}]}'

}

Fix MSO mso-docker.json

Before running pod-config-init.yaml - make sure your config for openstack is setup correctly - so you can deploy the vFirewall VMs for example

vi oom/kubernetes/config/docker/init/src/config/mso/mso/mso-docker.json

...

"mso-po-adapter-config": {

"checkrequiredparameters": "true",

"cloud_sites": [{

"aic_version": "2.5",

"id": "Ottawa",

"identity_service_id": "KVE5076_OPENSTACK",

"lcp_clli": "RegionOne",

"region_id": "RegionOne"

}],

"identity_services": [{

"admin_tenant": "services",

"dcp_clli": "KVE5076_OPENSTACK",

"identity_authentication_type": "USERNAME_PASSWORD",

"identity_server_type": "KEYSTONE",

"identity_url": "http://OPENSTACK_KEYSTONE_IP_HERE:5000/v2.0",

"member_role": "admin",

"mso_id": "dev",

"mso_pass": "dcdc0d9e4d69a667c67725a9e466e6c3",

"tenant_metadata": "true"

}],

...

"mso-po-adapter-config": {

"checkrequiredparameters": "true",

"cloud_sites": [{

"aic_version": "2.5",

"id": "Dallas",

"identity_service_id": "RAX_KEYSTONE",

"lcp_clli": "DFW", # or IAD

"region_id": "DFW"

}],

"identity_services": [{

"admin_tenant": "service",

"dcp_clli": "RAX_KEYSTONE",

"identity_authentication_type": "RACKSPACE_APIKEY",

"identity_server_type": "KEYSTONE",

"identity_url": "https://identity.api.rackspacecloud.com/v2.0",

"member_role": "admin",

"mso_id": "9998888",

"mso_pass": "YOUR_API_KEY",

"tenant_metadata": "true"

}],

The official documentation for installation of ONAP with OOM / Kubernetes is located in Read the Docs:

- OOM User Guide — onap master documentation

- OOM Quick Start Guide — onap master documentation

- OOM Cloud Setup Guide — onap master documentation

delete/recreate the config pod

root@obriensystemskub0:~/oom/kubernetes/config# kubectl --namespace default delete -f pod-config-init.yaml

pod "config-init" deleted

root@obriensystemskub0:~/oom/kubernetes/config# kubectl create -f pod-config-init.yaml

pod "config-init" created

or copy over your changes directly to the mount

root@obriensystemskub0:~/oom/kubernetes/config# cp docker/init/src/config/mso/mso/mso-docker.json /dockerdata-nfs/onapdemo/mso/mso/mso-docker.json

Use only "onap" namespace

Note: use only the hardcoded "onap" namespace prefix - as URLs in the config pod are set as follows "workflowSdncadapterCallback": "http://mso.onap-mso:8080/mso/SDNCAdapterCallbackService",
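The reason the prefix matters can be shown with a short sketch of the naming scheme. It assumes, based on the `kubectl create namespace $1-$2` line in create_namespace(), that each namespace is named `<prefix>-<component>` and that services are addressed as `<service>.<namespace>`; the function names are hypothetical.

```shell
# onap_namespace PREFIX COMPONENT
# Prints the namespace name, e.g. "onap-mso".
onap_namespace() {
    printf '%s-%s\n' "$1" "$2"
}

# onap_service_host SERVICE PREFIX COMPONENT
# Prints the in-cluster host name of a service, e.g. "mso.onap-mso".
onap_service_host() {
    printf '%s.%s\n' "$1" "$(onap_namespace "$2" "$3")"
}
```

`onap_service_host mso onap mso` yields `mso.onap-mso`, which matches the callback URL baked into the config; with any other prefix (for example `test`) the host becomes `mso.test-mso` and the hardcoded URL no longer resolves.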

Monitor Container Deployment

first verify your kubernetes system is up

Then wait 25-45 min for all pods to attain 1/1 state
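Rather than re-running the check by hand for up to 45 minutes, the wait can be scripted with a generic polling helper. This is a sketch only: `wait_until` is a hypothetical function, and the readiness command passed to it is up to you.

```shell
# wait_until TIMEOUT_SECONDS INTERVAL_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND every INTERVAL_SECONDS until it succeeds.
# Returns 1 if COMMAND is still failing after TIMEOUT_SECONDS.
wait_until() {
    wu_timeout=$1
    wu_interval=$2
    shift 2
    wu_waited=0
    until "$@"; do
        if [ "$wu_waited" -ge "$wu_timeout" ]; then
            return 1
        fi
        sleep "$wu_interval"
        wu_waited=$((wu_waited + wu_interval))
    done
    return 0
}
```

For example, `wait_until 2700 30 my_pods_ready_check` would poll every 30 seconds for at most 45 minutes, where `my_pods_ready_check` stands for any command of yours that exits 0 once all pods report 1/1.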

Kubernetes specific config

https://kubernetes.io/docs/user-guide/kubectl-cheatsheet/

Nexus Docker repo Credentials

Checking out use of a kubectl secret in the yaml files via - https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/

Container Endpoint access

Check the services view in the Kubernetes API under robot

robot.onap-robot:88 TCP

robot.onap-robot:30209 TCP

...

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get services --all-namespaces -o wide

onap-vid vid-mariadb None <none> 3306/TCP 1h app=vid-mariadb

onap-vid vid-server 10.43.14.244 <nodes> 8080:30200/TCP 1h app=vid-server
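The PORT(S) column pairs each internal port with its external NodePort as `internal:external/PROTOCOL`. As an illustration, a small helper (the name `node_port_for` is hypothetical) can pull the external port out of that column:

```shell
# node_port_for PORTS INTERNAL_PORT
# PORTS is the PORT(S) column, e.g. "8080:30200/TCP" or a comma-separated
# list of such entries. Prints the NodePort mapped to INTERNAL_PORT and
# fails if no entry maps that port (e.g. "3306/TCP" has no NodePort).
node_port_for() {
    printf '%s\n' "$1" | tr ',' '\n' | awk -F'[:/]' -v p="$2" '
        NF >= 3 && $1 == p { print $2; found = 1 }
        END { exit found ? 0 : 1 }'
}
```

With the vid-server row above, `node_port_for "8080:30200/TCP" 8080` prints `30200`, the port to use from outside the cluster.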

Container Logs

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl --namespace onap-vid logs -f vid-server-248645937-8tt6p

16-Jul-2017 02:46:48.707 INFO [main] org.apache.catalina.startup.Catalina.start Server startup in 22520 ms

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get pods --all-namespaces -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

onap-robot robot-44708506-dgv8j 1/1 Running 0 36m 10.42.240.80 obriensystemskub0

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl --namespace onap-robot logs -f robot-44708506-dgv8j

2017-07-16 01:55:54: (log.c.164) server started

SSH into ONAP containers

Normally I would do this via https://kubernetes.io/docs/tasks/debug-application-cluster/get-shell-running-container/

...

Get the pod name via

kubectl get pods --all-namespaces -o wide

bash into the pod via

kubectl -n onap-mso exec -it mso-1648770403-8hwcf /bin/bash...

Trying to get an authorization file into the robot pod

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl cp authorization onap-robot/robot-44708506-nhm0n:/home/ubuntu

...

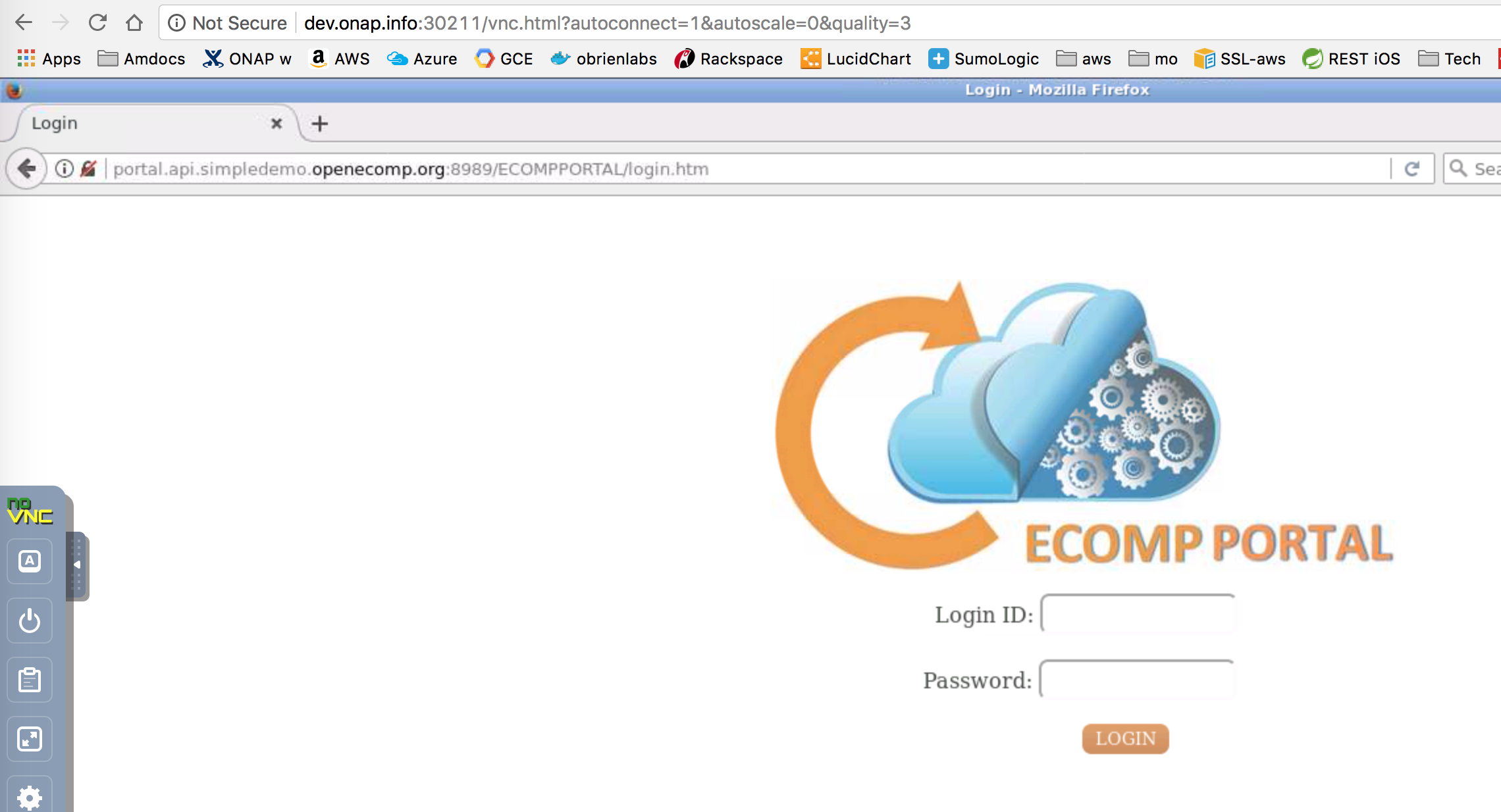

Running ONAP Portal UI Operations

Running ONAP using the vnc-portal

see Installing and Running the ONAP Demos

or run the vnc-portal container to access ONAP using the traditional port mappings. See the following recorded video by Mike Elliot of the OOM team for an audio-visual reference

Check for the vnc-portal port via (it is always 30211)

| Code Block | ||

|---|---|---|

| ||

obrienbiometrics:onap michaelobrien$ ssh ubuntu@dev.onap.info

ubuntu@ip-172-31-93-122:~$ sudo su -

root@ip-172-31-93-122:~# kubectl get services --all-namespaces -o wide

NAMESPACE NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

onap-portal vnc-portal 10.43.78.204 <nodes> 6080:30211/TCP,5900:30212/TCP 4d app=vnc-portal

|

launch the vnc-portal in a browser

password is "password"

Open firefox inside the VNC vm - launch portal normally

http://portal.api.simpledemo.openecomp.org:8989/ECOMPPORTAL/login.htm

login and run SDC

Continue with the normal ONAP demo flow at Tutorial: Onboarding and Distributing a Vendor Software Product (VSP)

Running ONAP directly

Get the mapped external port by checking the service in kubernetes - here 30200 for VID on a particular node in our cluster.

fix /etc/hosts as usual

...

192.168.163.132 portal.api.simpledemo.openecomp.org

192.168.163.132 sdc.api.simpledemo.openecomp.org

192.168.163.132 policy.api.simpledemo.openecomp.org

192.168.163.132 vid.api.simpledemo.openecomp.org
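When the node IP changes, these lines have to be regenerated. A minimal sketch follows, covering only the four host names listed above; the function name `onap_demo_hosts` is hypothetical.

```shell
# onap_demo_hosts NODE_IP
# Prints /etc/hosts lines that point the simpledemo API names shown in
# this section at NODE_IP.
onap_demo_hosts() {
    for odh_svc in portal sdc policy vid; do
        printf '%s %s.api.simpledemo.openecomp.org\n' "$1" "$odh_svc"
    done
}
```

The output of `onap_demo_hosts 192.168.163.132` can be reviewed and then appended to /etc/hosts on the machine running the browser.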

In order to map internal 8989 ports to external ones like 30215 - we will need to reconfigure the onap config links as below.

Kubernetes Installation Options

Rancher on Ubuntu 16.04

Install Rancher

http://rancher.com/docs/rancher/v1.6/en/quick-start-guide/

http://rancher.com/docs/rancher/v1.6/en/installing-rancher/installing-server/#single-container

Install a docker version that Rancher and Kubernetes support which is currently 1.12.6

http://rancher.com/docs/rancher/v1.5/en/hosts/#supported-docker-versions

...

curl https://releases.rancher.com/install-docker/1.12.sh | sh

docker run -d --restart=unless-stopped -p 8880:8080 rancher/server:stable

...

Wait for the docker container to finish DB startup

http://rancher.com/docs/rancher/v1.6/en/hosts/

Registering Hosts in Rancher

Having issues registering a combined single VM (controller + host) - use your real IP not localhost

In settings | Host Configuration | set your IP

...

See your host registered

Troubleshooting

Rancher fails to restart on server reboot

Having issues after a reboot of a colocated server/agent

Installing Clean Ubuntu

...

apt-get install ssh

apt-get install ubuntu-desktop

Docker Nexus Config


Out of the box we cannot pull images; we are currently working on a config step based on https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/

...

imagePullSecrets:

- name: regsecret

...

OOM Repo changes

20170629: fix on 20170626 on a hardcoded proxy - (for those who run outside the firewall) - https://gerrit.onap.org/r/gitweb?p=oom.git;a=commitdiff;h=131c2a42541fb807f395fe1f39a8482a53f92c60

DNS resolution

add "service.ns.svc.cluster.local" to fix

Search Line limits were exceeded, some dns names have been omitted, the applied search line is: default.svc.cluster.local svc.cluster.local cluster.local kubelet.kubernetes.rancher.internal kubernetes.rancher.internal rancher.internal

https://github.com/rancher/rancher/issues/9303

root@obriensystemskub0:~/oom/kubernetes/oneclick# cat /etc/resolv.conf

# Dynamic resolv.conf(5) file for glibc resolver(3) generated by resolvconf(8)

# DO NOT EDIT THIS FILE BY HAND -- YOUR CHANGES WILL BE OVERWRITTEN

nameserver 192.168.241.2

search localdomain service.ns.svc.cluster.local
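To confirm that the workaround is in place on a host, the search line can be checked with a short helper. This is a sketch; the function name `has_search_domain` is hypothetical, and the domain is the one quoted in this section.

```shell
# has_search_domain DOMAIN [RESOLV_CONF]
# Succeeds if DOMAIN appears on a "search" line of the given resolv.conf
# (default: /etc/resolv.conf).
has_search_domain() {
    awk -v d="$1" '
        $1 == "search" { for (i = 2; i <= NF; i++) if ($i == d) found = 1 }
        END { exit found ? 0 : 1 }' "${2:-/etc/resolv.conf}"
}
```

Usage: `has_search_domain service.ns.svc.cluster.local || echo "search domain missing" >&2`.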


Design Issues

DI 10: 20170724: DCAE Integration


todo:

docker images need to be pushed to nexus

from: registry.stratlab.local:30002/onap/dcae/cdap:1.0.7

to: nexus3.onap.org:10001/openecomp


2 persistent volumes also created (controller-pvs, collector-pvs)

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get pods --all-namespaces -o wide | grep dcae

onap-dcae cdap0-801098998-1j83b 0/1 Init:ImagePullBackOff 0 7m 10.42.170.56 obriensystemskub0

onap-dcae cdap1-1109312935-sv8g0 0/1 Init:ImagePullBackOff 0 7m 10.42.184.43 obriensystemskub0

onap-dcae cdap2-2495595959-fxnmg 0/1 Init:ImagePullBackOff 0 7m 10.42.69.133 obriensystemskub0

onap-dcae dcae-collector-common-event-2687859322-jcv7n 1/1 Running 0 7m 10.42.219.171 obriensystemskub0

onap-dcae dcae-collector-dmaapbc-2087600858-sb98v 1/1 Running 0 7m 10.42.225.93 obriensystemskub0

onap-dcae dcae-controller-1960065296-95xxx 0/1 ContainerCreating 0 7m <none> obriensystemskub0

onap-dcae dcae-pgaas-3690783998-v4w60 0/1 ImagePullBackOff 0 7m 10.42.28.81 obriensystemskub0

onap-dcae dcae-ves-collector-1184035059-t0t7s 0/1 ImagePullBackOff 0 7m 10.42.223.26 obriensystemskub0

onap-dcae dmaap-3637563410-2s7b2 0/1 CrashLoopBackOff 6 7m 10.42.7.93 obriensystemskub0

onap-dcae kafka-2923495538-tb218 0/1 CrashLoopBackOff 6 7m 10.42.49.109 obriensystemskub0

onap-dcae zookeeper-2122426841-2n6h5 1/1 Running 0 7m 10.42.192.205 obriensystemskub0

root@obriensystemskub0:~/oom/kubernetes/oneclick# kubectl get services --all-namespaces -o wide | grep dcae

onap-dcae dcae-collector-common-event 10.43.97.177 <nodes> 8080:30236/TCP,8443:30237/TCP,9999:30238/TCP 8m app=dcae-collector-common-event

onap-dcae dcae-collector-dmaapbc 10.43.100.153 <nodes> 8080:30239/TCP,8443:30240/TCP 8m app=dcae-collector-dmaapbc

onap-dcae dcae-controller 10.43.117.220 <nodes> 8000:30234/TCP,9998:30235/TCP 7m app=dcae-controller

onap-dcae dcae-ves-collector 10.43.215.194 <nodes> 8080:30241/TCP,9999:30242/TCP 8m app=dcae-ves-collector

onap-dcae zldciad4vipstg00 10.43.110.169 <nodes> 5432:30245/TCP 8m app=dcae-pgaas

Pushing Docker Images to ONAP

Other projects have a docker-maven-plugin - need to see if I can run this locally.

Questions

https://lists.onap.org/pipermail/onap-discuss/2017-July/002084.html

Links

https://kubernetes.io/docs/user-guide/kubectl-cheatsheet/

Content to edit/merge

Viswa,

Missed that you have config-init in your mail – so covered OK.

I stood up robot and aai on a clean VM to verify the docs (this is inside an openstack lab behind a firewall – that has its own proxy)

Let me know if you have docker proxy issues – I would also like to reference/doc this for others like yourselves.

Also, just verifying that you added the security workaround step – (or make your docker repo trust all repos)

vi oom/kubernetes/oneclick/createAll.bash

create_namespace() {

kubectl create namespace $1-$2

+ kubectl --namespace $1-$2 create secret docker-registry regsecret --docker-server=nexus3.onap.org:10001 --docker-username=docker --docker-password=docker --docker-email=email@email.com

+ kubectl --namespace $1-$2 patch serviceaccount default -p '{"imagePullSecrets": [{"name": "regsecret"}]}'

}

I just happen to be standing up a clean deployment on openstack – lets try just robot – it pulls the testsuite image

root@obrienk-1:/home/ubuntu/oom/kubernetes/config# kubectl create -f pod-config-init.yaml

pod "config-init" created

root@obrienk-1:/home/ubuntu/oom/kubernetes/config# cd ../oneclick/

root@obrienk-1:/home/ubuntu/oom/kubernetes/oneclick# ./createAll.bash -n onap -a robot

********** Creating up ONAP: robot

Creating namespaces **********

namespace "onap-robot" created

secret "regsecret" created

serviceaccount "default" patched

Creating services **********

service "robot" created

********** Creating deployments for robot **********

Robot....

deployment "robot" created

**** Done ****

root@obrienk-1:/home/ubuntu/oom/kubernetes/oneclick# kubectl get pods --all-namespaces | grep onap

onap-robot robot-4262359493-k84b6 1/1 Running 0 4m

One nexus3 ONAP-specific image is downloaded for robot

root@obrienk-1:/home/ubuntu/oom/kubernetes/oneclick# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

nexus3.onap.org:10001/openecomp/testsuite 1.0-STAGING-latest 3a476b4fe0d8 2 hours ago 1.16 GB

next try one with 3 onap images like aai

uses

nexus3.onap.org:10001/openecomp/ajsc-aai 1.0-STAGING-latest c45b3a0ca00f 2 hours ago 1.352 GB

aaidocker/aai-hbase-1.2.3 latest aba535a6f8b5 7 months ago 1.562 GB

hbase is first

root@obrienk-1:/home/ubuntu/oom/kubernetes/oneclick# ./createAll.bash -n onap -a aai

root@obrienk-1:/home/ubuntu/oom/kubernetes/oneclick# kubectl get pods --all-namespaces | grep onap

onap-aai aai-service-3351257372-tlq93 0/1 PodInitializing 0 2m

onap-aai hbase-1381435241-ld56l 1/1 Running 0 2m

onap-aai model-loader-service-2816942467-kh1n0 0/1 Init:0/2 0 2m

onap-robot robot-4262359493-k84b6 1/1 Running 0 8m

aai-service is next

root@obrienk-1:/home/ubuntu/oom/kubernetes/oneclick# kubectl get pods --all-namespaces | grep onap

onap-aai aai-service-3351257372-tlq93 1/1 Running 0 5m

onap-aai hbase-1381435241-ld56l 1/1 Running 0 5m

onap-aai model-loader-service-2816942467-kh1n0 0/1 Init:1/2 0 5m

onap-robot robot-4262359493-k84b6 1/1 Running 0 11m

model-loader-service takes the longest

Subject: Re: [onap-discuss] [OOM] Using OOM kubernetes based seed code over Rancher

Hi, the search line limits turn out to be a red herring: this is a non-fatal bug in Rancher that you can ignore. They put too many entries in the search domain list (I'll update the wiki).

Verify that you have a stopped config-init pod. It won't show in the get pods command; go to the Rancher or Kubernetes GUI.

The fact that you have a non-working robot suggests you may be having issues pulling images from docker; verify your image list and that docker can pull from nexus3

>docker images

Should show some nexus3.onap.org ones if the pull were ok

If not try an image pull to verify this:

ubuntu@obrienk-1:~/oom/kubernetes/config$ docker login -u docker -p docker nexus3.onap.org:10001

Login Succeeded

ubuntu@obrienk-1:~/oom/kubernetes/config$ docker pull nexus3.onap.org:10001/openecomp/mso:1.0-STAGING-latest

1.0-STAGING-latest: Pulling from openecomp/mso

23a6960fe4a9: Extracting [===================================> ] 32.93 MB/45.89 MB

e9e104b0e69d: Download complete

If so then you need to set the docker proxy – or run outside the firewall like I do.

Also, to be able to run the 34 pods (without DCAE) you will need 37G+ (33 + 4 for Rancher/k8s + some for the OS); also plan for over 100G of HD space.

/michael

1.1 robot 2017830 status - no scripts

root@obriensystemskub0:~/11/oom/kubernetes/oneclick# ./createAll.bash -n onap -a robot

********** Creating instance 1 of ONAP with port range 30200 and 30399

********** Creating ONAP: robot

Creating namespace **********

namespace "onap-robot" created

Creating registry secret **********

secret "onap-docker-registry-key" created

Creating service **********

service "robot" created

********** Creating deployments for robot **********

Robot....

deployment "robot" created

**** Done ****

root@obriensystemskub0:~/11/oom/kubernetes/oneclick# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system heapster-859001963-kz210 1/1 Running 5 43d

kube-system kube-dns-1759312207-jd5tf 3/3 Running 8 43d

kube-system kubernetes-dashboard-2463885659-xv986 1/1 Running 4 43d

kube-system monitoring-grafana-1177217109-sm5nq 1/1 Running 4 43d

kube-system monitoring-influxdb-1954867534-vvb84 1/1 Running 4 43d

kube-system tiller-deploy-1933461550-gdxch 1/1 Running 4 43d

onap-robot robot-3494393958-d5drg 1/1 Running 0 8s

root@obriensystemskub0:~/11/oom/kubernetes/oneclick# kubectl -n onap-robot exec -it robot-3494393958-d5drg bash

root@robot-3494393958-d5drg:/# ls /opt

...