Warning: Draft Content

This wiki is under construction

References

OOM-1

The OOM (ONAP Operations Manager) project has pushed Kubernetes-based deployment code to the oom repository. This page describes how to get ONAP running on Kubernetes in various environments.

Note: no DCAE containers are running yet (we are missing 6 yaml files: 1 for the controller and 5 for the collector, staging and 3 CDAP pods). Therefore DMaaP, the VES collectors and the APPC actions that result from policy actions (closed loop) will not function yet.

Undercloud Installation

We need a Kubernetes installation with the proper architecture components running. This architecture can be provided by vendors like Redhat or Rancher.

https://kubernetes.io/docs/concepts/overview/components/

There are several options

| OS | VIM | Description | Status | Links |
|---|---|---|---|---|
| OSX, Linux | CoreOS | On Vagrant (Thanks Yves) | in-progress | https://coreos.com/kubernetes/docs/latest/kubernetes-on-vagrant-single.html Implement OSX fix for Vagrant 1.9.6: https://github.com/mitchellh/vagrant/issues/7747 Avoid the kubectl lock: https://github.com/coreos/coreos-kubernetes/issues/886 Nexus auth issues TBD |
| OSX | Minikube on VMware Fusion | | minikube VM not restartable | https://github.com/kubernetes/minikube |
| RHEL 7.3 | Redhat Kubernetes | | services deploy, but pod IPs are not reachable, likely because of my 2 missing networks (public, onap_oam) | https://access.redhat.com/documentation/en-us/red_hat_enterprise_linux_atomic_host/7/html-single/getting_started_with_kubernetes/ |
| Ubuntu 16.04 | Rancher | | Issues registering with controller rest endpoint | http://rancher.com/docs/rancher/v1.5/en/quick-start-guide/ |

Kubernetes specific config

Dashboard

Start the Kubernetes API proxy; the dashboard is then available at http://localhost:8001/ui

| kubectl proxy & |

|---|

Nexus Docker repo Credentials

We are investigating the use of a Kubernetes image pull secret in the yaml files, see https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/
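As a rough sketch of what the linked Kubernetes task describes, the function below wraps `kubectl create secret docker-registry`. The secret name `onap-docker-registry-key` and all credential arguments are placeholders that do not come from this page; the registry host is the one shown in the image pull error under Troubleshooting.

```shell
# Sketch only: create an image pull secret for the ONAP Nexus registry in one namespace.
# SECRET_NAME is a hypothetical name; user, password and email are supplied by the caller.
NEXUS_REGISTRY="nexus3.onap.org:10001"
SECRET_NAME="onap-docker-registry-key"

create_nexus_secret() {
  # usage: create_nexus_secret <namespace> <user> <password> <email>
  if [ "$#" -ne 4 ]; then
    echo "usage: create_nexus_secret <namespace> <user> <password> <email>" >&2
    return 1
  fi
  kubectl create secret docker-registry "$SECRET_NAME" \
    --namespace="$1" \
    --docker-server="$NEXUS_REGISTRY" \
    --docker-username="$2" \
    --docker-password="$3" \
    --docker-email="$4"
}
```

A pod spec would then have to reference the secret by name under `imagePullSecrets`; whether the oom yaml files will do it this way is still open.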

CoreOS on Vagrant

(Yves alerted me to this)

https://coreos.com/kubernetes/docs/latest/kubernetes-on-vagrant-single.html

Implement OSX fix for Vagrant 1.9.6 https://github.com/mitchellh/vagrant/issues/7747

Adjust the Vagrantfile for your system, changing

    NODE_VCPUS = 1
    NODE_MEMORY_SIZE = 2048

to (for a 5820K on 64G for example)

    NODE_VCPUS = 8
    NODE_MEMORY_SIZE = 32768
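The Vagrantfile change can also be scripted. This is a minimal sketch that assumes the two settings each sit on their own line in the form `NAME = value`, as quoted above; the function name is made up for illustration.

```shell
# Sketch: set NODE_VCPUS and NODE_MEMORY_SIZE in a Vagrantfile.
# Assumes each setting is on its own line as "NAME = value".
set_node_resources() {
  # usage: set_node_resources <vagrantfile> <vcpus> <memory_mb>
  vf_file=$1
  vf_vcpus=$2
  vf_mem=$3
  vf_tmp=$(mktemp) || return 1
  # Keep everything up to and including "=", replace the rest of the line.
  sed -e "s/^\([[:space:]]*NODE_VCPUS[[:space:]]*=\).*/\1 $vf_vcpus/" \
      -e "s/^\([[:space:]]*NODE_MEMORY_SIZE[[:space:]]*=\).*/\1 $vf_mem/" \
      "$vf_file" > "$vf_tmp" || { rm -f "$vf_tmp"; return 1; }
  # Write back in place so the original file permissions are kept.
  cat "$vf_tmp" > "$vf_file"
  rm -f "$vf_tmp"
}
```

For the example machine above this would be called as `set_node_resources Vagrantfile 8 32768` from the `single-node` directory.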

    curl -O https://storage.googleapis.com/kubernetes-release/release/v1.6.1/bin/darwin/amd64/kubectl
    chmod +x kubectl
    skipped (mv kubectl /usr/local/bin/kubectl) - already there
    ls /usr/local/bin/kubectl
    git clone https://github.com/coreos/coreos-kubernetes.git
    cd coreos-kubernetes/single-node/
    vagrant box update
    sudo ln -sf /usr/local/bin/openssl /opt/vagrant/embedded/bin/openssl
    vagrant up
    Wait at least 5 min (Yves is good)
    export KUBECONFIG="${KUBECONFIG}:$(pwd)/kubeconfig"
    kubectl config use-context vagrant-single

    obrienbiometrics:single-node michaelobrien$ export KUBECONFIG="${KUBECONFIG}:$(pwd)/kubeconfig"
    obrienbiometrics:single-node michaelobrien$ kubectl config use-context vagrant-single
    Switched to context "vagrant-single".
    $ kubectl get nodes
    NAME STATUS AGE VERSION
    172.17.4.99 Ready 4h v1.5.4+coreos.0
    $ kubectl get pods --all-namespaces
    NAMESPACE NAME READY STATUS RESTARTS AGE
    kube-system heapster-v1.2.0-4088228293-3k7j1 2/2 Running 2 4h
    kube-system kube-apiserver-172.17.4.99 1/1 Running 1 4h
    kube-system kube-controller-manager-172.17.4.99 1/1 Running 1 4h
    kube-system kube-dns-782804071-jg3nl 4/4 Running 4 4h
    kube-system kube-dns-autoscaler-2715466192-k45qg 1/1 Running 1 4h
    kube-system kube-proxy-172.17.4.99 1/1 Running 1 4h
    kube-system kube-scheduler-172.17.4.99 1/1 Running 1 4h
    kube-system kubernetes-dashboard-3543765157-qtnnj 1/1 Running 1 4h
    $ kubectl get service --all-namespaces
    NAMESPACE NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE
    default kubernetes 10.3.0.1 <none> 443/TCP 4h
    kube-system heapster 10.3.0.95 <none> 80/TCP 4h
    kube-system kube-dns 10.3.0.10 <none> 53/UDP,53/TCP 4h
    kube-system kubernetes-dashboard 10.3.0.66 <none> 80/TCP 4h
    $ kubectl cluster-info
    Kubernetes master is running at https://172.17.4.99:443
    Heapster is running at https://172.17.4.99:443/api/v1/proxy/namespaces/kube-system/services/heapster
    KubeDNS is running at https://172.17.4.99:443/api/v1/proxy/namespaces/kube-system/services/kube-dns
    kubernetes-dashboard is running at https://172.17.4.99:443/api/v1/proxy/namespaces/kube-system/services/kubernetes-dashboard

    git clone ssh://michaelobrien@gerrit.onap.org:29418/oom
    cd oom/kubernetes/oneclick/
    obrienbiometrics:oneclick michaelobrien$ ./createAll.bash -n onap
    ********** Creating up ONAP: sdc aai mso message-router robot vid sdnc portal policy appc
    Creating namespaces **********
    namespace "onap-sdc" created
    Creating services **********
    service "sdc-es" created
    service "sdc-cs" created
    service "sdc-kb" created
    service "sdc-be" created
    service "sdc-fe" created
    Creating namespaces **********
    namespace "onap-aai" created
    Creating services **********
    service "hbase" created
    service "aai-service" created
    service "model-loader-service" created
    Creating namespaces **********
    namespace "onap-mso" created
    Creating services **********
    service "mariadb" created
    service "mso" created
    Creating namespaces **********
    namespace "onap-message-router" created
    Creating services **********
    service "zookeeper" created
    service "global-kafka" created
    service "dmaap" created
    Creating namespaces **********
    namespace "onap-robot" created
    Creating services **********
    service "robot" created
    Creating namespaces **********
    namespace "onap-vid" created
    Creating services **********
    service "vid-mariadb" created
    service "vid-server" created
    Creating namespaces **********
    namespace "onap-sdnc" created
    Creating services **********
    service "dbhost" created
    service "sdnctldb01" created
    service "sdnctldb02" created
    service "sdnc-dgbuilder" created
    service "sdnhost" created
    service "sdnc-portal" created
    Creating namespaces **********
    namespace "onap-portal" created
    Creating services **********
    service "portaldb" created
    service "portalapps" created
    service "vnc-portal" created
    Creating namespaces **********
    namespace "onap-policy" created
    Creating services **********
    service "mariadb" created
    service "nexus" created
    service "drools" created
    service "pap" created
    service "pdp" created
    service "pypdp" created
    service "brmsgw" created
    Creating namespaces **********
    namespace "onap-appc" created
    Creating services **********
    service "dbhost" created
    service "sdnctldb01" created
    service "sdnctldb02" created
    service "sdnhost" created
    service "dgbuilder" created
    ********** Creating deployments for sdc aai mso message-router robot vid sdnc portal policy appc **********
    SDC....
    deployment "sdc-es" created
    deployment "sdc-cs" created
    deployment "sdc-kb" created
    deployment "sdc-be" created
    deployment "sdc-fe" created
    AAI....
    deployment "hbase" created
    deployment "aai-service" created
    deployment "model-loader-service" created
    MSO....
    deployment "mariadb" created
    deployment "mso" created
    Message Router....
    deployment "zookeeper" created
    deployment "global-kafka" created
    deployment "dmaap" created
    Robot....
    deployment "robot" created
    VID....
    deployment "vid-mariadb" created
    deployment "vid-server" created
    SDNC....
    deployment "sdnc-dbhost" created
    deployment "sdnc" created
    deployment "sdnc-dgbuilder" created
    deployment "sdnc-portal" created
    Portal....
    deployment "portaldb" created
    deployment "portalapps" created
    deployment "vnc-portal" created
    Policy....
    deployment "mariadb" created
    deployment "nexus" created
    deployment "pap" created
    deployment "pdp" created
    deployment "brmsgw" created
    deployment "pypdp" created
    deployment "drools" created
    App-c....
    deployment "appc-dbhost" created
    deployment "appc" created
    deployment "appc-dgbuilder" created
    **** Done ****

    obrienbiometrics:oneclick michaelobrien$ kubectl get service --all-namespaces
    NAMESPACE NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE
    default kubernetes 10.3.0.1 <none> 443/TCP 4h
    kube-system heapster 10.3.0.95 <none> 80/TCP 4h
    kube-system kube-dns 10.3.0.10 <none> 53/UDP,53/TCP 4h
    kube-system kubernetes-dashboard 10.3.0.66 <none> 80/TCP 4h
    onap-aai aai-service 10.3.0.48 <nodes> 8443:30233/TCP,8080:30232/TCP 34s
    onap-aai hbase None <none> 8020/TCP 34s
    onap-aai model-loader-service 10.3.0.188 <nodes> 8443:30229/TCP,8080:30210/TCP 34s
    onap-appc dbhost None <none> 3306/TCP 31s
    onap-appc dgbuilder 10.3.0.38 <nodes> 3000:30228/TCP 31s
    onap-appc sdnctldb01 None <none> 3306/TCP 31s
    onap-appc sdnctldb02 None <none> 3306/TCP 31s
    onap-appc sdnhost 10.3.0.158 <nodes> 8282:30230/TCP,1830:30231/TCP 31s
    onap-message-router dmaap 10.3.0.55 <nodes> 3904:30227/TCP,3905:30226/TCP 33s
    onap-message-router global-kafka None <none> 9092/TCP 33s
    onap-message-router zookeeper None <none> 2181/TCP 33s
    onap-mso mariadb 10.3.0.208 <nodes> 3306:30252/TCP 34s
    onap-mso mso 10.3.0.129 <nodes> 8080:30223/TCP,3904:30225/TCP,3905:30224/TCP,9990:30222/TCP,8787:30250/TCP 33s
    onap-policy brmsgw 10.3.0.46 <nodes> 9989:30216/TCP 31s
    onap-policy drools 10.3.0.252 <nodes> 6969:30217/TCP 31s
    onap-policy mariadb None <none> 3306/TCP 31s
    onap-policy nexus None <none> 8081/TCP 31s
    onap-policy pap 10.3.0.39 <nodes> 8443:30219/TCP,9091:30218/TCP 31s
    onap-policy pdp 10.3.0.28 <nodes> 8081:30220/TCP 31s
    onap-policy pypdp 10.3.0.242 <nodes> 8480:30221/TCP 31s
    onap-portal portalapps 10.3.0.130 <nodes> 8006:30213/TCP,8010:30214/TCP,8989:30215/TCP 32s
    onap-portal portaldb None <none> 3306/TCP 32s
    onap-portal vnc-portal 10.3.0.236 <nodes> 6080:30211/TCP,5900:30212/TCP 32s
    onap-robot robot 10.3.0.79 <nodes> 88:30209/TCP 33s
    onap-sdc sdc-be 10.3.0.186 <nodes> 8443:30204/TCP,8080:30205/TCP 34s
    onap-sdc sdc-cs None <none> 9042/TCP,9160/TCP 34s
    onap-sdc sdc-es None <none> 9200/TCP,9300/TCP 34s
    onap-sdc sdc-fe 10.3.0.120 <nodes> 9443:30207/TCP,8181:30206/TCP 34s
    onap-sdc sdc-kb None <none> 5601/TCP 34s
    onap-sdnc dbhost None <none> 3306/TCP 32s
    onap-sdnc sdnc-dgbuilder 10.3.0.104 <nodes> 3000:30203/TCP 32s
    onap-sdnc sdnc-portal 10.3.0.240 <nodes> 8843:30201/TCP 32s
    onap-sdnc sdnctldb01 None <none> 3306/TCP 32s
    onap-sdnc sdnctldb02 None <none> 3306/TCP 32s
    onap-sdnc sdnhost 10.3.0.33 <nodes> 8282:30202/TCP 32s
    onap-vid vid-mariadb None <none> 3306/TCP 33s
    onap-vid vid-server 10.3.0.31 <nodes> 8080:30200/TCP 32s

    obrienbiometrics:oneclick michaelobrien$ kubectl get pods --all-namespaces
    NAMESPACE NAME READY STATUS RESTARTS AGE
    kube-system heapster-v1.2.0-4088228293-3k7j1 2/2 Running 2 4h
    kube-system kube-apiserver-172.17.4.99 1/1 Running 1 4h
    kube-system kube-controller-manager-172.17.4.99 1/1 Running 1 4h
    kube-system kube-dns-782804071-jg3nl 4/4 Running 4 4h
    kube-system kube-dns-autoscaler-2715466192-k45qg 1/1 Running 1 4h
    kube-system kube-proxy-172.17.4.99 1/1 Running 1 4h
    kube-system kube-scheduler-172.17.4.99 1/1 Running 1 4h
    kube-system kubernetes-dashboard-3543765157-qtnnj 1/1 Running 1 4h
    onap-aai aai-service-346921785-w3r22 0/1 Init:0/1 0 1m
    onap-aai hbase-139474849-86brc 0/1 ContainerCreating 0 1m
    onap-aai model-loader-service-1795708961-k3824 0/1 Init:0/2 0 1m
    onap-appc appc-2044062043-w4bpk 0/1 Init:0/1 0 56s
    onap-appc appc-dbhost-2039492951-bzjcl 0/1 ContainerCreating 0 56s
    onap-appc appc-dgbuilder-2934720673-0qmkl 0/1 Init:0/1 0 56s
    onap-message-router dmaap-3842712241-5rp5p 0/1 Init:0/1 0 1m
    onap-message-router global-kafka-89365896-92mwd 0/1 Init:0/1 0 1m
    onap-message-router zookeeper-1406540368-hgtfj 0/1 ContainerCreating 0 1m
    onap-mso mariadb-2638235337-zc9bg 0/1 ContainerCreating 0 1m
    onap-mso mso-3192832250-9kxl9 0/1 Init:0/1 0 1m
    onap-policy brmsgw-568914601-g6mtq 0/1 Init:0/1 0 57s
    onap-policy drools-1450928085-xnffx 0/1 Init:0/1 0 56s
    onap-policy mariadb-2932363958-2jxf9 0/1 ContainerCreating 0 58s
    onap-policy nexus-871440171-21vzr 0/1 Init:0/1 0 58s
    onap-policy pap-2218784661-2fdkg 0/1 Init:0/2 0 57s
    onap-policy pdp-1677094700-16jd3 0/1 Init:0/1 0 57s
    onap-policy pypdp-3209460526-gv25r 0/1 Init:0/1 0 56s
    onap-portal portalapps-1708810953-wr4l3 0/1 Init:0/2 0 58s
    onap-portal portaldb-3652211058-xk4s4 0/1 ContainerCreating 0 59s
    onap-portal vnc-portal-948446550-nv6hj 0/1 Init:0/5 0 58s
    onap-robot robot-964706867-4vnlf 0/1 ContainerCreating 0 1m
    onap-sdc sdc-be-2426613560-pq2ds 0/1 Init:0/2 0 1m
    onap-sdc sdc-cs-2080334320-ffgs6 0/1 Init:0/1 0 1m
    onap-sdc sdc-es-3272676451-cp3ls 0/1 ImagePullBackOff 0 1m
    onap-sdc sdc-fe-931927019-2tgkv 0/1 Init:0/1 0 1m
    onap-sdc sdc-kb-3337231379-v46zd 0/1 Init:0/1 0 1m
    onap-sdnc sdnc-1788655913-0z2wq 0/1 Init:0/1 0 1m
    onap-sdnc sdnc-dbhost-240465348-gfc32 0/1 ContainerCreating 0 1m
    onap-sdnc sdnc-dgbuilder-4164493163-s0v1s 0/1 Init:0/1 0 59s
    onap-sdnc sdnc-portal-2324831407-whp7d 0/1 Init:0/1 0 59s
    onap-vid vid-mariadb-4268497828-8hg7t 0/1 ContainerCreating 0 1m
    onap-vid vid-server-2331936551-3zz6j 0/1 Init:0/1 0 1m
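With this many pods it helps to condense the listing. The helper below is a small sketch (the function name is made up) that counts pods per STATUS; it assumes the six-column layout that `kubectl get pods --all-namespaces` prints in the session above, where STATUS is the fourth column.

```shell
# Sketch: count pods per STATUS from `kubectl get pods --all-namespaces` output on stdin.
pod_status_summary() {
  # NR > 1 skips the header row; column 4 is STATUS in the --all-namespaces layout.
  awk 'NR > 1 && NF >= 4 { count[$4]++ }
       END { for (s in count) print count[s], s }' | sort -k2
}
```

Usage would be `kubectl get pods --all-namespaces | pod_status_summary`, repeated until only `Running` remains.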

Ubuntu 16.04 Install Session

Install Rancher

http://rancher.com/docs/rancher/v1.5/en/quick-start-guide/

http://rancher.com/docs/rancher/v1.6/en/installing-rancher/installing-server/#single-container

Install a Docker version that both Rancher and Kubernetes support, which is currently 1.12.3

http://rancher.com/docs/rancher/v1.5/en/hosts/#supported-docker-versions

curl https://releases.rancher.com/install-docker/1.12.sh | sh |

|---|

Verify your Rancher admin console is up on the external port you configured above

Wait for the docker container to finish DB startup
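Waiting for the console can be scripted with a generic retry helper. This is only a sketch: `wait_until` is a made-up name, and port 8080 in the usage comment is an assumption, so substitute the external port you mapped for the Rancher server.

```shell
# Sketch: retry a command until it succeeds or the attempts run out.
wait_until() {
  # usage: wait_until <attempts> <delay_seconds> <command> [args...]
  wu_attempts=$1
  wu_delay=$2
  shift 2
  wu_i=1
  while :; do
    # Success of the probed command ends the wait immediately.
    "$@" >/dev/null 2>&1 && return 0
    # Give up once the last allowed attempt has failed.
    [ "$wu_i" -ge "$wu_attempts" ] && return 1
    wu_i=$((wu_i + 1))
    sleep "$wu_delay"
  done
}

# Example (port 8080 is an assumption):
# wait_until 60 5 curl -sf http://localhost:8080/
```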

Having issues registering a combined single VM (controller + host) - moving on to using 2 VMs

http://rancher.com/docs/rancher/v1.6/en/hosts/

ONAP Installation

Clone

Install the latest version of the OOM (ONAP Operations Manager) project repo - specifically the ONAP on Kubernetes work uploaded in June 2017

https://gerrit.onap.org/r/gitweb?p=oom.git

    git clone ssh://michaelobrien@gerrit.onap.org:29418/oom
    cd oom/kubernetes/oneclick
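The clone command above embeds one particular Gerrit account. A tiny sketch for building the same URL with your own account follows; the function name and the `your_gerrit_id` placeholder are illustrative only.

```shell
# Sketch: build the ssh clone URL for the oom repo from a Gerrit username.
gerrit_clone_url() {
  # usage: gerrit_clone_url <gerrit_username>
  printf 'ssh://%s@gerrit.onap.org:29418/oom\n' "$1"
}

# Example:
# git clone "$(gerrit_clone_url your_gerrit_id)" && cd oom/kubernetes/oneclick
```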

OSX

Minikube (not in use)

    curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/darwin/amd64/kubectl
    chmod +x ./kubectl
    sudo mv ./kubectl /usr/local/bin/kubectl
    kubectl cluster-info
    kubectl completion -h
    brew install bash-completion
    curl -Lo minikube https://storage.googleapis.com/minikube/releases/v0.19.0/minikube-darwin-amd64 && chmod +x minikube && sudo mv minikube /usr/local/bin/
    minikube start --vm-driver=vmwarefusion
    kubectl run hello-minikube --image=gcr.io/google_containers/echoserver:1.4 --port=8080
    kubectl expose deployment hello-minikube --type=NodePort
    kubectl get pod
    curl $(minikube service hello-minikube --url)
    minikube stop

Redhat 7.3

Running ONAP Kubernetes services in a single VM using Redhat Kubernetes for RHEL 7.3

Redhat provides 2 docker containers for the scheduler and NBI components and spins up 2 pod containers (the number is scalable) for use by ONAP.

[root@obrien-mbp oneclick]# docker ps |

|---|

Kubernetes setup

    Uninstall docker-se (we installed earlier)
    subscription-manager repos --enable=rhel-7-server-optional-rpms
    [root@obrien-mbp opt]# ./kubestart.sh
    Jun 27 14:26:08 obrien-mbp.onap.org dockerd-current[90732]: time="2017-06-27T14:26:08.923309259-07:00" level=info msg="[graphdriver] using prior storage driver \"overlay\""
    Jun 27 14:26:09 obrien-mbp.onap.org systemd[1]: Started Kubernetes Kube-Proxy Server.
    Jun 27 14:26:09 obrien-mbp.onap.org systemd[1]: Started Kubernetes Kubelet Server.

Provision

Manually

Start a service

In this case robot - to check your Kubernetes installation.

    [root@obrien-mbp oneclick]# ./createAll.bash -n onap -a robot
    ********** Creating up ONAP: robot
    Creating namespaces **********
    Creating services **********
    ********** Creating deployments for robot **********
    Robot....
    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

Kubernetes Rest api

{ |

|---|
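The same REST API can be reached with plain `curl` through `kubectl proxy`. The sketch below assumes the proxy from the Dashboard section is listening on localhost:8001; the function name is hypothetical and the `onap-robot` namespace in the usage comment is the one created by the robot deployment.

```shell
# Sketch: list the pods of one namespace as JSON via the kubectl proxy.
# Assumes `kubectl proxy &` is already running on its default port 8001.
K8S_PROXY_URL="http://localhost:8001"

list_pods_json() {
  # usage: list_pods_json <namespace>
  curl -s "$K8S_PROXY_URL/api/v1/namespaces/$1/pods"
}

# Example:
# list_pods_json onap-robot
```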

Pod List

In verification

[root@obrien-mbp oneclick]# ./createAll.bash -n onap

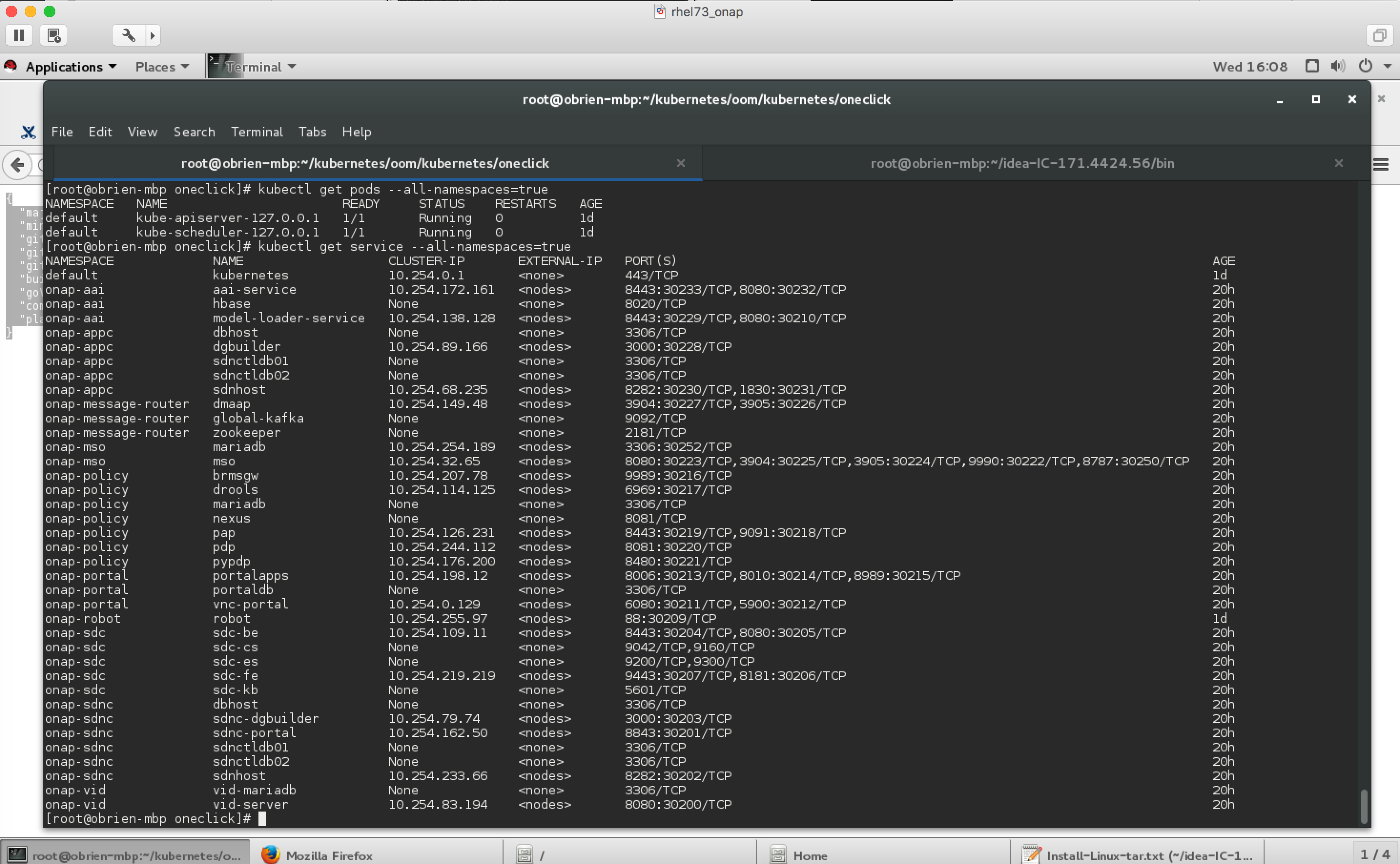

[root@obrien-mbp oneclick]# kubectl get service --all-namespaces=true

NAMESPACE NAME CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default kubernetes 10.254.0.1 <none> 443/TCP 5h

onap-aai aai-service 10.254.172.161 <nodes> 8443:30233/TCP,8080:30232/TCP 1m

onap-aai hbase None <none> 8020/TCP 1m

onap-aai model-loader-service 10.254.138.128 <nodes> 8443:30229/TCP,8080:30210/TCP 1m

onap-appc dbhost None <none> 3306/TCP 1m

onap-appc dgbuilder 10.254.89.166 <nodes> 3000:30228/TCP 1m

onap-appc sdnctldb01 None <none> 3306/TCP 1m

onap-appc sdnctldb02 None <none> 3306/TCP 1m

onap-appc sdnhost 10.254.68.235 <nodes> 8282:30230/TCP,1830:30231/TCP 1m

onap-message-router dmaap 10.254.149.48 <nodes> 3904:30227/TCP,3905:30226/TCP 1m

onap-message-router global-kafka None <none> 9092/TCP 1m

onap-message-router zookeeper None <none> 2181/TCP 1m

onap-mso mariadb 10.254.254.189 <nodes> 3306:30252/TCP 1m

onap-mso mso 10.254.32.65 <nodes> 8080:30223/TCP,3904:30225/TCP,3905:30224/TCP,9990:30222/TCP,8787:30250/TCP 1m

onap-policy brmsgw 10.254.207.78 <nodes> 9989:30216/TCP 1m

onap-policy drools 10.254.114.125 <nodes> 6969:30217/TCP 1m

onap-policy mariadb None <none> 3306/TCP 1m

onap-policy nexus None <none> 8081/TCP 1m

onap-policy pap 10.254.126.231 <nodes> 8443:30219/TCP,9091:30218/TCP 1m

onap-policy pdp 10.254.244.112 <nodes> 8081:30220/TCP 1m

onap-policy pypdp 10.254.176.200 <nodes> 8480:30221/TCP 1m

onap-portal portalapps 10.254.198.12 <nodes> 8006:30213/TCP,8010:30214/TCP,8989:30215/TCP 1m

onap-portal portaldb None <none> 3306/TCP 1m

onap-portal vnc-portal 10.254.0.129 <nodes> 6080:30211/TCP,5900:30212/TCP 1m

onap-robot robot 10.254.255.97 <nodes> 88:30209/TCP 5h

onap-sdc sdc-be 10.254.109.11 <nodes> 8443:30204/TCP,8080:30205/TCP 1m

onap-sdc sdc-cs None <none> 9042/TCP,9160/TCP 1m

onap-sdc sdc-es None <none> 9200/TCP,9300/TCP 1m

onap-sdc sdc-fe 10.254.219.219 <nodes> 9443:30207/TCP,8181:30206/TCP 1m

onap-sdc sdc-kb None <none> 5601/TCP 1m

onap-sdnc dbhost None <none> 3306/TCP 1m

onap-sdnc sdnc-dgbuilder 10.254.79.74 <nodes> 3000:30203/TCP 1m

onap-sdnc sdnc-portal 10.254.162.50 <nodes> 8843:30201/TCP 1m

onap-sdnc sdnctldb01 None <none> 3306/TCP 1m

onap-sdnc sdnctldb02 None <none> 3306/TCP 1m

onap-sdnc sdnhost 10.254.233.66 <nodes> 8282:30202/TCP 1m

onap-vid vid-mariadb None <none> 3306/TCP 1m

onap-vid vid-server 10.254.83.194 <nodes> 8080:30200/TCP 1m
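To find which host port exposes a given service, the PORT(S) column of the listing above can be split into port and NodePort pairs. A minimal sketch, assuming the six-column `--all-namespaces` layout shown here (the function name is made up):

```shell
# Sketch: print "namespace service port nodeport" for every NodePort mapping
# found in `kubectl get service --all-namespaces` output on stdin.
list_node_ports() {
  awk 'NF >= 6 {
    n = split($5, ports, ",")
    for (i = 1; i <= n; i++) {
      # NodePort entries look like 8443:30233/TCP (3 parts);
      # cluster-only entries look like 8020/TCP (2 parts) and are skipped.
      if (split(ports[i], p, "[:/]") == 3) print $1, $2, p[1], p[2]
    }
  }'
}
```

Usage would be `kubectl get service --all-namespaces | list_node_ports`; blank lines and the header row are ignored.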

Troubleshooting

Docker Nexus Config

Out of the box we can't pull images - currently working on a config step

Failed to pull image "nexus3.onap.org:10001/openecomp/testsuite:1.0-STAGING-latest": image pull failed for nexus3.onap.org:10001/openecomp/testsuite:1.0-STAGING-latest, this may be because there are no credentials on this request. details: (unauthorized: authentication required)

kubelet 172.17.4.99
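To see which pods are affected by this, the pod listing can be filtered for pull failures. This is a sketch with a made-up function name; it matches the `ImagePullBackOff` status seen in the CoreOS session and also `ErrImagePull`, the status Kubernetes reports before it starts backing off.

```shell
# Sketch: print "namespace pod" for pods whose image could not be pulled,
# reading `kubectl get pods --all-namespaces` output on stdin.
image_pull_failures() {
  awk '$4 == "ImagePullBackOff" || $4 == "ErrImagePull" { print $1, $2 }'
}

# Example: show the events of each failing pod.
# kubectl get pods --all-namespaces | image_pull_failures |
#   while read -r ns pod; do kubectl describe pod "$pod" --namespace="$ns"; done
```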

OOM Repo changes

20170629: fix on 20170626 on a hardcoded proxy - (for those who run outside the firewall) - https://gerrit.onap.org/r/gitweb?p=oom.git;a=commitdiff;h=131c2a42541fb807f395fe1f39a8482a53f92c60