- Problem Statement and Requirement (User Story): -

As an ONAP operator, we need the ability to back up and restore ONAP state data, and we want a disaster recovery solution for ONAP deployments running on Kubernetes (K8s).

The basic use case is: -

1) Add/Update/Modify the POD Data or DB Data.

2) Simulate a Disaster

3) Restore using Backup.

4) POD Data/DB entries should be recovered.
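
The four steps above map onto a short command sequence. The sketch below is illustrative only: the function name `dr_cycle`, the helper `run` and the `DRY_RUN` switch are assumptions made for this example, while the `ark` and `helm` commands are the ones used in the walkthrough later on this page. By default it only prints the commands instead of running them.

```shell
#!/bin/bash
# Sketch of the backup -> disaster -> restore cycle.
# dr_cycle, run and DRY_RUN are hypothetical names used only in this example.

DRY_RUN="${DRY_RUN:-1}"   # 1 = print commands only, 0 = execute them

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# dr_cycle <helm-release> <backup-name>
dr_cycle() {
    local release="$1" backup="$2"
    # Back up every resource labelled with the Helm release name.
    run ark backup create "$backup" --selector "release=$release"
    # Simulate the disaster by removing the release.
    run helm delete --purge "$release"
    # Restore from the backup taken above.
    run ark restore create --from-backup "$backup"
}
```

For example, `dr_cycle bkup so-backup` prints the three commands used in the SO example. In a real run the backup should be confirmed as Completed before the release is deleted.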

- Solution Description: -

We narrowed the choice down to Heptio Ark, a tool that can back up and restore Kubernetes resources for ONAP deployments.

Ark is an open source tool to back up and restore your Kubernetes cluster resources and persistent volumes. Ark lets you:

- Take backups of your cluster and restore in case of loss.

- Copy cluster resources across cloud providers. NOTE: Cloud volume migrations are not yet supported.

- Replicate your production environment for development and testing environments.

Ark consists of:

- A server that runs on your cluster

- A command-line client that runs locally

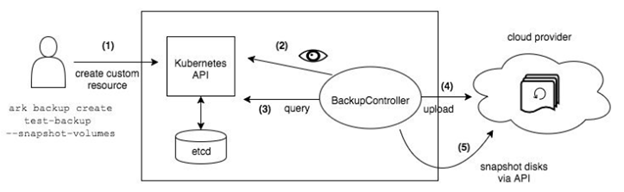

Working Flow diagram: -

- Installation: -

Prerequisites

- Access to a Kubernetes cluster, version 1.7 or later.

- A DNS server on the cluster

- kubectl installed

- Labels defined on every resource that is to be backed up.
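
The first and third prerequisites can be checked before running the setup script. The helper below is a minimal sketch: the function names `version_ge` and `have_kubectl` are assumptions made for this example, and `version_ge` only compares plain numeric `major.minor` strings (the caller is expected to strip any suffix such as `+` from the reported server version).

```shell
#!/bin/bash
# Hypothetical pre-flight helpers; names are assumptions for this example.

# version_ge <have> <want>: succeeds when <have> >= <want> (major.minor only).
version_ge() {
    local have_major have_minor want_major want_minor
    IFS=. read -r have_major have_minor _ <<< "$1"
    IFS=. read -r want_major want_minor _ <<< "$2"
    have_minor="${have_minor:-0}"
    want_minor="${want_minor:-0}"
    if [ "$have_major" -ne "$want_major" ]; then
        [ "$have_major" -gt "$want_major" ]
    else
        [ "$have_minor" -ge "$want_minor" ]
    fi
}

# have_kubectl: succeeds when kubectl is on the PATH.
have_kubectl() {
    command -v kubectl >/dev/null 2>&1
}
```

A call such as `version_ge 1.10 1.7` succeeds, while `version_ge 1.6 1.7` fails, which matches the "1.7 or later" requirement.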

Script Delivered: -

https://jira.onap.org/secure/attachment/12222/oom_ark_setup.sh

- The script below sets up both the Ark server and the Ark client. It uses Minio, an S3-compatible storage service that runs locally on your cluster, but it can be modified to suit your cloud provider.

#!/bin/bash

BASE_DIR=$(pwd)

ARK_VERSION=0.9.3

#Download the Ark repo

git clone https://github.com/heptio/ark.git

#Apply the prerequisites

kubectl apply -f "$BASE_DIR/ark/examples/common/00-prereqs.yaml"

#Run the Ark pod deployment

kubectl apply -f "$BASE_DIR/ark/examples/minio/"

#Download the client and make it executable

cd ark || exit 1

wget "https://github.com/heptio/ark/releases/download/v${ARK_VERSION}/ark-v${ARK_VERSION}-linux-amd64.tar.gz"

sudo tar -zxvf "ark-v${ARK_VERSION}-linux-amd64.tar.gz"

sudo chmod +x ./ark

sudo mv ./ark /usr/local/bin/ark

exit 0
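
After the script finishes, it can be useful to confirm that the pods in the `heptio-ark` namespace came up. The filter below is a small sketch, not part of the delivered script: the function name `not_ready_pods` is an assumption, and it expects the default table output of `kubectl get pod` for a single namespace (NAME, READY, STATUS, ...).

```shell
#!/bin/bash
# not_ready_pods: reads `kubectl get pod` table output on stdin and prints
# the name of every pod whose STATUS is neither Running nor Completed.
# Hypothetical helper name; assumes STATUS is the third column.
not_ready_pods() {
    awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { print $1 }'
}

# Intended use (requires cluster access, so it is not executed here):
#   kubectl -n heptio-ark get pod | not_ready_pods
```

An empty result means every pod is either Running or has Completed, as in the `minio-setup` job pod.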

Code Delivered:-

Labels must be defined because they are the unique identifiers Ark uses to select the Kubernetes resources to back up.

So in the OOM code, wherever labels are missing, we need to define them, whether on a ConfigMap or a Secret, e.g.:-

labels:

  app: {{ include "common.name" . }}

  chart: {{ .Chart.Name }}-{{ .Chart.Version | replace "+" "_" }}

  release: {{ .Release.Name }}

  heritage: {{ .Release.Service }}
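
To find resources that still lack the `release` label, the label column of `kubectl get` can be filtered. This is a sketch under assumptions: the function name `missing_release_label` is made up for this example, and it assumes the input comes from `kubectl get <kind> --show-labels --no-headers`, where the labels are the last column.

```shell
#!/bin/bash
# missing_release_label: reads `kubectl get <kind> --show-labels --no-headers`
# output on stdin and prints the names of resources without a release label.
# Hypothetical helper name; the LABELS column is assumed to be the last one.
missing_release_label() {
    awk '$NF !~ /(^|,)release=/ { print $1 }'
}

# Intended use (requires cluster access, so it is not executed here):
#   kubectl -n test3 get configmap --show-labels --no-headers | missing_release_label
```

Any name it prints would be skipped by a backup created with `--selector release=<name>`.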

- Running ARK Example (Backup and Restoration with Logs): -

1) INSTALL SO COMPONENT:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# helm install so -n bkup --namespace test3

NAME: bkup

LAST DEPLOYED: Fri Jul 20 06:59:09 2018

NAMESPACE: test3

STATUS: DEPLOYED

RESOURCES:

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

bkup-so-db-744fccd888-w67zk 0/1 Init:0/1 0 0s

bkup-so-7668c746c-vngk8 0/2 Init:0/1 0 0s

==> v1/Secret

NAME TYPE DATA AGE

bkup-so-db Opaque 1 0s

==> v1/ConfigMap

NAME DATA AGE

confd-configmap 1 0s

so-configmap 5 0s

so-docker-file-configmap 1 0s

so-filebeat-configmap 1 0s

so-log-configmap 11 0s

==> v1/PersistentVolume

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

bkup-so-db 2Gi RWX Retain Bound test3/bkup-so-db 0s

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

bkup-so-db Bound bkup-so-db 2Gi RWX 0s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

so-db NodePort 10.43.63.96 <none> 3306:30252/TCP 0s

so NodePort 10.43.59.93 <none> 8080:30223/TCP,3904:30225/TCP,3905:30224/TCP,9990:30222/TCP,8787:30250/TCP 0s

==> v1beta1/Deployment

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

bkup-so-db 1 1 1 0 0s

bkup-so 1 1 1 0 0s

NOTES:

1. Get the application URL by running these commands:

export NODE_PORT=$(kubectl get --namespace test3 -o jsonpath="{.spec.ports[0].nodePort}" services so)

export NODE_IP=$(kubectl get nodes --namespace test3 -o jsonpath="{.items[0].status.addresses[0].address}")

echo http://$NODE_IP:$NODE_PORT

2) CHECKING STATUS OF POD:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# kubectl get pods --all-namespaces | grep -i so

NAMESPACE NAME READY STATUS RESTARTS AGE

test3 bkup-so-7668c746c-vngk8 2/2 Running 0 8m

test3 bkup-so-db-744fccd888-w67zk 1/1 Running 0 8m

root@k8s-1:/vaibhav/backup/oom/kubernetes#

3) CREATING BACKUP OF DEPLOYMENT:-

Here the release name is used as the label selector.

root@k8s-1:/vaibhav/backup/oom/kubernetes# ark backup create so-backup --selector release=bkup

Backup request "so-backup" submitted successfully.

Run `ark backup describe so-backup` for more details.

root@k8s-1:/vaibhav/backup/oom/kubernetes#

4) CHECKING BACKUP LOGS:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# ark backup describe so-backup

Name: so-backup

Namespace: heptio-ark

Labels: <none>

Annotations: <none>

Phase: Completed

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: <none>

Cluster-scoped: auto

Label selector: release=bkup

Snapshot PVs: auto

TTL: 720h0m0s

Hooks: <none>

Backup Format Version: 1

Started: 2018-07-20 07:09:51 +0000 UTC

Completed: 2018-07-20 07:09:53 +0000 UTC

Expiration: 2018-08-19 07:09:51 +0000 UTC

Validation errors: <none>

Persistent Volumes: <none included>
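
Because the backup request is asynchronous, a script should wait for the `Phase` field to reach `Completed` before simulating a disaster. The sketch below parses the `ark backup describe` output shown above. The function names are assumptions made for this example, and the handling of `Failed` and `FailedValidation` phases is based on my understanding of Ark's backup phases rather than on this page.

```shell
#!/bin/bash
# backup_phase: reads `ark backup describe` output on stdin and prints
# the value of the Phase field.
backup_phase() {
    awk '$1 == "Phase:" { print $2 }'
}

# wait_for_backup <name> [tries] [delay-seconds]
# Polls until the backup is Completed. Hypothetical helper; it needs the
# ark client and cluster access, so it is only defined here, not called.
wait_for_backup() {
    local name="$1" tries="${2:-30}" delay="${3:-2}" phase
    while [ "$tries" -gt 0 ]; do
        phase="$(ark backup describe "$name" | backup_phase)"
        case "$phase" in
            Completed) return 0 ;;
            Failed|FailedValidation) return 1 ;;
        esac
        sleep "$delay"
        tries=$((tries - 1))
    done
    return 1
}
```

A typical call would be `wait_for_backup so-backup && helm delete --purge bkup`.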

5) SIMULATING A DISASTER:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# helm delete --purge bkup

release "bkup" deleted

6) RESTORING THE DEPLOYMENT FROM BACKUP USING ARK:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# ark restore create --from-backup so-backup

Restore request "so-backup-20180720071236" submitted successfully.

Run `ark restore describe so-backup-20180720071236` for more details.

root@k8s-1:/vaibhav/backup/oom/kubernetes#

7) CHECKING RESTORATION LOGS:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# ark restore describe so-backup-20180720071236

Name: so-backup-20180720071236

Namespace: heptio-ark

Labels: <none>

Annotations: <none>

Backup: so-backup

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: nodes, events, events.events.k8s.io, backups.ark.heptio.com, restores.ark.heptio.com

Cluster-scoped: auto

Namespace mappings: <none>

Label selector: <none>

Restore PVs: auto

Phase: Completed

Validation errors: <none>

Warnings: <none>

Errors: <none>

8) CHECK TARBALL:-

root@k8s-1:/vaibhav/backup/resources# tree

.

├── configmaps

│   └── namespaces

│       └── test3

│           ├── confd-configmap.json

│           ├── so-configmap.json

│           ├── so-docker-file-configmap.json

│           ├── so-filebeat-configmap.json

│           └── so-log-configmap.json

├── deployments.apps

│   └── namespaces

│       └── test3

│           ├── bkup-so-db.json

│           └── bkup-so.json

├── endpoints

│   └── namespaces

│       └── test3

│           ├── so-db.json

│           └── so.json

├── persistentvolumeclaims

│   └── namespaces

│       └── test3

│           └── bkup-so-db.json

├── persistentvolumes

│   └── cluster

│       └── bkup-so-db.json

├── pods

│   └── namespaces

│       └── test3

│           ├── bkup-so-7668c746c-vngk8.json

│           └── bkup-so-db-744fccd888-w67zk.json

├── replicasets.apps

│   └── namespaces

│       └── test3

│           ├── bkup-so-7668c746c.json

│           └── bkup-so-db-744fccd888.json

├── secrets

│   └── namespaces

│       └── test3

│           └── bkup-so-db.json

└── services

    └── namespaces

        └── test3

            ├── so-db.json

            └── so.json

26 directories, 18 files

9) RESTORE RUN :-

root@k8s-1:/vaibhav/backup/oom/kubernetes# ark restore get

NAME BACKUP STATUS WARNINGS ERRORS CREATED SELECTOR

so-backup-20180720071236 so-backup Completed 0 0 2018-07-20 07:12:36 +0000 UTC <none>

10) CHECK THE POD STATUS:-

root@k8s-1:/vaibhav/backup/oom/kubernetes# kubectl get pods --all-namespaces | grep -i so

NAMESPACE NAME READY STATUS RESTARTS AGE

test3 bkup-so-7668c746c-vngk8 2/2 Running 0 8m

test3 bkup-so-db-744fccd888-w67zk 1/1 Running 0 8m

Another Example with DB and PV Backup:-

** APPC COMPONENT BACKUP AND RESTORATION **

root@rancher:~/oom/kubernetes# kubectl get pods --all-namespaces | grep -i appc

onap bk-appc-0 1/2 Running 0 1m

onap bk-appc-cdt-7cd6f6d674-5thwj 1/1 Running 0 1m

onap bk-appc-db-0 2/2 Running 0 1m

onap bk-appc-dgbuilder-59895d4d69-7rp9q 1/1 Running 0 1m

** CREATING DUMMY ENTRY IN DB **

root@rancher:~/oom/kubernetes# kubectl exec -it -n default bk-appc-db-0 bash

Defaulting container name to appc-db.

Use 'kubectl describe pod/bk-appc-db-0 -n onap' to see all of the containers in this pod.

root@bk-appc-db-0:/# mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 42

Server version: 5.7.23-log MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> connect mysql

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Connection id: 44

Current database: mysql

mysql> select * from servers;

Empty set (0.00 sec)

mysql> desc servers;

+-------------+----------+------+-----+---------+-------+

| Field       | Type     | Null | Key | Default | Extra |

+-------------+----------+------+-----+---------+-------+

| Server_name | char(64) | NO   | PRI |         |       |

| Host        | char(64) | NO   |     |         |       |

| Db          | char(64) | NO   |     |         |       |

| Username    | char(64) | NO   |     |         |       |

| Password    | char(64) | NO   |     |         |       |

| Port        | int(4)   | NO   |     | 0       |       |

| Socket      | char(64) | NO   |     |         |       |

| Wrapper     | char(64) | NO   |     |         |       |

| Owner       | char(64) | NO   |     |         |       |

+-------------+----------+------+-----+---------+-------+

9 rows in set (0.00 sec)

mysql> insert into servers values ("test","ab","sql","user","pwd",1234,"test","wrp","vaib");

Query OK, 1 row affected (0.03 sec)

mysql> select * from servers;

+-------------+------+-----+----------+----------+------+--------+---------+-------+

| Server_name | Host | Db  | Username | Password | Port | Socket | Wrapper | Owner |

+-------------+------+-----+----------+----------+------+--------+---------+-------+

| abc         | ab   | sql | user     | pwd      | 1234 | test   | wrp     | vaib  |

+-------------+------+-----+----------+----------+------+--------+---------+-------+

1 row in set (0.00 sec)

mysql> exit

Bye

root@bk-appc-db-0:/# exi

bash: exi: command not found

root@bk-appc-db-0:/# exit

exit

command terminated with exit code 127

root@rancher:~/oom/kubernetes# kubectl get pods --all-namespaces | grep -i appc

onap bk-appc-0 1/2 Running 0 5m

onap bk-appc-cdt-7cd6f6d674-5thwj 1/1 Running 0 5m

onap bk-appc-db-0 2/2 Running 0 5m

onap bk-appc-dgbuilder-59895d4d69-7rp9q 1/1 Running 0 5m

** CREATING DUMMY FILE IN APPC PV **

root@rancher:~/oom/kubernetes# kubectl exec -it -n onap bk-appc-0 bash

Defaulting container name to appc.

Use 'kubectl describe pod/bk-appc-0 -n onap' to see all of the containers in this pod.

root@bk-appc-0:/# cd /opt/opendaylight/current/daexim/

root@bk-appc-0:/opt/opendaylight/current/daexim# ls

root@bk-appc-0:/opt/opendaylight/current/daexim# touch abc.txt

root@bk-appc-0:/opt/opendaylight/current/daexim# ls

abc.txt

root@bk-appc-0:/opt/opendaylight/current/daexim# exit

exit

root@rancher:~/oom/kubernetes# kubectl get pods --all-namespaces | grep -i appc

onap bk-appc-0 1/2 Running 0 6m

onap bk-appc-cdt-7cd6f6d674-5thwj 1/1 Running 0 6m

onap bk-appc-db-0 2/2 Running 0 6m

onap bk-appc-dgbuilder-59895d4d69-7rp9q 1/1 Running 0 6m

root@rancher:~/oom/kubernetes#
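
The marker file can also be created and checked without an interactive shell, which makes the before/after comparison scriptable. The sketch below only prints the commands. The function names and variables are assumptions made for this example, while the namespace, pod and path are taken from the session above.

```shell
#!/bin/bash
# Hypothetical helpers that print (not run) non-interactive equivalents of
# the manual steps above. Namespace, pod and path come from the session above.
NS="onap"
POD="bk-appc-0"
MARKER="/opt/opendaylight/current/daexim/abc.txt"

# Command that creates the marker file before the backup is taken.
create_marker_cmd() {
    echo "kubectl exec -n $NS $POD -- touch $MARKER"
}

# Command that checks for the marker file after the restore.
check_marker_cmd() {
    echo "kubectl exec -n $NS $POD -- ls $MARKER"
}
```

Running the output of `check_marker_cmd` after the restore should list `abc.txt` if the volume contents survived.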

** CREATING BACKUP USING ARK **

root@rancher:~/oom/kubernetes# ark backup create appc-bkup1 --selector release=bk

Backup request "appc-bkup1" submitted successfully.

Run `ark backup describe appc-bkup1` for more details.

root@rancher:~/oom/kubernetes# ark backup describe appc-bkup1

Name: appc-bkup1

Namespace: heptio-ark

Labels: <none>

Annotations: <none>

Phase: Completed

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: <none>

Cluster-scoped: auto

Label selector: release=bk

Snapshot PVs: auto

TTL: 720h0m0s

Hooks: <none>

Backup Format Version: 1

Started: 2018-08-27 05:07:45 +0000 UTC

Completed: 2018-08-27 05:07:47 +0000 UTC

Expiration: 2018-09-26 05:07:44 +0000 UTC

Validation errors: <none>

Persistent Volumes: <none included>

root@rancher:~/oom/kubernetes# pwd

/root/oom/kubernetes

root@rancher:~/oom/kubernetes#

root@rancher:~/oom/kubernetes# ls

aaf appc cli config contrib dist esr LICENSE Makefile multicloud onap policy portal README.md sdc sniro-emulator uui vid

aai clamp common consul dcaegen2 dmaap helm log msb nbi oof pomba readiness robot sdnc so vfc vnfsdk

** SIMULATING DISASTER BY DELETING APPC **

root@rancher:~/oom/kubernetes# helm delete --purge bk

release "bk" deleted

** RESTORATION USING ARK **

root@rancher:~/oom/kubernetes# ark restore create --from-backup appc-bkup1

Restore request "appc-bkup1-20180827052651" submitted successfully.

Run `ark restore describe appc-bkup1-20180827052651` for more details.

root@rancher:~/oom/kubernetes# ark restore describe appc-bkup1-20180827052651

Name: appc-bkup1-20180827052651

Namespace: heptio-ark

Labels: <none>

Annotations: <none>

Backup: appc-bkup1

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: nodes, events, events.events.k8s.io, backups.ark.heptio.com, restores.ark.heptio.com

Cluster-scoped: auto

Namespace mappings: <none>

Label selector: <none>

Restore PVs: auto

Phase: InProgress

Validation errors: <none>

Warnings: <none>

Errors: <none>

root@rancher:~/oom/kubernetes# ark restore describe appc-bkup1-20180827052651

Name: appc-bkup1-20180827052651

Namespace: heptio-ark

Labels: <none>

Annotations: <none>

Backup: appc-bkup1

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: nodes, events, events.events.k8s.io, backups.ark.heptio.com, restores.ark.heptio.com

Cluster-scoped: auto

Namespace mappings: <none>

Label selector: <none>

Restore PVs: auto

Phase: Completed

Validation errors: <none>

Warnings: <error getting warnings: Get http://minio.heptio-ark.svc:9000/ark/appc-bkup1/restore-appc-bkup1-20180827052651-results.gz?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=minio%2F20180827%2Fminio%2Fs3%2Faws4_request&X-Amz-Date=20180827T052706Z&X-Amz-Expires=600&X-Amz-SignedHeaders=host&X-Amz-Signature=0f806ff4dbbb40fa5563a969b27d19c87405bc23d707f4a5d80f6b2a49ac7053: dial tcp: lookup minio.heptio-ark.svc on 10.11.0.13:53: no such host>

Errors: <error getting errors: Get http://minio.heptio-ark.svc:9000/ark/appc-bkup1/restore-appc-bkup1-20180827052651-results.gz?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=minio%2F20180827%2Fminio%2Fs3%2Faws4_request&X-Amz-Date=20180827T052706Z&X-Amz-Expires=600&X-Amz-SignedHeaders=host&X-Amz-Signature=0f806ff4dbbb40fa5563a969b27d19c87405bc23d707f4a5d80f6b2a49ac7053: dial tcp: lookup minio.heptio-ark.svc on 10.11.0.13:53: no such host>

** RESTORATION DETAILS **

root@rancher:~/oom/kubernetes# ark restore describe appc-bkup1-20180827052651

Name: appc-bkup1-20180827052651

Namespace: heptio-ark

Labels: <none>

Annotations: <none>

Backup: appc-bkup1

Namespaces:

Included: *

Excluded: <none>

Resources:

Included: *

Excluded: nodes, events, events.events.k8s.io, backups.ark.heptio.com, restores.ark.heptio.com

Cluster-scoped: auto

Namespace mappings: <none>

Label selector: <none>

Restore PVs: auto

Phase: Completed

Validation errors: <none>

Warnings: <error getting warnings: Get http://minio.heptio-ark.svc:9000/ark/appc-bkup1/restore-appc-bkup1-20180827052651-results.gz?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=minio%2F20180827%2Fminio%2Fs3%2Faws4_request&X-Amz-Date=20180827T052709Z&X-Amz-Expires=600&X-Amz-SignedHeaders=host&X-Amz-Signature=d716695beaab18b8ef8f98c5ac1a54fc9d38922a7499224926b564695f4cf969: dial tcp: lookup minio.heptio-ark.svc on 10.11.0.13:53: no such host>

Errors: <error getting errors: Get http://minio.heptio-ark.svc:9000/ark/appc-bkup1/restore-appc-bkup1-20180827052651-results.gz?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Content-Sha256=UNSIGNED-PAYLOAD&X-Amz-Credential=minio%2F20180827%2Fminio%2Fs3%2Faws4_request&X-Amz-Date=20180827T052709Z&X-Amz-Expires=600&X-Amz-SignedHeaders=host&X-Amz-Signature=d716695beaab18b8ef8f98c5ac1a54fc9d38922a7499224926b564695f4cf969: dial tcp: lookup minio.heptio-ark.svc on 10.11.0.13:53: no such host>

root@rancher:~/oom/kubernetes# ark restore get

NAME BACKUP STATUS WARNINGS ERRORS CREATED SELECTOR

appc-bkup-20180827045955 appc-bkup Completed 2 0 2018-08-27 04:59:52 +0000 UTC <none>

appc-bkup1-20180827052651 appc-bkup1 Completed 5 0 2018-08-27 05:26:48 +0000 UTC <none>

vid-bkp-20180824053001 vid-bkp Completed 149 2 2018-08-24 05:29:59 +0000 UTC <none>
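
A restore can report `Completed` and still carry warnings or errors, as the `vid-bkp` row shows. The filter below is a small sketch for spotting such rows. The function name `restores_with_issues` is an assumption, and it relies on the column order of the `ark restore get` table shown above (WARNINGS fourth, ERRORS fifth).

```shell
#!/bin/bash
# restores_with_issues: reads `ark restore get` output on stdin and prints
# "<name> warnings=<n> errors=<n>" for each restore with a non-zero count.
# Hypothetical helper name; assumes the column layout shown above.
restores_with_issues() {
    awk 'NR > 1 && ($4 > 0 || $5 > 0) { printf "%s warnings=%s errors=%s\n", $1, $4, $5 }'
}

# Intended use (requires the ark client and cluster access):
#   ark restore get | restores_with_issues
```

Each restore it lists deserves a closer look before the recovery is declared successful.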

** RESTORATION SUCCESSFUL **

root@rancher:~/oom/kubernetes#

root@rancher:~/oom/kubernetes# kubectl get pods --all-namespaces | grep -i appc

onap bk-appc-0 1/2 Running 0 26m

onap bk-appc-cdt-7cd6f6d674-5thwj 1/1 Running 0 26m

onap bk-appc-db-0 2/2 Running 0 26m

onap bk-appc-dgbuilder-59895d4d69-7rp9q 1/1 Running 0 26m

root@rancher:~/oom/kubernetes# kubectl exec -it -n onap bk-appc-db-0 bash

Defaulting container name to appc-db.

Use 'kubectl describe pod/bk-appc-db-0 -n onap' to see all of the containers in this pod.

** RESTORATION OF DB SUCCESSFUL **

root@bk-appc-db-0:/# mysql -u root

ERROR 1045 (28000): Access denied for user 'root'@'localhost' (using password: NO)

root@bk-appc-db-0:/# mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 335

Server version: 5.7.23-log MySQL Community Server (GPL)

Copyright (c) 2000, 2018, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> connect mysql

Reading table information for completion of table and column names

You can turn off this feature to get a quicker startup with -A

Connection id: 337

Current database: mysql

mysql> select * from servers;

+-------------+------+-----+----------+----------+------+--------+---------+-------+

| Server_name | Host | Db  | Username | Password | Port | Socket | Wrapper | Owner |

+-------------+------+-----+----------+----------+------+--------+---------+-------+

| abc         | ab   | sql | user     | pwd      | 1234 | test   | wrp     | vaib  |

+-------------+------+-----+----------+----------+------+--------+---------+-------+

1 row in set (0.00 sec)

mysql> quit

Bye

root@bk-appc-db-0:/# exit

exit

** RESTORATION OF PV SUCCESSFUL **

root@rancher:~/oom/kubernetes# kubectl get pods --all-namespaces | grep -i appc

onap bk-appc-0 1/2 Running 0 27m

onap bk-appc-cdt-7cd6f6d674-5thwj 1/1 Running 0 27m

onap bk-appc-db-0 2/2 Running 0 27m

onap bk-appc-dgbuilder-59895d4d69-7rp9q 1/1 Running 0 27m

root@rancher:~/oom/kubernetes# kubectl exec -it -n onap bk-appc-0 bash

Defaulting container name to appc.

Use 'kubectl describe pod/bk-appc-0 -n onap' to see all of the containers in this pod.

root@bk-appc-0:/# cd /opt/opendaylight/current/daexim/

root@bk-appc-0:/opt/opendaylight/current/daexim# ls

abc.txt

root@bk-appc-0:/opt/opendaylight/current/daexim# exit

exit

** OOF COMPONENT RESTORATION (CASSANDRA DB) **

root@k8s-1:/vaibhav/backup# ark restore get

NAME BACKUP STATUS WARNINGS ERRORS CREATED SELECTOR

oof-back-20180820084100 oof-back Completed 2 0 2018-08-20 08:41:00 +0000 UTC <none>

root@k8s-1:/vaibhav/backup# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

default bkup-oof-9764b4bc4-szvz7 0/1 Init:0/1 2 26m

default bkup-oof-has-api-d696fb4c8-dk559 1/1 Running 0 26m

default bkup-oof-has-cassandra-6bb5bd84f9-jhb8b 1/1 Running 0 26m

default bkup-oof-has-controller-f949cc6f9-n9d2c 1/1 Running 0 26m

default bkup-oof-has-data-95bbc6f9c-749xn 1/1 Running 0 26m

default bkup-oof-has-music-684f6d5ddc-gq5lf 1/1 Running 0 26m

default bkup-oof-has-reservation-849f49fc7-2vhhx 1/1 Running 0 26m

default bkup-oof-has-solver-687f699b5c-7rshd 1/1 Running 0 26m

default bkup-oof-has-zookeeper-5c447c8f-6d528 1/1 Running 0 26m

root@k8s-1:/vaibhav/backup# kubectl exec -it -n default bkup-oof-has-cassandra-6bb5bd84f9-jhb8b bash

root@bkup-oof-has-cassandra-6bb5bd84f9-jhb8b:/# /usr/bin/cqlsh -u root -p Aa123456

Connected to Test Cluster at 127.0.0.1:9042.

[cqlsh 5.0.1 | Cassandra 3.0.16 | CQL spec 3.4.0 | Native protocol v4]

Use HELP for help.

root@cqlsh> DESC cycling.cyclist_category

CREATE TABLE cycling.cyclist_category (

category text,

points int,

id uuid,

lastname text,

PRIMARY KEY (category, points)

) WITH CLUSTERING ORDER BY (points DESC)

AND bloom_filter_fp_chance = 0.01

AND caching = {'keys': 'ALL', 'rows_per_partition': 'NONE'}

AND comment = ''

AND compaction = {'class': 'org.apache.cassandra.db.compaction.SizeTieredCompactionStrategy', 'max_threshold': '32', 'min_threshold': '4'}

AND compression = {'chunk_length_in_kb': '64', 'class': 'org.apache.cassandra.io.compress.LZ4Compressor'}

AND crc_check_chance = 1.0

AND dclocal_read_repair_chance = 0.1

AND default_time_to_live = 0

AND gc_grace_seconds = 864000

AND max_index_interval = 2048

AND memtable_flush_period_in_ms = 0

AND min_index_interval = 128

AND read_repair_chance = 0.0

AND speculative_retry = '99PERCENTILE';

root@cqlsh>

Use Cases:-

Disaster recovery

Using Schedules and Restore-Only Mode

If you periodically back up your cluster’s resources, you are able to return to a previous state in case of some unexpected mishap, such as a service outage.
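
Periodic backups can be set up with an Ark schedule. The helper below is a sketch that only prints the command. The function name `schedule_cmd` is an assumption, and the `--schedule` (cron expression) and `--selector` flags are quoted from my recollection of the Ark CLI, so they should be checked against `ark schedule create --help` for the installed version.

```shell
#!/bin/bash
# schedule_cmd <schedule-name> <cron-expression> <helm-release>
# Prints an `ark schedule create` command for the given release.
# Hypothetical helper name; flags should be verified against the installed CLI.
schedule_cmd() {
    printf 'ark schedule create %s --schedule "%s" --selector release=%s\n' "$1" "$2" "$3"
}
```

For example, `schedule_cmd so-daily "0 1 * * *" bkup` prints a command that would back up the `bkup` release every day at 01:00.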

Cluster migration

Using Backups and Restores

Heptio Ark can help you port your resources from one cluster to another, as long as you point each Ark Config to the same cloud object storage.

References

https://heptio.github.io/ark/v0.9.0/

7 Comments

Brian Freeman

What would be the approach to back up an entire ONAP instance, particularly SDC, AAI and SDNC data? Would it be a script with all the references to the helm deploy releases, or something that does a helm list and then runs the ark backup for each entry?

Keong Lim

Hi Brian Freeman,

Part of the solution for AAI could be: How to A&AI data snapshot and restore in ONAP Beijing

Venkata Harish Kajur James Forsyth can expand on this technique.

There was also a discussion in the 2018-11-14 AAI Meeting Notes related to "Hbase to Cassandra migration" that used a similar technique (that one also included a data massage of the snapshot to do the schema change).

Keong Lim

Part of the solution for AAI in Casablanca: A&AI Data Restore for OOM - Casablanca

We think there is a bigger question that needs consideration too.

Brian Freeman

Not sure where the backups are saved and how to make sure things like AAI Cassandra data are saved. The backup takes 10 seconds or so, which seems short.

root@oom-rancher:/home/ubuntu/ARK-TEST/ark/examples/minio# kubectl -n heptio-ark get pod -o=wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

ark-6f847bb659-p9h4r 1/1 Running 0 21m 10.42.98.40 oom-k8s-03 <none>

minio-6b8ff5c8b6-z2vtc 1/1 Running 0 21m 10.42.2.7 oom-k8s-04 <none>

minio-setup-ld7q5 0/1 Completed 0 21m 10.42.223.239 oom-k8s-03 <none>

restic-6grmz 1/1 Running 0 21m 10.42.171.227 oom-k8s-03 <none>

restic-6s9cx 1/1 Running 0 21m 10.42.242.101 oom-orch-1 <none>

restic-8jcb4 1/1 Running 0 21m 10.42.151.119 oom-etcd-1 <none>

restic-f466b 1/1 Running 0 21m 10.42.110.152 oom-k8s-04 <none>

restic-fsz2n 1/1 Running 0 21m 10.42.19.245 oom-etcd-3 <none>

restic-hrxv9 1/1 Running 0 21m 10.42.226.38 oom-orch-2 <none>

restic-jgztm 1/1 Running 0 21m 10.42.84.136 oom-k8s-08 <none>

restic-kc52b 1/1 Running 0 21m 10.42.134.198 oom-k8s-05 <none>

restic-mb4vv 1/1 Running 0 21m 10.42.205.205 oom-k8s-01 <none>

restic-msgtn 1/1 Running 0 21m 10.42.235.14 oom-etcd-2 <none>

restic-n649k 1/1 Running 0 21m 10.42.159.61 oom-k8s-07 <none>

restic-t8m2w 1/1 Running 0 21m 10.42.136.65 oom-k8s-02 <none>

restic-vpj6g 1/1 Running 0 21m 10.42.91.96 oom-k8s-06 <none>

Brian Freeman

I did a backup of AAI but no persistent volumes were listed. I opened up the gz on the minio container and couldn't find any UUIDs or VNF data. Am I looking in the wrong place?

Vaibhav Chopra

Hi Brian,

For PVs via restic, you will see it in restic-repo

https://restic.readthedocs.io/en/latest/100_references.html#terminology

Also, the version we tested in this user story doesn't support PV migration, but the latest Ark version, 0.10.0, does.

https://heptio.github.io/ark/v0.10.0/locations

So I recommend checking this new version. We will see whether I or someone on my team has the bandwidth to do the same and update you.

https://github.com/heptio/ark

Sushil Masal

Hi Brian Freeman Vaibhav Chopra

Even with the latest Ark version v0.10.0 and the Restic plugin, backups of PVs won't be taken.

The Restic plugin does support backup of PVs of type EFS, AzureFile, NFS, emptyDir, local etc., but ONAP applications create all persistent volumes with type hostPath, and none of the plugins listed here, including Restic, supports hostPath volume backup.

As a result, backup/restore of PVs needs to be handled separately. One possible solution would be to back up the directory used by the PV as hostPath using NFS or a similar storage provider.