Name of Use Case:

vFW/vDNS

Use Case Authors:

AT&T

Description:

The vFW/vDNS use case as implemented currently and described in the demo configuration here:

Installing and Running the ONAP Demos

UCA-17

Users and Benefit:

Allows for quick and easy demos without any infrastructure or lab constraints.

Can be easily automated for daily integration testing.

VNF:

vFW

vPacketGenerator

vDataSink

vDNS

vLoadBalancer

All are VPP based.

Work Flows:

- Instantiation

- Performance based control loop

This section shows the work flows for the vFW and vDNS use case in two parts: the first is the reference flow from the original prototype (R0), and the second is the new set of sequence diagrams for Release 1.

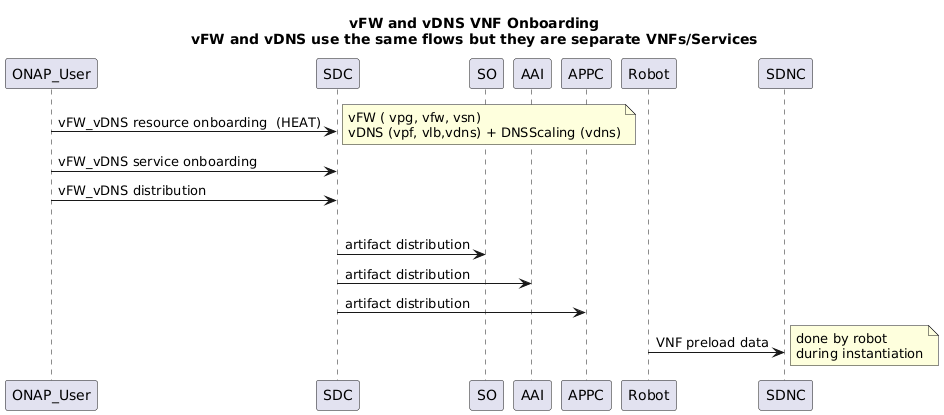

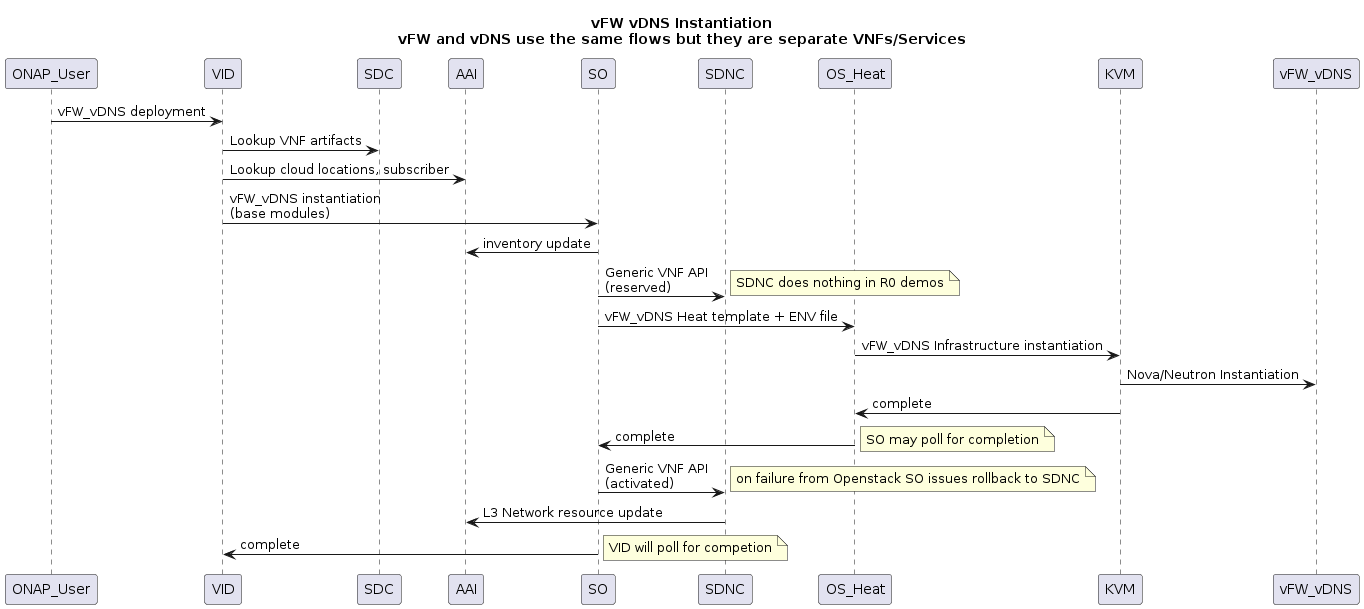

0. Original Sequence Diagram (R0)

0.1 Onboarding (Old Flow)

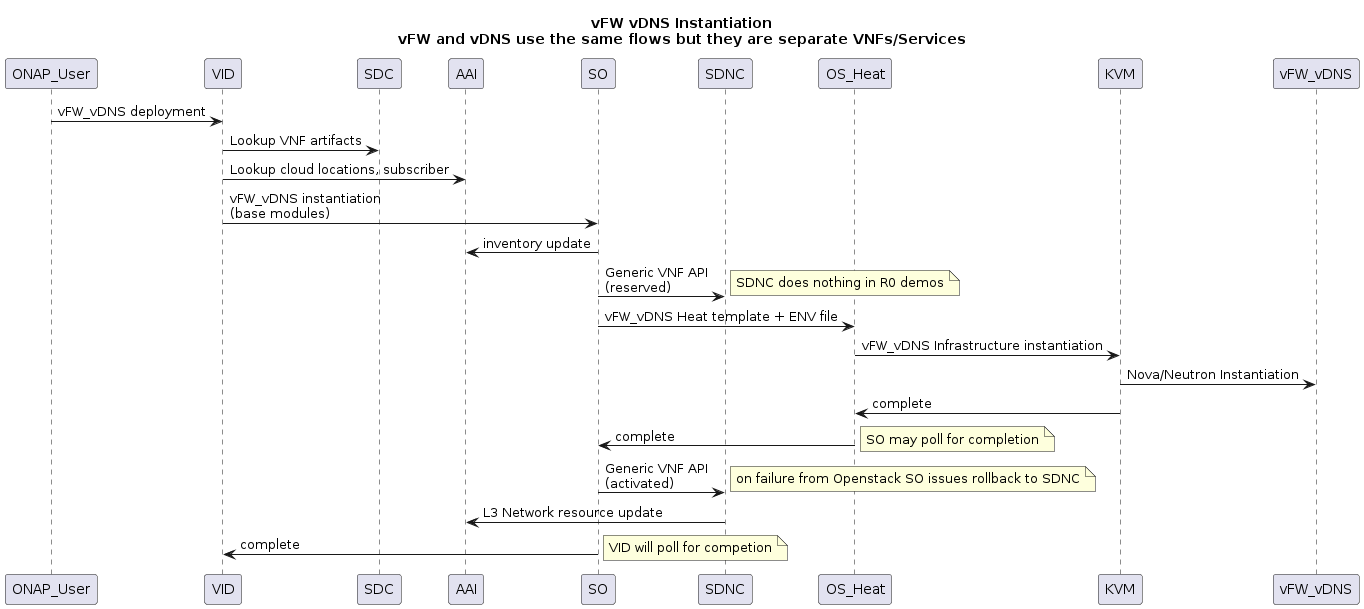

0.2 Instantiation (old flow)

0.3 Control Loop for vFW (Old Flow)

UML text

@startuml

title vFW Closed Loop

participant vPG

participant vFW

participant vSN

vPG -> vFW : traffic

vFW -> vSN : traffic sink

|||

note left: vPG automatically \nincreases traffic based on script

vFW -> DCAE : VES Telemetry

DCAE -> Policy : Threshold Crossing (High)

Policy -> Policy : pattern-match-replace vFW to vPG data

Policy -> APPC : ModifyConfig (lower PG traffic)

APPC -> vPG : Netconf modify config

note right : reduce number of traffic streams

vPG -> vFW : lower traffic

vFW -> DCAE : VES Telemetry

DCAE -> Policy : Event cleared (lower traffic)

@enduml

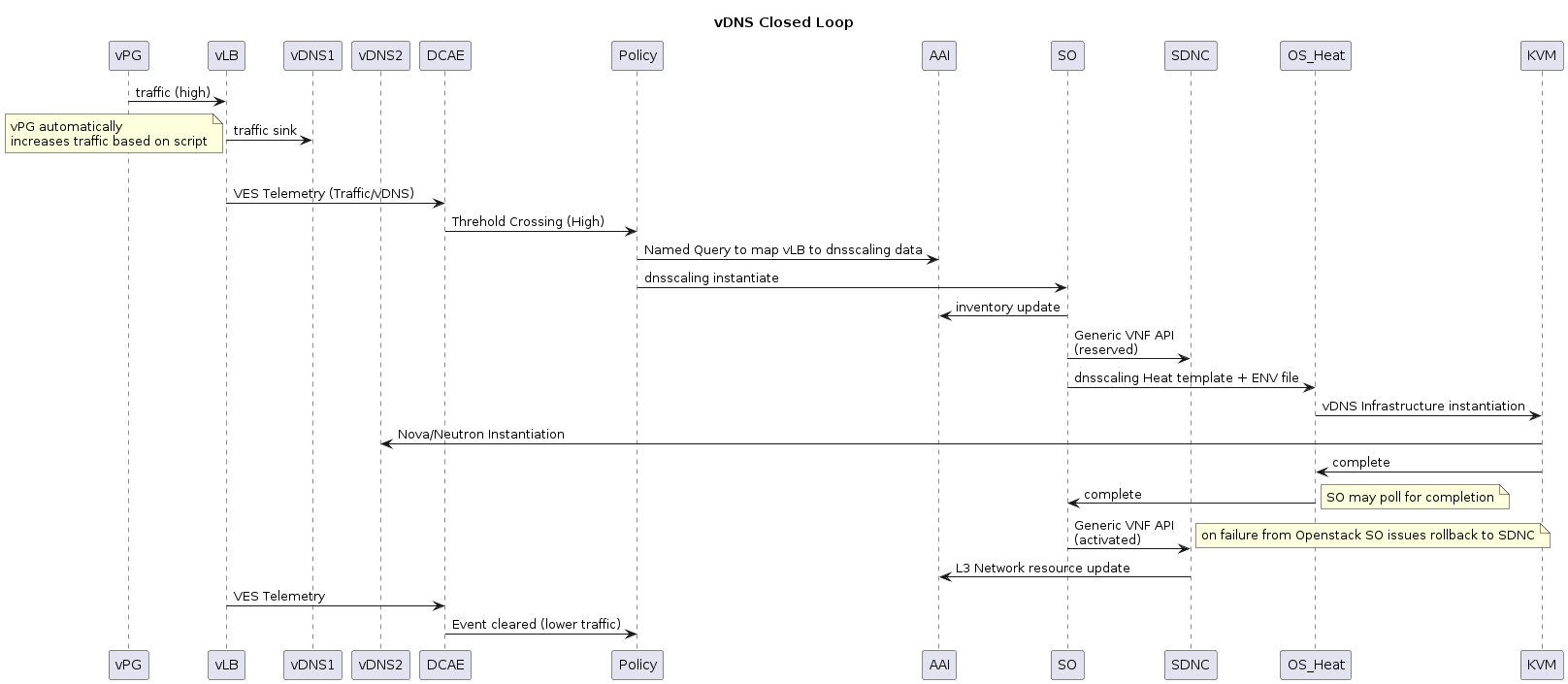

0.4 Control Loop vDNS (Old Flow)

Release 1 Flows:

The flows below are not listed in the order that CI testing would run them. The CI order would be VNF Certification, Onboarding, CLAMP, Instantiation, Closed Loop vFW, Closed Loop vDNS, and then Delete of vFW and vDNS.

Most of the R1 flows will be the same except for the addition of new components that should support the vFW/vDNS regression test.

There is one functional change: the query to AAI for data moves from Policy to APPC, so that the flow uses the APPC interface to AAI, which is the correct path.

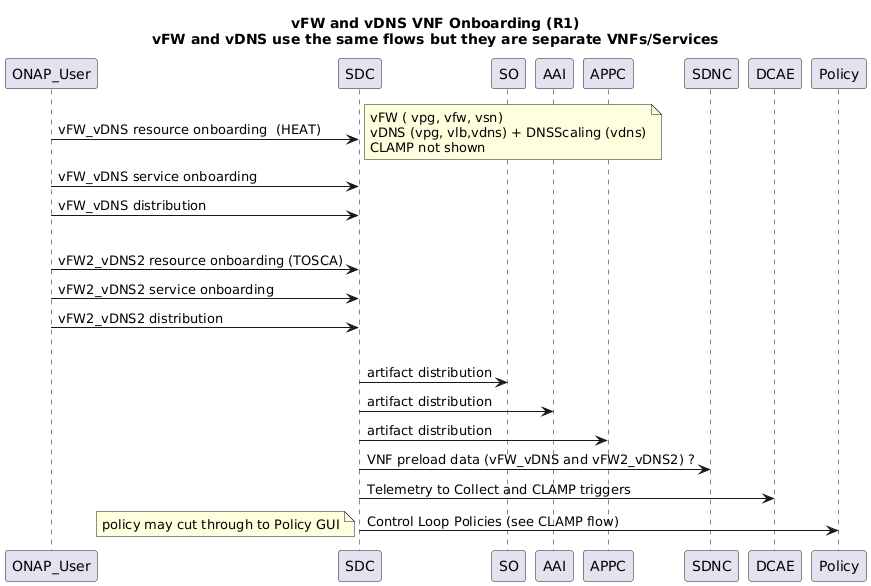

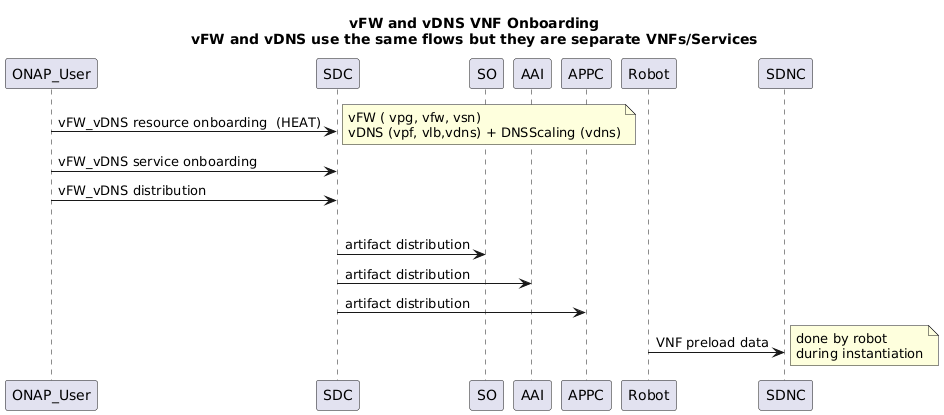

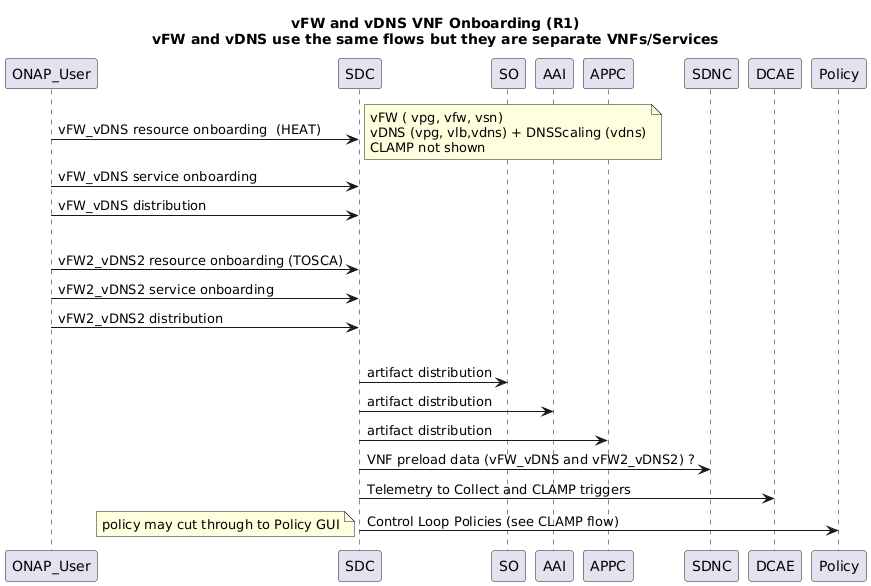

1.1 Onboarding

UML text

@startuml

title vFW and vDNS VNF Onboarding (R1)\nvFW and vDNS use the same flows but they are separate VNFs/Services

ONAP_User -> SDC : vFW_vDNS resource onboarding (HEAT)

note right : vFW (vpg, vfw, vsn)\nvDNS (vpg, vlb, vdns) + DNSScaling (vdns)\nCLAMP not shown

ONAP_User -> SDC : vFW_vDNS service onboarding

ONAP_User -> SDC : vFW_vDNS distribution

|||

ONAP_User -> SDC : vFW2_vDNS2 resource onboarding (TOSCA)

ONAP_User -> SDC : vFW2_vDNS2 service onboarding

ONAP_User -> SDC : vFW2_vDNS2 distribution

|||

SDC -> SO : artifact distribution

SDC -> AAI : artifact distribution

SDC -> APPC : artifact distribution

SDC -> SDNC : VNF preload data (vFW_vDNS and vFW2_vDNS2) ?

SDC -> DCAE : Telemetry to Collect and CLAMP triggers

SDC -> Policy : Control Loop Policies (see CLAMP flow)

note left: policy may cut through to Policy GUI

@enduml

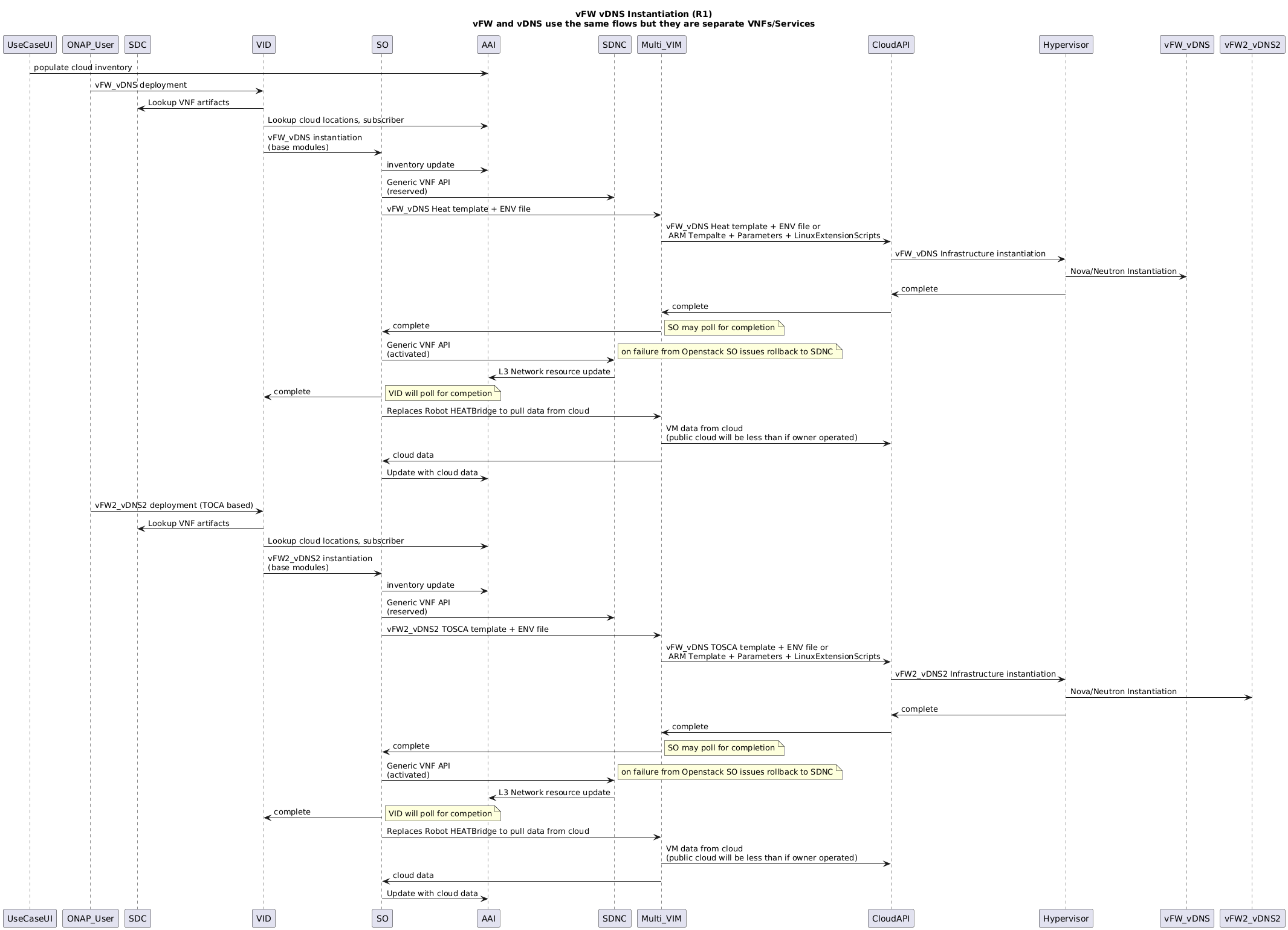

1.2 Instantiation

UML text

@startuml

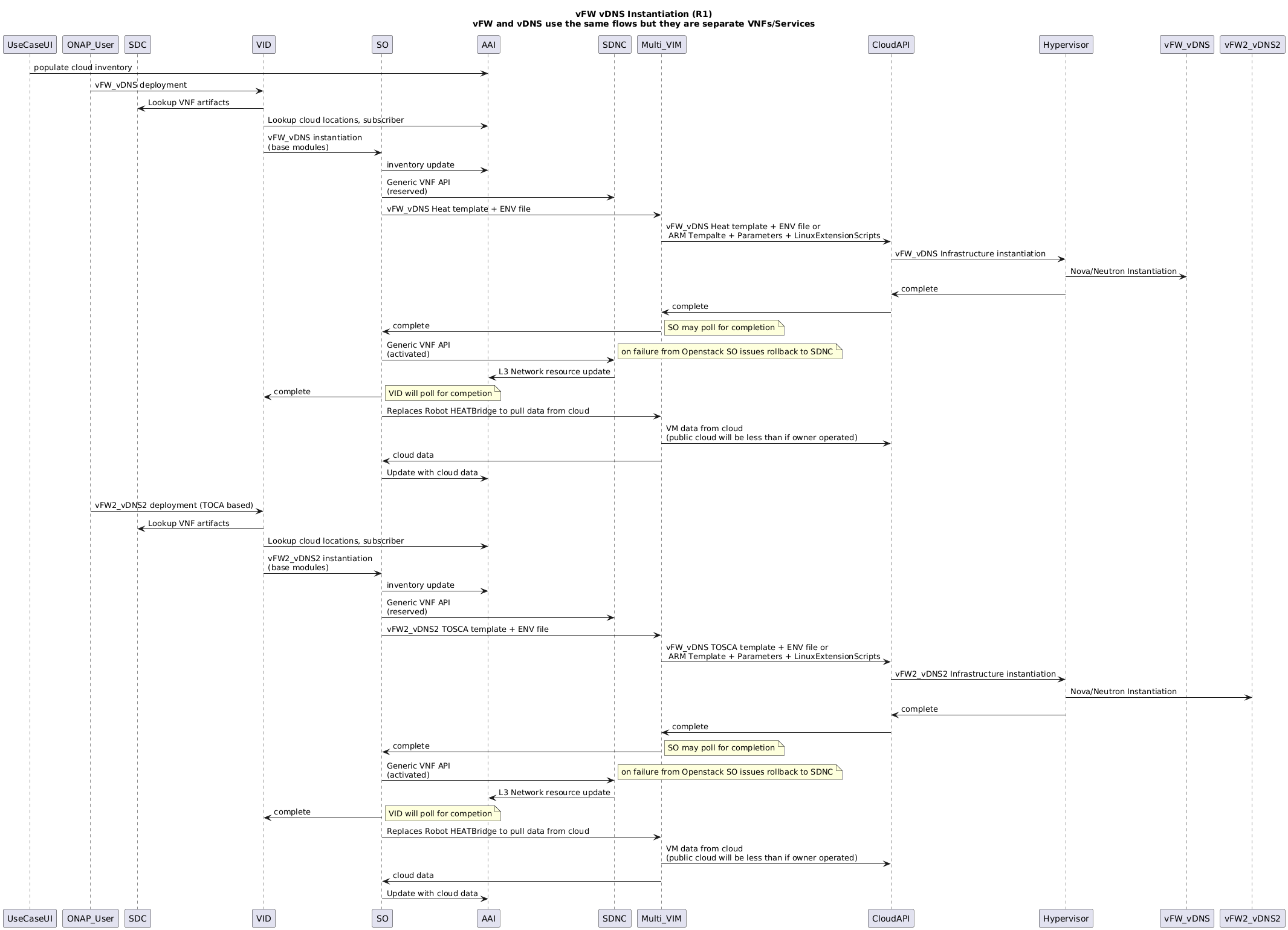

title vFW vDNS Instantiation (R1)\nvFW and vDNS use the same flows but they are separate VNFs/Services

participant UseCaseUI

participant ONAP_User

participant SDC

participant VID

participant SO

UseCaseUI -> AAI : populate cloud inventory

ONAP_User -> VID : vFW_vDNS deployment

VID -> SDC : Lookup VNF artifacts

VID -> AAI : Lookup cloud locations, subscriber

VID -> SO : vFW_vDNS instantiation\n(base modules)

SO -> AAI : inventory update

SO -> SDNC : Generic VNF API\n(reserved)

SO -> Multi_VIM : vFW_vDNS Heat template + ENV file

Multi_VIM -> CloudAPI : vFW_vDNS Heat template + ENV file or\n ARM Template + Parameters + LinuxExtensionScripts

CloudAPI -> Hypervisor : vFW_vDNS Infrastructure instantiation

Hypervisor -> vFW_vDNS : Nova/Neutron Instantiation

Hypervisor -> CloudAPI : complete

CloudAPI -> Multi_VIM : complete

Multi_VIM -> SO : complete

note right : SO may poll for completion

SO -> SDNC: Generic VNF API\n(activated)

note right : on failure from Openstack SO issues rollback to SDNC

SDNC -> AAI : L3 Network resource update

SO -> VID : complete

note right : VID will poll for completion

SO -> Multi_VIM : Replaces Robot HEATBridge to pull data from cloud

Multi_VIM -> CloudAPI : VM data from cloud\n(public cloud will be less than if owner operated)

Multi_VIM -> SO : cloud data

SO -> AAI : Update with cloud data

|||

ONAP_User -> VID : vFW2_vDNS2 deployment (TOSCA based)

VID -> SDC : Lookup VNF artifacts

VID -> AAI : Lookup cloud locations, subscriber

VID -> SO : vFW2_vDNS2 instantiation\n(base modules)

SO -> AAI : inventory update

SO -> SDNC : Generic VNF API\n(reserved)

SO -> Multi_VIM : vFW2_vDNS2 TOSCA template + ENV file

Multi_VIM -> CloudAPI : vFW2_vDNS2 TOSCA template + ENV file or\n ARM Template + Parameters + LinuxExtensionScripts

CloudAPI -> Hypervisor : vFW2_vDNS2 Infrastructure instantiation

Hypervisor -> vFW2_vDNS2 : Nova/Neutron Instantiation

Hypervisor -> CloudAPI : complete

CloudAPI -> Multi_VIM : complete

Multi_VIM -> SO : complete

note right : SO may poll for completion

SO -> SDNC: Generic VNF API\n(activated)

note right : on failure from Openstack SO issues rollback to SDNC

SDNC -> AAI : L3 Network resource update

SO -> VID : complete

note right : VID will poll for completion

SO -> Multi_VIM : Replaces Robot HEATBridge to pull data from cloud

Multi_VIM -> CloudAPI : VM data from cloud\n(public cloud will be less than if owner operated)

Multi_VIM -> SO : cloud data

SO -> AAI : Update with cloud data

@enduml
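The notes "SO may poll for completion" and "on failure from Openstack SO issues rollback to SDNC" can be illustrated with a small polling loop. This is a minimal sketch and not SO code: the function name `wait_for_stack`, the returned labels, and the timeout handling are assumptions made for illustration. The status strings follow the naming used for OpenStack Heat stack statuses.

```python
import time
from typing import Callable


def wait_for_stack(get_status: Callable[[], str],
                   interval_s: float = 5.0,
                   max_attempts: int = 60) -> str:
    """Poll a stack status until it completes, fails, or attempts run out.

    get_status is a hypothetical callable that returns the current stack
    status as a string. The return value names the next step of the flow:
    "activate" (Generic VNF API, activated), "rollback" (rollback to SDNC),
    or "timeout" (the caller decides what to do).
    """
    for _ in range(max_attempts):
        status = get_status()
        if status == "CREATE_COMPLETE":
            return "activate"
        if status == "CREATE_FAILED":
            return "rollback"
        # Still in progress: wait before asking again.
        time.sleep(interval_s)
    return "timeout"


if __name__ == "__main__":
    # Simulated status sequence instead of a real cloud API call.
    statuses = iter(["CREATE_IN_PROGRESS", "CREATE_COMPLETE"])
    print(wait_for_stack(lambda: next(statuses), interval_s=0))
```

The same loop shape would apply to VID polling SO, with a different status source.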

1.3 Closed Loop vFW

There are no changes in the vFW closed loop for R1.

UML text

@startuml

title vFW Closed Loop

participant vPG

participant vFW

participant vSN

vPG -> vFW : traffic

vFW -> vSN : traffic sink

|||

note left: vPG automatically \nincreases traffic based on script

vFW -> DCAE : VES Telemetry

DCAE -> Policy : Threshold Crossing (High)

Policy -> Policy : pattern-match-replace vFW to vPG data

Policy -> APPC : ModifyConfig (lower PG traffic)

APPC -> vPG : Netconf modify config

note right : reduce number of traffic streams

vPG -> vFW : lower traffic

vFW -> DCAE : VES Telemetry

DCAE -> Policy : Event cleared (lower traffic)

@enduml

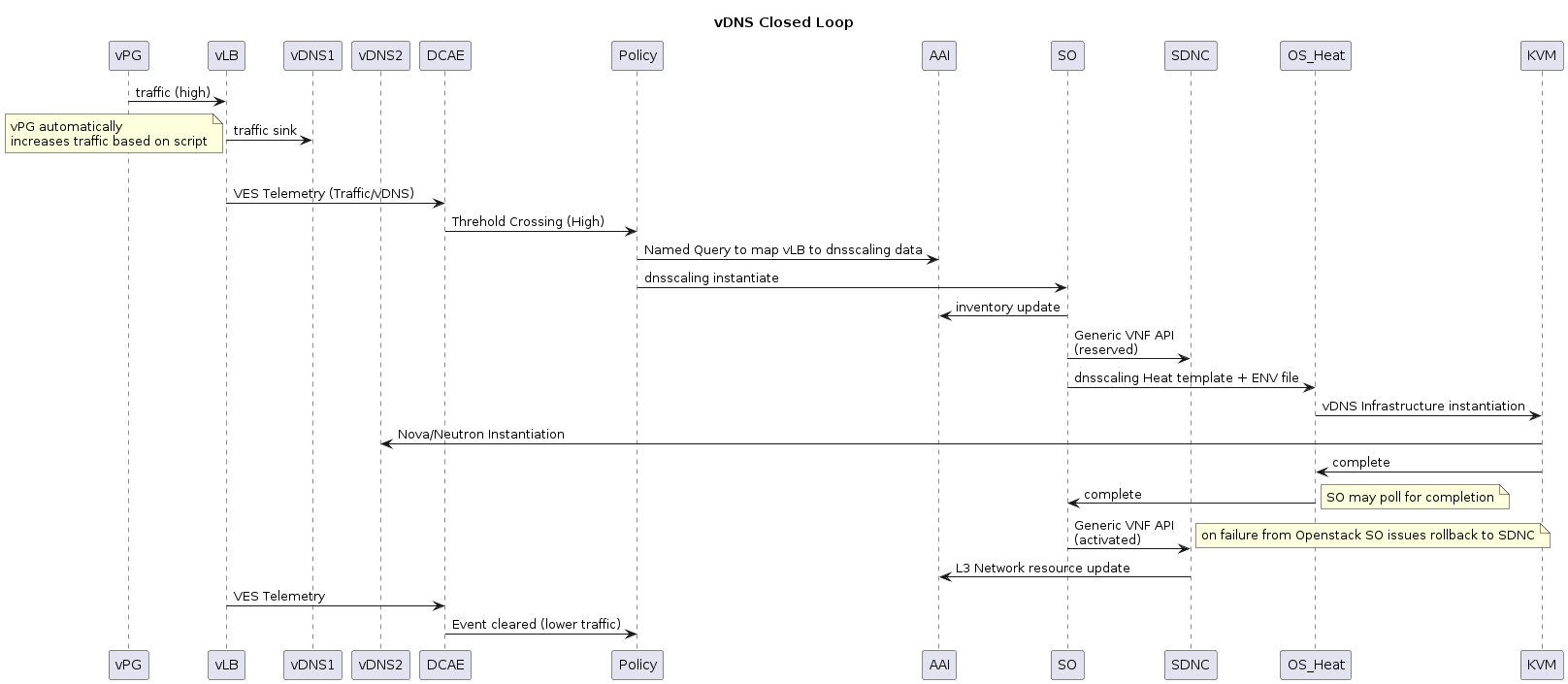

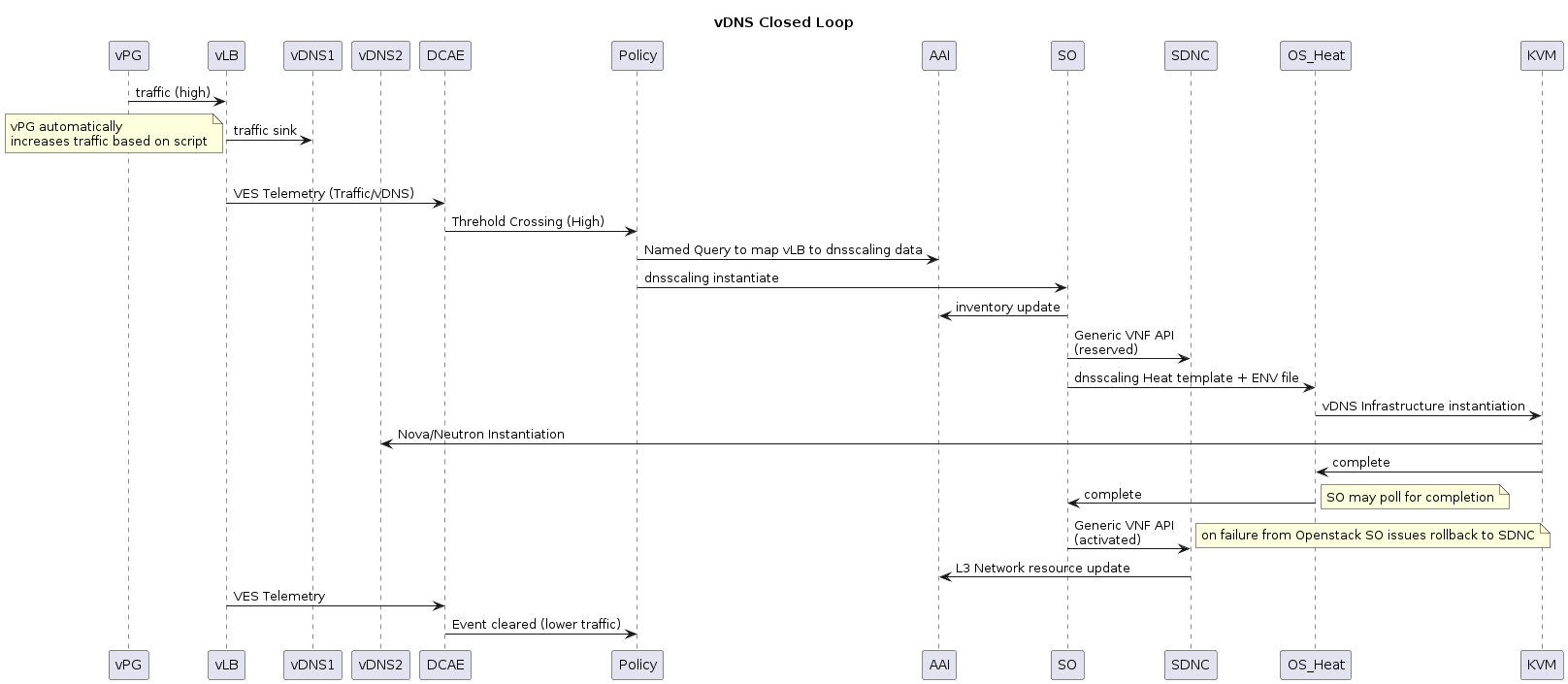
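The vFW closed loop can be walked through with a small in-memory simulation. This is only a sketch of the sequence in the diagram under stated assumptions: the threshold, the per-stream packet rate, the target stream count, and all function names are hypothetical, and none of them are ONAP APIs. The event labels "ONSET" and "ABATED" stand for the threshold crossing and the cleared event.

```python
from dataclasses import dataclass
from typing import List, Optional

HIGH_THRESHOLD = 700      # hypothetical packets/s that triggers the loop
REDUCED_STREAMS = 5       # hypothetical stream count requested by Policy


@dataclass
class PacketGenerator:
    """Stand-in for the vPG: traffic grows with the number of streams."""
    active_streams: int
    packets_per_stream: int = 100

    def traffic(self) -> int:
        return self.active_streams * self.packets_per_stream


def dcae_evaluate(measured: int, threshold: int) -> str:
    """Stand-in for DCAE analytics on the VES telemetry sent by the vFW."""
    return "ONSET" if measured > threshold else "ABATED"


def policy_decide(event: str, source_vnf: str) -> Optional[dict]:
    """Map the reporting VNF (vFW) to the VNF to act on (vPG)."""
    target_for = {"vFW": "vPG"}  # the pattern-match-replace step
    if event != "ONSET":
        return None
    return {"target": target_for[source_vnf],
            "action": "ModifyConfig",
            "streams": REDUCED_STREAMS}


def appc_modify_config(vpg: PacketGenerator, request: dict) -> None:
    """Stand-in for the Netconf change APPC applies to the vPG."""
    vpg.active_streams = request["streams"]


def run_closed_loop(vpg: PacketGenerator,
                    threshold: int = HIGH_THRESHOLD) -> List[str]:
    events = [dcae_evaluate(vpg.traffic(), threshold)]
    request = policy_decide(events[-1], "vFW")
    if request is not None:
        appc_modify_config(vpg, request)
        # The next telemetry report reflects the lower traffic.
        events.append(dcae_evaluate(vpg.traffic(), threshold))
    return events


if __name__ == "__main__":
    print(run_closed_loop(PacketGenerator(active_streams=10)))
```

The point of the sketch is the indirection in `policy_decide`: the telemetry comes from the vFW, but the corrective action is applied to the vPG.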

1.4 Closed Loop vDNS

This flow has the APPC to AAI query which moves the query from Policy to APPC.

There are no changes in the vDNS flow for R1. Originally we looked at moving the query from Policy to APPC, but design details on executing the dnsscaling API call, and on whether APPC should call SO or MultiVIM directly, could not be worked out in time for R1. So we will continue with the original flow.

vDNS Flow (R1)

Future Flow to be considered (After R1)

UML text

@startuml

title vDNS Closed Loop

participant vPG

participant vLB

participant vDNS1

participant vDNS2

vPG -> vLB : traffic (high)

vLB -> vDNS1 : traffic sink

|||

note left: vPG automatically \nincreases traffic based on script

vLB -> DCAE : VES Telemetry (Traffic/vDNS)

DCAE -> Policy : Threshold Crossing (High)

Policy -> APPC: Scale VNF

APPC -> AAI : Named Query to map vLB to dnsscaling data

APPC -> SO : dnsscaling instantiate

SO -> AAI : inventory update

SO -> SDNC : Generic VNF API\n(reserved)

SO -> Multi_VIM: dnsscaling Heat template + ENV file

Multi_VIM -> OS_Heat : dnsscaling Heat template + ENV file

OS_Heat -> KVM : vDNS Infrastructure instantiation

KVM -> vDNS2 : Nova/Neutron Instantiation

KVM -> OS_Heat : complete

OS_Heat -> Multi_VIM : complete

Multi_VIM -> SO : complete

note right : SO may poll for completion

SO -> SDNC: Generic VNF API\n(activated)

note right : on failure from Openstack SO issues rollback to SDNC

SDNC -> AAI : L3 Network resource update

|||

note right : Alternative with TOSCA based dnsscaling

APPC -> AAI : inventory update

APPC -> SDNC : Generic VNF API\n(reserved)

APPC -> Multi_VIM : scale out request (Need to confirm since vFW/vDNS do not have VNFM)

Multi_VIM -> OS_Heat : dnsscaling Heat template + ENV file

OS_Heat -> KVM : vDNS Infrastructure instantiation

KVM -> vDNS2 : Nova/Neutron Instantiation

KVM -> OS_Heat : complete

OS_Heat -> Multi_VIM : complete

Multi_VIM -> APPC : complete

note right : APPC may poll for completion

APPC -> SDNC: Generic VNF API\n(activated)

note right : on failure from Openstack APPC issues rollback to SDNC

SDNC -> AAI : L3 Network resource update

|||

vLB -> DCAE : VES Telemetry

DCAE -> Policy : Event cleared (lower traffic)

@enduml
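The scale-out branch of the future flow can be sketched as follows: a threshold crossing reported for the vLB leads to a lookup that maps the vLB to its scaling module, and a new vDNS instance is added. This is a rough illustration only. The inventory dictionary, the identifier `vlb-1`, the threshold values, and every function name are hypothetical stand-ins, not the AAI named query or the SO API.

```python
from typing import Dict, List, Tuple

# Hypothetical in-memory stand-in for the inventory held in AAI.
INVENTORY: Dict[str, Dict[str, str]] = {
    "vlb-1": {"service": "vDNS", "scaling_module": "dnsscaling"},
}


def named_query(inventory: Dict[str, Dict[str, str]], vlb_id: str) -> str:
    """Map a vLB to the module that should be instantiated to scale out."""
    return inventory[vlb_id]["scaling_module"]


def scale_out(vlb_id: str, dns_instances: List[str]) -> Tuple[str, List[str]]:
    """Return the module to instantiate and the instance list after scaling."""
    module = named_query(INVENTORY, vlb_id)
    new_instance = f"vDNS{len(dns_instances) + 1}"
    # In the real flow, the module's template would be instantiated here.
    return module, dns_instances + [new_instance]


def handle_telemetry(vlb_id: str, load: int, threshold: int,
                     dns_instances: List[str]) -> List[str]:
    """Scale out only when the reported load crosses the threshold."""
    if load <= threshold:
        return dns_instances
    _module, scaled = scale_out(vlb_id, dns_instances)
    return scaled


if __name__ == "__main__":
    print(handle_telemetry("vlb-1", load=900, threshold=500,
                           dns_instances=["vDNS1"]))
```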

1.5 CLAMP creation of control loops (new in R1)

UML text

@startuml

title CLAMP Closed Loop Design for vFW and vDNS \nvFW and vDNS use the same flows but they are separate VNFs/Services

participant ONAP_User

participant CLAMP

participant SDC

ONAP_User -> CLAMP : Create Control Loop from Template

CLAMP -> SDC : Query for Services and VNFs

ONAP_User -> CLAMP : Choose Service and VNF

CLAMP -> SDC : Query for Alarm data from VNFs

ONAP_User -> CLAMP : Create Operational Policy\nChooses Chain of Actions\nSave Policy

ONAP_User -> CLAMP : Select TCA box,\nclicks and creates one or more \nassociated signature rules.\nAssociates them with the operational policy \ncreated earlier

ONAP_User -> CLAMP : Repeat Operational Policy/Signature as needed\n(total 1 vFW, 1 vDNS)

CLAMP -> Policy : Create Operational Policies

CLAMP -> Policy : Create String Matcher to Policy association

note right: executed for both vFW and vDNS policies

CLAMP -> SDC : Distribute Blueprint

ONAP_User -> SDC : check in, test, certify, distribute Blueprint

SDC -> DMaaP : Distribute control loop for DCAE

DMaaP -> DCAE : Control Loop Blueprint

CLAMP -> DMaaP : Poll for distribution complete

@enduml

1.6 VNF Certification (new in R1)

Control Automation:

See: Installing and Running the ONAP Demos

Override the default ssh key in the env file to be able to ssh to any of the 3 VMs instantiated by the demo.
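One way to script the key override is to rewrite the relevant parameter line in the env file before instantiation. The sketch below assumes the env file is a flat `name: value` list under `parameters:` and that the public key parameter is called `pub_key`; both are assumptions, so check the env file of the demo you are running. The sample text and key values are placeholders.

```python
import re


def override_env_param(env_text: str, name: str, value: str) -> str:
    """Replace the value of one 'name: value' line, keeping indentation."""
    pattern = re.compile(
        rf"^([ \t]*{re.escape(name)}[ \t]*:[ \t]*).*$", re.MULTILINE)
    # A function replacement avoids backslash handling in the new value.
    new_text, count = pattern.subn(lambda m: m.group(1) + value, env_text)
    if count == 0:
        raise KeyError(f"parameter {name!r} not found in env text")
    return new_text


# Placeholder env content; parameter names here are assumptions.
SAMPLE_ENV = """parameters:
  key_name: vfw_key
  pub_key: ssh-rsa DEFAULT_KEY_PLACEHOLDER
"""

if __name__ == "__main__":
    print(override_env_param(SAMPLE_ENV, "pub_key",
                             "ssh-rsa MY_KEY_PLACEHOLDER user@host"))
```

Raising an error when the parameter is missing avoids silently deploying with the default key.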

Project Impact:

No additional work.

Work Commitment:

AT&T

3 Comments

Laurentiu Soica

Is the scaling closed loop functional in ONAP Amsterdam? Are there any videos or instructions on how to run this use case?

Nour Gritli

Hello,

Can anyone explain to me why the VF-C is not needed in this use case?

Thanks

Vijayalakshmi H

Hi, I have instantiated the vLoadBalancer/vDNS stack.

Whether I use ONAP or run openstack stack create directly, the VMs of the stack are not reachable.

Often the VMs are unreachable right from the time they are created. At other times they become unreachable once the install scripts and init scripts run in the VMs.

Any solutions or workarounds?

Thanks

Vijaya