DCAE MOD ( Microservices Onboarding and Design ) is being redesigned with angular based UI to have a great user experience, self managed catalog with APIs to ease access and manage life cycle of different version of microservices.

It also provides extensibility to include pluggable modules for generating deployment artifacts and communication with other systems such as Policy Engine and DCAE dashboard.

Details of the target architecture and initial implementation can be found in below ppt slides and initial API Specifications

DCAE MOD Catalog and UI redesign draft - DCAE_MOD_redesign_v3.pptx

API Specification - swagger.yml

Below picture depicts the target architecture of redesigned MOD

It is composed of UI and Backend with Catalog service and Authentication Service.

UI

UI is developed using angular framework and enables users including designers and developers to onboard microservices. It currently provides a user-friendly menu options to onboard microservices and its instances per release, associate a component specification and generate deployment artifact that can be deployed using DCAE dashboard.

It will be enhanced to onboard policy models and data format to support policy-based control and composite microservice models.

Backend

The backend for MOD composed of a catalog service and authentication service. Catalog Service will manage microservices, its instances and generated deployment artifacts. Authentication service will provide access control for designers and developers to perform different operations.

Catalog Service

Catalog service is composed of APIs to manage base microservice, microservice instances, component specifications associated with microservices and to generate deployment artifacts.

Authentication Service

Authentication service provides APIs to manage users, currently used by UI.

Below ER diagram represents the entities present in Mongo Database

User Guide

Deployment using Docker compose

MOD can be deployed using docker-compose with below link for docker-compose,yml file on any Ubuntu VMs

https://git.onap.org/dcaegen2/platform/tree/mod2/assembly/docker-compose.yml

Create a directory and create docker-compose.yml file with the above contents.

Set DACE_HOSTNAME by running export DCAE_HOSTNAME=<IP Address of Server>. This is required for UI to determine the DCAE MOD backend server.

Note : Additional attributes are introduced in Honolulu release are APIs for distributing policies to policy framework and distributing deployment artifacts to DCAE dashboard. If you are planning to use those APIs, export environment variables for POLICYMODEL and DCAE_PLATFORM as specified in above attached docker-compose.yml

Run below command to download the latest built version and deploy on docker container.

docker-compose -f docker-compose.yml up &

After deployment is successful, you can list using docker ps -a command and it will show the below deployments.

Note: For Guilin POC, the above deployment procedure would be followed to install MOD components. For future releases, it will be enhanced to use helm chart.

Deployment using Helm (introduced in Honolulu Release)

Download the charts (all the files and directories) from git with below link

https://git.onap.org/dcaegen2/platform/tree/mod2/assembly/helm

Under components/catalog-service, update K8SNodeip and PASSWORD for policy framework deployment and DCAE Dashboard deployment and verify other configuration values in values,yaml

policyModelDevServer: "<K8SNodeip>"

policyModelDevPort: "30522"

policyModelDevUser: "healthcheck"

policyModelDevPassword: "<PASSWORD>"

dcaePlatformDevServer: "<K8SNodeip>"

dcaePlatformDevPort: "30418"

dcaePlatformDevUser: "su1234"

dcaePlatformDevPassword: "<PASSWORD>"

Under components/ui, update K8SNodeip for master node in values.yaml

dcaeHostname: <K8SNodeip>

After updating the above configurations, run below command from the parent directory to generate the charts with all dependencies.

helm dep update

Then, run below command to install all the components under desired NAMESPACE

helm install -n dcaemod2 --namespace <NAMESPACE> ./

Verify all the components (pods) are up

dcaemod2-mongo-0

dcaemod2-ui

catalog-service

auth-service

Working with UI

After deploying the mod2 components using docker compose or helm charts, UI will be available in below URL where hostname would be the hostname or IP address of the deployed VM

https:// <hostname>:30997 (or the node port configured in the helm chart - 31009 in the current helm chart)

Initial user/password would be set to admin/admin@mod.

Below is the snapshot of initial landing page of MOD UI

Steps to onboard a microservice and generate deployment artifact

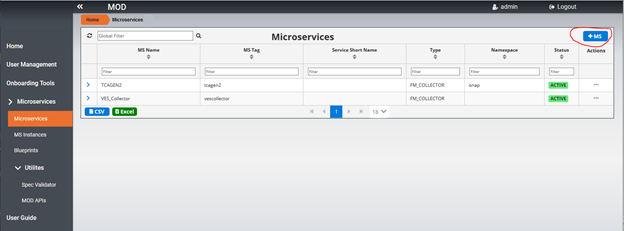

1. Click on Microservices... option on the page, following sample page will be displayed. Currently this page contains on-boarded microservices in the list. For initial deployment, the list will be empty

2. Click on +MS button on the page below shown in red to add a Microservice that needs to be onboarded.

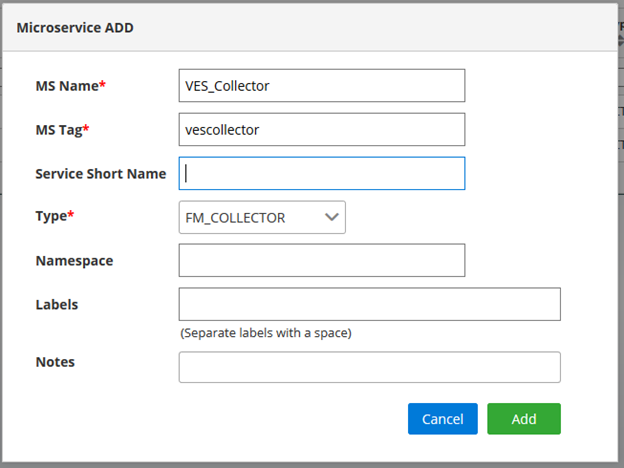

3. Below Popup page will be displayed and enter details of Microservice and press Add to add the Microservce in the list.

4. To add an instance for a release click on … on the right as circled below in the list of Microservice by selecting s specific Microservice

5. Below popup page will be displayed. Select a release and developer details. Then click on Add button to add a Microservice instance.

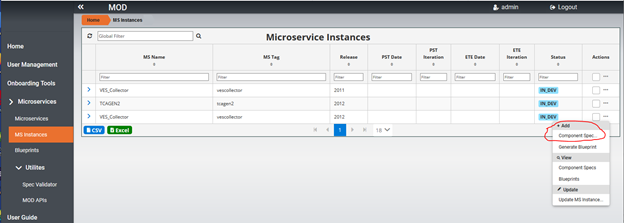

6. To add a component spec for Microservice instance, Select MS Instances on the left menu, it will bring up the list of Microservice instances and choose … on the right side for the specific Microservice instance as below. Click on Add Component spec option.

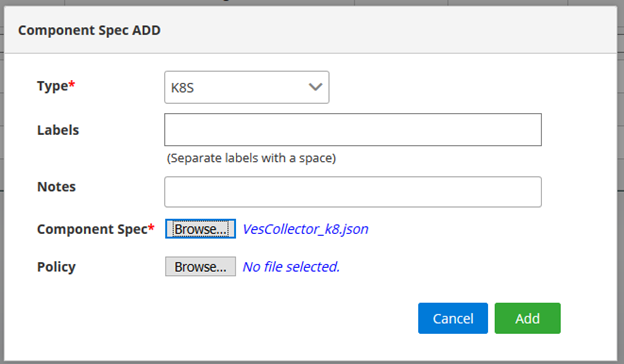

7. Below popup page will be displayed for adding component spec json file. Select the type and browse to choose component spec json file. Choose Add to add the component spec for the specific Microservice instance.

8. To generate deployment artifact, choose … on the right side for the specific Microservice instance as below. Then choose Generate Blueprint option to generate the deployment Artifact.

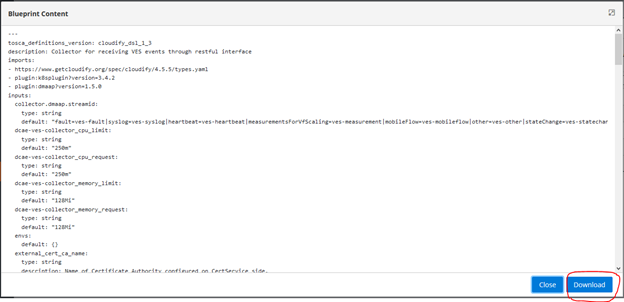

9. Choose Blueprints on left side menu to get a list of blueprints that are generated. Choose … option menu for specific blueprint and choose “View BP Content”

10. The Blueprint content can be downloaded by choosing download option show below.

Currently after the deployment artifact is downloaded, you have to go manually to DCAE dashboard to deploy.

In Honolulu release, below APIs are provided to onboard and distribute policy to policy framwork engine. Also APIs to distribute the deployment artifact on to DCAE Dashboard is available.

On-boarding Policy Model and Distributing to Policy Framework Engine Using API ( Introduced in Honolulu Release)

Below APIs are introduced to onboard a policy model and distribute to policy framework engine in catalog service. In order to look into Policy Model APIs, the swagger document is available in UI.

When logged on to UI, select MOD APIs option under UI, it will bring up below swagger UI and will find Policy Model and Policy Model Distribution APIs

Following Policy Model APIs are available in swagger UI and details of the APIs are found when selecting each API

For on-boarding ahe policy model - http://<Node IP>:31001/api/policy-model?user="admin" and sample input JSON as below.

{

"name": "onap.policies.Monitoring",

"owner": "admin",

"version": "1.0.0",

"content": "tosca_definitions_version: tosca_simple_yaml_1_1_0\r\npolicy_types:\r\n onap.policies.Monitoring:\r\n derived_from: tosca.policies.Root\r\n version: 1.0.0\r\n name: onap.policies.Monitoring\r\n description: a base policy type for all policies that govern monitoring provisioning\r\n onap.policies.monitoring.tcagen2:\r\n derived_from: onap.policies.Monitoring\r\n version: 1.0.0\r\n name: onap.policies.monitoring.tcagen2\r\n properties:\r\n tca.policy:\r\n type: onap.datatypes.monitoring.tca_policy\r\n description: TCA Policy JSON\r\n required: true\r\ndata_types:\r\n onap.datatypes.monitoring.metricsPerEventName:\r\n derived_from: tosca.datatypes.Root\r\n properties:\r\n controlLoopSchemaType:\r\n type: string\r\n required: true\r\n description: Specifies Control Loop Schema Type for the event Name e.g. VNF, VM\r\n constraints:\r\n - valid_values:\r\n - VM\r\n - VNF\r\n eventName:\r\n type: string\r\n required: true\r\n description: Event name to which thresholds need to be applied\r\n policyName:\r\n type: string\r\n required: true\r\n description: TCA Policy Scope Name\r\n policyScope:\r\n type: string\r\n required: true\r\n description: TCA Policy Scope\r\n policyVersion:\r\n type: string\r\n required: true\r\n description: TCA Policy Scope Version\r\n thresholds:\r\n type: list\r\n required: true\r\n description: Thresholds associated with eventName\r\n entry_schema:\r\n type: onap.datatypes.monitoring.thresholds\r\n onap.datatypes.monitoring.tca_policy:\r\n derived_from: tosca.datatypes.Root\r\n properties:\r\n domain:\r\n type: string\r\n required: true\r\n description: Domain name to which TCA needs to be applied\r\n default: measurementsForVfScaling\r\n constraints:\r\n - equal: measurementsForVfScaling\r\n metricsPerEventName:\r\n type: list\r\n required: true\r\n description: Contains eventName and threshold details that need to be applied to given eventName\r\n entry_schema:\r\n type: onap.datatypes.monitoring.metricsPerEventName\r\n onap.datatypes.monitoring.thresholds:\r\n derived_from: tosca.datatypes.Root\r\n properties:\r\n closedLoopControlName:\r\n type: string\r\n required: true\r\n description: Closed Loop Control Name associated with the threshold\r\n closedLoopEventStatus:\r\n type: string\r\n required: true\r\n description: Closed Loop Event Status of the threshold\r\n constraints:\r\n - valid_values:\r\n - ONSET\r\n - ABATED\r\n direction:\r\n type: string\r\n required: true\r\n description: Direction of the threshold\r\n constraints:\r\n - valid_values:\r\n - LESS\r\n - LESS_OR_EQUAL\r\n - GREATER\r\n - GREATER_OR_EQUAL\r\n - EQUAL\r\n fieldPath:\r\n type: string\r\n required: true\r\n description: Json field Path as per CEF message which needs to be analyzed for TCA\r\n constraints:\r\n - valid_values:\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedTotalPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedOctetsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedUnicastPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedMulticastPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedBroadcastPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedDiscardedPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedErrorPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedTotalPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedOctetsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedUnicastPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedMulticastPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedBroadcastPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedDiscardedPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].receivedErrorPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedTotalPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedOctetsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedUnicastPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedMulticastPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedBroadcastPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedDiscardedPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedErrorPacketsDelta\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedTotalPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedOctetsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedUnicastPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedMulticastPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedBroadcastPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedDiscardedPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.vNicPerformanceArray[*].transmittedErrorPacketsAccumulated\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuIdle\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuUsageInterrupt\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuUsageNice\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuUsageSoftIrq\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuUsageSteal\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuUsageSystem\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].cpuWait\r\n - $.event.measurementsForVfScalingFields.cpuUsageArray[*].percentUsage\r\n - $.event.measurementsForVfScalingFields.meanRequestLatency\r\n - $.event.measurementsForVfScalingFields.memoryUsageArray[*].memoryBuffered\r\n - $.event.measurementsForVfScalingFields.memoryUsageArray[*].memoryCached\r\n - $.event.measurementsForVfScalingFields.memoryUsageArray[*].memoryConfigured\r\n - $.event.measurementsForVfScalingFields.memoryUsageArray[*].memoryFree\r\n - $.event.measurementsForVfScalingFields.memoryUsageArray[*].memoryUsed\r\n - $.event.measurementsForVfScalingFields.additionalMeasurements[*].arrayOfFields[0].value\r\n severity:\r\n type: string\r\n required: true\r\n description: Threshold Event Severity\r\n constraints:\r\n - valid_values:\r\n - CRITICAL\r\n - MAJOR\r\n - MINOR\r\n - WARNING\r\n - NORMAL\r\n thresholdValue:\r\n type: integer\r\n required: true\r\n description: Threshold value for the field Path inside CEF message\r\n version:\r\n type: string\r\n required: true\r\n description: Version number associated with the threshold"

}

The sample response will contain "id": "604bed36cbb899758eb2734d", which can be used in get, update, and distribute a policy model to policy framework engine.

Distributing Deployment Artifact to DCAE Dashboard Using API ( Introduced in Honolulu Release)

Below APIs are introduced to distribute the generated deployment artifact (blueprint) to DCAE Dashboard. Once distributed, it will be available in DCAE dashboard for deployment.

Under MOD APIs → swagger UI, you will find this API as below.

Deployment Artifact ID can retrieved by calling get all deployment artifacts using below API

API Reference : swagger.yml - swagger.yml

Note: Update in progress...

3 Comments

Barnali Sengupta

I am trying to use admin/admin@mod to log into the DCAE MOD GUI, but it doesn't work. Is there a different login credentials that I can use to see the UI?

Sivakumar Santharam

Before bring up the components, make sure you have set DCAE_HOSTNAME=localhost or the host the backend is deployed.

If not set, UI won't be able to communicate to the backend.

Barnali Sengupta

Thanks for your reply. I did set the DCAE_HOSTNAME to the mongo db server, but it was not working. Today I tried cleaning up everything and reran the docker compose command and I am able to log into ModFE. The OnboardingTools tab doesn't seem to be working. Am I missing something?