Pre-requisite

Set up the OOM infrastructure; I used OOM on Rancher in OpenStack.

Running vFW demo - Close-loop

Video of onboarding

I had a hiccup at the end because I already had another vFW deployed, so the IP it tried to assign was already in use. To fix this, I removed the existing stack.

Video of instantiation

I had a hiccup with the vFW_PG because I pre-loaded on the wrong instance. Once I realized this, all went well.

Let's start by running the init goal (assuming the K8S namespace where ONAP has been installed is "onap"):

    cd oom/kubernetes/robot
    ./demo-k8s.sh onap init

Result:

Log in to the VNC. The password is password.

<kubernetes-vm-ip>:30211

Open the browser and navigate to the ONAP Portal

Log in as the Designer user: cs0008/demo123456!

http://portal.api.simpledemo.onap.org:8989/ONAPPORTAL/login.htm

- Vendor License Model creation

- Open the SDC application and click on the OnBoard tab.

- Click Create new VLM (License Model)

- Use onap as the Vendor Name, and enter a description

- Click Save

- Click License Key Groups and Add License Key Group, then fill in the required fields

- Click Entitlement Pools and Add Entitlement Pool, then fill in the required fields

- Click Feature Groups and Add Feature Group, then fill in the required fields. Also, under the Entitlement Pools tab, drag the created entitlement pool to the left. Do the same for the License Key Groups

- Click License Agreements and Add License Agreement, then fill in the required fields. Under the Feature Groups tab, drag the feature group created previously.

- then check-in and submit

- go back to OnBoard page

- Vendor Software Product onboarding and testing

- click Create a new VSP

- First we create the vFW sinc; give it a name, e.g. vFW_SINC. Select the Vendor (onap) and the Category (Firewall), and give it a description.

- Click on the warning, and add a license model

- Get the zip package: vfw-sinc.zip

- Click on overview, and import the zip

- Click Proceed to validation then check-in then submit

- click Create a new VSP

- Then we create the vFW packet generator; give it a name, e.g. vFW_PG. Select the Vendor (onap) and the Category (Firewall), and give it a description.

- Click on the warning, and add a license model

- Get the zip package: vfw_pg.zip

- Click on overview, and import the zip

- Click Proceed to validation then check-in then submit

- Go to SDC home. Click on the top right icon with the orange arrow.

- Import the VSPs one by one

- Submit both for testing

- Log out and log in as the tester: jm0007/demo123456!

- Go to the SDC portal

- Test and accept the two VSPs

- Service Creation

- Logout and login as the designer: cs0008/demo123456!

- Go to the SDC home page

- Click Add a Service

- Fill in the required fields

- Click Create

- Click on the Composition left tab

- In the search bar, type "vFW" to narrow the list down to the created VSPs, and drag them both.

- Then click Submit for Testing

- Service Testing

- Logout and Login as the tester: jm0007/demo123456!

- Go to the SDC portal

- Test and accept the service

- Service Approval

- Logout and Login as the governor: gv0001/demo123456!

- Go to the SDC portal

- Approve the service

- Service Distribution

- Logout and Login as the operator: op0001/demo123456!

- Go to the SDC portal

- Distribute the service

- Click on the Monitor tab on the left, then click on the arrow to open the distribution status

- Wait until everything is distributed (green tick)

- Service Instance creation:

- Logout and Login as the user: demo/demo123456!

- Go to the VID portal

- Click the Browse SDC Service Models tab

- Click Deploy on the service to deploy

- Fill in the required fields; call it vFW_Service, for instance. Once done, you are redirected to a new screen

- Click Add VNF, and select the vFW_SINC VNF first

- Fill in the required fields. Call it vFW_SINC_VNF, for instance.

- Click Add VNF again, and select the vFW_PG VNF

- Fill in the required fields. Call it vFW_PG_VNF, for instance.

- SDNC preload:

Then go to the SDNC Admin portal and create an account

<kubernetes-host-ip>:30201/signup

Log in to the SDNC admin portal

<kubernetes-host-ip>:30201/login

- Click Profiles then Add VNF Profile

- The VNF Type is a string that looks like this: VfwPg..base_vpkg..module-0. It can be copied from VID when attempting to create the VF-Module

- Enter 100 for the Availability Zone Count

- Enter vFW for Equipment Role

- Repeat the same for the other VNF

Pre-load the vFW SINC. Mind the following values:

service-type: the service instance ID of the service instance created in step 9

vnf-name: the name to give to the VF-Module. The same name has to be reused when creating the VF-Module

vnf-type: the same as the one used to add the profile in the SDNC admin portal

generic-vnf-name: the name of the created VNF, see step 9f

vfw_name_0: the same as the generic-vnf-name

generic-vnf-type: can be found in VID; please see the video if not found.

dcae_collector_ip: Has to be the IP address of the dcaedoks00 VM

Make sure image_name, flavor_name, public_net_id, onap_private_net_id, onap_private_subnet_id, key_name and pub_key reflect your environment.

Expected result:

    { "output": { "svc-request-id": "robot12", "response-code": "200", "ack-final-indicator": "Y" } }

Pre-load the vFW PG. Mind the following values:

service-type: the service instance ID of the service instance created in step 9

vnf-name: the name to give to the VF-Module. The same name has to be reused when creating the VF-Module

vnf-type: the same as the one used to add the profile in the SDNC admin portal

generic-vnf-name: the name of the created VNF, see step 9h

vpg_name_0: the same as the generic-vnf-name

generic-vnf-type: can be found in VID; please see the video if not found.

Make sure image_name, flavor_name, public_net_id, onap_private_net_id, onap_private_subnet_id, key_name and pub_key reflect your environment.

Expected result:

    { "output": { "svc-request-id": "robot12", "response-code": "200", "ack-final-indicator": "Y" } }

- Create the VF-Module for vFW_SINC

- The instance name must be the vnf-name set in the preload phase.

- After a few minutes, the stack should be created.

- Create the VF-Module for vFW_PG

- The instance name must be the vnf-name set in the preload phase.

- After a few minutes, the stack should be created.
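Both preload requests are expected to return the same acknowledgement payload. If you script the preload, a check along the following lines can catch a failed request early. This is only a sketch: the function name preload_accepted is made up for illustration, and it relies on nothing beyond the three fields shown in the expected result above.

```python
import json


def preload_accepted(body: str) -> bool:
    """Return True when an SDNC preload response reports success.

    Only the fields shown in the expected result are inspected:
    response-code must be "200" and ack-final-indicator must be "Y".
    """
    output = json.loads(body).get("output", {})
    return (
        output.get("response-code") == "200"
        and output.get("ack-final-indicator") == "Y"
    )


# The expected result from this page, used as sample input.
sample = (
    '{ "output": { "svc-request-id": "robot12", '
    '"response-code": "200", "ack-final-indicator": "Y" } }'
)
print(preload_accepted(sample))
```

Anything other than True means the preload should be re-checked before creating the VF-Module.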

Close loop

Run the heatbridge robot tag to tell AAI about the relationship between the created Heat stack (the SINC one) and the service instance ID.

To run this, you need:

- the heat stack name of the vSINC

- the service instance id

- Upload operational policy: this is to tell policy that for this specific instance, we should apply this policy.

Retrieve from the MSO Catalog the modelInvariantUuid for the vFW_PG. In the request below, specify the service-model-name, as defined in step 5.c.

    curl -X GET \
      'http://<kubernetes-host>:30223/ecomp/mso/catalog/v2/serviceVnfs?serviceModelName=<service-model-name>' \
      -H 'Accept: application/json' \
      -H 'Authorization: Basic SW5mcmFQb3J0YWxDbGllbnQ6cGFzc3dvcmQxJA==' \
      -H 'Content-Type: application/json' \
      -H 'X-FromAppId: Postman' \
      -H 'X-TransactionId: get_service_vnfs'

Based on the payload below, result would be: 86a1bdd8-1f59-4796-bf30-3002108068f6
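The Authorization headers used in the requests on this page are plain HTTP Basic credentials, i.e. base64 of "username:password". If your deployment uses different credentials, the header value has to be regenerated. A minimal sketch, with helper names that are purely illustrative:

```python
import base64
from typing import Tuple


def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an HTTP Basic Authorization header."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def decode_basic_auth(header: str) -> Tuple[str, str]:
    """Split a 'Basic <token>' header back into (username, password)."""
    token = header.split(" ", 1)[1]
    username, _, password = base64.b64decode(token).decode("utf-8").partition(":")
    return username, password


# Header taken from the MSO catalog request on this page.
print(decode_basic_auth("Basic SW5mcmFQb3J0YWxDbGllbnQ6cGFzc3dvcmQxJA=="))
```

Decoding the headers this way is also a quick method to find out which user a given request on this page runs as.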

Under

oom/kubernetes/policy/script

invoke the script as follows:

    Usage: update-vfw-op-policy.sh <k8s-host> <policy-pdp-node-port> <policy-drools-node-port> <resource-id>

    ./update-vfw-op-policy.sh 10.195.197.53 30220 30221 86a1bdd8-1f59-4796-bf30-3002108068f6
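The <resource-id> argument is the modelInvariantUuid retrieved above, and a dropped character when copy/pasting it is easy to miss. A quick sanity check before invoking the script could look like the sketch below; the helper name is hypothetical and it only verifies that the value is a well-formed UUID, not that it exists in the catalog.

```python
import uuid


def is_valid_resource_id(value: str) -> bool:
    """Return True when value parses as a UUID (32 hexadecimal digits)."""
    try:
        uuid.UUID(value)
    except ValueError:
        return False
    return True


print(is_valid_resource_id("86a1bdd8-1f59-4796-bf30-3002108068f6"))  # True
```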

With debug enabled (/bin/bash -x), the result can look like:

- Mount APPC

Get the VNF instance ID, either through VID or through AAI. The AAI request is below:

    curl -X GET \
      https://<kubernetes-host>:30233/aai/v8/network/generic-vnfs/ \
      -H 'Accept: application/json' \
      -H 'Authorization: Basic QUFJOkFBSQ==' \
      -H 'Content-Type: application/json' \
      -H 'X-FromAppId: Postman' \
      -H 'X-TransactionId: get_generic_vnf'

In the result, search for the vFW_PG_VNF and get its vnf-id. In the payload below, it would be e6fd60b4-f436-4a21-963c-cc9060127633

- Get the public IP address of the Packet Generator from your deployment.

In the curl request below, replace <vnf-id> with the VNF ID retrieved at step 2.a (it needs to be updated in two places), and replace <vnf-ip> with the IP retrieved at step 2.b.

    curl -X PUT \
      http://<kubernetes-host>:30230/restconf/config/network-topology:network-topology/topology/topology-netconf/node/<vnf-id> \
      -H 'Accept: application/xml' \
      -H 'Authorization: Basic YWRtaW46S3A4Yko0U1hzek0wV1hsaGFrM2VIbGNzZTJnQXc4NHZhb0dHbUp2VXkyVQ==' \
      -H 'Content-Type: text/xml' \
      -d '<node xmlns="urn:TBD:params:xml:ns:yang:network-topology">
        <node-id><vnf-id></node-id>
        <host xmlns="urn:opendaylight:netconf-node-topology"><vnf-ip></host>
        <port xmlns="urn:opendaylight:netconf-node-topology">2831</port>
        <username xmlns="urn:opendaylight:netconf-node-topology">admin</username>
        <password xmlns="urn:opendaylight:netconf-node-topology">admin</password>
        <tcp-only xmlns="urn:opendaylight:netconf-node-topology">false</tcp-only>
      </node>'
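Since the VNF ID has to be substituted in two places, it can be convenient to generate the URL and the payload instead of editing them by hand. The sketch below does the lookup and the substitution. It assumes the AAI response is an object with a "generic-vnf" list whose entries carry "vnf-name" and "vnf-id" (adjust if your payload differs); the function names and the 192.0.2.10 address are placeholders, not values from this deployment.

```python
from typing import Optional, Tuple

NETCONF_NS = "urn:opendaylight:netconf-node-topology"


def find_vnf_id(generic_vnfs: dict, vnf_name: str) -> Optional[str]:
    """Return the vnf-id of the VNF called vnf_name, or None if absent.

    Assumes the shape {"generic-vnf": [{"vnf-id": ..., "vnf-name": ...}]}.
    """
    for vnf in generic_vnfs.get("generic-vnf", []):
        if vnf.get("vnf-name") == vnf_name:
            return vnf.get("vnf-id")
    return None


def build_mount_request(k8s_host: str, vnf_id: str, vnf_ip: str) -> Tuple[str, str]:
    """Return (url, xml_body) for the NETCONF mount PUT shown above."""
    url = (
        f"http://{k8s_host}:30230/restconf/config/"
        "network-topology:network-topology/topology/topology-netconf/"
        f"node/{vnf_id}"
    )
    body = (
        '<node xmlns="urn:TBD:params:xml:ns:yang:network-topology">'
        f"<node-id>{vnf_id}</node-id>"
        f'<host xmlns="{NETCONF_NS}">{vnf_ip}</host>'
        f'<port xmlns="{NETCONF_NS}">2831</port>'
        f'<username xmlns="{NETCONF_NS}">admin</username>'
        f'<password xmlns="{NETCONF_NS}">admin</password>'
        f'<tcp-only xmlns="{NETCONF_NS}">false</tcp-only>'
        "</node>"
    )
    return url, body


# Hypothetical AAI answer reduced to the two fields used here.
sample = {
    "generic-vnf": [
        {"vnf-id": "e6fd60b4-f436-4a21-963c-cc9060127633", "vnf-name": "vFW_PG_VNF"}
    ]
}
vnf_id = find_vnf_id(sample, "vFW_PG_VNF")
url, body = build_mount_request("10.195.197.53", vnf_id, "192.0.2.10")
print(url)
```

The returned url and body can then be passed to curl (or any HTTP client) with the same headers as in the request above.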

To verify that the NETCONF connection has been successfully established, use the following request (replace <vnf-id> with yours):

    curl -X GET \
      http://<kubernetes-host>:30230/restconf/operational/network-topology:network-topology/topology/topology-netconf/node/<vnf-id> \
      -H 'Accept: application/json' \
      -H 'Authorization: Basic YWRtaW46S3A4Yko0U1hzek0wV1hsaGFrM2VIbGNzZTJnQXc4NHZhb0dHbUp2VXkyVQ=='

Result should be:

Using NETCONF, let's get the streams currently active in our Packet Generator. The number of streams changes over time as a result of the close-loop policy. When the traffic goes over a certain threshold, DCAE publishes an event on the unauthenticated.DCAE_CL_OUTPUT topic; Policy picks it up and notifies APPC, which sends a NETCONF request to the packet generator to adjust the traffic it's sending.

    curl -X GET \
      http://10.195.197.53:30230/restconf/config/network-topology:network-topology/topology/topology-netconf/node/e6fd60b4-f436-4a21-963c-cc9060127633/yang-ext:mount/sample-plugin:sample-plugin/pg-streams \
      -H 'Accept: application/json' \
      -H 'Authorization: Basic YWRtaW46S3A4Yko0U1hzek0wV1hsaGFrM2VIbGNzZTJnQXc4NHZhb0dHbUp2VXkyVQ=='

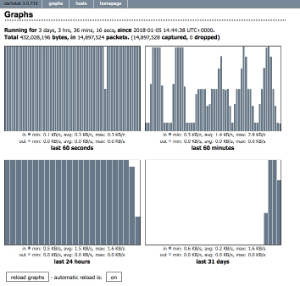
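To follow the number of active streams over time without reading the JSON by eye, the answer of the pg-streams request can be summarized with a few lines of Python. This is a sketch under an assumption: it expects the body to look like {"pg-streams": {"pg-stream": [{"id": ..., "is-enabled": ...}]}}; check this against your own output. The stream names in the sample are illustrative only.

```python
import json
from typing import List


def enabled_stream_ids(payload: str) -> List[str]:
    """Return the ids of the streams flagged as enabled.

    Assumes {"pg-streams": {"pg-stream": [{"id": ..., "is-enabled": ...}]}};
    is-enabled is compared as text so both "true" and true are accepted.
    """
    streams = json.loads(payload).get("pg-streams", {}).get("pg-stream", [])
    return [s["id"] for s in streams if str(s.get("is-enabled")).lower() == "true"]


# Illustrative payload, not captured from a real deployment.
sample = json.dumps(
    {
        "pg-streams": {
            "pg-stream": [
                {"id": "fw_udp1", "is-enabled": "true"},
                {"id": "fw_udp2", "is-enabled": "true"},
                {"id": "fw_udp3", "is-enabled": "false"},
            ]
        }
    }
)
print(len(enabled_stream_ids(sample)), enabled_stream_ids(sample))
```

Polling this count every minute or so should show it moving once the close-loop is active.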

Browse to the zdfw1fwl01snk01 on port 667 to see a graph representing the traffic being received:

http://<zdfw1fwl01snk01>:667/

As the top right square of the graph below shows, the first two fluctuations go from very low to very high. This is when close-loop isn't running.

Once close-loop is running, you'll see some medium bars.

Check the events sent by Virtual Event Collector (VES) to Threshold Crossing Analytic (TCA) app:

    curl -X GET \
      http://<K8S_IP>:3904/events/unauthenticated.SEC_MEASUREMENT_OUTPUT/group1/C1 \
      -H 'Accept: application/json' \
      -H 'Content-Type: application/cambria'

The VES resides in the VNF itself, whereas the TCA is an application running on Cask, a DCAE component.

Check the events sent by TCA on unauthenticated.DCAE_CL_OUTPUT:

    curl -X GET \
      http://<K8S_IP>:3904/events/unauthenticated.DCAE_CL_OUTPUT/group1/C1 \
      -H 'Accept: application/json' \
      -H 'Content-Type: application/cambria'

Those events are the result of the TCA application: TCA has noticed that an event crossed a given threshold, and hence sends a message on that particular topic. Policy then grabs this event and performs the appropriate action, as defined in the policy. In the case of vFWCL, Policy sends an event on the APPC_CL topic, which APPC consumes. This triggers a NETCONF request to the packet generator to adjust the traffic.
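When debugging the loop, it helps to summarize what came back from a topic rather than scroll through raw output. The sketch below makes two assumptions that you should verify against your own responses: that the events endpoint returns a JSON array whose items are JSON-encoded strings, and that closed-loop events carry a closedLoopEventStatus field. The helper names are invented for this example.

```python
import json
from collections import Counter
from typing import Any, Dict, List


def decode_events(response_body: str) -> List[Dict[str, Any]]:
    """Decode a topic response into a list of event dictionaries.

    Items that are JSON-encoded strings are parsed; items that are
    already objects are kept as they are.
    """
    items = json.loads(response_body)
    return [json.loads(i) if isinstance(i, str) else i for i in items]


def count_by(events: List[Dict[str, Any]], key: str) -> Counter:
    """Count events per value of key; events lacking it count as '<missing>'."""
    return Counter(str(e.get(key, "<missing>")) for e in events)


# Illustrative response body built by hand (field name is an assumption).
sample = json.dumps(
    [
        json.dumps({"closedLoopEventStatus": "ONSET"}),
        json.dumps({"closedLoopEventStatus": "ONSET"}),
        json.dumps({"closedLoopEventStatus": "ABATED"}),
    ]
)
print(count_by(decode_events(sample), "closedLoopEventStatus"))
```

An empty list on DCAE_CL_OUTPUT while SEC_MEASUREMENT_OUTPUT has events would suggest the threshold is never crossed, or that TCA isn't running.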

I hope everything worked for you, if not, please leave a comment. Thanks

142 Comments

Vidhu Shekhar Pandey

Hi Alexis,

I am using OOM 1.1.0 release and following the steps/videos posted in this wiki. At step 10g (create VF-Module for vFW_SINC) I am facing the error “MSO failure”

and VF module on the VID page shows orchestration status as “pending delete”. Also the stack doesn’t get created in my OpenStack environment. How do I get past this? Any suggestions on what could be failing/missing.

All the containers are in running state, and also robot health check is passing for all except for DCAE. Also, I am able to ping AAI from MSO container ( I saw that as an issue posted in some mailing list queries). But, upon logging into AAI portal I do not see any VNF/Service there. Also, as shown in the video, when I fetch the service-subscriptions from AAI, I see “Error java.lang.NullPointerException” there. Is this the trouble?

I understand that the image_name, flavor_name, public_net_id, onap_private_net_id, onap_private_subnet_id, key_name and pub_key should already be created in my OpenStack before I execute this step 10f. Do I also need to pre-create unprotected_private_net_id, unprotected_private_subnet_id, protected_private_net_id, protected_private_subnet_id ?

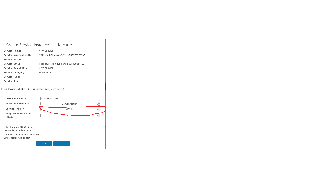

The instantiation transactions for the failed service instance are as below in the VID screenshot.

Thanks,

Vidhu

Alexis de Talhouët

Hi, no you don't need to create the following resources: unprotected_private_net_id, unprotected_private_subnet_id, protected_private_net_id, protected_private_subnet_id; they will be created by the vFW_PG deployment.

Based on the error I can see in your screenshot, you seem to face Authentication Failure. So I believe you have wrong username/password in your initial configuration. Please double check and confirm.

Thanks

Vidhu Shekhar Pandey

Thanks Alexis for the clarity on OpenStack resources. I will check on the authentication error and get back.

Rahul Sharma

Alexis de Talhouët: Thank you for the page; helped me create the vFW deployment on my local Openstack cloud.

Couple of things that I found during my exercise:

Not sure why vFW_PG deployment did not create it for me? ('cause I instantiated vFW_PG before vFW_SINC)

    2017-12-19T20:13:27.810Z|2017-12-19T20:13:28.847Z|08d06356-75aa-46b6-a2f9-1a2381185b38|575cea7d-d1a2-4b9e-8909-06c0fd7f03fc|Thread-287||CreateVfModule|BPELClient|OpenStack|CreateStack|ERROR|505|Create VF Module vFW/vFW_PG 0::VfwPg..base_vpkg..module-0 in RegionOne/09f7009ea6c94354b3d82a658103da74: 400 Bad Request: The server could not comply with the request since it is either malformed or otherwise incorrect., error.type=StackValidationFailed, error.message=Property error: : resources.vpg_private_0_port.properties.network: : Error validating value 'zdfw1fwl01_unprotected': Unable to find network with name or id 'zdfw1fwl01_unprotected'|ccebf893-0eba-474c-852e-56ae1b0702c9|INFO|0|10.42.246.118|1037|mso-3784963895-bnkb1|10.42.0.1||||vFW_PG_Module||||

Alexis de Talhouët

Hi, I'm aware of point 1. and it's going to be resolved soon. As for point 2. you need first to create the vFW_SINC before the vFW_PG, as it's the vFW_SINC that creates the network resources.

Alexis de Talhouët

I just noticed I said the opposite in my previous comment, the source of truth is the written workflow, hence sinc then pg. Thanks and sorry for the confusion.

Rahul Sharma

Thanks; yes it's Sinc that creates the network.

Nishank Trivedi

Hi Rahul,

On a re-setup, for some reason I am seeing a similar error. Can you please let me know what you added in "GLOBAL_INJECTED_SCRIPT_VERSION" in /dockerdata-nfs/onap/robot/eteshare/config/vm_properties.py?

That would help.

Thanks,

Nishank

Rahul Sharma

Nishank Trivedi, hello,

I just added an empty string as its value; so:

Alexis de Talhouët

Sorry for this regression, fix is here: https://gerrit.onap.org/r/#/c/29525/

Nishank Trivedi

Thanks Rahul. Co-incidentally, I did a similar thing. I just added a random version like "1.0", re-ran robot script. it failed. Then, I removed GLOBAL_INJECTED_SCRIPT_VERSION. Somehow, it did the trick!

But now thanks to Alexis' fix fresh image-pullers probably won't encounter it.

Thanks,

Nishank

Vidhu Shekhar Pandey

Hi Alexis,

Now the stacks are getting created in my OpenStack after some changes in network parameters. Screen shot below shows the instances and network topology:

To brief you:

I am using the json provided in step 10e and 10f (for pre-loading) and replacing the values for parameters mentioned in these steps according to my OpenStack environment. In earlier attempts the stacks were getting created but then were getting rolled back as well. The reason found was that OpenStack was expecting a network in range of 10.0.100.0 to be present instead of 10.0.0.0. So I created a private network “onap_net” and a sub net “onap_sub” in that range in my OpenStack tenant and changed the following network specific vnf-parameters

For vFW_SINK:

    {
      "vnf-parameter-name": "onap_private_net_id",
      "vnf-parameter-value": "onap_net"
    },
    {
      "vnf-parameter-name": "onap_private_subnet_id",
      "vnf-parameter-value": "onap_sub"
    },
    {
      "vnf-parameter-name": "onap_private_net_cidr",
      "vnf-parameter-value": "10.0.100.0/24" (originally it was "10.0.0.0/24")
    },

For vFW_PG :

    {
      "vnf-parameter-name": "onap_private_net_id",
      "vnf-parameter-value": "onap_net"
    },
    {
      "vnf-parameter-name": "onap_private_subnet_id",
      "vnf-parameter-value": "onap_sub"
    },
    {
      "vnf-parameter-name": "onap_private_net_cidr",
      "vnf-parameter-value": "10.0.100.0/24" (originally it was "10.0.0.0/16")
    },
    {
      "vnf-parameter-name": "vpg_private_ip_1",
      "vnf-parameter-value": "10.0.100.112" (originally it was "10.0.80.2")
    },

Thanks for the steps/videos provided in this wiki. All went fine for me except that I had to make the above network changes.

Regards,

Vidhu

Rahul Sharma

Vidhu Shekhar Pandey: My understanding is that 10.0.0.0/16 should have worked for an IP address 10.0.100.x, since /16 basically means you are not masking the last 2 octets. /16 gives you 65534 hosts. See https://www.aelius.com/njh/subnet_sheet.html
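For anyone who wants to double-check the arithmetic, Python's standard ipaddress module can confirm which of the CIDRs mentioned in this thread contain a given address. The helper names below are just for illustration.

```python
import ipaddress


def usable_hosts(cidr: str) -> int:
    """Number of host addresses in cidr, excluding network and broadcast.

    Meant for ordinary IPv4 subnets (prefix /30 or shorter).
    """
    return ipaddress.ip_network(cidr).num_addresses - 2


def contains(cidr: str, ip: str) -> bool:
    """Return True when ip belongs to the network described by cidr."""
    return ipaddress.ip_address(ip) in ipaddress.ip_network(cidr)


print(usable_hosts("10.0.0.0/16"))               # 65534
print(contains("10.0.0.0/16", "10.0.100.112"))   # True
print(contains("10.0.0.0/24", "10.0.100.112"))   # False
```

So an address in 10.0.100.x fits in 10.0.0.0/16, but not in the 10.0.0.0/24 that the vFW_SINK preload originally used.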

Pavithra Radhakrishnan

Hi Alexis,

I am following the above steps to bring up ONAP 1.1.0. At step 10g (create VF-Module for vFW_SINC) I am facing a "Maximum number of poll exceeded" error. I have preloaded the values with respect to my OpenStack setup. Please find below the error screenshots and help to figure out why exactly it's failing and how to resolve the issue.

Alexis de Talhouët

Hi, "Maximum number of poll exceeded" doesn't necessarily mean it failed; it means the deployment hasn't completed within the configured number of polls.

Do you actually see the stack in OS? What's the status of the vf-module in VID?

Pavithra Radhakrishnan

Hi Alexis

My VF module is in pending delete state and I could not see stacks created in Openstack

Alexis de Talhouët

Ok, this means something is not correctly configured. To narrow this down, I would advise looking at the BPMN debug logs in MSO. Enable debug logs for BPMN with curl -X GET http://<your-k8s-host>:30223/mso/logging/debug, delete the vf-module from AAI, and retry the create. Otherwise, double check the parameters, make sure you have sufficient quota, etc.

Pavithra Radhakrishnan

Hi Alexis

Deleting the vf-module from the VID portal is not working. Could you please elaborate on how to delete the vf-module from AAI? We could not get anything from the A&AI UI either.

Alexis de Talhouët

You can use the following request, it will give everything for the service under the given customer.

GET https://<kubernetes-host>:30233/aai/v11/business/customers/customer/<customer-name>/service-subscriptions/service-subscription/<service-name>?depth=all

<customer-name> = Demonstration

<service-name> = Name given at step 5

You can also use the get generic-vnf to retrieve all the existing VNFs. If you have a VF module attached to a VNF, it should show under the vf-module list.

GET https://<kubernetes-host>:30233/aai/v11/network/generic-vnfs

Then, get the generic-vnf-id, vf-module-id and vf-module-resource-id, and delete using the following request:

DELETE https://<kubernetes-host>:30233/aai/v11/network/generic-vnfs/generic-vnf/<generic-vnf-id>/vf-modules/vf-module/<vf-module-id>?resource-version=<vf-module-resource-id>
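If several vf-modules have to be cleaned up, the DELETE URLs can be derived from the generic-vnf record itself. The sketch below assumes the record nests its modules as {"vf-modules": {"vf-module": [{"vf-module-id": ..., "resource-version": ...}]}}; the function name, the k8s-host value and the module id in the sample are placeholders.

```python
from typing import List
from urllib.parse import quote


def vf_module_delete_urls(base_url: str, generic_vnf: dict) -> List[str]:
    """Build one DELETE URL per vf-module attached to generic_vnf.

    Assumes modules are listed under generic_vnf["vf-modules"]["vf-module"].
    """
    vnf_id = quote(generic_vnf["vnf-id"], safe="")
    urls = []
    for module in generic_vnf.get("vf-modules", {}).get("vf-module", []):
        module_id = quote(module["vf-module-id"], safe="")
        version = quote(module["resource-version"], safe="")
        urls.append(
            f"{base_url}/aai/v11/network/generic-vnfs/generic-vnf/{vnf_id}"
            f"/vf-modules/vf-module/{module_id}?resource-version={version}"
        )
    return urls


# Hand-written record; only the vnf-id comes from this page.
sample_vnf = {
    "vnf-id": "e6fd60b4-f436-4a21-963c-cc9060127633",
    "vf-modules": {
        "vf-module": [
            {"vf-module-id": "vfm-1", "resource-version": "1515506147118"}
        ]
    },
}
for url in vf_module_delete_urls("https://k8s-host:30233", sample_vnf):
    print("DELETE", url)
```

The resource-version changes on every update of the object, so fetch the record again right before deleting.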

HTH

Pavithra Radhakrishnan

Hi Alexis

The above requests are not returning any output. In Postman it fails with an error connecting to the URL, and through curl it shows an empty reply from the server.

Radhika Kaslikar

Hi,

Were you able to delete the vf module?

I tried with above curl queries as well as through the VID UI, but both dont seem to be working.

Thanks and Regards,

Radhika

Pavithra Radhakrishnan

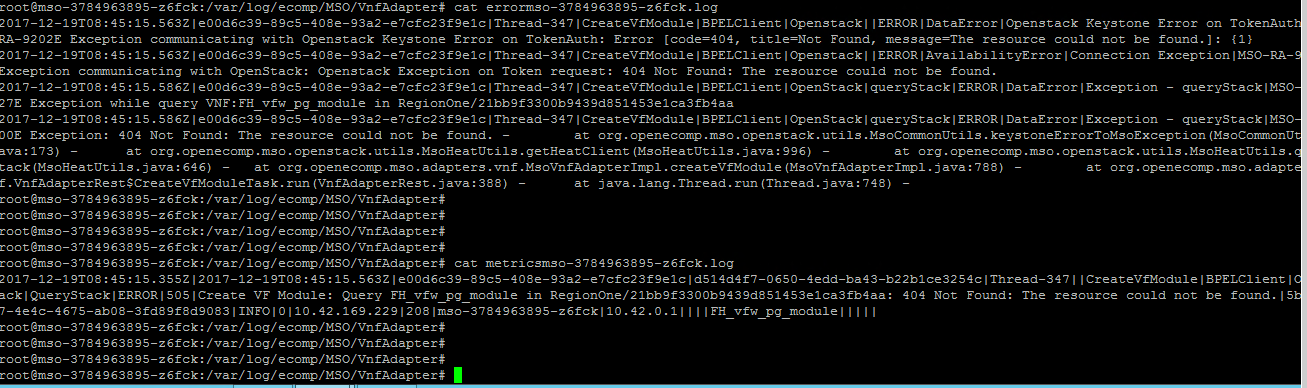

Hi Alexis,

We found Connection Exception and Resource not found error from MSO logs. We made some changes in onap-parameters.yaml file today but it is not getting applied. Please let us know how to make the changes effective and also find the attached error log.

Alexis de Talhouët

Hi, the onap-parameters.yaml is intended to be used once only, before anything is started. If you made some typos in there, I suggest the following:

For example, if you change values under /dockerdata-nfs/onap/mso you have to restart mso application.

HTH

Pavithra Radhakrishnan

Hi Alexis

We modified the parameters.yaml and now it's able to contact OpenStack and start the stack creation. But the stack creation is failing with the error "No fixed IPs available for public_net."

Pavithra Radhakrishnan

Hi Alexis,

The above error was due to the availability of free IPs. We fixed it and successfully completed the demo. Thank you for the steps/videos and comments that helped resolve the issues we faced. The only thing not working is deletion of the VF-module, with the UI as well as with the REST call. We are facing "error connecting to the URL" in Postman, and through curl it shows "empty reply from server".

Alexis de Talhouët

Hi, happy you figured it out and it worked for you.

shubhra garg

Hi Alexis,

Yesterday we went on to add a service instance (vfirewall_service_instance1) in VID and created two VNFs for SYNC and PG, but as we noticed today, the three creations (the service instance and the two VNFs) are missing from the VID UI. PFA the document.

2. But, via curl GET query, we have checked that VNF is present. Snippet has been attached for your reference. PFA

3. Today, we were trying to fill the onap and openstack values in VNF SYNC preload operation performed on SDNC. The file has been attached for your reference. Since, the created VNF's are missing in VID, then how to add VF module ? We need to opt to create the service instance and the VNF's again ( as mentioned in step 1 )

4. Could you please let me know what the protected and unprotected network IDs are? Also, for the ONAP parameters that we need to fill in inside the above file, we have fetched the values from the /dockerdata-nfs/ → "robot/eteshare/config/integration_preload_parameters.py" path. Not sure whether I am doing it right or not?

Could you please help!

Best Regards,

Shubhra

Alexis de Talhouët

Hi, the unprotected network and the protected network are created by the vFW_SINC vf-module. Look at steps 10.e and 10.f to understand which values are expected to be filled in for the two vf-modules, and what they relate to. You shouldn't modify the other values, unless you know what you're doing.

Radhika Kaslikar

Hi All,

We are trying to run demo for vFW.

In this process we are running the script demo-k8s.sh init and it is failing with the below error,

We are getting this error when we receive response from ASDC for POST request made to it, when it distributes model for vFWCL.

Can anyone please help us as to how we can resolve this.

Thanks and Regards,

Radhika.

Alexis de Talhouët

409 Conflict: you're trying to add something that already exists, i.e. trying to overwrite something identified by the passed name, hence a conflict. Maybe you could delete the conflicting resource from SDC using the REST API, then try to run robot again.

Vidhu Shekhar Pandey

Hi Alexis,

I wanted to confirm whether it is possible for OOM to be configured for multiple tenants and regions of the same Openstack? Actually while adding a new VNF, the VID dialog box presents a drop down for tenants/region which makes us believe it is possible to have > 1 tenant/region. However we understand that in onap-parameters.yaml file we can only provide the information of a single tenant/region.

Attaching the screenshot showing the VID dialog box drop down options for VNF instance creations.

Can you please guide us?

Thanks,

Vidhu

Alexis de Talhouët

Hi, out of the box, the OOM onap-parameters.yaml configuration file, as you observed, allows you to configure only one tenant and only one region. You can, however, configure multiple regions and multiple tenants. Note: region names must be unique; you shall never have two regions with the same name. To add a region, you need to do the following:

Note: the identity_service_id is what is used to authenticate to this region; if it's the same as for the other region, use the same one.

    "RegionTwo": { "region_id": "RegionTwo", "clli": "RegionTwo", "aic_version": "2.5", "identity_service_id": "REGION_TWO_KEYSTONE" }

To add the identity_service_id, under identity_services, in the same file, add the following

Replace KEYSTONE_URL_WITH_API_VERSION, USERNAME and ENCRYPTED_PASSWORD

    "REGION_TWO_KEYSTONE": { "identity_url": "KEYSTONE_URL_WITH_API_VERSION", "mso_id": "USERNAME", "mso_pass": "ENCRYPTED_PASSWORD", "admin_tenant": "service", "member_role": "admin", "tenant_metadata": true, "identity_server_type": "KEYSTONE", "identity_authentication_type": "USERNAME_PASSWORD" }

To encrypt the password, use the following SO API

The config is reloaded every minute. To check the running config, use the following request

Replace REGION_NAME, TENANT_NAME, TENANT_ID

- Create a cloud region

    POST https://<k8s>:30233/aai/v11/cloud-infrastructure/cloud-regions/cloud-region/CloudOwner/REGION_NAME

    { "cloud-owner": "CloudOwner", "cloud-region-id": "REGION_NAME", "cloud-type": "SharedNode", "owner-defined-type": "OwnerType", "cloud-region-version": "v1", "cloud-zone": "CloudZone", "sriov-automation": false, "resource-version": "1515506147118", "relationship-list": { "relationship": [ { "related-to": "complex", "related-link": "/aai/v11/cloud-infrastructure/complexes/complex/clli1", "relationship-data": [ { "relationship-key": "complex.physical-location-id", "relationship-value": "clli1" } ] } ] } }

- Create the tenant in the region for the 4 different services with the right tenant id:

    PUT https://<k8s>:30233/aai/v11/cloud-infrastructure/cloud-regions/cloud-region/CloudOwner/REGION_NAME/tenants/

    { "tenant-id": "TENANT_ID", "tenant-name": "TENANT_NAME", "relationship-list": { "relationship": [ { "related-to": "service-subscription", "related-link": "/aai/v11/business/customers/customer/Demonstration/service-subscriptions/service-subscription/vLB", "relationship-data": [ { "relationship-key": "customer.global-customer-id", "relationship-value": "Demonstration" }, { "relationship-key": "service-subscription.service-type", "relationship-value": "vLB" } ] }, { "related-to": "service-subscription", "related-link": "/aai/v11/business/customers/customer/Demonstration/service-subscriptions/service-subscription/vIMS", "relationship-data": [ { "relationship-key": "customer.global-customer-id", "relationship-value": "Demonstration" }, { "relationship-key": "service-subscription.service-type", "relationship-value": "vIMS" } ] }, { "related-to": "service-subscription", "related-link": "/aai/v11/business/customers/customer/Demonstration/service-subscriptions/service-subscription/vFWCL", "relationship-data": [ { "relationship-key": "customer.global-customer-id", "relationship-value": "Demonstration" }, { "relationship-key": "service-subscription.service-type", "relationship-value": "vFWCL" } ] }, { "related-to": "service-subscription", "related-link": "/aai/v11/business/customers/customer/Demonstration/service-subscriptions/service-subscription/vCPE", "relationship-data": [ { "relationship-key": "customer.global-customer-id", "relationship-value": "Demonstration" }, { "relationship-key": "service-subscription.service-type", "relationship-value": "vCPE" } ] } ] } }

Vidhu Shekhar Pandey

Thanks a lot Alexis.

Bin Yang

Adding a few clarifications and facts which I realized recently.

First of all, with ONAP in the Amsterdam release, the VIM registration is not centralized in one place. As far as I know, there are two places that store the VIM registration information concerning service/VNF instantiation: SO and AAI. This is very important context for anyone who wants to add or modify VIM registration information.

Several facts you need to know to register a new VIM instance to ONAP for orchestration:

1) To put the VIM registration into AAI, there are various ways to do that; the most user-friendly one is to leverage the ESR portal, which will trigger MultiCloud to discover VIM/cloud resources automatically. Please refer to the docs at http://onap.readthedocs.io/en/latest/submodules/aai/esr-server.git/docs/platform/installation.html

MultiCloud is using this VIM registration to mediate API calls from SO/APPC/DCAEgen2/VFC/etc. to underlying VIMs

2) To put the VIM registration into SO, you need to log in to the SO VM and attach to the container; then you can change the config file as stated above: https://wiki.onap.org/display/DW/vFWCL+instantiation%2C+testing%2C+and+debuging?focusedCommentId=22252150#comment-22252150

I guess VID is using this VIM registration information.

3) The 2 VIM registration procedures above are loosely coupled

Let me give you several examples:

Case 1: SO instantiates a VF module without MultiCloud

In this case, the VIM registration information refers directly to the identity services of the underlying VIMs (those VIMs expose the OpenStack Keystone v2.0 API), so the identity_url looks like:

"identity_url": "http://10.12.25.2:5000/v2.0",

Case 2: SO instantiates a VF module via MultiCloud

In this case, the VIM registration information refers to the MultiCloud endpoints, which act as a proxy to the underlying VIMs, so the identity_url looks like:

"identity_url": "http://10.0.14.1:80/api/multicloud-titanium_cloud/v0/pod25_RegionOne/identity/v2.0",

Nishank Trivedi

Hi Alexis,

Thanks to this detailed doc here we have been able to successfully spawn a vFirewall and sink. Thanks for that.

However, I am facing an issue of not being able to ssh into the vfirewall and vsink.

I am trying the following command ssh -i private_key.pvt root@171.168.1.153, the IP being that of the vfw.

This private key was located inside robot (as suggested in Verifying your ONAP Deployment#sshkeys)

(/var/lib/docker/aufs/mnt/3fbf976cce6d850015bafb79f9f8992bcd6802b2b99937024f667c6844459ad2/var/opt/OpenECOMP_ETE/robot/assets/keys/robot_ssh_private_key.pvt)

I also tried using the pem file corresponding to the public key I used in the preload step, but I didn't succeed.

The error upon doing ssh is "access denied (public key)". I have also tried chmod 600 private_key.pvt because I saw that mentioned as an issue by some, but it didn't help.

I would really appreciate hints on :

Thanks,

Nishank

Alexis de Talhouët

During the preload step, you added a public key. To ssh, you should use the private key that goes with that public key.

The username might be ubuntu if it's a vanilla Ubuntu image.

Nishank Trivedi

Yeah , thanks for confirming about the key, Alexis. It helps!

vishwanath jayaraman

Alexis,

When using DCAE, I used ssh-keygen to create a key on the OpenStack machine, took the private and public keys and stuck them in the onap-parameters.yaml file.

I was next able to ssh into the DCAE VMs without having to input password or specify keyfile.

For the VNF VM case, do I do something similar from the OpenStack machine? If yes, I know that the public key needs to be used as part of preload step, however where would the private key go and does it require any services restart for it to take effect?

Thanks

Alexis de Talhouët

Hi, for the VNF, it's the same. You have a priv/pub key pair that should be defined in OS. Use the pub key as part of the preload.

Bin Sun

Hi Alexis,

I have followed your directions for the closed loop, but on the 'http://<zdfw1fwl01snk01>:667/' page I can see the graph but there is no data in it.

The process reported no errors.

Could you help with this?

Alexis de Talhouët

If the graph has no data, it means the packet generator isn't generating any traffic. Try logging in to the packet generator VM and check whether cloud-init reported errors. You could also delete those stacks and re-deploy them.
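A rough Python sketch of the cloud-init check suggested above: it scans log text for lines that look like failures. The marker list is an assumption, and so is the log location (on Ubuntu cloud images the boot script output usually ends up in /var/log/cloud-init-output.log).

```python
import re

# Markers that commonly accompany a failed boot script; this list is an assumption.
_PROBLEM = re.compile(r"(error|failed|traceback|no such file)", re.IGNORECASE)


def find_cloud_init_problems(log_text):
    """Return (line_number, line) pairs that look like failures."""
    return [
        (number, line.rstrip())
        for number, line in enumerate(log_text.splitlines(), start=1)
        if _PROBLEM.search(line)
    ]
```

Run it on the packet generator VM against the contents of the log file; an empty result does not prove the install scripts succeeded, it only means none of the assumed markers appeared.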

hideyuki izumi

Hi Alexis

I'm now attempting to create the testing view of vFWCL, and I have a question about section 9, Service Distribution.

In this section, the expected-output picture has no green tick on DCAE.

Is it correctly distributed?

What should I look at on the monitor to tell whether vFW_sinc and pg were distributed correctly?

I'd be glad to hear your answer.

best regards

hideyuki

Alexis de Talhouët

Hi, the reason why DCAE has no green tick is probably that it doesn't acknowledge the received message. It could be some other reason; I have to say I'm not too sure about this one.

The monitor tab lets you make sure the various components have downloaded the artifacts from SDC when the service is distributed (SO, AAI, and DCAE when it runs).

hideyuki izumi

dear Alexis

Maybe I asked too many questions, so I have trimmed them down to the essentials.

Thank you for your reply.

So, is it correct to conclude that distribution succeeded if the overall distribution shows a green tick as deployed, regardless of whether individual components (like DCAE) have one?

And if we want to be sure whether an individual component received the distribution, should we check whether that component downloaded the artifacts?

Is that right? (Does the number of downloaded artifacts affect the result?)

best regards

hideyuki

Alexis de Talhouët

I'm not too sure about this, I'm not an SDC expert. I suggest you ask the mailing list about this. SDC experts will provide better insight.

hideyuki izumi

Alexis

thanks for the reply on your busy schedule

I'll try that solution.

best regards

hideyuki

RAMANJANEYUL AKULA

Hi Alex,

Thanks a lot for the wonderful document. It really gives step by step instructions on how to achieve the close loop use case for vFW.

I'm trying to follow it and create Closed Loop for generic VM (ubuntu).

I was able to deploy the VM. But then when I tried to perform heatbridge creation using demo.sh on robot,

first thing that I noticed is we need to add a mapping in

OpenECOMP_ETE/robot/assets/service_mappings.py to find the vserver name.

I have added that. But then my AAI named query is failing with 404 error

<AAI IP>:8443/aai/search/named-query

{"query-parameters":

{ "named-query":

{

"named-query-uuid": "f199cb88-5e69-4b1f-93e0-6f257877d066"

}

},

"instance-filters": {

"instance-filter": [

{

"vserver":

{

"vserver-name": "u2"

}

}

]

}

}

When I looked at my AAI tenants, I don't see the vservers/vserver records created.

May I know at what phase these records would be created?

And in general, it would be really great if there is a sequence diagram on the workflow between different components for a successful deployment of a service and the closed loop use case.

Thanks and regards

Ramu

Alexis de Talhouët

Ramu, there might be a sequence diagram somewhere in this wiki, but I'm not aware of one. The vservers and related objects in AAI are added when you run the heatbridge robot goal, which maps the stack details to your service instance in AAI. I didn't have to edit any mapping for this to work.
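For reference, the named-query body quoted in the question can be built programmatically. This is only a sketch of the payload structure as it appears in the question; the function name is hypothetical, and the query returns nothing useful until heatbridge has created the vserver records.

```python
import json


def build_named_query(named_query_uuid, vserver_name):
    """Build the request body for the AAI named-query shown in the question."""
    return {
        "query-parameters": {
            "named-query": {"named-query-uuid": named_query_uuid}
        },
        "instance-filters": {
            "instance-filter": [
                {"vserver": {"vserver-name": vserver_name}}
            ]
        },
    }


body = build_named_query("f199cb88-5e69-4b1f-93e0-6f257877d066", "u2")
print(json.dumps(body, indent=2))
```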

RAMANJANEYUL AKULA

Thanks Alexis for clarifying it and the hints.

Now the heatbridge is working for me.

In some of the AAI logs I noticed that it was not able to resolve the host mr.api.simpledemo.openecomp.org.

On DNS server I have un-commented the line

;mr.api.simpledemo.openecomp.org. IN CNAME vm1.mr.simpledemo.openecomp.org.

So that the DNS query can go through.

I have deleted the customer and recreated and deployed the Service again and ran the heatbridge case.

With this change I'm able to successfully create the heatbridge.

warm regards, Ramu

Eddy Hautot

In step 5, Create a new VSP, I do not see anywhere to import the zip. Is that normal?

Thanks,

Eddy

Alexis de Talhouët

No, not normal. This is weird. Can you refresh and/or completely bounce the portal app?

Vijayalakshmi H

Hi Alexis,

I have followed the steps mentioned here to deploy vFW. Continuing with Run Demo VNF and DCAE deployment on the OOM Amsterdam release, I am facing two issues. I have tried rebuilding the pod and the things around it, but somehow it is not helping.

Please find the onap-parameters.yaml attached.

The two issues are:

a- When deploying the services, the error below is seen, indicating Model-Version not found.

b- With respect to Service Distribution:

The artifacts are distributed to SO only (17 artifacts are distributed). It looks like no artifacts are distributed to A&AI. Any pointers on why this could be happening, and any recommendations, would be helpful.

Thanks

Vijaya

Alexis de Talhouët

The AAI model-loader has probably failed. Please bounce the pod and try again; it should fix your issue.
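"Bouncing" a pod on Kubernetes usually means deleting it so that its deployment recreates it. The Python sketch below picks pod names out of `kubectl get pods` text output and builds the matching delete command. The namespace and the "model-loader" name fragment are assumptions; check the real names in your own deployment.

```python
def pods_matching(get_pods_output, name_fragment):
    """Pick pod names containing name_fragment from `kubectl get pods` text output."""
    names = []
    for line in get_pods_output.splitlines()[1:]:  # skip the header row
        columns = line.split()
        if columns and name_fragment in columns[0]:
            names.append(columns[0])
    return names


def delete_pod_command(namespace, pod_name):
    """Return the argv list that deletes one pod; Kubernetes then recreates it."""
    return ["kubectl", "--namespace", namespace, "delete", "pod", pod_name]
```

Feed `pods_matching` the output of `kubectl --namespace <ns> get pods`, then run the returned command for each match, for example with `subprocess.run`.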

pranjal sharma

Hi Alexis,

I have tried to install ONAP using Rancher on Kubernetes with the Amsterdam release.

All the pods are in Running state.

However, when I run ./ete-k8s.sh health, the health checks fail for the asdc, appc and usecaseui-gui APIs with 500, 400 and 502 error codes respectively.

Basic ASDC Health Check | FAIL |

500 != 200

------------------------------------------------------------------------------

Basic APPC Health Check | FAIL |

400 != 200

------------------------------------------------------------------------------

usecaseui-gui API Health Check | FAIL |

502 != 200

------------------------------------------------------------------------------

Because of that, the SDC application is not coming up in the VNC portal.

The output of the demo script is as follows:

root@cadmin:~/oom/kubernetes/robot# ./demo-k8s.sh init_robot

WEB Site Password for user 'test': Starting Xvfb on display :89 with res 1280x1024x24

Executing robot tests at log level TRACE

==============================================================================

OpenECOMP ETE

==============================================================================

OpenECOMP ETE.Robot

==============================================================================

OpenECOMP ETE.Robot.Testsuites

==============================================================================

OpenECOMP ETE.Robot.Testsuites.Update Onap Page :: Initializes ONAP Test We...

==============================================================================

Update ONAP Page | FAIL |

ConnectionError: HTTPConnectionPool(host='controller', port=8774): Max retries exceeded with url: /v2.1/servers/detail (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x7fa7c7251590>: Failed to establish a new connection: [Errno -2] Name or service not known',))

------------------------------------------------------------------------------

OpenECOMP ETE.Robot.Testsuites.Update Onap Page :: Initializes ONA... | FAIL |

1 critical test, 0 passed, 1 failed

1 test total, 0 passed, 1 failed

==============================================================================

OpenECOMP ETE.Robot.Testsuites | FAIL |

1 critical test, 0 passed, 1 failed

1 test total, 0 passed, 1 failed

==============================================================================

OpenECOMP ETE.Robot | FAIL |

1 critical test, 0 passed, 1 failed

1 test total, 0 passed, 1 failed

==============================================================================

OpenECOMP ETE | FAIL |

1 critical test, 0 passed, 1 failed

1 test total, 0 passed, 1 failed

==============================================================================

Output: /share/logs/demo/UpdateWebPage/output.xml

Log: /share/logs/demo/UpdateWebPage/log.html

Report: /share/logs/demo/UpdateWebPage/report.html

please let me know the workaround for this problem

Alexis de Talhouët

You should not proceed further if the health check is failing. Try to re-deploy SDC and APP-C; it might be a transient timing issue.
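To see at a glance which components block you, the failing rows can be pulled out of the health check output. This is a small Python sketch that assumes the "name | FAIL |" row layout shown in the output above; suite summary rows (those starting with "OpenECOMP ETE") are skipped.

```python
import re

# One result row looks like: "Basic ASDC Health Check | FAIL |"
_RESULT = re.compile(r"^(?P<name>.+?)\s*\|\s*(?P<status>PASS|FAIL)\s*\|\s*$")


def failed_checks(robot_output):
    """Return the names of individual checks reported as FAIL."""
    failures = []
    for line in robot_output.splitlines():
        match = _RESULT.match(line.strip())
        if not match or match.group("status") != "FAIL":
            continue
        # Suite summary rows repeat the failure; keep only individual checks.
        if match.group("name").startswith("OpenECOMP ETE"):
            continue
        failures.append(match.group("name"))
    return failures
```

Pipe the output of ./ete-k8s.sh health into a file and pass its contents to `failed_checks`; an empty list means no individual check was reported as failing.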

Suraj Kant Singh

Try ./demo-k8s.sh init instead of ./demo-k8s.sh init_robot

Alexis de Talhouët

init is meant to create the Demonstration customer in AAI and to create the CloudRegion, whereas init_robot lets you configure HTTP access to the Robot log server. These are two very different things.

pranjal sharma

OK, but what do we do about the ./ete-k8s.sh health script?

Is there any solution for this problem?

Thanks

Brian Freeman

There are race conditions in the OOM startup. Try stopping the A&AI docker containers (Kubernetes will restart them), wait for them to come fully up, and then do a re-distribute from the SDC portal as op0001. Click on monitor and refresh as necessary to confirm that A&AI and SO have both picked up the models. If SDNC is not picking up models, then you should probably stop the sdnc:ueb_listener container as well, for the same reason. What appears to be happening is that SDC isn't up when the clients come up, so the clients don't get their DMaaP keys (or perhaps there is some other problem in the DMaaP subscription).

pranjal sharma

The vfw_sinc_vnf, vfw_pg_vnf and vsinc VMs have been created in the OpenStack dashboard.

There are two things I want to ask:

I don't see the vsinc module in the VID portal, since it showed the MSO maximum poll reached error.

And I am not able to log in to the VM; the key pair these VMs took is the public one. Please let me know how to log in.

Thanks

Pranjal

pranjal sharma

Hello All,

fyi Alexis de Talhouët Vidhu Shekhar Pandey

I got an error message while creating the service instance in step 10.e.

Also, there is no vFW option under Service type, so I chose vFWCL. Not sure whether that could be an issue.

The following health checks failed:

root@cadmin:~/oom/kubernetes/robot# ./ete-k8s.sh health | grep FAIL

Basic DCAE Health Check | FAIL |

usecaseui-gui API Health Check | FAIL |

OpenECOMP ETE.Robot.Testsuites.Health-Check :: Testing ecomp compo... | FAIL |

OpenECOMP ETE.Robot.Testsuites | FAIL |

OpenECOMP ETE.Robot | FAIL |

OpenECOMP ETE | FAIL |

Please advise.

Alexis de Talhouët

Hi, this is exactly the issue that Brian explained in the comment above. Please bounce the AAI model-loader. It should all be fixed after that.

Pedro Barros

hi Alexis de Talhouët,

I have exactly the same error, and I restarted model-loader a few times. Any idea?

Thanks

Razanne Abu-Aisheh

Hi All,

When we get to point 11 g, it fails with an MSO error (Maximum number of poll attempts exceeded). The MSO logs say: "missing or invalid properties file: /etc/mso/config.d/mso.vfc.properties". We tried to look up the file, but it doesn't seem to exist anywhere.

We would appreciate any help with this.

Thank you in advance...

Alexis de Talhouët

Hi, I don't know about this one... this is strange, though, I never faced this. Have you modified the mso-docker.json file before starting SO?

Razanne Abu-Aisheh

Hi, we managed to find it here: https://gerrit.onap.org/r/#/c/28747/1/templates/default/mso-po-adapter-config/mso.vfc.properties, and the heat stack is now created fine. Another question: do we need DCAE to get any graphs at all, or is it only needed for the closed loop graphs?

Thanks in advance...

deity990

Hello, I'm trying to run the vFW demo.

But I can't mount to APPC.

http://{ip_address}:30230/restconf/operational/network-topology:network-topology/topology/topology-netconf/node/736249ee-ce93-402e-9be4-bef3a1ea3e88

<node xmlns="urn:TBD:params:xml:ns:yang:network-topology">

<node-id>736249ee-ce93-402e-9be4-bef3a1ea3e88</node-id>

<host xmlns="urn:opendaylight:netconf-node-topology">1.2.1.1</host> → ip address of vFW_PG

<connection-status xmlns="urn:opendaylight:netconf-node-topology">connecting</connection-status>

<port xmlns="urn:opendaylight:netconf-node-topology">2831</port>

</node>

<errors xmlns="urn:ietf:params:xml:ns:yang:ietf-restconf">

<error>

<error-type>protocol</error-type>

<error-tag>data-missing</error-tag>

<error-message>Mount point does not exist.</error-message>

</error>

</errors>

How can I fix the problem..?

Alexis de Talhouët

Hi, can you make sure APP-C is able to reach the IP of the PG you're trying to mount? Is the port open? Check the PG state.
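When polling the mount, the field to watch in the RESTCONF answer is connection-status, which reads "connecting" in the response quoted above and should become "connected" once the NETCONF session is established. A minimal Python sketch that extracts it from the XML, assuming the namespaces shown in that response:

```python
import xml.etree.ElementTree as ET

# Namespace taken from the RESTCONF response quoted in the question.
NETCONF_NS = "urn:opendaylight:netconf-node-topology"


def netconf_connection_status(node_xml):
    """Return the connection-status text of a mounted node, or None if absent."""
    root = ET.fromstring(node_xml)
    status = root.find(f"{{{NETCONF_NS}}}connection-status")
    return status.text if status is not None else None
```

If the status stays at "connecting", the APP-C karaf.log is the next place to look, as the rest of this thread shows.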

deity990

Thank you for your response!

1) APP-C is able to reach the PG (ping to the PG is OK) and the port is open.

ubuntu@vfw-pg-vnf:/home$ netstat -t | grep 2831

.... ok .....

2) But there are some exceptions when I execute the appc mount script.

When I execute the robot script "./demo-k8s.sh appc {mymodule_name}", the appc logs are as below.

{appc_container}/var/log/onap/appc/karaf.log

-------------------------------------------------------------------------------------------------------------------------------------------

<log4j:event logger="org.opendaylight.netconf.nettyutil.handler.ssh.client.AsyncSshHandler" timestamp="1519954033349" level="WARN" thread="nioEventLoopGroupCloseable-3-27">

<log4j:message><![CDATA[Unable to setup SSH connection on channel: [id: 0xbf1c294f]]]></log4j:message>

<log4j:throwable><![CDATA[org.apache.sshd.common.SshException: Session is closed

at org.apache.sshd.client.session.ClientUserAuthServiceNew.preClose(ClientUserAuthServiceNew.java:220)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$AbstractCloseable.close(CloseableUtils.java:284)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$AbstractInnerCloseable.doCloseGracefully(CloseableUtils.java:351)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$AbstractCloseable.close(CloseableUtils.java:285)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$ParallelCloseable.doClose(CloseableUtils.java:182)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$SimpleCloseable.close(CloseableUtils.java:151)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$SequentialCloseable$1.operationComplete(CloseableUtils.java:205)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$SequentialCloseable$1.operationComplete(CloseableUtils.java:200)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$SequentialCloseable.doClose(CloseableUtils.java:215)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$SimpleCloseable.close(CloseableUtils.java:151)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$AbstractInnerCloseable.doCloseGracefully(CloseableUtils.java:351)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.util.CloseableUtils$AbstractCloseable.close(CloseableUtils.java:285)[30:org.apache.sshd.core:0.14.0]

at org.opendaylight.netconf.nettyutil.handler.ssh.client.AsyncSshHandler.disconnect(AsyncSshHandler.java:273)[339:org.opendaylight.netconf.netty-util:1.2.1.Carbon]

at org.opendaylight.netconf.nettyutil.handler.ssh.client.AsyncSshHandler.close(AsyncSshHandler.java:240)[339:org.opendaylight.netconf.netty-util:1.2.1.Carbon]

at io.netty.channel.AbstractChannelHandlerContext.invokeClose(AbstractChannelHandlerContext.java:625)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannelHandlerContext.close(AbstractChannelHandlerContext.java:609)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.ChannelOutboundHandlerAdapter.close(ChannelOutboundHandlerAdapter.java:71)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannelHandlerContext.invokeClose(AbstractChannelHandlerContext.java:625)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannelHandlerContext.close(AbstractChannelHandlerContext.java:609)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.ChannelOutboundHandlerAdapter.close(ChannelOutboundHandlerAdapter.java:71)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannelHandlerContext.invokeClose(AbstractChannelHandlerContext.java:625)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannelHandlerContext.close(AbstractChannelHandlerContext.java:609)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannelHandlerContext.close(AbstractChannelHandlerContext.java:466)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.DefaultChannelPipeline.close(DefaultChannelPipeline.java:964)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.AbstractChannel.close(AbstractChannel.java:234)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.ChannelFutureListener$2.operationComplete(ChannelFutureListener.java:56)[139:io.netty.transport:4.1.8.Final]

at io.netty.channel.ChannelFutureListener$2.operationComplete(ChannelFutureListener.java:52)[139:io.netty.transport:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:507)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.notifyListeners0(DefaultPromise.java:500)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:479)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.access$000(DefaultPromise.java:34)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise$1.run(DefaultPromise.java:431)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.AbstractEventExecutor.safeExecute(AbstractEventExecutor.java:163)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.SingleThreadEventExecutor.runAllTasks(SingleThreadEventExecutor.java:403)[138:io.netty.common:4.1.8.Final]

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:445)[139:io.netty.transport:4.1.8.Final]

at io.netty.util.concurrent.SingleThreadEventExecutor$5.run(SingleThreadEventExecutor.java:858)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultThreadFactory$DefaultRunnableDecorator.run(DefaultThreadFactory.java:144)[138:io.netty.common:4.1.8.Final]

at java.lang.Thread.run(Thread.java:748)[:1.8.0_151]

]]></log4j:throwable>

<log4j:properties>

<log4j:data name="bundle.id" value="339"/>

<log4j:data name="bundle.name" value="org.opendaylight.netconf.netty-util"/>

<log4j:data name="bundle.version" value="1.2.1.Carbon"/>

</log4j:properties>

</log4j:event>

<log4j:event logger="io.netty.util.concurrent.DefaultPromise" timestamp="1519954033349" level="WARN" thread="globalEventExecutor-1-4">

<log4j:message><![CDATA[An exception was thrown by org.opendaylight.protocol.framework.ReconnectPromise$2.operationComplete()]]></log4j:message>

<log4j:throwable><![CDATA[java.lang.IllegalStateException: complete already: ReconnectPromise@7830e775(failure: java.util.concurrent.CancellationException)

at io.netty.util.concurrent.DefaultPromise.setFailure(DefaultPromise.java:116)[138:io.netty.common:4.1.8.Final]

at org.opendaylight.protocol.framework.ReconnectPromise$2.operationComplete(ReconnectPromise.java:65)[328:org.opendaylight.controller.protocol-framework:0.9.1.Carbon]

at io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:507)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.notifyListeners0(DefaultPromise.java:500)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:479)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise.access$000(DefaultPromise.java:34)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultPromise$1.run(DefaultPromise.java:431)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.GlobalEventExecutor$TaskRunner.run(GlobalEventExecutor.java:233)[138:io.netty.common:4.1.8.Final]

at io.netty.util.concurrent.DefaultThreadFactory$DefaultRunnableDecorator.run(DefaultThreadFactory.java:144)[138:io.netty.common:4.1.8.Final]

at java.lang.Thread.run(Thread.java:748)[:1.8.0_151]

Caused by: java.util.concurrent.CancellationException

at io.netty.util.concurrent.DefaultPromise.cancel(...)(Unknown Source)[138:io.netty.common:4.1.8.Final]

]]></log4j:throwable>

Alexis de Talhouët

Looks like an issue with the SSH Client vs Java Cipher:

java.security.InvalidAlgorithmParameterException: DH key size must be multiple of 64, and can only range from 512 to 2048 (inclusive). The specific key size 4096 is not supported
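The constraint stated in that exception message can be written down directly. The Python sketch below only restates the rule from the message (multiple of 64, between 512 and 2048 inclusive) for the JDK seen in the stack trace (1.8.0_151); the function name is hypothetical.

```python
def jdk8_dh_key_size_supported(bits):
    """Apply the rule quoted in the exception: multiple of 64, 512..2048 inclusive."""
    return bits % 64 == 0 and 512 <= bits <= 2048


for size in (1024, 2048, 4096):
    print(size, jdk8_dh_key_size_supported(size))
```

So a 4096-bit group requested during the SSH key exchange is rejected by this JDK's default provider, while 2048 bits would be accepted.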

deity990

Right! We have the exceptions you mentioned in the "karaf.log" file.

We found the configuration file "/opt/opendaylight/distribution-karaf-0.6.1-Carbon/etc/org.apache.karaf.shell.cfg", which contains the keySize setting shown below.

But how can I change the default keySize to 2048? Please give more details and things to check. Thank you in advance. ^^

#

# Self defined key size in 1024, 2048, 3072, or 4096

# If not set, this defaults to 4096.

#

# keySize = 4096

Error logs "root@appc-775c4db7db-l4w76:/var/log/onap/appc/karaf.log"

<log4j:event logger="org.apache.sshd.client.session.ClientSessionImpl" timestamp="1519488167181" level="WARN" thread="sshd-SshClient[1afe1a32]-nio2-thread-8">

<log4j:message><![CDATA[Exception caught]]></log4j:message>

<log4j:throwable><![CDATA[java.security.InvalidAlgorithmParameterException: DH key size must be multiple of 64, and can only range from 512 to 2048 (inclusive). The specific key size 4096 is not supported

at com.sun.crypto.provider.DHKeyPairGenerator.initialize(DHKeyPairGenerator.java:128)[sunjce_provider.jar:1.8.0_151]

at java.security.KeyPairGenerator$Delegate.initialize(KeyPairGenerator.java:674)[:1.8.0_151]

at java.security.KeyPairGenerator.initialize(KeyPairGenerator.java:411)[:1.8.0_151]

at org.apache.sshd.common.kex.DH.getE(DH.java:65)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.client.kex.DHGEX.next(DHGEX.java:118)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.session.AbstractSession.doHandleMessage(AbstractSession.java:425)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.session.AbstractSession.handleMessage(AbstractSession.java:326)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.client.session.ClientSessionImpl.handleMessage(ClientSessionImpl.java:306)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.session.AbstractSession.decode(AbstractSession.java:780)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.session.AbstractSession.messageReceived(AbstractSession.java:308)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.AbstractSessionIoHandler.messageReceived(AbstractSessionIoHandler.java:54)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.io.nio2.Nio2Session$1.onCompleted(Nio2Session.java:184)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.io.nio2.Nio2Session$1.onCompleted(Nio2Session.java:170)[30:org.apache.sshd.core:0.14.0]

at org.apache.sshd.common.io.nio2.Nio2CompletionHandler$1.run(Nio2CompletionHandler.java:32)[30:org.apache.sshd.core:0.14.0]

at java.security.AccessController.doPrivileged(Native Method)[:1.8.0_151]

at org.apache.sshd.common.io.nio2.Nio2CompletionHandler.completed(Nio2CompletionHandler.java:30)[30:org.apache.sshd.core:0.14.0]

at sun.nio.ch.Invoker.invokeUnchecked(Invoker.java:126)[:1.8.0_151]

at sun.nio.ch.Invoker$2.run(Invoker.java:218)[:1.8.0_151]

at sun.nio.ch.AsynchronousChannelGroupImpl$1.run(AsynchronousChannelGroupImpl.java:112)[:1.8.0_151]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)[:1.8.0_151]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)[:1.8.0_151]

at java.lang.Thread.run(Thread.java:748)[:1.8.0_151]

]]></log4j:throwable>

Alexis de Talhouët

This is more an issue with the JDK itself, which does not allow such keys. You could use the Bouncy Castle security provider instead of the default JDK one. See https://stackoverflow.com/a/39711275

Sandeep Shah

Hi deity990 and Alexis de Talhouët --

I would very much appreciate it if you could let me know how you fixed this issue (SSH connection refused). It seems I am running into a similar issue.

Thanks!

Praveen Ch

Hi All,

Quick question: while deploying ONAP, we used the Keystone URL OPENSTACK_KEYSTONE_URL: "http://1.1.1.1:35357"

instead of OPENSTACK_KEYSTONE_URL: "http://1.1.1.1:5000".

Will this impact deploying vFW? If so, how can I amend this value to 5000?

Note that we have deployed ONAP and are currently running the vFW demo.

We are currently on steps 11E and 11F.

Kindly advise at the earliest.

Alexis de Talhouët

Shouldn't matter.

Praveen Ch

Thanks Alexis ,

However, we saw a change in behaviour when we changed it from 35357 to 5000.

It might as well be because we removed v2, v3 on our OpenStack database, as suggested by David Perez below.

Not sure which one solved the issue, but we no longer get the Keystone error.

Priyanka Khavatkopp

Hi All,

I am a beginner.

I am not able to find an option in the GUI to delete an SDC component, i.e. a (certified) VSP. Is there an alternative solution, or am I missing something?

Can anyone help?

Bharath Thiruveedula

Priyanka Khavatkopp, in this release there is no option to delete SDC models.

Priyanka Khavatkopp

Hi,

While deploying a VNF with ONAP, following the link below:

https://wiki.onap.org/display/DW/vFWCL+instantiation%252C+testing%252C+and+debuging

we have used keystone url as OPENSTACK_KEYSTONE_URL: "http://1.1.1.1:35357"

instead of OPENSTACK_KEYSTONE_URL: "http://1.1.1.1:5000" .

Note that we have deployed ONAP and are currently running the vFW demo, at steps 11 g and 11 h.

I have read your comment on the k8s forum about changing the URL in MSO and AAI.

I have changed the Keystone URL port number from 35357 to 5000 in the /dockerdata-nfs/onap/mso/mso/mso-docker.json file.

But the MSO failure still persists.

Do I need to change it separately in an AAI file? If yes, please suggest the file path for AAI.

Please refer to the attached error file.

Kindly advise at the earliest.

David Perez Caparros

Hi,

maybe an issue with keystone API version? See section 'How to use both v2 and v3 Openstack Keystone API' in ONAP Installation in Vanilla OpenStack

David

Alexis de Talhouët

In SO you should edit /etc/mso/config.d/cloud_config.json. The file is reloaded every minute.

Use the request below to see its current state.

Basically, at startup, SO processes mso-docker.json using Chef recipes and explodes it into small config files that it puts under a configurable path, which is by default /etc/mso/config.d

Praveen Ch

Thanks Alexis ,

We were able to modify the URL.

However, we have the following issue while deploying vFW_SINC during preload:

"requestId": "45c34fb0-d15b-436e-b8c6-9faad04e16ad",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "FAILED",

"requestStatus": "Received vfModuleException from VnfAdapter: category='INTERNAL' message='404 Not Found: The resource could not be found.' rolledBack='true'",

"precentProgress": "100"

"requestId": "45c34fb0-d15b-436e-b8c6-9faad04e16ad",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "IN_PROGRESS",

"requestStatus": undefined,

"precentProgress": "20"

03/01/18 18:48:43 HTTP Status: Accepted (202)

{

"requestReferences": {

"instanceId": "eb7f21d7-3728-4d4d-bc55-835a6b2a30fd",

"requestId": "45c34fb0-d15b-436e-b8c6-9faad04e16ad"

}

}

Alexis de Talhouët

Looks like the tenant information you provided to SO doesn't have the appropriate privileges. Is that possible?

Praveen Ch

Thanks Alexis,

I've just logged in with the same credentials, no issues.

Also, we have a single username/password for this tenant, and it has admin access, as we are able to create VMs just fine using the same credentials.

Praveen Ch

Hi ALL,

How do I solve this health check issue:

usecaseui-gui API Health Check | FAIL |

Alexis de Talhouët

You don't; usecase-ui is working fine, it's a false positive. The URI used for the health check seems wrong to me, but I'm not the expert in the area. See https://lists.onap.org/pipermail/onap-discuss/2018-February/008224.html

Praveen Ch

Thanks Alexis ,

The rest of the components PASS.

So I believe we have some other issues.

pranjal sharma

The vfw_sinc_vnf, vfw_pg_vnf and vsinc VMs have been created in the OpenStack dashboard.

There are two things I want to ask:

I don't see the vsinc module in the VID portal, since it showed the MSO maximum poll reached error.

And I am not able to log in to any of the VMs; the key pair these VMs took is the public one. Please let me know how to log in.

Thanks

Pranjal

Praveen Ch

Hi All,

We keep failing at the last step, deploying the VNF.

We get the error below:

"requestId": "e0002ab6-2221-4c6f-9b09-2a4f4ef31540",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "FAILED",

"requestStatus": "Received vfModuleException from VnfAdapter: category='INTERNAL' message='org.openecomp.mso.openstack.exceptions.MsoIOException: Unknown Host: controller' rolledBack='true'",

"precentProgress": "100"

"requestId": "e0002ab6-2221-4c6f-9b09-2a4f4ef31540",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "IN_PROGRESS",

"requestStatus": undefined,

"precentProgress": "20"

"requestId": "e0002ab6-2221-4c6f-9b09-2a4f4ef31540",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "IN_PROGRESS",

"requestStatus": undefined,

"precentProgress": "20"

"requestId": "e0002ab6-2221-4c6f-9b09-2a4f4ef31540",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "IN_PROGRESS",

"requestStatus": undefined,

"precentProgress": "20"

03/01/18 19:54:09 HTTP Status: Accepted (202)

{

"requestReferences": {

"instanceId": "44fbc19a-a889-483a-b14a-720f35f01e4e",

"requestId": "e0002ab6-2221-4c6f-9b09-2a4f4ef31540"

}

}

"controller" is the server name of our openstack controller , having said that we made sure we used the IP address for the Keystone URL .

How can I check the VIM configuration used by ONAP?

Praveen Ch

This is my configuration on MSO for VIM :

:/etc/mso/config.d# cat cloud_config.json

{

"cloud_config":

{

"identity_services":

{

"DEFAULT_KEYSTONE":

{

"identity_url": "http://1.1.1.1:5000/v2.0",

"mso_id": "admin",

"mso_pass": "81fd67a842ba6352729017088bb2b8ee",

"admin_tenant": "service",

"member_role": "admin",

"tenant_metadata": true,

"identity_server_type": "KEYSTONE",

"identity_authentication_type": "USERNAME_PASSWORD"

}

},

"cloud_sites":

{

"RegionOne":

{

"region_id": "RegionOne",

"clli": "RegionOne",

"aic_version": "2.5",

"identity_service_id": "DEFAULT_KEYSTONE"

}

}

}

}

ONAP-param.yaml has the following :

OPENSTACK_USERNAME: "admin"

OPENSTACK_PASSWORD: "xxxxx"

OPENSTACK_TENANT_NAME: "admin"

OPENSTACK_TENANT_ID: "96b8f4d527904bbc8c81d876fb962b4b"

OPENSTACK_REGION: "RegionOne"

# Either v2.0 or v3

OPENSTACK_API_VERSION: "v3"

OPENSTACK_KEYSTONE_URL: "http://1.1.1.1:5000"

# Don't change this if you don't know what it is

OPENSTACK_SERVICE_TENANT_NAME: "service"

Any help is greatly appreciated .
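To check which Keystone endpoint SO will use for each cloud site, the cloud_config.json shown above can be read programmatically. This is a minimal Python sketch that assumes exactly the layout posted in this comment; the function name is hypothetical.

```python
import json


def identity_urls_by_site(cloud_config_text):
    """Map each cloud site name to the identity_url of its identity service."""
    config = json.loads(cloud_config_text)["cloud_config"]
    services = config["identity_services"]
    return {
        site_name: services[site["identity_service_id"]]["identity_url"]
        for site_name, site in config["cloud_sites"].items()
    }
```

Pass it the contents of the file read from inside the SO container; comparing the result with the values in onap-parameters.yaml shows which settings actually reached SO.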

Davide Cherubini

Hi Praveen,

The error is 'Unknown Host: controller', so updating the /etc/hosts file in the MSO pod with something like:

1.1.1.1 controller

should resolve the issue.
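A small Python sketch of that fix: it works out whether a hosts file already maps the name and, if not, returns the line to append. The function name is hypothetical, and the address must be your controller's real IP. Note that an entry added by hand inside a pod is lost when the pod is recreated.

```python
def hosts_line_needed(hosts_text, ip_address, hostname):
    """Return the line to append, or None if hostname is already mapped."""
    for line in hosts_text.splitlines():
        # Drop trailing comments, then split into address and names.
        entry = line.split("#", 1)[0].split()
        if len(entry) >= 2 and hostname in entry[1:]:
            return None
    return f"{ip_address} {hostname}"
```

Read /etc/hosts inside the MSO pod, call the function with its contents, and append the returned line only when it is not None.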

Praveen Ch

Hi Davide,

Thanks a lot , that did the trick .

vishwanath jayaraman

I saw a Postman collection being used in the video. It would be very helpful if that collection could be shared or, if it's already shared, a link to download it would be appreciated.

Alexis de Talhouët

I have a Postman collection, but I cannot share it. It contains too much sensitive data regarding the use cases we've implemented internally. If I get a chance to do some triage, I'll upload it, but don't count on this anytime soon.

Suraj Kant Singh

Are we missing some steps?

Please note that we have not followed https://wiki.onap.org/display/DW/Pre-Onboarding or https://wiki.onap.org/display/DW/running+vFW+Demo+on+ONAP+Amsterdam+Release to create tenants/customer/owner/region etc., nor have we changed the .zip files.

Rahul Sharma

Suraj Kant Singh, Hello,

I am a bit surprised that even though 2.a. shows no artifacts were distributed, you still have a service distributed in VID that you can deploy in step 2.b. - which basically means that VID was able to retrieve the artifacts from AAI - even though AAI never received them in step 2.a.

This most likely happens if you used Robot to generate the artifacts and distribute them. You must have executed demo init, which would have created the needed artifacts in AAI, including Customer/region etc. However, even that failed to create artifacts in SO, as the message in screenshot 2.b. suggests.

I faced something similar (as in your point 2.a. above): my service was distributed but none of the components received it!

After some analysis, I figured out that AAI-Model Loader (one of the µServices in AAI that receives the artifact) was having trouble getting notifications from SDC (via DMaaP). I got similar exceptions in the SO logs.

My educated guess is that the SDC UEBClusters somehow take longer to come up than the time-outs defined in MSO and AAI. So restarting the AAI-ML and then the MSO pods, so that the SDC-UEBServers actually get registered in them, did the trick for me.

You can do a quick check by looking at the logs in AAI (AAI-ML logs) and MSO (ASDC-Controller logs under /var/log/ecomp/MSO/). See if they are both complaining about being unable to communicate with the UEBClusters.
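A rough way to do that check is to filter the log lines for warnings or errors that mention UEB. The sketch below is only an illustration: the function name `ueb_complaints`, the "UEB" marker and the level strings are assumptions, so adjust them to whatever the actual AAI-ML and ASDC-Controller logs contain.

```python
def ueb_complaints(log_lines, marker="UEB", levels=("ERROR", "WARN")):
    """Return log lines that mention `marker` at one of the given levels."""
    hits = []
    for line in log_lines:
        if marker in line and any(level in line for level in levels):
            hits.append(line.rstrip("\n"))
    return hits

sample = [
    "2018-03-01 10:00:00|INFO|Startup complete",
    "2018-03-01 10:00:05|ERROR|Unable to reach UEB cluster",
    "2018-03-01 10:00:06|ERROR|Unrelated failure",
]
for hit in ueb_complaints(sample):
    print(hit)
```

To use it on a real log, pass an open file object (files iterate line by line), for example one of the files under /var/log/ecomp/MSO/.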

deity990

We are trying to run the vFWCL demo to understand the ONAP closed loop, but we can't update the policy.

The APPC mount succeeded after changing the Honeycomb configuration of the vPG. (https://docs.fd.io/hc2vpp/1.17.04-SNAPSHOT/release-notes-aggregator/user_honeycomb_and_ODL.html)

However, we have some problems with this demonstration.

Please let us know what to check to address this issue, in as much detail as possible. Thanks in advance.

1) We can't get the policies after running "update-vfw-op-policy.sh".

2) We can't find the "dcae-sch" row when distributing the service in step 9. The service distribution status is below.

3) We see some exceptions in the policy drools container. (policy@drools-2762157365-742s9:/var/log/onap/error.log)

[2018-03-05 03:31:26,661|WARN|CambriaConsumerImpl|UEB-source-APPC-LCM-WRITE] Topic not found: /events/APPC-LCM-WRITE/d1817e7c-d47b-44fc-af42-e98cc99304a6/0?timeout=15000&limit=100

[2018-03-05 03:31:36,604|ERROR|InlineBusTopicSink|UEB-source-PDPD-CONFIGURATION] SingleThreadedUebTopicSource [getTopicCommInfrastructure()=UEB, toString()=SingleThreadedBusTopicSource [consumerGroup=fc3db8a4-5b66-4673-935f-67678bb3215a, consumerInstance=0, fetchTimeout=15000, fetchLimit=100, consumer=CambriaConsumerWrapper [fetchTimeout=15000], alive=true, locked=false, uebThread=Thread[UEB-source-PDPD-CONFIGURATION,5,main], topicListeners=0, toString()=BusTopicBase [apiKey=, apiSecret=, useHttps=false, allowSelfSignedCerts=false, toString()=TopicBase [servers=[dmaap.onap-message-router], topic=PDPD-CONFIGURATION, #recentEvents=0, locked=false, #topicListeners=1]]]]: cannot fetch because of

java.net.SocketTimeoutException: Read timed out

at java.net.SocketInputStream.socketRead0(Native Method)

at java.net.SocketInputStream.socketRead(SocketInputStream.java:116)

at java.net.SocketInputStream.read(SocketInputStream.java:171)

at java.net.SocketInputStream.read(SocketInputStream.java:141)

at org.apache.http.impl.io.SessionInputBufferImpl.streamRead(SessionInputBufferImpl.java:139)

at org.apache.http.impl.io.SessionInputBufferImpl.fillBuffer(SessionInputBufferImpl.java:155)

at org.apache.http.impl.io.SessionInputBufferImpl.readLine(SessionInputBufferImpl.java:284)

at org.apache.http.impl.conn.DefaultHttpResponseParser.parseHead(DefaultHttpResponseParser.java:140)

at org.apache.http.impl.conn.DefaultHttpResponseParser.parseHead(DefaultHttpResponseParser.java:57)

at org.apache.http.impl.io.AbstractMessageParser.parse(AbstractMessageParser.java:261)

at org.apache.http.impl.DefaultBHttpClientConnection.receiveResponseHeader(DefaultBHttpClientConnection.java:165)

at org.apache.http.impl.conn.CPoolProxy.receiveResponseHeader(CPoolProxy.java:167)

at org.apache.http.protocol.HttpRequestExecutor.doReceiveResponse(HttpRequestExecutor.java:272)

at org.apache.http.protocol.HttpRequestExecutor.execute(HttpRequestExecutor.java:124)

at org.apache.http.impl.execchain.MainClientExec.execute(MainClientExec.java:271)

at org.apache.http.impl.execchain.ProtocolExec.execute(ProtocolExec.java:184)

at org.apache.http.impl.execchain.RetryExec.execute(RetryExec.java:88)

at org.apache.http.impl.execchain.RedirectExec.execute(RedirectExec.java:110)

at org.apache.http.impl.client.InternalHttpClient.doExecute(InternalHttpClient.java:184)

at org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:82)

at com.att.nsa.apiClient.http.HttpClient.runCall(HttpClient.java:622)

at com.att.nsa.apiClient.http.HttpClient.get(HttpClient.java:380)

at com.att.nsa.apiClient.http.HttpClient.get(HttpClient.java:364)

at com.att.nsa.cambria.client.impl.CambriaConsumerImpl.fetch(CambriaConsumerImpl.java:87)

at com.att.nsa.cambria.client.impl.CambriaConsumerImpl.fetch(CambriaConsumerImpl.java:64)

at org.onap.policy.drools.event.comm.bus.internal.BusConsumer$CambriaConsumerWrapper.fetch(BusConsumer.java:140)

at org.onap.policy.drools.event.comm.bus.internal.SingleThreadedBusTopicSource.run(SingleThreadedBusTopicSource.java:245)

at java.lang.Thread.run(Thread.java:748)

[2018-03-05 03:31:36,604|WARN|HostSelector|UEB-source-PDPD-CONFIGURATION] All hosts were blacklisted; reverting to full set of hosts.

[2018-03-05 03:31:38,422|ERROR|InlineBusTopicSink|UEB-source-APPC-CL] SingleThreadedUebTopicSource [getTopicCommInfrastructure()=UEB, toString()=SingleThreadedBusTopicSource [consumerGroup=45a1644a-6588-4526-9d95-96885bc41a81, consumerInstance=0, fetchTimeout=15000, fetchLimit=100, consumer=CambriaConsumerWrapper [fetchTimeout=15000], alive=true, locked=false, uebThread=Thread[UEB-source-APPC-CL,5,main], topicListeners=0, toString()=BusTopicBase [apiKey=, apiSecret=, useHttps=false, allowSelfSignedCerts=false, toString()=TopicBase [servers=[dmaap.onap-message-router], topic=APPC-CL, #recentEvents=0, locked=false, #topicListeners=1]]]]: cannot fetch because of

java.net.SocketTimeoutException: Read timed out

at java.net.SocketInputStream.socketRead0(Native Method)

at java.net.SocketInputStream.socketRead(SocketInputStream.java:116)

at java.net.SocketInputStream.read(SocketInputStream.java:171)

at java.net.SocketInputStream.read(SocketInputStream.java:141)

at org.apache.http.impl.io.SessionInputBufferImpl.streamRead(SessionInputBufferImpl.java:139)

at org.apache.http.impl.io.SessionInputBufferImpl.fillBuffer(SessionInputBufferImpl.java:155)

at org.apache.http.impl.io.SessionInputBufferImpl.readLine(SessionInputBufferImpl.java:284)

at org.apache.http.impl.conn.DefaultHttpResponseParser.parseHead(DefaultHttpResponseParser.java:140)

at org.apache.http.impl.conn.DefaultHttpResponseParser.parseHead(DefaultHttpResponseParser.java:57)

at org.apache.http.impl.io.AbstractMessageParser.parse(AbstractMessageParser.java:261)

at org.apache.http.impl.DefaultBHttpClientConnection.receiveResponseHeader(DefaultBHttpClientConnection.java:165)

at org.apache.http.impl.conn.CPoolProxy.receiveResponseHeader(CPoolProxy.java:167)

at org.apache.http.protocol.HttpRequestExecutor.doReceiveResponse(HttpRequestExecutor.java:272)

at org.apache.http.protocol.HttpRequestExecutor.execute(HttpRequestExecutor.java:124)

at org.apache.http.impl.execchain.MainClientExec.execute(MainClientExec.java:271)

at org.apache.http.impl.execchain.ProtocolExec.execute(ProtocolExec.java:184)

at org.apache.http.impl.execchain.RetryExec.execute(RetryExec.java:88)

at org.apache.http.impl.execchain.RedirectExec.execute(RedirectExec.java:110)

at org.apache.http.impl.client.InternalHttpClient.doExecute(InternalHttpClient.java:184)

deity990

Is there anything we can check to resolve this issue? Any comments would be appreciated!

Syed Atif Husain

Rahul Sharma, how can we have multiple users log in simultaneously to the ONAP portal?

If I log in as the designer, the demo user (or any other user) gets logged off.

Is it possible for multiple users to log in simultaneously to the same ONAP portal in separate sessions?

Rahul Sharma

Syed Atif Husain: Sorry for being super late.

I just tried this by opening another tab in the same Mozilla Firefox browser. One tab was logged in as the designer and the other as the demo user; I was able to work in both simultaneously.

dhebeha mj

Hi,

I am not able to add a VNF after creating the service instance. The product family shows "undefined". I have attached the screenshot.

Vijayalakshmi H

Hi Alexis and Brian,

Release: Amsterdam without DCAE.

Issue: MSO authentication error while creating the VNF module.

I see that the mso_pass for the mso_id is not encrypted correctly.

Verified using http://172.16.20.40:30223/networks/rest/cloud/encryptPassword/<tenant_password>

Solution: Generated the correctly encrypted password using the above link and replaced the "mso_pass" in mso_docker.json under /dockerdata_nfs/onap/mso/

Once this was done and the MSO pod was bounced, the MSO auth error was resolved.

Can you check whether this was a transient problem on MSO or whether something needs to be changed in MSO?

Thanks

Vijaya
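For anyone repeating this step, the verification URL above can be assembled with a small helper. This is a sketch only: `encrypt_password_url` is a hypothetical name, the host and port are the ones from this particular deployment, and percent-encoding the password is an assumption about what the endpoint accepts for special characters.

```python
from urllib.parse import quote

def encrypt_password_url(host, port, password):
    """Build the encryptPassword URL for a given MSO host, port and password."""
    # Percent-encode the password so characters like '/' or '@' do not
    # break the URL path (assumed to be acceptable to the endpoint).
    encoded = quote(password, safe="")
    return f"http://{host}:{port}/networks/rest/cloud/encryptPassword/{encoded}"

print(encrypt_password_url("172.16.20.40", 30223, "secret"))
```

Opening the printed URL in a browser (or fetching it with any HTTP client) should return the encrypted value to paste into mso_pass.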

Suraj Kant Singh

Hi,

I was also running without DCAE and got stuck at the same point. Vijaya, were you getting the same error? I assume so, because after applying your solution my ONAP seems able to communicate with OpenStack. I am now getting a different error, given below.

MSO failure - see log below for details

"requestId": "cb6d27c7-535a-4a17-879d-0f0a518354db",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "FAILED",

"requestStatus": "Received vfModuleException from VnfAdapter: category='INTERNAL' message='Exception during create VF 500 Internal Server Error: The server has either erred or is incapable of performing the requested operation., error.type=AuthorizationFailure, error.message=Authorization failed.' rolledBack='true'",

"precentProgress": "100"

After changing the mso_pass, I now get the following error.

MSO failure - see log below for details

"requestId": "fa9c7331-175c-4d6e-b7a1-4d6b877f0e86",

"requestType": "createInstance",

"timestamp": undefined,

"requestState": "FAILED",