1.1 (R1)

Dependencies

https://docs.openstack.org/designate/pike/index.html

TBD

https://onap.readthedocs.io/en/latest/submodules/dcaegen2.git/docs/sections/blueprints/DockerHost.html

https://onap.readthedocs.io/en/latest/submodules/dcaegen2.git/docs/sections/blueprints/deploymenthandler.html

https://onap.readthedocs.io/en/latest/submodules/dcaegen2.git/docs/sections/blueprints/centos_vm.html

https://onap.readthedocs.io/en/latest/submodules/dcaegen2.git/docs/sections/blueprints/ves.html

http://onap.readthedocs.io/en/latest/submodules/dcaegen2.git/docs/sections/installation.html

1.0.0 (Feb 2017)

The Data Collection, Analytics, and Events (DCAE) subsystem, in conjunction with other ONAP components, gathers performance, usage, and configuration data from the managed environment. This data is then fed to various analytic applications, and if anomalies or significant events are detected, the results trigger appropriate actions, for example publishing to other ONAP components such as Policy, MSO, or Controllers.

The primary functions of the DCAE subsystem are to

- Collect, ingest, transform and store data as necessary for analysis

- Provide a framework for development of analytics

These functions enable closed-loop responses by various ONAP components to events or other conditions in the network.

DCAE provides the ability to detect anomalous conditions in the network. Such conditions might be, for example, fault conditions that need healing or capacity conditions that require resource scaling. DCAE gathers performance, usage, and configuration data about the managed environment, such as data about virtual network functions and their underlying infrastructure. This data is then distributed to various analytic micro-services, and if anomalies or significant events are detected, the results trigger appropriate actions. The micro-services might also persist the data (or some transformations of the data) in the storage lake. In addition to supporting closed-loop control, DCAE also makes the data and events available for higher-level correlation by business and operations activities, including business support systems (BSS) and operational support systems (OSS).

Usage and other event processing applications can be created in the DCAE environment. In addition to real-time processing of events, these applications can perform mediation of the usage and other events to external BSSs or OSSs. For example, events about bill-impacting configuration changes or consumption of any new product or service can be subscribed to by external BSS applications for various purposes such as rating, balance management and charge calculations.

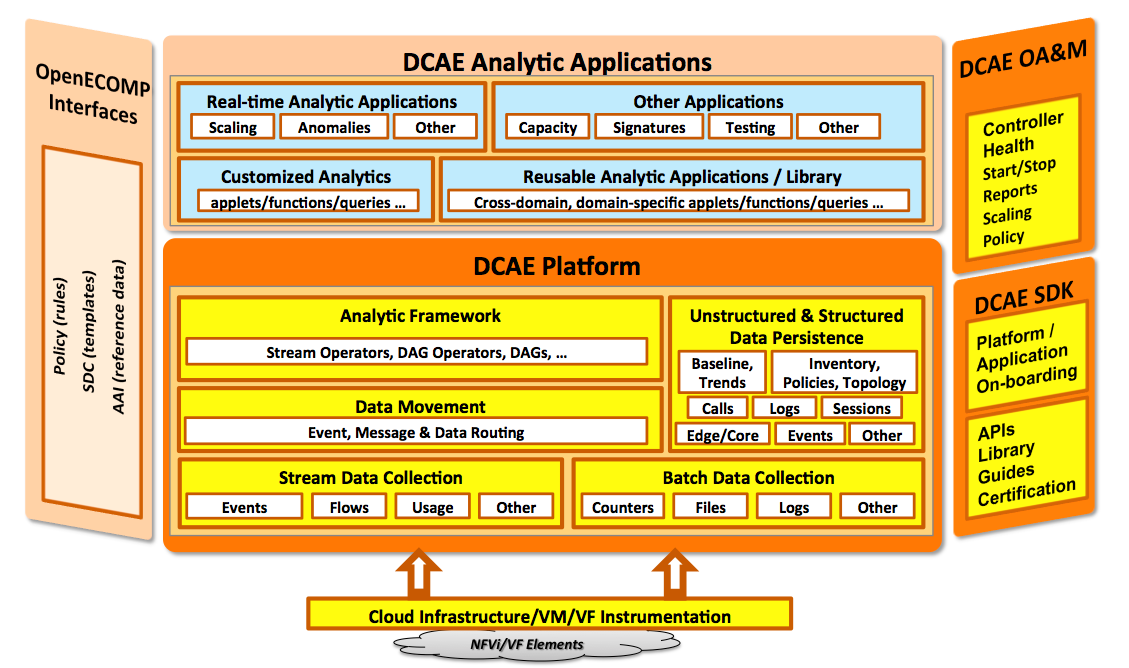

The following figure provides a functional view of the DCAE Platform architecture.

Figure 1. DCAE Platform high-level architecture

DCAE Platform Components

The DCAE Platform consists of several functional components: Collection Framework, Data Movement, Storage Lakes, Analytic Framework, and Analytic Applications.

In large scale deployments, DCAE components are generally distributed in multiple sites that are organized hierarchically. For example, to provide DCAE function for a large scale ONAP system that covers multiple sites spanning across a large geographical area, there will be edge DCAE sites, central DCAE sites, and so on. Edge sites are physically close to the network functions under collection, for reasons such as processing latency, data transport, and security, but often have limited computing and communications resources. On the other hand, central sites generally have more processing capacity and better connectivity to the rest of the ONAP system. This hierarchical organization offers better flexibility, performance, resilience, and security.

Collection Framework

The collection layer provides the various data collectors that are needed to collect the instrumentation that is available from the cloud infrastructure. Included are both physical and virtual elements. For example, collection of the following types of data is supported:

- events data for monitoring the health of the managed environment

- data to compute the key performance and capacity indicators necessary for elastic management of the resources

- granular data needed for detecting network and service conditions (such as flow, session and call records)

The collection layer supports both real-time streaming and batch collection.

Data Movement

This component (known as DMaaP) facilitates the movement of messages and data between various publishers and interested subscribers that may reside at different sites. While a key component within DCAE, this is also the component that enables data movement between various ONAP components.

Edge and Central Lake

DCAE supports a variety of applications and use cases. These range from real-time applications that have stringent latency requirements to other analytic applications that have a need to process a range of unstructured and structured data. The DCAE storage lake supports these needs and is scalable so that new storage technologies can be incorporated as they become available. The storage lake uses big-data storage technologies such as in-memory repositories and support for raw, structured, unstructured and semi-structured data to accommodate a broad scope of requirements such as large volume, velocity, and variety.

While there may be detailed data retained at the DCAE edge layer for analysis and trouble-shooting, applications should optimize the use of bandwidth and storage resources by propagating only the required data (for example, reduced, transformed, or aggregated) to the core data lake for other analyses.
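As a rough illustration of propagating only reduced data from an edge site, the sketch below collapses detailed per-sample records into one summary per source before anything is forwarded to the core lake. The record fields (`source`, `latency_ms`) and the function name `aggregate_for_core` are hypothetical and chosen only for this example; they are not part of any DCAE interface.

```python
from collections import defaultdict
from statistics import mean


def aggregate_for_core(edge_records):
    """Reduce detailed edge records to one summary record per source.

    The field names used here are illustrative assumptions, not a DCAE schema.
    """
    by_source = defaultdict(list)
    for record in edge_records:
        by_source[record["source"]].append(record["latency_ms"])

    # One small summary per source is what would travel to the core lake;
    # the detailed samples stay at the edge for troubleshooting.
    return [
        {
            "source": source,
            "samples": len(values),
            "mean_latency_ms": mean(values),
            "max_latency_ms": max(values),
        }
        for source, values in sorted(by_source.items())
    ]


edge_records = [
    {"source": "vnf-a", "latency_ms": 10},
    {"source": "vnf-a", "latency_ms": 30},
    {"source": "vnf-b", "latency_ms": 5},
]
summaries = aggregate_for_core(edge_records)
for summary in summaries:
    print(summary)
```

Three detailed records become two summary records here; in a real deployment the reduction ratio, and which statistics are worth keeping, would depend on the analyses that run at the core.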

Analytic Framework

Analytics and related applications run in the Analytic Framework of DCAE. The Analytic Framework enables agile development of analytic applications. This framework supports creation of applications that process data from multiple streams and sources. Applications can be real-time – for example, analytics, anomaly detection, capacity monitoring, congestion monitoring, or alarm correlation – or non-real time, such as applications that perform analytics on previously collected data or forward synthesized, aggregated or transformed data to big data stores and other applications. The framework can process both real-time streams of data and data collected through traditional batch methods. Analytic applications are managed by the DCAE controller.

Analytic Applications

The following list provides examples of the types of applications that can be built on top of DCAE:

Analytics: These will be the most common applications that are processing collected data and deriving interesting metrics or analytics for use by other applications. These analytics applications range from very simple ones (from a single source of data) that compute usage, utilization, latency, and similar metrics to very complex ones that detect specific conditions based on data collected from various sources. The analytics could be capacity indicators used to adjust resources or could be performance indicators pointing to anomalous conditions requiring response.

Fault / event correlation: This is a key application type that processes events and thresholds published by managed resources or other applications that detect specific conditions. Based on defined rules, policies, known signatures and other knowledge about the network or service behavior, an application of this kind would determine root cause for various conditions and notify other interested applications.

Performance surveillance and visualization: This class of application provides a window to an operations organization, notifying it of network and service conditions. The notifications could include outages and impacted services or customers based on various dimensions of interest. They provide visual aids ranging from geographic dashboards to virtual information model browsers to detailed drilldown to specific service or customer impacts.

Capacity planning: This class of application provides planners and engineers the ability to adjust forecasts based on observed demands as well as plan specific capacity augments at various levels, e.g., NFVI level (technical plant, racks, clusters, etc.), Network level (bandwidth, circuits, etc.), Service or Customer levels.

Testing and troubleshooting: This class of application provides operations the tools to test & trouble-shoot specific conditions. They could range from simple health checks for testing purposes, to complex service emulations orchestrated for troubleshooting purposes. In both cases, DCAE provides the ability to collect the results of health checks and tests that are conducted. These checks and tests could be done on an ongoing basis, scheduled or conducted on demand.

Security: Some components of the infrastructure may expose new targets for security threats. Orchestration and control, decoupled hardware and software, and commodity hardware may be more susceptible to attack than proprietary hardware. However, SDN and virtual networks also offer an opportunity for collecting a rich set of data for security analytics applications to detect anomalies that signal a security threat, such as DDoS attack, and automatically trigger mitigating action.

Other: The applications listed here are by no means exhaustive and the open architecture of DCAE lends itself to integration of additional application capabilities over time.

DCAE System Flows

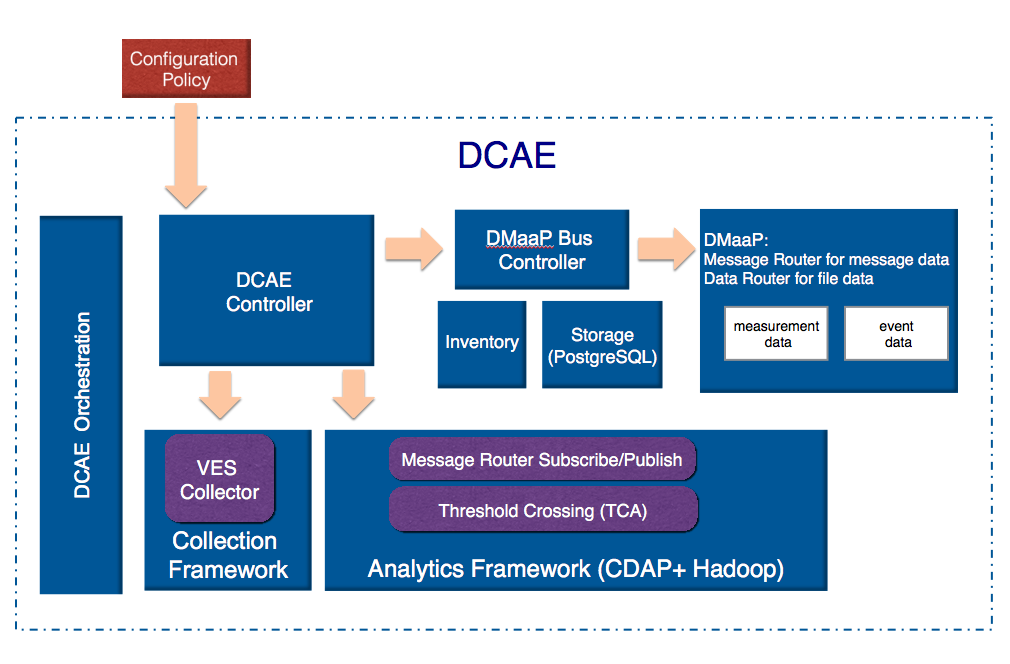

The following figures show the implemented system architecture and flows for the first release of ONAP. DCAE for this release is "minimalistic" in the sense that it is a single DCAE site with all DCAE functions.

Figure 2 shows the DCAE configuration flow. The flow proceeds as follows:

- The DCAE Controller is instantiated from an ONAP Heat template.

- The DCAE Controller instantiates the rest of the DCAE components, including both infrastructure and service/application components.

- The DCAE Controller configures service/application components with static configurations, configuration policies fetched at run-time (for example data processing configurations or alert configurations), and any DMaaP topics required for communication.

Figure 2. DCAE configuration flow (Control plane)
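The last step of the configuration flow can be pictured as assembling one effective configuration per component from three inputs: static configuration, configuration policies fetched at run-time, and the DMaaP topics the component needs. The sketch below is only a mental model. The function name, the key names, and in particular the rule that run-time policy values override static values are assumptions made for this example; the page does not specify how the DCAE Controller resolves conflicts.

```python
def build_component_config(static_config, policy_config, topics):
    """Combine the three configuration inputs into one dictionary.

    Assumption for this sketch: values from run-time policies take
    precedence over static values with the same key.
    """
    config = dict(static_config)      # start from the static configuration
    config.update(policy_config)      # overlay run-time policy values (assumed precedence)
    config["topics"] = dict(topics)   # attach the topics used for communication
    return config


static_config = {"log_level": "INFO", "cpu_alert_percent": 80}
policy_config = {"cpu_alert_percent": 90}  # e.g. an alert configuration policy
topics = {"subscribe": "measurement data", "publish": "event data"}

effective = build_component_config(static_config, policy_config, topics)
print(effective)
```

Keeping the merge in one pure function makes it easy to re-run when a policy update arrives, which is one plausible way to model configuration changes after deployment.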

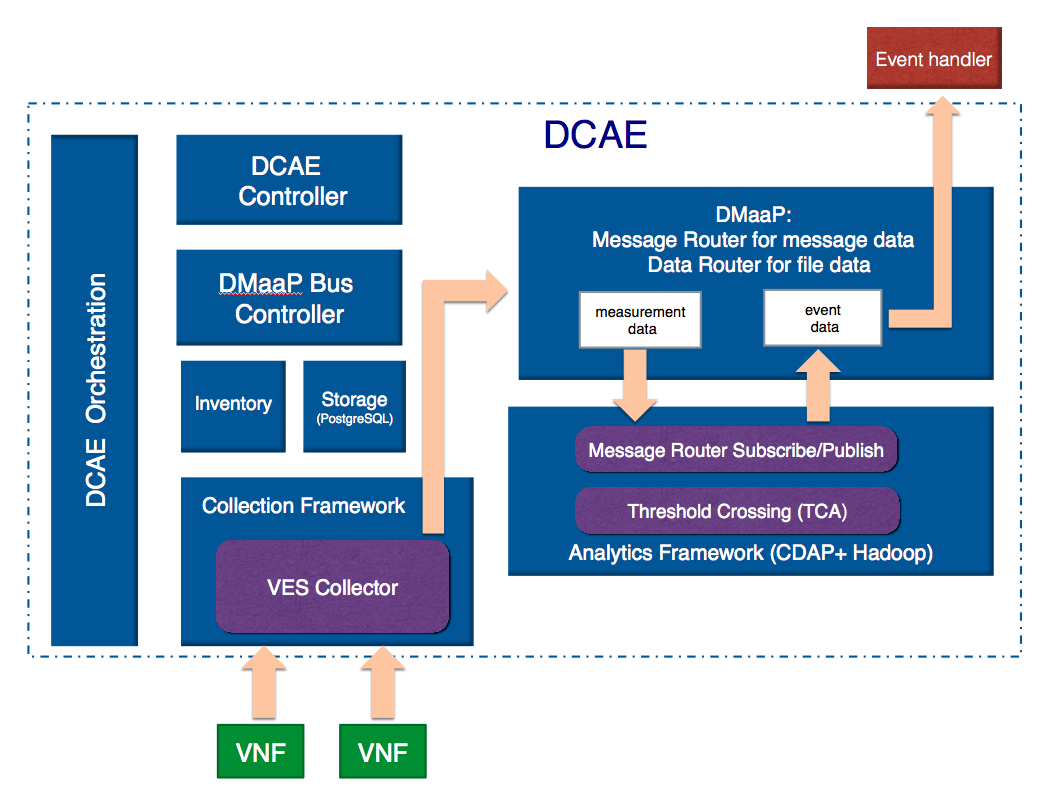

Figure 3 shows the DCAE data flow. This flow proceeds as follows:

- VNFs use REST calls to push measurement data to the DCAE VES collector.

- The VES collector validates, filters, and packages the received measurement data, and publishes the data to the "measurement data" topic of DMaaP.

- The analytics application receives measurement data from the DMaaP "measurement data" topic.

- The analytics application analyzes measurement data, and if alert conditions (defined by the alert policy that was installed by the DCAE Controller) are met, publishes an alert event to the DMaaP "event data" topic.

- Other ONAP components, for example the Policy or MSO subsystems, receive alert events from the DMaaP "event data" topic and react accordingly.

Figure 3. DCAE data flow (Data plane)
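The data flow above can be sketched end to end with an in-memory stand-in for the message bus. Everything in this example is illustrative: `InMemoryBus` is not the DMaaP API, the event fields (`source`, `cpu_percent`) are not the VES schema, and the threshold check is a placeholder for whatever alert policy is installed. Only the two topic names and the order of the steps come from the description above.

```python
from collections import defaultdict, deque

MEASUREMENT_TOPIC = "measurement data"
EVENT_TOPIC = "event data"


class InMemoryBus:
    """Stand-in for a pub/sub bus: named topics holding queued messages."""

    def __init__(self):
        self._topics = defaultdict(deque)

    def publish(self, topic, message):
        self._topics[topic].append(message)

    def fetch(self, topic):
        """Return all queued messages on the topic and drain it."""
        queue = self._topics[topic]
        messages = list(queue)
        queue.clear()
        return messages


def collect(bus, event):
    """Collector step: validate the event, then publish it as measurement data."""
    valid = isinstance(event.get("source"), str) and isinstance(
        event.get("cpu_percent"), (int, float)
    )
    if valid:
        bus.publish(MEASUREMENT_TOPIC, event)
    return valid


def analyze(bus, cpu_threshold):
    """Analytics step: read measurements and publish an alert when the threshold is exceeded."""
    for measurement in bus.fetch(MEASUREMENT_TOPIC):
        if measurement["cpu_percent"] > cpu_threshold:
            bus.publish(
                EVENT_TOPIC,
                {
                    "source": measurement["source"],
                    "alert": "cpu_threshold_crossed",
                    "value": measurement["cpu_percent"],
                },
            )


bus = InMemoryBus()
incoming = [
    {"source": "vnf-1", "cpu_percent": 42},
    {"source": "vnf-2", "cpu_percent": 97},
    {"source": "vnf-3"},  # rejected by the collector: no measurement value
]
accepted = [collect(bus, event) for event in incoming]
analyze(bus, cpu_threshold=90)
alerts = bus.fetch(EVENT_TOPIC)  # what a downstream subscriber would receive
print(accepted, alerts)
```

The collector and the analytics application never call each other directly; each only knows topic names, which is what lets other subscribers react to the same alert events.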

References

39 Comments

ramki krishnan

Nicely done and well described. Some thoughts

Reuben Klein

We chose CDAP because we needed a tool which met the basic requirements and could be opensourced. Cask met that criteria and many of our immediate needs so we adopted it on that basis in order to move forward. Because we needed to handle streaming analytics, not just data mining, there were not a lot of good alternatives that we could see.

Going forward, we will also be adding machine learning microservices to supply adaptive analysis which could be progressively improved as DCAE’s experience with any subject VNF goes on. How that gets implemented in ONAP is a great community discussion.

ramki krishnan

Many thanks Reuben. Looking forward to the ML discussion – are there any meetups planned for this topic?

Yusuf Mirza

1 - In the DCAE data flow, "VNFs use REST calls to push measurement data to the DCAE VES collector" - does this happen over the DCAE DMaaP topic, or does the VES collector expose REST APIs that the VNF uses directly?

2 - From the DCAE "event data" topic, how is the event moved to the ONAP DCAE-CL-EVENT topic for closed-loop control?

3 - At what stage is the PolicyName/ClosedLoopControlName parameter set in the event so that it eventually matches a BRMS policy, and which component within DCAE sets it? Is it done as part of the microservice? In the current release, for the provided vFirewall closed-loop policy, how is the BRMSParamvFWDemoPolicy.EVENT rule matched/invoked?

4 - My understanding from the sample policy and code is that the "actor" (e.g. APPC, MSO, etc.) is set/decided within the policy and not passed by DCAE as an event parameter, but it can be passed by DCAE as well. Could you pls confirm this.

I am trying to stitch together the end-to-end flow of an event from a VNF triggering a closed loop, using the sample policies available in the current release. Once it reaches the policy, the Drools engine will fire it to APPC or the set actor (via DMaaP) and close the loop.

Alok Gupta

Yusuf: following is a response to your question.

The flow is: the VNF sends VES-supported telemetry data to the DCAE collector, which validates security credentials and syntax, applies topic control, and places the data on the DMaaP message bus. The other systems (Adaptation, Mapping, Enrichment, Correlation, Analytics etc.) typically would subscribe to the data, process it and put it back on DMaaP. Policies would be used by other systems to take an action or further process the data.

We also have a yaml on-boarding artifact in ASDC that can be used to start creation of Policies. We will review some of these details during the May 5th Service Assurance break-out session.

https://lists.onap.org/pipermail/onap-tsc/2017-April/000215.html .

Yusuf Mirza

Thanks Alok, looking forward to the updates/decisions from May 5th Session. I have not seen the yaml artifact for the creation of policies. Is it available in the current release and could you pls point me to that?

Vijay Venkatesh Kumar

Hello Yusuf - below are the responses to your queries.

#1 The VES collector exposes a RESTful endpoint for VNFs to send events; the endpoints are defined based on the VES specification.

#2 The "event data" topic may actually be referring to the CL topic. Note that the topic name is a configurable parameter passed by the controller.

#3 This is set in AF based on policy parameters (these are set up at deployment time via configuration, and the controller monitors policy changes). For demo purposes, the ClosedLoopControlName was preconfigured to match the policy configuration.

#4 Yes, this is not something DCAE sets currently. The actor is probably determined based on the policy-PDP rules.

Yusuf Mirza

Thanks Vijay for the inputs. On point #1, is there a specific reason to expose REST APIs from collectors instead of using pub/sub on a topic as used by other components? For example, if we want to introduce redundancy at the collector layer, a pub/sub mechanism is better suited, as there will be no impact (license or technical) on the VNF side.

For point #3 - Is there any documentation or reference files that I can refer to understand it better? I have not yet seen much of application framework tool in the current release.

Vijay Venkatesh Kumar

Yes, the collector in the demo use case was simplified; however, it supports additional functions. It can verify the schema against the VES standard and return an appropriate status code. Based on the type of VES event, it also identifies the appropriate DMaaP topic and forwards accordingly. As the VES standard supports multiple domains (fault, measurement, syslog etc.), it lets a VNF send data to one client rather than building a separate channel/interface with DMaaP.

The presence of a collector layer between the VNF and DMaaP also enables more control for suppression, filtering, and alarm-parsing needs. DCAE has several other collectors as individual MS (SNMP Trap, SNMP stats poller, Syslog etc.) which were not part of ONAP R1 (they will be added to the ONAP roadmap at some point, I believe). The flow VNF->Collector->DMaaP will be consistent across all of these individual MS.

On #3 - sorry, documentation is work in progress. We will try adding them in coming weeks.

Yusuf Mirza

Thanks Vijay & Alok. It would really help if you guys could release a minimal set of instructions to set up a closed/open loop control end to end (VNF → Collector → DCAE bus → TCA/xx Analytics → ONAP bus → BRMS Policy → ONAP bus → APPC/MSO), as there is some config to be done at all the involved components to make it work (driven through the DCAE controller or scripts?).

From the sample policy code, I could figure out the ONAP bus → BRMS Policy → ONAP bus → APPC/MSO part, but I need some code-level understanding of how the other components are stitched together.

Viswanath Kumar Skand Priya

Hi Vijay Venkatesh Kumar,

I understand the rationale behind choosing REST over Pub/sub. However I would like to understand if http/2 streaming support has been explored. Wouldn't streaming over http greatly improve the performance here?

BR,

Viswa

Sridhar Bhaskaran

Hi Vijay Venkatesh Kumar ,

I would like to ask the same question as Viswanath Kumar Skand Priya . Is there any plan for supporting HTTP/2?

Also, is there any design rationale for the event source to provide the client throttling state to the VES event collector, instead of the VES event collector providing an event subscription filter to the event source? Usually it's the event receiver who would get overloaded and would ask the event source to throttle by providing filters. It would be helpful to understand the rationale for doing it the other way.

BR

Sridhar

Vijay Venkatesh Kumar

Hi Sridhar Bhaskaran - DCAE has a streaming collector, HV-VES (protobuf over TCP), introduced in the Casablanca release. You can find the related doc here - https://onap.readthedocs.io/en/dublin/submodules/dcaegen2.git/docs/sections/services/ves-hv/index.html. For the HTTP/2 protocol exclusively, there are currently no plans. If there is a use case requiring it, we can look into it.

As for event throttling - I believe this was part of the original VES design (may not be valid anymore). The original design was indeed for the VES Collector to specify the domain/events for suppression (based on the commandlist in the response). However, the newer VES design/approach is to have this managed by controllers (e.g. APPC).

Sridhar Bhaskaran

Thank you Vijay Venkatesh Kumar for the clarification. This helps.

Aayush Bhatnagar

Where are the REST APIs documented for DCAE ? Should we use Swagger or similar tools ? If this information is available and published - please share the link.

Alok Gupta

VES 5.0 Documents can be found at the following att github location.

https://github.com/att/evel-test-collector/tree/master/docs/att_interface_definition

Please note: we are in the process of updating evel library and it will be available later this month. For collector Code we plan to provide the DCAE Collector Code.

Susan Ye

What else does the DCAE controller do besides instantiating and configuring the rest of the DCAE components? If we move all the DCAE components into Docker containers and have them managed by Kubernetes, can we safely discard the controller?

Beejal Shah

Susan Ye, DCAE is also responsible for distributing policy configuration to CDAP. If you remove it, you still need some mechanism to distribute policies and configuration to your CDAP clusters every time a change is made or a new policy is implemented.

Christopher Rath

DCAE not only deploys and manages the platform components, (Cloudify, Consul, PostgreSQL, CDAP, etc.), but also deploys and manages the micro-services that make up service assurance flows that run on the platform. Those micro-services are not limited to Docker containers. Our analytics platform of choice today is CDAP, so the controller provides methods for deploying CDAP applications on the CDAP cluster when they are referenced in blueprints. These same methods also apply to connecting components using the data bus.

Seshu Kumar Mudiganti

I have some questions about DCAE APIs.

Which APIs help to define aforementioned policies for DCAE ?

Christopher Rath

In general, policy-related information for DCAE collectors and analytics is stored and managed in the Policy Engine component. DCAE has an interface to retrieve the latest configuration policy for a component when it is deployed on DCAE, and to receive update notifications so the configuration can be updated.

Components are associated with a Policy in the blueprints from which they are deployed. It is up to the blueprint developer to determine whether policies are re-used across instances of a component or if each copy has its own policy, (thus allowing for VNF-type specific policies vs VNF-specific policies).

Storage retention is partly based on the blueprint design, (e.g. the output of a particular analytic component is published to a local storage facility or to a central storage facility, to a relational or NoSql database), while specifics about which data is stored may be in a configuration policy.

Analytic and collector components all behave in the same way with respect to policy.

If by Event Policies you mean policies that control closed-loop operations based on events, that is generally handled by rules in the Policy Engine component itself, rather than in DCAE.

Usman Anwar

Hi,

Controllers and their Adapters use NETCONF APIs for VNF configuration, so, is there support available for receiving NETCONF notifications in DCAE (any other component)?

Michel Chevanne

Hi

To build a new analytics application, can you confirm that the following APIs are available:

br

You Li

Hi, if I would like to develop a new analysis application (like TCA) for DCAE, how can I integrate it into DCAE? Do I have to add my application code to DCAE-APOD and rebuild the whole DCAE, or is there another way to add a plugin to DCAE without building the project?

Evguenia (Jane) Freider

Actually I have a similar question. I was reading the DCAE code and whatever I could find about TCA but didn't get very far. I see some configuration files for TCA but couldn't find the classes where the TCA logic is actually implemented. We don't necessarily need a new application, but rather the ability to configure TCA to process inbound measurement events and send an outbound event so we could catch it somehow. I didn't find any documentation on how to tweak it or what queue to subscribe to even just to see those processed events... So far we could only read measurement events outbound from VES.

Any advice on where this code is located? Or how to configure TCA? And how to read outbound events from it? Thanks a lot.

Evguenia (Jane) Freider

Actually I'm making some progress today and wanted to clarify - we can send messages to VES and read output from it using the unauthenticated.SEC_MEASUREMENT_OUTPUT topic. Using the BASE/topics DMaaP API call I also found the unauthenticated.TCA_EVENT_OUTPUT topic; however, nothing comes out of it.

My guess is we need to configure TCA better to do something, instead of some default processing.

Vijay Venkatesh Kumar

Hi - the current openecomp analytics is supported by an application called "dcae-analytics-tca" under the apod.analytics repo. This app, however, is specifically built to support the Openecomp demo cases (hence the configuration models are tied closely to the vF5 and vDNS use cases). We are currently working on a couple of enhancements for this analytics mS in DCAE to support R1 use cases generically.

With R1, the mS onboarding into the DCAE platform does change significantly (pls refer to DCAE Project Proposal (5/11/17) & MicroServices Onboarding in ONAP for high-level info). Typically each mS source would require a separate repo, although sourcing common libraries from other repos (e.g. ccsdk).

Alok Gupta

I had presented the VES framework to the DCAE team; it includes a yaml on-boarding artifact that defines Measurement events with suggested Thresholds and the action that should be taken once a threshold is reached. We can convert the yaml to Policies that can drive the TCA micro-service with the action. We can provide recommended policies that can be used as a starting point.

If interested I can review the info in the next DCAE meeting or would be happy to provide it one-on-one.

Evguenia (Jane) Freider

Sounds interesting! Is there a way for you to attach any documentation here?

I might not be able to attend next couple DCAE meetings but I'm sure it would be helpful, especially if the meeting could be recorded for later viewing. Thank you.

Xin Miao

Hi, Alok,

Could you please provide the 'yaml on-boarding artifact that defines Measurement events with suggested Thresholds and action that should be taken once threshold is reached'? Have any of the documents mentioned above been attached anywhere on the wiki?

Thanks!

Evguenia (Jane) Freider

Hi Alok, can you present at the DCAE meeting this week? If not, perhaps provide links to some documentation so we could read on our own? I still can't find any info about TCA interfaces and how to define policies and test them by interfacing with TCA. Thanks a lot.

Alok Gupta

Jane:

I had provided a data collection framework using VES and also defined the onboarding yaml artifact, which includes information on what events are received from the VNF and the fields with measurements that should be monitored for thresholds. We have a CDAP-based thresholding micro-service that I think Lusheng Ji can provide details on. I will talk to Lusheng and see if he is willing to go over it during the DCAE team meeting.

Alok

Sripriya Belagall

Can anyone help me with all the steps to build and compile DCAE?

I need the exact links for the plugins mentioned above that are required for DCAE compilation. The given major.minor versions are not found in the Eclipse marketplace.

It would also be helpful to know the sequence in which the code modules should be compiled, to avoid dependency issues among the various DCAE repos.

Elena Mititelu

Hi,

I have a problem with the dcae boot container.

When the boot container comes up, it tries to download the dnsdesig-1.0.0-py27-none-any.wgn plugin.

The download fails and the uninstall flow starts:

{{Downloading from https://nexus.onap.org/service/local/repositories/raw/content/org.onap.ccsdk.platform.plugins/plugins/dnsdesig-1.0.0-py27-none-any.wgn to /tmp/tmp4lDpBd/dnsdesig-1.0.0-py27-none-any.wgn

Bootstrap failed! (https://nexus.onap.org/service/local/repositories/raw/content/org.onap.ccsdk.platform.plugins/plugins/dnsdesig-1.0.0-py27-none-any.wgn is neither a valid local path nor a valid url)

Executing teardown due to failed bootstrap...

2017-11-16 19:26:13 CFY <manager> Starting 'uninstall' workflow execution

2017-11-16 19:26:13 CFY <manager> [sanity_4c871] Stopping node}}

The URL seems to be ok.

Does anyone know how to work around it?

Sana Tariq

Can someone explain how new Policies are written in the Amsterdam Release? Last I tried in ECOMP it was Drools code... Is there any improvement that makes developing conditional rules easier? I am looking forward to exploring the Amsterdam release, but wanted early feedback from those who have already explored it.

Flavio Poletti

I have some doubts regarding the usage of VES in association with DCAE for performance management.

On the one hand, VES does not seem tailored for carrying lots of domain-specific data, especially when they are usually represented through hierarchical models. One such example are XML measurement files as traditionally found in 3GPP. Looking at VES, my impression is that it expects to carry somehow "higher level" measurements (KPI) than the detail about every little part.

On the other hand, the aim of DCAE is to collect as much data as possible, so that analytics applications are allowed to extract meaningful information from data afterwards. Here, I would say that the attention to the detail should matter a lot.

So... isn't the choice of VES limiting the range of data and elaborations that can be done on that data in DCAE? Are there alternative ways to collect measurement data at a fine granularity level?

navodita Jaiswal

I have some doubts. Could you please clarify how many collectors there are in the collection framework, and what they are? Also, what kind of file format does each collector support?

Vijay Venkatesh Kumar

Pls check here for details on DCAE MS/collectors - https://docs.onap.org/en/latest/submodules/dcaegen2.git/docs/sections/services/serviceindex.html

navodita Jaiswal

Thanks Mr. Vijay, It was really helpful.